1. Environment

#OS

Ubuntu: 22.04

#K8S

root@ubuntu-k8s-master01:~# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

ubuntu-k8s-master01 Ready control-plane 21d v1.28.2 192.168.40.132 <none> Ubuntu 22.04 LTS 5.15.0-100-generic containerd://1.6.28

ubuntu-k8s-node01 Ready <none> 21d v1.28.2 192.168.40.133 <none> Ubuntu 22.04 LTS 5.15.0-100-generic containerd://1.6.28

ubuntu-k8s-node02 Ready <none> 21d v1.28.2 192.168.40.134 <none> Ubuntu 22.04 LTS 5.15.0-100-generic containerd://1.6.28

#Network plugin

Cilium

#NFS server (CentOS 7)

192.168.40.104

/data/nfs

2. Deploying MinIO

#Bring up the MinIO service with docker-compose

[root@k8s-harbor ~]# mkdir minio

[root@k8s-harbor ~]# vim minio/docker-compose.yml

version: '3.8'
services:
  minio:
    image: minio/minio:RELEASE.2024-02-26T09-33-48Z
    container_name: minio
    restart: unless-stopped
    environment:
      MINIO_ROOT_USER: 'minioadmin'
      MINIO_ROOT_PASSWORD: 'sheca123'
      MINIO_ADDRESS: ':9000'
      MINIO_CONSOLE_ADDRESS: ':9001'
    ports:
      - "9000:9000"
      - "9001:9001"
    networks:
      - minionetwork
    volumes:
      - ./data:/data
    healthcheck:
      test: ["CMD", "curl", "-f", "http://localhost:9000/minio/health/live"]
      interval: 30s
      timeout: 20s
      retries: 3
    command: server /data
networks:
  minionetwork:
    driver: bridge

[root@k8s-harbor ~]# cd minio/

[root@k8s-harbor minio]# docker-compose up -d
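Before moving on to the console, it can help to wait until MinIO actually answers on its liveness endpoint. The following is a minimal sketch, assuming `curl` is installed on the host; the function name `wait_for_minio` and its retry parameters are hypothetical, while the `/minio/health/live` path is the same one used by the healthcheck in the compose file above.

```shell
# wait_for_minio BASE_URL [TRIES] [DELAY_SECONDS]
# Polls the MinIO liveness endpoint until it responds successfully.
# (Hypothetical helper; only the endpoint path comes from the compose file.)
wait_for_minio() {
    base_url=$1
    tries=${2:-30}
    delay=${3:-2}
    i=0
    while [ "$i" -lt "$tries" ]; do
        # -f makes curl return non-zero on HTTP errors
        if curl -fsS -o /dev/null "$base_url/minio/health/live"; then
            echo "MinIO is live at $base_url"
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    echo "MinIO did not become live after $tries attempts" >&2
    return 1
}
```

In this environment it would be called as `wait_for_minio http://192.168.40.104:9000`.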

#http://192.168.40.104:9001/login

#minioadmin/sheca123

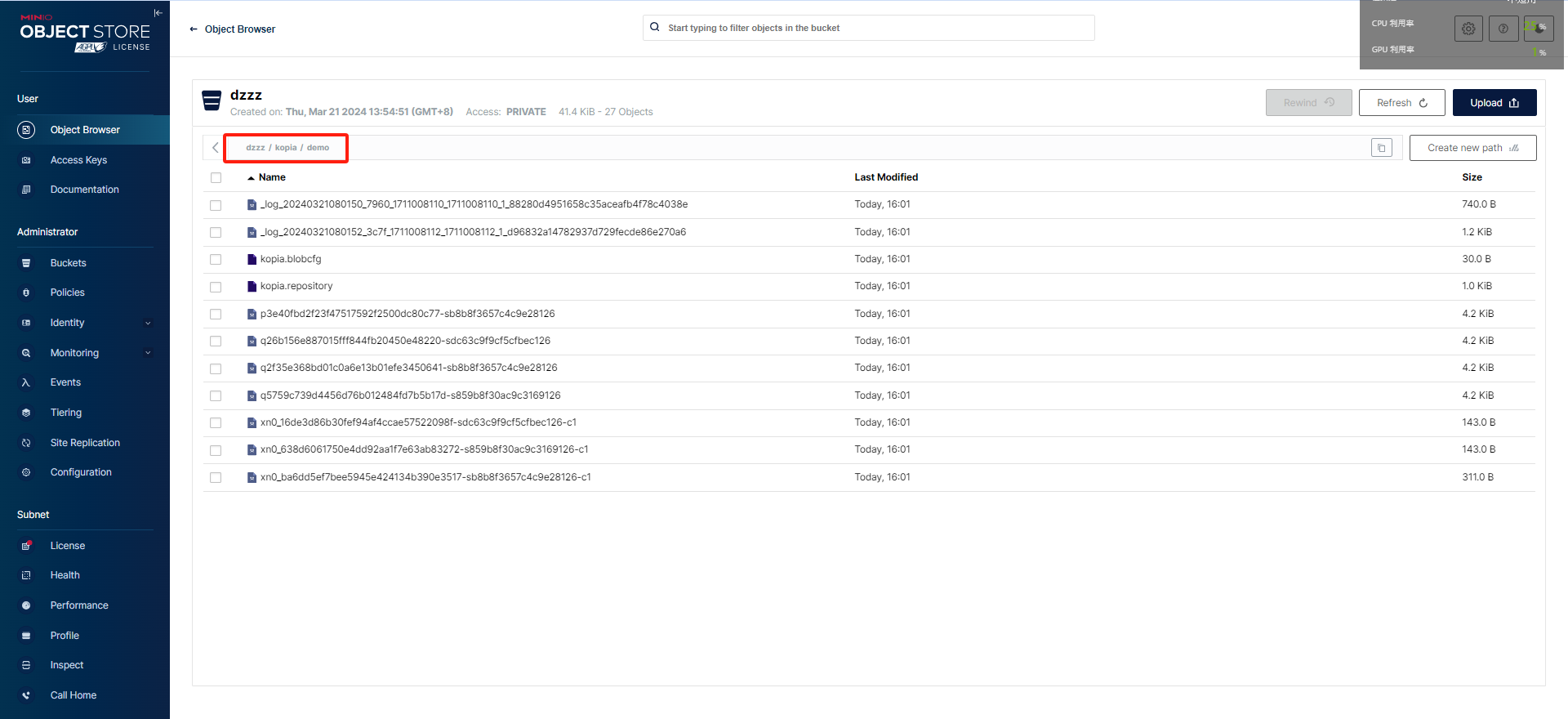

#Create the bucket dzzz in the management console

3. Deploying Velero

#[1]

#Create a credentials file named credentials-velero

root@ubuntu-k8s-master01:~# cat credentials-velero

[default]

aws_access_key_id = minioadmin

aws_secret_access_key = sheca123

region = minio
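The credentials file holds the MinIO root password in clear text, so it is worth creating it with owner-only permissions. A minimal sketch, assuming a POSIX shell; the function name `write_velero_credentials` is hypothetical, and the file layout simply mirrors the one shown above.

```shell
# write_velero_credentials FILE ACCESS_KEY SECRET_KEY [REGION]
# Writes an AWS-style credentials file readable only by its owner.
# (Hypothetical helper; REGION defaults to "minio" as in the file above.)
write_velero_credentials() {
    cred_file=$1
    (
        umask 077   # a newly created file gets mode 0600
        cat > "$cred_file" <<EOF
[default]
aws_access_key_id = $2
aws_secret_access_key = $3
region = ${4:-minio}
EOF
    )
    # Tighten permissions as well in case the file already existed
    chmod 600 "$cred_file"
}
```

For example, `write_velero_credentials ./credentials-velero minioadmin sheca123` reproduces the file used in this walkthrough.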

#[2]

#Install the Velero CLI, version 1.13.1

#https://github.com/vmware-tanzu/velero/releases/download/v1.13.1/velero-v1.13.1-linux-amd64.tar.gz

root@ubuntu-k8s-master01:~# tar zxvf velero-v1.13.1-linux-amd64.tar.gz

root@ubuntu-k8s-master01:~# cp velero-v1.13.1-linux-amd64/velero /usr/local/bin

root@ubuntu-k8s-master01:~# velero --help

#[3]

#Deploy Velero

#--use-node-agent/--uploader-type=kopia => creates a node-agent DaemonSet so that an agent runs on each node; with File System Backup (FSB) it backs up the data in Pod volumes and uploads it to the object storage system using restic or kopia

velero install \
  --secret-file ./credentials-velero \
  --provider aws \
  --bucket dzzz \
  --backup-location-config region=minio,s3ForcePathStyle="true",s3Url=http://192.168.40.104:9000 \
  --plugins velero/velero-plugin-for-aws:v1.9.0 \
  --use-volume-snapshots=true \
  --snapshot-location-config region=minio \
  --use-node-agent \
  --uploader-type=kopia

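The command above uses a number of options; each does the following.

--provider: name of the provider used to store backups and volume data; Velero supports many providers, and each provider usually depends on a dedicated plugin

--plugins: list of plugins to load; each entry is the name of a container image

--backup-location-config: details of the storage system that holds the backups, in the form "key1=value1,key2=value2"

--snapshot-location-config: details of the storage system that holds PV snapshots, in the form "key1=value1,key2=value2"

--use-volume-snapshots: whether to automatically create a snapshot location for storing snapshots; defaults to true

--secret-file: file holding the credentials used to authenticate to the storage system

--bucket: bucket on the remote object storage system in which backups are stored

--use-node-agent: whether to create the DaemonSet that deploys the node agent, which uploads data from volumes and snapshots to the remote storage system using restic or kopia

--uploader-type: uploader used to transfer the data; valid values are restic and kopia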

#A velero namespace has been created

root@ubuntu-k8s-master01:~# kubectl get ns

NAME STATUS AGE

cilium-secrets Active 20d

default Active 21d

kube-node-lease Active 21d

kube-public Active 21d

kube-system Active 21d

metallb-system Active 20d

velero Active 41s

#Two node-agent Pods and the velero Pod are running

root@ubuntu-k8s-master01:~# kubectl get pods -n velero

NAME READY STATUS RESTARTS AGE

node-agent-4bzjg 1/1 Running 0 11m

node-agent-7wnsq 1/1 Running 0 11m

velero-76ddc79b5-4b6jl 1/1 Running 0 11m

#List the resources in the velero.io API group

root@ubuntu-k8s-master01:~# kubectl api-resources --api-group=velero.io

NAME SHORTNAMES APIVERSION NAMESPACED KIND

backuprepositories velero.io/v1 true BackupRepository

backups velero.io/v1 true Backup

backupstoragelocations bsl velero.io/v1 true BackupStorageLocation

datadownloads velero.io/v2alpha1 true DataDownload

datauploads velero.io/v2alpha1 true DataUpload

deletebackuprequests velero.io/v1 true DeleteBackupRequest

downloadrequests velero.io/v1 true DownloadRequest

podvolumebackups velero.io/v1 true PodVolumeBackup

podvolumerestores velero.io/v1 true PodVolumeRestore

restores velero.io/v1 true Restore

schedules velero.io/v1 true Schedule

serverstatusrequests ssr velero.io/v1 true ServerStatusRequest

volumesnapshotlocations vsl velero.io/v1 true VolumeSnapshotLocation

#Inspect the default BackupStorageLocation

root@ubuntu-k8s-master01:~# kubectl describe BackupStorageLocation default -n velero

Name: default

Namespace: velero

Labels: component=velero

Annotations: <none>

API Version: velero.io/v1

Kind: BackupStorageLocation

Metadata:

Creation Timestamp: 2024-03-21T06:11:17Z

Generation: 23

Resource Version: 1558144

UID: cceec49a-20a1-47b0-93b4-a2c87ca7399a

Spec:

Config:

Region: minio

s3ForcePathStyle: true

s3Url: http://192.168.40.104:9000

Default: true

Object Storage:

Bucket: dzzz

Provider: aws

Status:

Last Synced Time: 2024-03-21T06:26:43Z

Last Validation Time: 2024-03-21T06:26:43Z

Phase: Available

Events: <none>
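The `Phase: Available` line in the output above is the field to watch: it shows that Velero could reach the bucket. A minimal sketch of checking it from a script, assuming `kubectl` is configured for the cluster; the function name `bsl_is_available` is hypothetical.

```shell
# bsl_is_available [NAME]
# Succeeds only if the named BackupStorageLocation reports phase "Available".
# (Hypothetical helper; NAME defaults to "default", the location created above.)
bsl_is_available() {
    phase=$(kubectl get backupstoragelocation "${1:-default}" -n velero \
        -o jsonpath='{.status.phase}')
    [ "$phase" = "Available" ]
}
```

A typical use is `bsl_is_available default || echo "check s3Url, bucket and credentials"`.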

#Inspect the default VolumeSnapshotLocation

root@ubuntu-k8s-master01:~# kubectl describe VolumeSnapshotLocation default -n velero

Name: default

Namespace: velero

Labels: component=velero

Annotations: <none>

API Version: velero.io/v1

Kind: VolumeSnapshotLocation

Metadata:

Creation Timestamp: 2024-03-21T06:11:17Z

Generation: 1

Resource Version: 1555836

UID: fda79885-7ac6-46a4-8dd0-a851c1cffca9

Spec:

Config:

Region: minio

Provider: aws

Events: <none>

4. Backing up a stateless application

#[1] Create a stateless application

#Create the demoapp Deployment in the demo namespace

root@ubuntu-k8s-master01:~# kubectl create ns demo

namespace/demo created

root@ubuntu-k8s-master01:~# kubectl create deployment demoapp --image=ikubernetes/demoapp:v1.0 --replicas=3 -n demo

deployment.apps/demoapp created

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 49s

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 49s

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 49s

#[2] Create a backup that covers only the demo namespace

root@ubuntu-k8s-master01:~# velero backup create demo --include-namespaces demo

Backup request "demo" submitted successfully.

Run `velero backup describe demo` or `velero backup logs demo` for more details.

root@ubuntu-k8s-master01:~# velero backup get

NAME STATUS ERRORS WARNINGS CREATED EXPIRES STORAGE LOCATION SELECTOR

demo Completed 0 0 2024-03-21 06:46:30 +0000 UTC 29d default <none>
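`velero backup create` returns as soon as the request is submitted, so a script has to poll until the backup finishes. A minimal sketch, assuming `kubectl` access to the `velero` namespace; it reads the phase of the Backup custom resource listed earlier under `velero.io/v1`. The function name `wait_for_backup` and its retry parameters are hypothetical.

```shell
# wait_for_backup NAME [TRIES] [DELAY_SECONDS]
# Returns 0 when the backup completes, 1 when it fails, 2 on timeout.
# (Hypothetical helper built on the Backup resource's status.phase field.)
wait_for_backup() {
    backup=$1
    tries=${2:-60}
    delay=${3:-5}
    i=0
    while [ "$i" -lt "$tries" ]; do
        phase=$(kubectl get backups.velero.io "$backup" -n velero \
            -o jsonpath='{.status.phase}' 2>/dev/null)
        case "$phase" in
            Completed)
                echo "backup $backup: $phase"
                return 0
                ;;
            Failed|PartiallyFailed|FailedValidation)
                echo "backup $backup: $phase" >&2
                return 1
                ;;
        esac
        i=$((i + 1))
        sleep "$delay"
    done
    echo "backup $backup: timed out in phase '$phase'" >&2
    return 2
}
```

For the backup above, `wait_for_backup demo` would block until the phase shown by `velero backup get` reaches `Completed`.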

#[3] Simulate an accidental deletion of the demo namespace, then restore it

root@ubuntu-k8s-master01:~# kubectl delete ns demo

root@ubuntu-k8s-master01:~# kubectl get ns #confirm that the demo namespace is gone

NAME STATUS AGE

cilium-secrets Active 20d

default Active 21d

kube-node-lease Active 21d

kube-public Active 21d

kube-system Active 21d

metallb-system Active 20d

velero Active 40m

#Run the restore

root@ubuntu-k8s-master01:~# velero restore create --from-backup demo

Restore request "demo-20240321065255" submitted successfully.

Run `velero restore describe demo-20240321065255` or `velero restore logs demo-20240321065255` for more details.

root@ubuntu-k8s-master01:~# velero restore get

NAME BACKUP STATUS STARTED COMPLETED ERRORS WARNINGS CREATED SELECTOR

demo-20240321065255 demo Completed 2024-03-21 06:52:55 +0000 UTC 2024-03-21 06:52:55 +0000 UTC 0 2 2024-03-21 06:52:55 +0000 UTC <none>

root@ubuntu-k8s-master01:~# kubectl get ns #the demo namespace has been restored

NAME STATUS AGE

cilium-secrets Active 20d

default Active 21d

demo Active 23s

kube-node-lease Active 21d

kube-public Active 21d

kube-system Active 21d

metallb-system Active 20d

velero Active 42m

root@ubuntu-k8s-master01:~# kubectl get pods -n demo #the Pods are running again

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 36s

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 36s

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 36s

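5. Backing up and restoring a stateful Redis application with csi-nfs

#If the volume does not support snapshots, use this option:

#--default-volumes-to-fs-backup: whether to back up the contents of Pod volumes with the FSB mechanism

#hostPath volumes do not work: their data cannot be restored

#This section uses csi-nfs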

#[1] Install an NFS service

[root@k8s-harbor ~]# yum install -y nfs-utils

[root@k8s-harbor ~]# systemctl start nfs-server

[root@k8s-harbor ~]# systemctl status nfs-server

[root@k8s-harbor ~]# ps -ef | grep nfs

[root@k8s-harbor ~]# mkdir /data/nfs -p

[root@k8s-harbor ~]# cat /etc/exports

/data/nfs 192.168.40.0/24(rw,fsid=0,async,no_subtree_check,no_auth_nlm,insecure,no_root_squash)

[root@k8s-harbor ~]# exportfs -arv

exporting 192.168.40.0/24:/data/nfs

#[2] Install csi-driver-nfs

#https://github.com/kubernetes-csi/csi-driver-nfs

#https://github.com/kubernetes-csi/csi-driver-nfs/blob/master/deploy/example/README.md

#https://github.com/kubernetes-csi/csi-driver-nfs/blob/master/deploy/example/nfs-provisioner/README.md

root@ubuntu-k8s-master01:~# git clone https://github.com/kubernetes-csi/csi-driver-nfs.git

#Install NFS CSI driver master version on a kubernetes cluster

#https://github.com/kubernetes-csi/csi-driver-nfs/blob/master/docs/install-csi-driver-master.md

root@ubuntu-k8s-master01:~/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0# cd /root/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0

root@ubuntu-k8s-master01:~/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0# grep image: * #the servers cannot reach this registry, so the image references must be changed

csi-nfs-controller.yaml: image: registry.k8s.io/sig-storage/csi-provisioner:v4.0.0

csi-nfs-controller.yaml: image: registry.k8s.io/sig-storage/csi-snapshotter:v6.3.3

csi-nfs-controller.yaml: image: registry.k8s.io/sig-storage/livenessprobe:v2.12.0

csi-nfs-controller.yaml: image: registry.k8s.io/sig-storage/nfsplugin:v4.6.0

csi-nfs-node.yaml: image: registry.k8s.io/sig-storage/livenessprobe:v2.12.0

csi-nfs-node.yaml: image: registry.k8s.io/sig-storage/csi-node-driver-registrar:v2.10.0

csi-nfs-node.yaml: image: registry.k8s.io/sig-storage/nfsplugin:v4.6.0

csi-snapshot-controller.yaml: image: registry.k8s.io/sig-storage/snapshot-controller:v6.3.3

#Pull the images on every node

#Replace registry.k8s.io with registry.lank8s.cn

crictl pull registry.lank8s.cn/sig-storage/csi-provisioner:v4.0.0

crictl pull registry.lank8s.cn/sig-storage/csi-snapshotter:v6.3.3

crictl pull registry.lank8s.cn/sig-storage/livenessprobe:v2.12.0

crictl pull registry.lank8s.cn/sig-storage/nfsplugin:v4.6.0

crictl pull registry.lank8s.cn/sig-storage/csi-node-driver-registrar:v2.10.0

crictl pull registry.lank8s.cn/sig-storage/snapshot-controller:v6.3.3

#vi csi-nfs-controller.yaml

#vi csi-nfs-node.yaml

#vi csi-snapshot-controller.yaml

#In each file, run the following substitution in vi

:%s/registry.k8s.io/registry.lank8s.cn/g

root@ubuntu-k8s-master01:~/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0# grep image: *

csi-nfs-controller.yaml: image: registry.lank8s.cn/sig-storage/csi-provisioner:v4.0.0

csi-nfs-controller.yaml: image: registry.lank8s.cn/sig-storage/csi-snapshotter:v6.3.3

csi-nfs-controller.yaml: image: registry.lank8s.cn/sig-storage/livenessprobe:v2.12.0

csi-nfs-controller.yaml: image: registry.lank8s.cn/sig-storage/nfsplugin:v4.6.0

csi-nfs-node.yaml: image: registry.lank8s.cn/sig-storage/livenessprobe:v2.12.0

csi-nfs-node.yaml: image: registry.lank8s.cn/sig-storage/csi-node-driver-registrar:v2.10.0

csi-nfs-node.yaml: image: registry.lank8s.cn/sig-storage/nfsplugin:v4.6.0

csi-snapshot-controller.yaml: image: registry.lank8s.cn/sig-storage/snapshot-controller:v6.3.3
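The same replacement can be scripted instead of editing each file in vi. A minimal sketch, assuming a `sed` that accepts `-i.bak` (GNU and BSD sed both do); the function name `rewrite_registry` is hypothetical.

```shell
# rewrite_registry DIR OLD_REGISTRY NEW_REGISTRY
# Replaces OLD_REGISTRY with NEW_REGISTRY in every *.yaml file under DIR,
# keeping a .bak copy of each original. (Hypothetical helper.)
rewrite_registry() {
    dir=$1
    old=$2
    new=$3
    # Escape dots so that they match literally rather than any character
    old_re=$(printf '%s' "$old" | sed 's/\./\\./g')
    for f in "$dir"/*.yaml; do
        [ -f "$f" ] || continue
        sed -i.bak "s#$old_re#$new#g" "$f"
    done
}
```

Run from the `deploy/v4.6.0` directory as `rewrite_registry . registry.k8s.io registry.lank8s.cn`, then delete the `*.bak` files once the `grep image:` output looks right.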

#Apply all of the manifests

root@ubuntu-k8s-master01:~/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0# kubectl apply -f .

customresourcedefinition.apiextensions.k8s.io/volumesnapshots.snapshot.storage.k8s.io created

customresourcedefinition.apiextensions.k8s.io/volumesnapshotclasses.snapshot.storage.k8s.io created

customresourcedefinition.apiextensions.k8s.io/volumesnapshotcontents.snapshot.storage.k8s.io created

deployment.apps/csi-nfs-controller created

csidriver.storage.k8s.io/nfs.csi.k8s.io created

daemonset.apps/csi-nfs-node created

deployment.apps/snapshot-controller created

serviceaccount/csi-nfs-controller-sa created

serviceaccount/csi-nfs-node-sa created

clusterrole.rbac.authorization.k8s.io/nfs-external-provisioner-role created

clusterrolebinding.rbac.authorization.k8s.io/nfs-csi-provisioner-binding created

serviceaccount/snapshot-controller created

clusterrole.rbac.authorization.k8s.io/snapshot-controller-runner created

clusterrolebinding.rbac.authorization.k8s.io/snapshot-controller-role created

role.rbac.authorization.k8s.io/snapshot-controller-leaderelection created

rolebinding.rbac.authorization.k8s.io/snapshot-controller-leaderelection created

#New Pods are started in the kube-system namespace (marked with # below)

root@ubuntu-k8s-master01:~/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

cilium-jvvdp 1/1 Running 4 (25h ago) 20d

cilium-mnr4p 1/1 Running 4 (28h ago) 20d

cilium-operator-7764cf64d6-zfw96 1/1 Running 4 (25h ago) 20d

cilium-svx6j 1/1 Running 4 (25h ago) 20d

coredns-774bbd8588-5qx92 1/1 Running 4 (28h ago) 21d

coredns-774bbd8588-ggfjp 1/1 Running 4 (28h ago) 21d

csi-nfs-controller-558cff4c87-sgfmz 4/4 Running 0 27s #

csi-nfs-node-5sd4q 3/3 Running 0 27s #

csi-nfs-node-79kjj 3/3 Running 0 27s #

csi-nfs-node-qkjvq 3/3 Running 0 27s #

etcd-ubuntu-k8s-master01 1/1 Running 33 (25h ago) 21d

etcd-ubuntu-k8s-node01 1/1 Running 4 (25h ago) 21d

etcd-ubuntu-k8s-node02 1/1 Running 4 (25h ago) 21d

hubble-relay-59b8bfd6fb-c7bd4 1/1 Running 5 (25h ago) 20d

hubble-ui-6b4d867c59-jkppj 2/2 Running 8 (25h ago) 20d

kube-apiserver-ubuntu-k8s-master01 1/1 Running 42 (25h ago) 21d

kube-apiserver-ubuntu-k8s-node01 1/1 Running 4 (25h ago) 21d

kube-apiserver-ubuntu-k8s-node02 1/1 Running 4 (25h ago) 21d

kube-controller-manager-ubuntu-k8s-master01 1/1 Running 6 (25h ago) 21d

kube-controller-manager-ubuntu-k8s-node01 1/1 Running 4 (25h ago) 21d

kube-controller-manager-ubuntu-k8s-node02 1/1 Running 4 (25h ago) 21d

kube-scheduler-ubuntu-k8s-master01 1/1 Running 6 (25h ago) 21d

kube-scheduler-ubuntu-k8s-node01 1/1 Running 4 (25h ago) 21d

kube-scheduler-ubuntu-k8s-node02 1/1 Running 4 (25h ago) 21d

snapshot-controller-7d894f54bd-jvzhr 1/1 Running 0 27s #

snapshot-controller-7d894f54bd-lqc96 1/1 Running 0 27s #

#The snapshot CRDs are created as well; they are used later for volume snapshots

root@ubuntu-k8s-master01:~/csi-nfs/csi-driver-nfs-master/deploy/v4.6.0# kubectl get crds | grep storage.k8s.io

volumesnapshotclasses.snapshot.storage.k8s.io 2024-03-21T07:34:41Z

volumesnapshotcontents.snapshot.storage.k8s.io 2024-03-21T07:34:41Z

volumesnapshots.snapshot.storage.k8s.io 2024-03-21T07:34:41Z

#[3] Install nfs-common on all three Kubernetes servers

root@ubuntu-k8s-master01:~# apt install nfs-common -y

root@ubuntu-k8s-node01:~# apt install nfs-common -y

root@ubuntu-k8s-node02:~# apt install nfs-common -y

#[4] Create the StorageClass

#https://github.com/kubernetes-csi/csi-driver-nfs/blob/master/deploy/example/README.md

root@ubuntu-k8s-master01:~# vim csi-storageclass.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-csi
provisioner: nfs.csi.k8s.io
parameters:
  server: 192.168.40.104
  share: /data/nfs
  # csi.storage.k8s.io/provisioner-secret is only needed for providing mountOptions in DeleteVolume
  # csi.storage.k8s.io/provisioner-secret-name: "mount-options"
  # csi.storage.k8s.io/provisioner-secret-namespace: "default"
reclaimPolicy: Delete
volumeBindingMode: Immediate

root@ubuntu-k8s-master01:~# kubectl apply -f csi-storageclass.yaml

storageclass.storage.k8s.io/nfs-csi created

root@ubuntu-k8s-master01:~# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

nfs-csi nfs.csi.k8s.io Delete Immediate false 10s

#[5] Create a test PVC

root@ubuntu-k8s-master01:~# cat nfs-pvc-demo.yaml

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: nfs-pvc
  annotations:
    velero.io/csi-volumesnapshot-class: "nfs-csi"
spec:
  storageClassName: nfs-csi
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 3Gi

root@ubuntu-k8s-master01:~# kubectl apply -f nfs-pvc-demo.yaml -n demo

persistentvolumeclaim/nfs-pvc created

root@ubuntu-k8s-master01:~# kubectl get pvc -n demo

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

nfs-pvc Bound pvc-6a17223d-6628-4eb4-877c-954e9b0eef09 3Gi RWX nfs-csi <unset> 6s

#[6] Create a Redis Pod in the demo namespace that uses nfs-pvc

root@ubuntu-k8s-master01:~# cat redis-with-nfs-pvc.yaml

apiVersion: v1
kind: Pod
metadata:
  name: redis-with-nfs-pvc
spec:
  containers:
  - name: redis
    image: redis:7-alpine
    ports:
    - containerPort: 6379
      name: redis
    volumeMounts:
    - mountPath: /data
      name: data-storage
  volumes:
  - name: data-storage
    persistentVolumeClaim:
      claimName: nfs-pvc

root@ubuntu-k8s-master01:~# kubectl apply -f redis-with-nfs-pvc.yaml -n demo

pod/redis-with-nfs-pvc created

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 64m

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 64m

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 64m

redis-with-nfs-pvc 1/1 Running 0 5s

#[7] Write some test data

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 65m

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 65m

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 65m

redis-with-nfs-pvc 1/1 Running 0 98s

root@ubuntu-k8s-master01:~# kubectl exec -it redis-with-nfs-pvc -n demo -- /bin/sh

/data # redis-cli

127.0.0.1:6379> set mykey "BIRKHOFF 2024-03-21"

OK

127.0.0.1:6379> BGSAVE

Background saving started

127.0.0.1:6379> exit

/data # ls

dump.rdb

#[8]

#Back up the data as redis-backup

root@ubuntu-k8s-master01:~# velero backup create redis-backup --include-namespaces demo --default-volumes-to-fs-backup

Backup request "redis-backup" submitted successfully.

Run `velero backup describe redis-backup` or `velero backup logs redis-backup` for more details.

root@ubuntu-k8s-master01:~# velero backup get

NAME STATUS ERRORS WARNINGS CREATED EXPIRES STORAGE LOCATION SELECTOR

demo Completed 0 0 2024-03-21 06:46:30 +0000 UTC 29d default <none>

redis-backup Completed 0 0 2024-03-21 08:01:48 +0000 UTC 29d default <none>

root@ubuntu-k8s-master01:~# velero backup describe redis-backup --details

Name: redis-backup

Namespace: velero

Labels: velero.io/storage-location=default

Annotations: velero.io/resource-timeout=10m0s

velero.io/source-cluster-k8s-gitversion=v1.29.2

velero.io/source-cluster-k8s-major-version=1

velero.io/source-cluster-k8s-minor-version=29

Phase: Completed

Namespaces:

Included: demo

Excluded: <none>

Resources:

Included: *

Excluded: <none>

Cluster-scoped: auto

Label selector: <none>

Or label selector: <none>

Storage Location: default

Velero-Native Snapshot PVs: auto

Snapshot Move Data: false

Data Mover: velero

TTL: 720h0m0s

CSISnapshotTimeout: 10m0s

ItemOperationTimeout: 4h0m0s

Hooks: <none>

Backup Format Version: 1.1.0

Started: 2024-03-21 08:01:48 +0000 UTC

Completed: 2024-03-21 08:01:52 +0000 UTC

Expiration: 2024-04-20 08:01:48 +0000 UTC

Total items to be backed up: 23

Items backed up: 23

Resource List:

apiextensions.k8s.io/v1/CustomResourceDefinition:

- ciliumendpoints.cilium.io

apps/v1/Deployment:

- demo/demoapp

apps/v1/ReplicaSet:

- demo/demoapp-7c58cd6bb

cilium.io/v2/CiliumEndpoint:

- demo/demoapp-7c58cd6bb-cs2fc

- demo/demoapp-7c58cd6bb-r4rcb

- demo/demoapp-7c58cd6bb-w7ckj

- demo/redis-with-nfs-pvc

v1/ConfigMap:

- demo/kube-root-ca.crt

v1/Event:

- demo/nfs-pvc.17beb8890487bc71

- demo/nfs-pvc.17beb88905411edd

- demo/nfs-pvc.17beb8890aa62b1b

- demo/redis-with-nfs-pvc.17beb88c587fdc81

- demo/redis-with-nfs-pvc.17beb88c78733a92

- demo/redis-with-nfs-pvc.17beb88c78e45daf

- demo/redis-with-nfs-pvc.17beb88c7abb19b1

v1/Namespace:

- demo

v1/PersistentVolume:

- pvc-6a17223d-6628-4eb4-877c-954e9b0eef09

v1/PersistentVolumeClaim:

- demo/nfs-pvc

v1/Pod:

- demo/demoapp-7c58cd6bb-cs2fc

- demo/demoapp-7c58cd6bb-r4rcb

- demo/demoapp-7c58cd6bb-w7ckj

- demo/redis-with-nfs-pvc

v1/ServiceAccount:

- demo/default

Backup Volumes:

Velero-Native Snapshots: <none included>

CSI Snapshots: <none included>

Pod Volume Backups - kopia:

Completed:

demo/redis-with-nfs-pvc: data-storage

HooksAttempted: 0

HooksFailed: 0

#[9] Simulate a failure by deleting the demo namespace

root@ubuntu-k8s-master01:~# kubectl delete ns demo

namespace "demo" deleted

root@ubuntu-k8s-master01:~# kubectl get pv

No resources found

root@ubuntu-k8s-master01:~# velero backup get

NAME STATUS ERRORS WARNINGS CREATED EXPIRES STORAGE LOCATION SELECTOR

demo Completed 0 0 2024-03-21 06:46:30 +0000 UTC 29d default <none>

redis-backup Completed 0 0 2024-03-21 08:01:48 +0000 UTC 29d default <none>

root@ubuntu-k8s-master01:~# velero restore create --from-backup redis-backup

Restore request "redis-backup-20240321080651" submitted successfully.

Run `velero restore describe redis-backup-20240321080651` or `velero restore logs redis-backup-20240321080651` for more details.

root@ubuntu-k8s-master01:~# kubectl get pvc

No resources found in default namespace.

root@ubuntu-k8s-master01:~# kubectl get pvc -n demo

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

nfs-pvc Bound pvc-5628dd5b-5360-4e33-93b6-8ce42e2da07e 3Gi RWX nfs-csi <unset> 9s

root@ubuntu-k8s-master01:~# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS VOLUMEATTRIBUTESCLASS REASON AGE

pvc-5628dd5b-5360-4e33-93b6-8ce42e2da07e 3Gi RWX Delete Bound demo/nfs-pvc nfs-csi <unset> 24s

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 2m13s

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 2m13s

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 2m13s

redis-with-nfs-pvc 1/1 Running 0 2m13s

#[10] Verify that the data was restored

#Check on the NFS server

[root@k8s-harbor ~]# cd /data/nfs/

[root@k8s-harbor nfs]# ll

total 0

drwxr-xr-x 3 polkitd root 74 Mar 21 16:08 pvc-5628dd5b-5360-4e33-93b6-8ce42e2da07e

#Check the data inside the application

root@ubuntu-k8s-master01:~# kubectl exec -it redis-with-nfs-pvc -n demo -- /bin/sh

Defaulted container "redis" out of: redis, restore-wait (init)

/data # ls

BGSAVE dump.rdb exit set

/data # redis-cli

127.0.0.1:6379> get mykey

"BIRKHOFF 2024-03-21"

6. Scheduled periodic backups

root@ubuntu-k8s-master01:~# velero schedule create all-namespaces --exclude-namespaces kube-system,velero --default-volumes-to-fs-backup --schedule="@every 24h"

Schedule "all-namespaces" created successfully.

root@ubuntu-k8s-master01:~# velero schedule get

NAME STATUS CREATED SCHEDULE BACKUP TTL LAST BACKUP SELECTOR PAUSED

all-namespaces Enabled 2024-03-21 08:15:40 +0000 UTC @every 24h 0s n/a <none> false

#To restore, pass --from-schedule

root@ubuntu-k8s-master01:~# velero restore create --from-schedule all-namespaces
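Besides `@every 24h`, the `--schedule` option also accepts a standard cron expression, and `--ttl` sets how long each backup is kept. A minimal sketch that wraps the same command shown above with those two values as parameters; the function name `create_backup_schedule` and the example values are hypothetical.

```shell
# create_backup_schedule NAME CRON_EXPRESSION TTL
# Creates a Velero schedule that skips kube-system and velero and backs up
# Pod volumes with FSB, as in the command above. (Hypothetical wrapper.)
create_backup_schedule() {
    name=$1
    cron=$2
    ttl=$3
    velero schedule create "$name" \
        --exclude-namespaces kube-system,velero \
        --default-volumes-to-fs-backup \
        --schedule="$cron" \
        --ttl "$ttl"
}
```

For example, `create_backup_schedule nightly '0 2 * * *' 168h0m0s` would request a backup every day at 02:00 and keep each one for seven days.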

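7. Volume snapshots

#Some of the volume types built into Kubernetes support snapshots, for example Amazon EBS Volumes, Azure Managed Disks and Google Persistent Disks,

#and many storage services integrated through CSI support volume snapshots as well. When backing up volumes of these types,

#Velero can automatically request volume snapshots as part of the backup, and a restore can automatically recover data from the snapshots associated with the volumes,

#which lets users quickly return data to the moment the snapshot was taken in a disaster recovery scenario. Velero also supports, after the backup has created a snapshot,

#moving the data in the snapshot to the backup storage location (the object store) for safekeeping.

#Preparing a CSI volume service that supports snapshots

#Many CSI storage services support volume snapshots, for example csi-driver-nfs, csi-driver-host-path and OpenEBS cStor; this example uses the NFS-based csi-driver-nfs. First, on the prepared NFS server, export a path (for example /data/nfs) to serve as the storage backend. A sample exports entry is shown below; the client network must match your own (the NFS server in this environment exports to 192.168.40.0/24).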

/data/nfs 172.29.0.0/16(rw,fsid=0,async,no_subtree_check,no_auth_nlm,insecure,no_root_squash)

root@ubuntu-k8s-master01:~# cat nfs-csi-volumesnapshotclass.yaml

apiVersion: snapshot.storage.k8s.io/v1
kind: VolumeSnapshotClass
metadata:
  name: nfs-csi
  labels:
    velero.io/csi-volumesnapshot-class: "true"
driver: nfs.csi.k8s.io
parameters:
  server: 192.168.40.104
  share: /data/nfs
deletionPolicy: Delete

root@ubuntu-k8s-master01:~# kubectl apply -f nfs-csi-volumesnapshotclass.yaml

volumesnapshotclass.snapshot.storage.k8s.io/nfs-csi created

root@ubuntu-k8s-master01:~# kubectl get vsclass

NAME DRIVER DELETIONPOLICY AGE

nfs-csi nfs.csi.k8s.io Delete 46s
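Velero picks the VolumeSnapshotClass by the `velero.io/csi-volumesnapshot-class: "true"` label and the CSI driver name, so it is worth confirming that a labelled class exists for the driver before backing up. A minimal sketch, assuming `kubectl` and `awk` are available; the function name `find_velero_snapshot_class` is hypothetical.

```shell
# find_velero_snapshot_class DRIVER
# Prints the names of VolumeSnapshotClasses that carry the Velero label and
# belong to DRIVER; fails if there are none. (Hypothetical helper.)
find_velero_snapshot_class() {
    driver=$1
    kubectl get volumesnapshotclass \
        -l velero.io/csi-volumesnapshot-class=true \
        -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.driver}{"\n"}{end}' |
        awk -v d="$driver" '$2 == d { print $1; found = 1 } END { exit !found }'
}
```

With the class created above, `find_velero_snapshot_class nfs.csi.k8s.io` should print `nfs-csi`.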

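The CSI snapshot-based backup mechanism

#For certain volume plugins built into Kubernetes that natively support snapshots, such as Amazon EBS Volumes, Azure Managed Disks and Google Persistent Disks,

#Velero can automatically snapshot such volumes while running a backup. Velero's plugin-based architecture

#also lets users quickly build plugins for custom object storage and block storage backends. Alternatively, if the storage service is provided through the CSI interface and its driver supports volume snapshots, Velero can use CSI volume snapshots uniformly during backup and restore through the velero-plugin-for-csi plugin.

#Velero's FSB mechanism only supplements the snapshot methods above, and it is the only available method when a Pod uses a volume that does not support snapshots.

#Important: a CSI volume snapshot is a point-in-time copy of the PV, so its data is more consistent than a file system backup.

#Velero supports two ways of backing up Kubernetes resources and volume data based on CSI snapshots:

#Back up the Kubernetes resources to object storage and create CSI snapshots of the PVs

#Back up the Kubernetes resources to object storage and create CSI snapshots of the PVs, then upload the data in the snapshots to the object storage system

#To use CSI volume snapshots, the velero-plugin-for-csi plugin must be deployed beforehand and the CSI feature must be enabled when Velero is deployed. The command below includes the two settings required for CSI support: "--features=EnableCSI" and "--plugins=velero/velero-plugin-for-csi:v0.7.0".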

root@ubuntu-k8s-master01:~# velero uninstall

#Reinstall with --features=EnableCSI and the velero/velero-plugin-for-csi:v0.7.0 plugin

velero install \
  --secret-file=./credentials-velero \
  --provider=aws \
  --bucket=dzzz \
  --backup-location-config region=minio,s3ForcePathStyle=true,s3Url=http://192.168.40.104:9000 \
  --plugins=velero/velero-plugin-for-aws:v1.9.0,velero/velero-plugin-for-csi:v0.7.0 \
  --use-volume-snapshots=true \
  --features=EnableCSI \
  --snapshot-location-config region=minio \
  --use-node-agent \
  --uploader-type=kopia

root@ubuntu-k8s-master01:~# kubectl get pods -n velero

NAME READY STATUS RESTARTS AGE

node-agent-7tnnl 1/1 Running 0 2m14s

node-agent-f7bw4 1/1 Running 0 2m14s

velero-759b79bcb-pr9gp 1/1 Running 0 2m14s

#Back up the demo namespace with CSI snapshots and snapshot data movement

root@ubuntu-k8s-master01:~# kubectl get pvc -n demo

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

nfs-pvc Bound pvc-5628dd5b-5360-4e33-93b6-8ce42e2da07e 3Gi RWX nfs-csi <unset> 34m

root@ubuntu-k8s-master01:~# velero backup create snapshot-volumes --include-namespaces demo --snapshot-volumes --snapshot-move-data

Backup request "snapshot-volumes" submitted successfully.

Run `velero backup describe snapshot-volumes` or `velero backup logs snapshot-volumes` for more details.

root@ubuntu-k8s-master01:~# velero backup get

NAME STATUS ERRORS WARNINGS CREATED EXPIRES STORAGE LOCATION SELECTOR

demo Completed 0 0 2024-03-21 06:46:30 +0000 UTC 29d default <none>

demo002 Completed 0 0 2024-03-21 08:43:05 +0000 UTC 29d default <none>

redis-backup Completed 0 0 2024-03-21 08:01:48 +0000 UTC 29d default <none>

snapshot-volumes Completed 0 0 2024-03-21 08:45:23 +0000 UTC 29d default <none>

root@ubuntu-k8s-master01:~# kubectl get vsc -n demo

NAME READYTOUSE RESTORESIZE DELETIONPOLICY DRIVER VOLUMESNAPSHOTCLASS VOLUMESNAPSHOT VOLUMESNAPSHOTNAMESPACE AGE

snapcontent-cb699f44-26eb-4b8f-874b-63dce92db198 true 0 Retain nfs.csi.k8s.io nfs-csi name-7e426614-0670-4ecc-9722-fd4179dc64bc ns-7e426614-0670-4ecc-9722-fd4179dc64bc 15s

#View the details

root@ubuntu-k8s-master01:~# velero backup describe snapshot-volumes --details

Name: snapshot-volumes

Namespace: velero

Labels: velero.io/storage-location=default

Annotations: velero.io/resource-timeout=10m0s

velero.io/source-cluster-k8s-gitversion=v1.29.2

velero.io/source-cluster-k8s-major-version=1

velero.io/source-cluster-k8s-minor-version=29

Phase: Completed

Namespaces:

Included: demo

Excluded: <none>

Resources:

Included: *

Excluded: <none>

Cluster-scoped: auto

Label selector: <none>

Or label selector: <none>

Storage Location: default

Velero-Native Snapshot PVs: true

Snapshot Move Data: true

Data Mover: velero

TTL: 720h0m0s

CSISnapshotTimeout: 10m0s

ItemOperationTimeout: 4h0m0s

Hooks: <none>

Backup Format Version: 1.1.0

Started: 2024-03-21 08:45:23 +0000 UTC

Completed: 2024-03-21 08:45:43 +0000 UTC

Expiration: 2024-04-20 08:45:23 +0000 UTC

Total items to be backed up: 42

Items backed up: 42

Backup Item Operations:

Operation for persistentvolumeclaims demo/nfs-pvc:

Backup Item Action Plugin: velero.io/csi-pvc-backupper

Operation ID: du-114c27e0-3e91-4d7c-8bf2-c0030a4fa931.5628dd5b-5360-4e3d09bc0

Items to Update:

datauploads.velero.io velero/snapshot-volumes-w6q69

Phase: Completed

Progress: 120 of 120 complete (Bytes)

Progress description: Completed

Created: 2024-03-21 08:45:30 +0000 UTC

Started: 2024-03-21 08:45:30 +0000 UTC

Updated: 2024-03-21 08:45:40 +0000 UTC

Resource List:

apiextensions.k8s.io/v1/CustomResourceDefinition:

- ciliumendpoints.cilium.io

apps/v1/Deployment:

- demo/demoapp

apps/v1/ReplicaSet:

- demo/demoapp-7c58cd6bb

cilium.io/v2/CiliumEndpoint:

- demo/demoapp-7c58cd6bb-cs2fc

- demo/demoapp-7c58cd6bb-r4rcb

- demo/demoapp-7c58cd6bb-w7ckj

- demo/redis-with-nfs-pvc

v1/ConfigMap:

- demo/kube-root-ca.crt

v1/Event:

- demo/demoapp-7c58cd6bb-cs2fc.17beb915c18e0850

- demo/demoapp-7c58cd6bb-cs2fc.17beb915eca8e9c4

- demo/demoapp-7c58cd6bb-cs2fc.17beb915ed78ff43

- demo/demoapp-7c58cd6bb-cs2fc.17beb915f0fbf262

- demo/demoapp-7c58cd6bb-r4rcb.17beb915c46ea653

- demo/demoapp-7c58cd6bb-r4rcb.17beb916072e412f

- demo/demoapp-7c58cd6bb-r4rcb.17beb91607a6f8b5

- demo/demoapp-7c58cd6bb-r4rcb.17beb91609d37aca

- demo/demoapp-7c58cd6bb-w7ckj.17beb915c6f029a9

- demo/demoapp-7c58cd6bb-w7ckj.17beb91608d08835

- demo/demoapp-7c58cd6bb-w7ckj.17beb916094e2622

- demo/demoapp-7c58cd6bb-w7ckj.17beb9160b1db7be

- demo/nfs-pvc.17beb915be41a41e

- demo/nfs-pvc.17beb915bff08f05

- demo/nfs-pvc.17beb915c64de497

- demo/redis-with-nfs-pvc.17beb915c9a43cc7

- demo/redis-with-nfs-pvc.17beb915eae13de8

- demo/redis-with-nfs-pvc.17beb93142b2d330

- demo/redis-with-nfs-pvc.17beb9314341f490

- demo/redis-with-nfs-pvc.17beb9314544c178

- demo/redis-with-nfs-pvc.17beb931d1dfb14e

- demo/redis-with-nfs-pvc.17beb931d24af1f0

- demo/redis-with-nfs-pvc.17beb931d444c8ce

- demo/velero-nfs-pvc-g74jf.17bebb105d4f87da

- demo/velero-nfs-pvc-g74jf.17bebb10740513d9

- demo/velero-nfs-pvc-g74jf.17bebb1074053f69

v1/Namespace:

- demo

v1/PersistentVolume:

- pvc-5628dd5b-5360-4e33-93b6-8ce42e2da07e

v1/PersistentVolumeClaim:

- demo/nfs-pvc

v1/Pod:

- demo/demoapp-7c58cd6bb-cs2fc

- demo/demoapp-7c58cd6bb-r4rcb

- demo/demoapp-7c58cd6bb-w7ckj

- demo/redis-with-nfs-pvc

v1/ServiceAccount:

- demo/default

Backup Volumes:

Velero-Native Snapshots: <none included>

CSI Snapshots:

demo/nfs-pvc:

Data Movement:

Operation ID: du-114c27e0-3e91-4d7c-8bf2-c0030a4fa931.5628dd5b-5360-4e3d09bc0

Data Mover: velero

Uploader Type: kopia

Pod Volume Backups: <none included>

HooksAttempted: 0

HooksFailed: 0
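With `--snapshot-move-data`, the upload of each snapshot is tracked by a DataUpload resource in the `velero` namespace (the `datauploads.velero.io` entry in the output above). A minimal sketch of listing them and checking that all have finished, assuming `kubectl` and `awk`; both function names are hypothetical.

```shell
# list_datauploads
# Prints "NAME<TAB>PHASE" for every DataUpload in the velero namespace.
list_datauploads() {
    kubectl get datauploads.velero.io -n velero \
        -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.phase}{"\n"}{end}'
}

# all_datauploads_completed
# Succeeds only if no DataUpload is in a phase other than Completed.
# Note: it also succeeds when there are no DataUploads at all.
all_datauploads_completed() {
    list_datauploads | awk '$2 != "Completed" { bad = 1 } END { exit bad }'
}
```

After the backup above, `list_datauploads` would be expected to show the `snapshot-volumes-w6q69` upload reported in the describe output.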

#Simulate a failure by deleting the demo namespace

root@ubuntu-k8s-master01:~# kubectl delete ns demo

namespace "demo" deleted

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

No resources found in demo namespace.

root@ubuntu-k8s-master01:~# kubectl get pvc -n demo

No resources found in demo namespace.

root@ubuntu-k8s-master01:~# velero restore create --from-backup snapshot-volumes

Restore request "snapshot-volumes-20240321085244" submitted successfully.

Run `velero restore describe snapshot-volumes-20240321085244` or `velero restore logs snapshot-volumes-20240321085244` for more details.

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 8s

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 8s

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 8s

redis-with-nfs-pvc 0/1 Pending 0 7s

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 44s

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 44s

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 44s

redis-with-nfs-pvc 1/1 Running 0 43s

root@ubuntu-k8s-master01:~# kubectl get pvc -n demo #the PVC has been restored

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

nfs-pvc Bound pvc-80ab07f3-d254-4b36-8b46-072f273cc1ca 3Gi RWX nfs-csi <unset> 72s

root@ubuntu-k8s-master01:~# kubectl describe pvc nfs-pvc -n demo

Name: nfs-pvc

Namespace: demo

StorageClass: nfs-csi

Status: Bound

Volume: pvc-80ab07f3-d254-4b36-8b46-072f273cc1ca

Labels: velero.io/backup-name=snapshot-volumes

velero.io/restore-name=snapshot-volumes-20240321085244

velero.io/volume-snapshot-name=velero-nfs-pvc-fztlf

Annotations: backup.velero.io/must-include-additional-items: true

pv.kubernetes.io/bind-completed: yes

velero.io/csi-volumesnapshot-class: nfs-csi

volume.beta.kubernetes.io/storage-provisioner: nfs.csi.k8s.io

volume.kubernetes.io/storage-provisioner: nfs.csi.k8s.io

Finalizers: [kubernetes.io/pvc-protection]

Capacity: 3Gi

Access Modes: RWX

VolumeMode: Filesystem

Used By: redis-with-nfs-pvc

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning ProvisioningFailed 88s persistentvolume-controller Error saving claim: Operation cannot be fulfilled on persistentvolumeclaims "nfs-pvc": the object has been modified; please apply your changes to the latest version and try again

Normal Provisioning 82s nfs.csi.k8s.io_ubuntu-k8s-master01_935add09-f5e4-4b8a-a2c8-7826428a2f8e External provisioner is provisioning volume for claim "demo/nfs-pvc"

Warning ProvisioningFailed 82s nfs.csi.k8s.io_ubuntu-k8s-master01_935add09-f5e4-4b8a-a2c8-7826428a2f8e failed to provision volume with StorageClass "nfs-csi": claim Selector is not supported

Normal ExternalProvisioning 82s (x2 over 82s) persistentvolume-controller Waiting for a volume to be created either by the external provisioner 'nfs.csi.k8s.io' or manually by the system administrator. If volume creation is delayed, please verify that the provisioner is running and correctly registered.

Warning FailedBinding 82s persistentvolume-controller volume "pvc-80ab07f3-d254-4b36-8b46-072f273cc1ca" already bound to a different claim.

root@ubuntu-k8s-master01:~# velero restore get

NAME BACKUP STATUS STARTED COMPLETED ERRORS WARNINGS CREATED SELECTOR

snapshot-volumes-20240321085244 snapshot-volumes Completed 2024-03-21 08:52:44 +0000 UTC 2024-03-21 08:53:13 +0000 UTC 0 2 2024-03-21 08:52:44 +0000 UTC <none>

#Verify the data

root@ubuntu-k8s-master01:~# kubectl get pods -n demo

NAME READY STATUS RESTARTS AGE

demoapp-7c58cd6bb-cs2fc 1/1 Running 0 3m1s

demoapp-7c58cd6bb-r4rcb 1/1 Running 0 3m1s

demoapp-7c58cd6bb-w7ckj 1/1 Running 0 3m1s

redis-with-nfs-pvc 1/1 Running 0 3m

root@ubuntu-k8s-master01:~# kubectl exec -it redis-with-nfs-pvc -n demo -- /bin/sh

Defaulted container "redis" out of: redis, restore-wait (init)

/data # redis-cli

127.0.0.1:6379> get mykey

"BIRKHOFF 2024-03-21"

127.0.0.1:6379>
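The interactive check above can also be done in one non-interactive step, which is convenient after every restore. A minimal sketch, assuming `kubectl` access and that the container is named `redis` as in the Pod manifest; the function name `verify_redis_key` is hypothetical. When its output is not a terminal, `redis-cli` prints the raw value without the surrounding quotes.

```shell
# verify_redis_key POD NAMESPACE KEY EXPECTED_VALUE
# Reads KEY from the Redis Pod and compares it with EXPECTED_VALUE.
# (Hypothetical helper; -c redis selects the application container.)
verify_redis_key() {
    pod=$1
    ns=$2
    key=$3
    expected=$4
    actual=$(kubectl exec "$pod" -n "$ns" -c redis -- redis-cli get "$key")
    if [ "$actual" = "$expected" ]; then
        echo "OK: $key restored"
        return 0
    fi
    echo "MISMATCH: $key is '$actual', expected '$expected'" >&2
    return 1
}
```

For this walkthrough the call would be `verify_redis_key redis-with-nfs-pvc demo mykey "BIRKHOFF 2024-03-21"`.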

