Knative: Eventing Components, How It Works, and Getting Started (Part 7)

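Knative Eventing:

Knative's three components:

Serving: BaaS
    Orchestrates stateless server (daemon) applications that speak HTTP or gRPC
    Such applications can also be orchestrated directly by Kubernetes (Deployment, HPA, Service, Ingress)

Eventing: infrastructure for event-driven architecture (EDA)
    Targets stateless HTTP- or gRPC-based server (daemon) applications that adopt an EDA
    Orchestration: still handled by Serving
    Eventing only provides the messaging infrastructure

Build: CI/CD pipeline, Tekton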

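Event-driven architecture:
    Events
    Message delivery

Implementing message delivery:

Sources: the message producers. A source is usually one program (service) within a distributed application, typically run by Knative Serving or directly by a Kubernetes Deployment.

Sinks: the message receivers, which must be addressable or callable
    (1) Channel
    (2) Broker
    (3) A program (service) within a distributed application, typically run by Knative Serving or directly by a Kubernetes Deployment

Note: the final destination of a message is almost always a program (service) in a distributed application. So when a message is delivered to a Channel or Broker, it usually has to be passed further along until it reaches that final program.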

Message delivery patterns:

One-to-one: source --> ksvc or svc

One-to-many (no filtering): source --> Channel <-- Subscriptions --> Subscriber (ksvc or svc)

One-to-many (with filtering): source --> Broker <-- Triggers --> ksvc or svc

More complex processing flows are also possible. The processing can be defined as a Flow component:

Sequence:

    A --> B --> C --> D --> E

Parallel:

          |--> B --|
    A --> |--> C --|--> E
          |--> D --|

Knative Eventing components

#Knative Eventing has four basic components: Sources, Brokers, Triggers, and Sinks

◼ Events are sent from a Source to a Sink

◼ A Sink is an Addressable or Callable resource that can receive incoming events

◆Knative Services, Channels, and Brokers can all act as Sinks

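#Key Knative Eventing terms

◼ Event Source

◆In Knative Eventing, an event source is essentially the producer of events

◆Events are sent to a Sink or a Subscriber

◼ Channel and Subscription

◆The event pipe model: formats and routes events between a Channel and each of its Subscriptions

◼ Broker and Trigger

◆The event mesh model: producers send events to a Broker, which then delivers them to the consumers through Triggers

◆Each consumer uses a Trigger to subscribe to the events on the Broker that it is interested in

◼ Event Registry

◆Knative Eventing uses EventType objects to help consumers discover which event types they can consume from a Broker

◆The Event Registry is the collection of stored EventTypes, which contain all the information a consumer needs to create a Trigger

Event processing diagram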

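Event Source: the producer abstraction, responsible for importing events from the real event source into the Eventing topology

Event Type: the type of an event; event types are defined in the Event Registry

Flow: an event processing flow; it can be wired up by hand or defined through the dedicated API

Event Sinks: Addressable or Callable resources that can receive events, such as a Knative Service (ksvc)

Knative event delivery patterns (1)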

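#In Knative Eventing, the Sinks that receive events are mainly of three kinds: Knative Services, Channels, and Brokers

#Event delivery patterns supported by Knative Eventing

◼ Sources to Sink

◆A single-Sink pattern: there is no queuing or filtering as events are received

◆The Event Source's only job is to deliver the message; it does not need to wait for a response from the Sink

◆fire and forget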

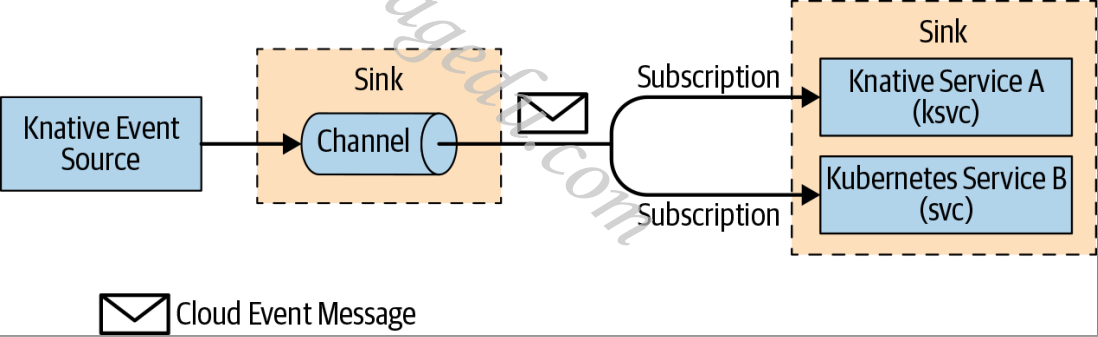
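The Sources to Sink pattern can be sketched with a PingSource that posts a fixed payload straight to a Knative Service. This is a minimal sketch, not the author's setup: the resource name `ping-every-minute`, the schedule, and the payload are assumptions made for illustration, and it presumes a ksvc named `event-display` (the demo service used later in this article) exists in the `default` namespace.

```shell
# Write a PingSource manifest: one source, one sink, no queuing or filtering in between.
cat > pingsource-to-ksvc.yaml <<'EOF'
apiVersion: sources.knative.dev/v1
kind: PingSource
metadata:
  name: ping-every-minute        # hypothetical name
  namespace: default
spec:
  schedule: "* * * * *"          # cron expression: once per minute (assumed)
  contentType: "application/json"
  data: '{"msg": "Hello from PingSource"}'
  sink:                          # the single Sink that receives every event
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
EOF

# Apply it on a cluster where Knative Eventing and Serving are installed:
# kubectl apply -f pingsource-to-ksvc.yaml
```

Once applied, the events should show up in the logs of the `event-display` pod in the same way as the curl-generated events shown later.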

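◼ Channels and Subscriptions

◆The Event Source sends events to a Channel

◆A Channel can have one or more Subscriptions (that is, Sinks)

◆Every event in the Channel is formatted as a CloudEvent and sent to each Subscription

◆Message filtering is not supported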
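The Channel and Subscription pattern could look roughly like the manifest below. It is a sketch under assumptions: the names `demo-channel` and `demo-subscription` are hypothetical, the subscriber is assumed to be a ksvc called `event-display`, and a default Channel implementation (such as the in-memory channel) must already be installed.

```shell
# Write a Channel plus one Subscription; more Subscriptions on the same Channel
# would each receive a copy of every event (fan-out without filtering).
cat > channel-subscription.yaml <<'EOF'
apiVersion: messaging.knative.dev/v1
kind: Channel
metadata:
  name: demo-channel             # hypothetical name
  namespace: default
---
apiVersion: messaging.knative.dev/v1
kind: Subscription
metadata:
  name: demo-subscription        # hypothetical name
  namespace: default
spec:
  channel:                       # the Channel this Subscription listens on
    apiVersion: messaging.knative.dev/v1
    kind: Channel
    name: demo-channel
  subscriber:                    # where the events are delivered
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
EOF

# kubectl apply -f channel-subscription.yaml
```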

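Knative event delivery patterns (2)

#Event delivery patterns supported by Knative Eventing

◼ Brokers and Triggers

◆Works like the Channel and Subscription pattern, but supports message filtering

◆Filtering lets a subscriber select the events it is interested in through a Trigger, which is a conditional expression on event attributes

◆A Trigger subscribes to a Broker and filters the messages on that Broker

◆Before handing a message to an interested subscriber, the Trigger is also responsible for formatting it

◆This is the delivery pattern recommended for production use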
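A Broker with a filtering Trigger might be declared as follows. This is only a sketch: the Trigger name `sayhi-trigger` is hypothetical, the filter reuses the event type `com.magedu.sayhievent` from the curl example later in this article, and the subscriber is assumed to be the `event-display` ksvc.

```shell
# Write a Broker and a Trigger that forwards only events whose "type" attribute matches.
cat > broker-trigger.yaml <<'EOF'
apiVersion: eventing.knative.dev/v1
kind: Broker
metadata:
  name: default
  namespace: default
---
apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: sayhi-trigger            # hypothetical name
  namespace: default
spec:
  broker: default                # the Broker this Trigger subscribes to
  filter:
    attributes:                  # exact match on CloudEvents context attributes
      type: com.magedu.sayhievent
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
EOF

# kubectl apply -f broker-trigger.yaml
```

Events of any other type sent to the Broker would simply not be delivered through this Trigger.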

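Installing Eventing

Deploying Knative Eventing:

Deploy the core components:
    CRDs
    controller

Deploy the Channel layer

Deploy the Broker layer

What Sources do:

They convert events emitted by traditional programs that do not produce the CloudEvents format into CloudEvents

1. Install Knative Eventing

[Version 1.7.1]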

https://github.com/knative/eventing/releases/download/knative-v1.7.1/eventing-core.yaml

https://github.com/knative/eventing/releases/download/knative-v1.7.1/eventing-crds.yaml

[root@xianchaomaster1 bookinfo]# kubectl apply -f /root/KnativeSrc/eventing-crds.yaml

customresourcedefinition.apiextensions.k8s.io/apiserversources.sources.knative.dev created

customresourcedefinition.apiextensions.k8s.io/brokers.eventing.knative.dev created

customresourcedefinition.apiextensions.k8s.io/channels.messaging.knative.dev created

customresourcedefinition.apiextensions.k8s.io/containersources.sources.knative.dev created

customresourcedefinition.apiextensions.k8s.io/eventtypes.eventing.knative.dev created

customresourcedefinition.apiextensions.k8s.io/parallels.flows.knative.dev created

customresourcedefinition.apiextensions.k8s.io/pingsources.sources.knative.dev created

customresourcedefinition.apiextensions.k8s.io/sequences.flows.knative.dev created

customresourcedefinition.apiextensions.k8s.io/sinkbindings.sources.knative.dev created

customresourcedefinition.apiextensions.k8s.io/subscriptions.messaging.knative.dev created

customresourcedefinition.apiextensions.k8s.io/triggers.eventing.knative.dev created

[root@xianchaomaster1 bookinfo]# kubectl api-resources --api-group=messaging.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

channels ch messaging.knative.dev/v1 true Channel

subscriptions sub messaging.knative.dev/v1 true Subscription

[root@xianchaomaster1 bookinfo]# kubectl api-resources --api-group=eventing.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

brokers eventing.knative.dev/v1 true Broker

eventtypes eventing.knative.dev/v1beta1 true EventType

triggers eventing.knative.dev/v1 true Trigger

[root@xianchaomaster1 bookinfo]# kubectl api-resources --api-group=flows.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

parallels flows.knative.dev/v1 true Parallel

sequences flows.knative.dev/v1 true Sequence

# Deploy eventing-core.yaml; it references the following images:

gcr.io/knative-releases/knative.dev/eventing/cmd/controller@sha256:49110e5609ee3ae7a64015e467619312553e3a6f7defdf295f1c8c2d9b718834

gcr.io/knative-releases/knative.dev/eventing/cmd/mtping@sha256:ee90e11a11c14f9210255da1a834690321a3b525143d9bafec6f1381c9de53c1

gcr.io/knative-releases/knative.dev/eventing/cmd/webhook@sha256:7bbc84ea817f692cf0b2e36e890387a8e9feb0a3e04f632e57fafeada485a833

Replace gcr.io/knative-releases with gcr.lank8s.cn/knative-releases, so the images become:

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/controller@sha256:49110e5609ee3ae7a64015e467619312553e3a6f7defdf295f1c8c2d9b718834

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/mtping@sha256:ee90e11a11c14f9210255da1a834690321a3b525143d9bafec6f1381c9de53c1

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/webhook@sha256:7bbc84ea817f692cf0b2e36e890387a8e9feb0a3e04f632e57fafeada485a833
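Rather than editing each image line by hand, the registry prefix can be rewritten with `sed`. The helper name `rewrite_registry` and the output file name are assumptions made for this sketch; the mirror host is the one used in this article.

```shell
# Rewrite every gcr.io/knative-releases reference to the mirror registry.
# Reads a manifest on stdin and writes the rewritten manifest to stdout.
rewrite_registry() {
  sed 's#gcr\.io/knative-releases#gcr.lank8s.cn/knative-releases#g'
}

# Try it on a single image reference taken from eventing-core.yaml:
printf '%s\n' 'gcr.io/knative-releases/knative.dev/eventing/cmd/controller@sha256:49110e5609ee3ae7a64015e467619312553e3a6f7defdf295f1c8c2d9b718834' | rewrite_registry

# Against the downloaded manifest (path as used in this article, output name assumed):
# rewrite_registry < /root/KnativeSrc/eventing-core.yaml > eventing-core.mirror.yaml
```

The same helper applies unchanged to in-memory-channel.yaml and mt-channel-broker.yaml, since they use the same registry prefix.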

# Pull the images

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/controller@sha256:49110e5609ee3ae7a64015e467619312553e3a6f7defdf295f1c8c2d9b718834

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/mtping@sha256:ee90e11a11c14f9210255da1a834690321a3b525143d9bafec6f1381c9de53c1

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/webhook@sha256:7bbc84ea817f692cf0b2e36e890387a8e9feb0a3e04f632e57fafeada485a833

[root@xianchaomaster1 bookinfo]# kubectl apply -f /root/KnativeSrc/eventing-core.yaml

namespace/knative-eventing created

serviceaccount/eventing-controller created

clusterrolebinding.rbac.authorization.k8s.io/eventing-controller created

clusterrolebinding.rbac.authorization.k8s.io/eventing-controller-resolver created

clusterrolebinding.rbac.authorization.k8s.io/eventing-controller-source-observer created

clusterrolebinding.rbac.authorization.k8s.io/eventing-controller-sources-controller created

clusterrolebinding.rbac.authorization.k8s.io/eventing-controller-manipulator created

serviceaccount/pingsource-mt-adapter created

clusterrolebinding.rbac.authorization.k8s.io/knative-eventing-pingsource-mt-adapter created

serviceaccount/eventing-webhook created

clusterrolebinding.rbac.authorization.k8s.io/eventing-webhook created

rolebinding.rbac.authorization.k8s.io/eventing-webhook created

clusterrolebinding.rbac.authorization.k8s.io/eventing-webhook-resolver created

clusterrolebinding.rbac.authorization.k8s.io/eventing-webhook-podspecable-binding created

configmap/config-br-default-channel created

configmap/config-br-defaults created

configmap/default-ch-webhook created

configmap/config-ping-defaults created

configmap/config-features created

configmap/config-kreference-mapping created

configmap/config-leader-election created

configmap/config-logging created

configmap/config-observability created

configmap/config-sugar created

configmap/config-tracing created

deployment.apps/eventing-controller created

deployment.apps/pingsource-mt-adapter created

Warning: autoscaling/v2beta2 HorizontalPodAutoscaler is deprecated in v1.23+, unavailable in v1.26+; use autoscaling/v2 HorizontalPodAutoscaler

horizontalpodautoscaler.autoscaling/eventing-webhook created

poddisruptionbudget.policy/eventing-webhook created

deployment.apps/eventing-webhook created

service/eventing-webhook created

customresourcedefinition.apiextensions.k8s.io/apiserversources.sources.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/brokers.eventing.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/channels.messaging.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/containersources.sources.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/eventtypes.eventing.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/parallels.flows.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/pingsources.sources.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/sequences.flows.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/sinkbindings.sources.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/subscriptions.messaging.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/triggers.eventing.knative.dev unchanged

clusterrole.rbac.authorization.k8s.io/addressable-resolver created

clusterrole.rbac.authorization.k8s.io/service-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/serving-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/channel-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/broker-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/flows-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/eventing-broker-filter created

clusterrole.rbac.authorization.k8s.io/eventing-broker-ingress created

clusterrole.rbac.authorization.k8s.io/eventing-config-reader created

clusterrole.rbac.authorization.k8s.io/channelable-manipulator created

clusterrole.rbac.authorization.k8s.io/meta-channelable-manipulator created

clusterrole.rbac.authorization.k8s.io/knative-eventing-namespaced-admin created

clusterrole.rbac.authorization.k8s.io/knative-messaging-namespaced-admin created

clusterrole.rbac.authorization.k8s.io/knative-flows-namespaced-admin created

clusterrole.rbac.authorization.k8s.io/knative-sources-namespaced-admin created

clusterrole.rbac.authorization.k8s.io/knative-bindings-namespaced-admin created

clusterrole.rbac.authorization.k8s.io/knative-eventing-namespaced-edit created

clusterrole.rbac.authorization.k8s.io/knative-eventing-namespaced-view created

clusterrole.rbac.authorization.k8s.io/knative-eventing-controller created

clusterrole.rbac.authorization.k8s.io/knative-eventing-pingsource-mt-adapter created

clusterrole.rbac.authorization.k8s.io/podspecable-binding created

clusterrole.rbac.authorization.k8s.io/builtin-podspecable-binding created

clusterrole.rbac.authorization.k8s.io/source-observer created

clusterrole.rbac.authorization.k8s.io/eventing-sources-source-observer created

clusterrole.rbac.authorization.k8s.io/knative-eventing-sources-controller created

clusterrole.rbac.authorization.k8s.io/knative-eventing-webhook created

role.rbac.authorization.k8s.io/knative-eventing-webhook created

validatingwebhookconfiguration.admissionregistration.k8s.io/config.webhook.eventing.knative.dev created

mutatingwebhookconfiguration.admissionregistration.k8s.io/webhook.eventing.knative.dev created

validatingwebhookconfiguration.admissionregistration.k8s.io/validation.webhook.eventing.knative.dev created

secret/eventing-webhook-certs created

mutatingwebhookconfiguration.admissionregistration.k8s.io/sinkbindings.webhook.sources.knative.dev created

[root@xianchaomaster1 bookinfo]# kubectl get ns

NAME STATUS AGE

default Active 11d

istio-system Active 28h

knative-eventing Active 78s

knative-serving Active 29h

kube-node-lease Active 11d

kube-public Active 11d

kube-system Active 11d

[root@xianchaomaster1 bookinfo]# kubectl get pods -n knative-eventing

NAME READY STATUS RESTARTS AGE

eventing-controller-7b6fc5969c-rnxqn 1/1 Running 0 92s

eventing-webhook-786dbf4c49-nckrk 1/1 Running 0 92s

2. Optional: Install a default Channel (messaging) layer

2.1 In-Memory (standalone)

https://github.com/knative/eventing/releases/download/knative-v1.7.1/in-memory-channel.yaml

gcr.io/knative-releases/knative.dev/eventing/cmd/in_memory/channel_controller@sha256:1e9602791e76dc7556446ea1645b8721c853f747a59c7ef3d3f028510e0d5072

gcr.io/knative-releases/knative.dev/eventing/cmd/in_memory/channel_dispatcher@sha256:029c65cad7c7b1b63427c0df25dbbf8a02815c829949821d972354e9e983525b

Change to:

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/in_memory/channel_controller@sha256:1e9602791e76dc7556446ea1645b8721c853f747a59c7ef3d3f028510e0d5072

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/in_memory/channel_dispatcher@sha256:029c65cad7c7b1b63427c0df25dbbf8a02815c829949821d972354e9e983525b

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/in_memory/channel_controller@sha256:1e9602791e76dc7556446ea1645b8721c853f747a59c7ef3d3f028510e0d5072

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/in_memory/channel_dispatcher@sha256:029c65cad7c7b1b63427c0df25dbbf8a02815c829949821d972354e9e983525b

[root@xianchaomaster1 bookinfo]# kubectl apply -f /root/KnativeSrc/in-memory-channel.yaml

serviceaccount/imc-controller created

clusterrolebinding.rbac.authorization.k8s.io/imc-controller created

rolebinding.rbac.authorization.k8s.io/imc-controller created

clusterrolebinding.rbac.authorization.k8s.io/imc-controller-resolver created

serviceaccount/imc-dispatcher created

clusterrolebinding.rbac.authorization.k8s.io/imc-dispatcher created

configmap/config-imc-event-dispatcher created

configmap/config-observability unchanged

configmap/config-tracing unchanged

deployment.apps/imc-controller created

service/inmemorychannel-webhook created

service/imc-dispatcher created

deployment.apps/imc-dispatcher created

customresourcedefinition.apiextensions.k8s.io/inmemorychannels.messaging.knative.dev created

clusterrole.rbac.authorization.k8s.io/imc-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/imc-channelable-manipulator created

clusterrole.rbac.authorization.k8s.io/imc-controller created

clusterrole.rbac.authorization.k8s.io/imc-dispatcher created

role.rbac.authorization.k8s.io/knative-inmemorychannel-webhook created

mutatingwebhookconfiguration.admissionregistration.k8s.io/inmemorychannel.eventing.knative.dev created

validatingwebhookconfiguration.admissionregistration.k8s.io/validation.inmemorychannel.eventing.knative.dev created

secret/inmemorychannel-webhook-certs created

[root@xianchaomaster1 bookinfo]# kubectl get pods -n knative-eventing

NAME READY STATUS RESTARTS AGE

eventing-controller-7b6fc5969c-rnxqn 1/1 Running 0 10m

eventing-webhook-786dbf4c49-nckrk 1/1 Running 0 10m

imc-controller-68d854949d-w9x4k 1/1 Running 0 35s

imc-dispatcher-7c8f6559b9-ngwzq 1/1 Running 0 35s

3. Optional: Install a Broker layer

3.1 MT-Channel-based

https://github.com/knative/eventing/releases/download/knative-v1.7.1/mt-channel-broker.yaml

gcr.io/knative-releases/knative.dev/eventing/cmd/broker/filter@sha256:2d118fa07545b7b510b06416dcfb8396f5a815303ce70e91f5b1ab1f335751ed

gcr.io/knative-releases/knative.dev/eventing/cmd/mtchannel_broker@sha256:84db786596618bb49bd864f6689e0447c7d4d795a7bd69e61f868b1b7747aa20

gcr.io/knative-releases/knative.dev/eventing/cmd/broker/ingress@sha256:5cfacba62237cef36072b2f594d0314cf216628fcb5fcf54043aaa748ec5f40d

Change to:

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/broker/filter@sha256:2d118fa07545b7b510b06416dcfb8396f5a815303ce70e91f5b1ab1f335751ed

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/mtchannel_broker@sha256:84db786596618bb49bd864f6689e0447c7d4d795a7bd69e61f868b1b7747aa20

gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/broker/ingress@sha256:5cfacba62237cef36072b2f594d0314cf216628fcb5fcf54043aaa748ec5f40d

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/broker/filter@sha256:2d118fa07545b7b510b06416dcfb8396f5a815303ce70e91f5b1ab1f335751ed

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/mtchannel_broker@sha256:84db786596618bb49bd864f6689e0447c7d4d795a7bd69e61f868b1b7747aa20

crictl pull gcr.lank8s.cn/knative-releases/knative.dev/eventing/cmd/broker/ingress@sha256:5cfacba62237cef36072b2f594d0314cf216628fcb5fcf54043aaa748ec5f40d

[root@xianchaomaster1 bookinfo]# kubectl apply -f /root/KnativeSrc/mt-channel-broker.yaml

clusterrole.rbac.authorization.k8s.io/knative-eventing-mt-channel-broker-controller created

clusterrole.rbac.authorization.k8s.io/knative-eventing-mt-broker-filter created

serviceaccount/mt-broker-filter created

clusterrole.rbac.authorization.k8s.io/knative-eventing-mt-broker-ingress created

serviceaccount/mt-broker-ingress created

clusterrolebinding.rbac.authorization.k8s.io/eventing-mt-channel-broker-controller created

clusterrolebinding.rbac.authorization.k8s.io/knative-eventing-mt-broker-filter created

clusterrolebinding.rbac.authorization.k8s.io/knative-eventing-mt-broker-ingress created

deployment.apps/mt-broker-filter created

service/broker-filter created

deployment.apps/mt-broker-ingress created

service/broker-ingress created

deployment.apps/mt-broker-controller created

Warning: autoscaling/v2beta2 HorizontalPodAutoscaler is deprecated in v1.23+, unavailable in v1.26+; use autoscaling/v2 HorizontalPodAutoscaler

horizontalpodautoscaler.autoscaling/broker-ingress-hpa created

horizontalpodautoscaler.autoscaling/broker-filter-hpa created

[root@xianchaomaster1 bookinfo]# kubectl get pods -n knative-eventing

NAME READY STATUS RESTARTS AGE

eventing-controller-7b6fc5969c-rnxqn 1/1 Running 0 21m

eventing-webhook-786dbf4c49-nckrk 1/1 Running 0 21m

imc-controller-68d854949d-w9x4k 1/1 Running 0 11m

imc-dispatcher-7c8f6559b9-ngwzq 1/1 Running 0 11m

mt-broker-controller-86dd98fd58-zqsh7 1/1 Running 0 26s

mt-broker-filter-587668dcc6-9sx8c 1/1 Running 0 26s

mt-broker-ingress-84bddff64f-xw8xj 1/1 Running 0 26s

[root@xianchaomaster1 bookinfo]# kubectl get cm -n knative-eventing

NAME DATA AGE

config-br-default-channel 1 22m

config-br-defaults 1 22m

config-features 6 22m

config-imc-event-dispatcher 2 12m

config-kreference-mapping 1 22m

config-leader-election 1 22m

config-logging 3 22m

config-observability 1 22m

config-ping-defaults 1 22m

config-sugar 1 22m

config-tracing 1 22m

default-ch-webhook 1 22m

istio-ca-root-cert 1 22m

kube-root-ca.crt 1 22m

[root@xianchaomaster1 bookinfo]# kubectl get cm config-br-defaults -o yaml -n knative-eventing

apiVersion: v1

data:

default-br-config: |

clusterDefault:

brokerClass: MTChannelBasedBroker

apiVersion: v1

kind: ConfigMap

name: config-br-default-channel

namespace: knative-eventing

delivery:

retry: 10

backoffPolicy: exponential

backoffDelay: PT0.2S

kind: ConfigMap

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"v1","data":{"default-br-config":"clusterDefault:\n brokerClass: MTChannelBasedBroker\n apiVersion: v1\n kind: ConfigMap\n name: config-br-default-channel\n namespace: knative-eventing\n delivery:\n retry: 10\n backoffPolicy: exponential\n backoffDelay: PT0.2S\n"},"kind":"ConfigMap","metadata":{"annotations":{},"labels":{"app.kubernetes.io/name":"knative-eventing","app.kubernetes.io/version":"1.7.1","eventing.knative.dev/release":"v1.7.1"},"name":"config-br-defaults","namespace":"knative-eventing"}}

creationTimestamp: "2023-07-06T08:50:35Z"

labels:

app.kubernetes.io/name: knative-eventing

app.kubernetes.io/version: 1.7.1

eventing.knative.dev/release: v1.7.1

name: config-br-defaults

namespace: knative-eventing

resourceVersion: "466564"

uid: 5df83f4e-55a5-4209-875a-afd391376b7f

# apiVersion: messaging.knative.dev/v1

# kind: InMemoryChannel

[root@xianchaomaster1 bookinfo]# kubectl get cm config-br-default-channel -o yaml -n knative-eventing

apiVersion: v1

data:

channel-template-spec: |

apiVersion: messaging.knative.dev/v1

kind: InMemoryChannel

kind: ConfigMap

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"v1","data":{"channel-template-spec":"apiVersion: messaging.knative.dev/v1\nkind: InMemoryChannel\n"},"kind":"ConfigMap","metadata":{"annotations":{},"labels":{"app.kubernetes.io/name":"knative-eventing","app.kubernetes.io/version":"1.7.1","eventing.knative.dev/release":"v1.7.1"},"name":"config-br-default-channel","namespace":"knative-eventing"}}

creationTimestamp: "2023-07-06T08:50:35Z"

labels:

app.kubernetes.io/name: knative-eventing

app.kubernetes.io/version: 1.7.1

eventing.knative.dev/release: v1.7.1

name: config-br-default-channel

namespace: knative-eventing

resourceVersion: "466563"

uid: 06aa90b2-6b06-4794-bc17-6c7c24a63e12

4. Install optional Eventing extensions

Not installed.

CloudEvents example

# Pull the image on the worker node

[root@xianchaonode1 ~]# crictl pull ikubernetes/event_display

# Start the service

kn service create event-display --image ikubernetes/event_display --port 8080 --scale-min 1
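For readers who prefer a declarative manifest over the `kn` CLI, the command above corresponds roughly to the following Knative Service. This is a sketch under the assumption that `--scale-min 1` maps to the `autoscaling.knative.dev/min-scale` annotation and that the service lives in the `default` namespace.

```shell
# Write a Knative Service manifest roughly equivalent to the kn command above.
cat > event-display-ksvc.yaml <<'EOF'
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: event-display
  namespace: default
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/min-scale: "1"   # keep at least one pod running
    spec:
      containers:
        - image: ikubernetes/event_display
          ports:
            - containerPort: 8080
EOF

# kubectl apply -f event-display-ksvc.yaml
```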

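# To have ClusterDomainClaims (CDCs) created automatically, edit the ConfigMap; see "Knative: Domain Mapping and CDC Configuration (Part 3)"

# Edit "configmap/config-network" in the knative-serving namespace and add the following entry:

# autocreate-cluster-domain-claims: "true"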

[root@xianchaomaster1 domainmapping]# kubectl edit cm config-network -n knative-serving

autocreate-cluster-domain-claims: "true"

configmap/config-network edited

# Add the CDC by creating a domain mapping

[root@xianchaomaster1 ~]# kn domain create event.xks.com --ref "ksvc:event-display"

Domain mapping 'event.xks.com' created in namespace 'default'.

[root@xianchaomaster1 ~]# kubectl get cdc

NAME AGE

event.xks.com 12s

# Access from outside the cluster

# 1. Make the domain name resolvable first

[root@sonarqube plugins]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.40.145 jenkinsnew

192.168.40.146 jenkinsagent

192.168.40.147 sonarqube

192.168.40.190 event.xks.com

# 2. Send an event with curl

[root@sonarqube plugins]# curl -v "http://event.xks.com/" -X POST \

-H "Ce-Id: say-hello" \

-H "Ce-Specversion: 1.0" \

-H "Ce-Type: com.magedu.sayhievent" \

-H "Ce-Time: 2022-10-02T11:36:56.7181741Z" \

-H "Ce-Source: sendoff" \

-H "Content-Type: application/json" \

-d '{"msg":"Hello MageEdu Knative from External!"}'

* About to connect() to event.xks.com port 80 (#0)

* Trying 192.168.40.190...

* Connected to event.xks.com (192.168.40.190) port 80 (#0)

> POST / HTTP/1.1

> User-Agent: curl/7.29.0

> Host: event.xks.com

> Accept: */*

> Ce-Id: say-hello

> Ce-Specversion: 1.0

> Ce-Type: com.magedu.sayhievent

> Ce-Time: 2022-10-02T11:36:56.7181741Z

> Ce-Source: sendoff

> Content-Type: application/json

> Content-Length: 46

>

* upload completely sent off: 46 out of 46 bytes

< HTTP/1.1 200 OK

< content-length: 0

< date: Thu, 06 Jul 2023 13:28:58 GMT

< x-envoy-upstream-service-time: 7

< server: istio-envoy

<

* Connection #0 to host event.xks.com left intact

# 3. Check the logs to confirm the event was received

[root@xianchaomaster1 ~]# kubectl logs -f event-display-00001-deployment-84596bcb8d-h9qlq

Defaulted container "user-container" out of: user-container, queue-proxy

☁️ cloudevents.Event

Context Attributes,

specversion: 1.0

type: com.magedu.sayhievent

source: sendoff

id: say-hello

time: 2022-10-02T11:36:56.7181741Z

datacontenttype: application/json

Data,

{

"msg": "Hello MageEdu Knative from External!"

}
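The curl call above uses the CloudEvents binary content mode, where the context attributes travel as `Ce-*` HTTP headers. The CloudEvents HTTP binding also defines a structured mode, in which the whole event is one JSON document sent with `Content-Type: application/cloudevents+json`. The sketch below builds such a payload. The file name and the `id` value are assumptions, and whether the `ikubernetes/event_display` image accepts structured mode has not been verified here.

```shell
# Write the same event as a single structured-mode JSON document.
cat > sayhi-structured.json <<'EOF'
{
  "specversion": "1.0",
  "id": "say-hello-structured",
  "type": "com.magedu.sayhievent",
  "source": "sendoff",
  "time": "2022-10-02T11:36:56.7181741Z",
  "datacontenttype": "application/json",
  "data": {"msg": "Hello MageEdu Knative from External!"}
}
EOF

# Send it (requires the domain mapping and hosts entry set up above):
# curl -v "http://event.xks.com/" \
#   -H "Content-Type: application/cloudevents+json" \
#   -d @sayhi-structured.json
```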

# Access from inside the cluster

# 1. Start a client pod

[root@xianchaonode1 ~]# crictl pull ikubernetes/admin-box:v1.2

[root@xianchaomaster1 ~]# kubectl run client-$RANDOM --image=ikubernetes/admin-box:v1.2 --restart=Never -it --command -- /bin/sh

~$ curl -v "http://event-display.default.svc.cluster.local" -X POST \

-H "Ce-Id: say-hello" \

-H "Ce-Specversion: 1.0" \

-H "Ce-Type: com.magedu.sayhievent" \

-H "Ce-Time: 2022-10-02T11:35:56.7181741Z" \

-H "Ce-Source: sendoff" \

-H "Content-Type: application/json" \

-d '{"msg":"Hello MageEdu Knative from Internal!"}'

Note: Unnecessary use of -X or --request, POST is already inferred.

* Trying 10.96.27.221:80...

* TCP_NODELAY set

* Connected to event-display.default.svc.cluster.local (10.96.27.221) port 80 (#0)

> POST / HTTP/1.1

> Host: event-display.default.svc.cluster.local

> User-Agent: curl/7.67.0

> Accept: */*

> Ce-Id: say-hello

> Ce-Specversion: 1.0

> Ce-Type: com.magedu.sayhievent

> Ce-Time: 2022-10-02T11:35:56.7181741Z

> Ce-Source: sendoff

> Content-Type: application/json

> Content-Length: 46

>

* upload completely sent off: 46 out of 46 bytes

* Mark bundle as not supporting multiuse

< HTTP/1.1 200 OK

< content-length: 0

< date: Thu, 06 Jul 2023 13:33:16 GMT

< x-envoy-upstream-service-time: 1

< server: istio-envoy

<

* Connection #0 to host event-display.default.svc.cluster.local left intact

# 2. Check the logs to confirm the event was received

[root@xianchaomaster1 ~]# kubectl logs -f event-display-00001-deployment-84596bcb8d-h9qlq

☁️ cloudevents.Event

Context Attributes,

specversion: 1.0

type: com.magedu.sayhievent

source: sendoff

id: say-hello

time: 2022-10-02T11:35:56.7181741Z

datacontenttype: application/json

Data,

{

"msg": "Hello MageEdu Knative from Internal!"

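}

Logical components of Eventing

#Eventing API groups and their CRDs

◼ sources.knative.dev # API for declaratively configuring Event Sources; provides four out-of-the-box Sources

◆ApiServerSource: watches Kubernetes API events

◆ContainerSource: emits events to a Sink from a specified container

◆PingSource: produces events with a fixed payload on a periodic (cron) schedule

◆SinkBinding: links any addressable Kubernetes resource to any other Kubernetes resource that may produce events, so that the former receives events from the latter

◼ eventing.knative.dev # API for declaratively configuring the "event mesh model"

◆Broker

◆EventType

◆Trigger

◼ messaging.knative.dev # API for declaratively configuring the "event pipe model"

◆Channel

◆Subscription

◼ flows.knative.dev # The event flow model: whether an event is processed by multiple functions in parallel or in series

◆Parallel

◆Sequence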
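As an illustration of the flows.knative.dev group, a Sequence that passes each event through two steps in series and sends the final result to a sink could be declared like this. It is a sketch, not a tested pipeline: the names `demo-sequence`, `step-b`, and `step-c` are hypothetical services, and the in-memory channel installed earlier is assumed as the channel implementation between steps.

```shell
# Write a Sequence: event --> step-b --> step-c --> event-display
cat > sequence.yaml <<'EOF'
apiVersion: flows.knative.dev/v1
kind: Sequence
metadata:
  name: demo-sequence            # hypothetical name
  namespace: default
spec:
  channelTemplate:               # Channel type created between consecutive steps
    apiVersion: messaging.knative.dev/v1
    kind: InMemoryChannel
  steps:                         # executed in order; each step's reply feeds the next
    - ref:
        apiVersion: serving.knative.dev/v1
        kind: Service
        name: step-b             # hypothetical service
    - ref:
        apiVersion: serving.knative.dev/v1
        kind: Service
        name: step-c             # hypothetical service
  reply:                         # where the output of the last step is sent
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: event-display
EOF

# kubectl apply -f sequence.yaml
```

A Sequence is itself addressable, so a Source or a Trigger can use it as its sink.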

[root@xianchaomaster1 ~]# kubectl api-resources --api-group=sources.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

apiserversources sources.knative.dev/v1 true ApiServerSource

containersources sources.knative.dev/v1 true ContainerSource

pingsources sources.knative.dev/v1 true PingSource

sinkbindings sources.knative.dev/v1 true SinkBinding

[root@xianchaomaster1 ~]# kubectl api-resources --api-group=eventing.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

brokers eventing.knative.dev/v1 true Broker

eventtypes eventing.knative.dev/v1beta1 true EventType

triggers eventing.knative.dev/v1 true Trigger

[root@xianchaomaster1 ~]# kubectl api-resources --api-group=messaging.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

channels ch messaging.knative.dev/v1 true Channel

inmemorychannels imc messaging.knative.dev/v1 true InMemoryChannel

subscriptions sub messaging.knative.dev/v1 true Subscription

[root@xianchaomaster1 ~]# kubectl api-resources --api-group=flows.knative.dev

NAME SHORTNAMES APIVERSION NAMESPACED KIND

parallels flows.knative.dev/v1 true Parallel

sequences flows.knative.dev/v1 true Sequence

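Events and Knative Eventing

#Knative Eventing

◼ Provides the infrastructure for producing and consuming events and routes events from producers to their target consumers, so that developers can adopt an event-driven architecture

◼ The resources involved are loosely coupled and can be developed and deployed independently

◼ Follows the CloudEvents specification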
