TensorFlow in Practice, Lesson 10 (RNN MNIST Classification)

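Setting the RNN parameters

In this lesson we train an RNN for classification, again using the MNIST handwritten-digit dataset.

We let the RNN read each image row by row, from the first row of pixels to the last, and then make a classification decision. Next we import the MNIST data and set the RNN's hyperparameters.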
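To make the "row by row" idea concrete, here is a minimal sketch in plain Python (no TensorFlow needed). The `flat_image` list is a made-up stand-in for one flattened MNIST image; the point is only that 784 pixel values become a sequence of 28 time steps with 28 inputs each.

```python
# Hypothetical stand-in for one flattened MNIST image (784 pixel values).
n_steps, n_inputs = 28, 28
flat_image = [float(i) for i in range(n_steps * n_inputs)]

# Split the flat vector into 28 rows; row t is the input at time step t.
sequence = [flat_image[t * n_inputs:(t + 1) * n_inputs] for t in range(n_steps)]

print(len(sequence), len(sequence[0]))  # 28 28
```

The RNN consumes `sequence[0]` first and `sequence[27]` last, so the classification is made only after the whole image has been seen.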

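Note:

Parameters (model parameters)

Variables that the model learns during training, such as the weights w and the biases b.

Hyperparameters (algorithm parameters)

Values chosen by experience before training starts. They influence the weights w and biases b that end up being learned. Examples include the number of iterations, the number of hidden layers, the number of neurons per layer, and the learning rate.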
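One way to see the difference: hyperparameters fix the shapes, and the parameters are the numbers that fill those shapes. The sketch below counts the trainable parameters for the layer sizes this tutorial uses (28 inputs, 128 hidden units, 10 classes). The helper names are hypothetical, and the LSTM formula assumes the usual layout of four gates sharing one kernel of shape (input_dim + num_units, 4 * num_units) plus a bias of length 4 * num_units.

```python
# Hyperparameters (chosen by hand): layer sizes used in this tutorial.
n_inputs, n_hidden_units, n_classes = 28, 128, 10

def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer (hypothetical helper)."""
    return n_in * n_out + n_out

def lstm_params(n_in, n_units):
    """Assumed LSTM layout: 4 gates, each seeing [input, previous h] plus a bias."""
    return 4 * ((n_in + n_units) * n_units + n_units)

counts = {
    'in': dense_params(n_inputs, n_hidden_units),
    # The cell receives the 128-dimensional output of the input layer.
    'lstm': lstm_params(n_hidden_units, n_hidden_units),
    'out': dense_params(n_hidden_units, n_classes),
}
total = sum(counts.values())
print(counts, total)
# {'in': 3712, 'lstm': 131584, 'out': 1290} 136586
```

Changing `n_hidden_units` changes how many parameters there are to learn; training then decides their values.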

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    # data
    mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

    # hyperparameters
    lr = 0.001               # learning rate
    training_iters = 100000  # upper limit for training (compared against step * batch_size)
    batch_size = 128
    n_inputs = 28            # MNIST data input (image shape: 28*28)
    n_steps = 28             # time steps
    n_hidden_units = 128     # neurons in hidden layer
    n_classes = 10           # MNIST classes (digits 0-9)

Next we define the placeholders for x and y and the initial values of weights and biases.

    # tf graph input
    x = tf.placeholder(tf.float32, [None, n_steps, n_inputs])
    y = tf.placeholder(tf.float32, [None, n_classes])

    # define weights & biases
    weights = {
        # (28, 128)
        'in': tf.Variable(tf.random_normal([n_inputs, n_hidden_units])),
        # (128, 10)
        'out': tf.Variable(tf.random_normal([n_hidden_units, n_classes]))
    }
    biases = {
        # (128,)
        'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])),
        # (10,)
        'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ]))
    }

Defining the main structure of the RNN

Next we define the main structure of the RNN, which has three components (input_layer, cell, output_layer). We start with input_layer:

    # (this fragment lives inside def RNN(X, weights, biases))
    # hidden layer for input to cell
    # The original X is 3-D; flatten it to 2-D so it can be multiplied by the weights matrix
    # X ==> (128 batches * 28 steps, 28 inputs)
    X = tf.reshape(X, [-1, n_inputs])

    # into hidden
    # X_in = X * W + b
    X_in = tf.matmul(X, weights['in']) + biases['in']
    # X_in ==> (128 batches, 28 steps, 128 hidden), back to 3-D
    X_in = tf.reshape(X_in, [-1, n_steps, n_hidden_units])
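The flatten, multiply, restore pattern above can be traced by hand on a toy example. This is a sketch in plain Python with nested lists, using deliberately tiny and made-up sizes (2 samples, 3 steps, 4 inputs, 5 hidden units) and constant weights, so none of the numbers correspond to the real model.

```python
batch, n_steps, n_inputs, n_hidden = 2, 3, 4, 5  # toy sizes, not the tutorial's

# X[b][t][i]: a toy 3-D input of shape (batch, steps, inputs).
X = [[[float(b * 100 + t * 10 + i) for i in range(n_inputs)]
      for t in range(n_steps)] for b in range(batch)]
W = [[0.1] * n_hidden for _ in range(n_inputs)]  # (inputs, hidden)
b_in = [0.1] * n_hidden                          # (hidden,)

# (batch, steps, inputs) -> (batch * steps, inputs), row-major like tf.reshape.
X_2d = [row for sample in X for row in sample]

# X_in = X * W + b, applied to every row, i.e. to every time step of every sample.
X_in_2d = [[sum(row[i] * W[i][h] for i in range(n_inputs)) + b_in[h]
            for h in range(n_hidden)] for row in X_2d]

# (batch * steps, hidden) -> (batch, steps, hidden).
X_in = [X_in_2d[s * n_steps:(s + 1) * n_steps] for s in range(batch)]

print(len(X_2d), len(X_in), len(X_in[0]), len(X_in[0][0]))  # 6 2 3 5
```

Because the same `W` and `b_in` are applied to every row, the input layer treats each time step identically; only the LSTM cell that follows mixes information across steps.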

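Next comes the computation inside lstm_cell, which can be done in two ways:

1. tf.nn.rnn(cell, inputs): not recommended

2. tf.nn.dynamic_rnn(cell, inputs): recommended

Because of TensorFlow version upgrades, state_is_tuple=True will become the default in later versions. For an LSTM, the state can be split into (c_state, h_state).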

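    # use basic lstm cell
    lstm_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units)
    # initialize the state to zeros; it is built for exactly batch_size samples,
    # so every batch fed to the graph must contain batch_size samples
    init_state = lstm_cell.zero_state(batch_size, dtype=tf.float32)
    outputs, final_state = tf.nn.dynamic_rnn(lstm_cell, X_in, initial_state=init_state, time_major=False)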

When using tf.nn.dynamic_rnn(cell, inputs), we have to settle the layout of inputs, because the time_major argument of tf.nn.dynamic_rnn must be set to match it.

1. If inputs has shape (batches, steps, inputs) ==> time_major=False

2. If inputs has shape (steps, batches, inputs) ==> time_major=True

    outputs, final_state = tf.nn.dynamic_rnn(lstm_cell, X_in, initial_state=init_state, time_major=False)
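The two layouts hold the same data with the first two axes swapped. A small sketch in plain Python shows the relationship; the sizes are toy values chosen for illustration, and each element is just a tuple recording its own (batch, step, input) position.

```python
batch, n_steps, n_inputs = 2, 3, 4  # toy sizes, not the tutorial's

# Batch-major layout: batch_major[b][t][i], the layout used with time_major=False.
batch_major = [[[(b, t, i) for i in range(n_inputs)]
                for t in range(n_steps)] for b in range(batch)]

# Time-major layout: time_major[t][b][i], obtained by swapping the first two axes.
time_major = [list(step) for step in zip(*batch_major)]

print(len(time_major), len(time_major[0]), len(time_major[0][0]))  # 3 2 4
print(time_major[2][1][0])  # (1, 2, 0): sample 1, step 2, input 0
```

In this tutorial X_in is reshaped to (batch, steps, hidden), which is the batch-major layout, hence time_major=False.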

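Last come output_layer and the return value. Because of the way this example is set up, there are two ways to obtain results.

Method 1: use the h_state in final_state (final_state[1]) directly:

    results = tf.matmul(final_state[1], weights['out']) + biases['out']

Method 2: use the last element of outputs (in this example it is the same as final_state[1] above):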

    # turn outputs into a list [(batch, outputs)..] * steps
    outputs = tf.unstack(tf.transpose(outputs, [1, 0, 2]))
    results = tf.matmul(outputs[-1], weights['out']) + biases['out']  # take the last output
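Why the two methods agree can be seen from a hand-rolled LSTM. The sketch below is a scalar LSTM in plain Python: the weights in `w` are made up, the function names are hypothetical, and the gate equations follow the standard LSTM form with a forget bias of 1.0, which we assume matches BasicLSTMCell's default. It illustrates the state tuple (c, h) and is not TensorFlow's implementation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, state, w, forget_bias=1.0):
    """One step of a scalar LSTM; state is the tuple (c, h)."""
    c, h = state
    # Each gate is a linear function of the input x and the previous h.
    i, j, f, o = (w[g][0] * x + w[g][1] * h + w[g][2] for g in 'ijfo')
    new_c = c * sigmoid(f + forget_bias) + sigmoid(i) * math.tanh(j)
    new_h = math.tanh(new_c) * sigmoid(o)
    # The step's output is new_h, and new_h is also stored in the state.
    return new_h, (new_c, new_h)

def run_rnn(inputs, w):
    """Unroll the cell over a sequence, starting from the zero state."""
    state = (0.0, 0.0)
    outputs = []
    for x in inputs:
        out, state = lstm_step(x, state, w)
        outputs.append(out)
    return outputs, state

# Made-up gate weights: (weight on x, weight on h, bias).
w = {'i': (0.5, 0.1, 0.0), 'j': (1.0, -0.2, 0.1),
     'f': (0.3, 0.3, 0.0), 'o': (-0.4, 0.2, 0.2)}
outputs, final_state = run_rnn([0.2, -0.1, 0.7, 0.4], w)

print(outputs[-1] == final_state[1])  # True
```

At every step the cell emits h as its output and also keeps it in the state, so the last output and the final h are the same value. That holds here because every sequence has the same length (28 rows).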

At the end of def RNN(), return results:

    return results

With the main structure of the RNN defined, we can compute cost and train_op:

    pred = RNN(x, weights, biases)
    # softmax_cross_entropy_with_logits must be called with named arguments
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
    train_op = tf.train.AdamOptimizer(lr).minimize(cost)
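For readers who want to see what the cost measures, here is a plain-Python sketch of softmax cross-entropy averaged over a batch. The logits and one-hot labels are made-up values and the helper names are hypothetical; it mirrors the formula rather than TensorFlow's numerically fused implementation.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities; subtracting the max avoids overflow."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def softmax_cross_entropy(logits, labels):
    """Cross-entropy between one-hot labels and softmax(logits) for one sample."""
    probs = softmax(logits)
    return -sum(l * math.log(p) for l, p in zip(labels, probs) if l > 0)

# Made-up batch: 2 samples, 3 classes.
batch_logits = [[2.0, 1.0, 0.1], [0.0, 0.0, 0.0]]
batch_labels = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]

losses = [softmax_cross_entropy(lg, lb) for lg, lb in zip(batch_logits, batch_labels)]
cost = sum(losses) / len(losses)  # the role played by tf.reduce_mean
print(losses, cost)
```

The second sample has equal logits for all three classes, so its loss is log(3): the model is spreading probability evenly and gets no credit for the right class.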

Train the RNN, printing accuracy every 20 steps to watch the progress:

    correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

    with tf.Session() as sess:
        init = tf.global_variables_initializer()
        sess.run(init)
        step = 0
        while step * batch_size < training_iters:
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            batch_xs = batch_xs.reshape([batch_size, n_steps, n_inputs])
            sess.run([train_op], feed_dict={
                x: batch_xs,
                y: batch_ys,
            })
            if step % 20 == 0:
                print(sess.run(accuracy, feed_dict={
                    x: batch_xs,
                    y: batch_ys,
                }))
            step += 1
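Two details of this loop are easy to check by hand: how accuracy is computed, and how many steps the while condition allows. The sketch below uses plain Python; the predictions and labels are made-up values and the helper names are hypothetical.

```python
def argmax(values):
    """Index of the largest entry, the role of tf.argmax(..., 1) for one row."""
    return max(range(len(values)), key=lambda k: values[k])

def accuracy(pred, labels):
    """Fraction of rows whose predicted class matches the one-hot label."""
    correct = [argmax(p) == argmax(y) for p, y in zip(pred, labels)]
    return sum(correct) / len(correct)

# Made-up logits and one-hot labels for 4 samples and 3 classes.
pred = [[0.1, 2.0, -1.0], [1.5, 0.2, 0.3], [0.0, 0.1, 0.9], [0.4, 0.3, 0.2]]
labels = [[0, 1, 0], [1, 0, 0], [0, 1, 0], [1, 0, 0]]
print(accuracy(pred, labels))  # 0.75

# Count the iterations of the training loop without doing any training.
training_iters, batch_size = 100000, 128
step = 0
printed = 0
while step * batch_size < training_iters:
    if step % 20 == 0:
        printed += 1
    step += 1
print(step, printed)  # 782 40
```

With these settings the loop runs 782 steps and prints 40 accuracy values. Note that the printed accuracy is measured on the same training batch that was just used for the update, so it is a training accuracy, not a test accuracy.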

The complete code:

    # RNN practice
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    # data
    mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

    # hyperparameters
    lr = 0.001               # learning rate
    training_iters = 100000  # upper limit for training (compared against step * batch_size)
    batch_size = 128
    n_inputs = 28            # MNIST data input (image shape: 28*28)
    n_steps = 28             # time steps
    n_hidden_units = 128     # neurons in hidden layer
    n_classes = 10           # MNIST classes (digits 0-9)

    # tf graph input
    x = tf.placeholder(tf.float32, [None, n_steps, n_inputs])
    y = tf.placeholder(tf.float32, [None, n_classes])

    # define weights & biases
    weights = {
        # (28, 128)
        'in': tf.Variable(tf.random_normal([n_inputs, n_hidden_units])),
        # (128, 10)
        'out': tf.Variable(tf.random_normal([n_hidden_units, n_classes]))
    }
    biases = {
        # (128,)
        'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])),
        # (10,)
        'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ]))
    }

    # define rnn
    def RNN(X, weights, biases):
        # hidden layer for input to cell
        # The original X is 3-D; flatten it to 2-D so it can be multiplied by the weights matrix
        # X ==> (128 batches * 28 steps, 28 inputs)
        X = tf.reshape(X, [-1, n_inputs])

        # into hidden
        # X_in = X * W + b
        X_in = tf.matmul(X, weights['in']) + biases['in']
        # X_in ==> (128 batches, 28 steps, 128 hidden), back to 3-D
        X_in = tf.reshape(X_in, [-1, n_steps, n_hidden_units])

        # use basic lstm cell
        lstm_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units)
        # initialize the state to zeros
        init_state = lstm_cell.zero_state(batch_size, dtype=tf.float32)
        outputs, final_state = tf.nn.dynamic_rnn(lstm_cell, X_in, initial_state=init_state, time_major=False)

        # hidden layer for output as the final results
        outputs = tf.unstack(tf.transpose(outputs, [1, 0, 2]))
        # the bias is added after the matrix product, not to the weights
        results = tf.matmul(outputs[-1], weights['out']) + biases['out']

        return results

    pred = RNN(x, weights, biases)
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
    train_op = tf.train.AdamOptimizer(lr).minimize(cost)

    correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

    with tf.Session() as sess:
        init = tf.global_variables_initializer()
        sess.run(init)
        step = 0
        while step * batch_size < training_iters:
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            batch_xs = batch_xs.reshape([batch_size, n_steps, n_inputs])
            sess.run([train_op], feed_dict={
                x: batch_xs,
                y: batch_ys,
            })
            if step % 20 == 0:
                print(sess.run(accuracy, feed_dict={
                    x: batch_xs,
                    y: batch_ys,
                }))
            step += 1

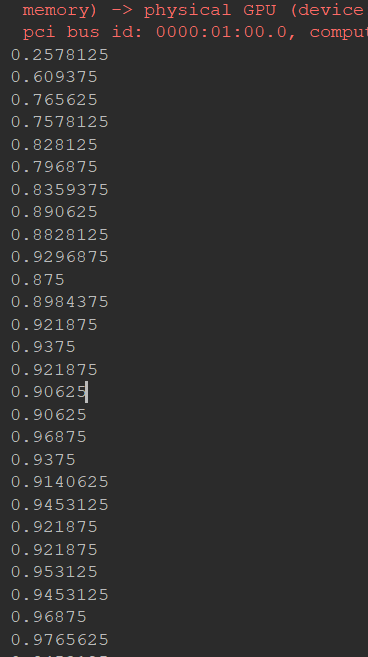

Screenshot of a run:
