基于Spark的FPGrowth算法的运用

一、FPGrowth算法理解

Spark.mllib 提供并行FP-growth算法,这个算法属于关联规则算法【关联规则:两不相交的非空集合A、B,如果A=>B,就说A=>B是一条关联规则,常提及的{啤酒}-->{尿布}就是一条关联规则】,经常用于挖掘频度物品集。关于算法的介绍网上很多,这里不再赘述。主要搞清楚几个概念:

1)支持度support(A => B) = P(AnB) = |A n B| / |N|,表示数据集D中,事件A和事件B共同出现的概率;

2)置信度confidence(A => B) = P(B|A) = |A n B| / |A|,表示数据集D中,出现事件A的事件中出现事件B的概率;

3)提升度lift(A => B) = P(B|A):P(B) = |A n B| / |A| : |B| / |N|,表示数据集D中,出现A的条件下出现事件B的概率和没有条件A出现B的概率;

由上可以看出,支持度表示这条规则的可能性大小,而置信度表示由事件A得到事件B的可信性大小。

举个列子:10000个消费者购买了商品,尿布1000个,啤酒2000个,同时购买了尿布和啤酒800个。

1)支持度:在所有项集中出现的可能性,项集同时含有,x与y的概率。尿布和啤酒的支持度为:800/10000=8%

2)置信度:在X发生的条件下,Y发生的概率。尿布-》啤酒的置信度为:800/1000=80%,啤酒-》尿布的置信度为:800/2000=40%

3)提升度:在含有x条件下同时含有Y的可能性(x->y的置信度)比没有x这个条件下含有Y的可能性之比:confidence(尿布=> 啤酒)/概率(啤酒)) = 80%/(2000/10000) 。如果提升度=1,那就是没啥关系这两个

通过支持度和置信度可以得出强关联关系,通过提升的,可判别有效的强关联关系。

直接拿例子来说明问题。首先数据集如下:

r z h k p

z y x w v u t s

s x o n r

x z y m t s q e

z

x z y r q t p二、代码实现。在IDEA中建立Maven工程,然后本地模式调试代码如下:

import org.apache.spark.SparkConf; import org.apache.spark.api.java.JavaRDD; import org.apache.spark.api.java.JavaSparkContext; import org.apache.spark.api.java.function.Function; import org.apache.spark.mllib.fpm.AssociationRules; import org.apache.spark.mllib.fpm.FPGrowth; import org.apache.spark.mllib.fpm.FPGrowthModel; import java.util.Arrays; import java.util.List; public class FPDemo { public static void main(String[] args){ String data_path; //数据集路径 double minSupport = 0.2;//最小支持度 int numPartition = 10; //数据分区 double minConfidence = 0.8;//最小置信度 if(args.length < 1){ System.out.println("<input data_path>"); System.exit(-1); } data_path = args[0]; if(args.length >= 2) minSupport = Double.parseDouble(args[1]); if(args.length >= 3) numPartition = Integer.parseInt(args[2]); if(args.length >= 4) minConfidence = Double.parseDouble(args[3]); SparkConf conf = new SparkConf().setAppName("FPDemo").setMaster("local"); JavaSparkContext sc = new JavaSparkContext(conf); //加载数据,并将数据通过空格分割 JavaRDD<List<String>> transactions = sc.textFile(data_path) .map(new Function<String, List<String>>() { public List<String> call(String s) throws Exception { String[] parts = s.split(" "); return Arrays.asList(parts); } }); //创建FPGrowth的算法实例,同时设置好训练时的最小支持度和数据分区 FPGrowth fpGrowth = new FPGrowth().setMinSupport(minSupport).setNumPartitions(numPartition); FPGrowthModel<String> model = fpGrowth.run(transactions);//执行算法 //查看所有频繁諅,并列出它出现的次数 for(FPGrowth.FreqItemset<String> itemset : model.freqItemsets().toJavaRDD().collect()) System.out.println("[" + itemset.javaItems() + "]," + itemset.freq()); //通过置信度筛选出强规则 //antecedent表示前项 //consequent表示后项 //confidence表示规则的置信度 for(AssociationRules.Rule<String> rule : model.generateAssociationRules(minConfidence).toJavaRDD().collect()) System.out.println(rule.javaAntecedent() + "=>" + rule.javaConsequent() + ", " + rule.confidence()); } }

直接在Maven工程中运用上面的代码会有问题,因此这里需要添加依赖项解决项目中的问题,依赖项的添加如下:

<dependencies>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.10</artifactId>

<version>2.1.0</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-mllib_2.10</artifactId>

<version>2.1.0</version>

</dependency>

</dependencies>

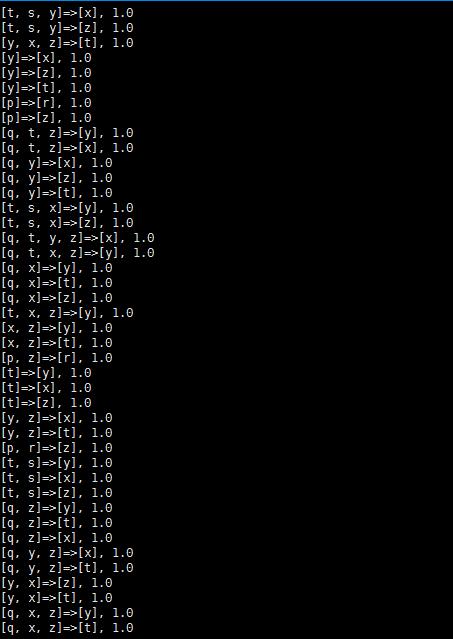

本地模式运行的结果如下:

[t, s, y]=>[x], 1.0 [t, s, y]=>[z], 1.0 [y, x, z]=>[t], 1.0 [y]=>[x], 1.0 [y]=>[z], 1.0 [y]=>[t], 1.0 [p]=>[r], 1.0 [p]=>[z], 1.0 [q, t, z]=>[y], 1.0 [q, t, z]=>[x], 1.0 [q, y]=>[x], 1.0 [q, y]=>[z], 1.0 [q, y]=>[t], 1.0 [t, s, x]=>[y], 1.0 [t, s, x]=>[z], 1.0 [q, t, y, z]=>[x], 1.0 [q, t, x, z]=>[y], 1.0 [q, x]=>[y], 1.0 [q, x]=>[t], 1.0 [q, x]=>[z], 1.0 [t, x, z]=>[y], 1.0 [x, z]=>[y], 1.0 [x, z]=>[t], 1.0 [p, z]=>[r], 1.0 [t]=>[y], 1.0 [t]=>[x], 1.0 [t]=>[z], 1.0 [y, z]=>[x], 1.0 [y, z]=>[t], 1.0 [p, r]=>[z], 1.0 [t, s]=>[y], 1.0 [t, s]=>[x], 1.0 [t, s]=>[z], 1.0 [q, z]=>[y], 1.0 [q, z]=>[t], 1.0 [q, z]=>[x], 1.0 [q, y, z]=>[x], 1.0 [q, y, z]=>[t], 1.0 [y, x]=>[z], 1.0 [y, x]=>[t], 1.0 [q, x, z]=>[y], 1.0 [q, x, z]=>[t], 1.0 [t, y, z]=>[x], 1.0 [q, y, x]=>[z], 1.0 [q, y, x]=>[t], 1.0 [q, t, y, x]=>[z], 1.0 [t, s, x, z]=>[y], 1.0 [s, y, x]=>[z], 1.0 [s, y, x]=>[t], 1.0 [s, x, z]=>[y], 1.0 [s, x, z]=>[t], 1.0 [q, y, x, z]=>[t], 1.0 [s, y]=>[x], 1.0 [s, y]=>[z], 1.0 [s, y]=>[t], 1.0 [q, t, y]=>[x], 1.0 [q, t, y]=>[z], 1.0 [t, y]=>[x], 1.0 [t, y]=>[z], 1.0 [t, z]=>[y], 1.0 [t, z]=>[x], 1.0 [t, s, y, x]=>[z], 1.0 [t, y, x]=>[z], 1.0 [q, t]=>[y], 1.0 [q, t]=>[x], 1.0 [q, t]=>[z], 1.0 [q]=>[y], 1.0 [q]=>[t], 1.0 [q]=>[x], 1.0 [q]=>[z], 1.0 [t, s, z]=>[y], 1.0 [t, s, z]=>[x], 1.0 [t, x]=>[y], 1.0 [t, x]=>[z], 1.0 [s, z]=>[y], 1.0 [s, z]=>[x], 1.0 [s, z]=>[t], 1.0 [s, y, x, z]=>[t], 1.0 [s]=>[x], 1.0 [t, s, y, z]=>[x], 1.0 [s, y, z]=>[x], 1.0 [s, y, z]=>[t], 1.0 [q, t, x]=>[y], 1.0 [q, t, x]=>[z], 1.0 [r, z]=>[p], 1.0

三、Spark集群部署。代码修改正如:

import org.apache.spark.SparkConf; import org.apache.spark.api.java.JavaRDD; import org.apache.spark.api.java.JavaSparkContext; import org.apache.spark.api.java.function.Function; import org.apache.spark.mllib.fpm.AssociationRules; import org.apache.spark.mllib.fpm.FPGrowth; import org.apache.spark.mllib.fpm.FPGrowthModel; import java.util.Arrays; import java.util.List; public class FPDemo { public static void main(String[] args){ String data_path; //数据集路径 double minSupport = 0.2;//最小支持度 int numPartition = 10; //数据分区 double minConfidence = 0.8;//最小置信度 if(args.length < 1){ System.out.println("<input data_path>"); System.exit(-1); } data_path = args[0]; if(args.length >= 2) minSupport = Double.parseDouble(args[1]); if(args.length >= 3) numPartition = Integer.parseInt(args[2]); if(args.length >= 4) minConfidence = Double.parseDouble(args[3]); SparkConf conf = new SparkConf().setAppName("FPDemo");////修改的地方 JavaSparkContext sc = new JavaSparkContext(conf); //加载数据,并将数据通过空格分割 JavaRDD<List<String>> transactions = sc.textFile(data_path) .map(new Function<String, List<String>>() { public List<String> call(String s) throws Exception { String[] parts = s.split(" "); return Arrays.asList(parts); } }); //创建FPGrowth的算法实例,同时设置好训练时的最小支持度和数据分区 FPGrowth fpGrowth = new FPGrowth().setMinSupport(minSupport).setNumPartitions(numPartition); FPGrowthModel<String> model = fpGrowth.run(transactions);//执行算法 //查看所有频繁諅,并列出它出现的次数 for(FPGrowth.FreqItemset<String> itemset : model.freqItemsets().toJavaRDD().collect()) System.out.println("[" + itemset.javaItems() + "]," + itemset.freq()); //通过置信度筛选出强规则 //antecedent表示前项 //consequent表示后项 //confidence表示规则的置信度 for(AssociationRules.Rule<String> rule : model.generateAssociationRules(minConfidence).toJavaRDD().collect()) System.out.println(rule.javaAntecedent() + "=>" + rule.javaConsequent() + ", " + rule.confidence()); } }

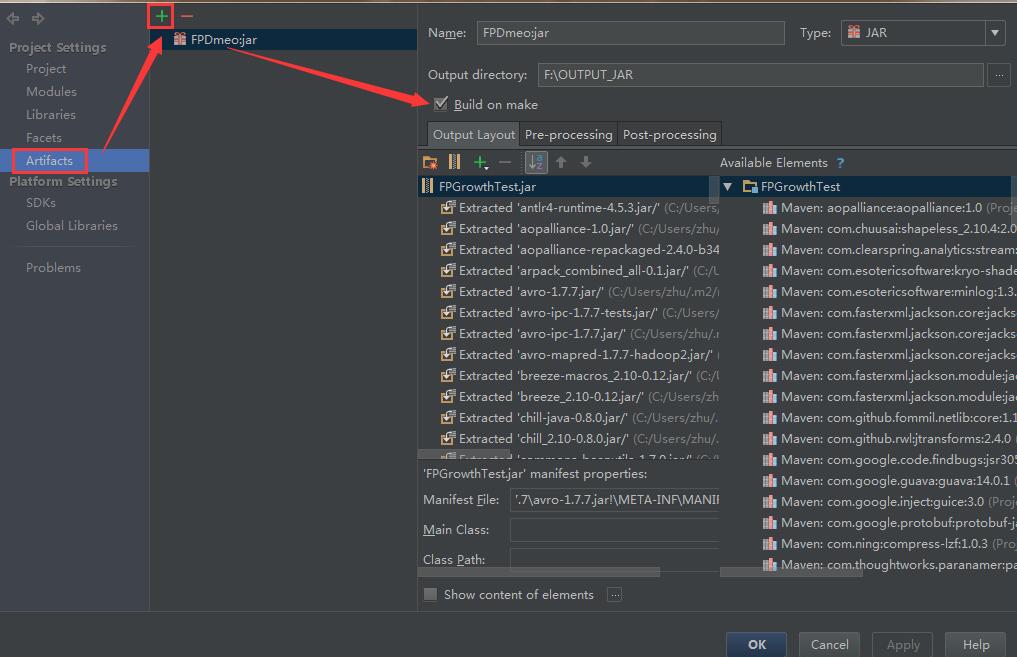

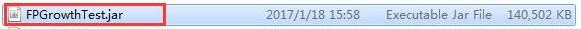

然后在IDEA中打包成JAR包

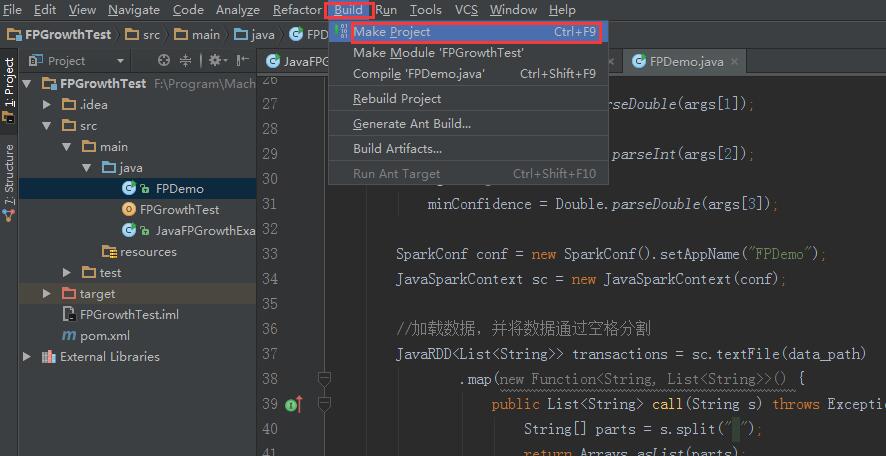

然后在工具栏

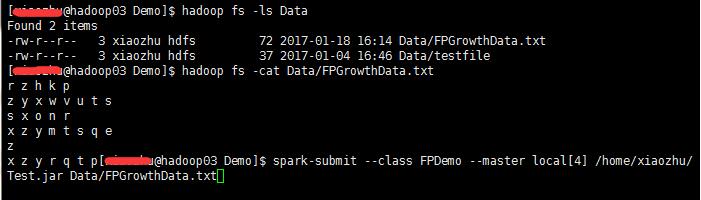

生成Jar包,然后上传到集群中执行命令

得到结果

浙公网安备 33010602011771号

浙公网安备 33010602011771号