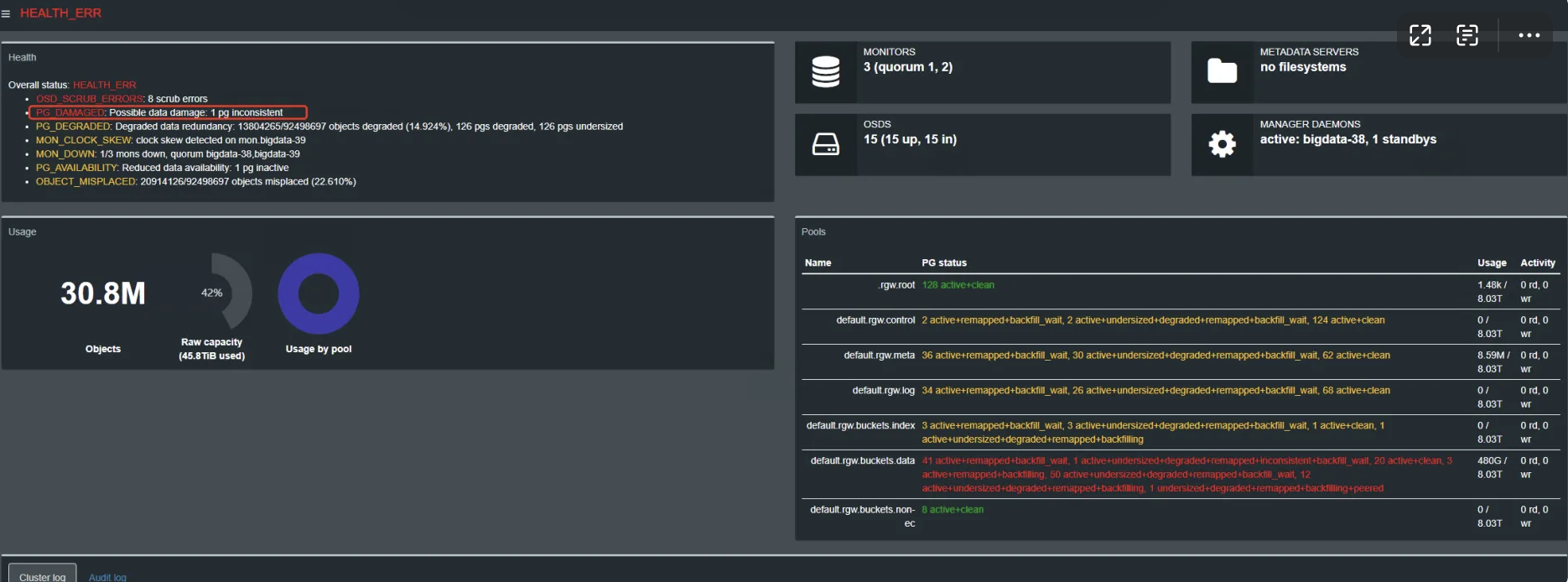

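Ceph repair log

Repairing an inconsistent PG

Ceph operations reference handbook

Repairing unknown PGs

Repairing REQUEST_STUCK

Open questions

Repairing down PGs

Repairing incomplete PGs

How to speed up recovery

Notes on adding OSD nodes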

Repairing an inconsistent PG

[root@bigdata-47 ceph]# ceph health detail

HEALTH_ERR 15660741/92498685 objects misplaced (16.931%); 1/30832895 objects unfound (0.000%); 8 scrub errors; Reduced data availability: 30 pgs inactive; Possible data damage: 1 pg inconsistent; Degraded data redundancy: 17396682/92498685 objects degraded (18.807%), 169 pgs degraded, 168 pgs undersized; 14 slow requests are blocked > 32 sec. Implicated osds ; 113 stuck requests are blocked > 4096 sec. Implicated osds 3,8; 1/3 mons down, quorum bigdata-38,bigdata-39

OBJECT_MISPLACED 15660741/92498685 objects misplaced (16.931%)

OBJECT_UNFOUND 1/30832895 objects unfound (0.000%)

pg 6.7c has 1 unfound objects

OSD_SCRUB_ERRORS 8 scrub errors

PG_AVAILABILITY Reduced data availability: 30 pgs inactive

pg 1.69 is stuck inactive for 186115.705459, current state unknown, last acting []

pg 1.6c is stuck inactive for 186115.705459, current state unknown, last acting []

pg 2.6 is stuck inactive for 186115.705459, current state unknown, last acting []

pg 2.2d is stuck inactive for 18659.437866, current state undersized+degraded+remapped+backfill_wait+peered, last acting [10]

pg 3.3 is stuck inactive for 18500.679066, current state undersized+degraded+remapped+backfill_wait+peered, last acting [1]

pg 3.42 is stuck inactive for 18524.880670, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

pg 3.4a is stuck inactive for 18405.922286, current state undersized+degraded+remapped+backfill_wait+peered, last acting [2]

pg 3.4d is stuck inactive for 18368.538811, current state undersized+degraded+remapped+backfill_wait+peered, last acting [16]

pg 3.55 is stuck inactive for 18545.749610, current state undersized+degraded+remapped+backfill_wait+peered, last acting [15]

pg 3.56 is stuck inactive for 186115.705459, current state unknown, last acting []

pg 3.5a is stuck inactive for 1054971.940815, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

pg 4.1 is stuck inactive for 18524.877997, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

pg 4.b is stuck inactive for 18545.832758, current state undersized+degraded+remapped+backfill_wait+peered, last acting [15]

pg 4.d is stuck inactive for 18405.925508, current state undersized+degraded+remapped+backfill_wait+peered, last acting [2]

pg 4.11 is stuck inactive for 18659.460272, current state undersized+degraded+remapped+backfill_wait+peered, last acting [10]

pg 4.12 is stuck inactive for 186115.705459, current state unknown, last acting []

pg 4.23 is stuck inactive for 18500.691431, current state undersized+degraded+remapped+backfill_wait+peered, last acting [1]

pg 4.24 is stuck inactive for 18405.934198, current state undersized+degraded+remapped+backfill_wait+peered, last acting [2]

pg 4.2f is stuck inactive for 18385.742774, current state undersized+degraded+remapped+backfill_wait+peered, last acting [18]

pg 4.30 is stuck inactive for 211847.663409, current state undersized+degraded+remapped+backfill_wait+peered, last acting [6]

pg 4.49 is stuck inactive for 18385.721631, current state undersized+degraded+remapped+backfill_wait+peered, last acting [18]

pg 4.4e is stuck inactive for 18545.762481, current state undersized+degraded+remapped+backfill_wait+peered, last acting [15]

pg 4.5b is stuck inactive for 18500.682948, current state undersized+degraded+remapped+backfill_wait+peered, last acting [1]

pg 4.6a is stuck inactive for 18405.937536, current state undersized+degraded+remapped+backfill_wait+peered, last acting [2]

pg 5.5 is stuck inactive for 46870.150659, current state undersized+degraded+remapped+backfill_wait+peered, last acting [6]

pg 6.1 is stuck inactive for 212238.985136, current state undersized+degraded+remapped+backfill_wait+peered, last acting [10]

pg 6.13 is stuck inactive for 18368.530036, current state undersized+degraded+remapped+backfill_wait+peered, last acting [16]

pg 6.16 is stuck inactive for 18659.453565, current state undersized+degraded+remapped+backfilling+peered, last acting [10]

pg 6.2c is stuck inactive for 18524.862667, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

pg 6.7c is stuck inactive for 18524.871478, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

PG_DAMAGED Possible data damage: 1 pg inconsistent

pg 6.36 is active+undersized+degraded+remapped+inconsistent+backfill_wait, acting [9,10]

PG_DEGRADED Degraded data redundancy: 17396682/92498685 objects degraded (18.807%), 169 pgs degraded, 168 pgs undersized

pg 2.6e is stuck undersized for 17995.084413, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,9]

pg 3.24 is stuck undersized for 18542.893880, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,10]

pg 3.61 is stuck undersized for 18172.450738, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,6]

pg 3.66 is stuck undersized for 18307.508340, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,16]

pg 3.6d is stuck undersized for 17995.096651, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,15]

pg 3.73 is stuck undersized for 18382.716905, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,18]

pg 3.79 is stuck undersized for 18497.652933, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,9]

pg 3.7a is stuck undersized for 18242.385416, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,16]

pg 4.20 is stuck undersized for 17999.892080, current state active+undersized+degraded+remapped+backfill_wait, last acting [11,10]

pg 4.21 is stuck undersized for 18521.884116, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,9]

pg 4.23 is stuck undersized for 18403.877592, current state undersized+degraded+remapped+backfill_wait+peered, last acting [1]

pg 4.29 is stuck undersized for 18173.458299, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,9]

pg 4.2f is stuck undersized for 18382.713388, current state undersized+degraded+remapped+backfill_wait+peered, last acting [18]

pg 4.63 is stuck undersized for 17994.088783, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,11]

pg 4.65 is stuck undersized for 18306.511068, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,6]

pg 4.66 is stuck undersized for 18402.870165, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,2]

pg 4.6a is stuck undersized for 18402.869648, current state undersized+degraded+remapped+backfill_wait+peered, last acting [2]

pg 4.6e is stuck undersized for 18241.402616, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,7]

pg 4.6f is stuck undersized for 18402.866525, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,9]

pg 4.70 is stuck undersized for 18402.873258, current state active+undersized+degraded+remapped+backfill_wait, last acting [2,15]

pg 4.76 is stuck undersized for 18382.714264, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,18]

pg 4.78 is stuck undersized for 18382.713597, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,18]

pg 4.7d is stuck undersized for 18366.067927, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,16]

pg 4.7f is stuck undersized for 18521.871964, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,10]

pg 6.22 is stuck undersized for 18497.650971, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,1]

pg 6.24 is active+undersized+degraded+remapped+backfill_wait, acting [2,18]

pg 6.2b is stuck undersized for 18307.504855, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,15]

pg 6.2c is stuck undersized for 18521.869767, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

pg 6.2e is stuck undersized for 18092.136257, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,12]

pg 6.2f is stuck undersized for 18402.868681, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,2]

pg 6.61 is stuck undersized for 18383.706268, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,10]

pg 6.62 is stuck undersized for 18306.487265, current state active+undersized+degraded+remapped+backfill_wait, last acting [6,18]

pg 6.63 is stuck undersized for 17994.073746, current state active+undersized+degraded+remapped+backfill_wait, last acting [12,11]

pg 6.64 is stuck undersized for 18241.394363, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,7]

pg 6.65 is stuck undersized for 17994.086364, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,11]

pg 6.66 is stuck undersized for 18241.404390, current state active+undersized+degraded+remapped+backfilling, last acting [7,10]

pg 6.67 is stuck undersized for 18306.510727, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,6]

pg 6.6b is stuck undersized for 18366.065045, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,16]

pg 6.6c is stuck undersized for 18092.136271, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,12]

pg 6.6d is stuck undersized for 18092.123337, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,9]

pg 6.6e is stuck undersized for 18382.715263, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,18]

pg 6.6f is stuck undersized for 18242.398419, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,6]

pg 6.70 is stuck undersized for 18382.709439, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,9]

pg 6.72 is stuck undersized for 18092.133243, current state active+undersized+degraded+remapped+backfill_wait, last acting [12,1]

pg 6.76 is stuck undersized for 18172.447658, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,5]

pg 6.78 is stuck undersized for 18382.714765, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,18]

pg 6.7a is stuck undersized for 18307.514437, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,16]

pg 6.7b is stuck undersized for 18241.401165, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,7]

pg 6.7c is stuck undersized for 18521.878519, current state undersized+degraded+remapped+backfill_wait+peered, last acting [9]

pg 6.7d is stuck undersized for 17994.087696, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,11]

pg 6.7f is stuck undersized for 18092.133114, current state active+undersized+degraded+remapped+backfill_wait, last acting [16,12]

REQUEST_SLOW 14 slow requests are blocked > 32 sec. Implicated osds

7 ops are blocked > 2097.15 sec

4 ops are blocked > 1048.58 sec

2 ops are blocked > 524.288 sec

1 ops are blocked > 32.768 sec

REQUEST_STUCK 113 stuck requests are blocked > 4096 sec. Implicated osds 3,8

16 ops are blocked > 33554.4 sec

55 ops are blocked > 16777.2 sec

28 ops are blocked > 8388.61 sec

14 ops are blocked > 4194.3 sec

osds 3,8 have stuck requests > 33554.4 sec

MON_DOWN 1/3 mons down, quorum bigdata-38,bigdata-39

mon.bigdata-37 (rank 0) addr 10.21.0.47:6789/0 is down (out of quorum)

The health detail output shows that one PG, 6.36, has become inconsistent.
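With output this long, the inconsistent PG is easy to miss. The following is a minimal sketch of a filter for it; the function name list_inconsistent_pgs is made up for illustration, and the sample text is copied from the health detail output above.

```shell
#!/bin/sh
# Hypothetical helper: print the IDs of PGs whose state contains "inconsistent".
# Reads `ceph health detail` text on stdin. Only per-PG lines (first word "pg")
# are considered, so summary lines such as PG_DAMAGED are skipped.
list_inconsistent_pgs() {
    awk '$1 == "pg" && /inconsistent/ { print $2 }'
}

# Sample lines taken from the health detail output in these notes.
sample='PG_DAMAGED Possible data damage: 1 pg inconsistent
pg 6.36 is active+undersized+degraded+remapped+inconsistent+backfill_wait, acting [9,10]
pg 6.24 is active+undersized+degraded+remapped+backfill_wait, acting [2,18]'

printf '%s\n' "$sample" | list_inconsistent_pgs
```

On a live cluster the same filter could be fed directly with `ceph health detail | list_inconsistent_pgs`.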

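Reference: https://blog.csdn.net/a13568hki/article/details/134389003

First, find the OSDs that hold PG 6.36.

The primary is osd.9 (the first entry of the acting set [9,10]), which runs on host bigdata-47. Run the following commands on that host.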
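The notes do not record how the OSD was looked up. One way is `ceph pg map`, which prints the up and acting sets on a single line. The sketch below pulls the primary OSD out of such a line. The helper name primary_osd is hypothetical, and the sample line is illustrative: it was assembled from the epoch, up set and acting set shown in the query output later in these notes, not captured from the cluster.

```shell
#!/bin/sh
# Hypothetical helper: print the primary OSD, i.e. the first entry of the
# acting set, from one line of `ceph pg map` output read on stdin.
primary_osd() {
    sed -n 's/.*acting \[\([0-9][0-9]*\).*/\1/p'
}

# Illustrative line in the `ceph pg map` format (values assumed, see above).
line='osdmap e144397 pg 6.36 (6.36) -> up [9,10,13] acting [9,10]'

printf '%s\n' "$line" | primary_osd
```

On a live cluster this would be `ceph pg map 6.36 | primary_osd`, and `ceph osd find 9` can then be used to see which host the OSD runs on.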

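Stop the OSD service:

systemctl stop ceph-osd@9.service

Flush the journal. While this command runs, you can follow the OSD's log to judge its progress. The log below shows that this OSD runs BlueStore, so in practice the command mounts the store, replays the RocksDB write-ahead log, and unmounts again.

ceph-osd -i 9 --flush-journal

Start the OSD service:

systemctl start ceph-osd@9.service

Check that the OSD has rejoined the cluster; this can take a while after the service starts:

ceph osd tree

Repair the PG: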

ceph pg repair 6.36
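The sequence above can be sketched as a small script. This is only an illustration of the order of the steps: the names run and repair_pg_via_osd are hypothetical, and the script defaults to a dry run that prints each command instead of executing it.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) only prints each command; DRY_RUN=0 executes it.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical wrapper: print the command in dry-run mode, otherwise run it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        printf '+ %s\n' "$*"
    else
        "$@"
    fi
}

# repair_pg_via_osd OSD_ID PGID
# Same order as in the notes: stop, flush, start, check, repair.
# The chain stops at the first command that fails.
repair_pg_via_osd() {
    osd_id="$1"
    pgid="$2"
    run systemctl stop "ceph-osd@${osd_id}.service" &&
    run ceph-osd -i "$osd_id" --flush-journal &&
    run systemctl start "ceph-osd@${osd_id}.service" &&
    run ceph osd tree &&
    run ceph pg repair "$pgid"
}

repair_pg_via_osd 9 6.36
```

The sketch does not wait for the OSD to come back up between the start and the repair. When running for real, check the `ceph osd tree` output by hand before issuing the repair, as the notes describe.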

OSD log output during the flush:

2024-12-08 02:49:30.595913 7f3d013e0d00 0 ceph version 12.2.7 (3ec878d1e53e1aeb47a9f619c49d9e7c0aa384d5) luminous (stable), process ceph-osd, pid 802618

2024-12-08 02:49:30.626158 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) _mount path /var/lib/ceph/osd/ceph-9

2024-12-08 02:49:30.632812 7f3d013e0d00 1 bdev create path /var/lib/ceph/osd/ceph-9/block type kernel

2024-12-08 02:49:30.632830 7f3d013e0d00 1 bdev(0x7f3d0bb37800 /var/lib/ceph/osd/ceph-9/block) open path /var/lib/ceph/osd/ceph-9/block

2024-12-08 02:49:30.633067 7f3d013e0d00 1 bdev(0x7f3d0bb37800 /var/lib/ceph/osd/ceph-9/block) open size 8001016561664 (0x746e1c00000, 7451 GB) block_size 4096 (4096 B) rotational

2024-12-08 02:49:30.633392 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) _set_cache_sizes max 0.5 < ratio 0.99

2024-12-08 02:49:30.633438 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) _set_cache_sizes cache_size 1073741824 meta 0.5 kv 0.5 data 0

2024-12-08 02:49:30.633527 7f3d013e0d00 1 bdev create path /var/lib/ceph/osd/ceph-9/block type kernel

2024-12-08 02:49:30.633535 7f3d013e0d00 1 bdev(0x7f3d0bb37e00 /var/lib/ceph/osd/ceph-9/block) open path /var/lib/ceph/osd/ceph-9/block

2024-12-08 02:49:30.633754 7f3d013e0d00 1 bdev(0x7f3d0bb37e00 /var/lib/ceph/osd/ceph-9/block) open size 8001016561664 (0x746e1c00000, 7451 GB) block_size 4096 (4096 B) rotational

2024-12-08 02:49:30.633783 7f3d013e0d00 1 bluefs add_block_device bdev 1 path /var/lib/ceph/osd/ceph-9/block size 7451 GB

2024-12-08 02:49:30.633855 7f3d013e0d00 1 bluefs mount

2024-12-08 02:49:54.592854 7f3d013e0d00 0 set rocksdb option compaction_readahead_size = 2097152

2024-12-08 02:49:54.592885 7f3d013e0d00 0 set rocksdb option compression = kNoCompression

2024-12-08 02:49:54.592890 7f3d013e0d00 0 set rocksdb option max_write_buffer_number = 4

2024-12-08 02:49:54.592894 7f3d013e0d00 0 set rocksdb option min_write_buffer_number_to_merge = 1

2024-12-08 02:49:54.592898 7f3d013e0d00 0 set rocksdb option recycle_log_file_num = 4

2024-12-08 02:49:54.592900 7f3d013e0d00 0 set rocksdb option writable_file_max_buffer_size = 0

2024-12-08 02:49:54.592904 7f3d013e0d00 0 set rocksdb option write_buffer_size = 268435456

2024-12-08 02:49:54.592939 7f3d013e0d00 0 set rocksdb option compaction_readahead_size = 2097152

2024-12-08 02:49:54.592944 7f3d013e0d00 0 set rocksdb option compression = kNoCompression

2024-12-08 02:49:54.592948 7f3d013e0d00 0 set rocksdb option max_write_buffer_number = 4

2024-12-08 02:49:54.592951 7f3d013e0d00 0 set rocksdb option min_write_buffer_number_to_merge = 1

2024-12-08 02:49:54.592954 7f3d013e0d00 0 set rocksdb option recycle_log_file_num = 4

2024-12-08 02:49:54.592957 7f3d013e0d00 0 set rocksdb option writable_file_max_buffer_size = 0

2024-12-08 02:49:54.592959 7f3d013e0d00 0 set rocksdb option write_buffer_size = 268435456

2024-12-08 02:49:54.593181 7f3d013e0d00 4 rocksdb: RocksDB version: 5.4.0

2024-12-08 02:49:54.593194 7f3d013e0d00 4 rocksdb: Git sha rocksdb_build_git_sha:@0@

2024-12-08 02:49:54.593196 7f3d013e0d00 4 rocksdb: Compile date Jul 16 2018

2024-12-08 02:49:54.593199 7f3d013e0d00 4 rocksdb: DB SUMMARY

2024-12-08 02:49:54.622064 7f3d013e0d00 4 rocksdb: CURRENT file: CURRENT

2024-12-08 02:49:54.622069 7f3d013e0d00 4 rocksdb: IDENTITY file: IDENTITY

2024-12-08 02:49:54.622080 7f3d013e0d00 4 rocksdb: MANIFEST file: MANIFEST-293268 size: 4735287 Bytes

2024-12-08 02:49:54.622082 7f3d013e0d00 4 rocksdb: SST files in db dir, Total Num: 37959, files: 001304.sst 001305.sst 001306.sst 001307.sst 001308.sst 001323.sst 001347.sst 001350.sst 001351.sst

2024-12-08 02:49:54.622085 7f3d013e0d00 4 rocksdb: Write Ahead Log file in db: 293269.log size: 9460508 ;

2024-12-08 02:49:54.622477 7f3d013e0d00 4 rocksdb: Options.error_if_exists: 0

2024-12-08 02:49:54.622482 7f3d013e0d00 4 rocksdb: Options.create_if_missing: 0

2024-12-08 02:49:54.622483 7f3d013e0d00 4 rocksdb: Options.paranoid_checks: 1

2024-12-08 02:49:54.622483 7f3d013e0d00 4 rocksdb: Options.env: 0x7f3d0bdbec60

2024-12-08 02:49:54.622484 7f3d013e0d00 4 rocksdb: Options.info_log: 0x7f3d0c8910c0

2024-12-08 02:49:54.622485 7f3d013e0d00 4 rocksdb: Options.max_open_files: -1

2024-12-08 02:49:54.622486 7f3d013e0d00 4 rocksdb: Options.max_file_opening_threads: 16

2024-12-08 02:49:54.622487 7f3d013e0d00 4 rocksdb: Options.use_fsync: 0

2024-12-08 02:49:54.622487 7f3d013e0d00 4 rocksdb: Options.max_log_file_size: 0

2024-12-08 02:49:54.622488 7f3d013e0d00 4 rocksdb: Options.max_manifest_file_size: 18446744073709551615

2024-12-08 02:49:54.622490 7f3d013e0d00 4 rocksdb: Options.log_file_time_to_roll: 0

2024-12-08 02:49:54.622491 7f3d013e0d00 4 rocksdb: Options.keep_log_file_num: 1000

2024-12-08 02:49:54.622492 7f3d013e0d00 4 rocksdb: Options.recycle_log_file_num: 4

2024-12-08 02:49:54.622492 7f3d013e0d00 4 rocksdb: Options.allow_fallocate: 1

2024-12-08 02:49:54.622493 7f3d013e0d00 4 rocksdb: Options.allow_mmap_reads: 0

2024-12-08 02:49:54.622494 7f3d013e0d00 4 rocksdb: Options.allow_mmap_writes: 0

2024-12-08 02:49:54.622494 7f3d013e0d00 4 rocksdb: Options.use_direct_reads: 0

2024-12-08 02:49:54.622495 7f3d013e0d00 4 rocksdb: Options.use_direct_io_for_flush_and_compaction: 0

2024-12-08 02:49:54.622496 7f3d013e0d00 4 rocksdb: Options.create_missing_column_families: 0

2024-12-08 02:49:54.622496 7f3d013e0d00 4 rocksdb: Options.db_log_dir:

2024-12-08 02:49:54.622497 7f3d013e0d00 4 rocksdb: Options.wal_dir: db

2024-12-08 02:49:54.622498 7f3d013e0d00 4 rocksdb: Options.table_cache_numshardbits: 6

2024-12-08 02:49:54.622499 7f3d013e0d00 4 rocksdb: Options.max_subcompactions: 1

2024-12-08 02:49:54.622499 7f3d013e0d00 4 rocksdb: Options.max_background_flushes: 1

2024-12-08 02:49:54.622500 7f3d013e0d00 4 rocksdb: Options.WAL_ttl_seconds: 0

2024-12-08 02:49:54.622501 7f3d013e0d00 4 rocksdb: Options.WAL_size_limit_MB: 0

2024-12-08 02:49:54.622501 7f3d013e0d00 4 rocksdb: Options.manifest_preallocation_size: 4194304

2024-12-08 02:49:54.622502 7f3d013e0d00 4 rocksdb: Options.is_fd_close_on_exec: 1

2024-12-08 02:49:54.622503 7f3d013e0d00 4 rocksdb: Options.advise_random_on_open: 1

2024-12-08 02:49:54.622504 7f3d013e0d00 4 rocksdb: Options.db_write_buffer_size: 0

2024-12-08 02:49:54.622504 7f3d013e0d00 4 rocksdb: Options.access_hint_on_compaction_start: 1

2024-12-08 02:49:54.622505 7f3d013e0d00 4 rocksdb: Options.new_table_reader_for_compaction_inputs: 1

2024-12-08 02:49:54.622505 7f3d013e0d00 4 rocksdb: Options.compaction_readahead_size: 2097152

2024-12-08 02:49:54.622506 7f3d013e0d00 4 rocksdb: Options.random_access_max_buffer_size: 1048576

2024-12-08 02:49:54.622507 7f3d013e0d00 4 rocksdb: Options.writable_file_max_buffer_size: 0

2024-12-08 02:49:54.622507 7f3d013e0d00 4 rocksdb: Options.use_adaptive_mutex: 0

2024-12-08 02:49:54.622508 7f3d013e0d00 4 rocksdb: Options.rate_limiter: (nil)

2024-12-08 02:49:54.622509 7f3d013e0d00 4 rocksdb: Options.sst_file_manager.rate_bytes_per_sec: 0

2024-12-08 02:49:54.622510 7f3d013e0d00 4 rocksdb: Options.bytes_per_sync: 0

2024-12-08 02:49:54.622511 7f3d013e0d00 4 rocksdb: Options.wal_bytes_per_sync: 0

2024-12-08 02:49:54.622511 7f3d013e0d00 4 rocksdb: Options.wal_recovery_mode: 0

2024-12-08 02:49:54.622512 7f3d013e0d00 4 rocksdb: Options.enable_thread_tracking: 0

2024-12-08 02:49:54.622512 7f3d013e0d00 4 rocksdb: Options.allow_concurrent_memtable_write: 1

2024-12-08 02:49:54.622513 7f3d013e0d00 4 rocksdb: Options.enable_write_thread_adaptive_yield: 1

2024-12-08 02:49:54.622514 7f3d013e0d00 4 rocksdb: Options.write_thread_max_yield_usec: 100

2024-12-08 02:49:54.622514 7f3d013e0d00 4 rocksdb: Options.write_thread_slow_yield_usec: 3

2024-12-08 02:49:54.622515 7f3d013e0d00 4 rocksdb: Options.row_cache: None

2024-12-08 02:49:54.622516 7f3d013e0d00 4 rocksdb: Options.wal_filter: None

2024-12-08 02:49:54.622516 7f3d013e0d00 4 rocksdb: Options.avoid_flush_during_recovery: 0

2024-12-08 02:49:54.622517 7f3d013e0d00 4 rocksdb: Options.base_background_compactions: 1

2024-12-08 02:49:54.622518 7f3d013e0d00 4 rocksdb: Options.max_background_compactions: 1

2024-12-08 02:49:54.622518 7f3d013e0d00 4 rocksdb: Options.avoid_flush_during_shutdown: 0

2024-12-08 02:49:54.622519 7f3d013e0d00 4 rocksdb: Options.delayed_write_rate : 16777216

2024-12-08 02:49:54.622520 7f3d013e0d00 4 rocksdb: Options.max_total_wal_size: 0

2024-12-08 02:49:54.622522 7f3d013e0d00 4 rocksdb: Options.delete_obsolete_files_period_micros: 21600000000

2024-12-08 02:49:54.622536 7f3d013e0d00 4 rocksdb: Options.stats_dump_period_sec: 600

2024-12-08 02:49:54.622537 7f3d013e0d00 4 rocksdb: Compression algorithms supported:

2024-12-08 02:49:54.622540 7f3d013e0d00 4 rocksdb: Snappy supported: 1

2024-12-08 02:49:54.622540 7f3d013e0d00 4 rocksdb: Zlib supported: 0

2024-12-08 02:49:54.622541 7f3d013e0d00 4 rocksdb: Bzip supported: 0

2024-12-08 02:49:54.622542 7f3d013e0d00 4 rocksdb: LZ4 supported: 0

2024-12-08 02:49:54.622543 7f3d013e0d00 4 rocksdb: ZSTD supported: 0

2024-12-08 02:49:54.622544 7f3d013e0d00 4 rocksdb: Fast CRC32 supported: 1

2024-12-08 02:49:54.636521 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/version_set.cc:2609] Recovering from manifest file: MANIFEST-293268

2024-12-08 02:49:54.636605 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/column_family.cc:407] --------------- Options for column family [default]:

2024-12-08 02:49:54.636614 7f3d013e0d00 4 rocksdb: Options.comparator: leveldb.BytewiseComparator

2024-12-08 02:49:54.636626 7f3d013e0d00 4 rocksdb: Options.merge_operator: .T:int64_array.b:bitwise_xor

2024-12-08 02:49:54.636628 7f3d013e0d00 4 rocksdb: Options.compaction_filter: None

2024-12-08 02:49:54.636632 7f3d013e0d00 4 rocksdb: Options.compaction_filter_factory: None

2024-12-08 02:49:54.636635 7f3d013e0d00 4 rocksdb: Options.memtable_factory: SkipListFactory

2024-12-08 02:49:54.636636 7f3d013e0d00 4 rocksdb: Options.table_factory: BlockBasedTable

2024-12-08 02:49:54.636665 7f3d013e0d00 4 rocksdb: table_factory options: flush_block_policy_factory: FlushBlockBySizePolicyFactory (0x7f3d0baf21c8)

cache_index_and_filter_blocks: 1

cache_index_and_filter_blocks_with_high_priority: 1

pin_l0_filter_and_index_blocks_in_cache: 1

index_type: 0

hash_index_allow_collision: 1

checksum: 1

no_block_cache: 0

block_cache: 0x7f3d0bb30698

block_cache_name: LRUCache

block_cache_options:

capacity : 536870912

num_shard_bits : 4

strict_capacity_limit : 0

high_pri_pool_ratio: 0.000

block_cache_compressed: (nil)

persistent_cache: (nil)

block_size: 4096

block_size_deviation: 10

block_restart_interval: 16

index_block_restart_interval: 1

filter_policy: rocksdb.BuiltinBloomFilter

whole_key_filtering: 1

format_version: 2

2024-12-08 02:49:54.636672 7f3d013e0d00 4 rocksdb: Options.write_buffer_size: 268435456

2024-12-08 02:49:54.636676 7f3d013e0d00 4 rocksdb: Options.max_write_buffer_number: 4

2024-12-08 02:49:54.636678 7f3d013e0d00 4 rocksdb: Options.compression: NoCompression

2024-12-08 02:49:54.636680 7f3d013e0d00 4 rocksdb: Options.bottommost_compression: Disabled

2024-12-08 02:49:54.636682 7f3d013e0d00 4 rocksdb: Options.prefix_extractor: nullptr

2024-12-08 02:49:54.636684 7f3d013e0d00 4 rocksdb: Options.memtable_insert_with_hint_prefix_extractor: nullptr

2024-12-08 02:49:54.636686 7f3d013e0d00 4 rocksdb: Options.num_levels: 7

2024-12-08 02:49:54.636687 7f3d013e0d00 4 rocksdb: Options.min_write_buffer_number_to_merge: 1

2024-12-08 02:49:54.636690 7f3d013e0d00 4 rocksdb: Options.max_write_buffer_number_to_maintain: 0

2024-12-08 02:49:54.636692 7f3d013e0d00 4 rocksdb: Options.compression_opts.window_bits: -14

2024-12-08 02:49:54.636695 7f3d013e0d00 4 rocksdb: Options.compression_opts.level: -1

2024-12-08 02:49:54.636697 7f3d013e0d00 4 rocksdb: Options.compression_opts.strategy: 0

2024-12-08 02:49:54.636698 7f3d013e0d00 4 rocksdb: Options.compression_opts.max_dict_bytes: 0

2024-12-08 02:49:54.636701 7f3d013e0d00 4 rocksdb: Options.level0_file_num_compaction_trigger: 4

2024-12-08 02:49:54.636703 7f3d013e0d00 4 rocksdb: Options.level0_slowdown_writes_trigger: 20

2024-12-08 02:49:54.636704 7f3d013e0d00 4 rocksdb: Options.level0_stop_writes_trigger: 36

2024-12-08 02:49:54.636705 7f3d013e0d00 4 rocksdb: Options.target_file_size_base: 67108864

2024-12-08 02:49:54.636706 7f3d013e0d00 4 rocksdb: Options.target_file_size_multiplier: 1

2024-12-08 02:49:54.636709 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_base: 268435456

2024-12-08 02:49:54.636711 7f3d013e0d00 4 rocksdb: Options.level_compaction_dynamic_level_bytes: 0

2024-12-08 02:49:54.636712 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier: 10.000000

2024-12-08 02:49:54.636719 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[0]: 1

2024-12-08 02:49:54.636721 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[1]: 1

2024-12-08 02:49:54.636722 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[2]: 1

2024-12-08 02:49:54.636723 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[3]: 1

2024-12-08 02:49:54.636727 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[4]: 1

2024-12-08 02:49:54.636729 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[5]: 1

2024-12-08 02:49:54.636730 7f3d013e0d00 4 rocksdb: Options.max_bytes_for_level_multiplier_addtl[6]: 1

2024-12-08 02:49:54.636731 7f3d013e0d00 4 rocksdb: Options.max_sequential_skip_in_iterations: 8

2024-12-08 02:49:54.636733 7f3d013e0d00 4 rocksdb: Options.max_compaction_bytes: 1677721600

2024-12-08 02:49:54.636734 7f3d013e0d00 4 rocksdb: Options.arena_block_size: 33554432

2024-12-08 02:49:54.636736 7f3d013e0d00 4 rocksdb: Options.soft_pending_compaction_bytes_limit: 68719476736

2024-12-08 02:49:54.636737 7f3d013e0d00 4 rocksdb: Options.hard_pending_compaction_bytes_limit: 274877906944

2024-12-08 02:49:54.636739 7f3d013e0d00 4 rocksdb: Options.rate_limit_delay_max_milliseconds: 100

2024-12-08 02:49:54.636740 7f3d013e0d00 4 rocksdb: Options.disable_auto_compactions: 0

2024-12-08 02:49:54.636744 7f3d013e0d00 4 rocksdb: Options.compaction_style: kCompactionStyleLevel

2024-12-08 02:49:54.636746 7f3d013e0d00 4 rocksdb: Options.compaction_pri: kByCompensatedSize

2024-12-08 02:49:54.636756 7f3d013e0d00 4 rocksdb: Options.compaction_options_universal.size_ratio: 1

2024-12-08 02:49:54.636758 7f3d013e0d00 4 rocksdb: Options.compaction_options_universal.min_merge_width: 2

2024-12-08 02:49:54.636759 7f3d013e0d00 4 rocksdb: Options.compaction_options_universal.max_merge_width: 4294967295

2024-12-08 02:49:54.636761 7f3d013e0d00 4 rocksdb: Options.compaction_options_universal.max_size_amplification_percent: 200

2024-12-08 02:49:54.636762 7f3d013e0d00 4 rocksdb: Options.compaction_options_universal.compression_size_percent: -1

2024-12-08 02:49:54.636763 7f3d013e0d00 4 rocksdb: Options.compaction_options_fifo.max_table_files_size: 1073741824

2024-12-08 02:49:54.636765 7f3d013e0d00 4 rocksdb: Options.table_properties_collectors:

2024-12-08 02:49:54.636766 7f3d013e0d00 4 rocksdb: Options.inplace_update_support: 0

2024-12-08 02:49:54.636767 7f3d013e0d00 4 rocksdb: Options.inplace_update_num_locks: 10000

2024-12-08 02:49:54.636769 7f3d013e0d00 4 rocksdb: Options.memtable_prefix_bloom_size_ratio: 0.000000

2024-12-08 02:49:54.636771 7f3d013e0d00 4 rocksdb: Options.memtable_huge_page_size: 0

2024-12-08 02:49:54.636772 7f3d013e0d00 4 rocksdb: Options.bloom_locality: 0

2024-12-08 02:49:54.636773 7f3d013e0d00 4 rocksdb: Options.max_successive_merges: 0

2024-12-08 02:49:54.636775 7f3d013e0d00 4 rocksdb: Options.optimize_filters_for_hits: 0

2024-12-08 02:49:54.636776 7f3d013e0d00 4 rocksdb: Options.paranoid_file_checks: 0

2024-12-08 02:49:54.636777 7f3d013e0d00 4 rocksdb: Options.force_consistency_checks: 0

2024-12-08 02:49:54.636778 7f3d013e0d00 4 rocksdb: Options.report_bg_io_stats: 0

2024-12-08 02:55:23.594424 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/version_set.cc:2859] Recovered from manifest file:db/MANIFEST-293268 succeeded,manifest_file_number is 293268, next_file_number is 293303, last_sequence is 19032676262, log_number is 0,prev_log_number is 0,max_column_family is 0

2024-12-08 02:55:23.594457 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/version_set.cc:2867] Column family [default] (ID 0), log number is 293265

2024-12-08 02:55:23.661365 7f3d013e0d00 4 rocksdb: EVENT_LOG_v1 {"time_micros": 1733622923661345, "job": 1, "event": "recovery_started", "log_files": [293269]}

2024-12-08 02:55:23.661377 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/db_impl_open.cc:482] Recovering log #293269 mode 0

2024-12-08 02:55:23.803529 7f3d013e0d00 4 rocksdb: EVENT_LOG_v1 {"time_micros": 1733622923803504, "cf_name": "default", "job": 1, "event": "table_file_creation", "file_number": 293303, "file_size": 1735621, "table_properties": {"data_size": 1657075, "index_size": 22662, "filter_size": 54902, "raw_key_size": 1017829, "raw_average_key_size": 49, "raw_value_size": 1262447, "raw_average_value_size": 61, "num_data_blocks": 414, "num_entries": 20477, "filter_policy_name": "rocksdb.BuiltinBloomFilter", "kDeletedKeys": "14387", "kMergeOperands": "69"}}

2024-12-08 02:55:23.805658 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/version_set.cc:2395] Creating manifest 293304

2024-12-08 02:55:23.854165 7f3d013e0d00 4 rocksdb: EVENT_LOG_v1 {"time_micros": 1733622923854154, "job": 1, "event": "recovery_finished"}

2024-12-08 02:55:23.896475 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/db_impl_open.cc:1063] DB pointer 0x7f3d0cc22000

2024-12-08 02:55:23.897636 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) _open_db opened rocksdb path db options compression=kNoCompression,max_write_buffer_number=4,min_write_buffer_number_to_merge=1,recycle_log_file_num=4,write_buffer_size=268435456,writable_file_max_buffer_size=0,compaction_readahead_size=2097152

2024-12-08 02:55:23.911727 7f3d013e0d00 1 freelist init

2024-12-08 02:55:24.015320 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) _open_alloc opening allocation metadata

2024-12-08 02:55:24.368699 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) _open_alloc loaded 6841 G in 76716 extents

2024-12-08 02:55:24.374005 7f3d013e0d00 1 bluestore(/var/lib/ceph/osd/ceph-9) umount

2024-12-08 02:55:24.374365 7f3d013e0d00 1 stupidalloc 0x0x7f3d0bb30f40 shutdown

2024-12-08 02:55:24.374738 7f3d013e0d00 1 freelist shutdown

2024-12-08 02:55:24.374763 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/db_impl.cc:217] Shutdown: canceling all background work

2024-12-08 02:55:24.788435 7f3d013e0d00 4 rocksdb: [/home/jenkins-build/build/workspace/ceph-build/ARCH/x86_64/AVAILABLE_ARCH/x86_64/AVAILABLE_DIST/centos7/DIST/centos7/MACHINE_SIZE/huge/release/12.2.7/rpm/el7/BUILD/ceph-12.2.7/src/rocksdb/db/db_impl.cc:343] Shutdown complete

2024-12-08 02:55:24.789585 7f3d013e0d00 1 bluefs umount

2024-12-08 02:55:24.792720 7f3d013e0d00 1 stupidalloc 0x0x7f3d0bb303e0 shutdown

2024-12-08 02:55:24.809305 7f3d013e0d00 1 bdev(0x7f3d0bb37e00 /var/lib/ceph/osd/ceph-9/block) close

2024-12-08 02:55:25.051161 7f3d013e0d00 1 bdev(0x7f3d0bb37800 /var/lib/ceph/osd/ceph-9/block) close

2024-12-08 02:55:25.276194 7f3d013e0d00 -1 flushed journal /var/lib/ceph/osd/ceph-9/journal for object store /var/lib/ceph/osd/ceph-9

In the end this still did not work; the OSD will probably have to be rebuilt.

Next, look at the details of pg 6.36:

ceph pg 6.36 query |more

{

"state": "active+undersized+degraded+remapped+inconsistent+backfill_wait",

"snap_trimq": "[]",

"snap_trimq_len": 0,

"epoch": 144397,

"up": [

9,

10,

13

],

"acting": [

9,

10

],

"backfill_targets": [

"13"

],

"actingbackfill": [

"9",

"10",

"13"

],

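The output of ceph pg 6.36 query shows the following.

PG state: active+undersized+degraded+remapped+inconsistent+backfill_wait

Each flag points to a separate problem with this PG:

undersized: the PG currently has fewer replicas than the expected replica count.

degraded: the data is not fully up to date or complete on every replica; copies are still being recovered or synchronized.

remapped: the PG has been remapped to a different set of OSDs for some reason (for example, an OSD changed state).

inconsistent: the replicas disagree with each other and the PG needs a repair.

backfill_wait: the PG is waiting for its backfill to start, usually because the target OSD has a problem or is busy.

acting set: [9, 10]

Only two OSDs (9 and 10) are currently serving this PG, so one replica is missing (three replicas are expected).

backfill_targets: ["13"]

OSD 13 has been chosen as the backfill target, and the PG is waiting to copy its data to OSD 13.

actingbackfill: [9, 10, 13]

The backfill for this PG is either in progress or waiting to start; this list is the acting set plus the backfill target.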
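The reading of the query output above can be sketched in a few lines of Python. This is only an illustration: the function name `summarize_pg` is made up here, the sample dictionary copies just the four fields quoted above, and it assumes a replicated pool where backfill targets are plain OSD ids.

```python
import json

def summarize_pg(query: dict) -> dict:
    """Summarize replica placement from a `ceph pg <pgid> query` result."""
    up = query.get("up", [])
    acting = query.get("acting", [])
    # backfill_targets are reported as strings in the output shown above;
    # assumption: replicated pool, so each entry is a bare OSD id
    targets = [int(t) for t in query.get("backfill_targets", [])]
    return {
        "state_flags": query.get("state", "").split("+"),
        # OSDs that CRUSH wants (up) but that are not serving the PG yet
        "missing_from_acting": sorted(set(up) - set(acting)),
        "backfill_targets": targets,
    }

# Sample data: only the fields quoted in the query output above
sample = json.loads("""
{
  "state": "active+undersized+degraded+remapped+inconsistent+backfill_wait",
  "up": [9, 10, 13],
  "acting": [9, 10],
  "backfill_targets": ["13"]
}
""")

summary = summarize_pg(sample)
print("flags:", summary["state_flags"])
print("in up but not in acting:", summary["missing_from_acting"])
print("backfill targets:", summary["backfill_targets"])
```

On a live cluster the dictionary would come from parsing the JSON that `ceph pg 6.36 query` prints, instead of the hand-written sample.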

If the backfill is blocked, it can be forced (these are ceph pg subcommands and take a PG ID):

ceph pg force-recovery 6.36

ceph pg force-backfill 6.36

Ceph operations references

Operations handbook: https://lihaijing.gitbooks.io/ceph-handbook/content/Troubleshooting/troubleshooting_pg.html

Detailed RGW walkthrough: https://www.cnblogs.com/punchlinux/p/17070273.html

Official PG repair documentation: https://docs.ceph.com/en/reef/rados/troubleshooting/troubleshooting-pg/#pgs-inconsistent

An English-language operations cheat sheet with fairly complete coverage:

https://github.com/TheJJ/ceph-cheatsheet

Repairing unknown PGs

repair cannot fix these PGs; in the end the force-create command was used.

Use health detail to see which PGs are unknown:

ceph health detail

HEALTH_ERR 20935710/92498685 objects misplaced (22.634%); 8 scrub errors; Reduced data availability: 4 pgs inactive; Possible data damage: 1 pg inconsistent; Degraded data redundancy: 13874542/92498685 objects degraded (15.000%), 126 pgs degraded, 126 pgs undersized; 1 slow requests are blocked > 32 sec. Implicated osds 13; 3 stuck requests are blocked > 4096 sec. Implicated osds 16; clock skew detected on mon.bigdata-39; 1/3 mons down, quorum bigdata-38,bigdata-39

OBJECT_MISPLACED 20935710/92498685 objects misplaced (22.634%)

OSD_SCRUB_ERRORS 8 scrub errors

PG_AVAILABILITY Reduced data availability: 4 pgs inactive

pg 2.6 is stuck inactive for 213085.245908, current state unknown, last acting []

pg 3.56 is stuck inactive for 213085.245908, current state unknown, last acting []

pg 4.12 is stuck inactive for 213085.245908, current state unknown, last acting []

pg 6.70 is stuck inactive for 11267.486731, current state undersized+degraded+remapped+backfilling+peered, last acting [10]

PG_DAMAGED Possible data damage: 1 pg inconsistent

pg 6.36 is active+undersized+degraded+remapped+inconsistent+backfill_wait, acting [9,10]

PG_DEGRADED Degraded data redundancy: 13874542/92498685 objects degraded (15.000%), 126 pgs degraded, 126 pgs undersized

pg 2.6e is stuck undersized for 2706.364393, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,9]

pg 3.14 is stuck undersized for 11266.484468, current state active+undersized+degraded+remapped+backfill_wait, last acting [16,12]

pg 3.19 is stuck undersized for 2706.363600, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,18]

pg 3.20 is stuck undersized for 17261.663826, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,16]

pg 3.22 is stuck undersized for 2706.361310, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,18]

pg 3.23 is stuck undersized for 10714.216787, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,16]

pg 3.24 is stuck undersized for 2988.746385, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,10]

pg 3.66 is stuck undersized for 10714.284006, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,16]

pg 3.6c is stuck undersized for 11266.491201, current state active+undersized+degraded+remapped+backfill_wait, last acting [7,12]

pg 3.6d is stuck undersized for 2699.317849, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,15]

pg 3.74 is stuck undersized for 11266.484022, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,15]

pg 3.79 is stuck undersized for 10716.635373, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,9]

pg 3.7f is stuck undersized for 11266.479744, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,15]

pg 4.13 is active+undersized+degraded+remapped+backfill_wait, acting [1,16]

pg 4.20 is stuck undersized for 2706.345836, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,11]

pg 4.29 is stuck undersized for 2958.118844, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,9]

pg 4.66 is stuck undersized for 2989.761319, current state active+undersized+degraded+remapped+backfill_wait, last acting [2,10]

pg 4.6c is stuck undersized for 16312.304117, current state active+undersized+degraded+remapped+backfill_wait, last acting [16,1]

pg 4.6d is stuck undersized for 11266.489239, current state active+undersized+degraded+remapped+backfill_wait, last acting [16,6]

pg 4.6e is stuck undersized for 2989.761198, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,7]

pg 4.6f is stuck undersized for 11265.479589, current state active+undersized+degraded+remapped+backfill_wait, last acting [6,15]

pg 4.78 is stuck undersized for 15305.672658, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,18]

pg 4.7f is stuck undersized for 2989.759372, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,10]

pg 6.10 is stuck undersized for 11233.746849, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,18]

pg 6.11 is stuck undersized for 2989.739705, current state active+undersized+degraded+remapped+backfill_wait, last acting [12,16]

pg 6.1a is stuck undersized for 2989.762446, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,12]

pg 6.22 is stuck undersized for 10716.596551, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,9]

pg 6.25 is stuck undersized for 45335.606261, current state active+undersized+degraded+remapped+backfilling, last acting [2,16]

pg 6.26 is stuck undersized for 17261.645839, current state active+undersized+degraded+remapped+backfill_wait, last acting [5,12]

pg 6.27 is stuck undersized for 2699.328012, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,1]

pg 6.2b is stuck undersized for 16307.264674, current state active+undersized+degraded+remapped+backfilling, last acting [1,15]

pg 6.2d is stuck undersized for 16312.303026, current state active+undersized+degraded+remapped+backfilling, last acting [2,16]

pg 6.2e is stuck undersized for 45061.676706, current state active+undersized+degraded+remapped+backfilling, last acting [1,12]

pg 6.60 is stuck undersized for 11266.487019, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,12]

pg 6.61 is stuck undersized for 10838.691206, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,10]

pg 6.62 is stuck undersized for 45276.027714, current state active+undersized+degraded+remapped+backfill_wait, last acting [6,18]

pg 6.63 is stuck undersized for 2706.360383, current state active+undersized+degraded+remapped+backfill_wait, last acting [12,11]

pg 6.64 is stuck undersized for 15305.665953, current state active+undersized+degraded+remapped+backfill_wait, last acting [1,7]

pg 6.65 is stuck undersized for 2706.362044, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,11]

pg 6.66 is stuck undersized for 10838.837738, current state active+undersized+degraded+remapped+backfill_wait, last acting [7,10]

pg 6.67 is stuck undersized for 16307.260177, current state active+undersized+degraded+remapped+backfilling, last acting [15,6]

pg 6.6c is stuck undersized for 10716.620848, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,12]

pg 6.6e is stuck undersized for 16307.264700, current state active+undersized+degraded+remapped+backfill_wait, last acting [15,18]

pg 6.70 is stuck undersized for 10837.849826, current state undersized+degraded+remapped+backfilling+peered, last acting [10]

pg 6.72 is stuck undersized for 17094.159452, current state active+undersized+degraded+remapped+backfilling, last acting [12,1]

pg 6.76 is stuck undersized for 10714.235739, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,5]

pg 6.77 is stuck undersized for 16860.026431, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,13]

pg 6.78 is stuck undersized for 10716.518456, current state active+undersized+degraded+remapped+backfill_wait, last acting [9,18]

pg 6.7b is stuck undersized for 10838.832694, current state active+undersized+degraded+remapped+backfill_wait, last acting [10,7]

pg 6.7d is stuck undersized for 2706.369624, current state active+undersized+degraded+remapped+backfill_wait, last acting [18,11]

pg 6.7f is stuck undersized for 45061.673564, current state active+undersized+degraded+remapped+backfill_wait, last acting [16,12]

REQUEST_SLOW 1 slow requests are blocked > 32 sec. Implicated osds 13

1 ops are blocked > 32.768 sec

osd.13 has blocked requests > 32.768 sec

REQUEST_STUCK 3 stuck requests are blocked > 4096 sec. Implicated osds 16

2 ops are blocked > 33554.4 sec

1 ops are blocked > 16777.2 sec

osd.16 has stuck requests > 33554.4 sec

MON_CLOCK_SKEW clock skew detected on mon.bigdata-39

mon.bigdata-39 addr 10.21.0.49:6789/0 clock skew 6.95044s > max 0.5s (latency 0.000904326s)

MON_DOWN 1/3 mons down, quorum bigdata-38,bigdata-39

mon.bigdata-37 (rank 0) addr 10.21.0.47:6789/0 is down (out of quorum)

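The output above shows that pg 2.6, pg 3.56 and others are in the unknown state. Each such PG is force-created, for example:

$ ceph osd force-create-pg 1.69

pg 1.69 now creating, ok

This output means the PG was created successfully. Note that force-create-pg should only be used on PGs that are already in the unknown state: the data of such a PG is already completely lost, so recreating it does no further harm. On a PG that could still be repaired by other means, force-create-pg loses the data.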
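Picking the unknown PGs out of a long ceph health detail listing by eye is error-prone. Below is a minimal sketch that filters them with a regular expression and prints the matching force-create-pg commands for review without running anything. The helper name `unknown_pgs` is hypothetical, and the line format is assumed to match the Luminous output pasted above.

```python
import re

# Matches lines such as:
#   pg 2.6 is stuck inactive for 213085.245908, current state unknown, last acting []
UNKNOWN_PG = re.compile(
    r"^\s*pg (\S+) is stuck inactive for [\d.]+, current state unknown,"
)

def unknown_pgs(health_detail: str) -> list:
    """Return the ids of PGs that health detail reports as 'unknown'."""
    found = []
    for line in health_detail.splitlines():
        match = UNKNOWN_PG.match(line)
        if match:
            found.append(match.group(1))
    return found

# Sample lines copied from the health detail output above
sample = """\
PG_AVAILABILITY Reduced data availability: 4 pgs inactive
pg 2.6 is stuck inactive for 213085.245908, current state unknown, last acting []
pg 3.56 is stuck inactive for 213085.245908, current state unknown, last acting []
pg 4.12 is stuck inactive for 213085.245908, current state unknown, last acting []
pg 6.70 is stuck inactive for 11267.486731, current state undersized+degraded+remapped+backfilling+peered, last acting [10]
"""

pgs = unknown_pgs(sample)
for pgid in pgs:
    # Printed for manual review only; nothing is executed here
    print(f"ceph osd force-create-pg {pgid}")
```

pg 6.70 is deliberately skipped: it is inactive but not unknown, so it still has data that backfill can restore.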

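Fixing REQUEST_STUCK

This cleared by itself once enough PGs had returned to active.

Open question

One puzzle remains: no scrub error logs could be found on either osd.9 or osd.10, and it is unclear whether this is due to the Ceph version or to something else. A PG that is inconsistent and reports scrub errors would normally be expected to leave error entries in the OSD logs.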

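Repairing a down PG

Check the cluster status.

Check the state of pg 6.72: ceph pg 6.52 query

"peering_blocked_by"

This field lists the OSDs that are blocking peering. They have to be dealt with before the PG can return to a normal state:

OSD 0: current_lost_at: 0 (possibly lost or unable to start)

OSD 25: current_lost_at: 0 (likewise possibly lost or unable to start)

Recommendation: starting these OSDs, or marking them as lost, may allow peering to proceed.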
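The blocking OSDs can also be pulled out of the query JSON programmatically. The sketch below assumes that the peering_blocked_by list sits inside the entries of the recovery_state array, and the sample dictionary is hand-built from the two OSDs named above rather than copied from real output. `blocking_osds` is a hypothetical helper name.

```python
def blocking_osds(query: dict) -> list:
    """Collect (osd id, current_lost_at) pairs that block peering."""
    blockers = []
    # Assumption: peering_blocked_by appears inside recovery_state entries
    for stage in query.get("recovery_state", []):
        for entry in stage.get("peering_blocked_by", []):
            blockers.append((entry.get("osd"), entry.get("current_lost_at")))
    return blockers

# Hand-built sample mirroring the two OSDs described above
sample = {
    "state": "down",
    "recovery_state": [
        {
            "name": "Started/Primary/Peering",
            "peering_blocked_by": [
                {"osd": 0, "current_lost_at": 0},
                {"osd": 25, "current_lost_at": 0},
            ],
        },
        {"name": "Started"},
    ],
}

blockers = blocking_osds(sample)
for osd, lost_at in blockers:
    print(f"osd.{osd} blocks peering (current_lost_at={lost_at})")
```

An OSD that cannot be brought back is marked lost with `ceph osd lost <id>`. That step is irreversible, so it is only worth considering once starting the OSD has really failed.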

Repairing incomplete PGs

In the pg query output, the recovery section reports "peering_blocked_by_history_les_bound":

$ ceph pg 5.2f query

........

"peering_blocked_by_detail": [

{

"detail": "peering_blocked_by_history_les_bound"

}

]

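A PG in this state cannot recover by itself. Find the OSD that holds the PG's primary (osd.21 in this example) and edit the Ceph configuration file on that host,

adding the line osd_find_best_info_ignore_history_les = true, then restart that OSD:

systemctl restart ceph-osd@21.service

After the restart the PG recovered.

Finally, remove the added line from the configuration file and restart the OSD service once more, so that the option does not stay enabled.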
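Before touching the configuration it is worth confirming that the PG is blocked by exactly this condition. A minimal check over the parsed query JSON could look like the sketch below. It assumes that peering_blocked_by_detail sits inside the recovery_state entries, and both the sample dictionaries and the function name are illustrative only.

```python
MARKER = "peering_blocked_by_history_les_bound"

def blocked_by_history_les_bound(query: dict) -> bool:
    """True if any recovery_state entry reports the history_les_bound block."""
    # Assumption: peering_blocked_by_detail appears inside recovery_state entries
    for stage in query.get("recovery_state", []):
        for item in stage.get("peering_blocked_by_detail", []):
            if item.get("detail") == MARKER:
                return True
    return False

# Illustrative samples; only the detail entry is taken from the output above
blocked = {
    "recovery_state": [
        {"peering_blocked_by_detail": [{"detail": MARKER}]},
    ]
}
healthy = {"recovery_state": [{"name": "Started/Primary/Active"}]}

print(blocked_by_history_les_bound(blocked))
print(blocked_by_history_les_bound(healthy))
```

If the check returns False, the PG is incomplete for some other reason and the configuration change described above is unlikely to help.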

How to speed up recovery

The recovery rate is shown in the output of ceph -s:

[root@bigdata-40 ~]# ceph -s

cluster:

id: 4114abcc-93fd-42ef-932f-9f8f5d4de01d

health: HEALTH_WARN

12664058/92500155 objects misplaced (13.691%)

Reduced data availability: 14 pgs inactive

Degraded data redundancy: 10607844/92500155 objects degraded (11.468%), 97 pgs degraded, 96 pgs undersized

clock skew detected on mon.bigdata-39

1/3 mons down, quorum bigdata-38,bigdata-39

services:

mon: 3 daemons, quorum bigdata-38,bigdata-39, out of quorum: bigdata-37

mgr: bigdata-38(active), standbys: bigdata-39

osd: 14 osds: 14 up, 14 in; 176 remapped pgs

rgw: 3 daemons active

data:

pools: 7 pools, 656 pgs

objects: 30110k objects, 447 GB

usage: 51302 GB used, 53019 GB / 101 TB avail

pgs: 2.134% pgs not active

10607844/92500155 objects degraded (11.468%)

12664058/92500155 objects misplaced (13.691%)

475 active+clean

67 active+undersized+degraded+remapped+backfill_wait

63 active+remapped+backfill_wait

19 active+remapped+backfilling

12 active+undersized+degraded+remapped+backfilling

7 undersized+degraded+remapped+backfill_wait+peered

5 undersized+degraded+remapped+backfilling+peered

2 active+recovery_wait+undersized+degraded+remapped

1 active+recovery_wait+degraded+remapped

1 undersized+degraded+remapped+backfilling+forced_backfill+peered

1 undersized+degraded+remapped+backfill_wait+forced_backfill+peered

1 active+recovery_wait+remapped

1 active+recovery_wait+degraded

1 active+undersized+remapped

io:

recovery: 595 kB/s, 24 objects/s

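A few hundred kB/s is clearly too slow. Reference consulted: https://cloud.tencent.com/developer/article/2074531

Run the following commands against the OSDs that matter most, or against every OSD (values injected this way only last until the OSD restarts):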

ceph tell osd.3 injectargs --osd_max_backfills=128

ceph tell osd.3 injectargs --osd_recovery_op_priority=0

ceph tell osd.3 injectargs --osd_recovery_max_active=64

ceph tell osd.3 injectargs --osd_recovery_max_single_start=64

ceph tell osd.3 injectargs --osd_recovery_sleep_hdd=0
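Typing the five commands for every OSD gets tedious. The sketch below generates the same command lines for a list of OSD ids so that they can be reviewed and pasted into a shell. The OSD ids 3 and 8 are placeholders, the values are the ones used above, and nothing is executed.

```python
# Recovery tuning values taken from the commands above
SETTINGS = {
    "osd_max_backfills": 128,
    "osd_recovery_op_priority": 0,
    "osd_recovery_max_active": 64,
    "osd_recovery_max_single_start": 64,
    "osd_recovery_sleep_hdd": 0,
}

def injectargs_commands(osd_ids, settings=SETTINGS):
    """Build one `ceph tell ... injectargs` line per OSD and setting."""
    return [
        f"ceph tell osd.{osd} injectargs --{name}={value}"
        for osd in osd_ids
        for name, value in settings.items()
    ]

# Placeholder OSD ids; replace with the OSDs that are actually backfilling
commands = injectargs_commands([3, 8])
for command in commands:
    print(command)
```

These values favor recovery over client traffic, so slow client requests may appear while they are in effect. Keeping the original values at hand makes it easy to inject them back once the cluster is healthy.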

Afterwards the recovery rate is visibly higher:

[root@bigdata-40 ~]# ceph -s

cluster:

id: 4114abcc-93fd-42ef-932f-9f8f5d4de01d

health: HEALTH_WARN

12594078/92500155 objects misplaced (13.615%)

Reduced data availability: 13 pgs inactive

Degraded data redundancy: 10579546/92500155 objects degraded (11.437%), 90 pgs degraded, 88 pgs undersized

2 slow requests are blocked > 32 sec. Implicated osds 6,10

clock skew detected on mon.bigdata-39

1/3 mons down, quorum bigdata-38,bigdata-39

services:

mon: 3 daemons, quorum bigdata-38,bigdata-39, out of quorum: bigdata-37

mgr: bigdata-38(active), standbys: bigdata-39

osd: 14 osds: 14 up, 14 in; 163 remapped pgs

rgw: 3 daemons active

data:

pools: 7 pools, 656 pgs

objects: 30110k objects, 447 GB

usage: 51312 GB used, 53009 GB / 101 TB avail

pgs: 1.982% pgs not active

10579546/92500155 objects degraded (11.437%)

12594078/92500155 objects misplaced (13.615%)

492 active+clean

65 active+undersized+degraded+remapped+backfill_wait

63 active+remapped+backfill_wait

10 active+remapped+backfilling

8 active+undersized+degraded+remapped+backfilling

7 undersized+degraded+remapped+backfill_wait+peered

5 undersized+degraded+remapped+backfilling+peered

2 active+recovery_wait+undersized+degraded+remapped

1 active+recovery_wait+degraded+remapped

1 undersized+degraded+remapped+backfilling+forced_backfill+peered

1 active+recovery_wait+degraded

1 active+recovery_wait+remapped

io:

recovery: 7736 kB/s, 195 kkeys/s, 504 objects/s
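To put a number on the improvement, the two recovery lines can be compared directly. The sketch below parses the throughput and object rate from each line and computes the ratios. It assumes the rate is printed in kB/s, as in both outputs above, and the function name `recovery_rate` is made up for this example.

```python
import re

# Assumption: throughput is reported in kB/s, as in both outputs above
RECOVERY = re.compile(r"recovery:\s*([\d.]+) kB/s,.*?(\d+) objects/s")

def recovery_rate(line: str):
    """Return (kB per second, objects per second) from a `ceph -s` recovery line."""
    match = RECOVERY.search(line)
    if match is None:
        raise ValueError(f"unrecognized recovery line: {line!r}")
    return float(match.group(1)), int(match.group(2))

before = recovery_rate("recovery: 595 kB/s, 24 objects/s")
after = recovery_rate("recovery: 7736 kB/s, 195 kkeys/s, 504 objects/s")

throughput_gain = after[0] / before[0]  # 7736 / 595
object_gain = after[1] / before[1]      # 504 / 24

print(f"throughput: x{throughput_gain:.1f}")
print(f"objects/s:  x{object_gain:.1f}")
```

With the figures above this comes to roughly 13 times the throughput and 21 times the object rate, taken from a single sample on each side.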

Notes on adding OSD nodes

posted on 2025-01-19 16:12 Mr.Ray.zhang
