Experiment 3: The Naive Bayes Algorithm and Its Applications


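I. Objectives

Understand the principle of the naive Bayes algorithm and master its overall framework;

Master the common Gaussian, multinomial and Bernoulli models;

Be able to choose an appropriate probability model for a given data type when implementing naive Bayes;

Be able to apply naive Bayes to practical problems in specific application scenarios and on specific data.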

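II. Content

Implement the Gaussian naive Bayes algorithm;

Become familiar with the naive Bayes algorithms in the sklearn library;

Apply sklearn's naive Bayes algorithm to the iris dataset for class prediction;

Apply a self-written naive Bayes algorithm to the iris dataset for class prediction.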

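III. Report Requirements

Following the experiment content, describe the experimental procedure, the algorithm and the test results;

Write standardized code: naming conventions and comments;

Analyze the complexity of the core algorithm;

Consult the literature and discuss the application scenarios of the different naive Bayes algorithms;

Discuss the advantages and disadvantages of naive Bayes.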

IV. Code

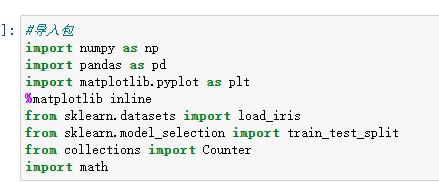

1.

```python
# Import packages
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
# Jupyter magic: show plots inline (remove when running as a plain script)
%matplotlib inline
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from collections import Counter
import math
```

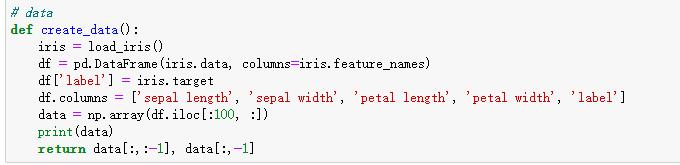

2.

```python
# Load the data
def create_data():
    iris = load_iris()
    df = pd.DataFrame(iris.data, columns=iris.feature_names)
    df['label'] = iris.target
    df.columns = ['sepal length', 'sepal width', 'petal length', 'petal width', 'label']
    # Keep only the first 100 rows (classes 0 and 1)
    data = np.array(df.iloc[:100, :])
    print(data)
    # Features are all columns but the last; the label is the last column
    return data[:, :-1], data[:, -1]
```
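Because `create_data` keeps only the first 100 rows, this experiment is really a two-class problem: scikit-learn's copy of iris is sorted by class, so those rows are the 50 setosa (label 0) and 50 versicolor (label 1) samples. A quick check, assuming the same `load_iris` call:

```python
import numpy as np
from sklearn.datasets import load_iris

iris = load_iris()
# The same slice create_data takes: the first 100 rows, labels only
labels = iris.target[:100]
classes, counts = np.unique(labels, return_counts=True)
print(classes, counts)                             # [0 1] [50 50]
print(iris.target_names[classes].tolist())         # ['setosa', 'versicolor']
```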

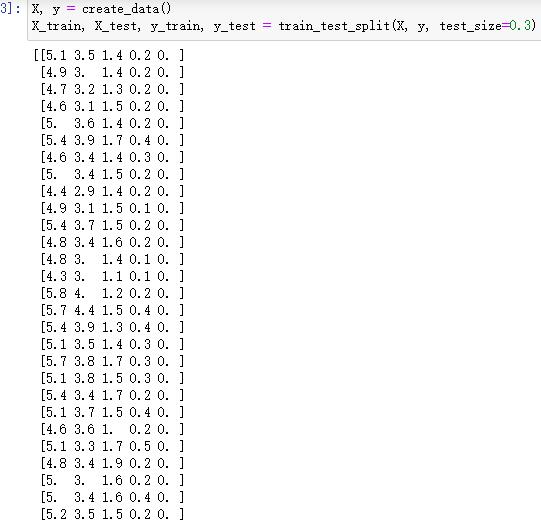

3.

```python
X, y = create_data()
# Hold out 30% of the samples as the test set
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3)
```
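Without `random_state`, every run draws a different split, so the accuracy reported in step 8 changes from run to run. A sketch of a reproducible split; the seed `42` is an arbitrary assumption, and `stratify=y` keeps the 50/50 class ratio in both parts:

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split

iris = load_iris()
X, y = iris.data[:100], iris.target[:100]  # same two-class subset as create_data

# random_state fixes the shuffle; stratify=y preserves class proportions
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y)
print(X_train.shape, X_test.shape)  # (70, 4) (30, 4)
```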

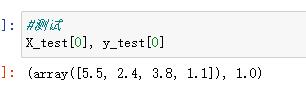

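4.

```python
# Inspect the first test sample and its label
X_test[0], y_test[0]
```

```
(array([5.6, 3. , 4.5, 1.5]), 1.0)
```

5.

GaussianNB (Gaussian naive Bayes): the likelihood of each feature is assumed to follow a Gaussian distribution.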
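Written out, with $\mu_{k,i}$ and $\sigma_{k,i}$ the mean and population standard deviation of feature $i$ over the $N_k$ training samples of class $c_k$ (the pairs that `summarize` returns), `gaussian_probability` evaluates the second formula below and the classification rule is the third. The standard rule includes the class prior $P(y = c_k)$; the class below leaves it out, which amounts to assuming equal priors (approximately true for a training split of the balanced 100-sample subset).

$$
\mu_{k,i} = \frac{1}{N_k}\sum_{n:\,y_n = c_k} x_{n,i}, \qquad
\sigma_{k,i} = \sqrt{\frac{1}{N_k}\sum_{n:\,y_n = c_k}\left(x_{n,i} - \mu_{k,i}\right)^2}
$$

$$
P(x_i \mid y = c_k) = \frac{1}{\sqrt{2\pi}\,\sigma_{k,i}}\exp\!\left(-\frac{(x_i - \mu_{k,i})^2}{2\sigma_{k,i}^2}\right)
$$

$$
\hat{y} = \arg\max_{c_k}\; P(y = c_k)\prod_{i=1}^{d} P(x_i \mid y = c_k)
$$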

```python
class NaiveBayes:
    def __init__(self):
        # Maps each label to a list of (mean, stdev) pairs, one per feature
        self.model = None

    # Mathematical expectation (mean)
    @staticmethod
    def mean(X):
        return sum(X) / float(len(X))

    # Standard deviation (population: divides by N)
    def stdev(self, X):
        avg = self.mean(X)
        return math.sqrt(sum([pow(x - avg, 2) for x in X]) / float(len(X)))

    # Gaussian probability density function
    def gaussian_probability(self, x, mean, stdev):
        exponent = math.exp(-(math.pow(x - mean, 2) / (2 * math.pow(stdev, 2))))
        return (1 / (math.sqrt(2 * math.pi) * stdev)) * exponent

    # Summarize X_train: (mean, stdev) for each feature column
    def summarize(self, train_data):
        summaries = [(self.mean(i), self.stdev(i)) for i in zip(*train_data)]
        return summaries

    # Compute the mean and standard deviation separately for each class
    def fit(self, X, y):
        labels = list(set(y))
        data = {label: [] for label in labels}
        for f, label in zip(X, y):
            data[label].append(f)
        self.model = {label: self.summarize(value) for label, value in data.items()}
        return 'gaussianNB train done!'

    # Compute the score of each class: the product of per-feature densities.
    # The class prior P(y) is omitted, i.e. equal priors are assumed.
    def calculate_probabilities(self, input_data):
        # summaries:{0.0: [(5.0, 0.37),(3.42, 0.40)], 1.0: [(5.8, 0.449),(2.7, 0.27)]}
        # input_data:[1.1, 2.2]
        probabilities = {}
        for label, value in self.model.items():
            probabilities[label] = 1
            for i in range(len(value)):
                mean, stdev = value[i]
                probabilities[label] *= self.gaussian_probability(input_data[i], mean, stdev)
        return probabilities

    # Predict the class with the highest score
    def predict(self, X_test):
        # {0.0: 2.9680340789325763e-27, 1.0: 3.5749783019849535e-26}
        label = sorted(self.calculate_probabilities(X_test).items(),
                       key=lambda x: x[-1])[-1][0]
        return label

    # Accuracy: fraction of test samples predicted correctly
    def score(self, X_test, y_test):
        right = 0
        for X, y in zip(X_test, y_test):
            label = self.predict(X)
            if label == y:
                right += 1
        return right / float(len(X_test))
```
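The loop-based class multiplies raw densities, which can underflow to zero when there are many features, and it omits the class prior. A vectorized NumPy sketch that works in log space and includes the prior; the class name `GaussianNBLog` and its method names are hypothetical, and the `var_smoothing` floor mirrors the idea behind scikit-learn's parameter of the same name:

```python
import numpy as np
from sklearn.datasets import load_iris


class GaussianNBLog:
    """Hypothetical vectorized Gaussian naive Bayes using log-probabilities."""

    def __init__(self, var_smoothing=1e-9):
        # Fraction of the largest feature variance added to every variance,
        # so a feature that is constant within a class cannot divide by zero
        self.var_smoothing = var_smoothing

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        y = np.asarray(y)
        self.classes_ = np.unique(y)
        # Per-class feature means and population variances, shape (K, d)
        self.theta_ = np.array([X[y == c].mean(axis=0) for c in self.classes_])
        epsilon = self.var_smoothing * X.var(axis=0).max()
        self.var_ = np.array([X[y == c].var(axis=0) for c in self.classes_]) + epsilon
        # log P(y = c_k), estimated from class frequencies
        self.log_prior_ = np.log([np.mean(y == c) for c in self.classes_])
        return self

    def joint_log_likelihood(self, X):
        X = np.atleast_2d(np.asarray(X, dtype=float))
        diff = X[:, None, :] - self.theta_[None, :, :]  # shape (n, K, d)
        # log N(x_i | mu_ki, var_ki) for every sample, class and feature
        log_density = -0.5 * (np.log(2 * np.pi * self.var_) + diff ** 2 / self.var_)
        # Sum over features (naive independence) and add the log prior
        return log_density.sum(axis=2) + self.log_prior_  # shape (n, K)

    def predict(self, X):
        return self.classes_[np.argmax(self.joint_log_likelihood(X), axis=1)]

    def score(self, X, y):
        return float(np.mean(self.predict(X) == np.asarray(y)))


# Usage on the same two-class subset as create_data
iris = load_iris()
X, y = iris.data[:100], iris.target[:100]
model = GaussianNBLog().fit(X, y)
print(model.predict([[4.4, 3.2, 1.3, 0.2]]))
print(model.score(X, y))
```

Summing logarithms instead of multiplying densities keeps the scores in a safe numeric range, and broadcasting replaces the two Python loops.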

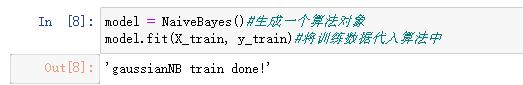

6.

```python
model = NaiveBayes()         # create a model object
model.fit(X_train, y_train)  # fit the model on the training data
```

7、

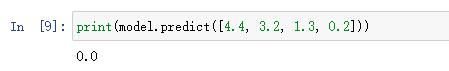

print(model.predict([4.4, 3.2, 1.3, 0.2]))
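`predict` only needs the argmax, so `calculate_probabilities` returns unnormalized scores. Dividing each score by their sum turns them into probabilities; because the class omits the prior, this equals the posterior only under equal priors. A minimal sketch with a hypothetical helper `normalize_scores`, applied to the example scores quoted in the `predict` comment:

```python
def normalize_scores(scores):
    """Hypothetical helper: turn unnormalized class scores into probabilities."""
    total = sum(scores.values())
    return {label: s / total for label, s in scores.items()}


scores = {0.0: 2.9680340789325763e-27, 1.0: 3.5749783019849535e-26}
posteriors = normalize_scores(scores)
print({label: round(p, 3) for label, p in posteriors.items()})
```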

8、

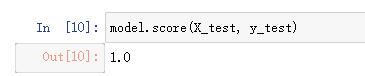

model.score(X_test, y_test)

9、

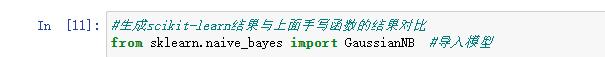

#生成scikit-learn结果与上面手写函数的结果对比 from sklearn.naive_bayes import GaussianNB #导入模型

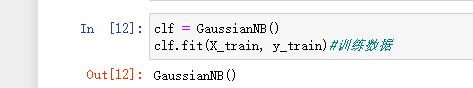

10.

```python
clf = GaussianNB()
clf.fit(X_train, y_train)  # fit on the training data
```

11、

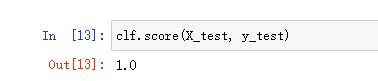

clf.score(X_test, y_test)

V. Screenshots of Results

VI. Summary

Advantages and disadvantages of naive Bayes

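Advantages: high efficiency, since training only needs per-class statistics; relatively insensitive to missing data; handles multi-class tasks naturally.

Disadvantages: it assumes that the features are conditionally independent given the class. When features are strongly correlated, this assumption is violated and accuracy can suffer.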
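The efficiency claim can be stated concretely for the hand-written `NaiveBayes` class. With $N$ training samples, $d$ features and $K$ classes, `fit` groups the samples by label and computes one mean and one standard deviation per class and feature, each a constant number of passes over the data; `predict` evaluates one Gaussian density per class and feature and then sorts the $K$ scores; the model stores one (mean, standard deviation) pair per class and feature:

$$
T_{\text{fit}} = O(Nd), \qquad
T_{\text{predict}} = O(Kd + K\log K)\ \text{per sample}, \qquad
S_{\text{model}} = O(Kd)
$$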

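Application scenarios of the various naive Bayes algorithms

Gaussian naive Bayes:

It handles continuous data and performs well, especially when the data are Gaussian-distributed. Another common technique for continuous numerical features is to discretize them. In general, when training samples are few or the exact distribution is known, modeling with a probability distribution is the better choice; with a large number of samples, discretization tends to perform best, because the many samples allow the data's distribution to be learned.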
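To see the two approaches side by side, a sketch comparing `GaussianNB` on the raw continuous features with equal-width discretization (`KBinsDiscretizer`) followed by `CategoricalNB`, on all 150 iris samples with 5-fold cross-validation. The bin count of 5, `strategy="uniform"` and `min_categories=5` are illustrative assumptions (`min_categories` needs scikit-learn 0.24 or later). Iris is small, so this shows the mechanics rather than testing the large-sample claim.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score
from sklearn.naive_bayes import CategoricalNB, GaussianNB
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import KBinsDiscretizer

X, y = load_iris(return_X_y=True)  # all 150 samples, 3 classes

models = {
    # Option 1: model each continuous feature with a per-class Gaussian
    "GaussianNB": GaussianNB(),
    # Option 2: cut each feature into 5 equal-width bins and treat the
    # bin index (0..4) as a categorical value
    "KBins + CategoricalNB": make_pipeline(
        KBinsDiscretizer(n_bins=5, encode="ordinal", strategy="uniform"),
        CategoricalNB(min_categories=5),
    ),
}

results = {}
for name, model in models.items():
    # Stratified 5-fold cross-validation (the default for classifiers)
    results[name] = cross_val_score(model, X, y, cv=5).mean()
    print(f"{name}: mean accuracy {results[name]:.3f}")
```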

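Naive Bayes in general:

As a supervised classifier, naive Bayes assigns each sample to the known class with the highest posterior probability, so samples with similar characteristics end up grouped together; such algorithms are widely used in everyday applications. For example, in spam filtering, probabilities computed with Bayes' formula identify emails with spam-like characteristics and collect them.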
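The spam-filtering case is where the multinomial and Bernoulli models from the objectives apply: the multinomial model uses how often each word occurs, the Bernoulli model only whether it occurs. A minimal sketch on a hypothetical six-email corpus (1 = spam, 0 = normal mail), using scikit-learn's `CountVectorizer`, `MultinomialNB` and `BernoulliNB`:

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import BernoulliNB, MultinomialNB

# Hypothetical toy corpus
train_texts = [
    "win money now", "free prize win", "cheap money offer free",
    "meeting schedule tomorrow", "project report attached", "lunch tomorrow with team",
]
train_labels = [1, 1, 1, 0, 0, 0]
test_texts = ["free money prize", "team meeting tomorrow"]

vectorizer = CountVectorizer()
X_train = vectorizer.fit_transform(train_texts)  # word-count matrix
X_test = vectorizer.transform(test_texts)

# Multinomial model: word counts, Laplace smoothing (alpha=1)
multi = MultinomialNB(alpha=1.0).fit(X_train, train_labels)
# Bernoulli model: counts > 0 become 1; absent words also count as evidence
bern = BernoulliNB(alpha=1.0).fit(X_train, train_labels)

print(multi.predict(X_test))  # [1 0]
print(bern.predict(X_test))   # [1 0]
```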

Zhejiang Public Security Network Filing No. 33010602011771
