TensorFlow 2 (Pre-course) --- 2.1 Multilayer Perceptron: Layer Approach


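I. Summary

In one sentence:

Once you break it into steps, writing a neural network really is quite simple:

1. Read the dataset

2. Split the dataset (into training and test sets)

3. Build the model

4. Train the model

5. Evaluate the model

6. Visualize the model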

1. How do you select rows and columns from the data with pandas?

Use `iloc` (integer-position indexing):

train_x = data.iloc[:170,1:-1]

train_y = data.iloc[:170,-1]

test_x = data.iloc[170:,1:-1]

test_y = data.iloc[170:,-1]
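`iloc` slices are end-exclusive like ordinary Python slices, so `:170` selects rows 0 to 169 and `170:` selects every remaining row. The sketch below is a minimal illustration that assumes a hypothetical synthetic DataFrame with the same column layout as `Advertising.csv` (an index column, three feature columns, and `sales` last); it is not the real data.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for Advertising.csv: 200 rows, same column layout.
n = 200
data = pd.DataFrame({
    "Unnamed: 0": np.arange(1, n + 1),
    "TV": np.linspace(0.0, 300.0, n),
    "radio": np.linspace(0.0, 50.0, n),
    "newspaper": np.linspace(0.0, 100.0, n),
    "sales": np.linspace(1.0, 27.0, n),
})

# Position-based, end-exclusive slicing: columns 1:-1 are TV, radio, newspaper.
train_x = data.iloc[:170, 1:-1]   # rows 0..169
train_y = data.iloc[:170, -1]     # the sales column for rows 0..169
test_x = data.iloc[170:, 1:-1]    # rows 170..199, i.e. the last 30 rows
test_y = data.iloc[170:, -1]

print(train_x.shape, test_x.shape)   # (170, 3) (30, 3)
print(len(data.iloc[171:]))          # 29: starting at 171 skips row 170
```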

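2. How do you get the loss recorded during training so you can plot it?

Read it from `history.history`, the dict of per-epoch metrics stored on the `History` object that `model.fit()` returns: plt.plot(history.epoch,history.history.get('loss'))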
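A minimal sketch of the plotting step, assuming a plain dict as a stand-in for `history.history` (Keras stores each metric as a list with one value per epoch, and `history.epoch` holds the 0-based epoch indices). The loss values are the first five epochs of the training log in this post; `plot_loss` is a hypothetical helper, not part of Keras.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch runs without a display
import matplotlib.pyplot as plt

# Stand-in for history.history; values copied from the first five epochs of the log.
history_dict = {"loss": [2118.2290, 1684.5564, 1313.0490, 996.1891, 736.1262]}
epochs = list(range(len(history_dict["loss"])))  # history.epoch is 0-based

def plot_loss(epochs, history_dict, key="loss"):
    """Plot one recorded metric against the epoch index (hypothetical helper)."""
    values = history_dict.get(key)  # .get() returns None instead of raising if absent
    if values is None:
        raise KeyError(f"metric {key!r} was not recorded")
    fig, ax = plt.subplots()
    (line,) = ax.plot(epochs, values)
    ax.set_xlabel("epoch")
    ax.set_ylabel(key)
    return fig, line

fig, line = plot_loss(epochs, history_dict)
```

In a real run you would pass `history.epoch` and `history.history` directly; if `fit()` is given validation data, a `'val_loss'` key can be plotted the same way.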

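3. How do you flatten a multidimensional NumPy array into one dimension?

`reshape(-1)` works, but `flatten()` reads more clearly. Note that `flatten()` always returns a copy, whereas `reshape(-1)` returns a view whenever it can.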
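A short sketch of the copy-versus-view difference, using a small example array:

```python
import numpy as np

a = np.arange(6).reshape(2, 3)   # 2x3 array: [[0, 1, 2], [3, 4, 5]]

flat_copy = a.flatten()          # always a new 1-D array (a copy)
flat_view = a.reshape(-1)        # a 1-D view of the same memory when the layout allows

print(flat_copy)                 # [0 1 2 3 4 5]
print(np.shares_memory(a, flat_copy), np.shares_memory(a, flat_view))  # False True
```

Because `flat_view` shares memory with `a`, writing to it changes `a`; writing to `flat_copy` does not.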

4. How do you convert a pandas.Series into a NumPy ndarray?

Pass it directly to `np.array()`; `Series.to_numpy()` also works.
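A minimal example using the first three `sales` values from the dataset. The result is 1-D; `reshape(-1, 1)` is one way to get a 2-D column if a particular shape is needed.

```python
import numpy as np
import pandas as pd

sales = pd.Series([22.1, 10.4, 9.3], name="sales")  # first three sales values

arr1 = np.array(sales)        # copies the values into a new ndarray
arr2 = sales.to_numpy()       # pandas' own conversion method
col = arr1.reshape(-1, 1)     # 2-D column vector, shape (3, 1)

print(arr1.shape, col.shape)  # (3,) (3, 1)
```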

II. Multilayer Perceptron: Layer Approach

Corresponding location in the course video:

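Steps

1. Read the dataset

2. Split the dataset (into training and test sets)

3. Build the model

4. Train the model

5. Evaluate the model

6. Visualize the model

Problem statement

Predict sales from the TV, radio, and newspaper advertising budgets.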

In [1]:

import pandas as pd

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

1. Read the dataset

In [2]:

data=pd.read_csv("Advertising.csv")

print(data)

Unnamed: 0 TV radio newspaper sales

0 1 230.1 37.8 69.2 22.1

1 2 44.5 39.3 45.1 10.4

2 3 17.2 45.9 69.3 9.3

3 4 151.5 41.3 58.5 18.5

4 5 180.8 10.8 58.4 12.9

.. ... ... ... ... ...

195 196 38.2 3.7 13.8 7.6

196 197 94.2 4.9 8.1 9.7

197 198 177.0 9.3 6.4 12.8

198 199 283.6 42.0 66.2 25.5

199 200 232.1 8.6 8.7 13.4

[200 rows x 5 columns]

In [3]:

tv_data=data.iloc[:,1:2]

radio_data=data.iloc[:,2:3]

newspaper_data=data.iloc[:,3:-1]

sales_data=data.iloc[:,-1]

newspaper_data

Out[3]:

| | newspaper |
|---|---|
| 0 | 69.2 |
| 1 | 45.1 |
| 2 | 69.3 |
| 3 | 58.5 |
| 4 | 58.4 |
| ... | ... |
| 195 | 13.8 |
| 196 | 8.1 |
| 197 | 6.4 |
| 198 | 66.2 |
| 199 | 8.7 |

200 rows × 1 columns

Plot each of TV, radio, and newspaper against sales

In [4]:

# Use a font that can render Chinese characters (prevents garbled CJK text)

plt.rcParams["font.sans-serif"]=["SimHei"]

plt.rcParams["font.family"]="sans-serif"

# Make the minus sign display correctly with this font

plt.rcParams['axes.unicode_minus'] =False

# Plot TV vs. sales

plt.figure()

plt.scatter(tv_data,sales_data)

plt.title("Relationship between TV and sales")

plt.xlabel('tv_data')

plt.ylabel('sales_data')

# Plot radio_data vs. sales

plt.figure()

plt.scatter(radio_data,sales_data)

plt.title("Relationship between radio_data and sales")

plt.xlabel('radio_data')

plt.ylabel('sales_data')

# Plot newspaper_data vs. sales

plt.figure()

plt.scatter(newspaper_data,sales_data)

plt.title("Relationship between newspaper_data and sales")

plt.xlabel('newspaper_data')

plt.ylabel('sales_data')

plt.show()

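2. Split the dataset (into training and test sets)

The first 170 rows (0 to 169) are used for training and the remaining rows for testing. Note that the cell below slices the test set with `iloc[171:]`, which skips row 170 and yields 29 test rows; `iloc[170:]` would use all of the last 30 rows.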

In [5]:

train_x = data.iloc[:170,1:-1]

train_y = data.iloc[:170,-1]

test_x = data.iloc[171:,1:-1]

test_y = data.iloc[171:,-1]

print(train_x)

print(train_y)

print(test_x)

print(test_y)

TV radio newspaper

0 230.1 37.8 69.2

1 44.5 39.3 45.1

2 17.2 45.9 69.3

3 151.5 41.3 58.5

4 180.8 10.8 58.4

.. ... ... ...

165 234.5 3.4 84.8

166 17.9 37.6 21.6

167 206.8 5.2 19.4

168 215.4 23.6 57.6

169 284.3 10.6 6.4

[170 rows x 3 columns]

0 22.1

1 10.4

2 9.3

3 18.5

4 12.9

...

165 11.9

166 8.0

167 12.2

168 17.1

169 15.0

Name: sales, Length: 170, dtype: float64

TV radio newspaper

171 164.5 20.9 47.4

172 19.6 20.1 17.0

173 168.4 7.1 12.8

174 222.4 3.4 13.1

175 276.9 48.9 41.8

176 248.4 30.2 20.3

177 170.2 7.8 35.2

178 276.7 2.3 23.7

179 165.6 10.0 17.6

180 156.6 2.6 8.3

181 218.5 5.4 27.4

182 56.2 5.7 29.7

183 287.6 43.0 71.8

184 253.8 21.3 30.0

185 205.0 45.1 19.6

186 139.5 2.1 26.6

187 191.1 28.7 18.2

188 286.0 13.9 3.7

189 18.7 12.1 23.4

190 39.5 41.1 5.8

191 75.5 10.8 6.0

192 17.2 4.1 31.6

193 166.8 42.0 3.6

194 149.7 35.6 6.0

195 38.2 3.7 13.8

196 94.2 4.9 8.1

197 177.0 9.3 6.4

198 283.6 42.0 66.2

199 232.1 8.6 8.7

171 14.5

172 7.6

173 11.7

174 11.5

175 27.0

176 20.2

177 11.7

178 11.8

179 12.6

180 10.5

181 12.2

182 8.7

183 26.2

184 17.6

185 22.6

186 10.3

187 17.3

188 15.9

189 6.7

190 10.8

191 9.9

192 5.9

193 19.6

194 17.3

195 7.6

196 9.7

197 12.8

198 25.5

199 13.4

Name: sales, dtype: float64

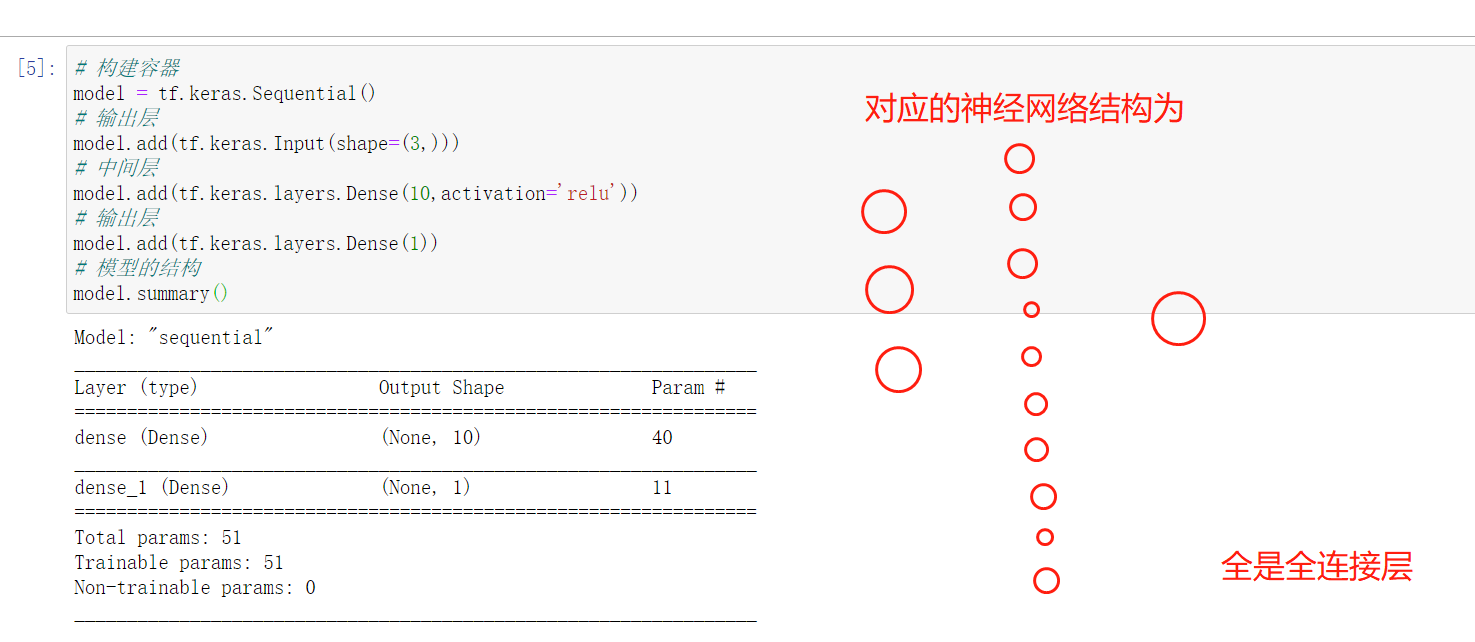

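3. Build the model

What kind of model should we build? The goal is now clear:

3->10->1

The input consists of three features (TV, radio, newspaper), so the network's input is 3-dimensional.

The output is sales, so it is 1-dimensional.

The hidden layer has 10 units; this number was chosen arbitrarily.

In [6]: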

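# Create the Sequential container

model = tf.keras.Sequential()

# Input layer: each sample has 3 features

model.add(tf.keras.Input(shape=(3,)))

# Hidden layer: 10 units with ReLU activation

model.add(tf.keras.layers.Dense(10,activation='relu'))

# Output layer: one linear unit predicting sales

model.add(tf.keras.layers.Dense(1))

# Print the model structure

model.summary()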

Model: "sequential"

Layer (type) Output Shape Param #

dense (Dense) (None, 10) 40

dense_1 (Dense) (None, 1) 11

Total params: 51

Trainable params: 51

Non-trainable params: 0
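The parameter counts in the summary come from each Dense layer holding a weight matrix plus one bias per unit: 3×10+10 = 40 and 10×1+1 = 11, for 51 in total. The NumPy sketch below reproduces the same 3->10->1 forward pass, assuming Keras's Dense convention `activation(x @ kernel + bias)`; the weights are random placeholders, not the trained model.

```python
import numpy as np

# Random placeholder weights with the same shapes as the Keras model.
rng = np.random.default_rng(0)
W1, b1 = rng.normal(size=(3, 10)), np.zeros(10)   # Dense(10): 30 weights + 10 biases = 40
W2, b2 = rng.normal(size=(10, 1)), np.zeros(1)    # Dense(1): 10 weights + 1 bias = 11

def forward(x):
    """Dense(10, relu) followed by a linear Dense(1); x has shape (batch, 3)."""
    h = np.maximum(0.0, x @ W1 + b1)   # hidden layer with ReLU
    return h @ W2 + b2                 # linear output layer, shape (batch, 1)

n_params = sum(p.size for p in (W1, b1, W2, b2))
x = np.array([[230.1, 37.8, 69.2]])    # first row of the dataset (TV, radio, newspaper)
print(n_params, forward(x).shape)      # 51 (1, 1)
```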

4. Train the model
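With `loss='mse'`, the `loss` value printed for each epoch is the mean squared error between the true and predicted sales. A minimal NumPy sketch of that formula follows; `y_true` holds the first three sales values from the dataset, while `y_pred` is made up purely for illustration.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean squared error: the average of (y_true - y_pred)**2 over all samples."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

y_true = [22.1, 10.4, 9.3]   # first three sales values
y_pred = [21.1, 12.4, 9.3]   # hypothetical predictions
print(mse(y_true, y_pred))   # (1**2 + 2**2 + 0**2) / 3, about 1.6667
```

By this definition, the training loss of roughly 3 logged around epoch 250 corresponds to a root-mean-square error of about √3 ≈ 1.7 sales units on the training data.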

In [7]:

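# Configure the optimizer (Adam) and the loss function (mean squared error)

model.compile(optimizer='adam',loss='mse')

# Start training; with the default batch_size of 32, 170 samples give 6 steps per epoch ("6/6" in the log)

history = model.fit(train_x,train_y,epochs=500) # epochs: number of full passes over the training data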

Epoch 1/500 6/6 [==============================] - 0s 2ms/step - loss: 2118.2290 Epoch 2/500 6/6 [==============================] - 0s 2ms/step - loss: 1684.5564 Epoch 3/500 6/6 [==============================] - 0s 2ms/step - loss: 1313.0490 Epoch 4/500 6/6 [==============================] - 0s 2ms/step - loss: 996.1891 Epoch 5/500 6/6 [==============================] - 0s 2ms/step - loss: 736.1262 Epoch 6/500 6/6 [==============================] - 0s 2ms/step - loss: 532.3463 Epoch 7/500 6/6 [==============================] - 0s 2ms/step - loss: 375.0568 Epoch 8/500 6/6 [==============================] - 0s 2ms/step - loss: 256.6672 Epoch 9/500 6/6 [==============================] - 0s 2ms/step - loss: 174.4297 Epoch 10/500 6/6 [==============================] - 0s 1ms/step - loss: 121.2772 Epoch 11/500 6/6 [==============================] - 0s 2ms/step - loss: 84.2133 Epoch 12/500 6/6 [==============================] - 0s 1ms/step - loss: 63.7477 Epoch 13/500 6/6 [==============================] - 0s 2ms/step - loss: 52.1158 Epoch 14/500 6/6 [==============================] - 0s 2ms/step - loss: 45.4272 Epoch 15/500 6/6 [==============================] - 0s 2ms/step - loss: 41.6236 Epoch 16/500 6/6 [==============================] - 0s 2ms/step - loss: 39.6139 Epoch 17/500 6/6 [==============================] - 0s 2ms/step - loss: 37.0812 Epoch 18/500 6/6 [==============================] - 0s 2ms/step - loss: 35.2360 Epoch 19/500 6/6 [==============================] - 0s 2ms/step - loss: 33.3176 Epoch 20/500 6/6 [==============================] - 0s 2ms/step - loss: 31.5148 Epoch 21/500 6/6 [==============================] - 0s 2ms/step - loss: 29.4039 Epoch 22/500 6/6 [==============================] - 0s 2ms/step - loss: 27.3318 Epoch 23/500 6/6 [==============================] - 0s 2ms/step - loss: 25.4498 Epoch 24/500 6/6 [==============================] - 0s 2ms/step - loss: 23.7019 Epoch 25/500 6/6 [==============================] - 0s 2ms/step - loss: 
21.8664 Epoch 26/500 6/6 [==============================] - 0s 2ms/step - loss: 20.2506 Epoch 27/500 6/6 [==============================] - 0s 2ms/step - loss: 18.7423 Epoch 28/500 6/6 [==============================] - 0s 2ms/step - loss: 17.3918 Epoch 29/500 6/6 [==============================] - 0s 2ms/step - loss: 16.1902 Epoch 30/500 6/6 [==============================] - 0s 2ms/step - loss: 15.0339 Epoch 31/500 6/6 [==============================] - 0s 2ms/step - loss: 14.1116 Epoch 32/500 6/6 [==============================] - 0s 2ms/step - loss: 13.2311 Epoch 33/500 6/6 [==============================] - 0s 2ms/step - loss: 12.5364 Epoch 34/500 6/6 [==============================] - 0s 2ms/step - loss: 11.8957 Epoch 35/500 6/6 [==============================] - 0s 2ms/step - loss: 11.2512 Epoch 36/500 6/6 [==============================] - 0s 2ms/step - loss: 10.7433 Epoch 37/500 6/6 [==============================] - 0s 2ms/step - loss: 10.2287 Epoch 38/500 6/6 [==============================] - 0s 2ms/step - loss: 9.8031 Epoch 39/500 6/6 [==============================] - 0s 2ms/step - loss: 9.4483 Epoch 40/500 6/6 [==============================] - 0s 2ms/step - loss: 9.1189 Epoch 41/500 6/6 [==============================] - 0s 2ms/step - loss: 8.8540 Epoch 42/500 6/6 [==============================] - 0s 2ms/step - loss: 8.5907 Epoch 43/500 6/6 [==============================] - 0s 2ms/step - loss: 8.3590 Epoch 44/500 6/6 [==============================] - 0s 2ms/step - loss: 8.1202 Epoch 45/500 6/6 [==============================] - 0s 2ms/step - loss: 7.9098 Epoch 46/500 6/6 [==============================] - 0s 2ms/step - loss: 7.7186 Epoch 47/500 6/6 [==============================] - 0s 2ms/step - loss: 7.5315 Epoch 48/500 6/6 [==============================] - 0s 2ms/step - loss: 7.3893 Epoch 49/500 6/6 [==============================] - 0s 2ms/step - loss: 7.2161 Epoch 50/500 6/6 [==============================] - 0s 2ms/step - loss: 7.0980 
Epoch 51/500 6/6 [==============================] - 0s 2ms/step - loss: 6.9527 Epoch 52/500 6/6 [==============================] - 0s 2ms/step - loss: 6.8373 Epoch 53/500 6/6 [==============================] - 0s 1ms/step - loss: 6.6988 Epoch 54/500 6/6 [==============================] - 0s 2ms/step - loss: 6.5813 Epoch 55/500 6/6 [==============================] - 0s 2ms/step - loss: 6.4663 Epoch 56/500 6/6 [==============================] - 0s 2ms/step - loss: 6.3501 Epoch 57/500 6/6 [==============================] - 0s 2ms/step - loss: 6.2533 Epoch 58/500 6/6 [==============================] - 0s 2ms/step - loss: 6.1398 Epoch 59/500 6/6 [==============================] - 0s 2ms/step - loss: 6.0507 Epoch 60/500 6/6 [==============================] - 0s 2ms/step - loss: 5.9494 Epoch 61/500 6/6 [==============================] - 0s 2ms/step - loss: 5.8565 Epoch 62/500 6/6 [==============================] - 0s 2ms/step - loss: 5.7692 Epoch 63/500 6/6 [==============================] - 0s 2ms/step - loss: 5.6742 Epoch 64/500 6/6 [==============================] - 0s 2ms/step - loss: 5.6228 Epoch 65/500 6/6 [==============================] - 0s 2ms/step - loss: 5.5029 Epoch 66/500 6/6 [==============================] - 0s 2ms/step - loss: 5.4161 Epoch 67/500 6/6 [==============================] - 0s 2ms/step - loss: 5.3318 Epoch 68/500 6/6 [==============================] - 0s 2ms/step - loss: 5.2511 Epoch 69/500 6/6 [==============================] - 0s 2ms/step - loss: 5.1920 Epoch 70/500 6/6 [==============================] - 0s 2ms/step - loss: 5.1112 Epoch 71/500 6/6 [==============================] - 0s 2ms/step - loss: 5.0468 Epoch 72/500 6/6 [==============================] - 0s 1ms/step - loss: 4.9791 Epoch 73/500 6/6 [==============================] - 0s 2ms/step - loss: 4.9046 Epoch 74/500 6/6 [==============================] - 0s 2ms/step - loss: 4.8426 Epoch 75/500 6/6 [==============================] - 0s 2ms/step - loss: 4.7759 Epoch 76/500 6/6 
[==============================] - 0s 2ms/step - loss: 4.7111 Epoch 77/500 6/6 [==============================] - 0s 2ms/step - loss: 4.6421 Epoch 78/500 6/6 [==============================] - 0s 2ms/step - loss: 4.5890 Epoch 79/500 6/6 [==============================] - 0s 1ms/step - loss: 4.5365 Epoch 80/500 6/6 [==============================] - 0s 1ms/step - loss: 4.4895 Epoch 81/500 6/6 [==============================] - 0s 2ms/step - loss: 4.4269 Epoch 82/500 6/6 [==============================] - 0s 2ms/step - loss: 4.3644 Epoch 83/500 6/6 [==============================] - 0s 2ms/step - loss: 4.3312 Epoch 84/500 6/6 [==============================] - 0s 2ms/step - loss: 4.2849 Epoch 85/500 6/6 [==============================] - 0s 2ms/step - loss: 4.2166 Epoch 86/500 6/6 [==============================] - 0s 2ms/step - loss: 4.1825 Epoch 87/500 6/6 [==============================] - 0s 2ms/step - loss: 4.1545 Epoch 88/500 6/6 [==============================] - 0s 2ms/step - loss: 4.1114 Epoch 89/500 6/6 [==============================] - 0s 2ms/step - loss: 4.0765 Epoch 90/500 6/6 [==============================] - 0s 2ms/step - loss: 4.0438 Epoch 91/500 6/6 [==============================] - 0s 2ms/step - loss: 4.0144 Epoch 92/500 6/6 [==============================] - 0s 1ms/step - loss: 3.9878 Epoch 93/500 6/6 [==============================] - 0s 2ms/step - loss: 3.9648 Epoch 94/500 6/6 [==============================] - 0s 2ms/step - loss: 3.9458 Epoch 95/500 6/6 [==============================] - 0s 2ms/step - loss: 3.9189 Epoch 96/500 6/6 [==============================] - 0s 2ms/step - loss: 3.9016 Epoch 97/500 6/6 [==============================] - 0s 2ms/step - loss: 3.8866 Epoch 98/500 6/6 [==============================] - 0s 2ms/step - loss: 3.8675 Epoch 99/500 6/6 [==============================] - 0s 2ms/step - loss: 3.8469 Epoch 100/500 6/6 [==============================] - 0s 2ms/step - loss: 3.8328 Epoch 101/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.8160 Epoch 102/500 6/6 [==============================] - 0s 2ms/step - loss: 3.8047 Epoch 103/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7842 Epoch 104/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7782 Epoch 105/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7633 Epoch 106/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7487 Epoch 107/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7324 Epoch 108/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7254 Epoch 109/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7111 Epoch 110/500 6/6 [==============================] - 0s 2ms/step - loss: 3.7014 Epoch 111/500 6/6 [==============================] - 0s 1ms/step - loss: 3.6946 Epoch 112/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6848 Epoch 113/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6745 Epoch 114/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6639 Epoch 115/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6597 Epoch 116/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6499 Epoch 117/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6439 Epoch 118/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6469 Epoch 119/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6296 Epoch 120/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6195 Epoch 121/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6218 Epoch 122/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6097 Epoch 123/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6048 Epoch 124/500 6/6 [==============================] - 0s 2ms/step - loss: 3.6128 Epoch 125/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5930 Epoch 126/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.5914 Epoch 127/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5810 Epoch 128/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5748 Epoch 129/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5757 Epoch 130/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5653 Epoch 131/500 6/6 [==============================] - 0s 1ms/step - loss: 3.5597 Epoch 132/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5616 Epoch 133/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5519 Epoch 134/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5496 Epoch 135/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5434 Epoch 136/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5378 Epoch 137/500 6/6 [==============================] - 0s 1ms/step - loss: 3.5303 Epoch 138/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5240 Epoch 139/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5215 Epoch 140/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5180 Epoch 141/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5117 Epoch 142/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5077 Epoch 143/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5056 Epoch 144/500 6/6 [==============================] - 0s 2ms/step - loss: 3.5042 Epoch 145/500 6/6 [==============================] - 0s 1ms/step - loss: 3.4959 Epoch 146/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4922 Epoch 147/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4912 Epoch 148/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4847 Epoch 149/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4723 Epoch 150/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4758 Epoch 151/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.4773 Epoch 152/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4662 Epoch 153/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4850 Epoch 154/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4709 Epoch 155/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4566 Epoch 156/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4570 Epoch 157/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4501 Epoch 158/500 6/6 [==============================] - 0s 1ms/step - loss: 3.4465 Epoch 159/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4314 Epoch 160/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4469 Epoch 161/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4350 Epoch 162/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4257 Epoch 163/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4187 Epoch 164/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4145 Epoch 165/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4069 Epoch 166/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4043 Epoch 167/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3939 Epoch 168/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4092 Epoch 169/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4210 Epoch 170/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3978 Epoch 171/500 6/6 [==============================] - 0s 2ms/step - loss: 3.4012 Epoch 172/500 6/6 [==============================] - 0s 1ms/step - loss: 3.3833 Epoch 173/500 6/6 [==============================] - 0s 1ms/step - loss: 3.3656 Epoch 174/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3618 Epoch 175/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3665 Epoch 176/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.3605 Epoch 177/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3475 Epoch 178/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3549 Epoch 179/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3398 Epoch 180/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3494 Epoch 181/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3364 Epoch 182/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3232 Epoch 183/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3177 Epoch 184/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3143 Epoch 185/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3090 Epoch 186/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3019 Epoch 187/500 6/6 [==============================] - 0s 2ms/step - loss: 3.3001 Epoch 188/500 6/6 [==============================] - 0s 1ms/step - loss: 3.2951 Epoch 189/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2922 Epoch 190/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2878 Epoch 191/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2832 Epoch 192/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2785 Epoch 193/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2784 Epoch 194/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2749 Epoch 195/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2749 Epoch 196/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2690 Epoch 197/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2553 Epoch 198/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2484 Epoch 199/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2451 Epoch 200/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2374 Epoch 201/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.2411 Epoch 202/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2443 Epoch 203/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2372 Epoch 204/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2260 Epoch 205/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2209 Epoch 206/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2131 Epoch 207/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2133 Epoch 208/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2005 Epoch 209/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2045 Epoch 210/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1882 Epoch 211/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1928 Epoch 212/500 6/6 [==============================] - 0s 2ms/step - loss: 3.2004 Epoch 213/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1866 Epoch 214/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1837 Epoch 215/500 6/6 [==============================] - 0s 1ms/step - loss: 3.1740 Epoch 216/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1688 Epoch 217/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1727 Epoch 218/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1659 Epoch 219/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1477 Epoch 220/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1577 Epoch 221/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1753 Epoch 222/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1587 Epoch 223/500 6/6 [==============================] - 0s 1ms/step - loss: 3.1521 Epoch 224/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1387 Epoch 225/500 6/6 [==============================] - 0s 1ms/step - loss: 3.1251 Epoch 226/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.1212 Epoch 227/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1182 Epoch 228/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1159 Epoch 229/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1197 Epoch 230/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1152 Epoch 231/500 6/6 [==============================] - 0s 2ms/step - loss: 3.1045 Epoch 232/500 6/6 [==============================] - 0s 1ms/step - loss: 3.0997 Epoch 233/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0909 Epoch 234/500 6/6 [==============================] - 0s 1ms/step - loss: 3.0851 Epoch 235/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0836 Epoch 236/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0791 Epoch 237/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0953 Epoch 238/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0753 Epoch 239/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0719 Epoch 240/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0698 Epoch 241/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0573 Epoch 242/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0664 Epoch 243/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0558 Epoch 244/500 6/6 [==============================] - 0s 1ms/step - loss: 3.0410 Epoch 245/500 6/6 [==============================] - 0s 1ms/step - loss: 3.0456 Epoch 246/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0367 Epoch 247/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0285 Epoch 248/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0261 Epoch 249/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0273 Epoch 250/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0187 Epoch 251/500 6/6 
[==============================] - 0s 2ms/step - loss: 3.0163 Epoch 252/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0191 Epoch 253/500 6/6 [==============================] - 0s 1ms/step - loss: 3.0161 Epoch 254/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0070 Epoch 255/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0879 Epoch 256/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0270 Epoch 257/500 6/6 [==============================] - ETA: 0s - loss: 3.165 - 0s 2ms/step - loss: 3.0012 Epoch 258/500 6/6 [==============================] - 0s 2ms/step - loss: 3.0025 Epoch 259/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9790 Epoch 260/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9901 Epoch 261/500 6/6 [==============================] - 0s 1ms/step - loss: 2.9792 Epoch 262/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9782 Epoch 263/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9704 Epoch 264/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9594 Epoch 265/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9523 Epoch 266/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9681 Epoch 267/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9585 Epoch 268/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9558 Epoch 269/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9472 Epoch 270/500 6/6 [==============================] - 0s 1ms/step - loss: 2.9436 Epoch 271/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9251 Epoch 272/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9353 Epoch 273/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9414 Epoch 274/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9259 Epoch 275/500 6/6 [==============================] - 0s 2ms/step - loss: 
2.9215 Epoch 276/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9111 Epoch 277/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9460 Epoch 278/500 6/6 [==============================] - 0s 1ms/step - loss: 2.9048 Epoch 279/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9121 Epoch 280/500 6/6 [==============================] - 0s 2ms/step - loss: 2.9000 Epoch 281/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8945 Epoch 282/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8866 Epoch 283/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8760 Epoch 284/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8731 Epoch 285/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8738 Epoch 286/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8763 Epoch 287/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8841 Epoch 288/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8575 Epoch 289/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8548 Epoch 290/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8457 Epoch 291/500 6/6 [==============================] - 0s 1ms/step - loss: 2.8519 Epoch 292/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8417 Epoch 293/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8344 Epoch 294/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8330 Epoch 295/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8342 Epoch 296/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8228 Epoch 297/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8277 Epoch 298/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8220 Epoch 299/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8200 Epoch 300/500 6/6 [==============================] - 0s 2ms/step - loss: 
2.8213 Epoch 301/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8286 Epoch 302/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8272 Epoch 303/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8238 Epoch 304/500 6/6 [==============================] - 0s 1ms/step - loss: 2.8143 Epoch 305/500 6/6 [==============================] - 0s 2ms/step - loss: 2.8015 Epoch 306/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7933 Epoch 307/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7824 Epoch 308/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7786 Epoch 309/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7683 Epoch 310/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7736 Epoch 311/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7629 Epoch 312/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7629 Epoch 313/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7554 Epoch 314/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7530 Epoch 315/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7446 Epoch 316/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7536 Epoch 317/500 6/6 [==============================] - 0s 1ms/step - loss: 2.7395 Epoch 318/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7631 Epoch 319/500 6/6 [==============================] - 0s 1ms/step - loss: 2.7350 Epoch 320/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7223 Epoch 321/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7345 Epoch 322/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7214 Epoch 323/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7283 Epoch 324/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7126 Epoch 325/500 6/6 [==============================] - 0s 2ms/step - loss: 
2.7076 Epoch 326/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6987 Epoch 327/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6955 Epoch 328/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6939 Epoch 329/500 6/6 [==============================] - 0s 1ms/step - loss: 2.6941 Epoch 330/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6920 Epoch 331/500 6/6 [==============================] - 0s 2ms/step - loss: 2.7199 Epoch 332/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6907 Epoch 333/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6890 Epoch 334/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6865 Epoch 335/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6590 Epoch 336/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6656 Epoch 337/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6632 Epoch 338/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6608 Epoch 339/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6483 Epoch 340/500 6/6 [==============================] - 0s 1ms/step - loss: 2.6464 Epoch 341/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6441 Epoch 342/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6328 Epoch 343/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6345 Epoch 344/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6245 Epoch 345/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6263 Epoch 346/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6170 Epoch 347/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6151 Epoch 348/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6115 Epoch 349/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6063 Epoch 350/500 6/6 [==============================] - 0s 2ms/step - loss: 
2.5997 Epoch 351/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6008 Epoch 352/500 6/6 [==============================] - 0s 2ms/step - loss: 2.6043 Epoch 353/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5839 Epoch 354/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5900 Epoch 355/500 6/6 [==============================] - 0s 1ms/step - loss: 2.5836 Epoch 356/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5778 Epoch 357/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5711 Epoch 358/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5874 Epoch 359/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5870 Epoch 360/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5584 Epoch 361/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5569 Epoch 362/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5579 Epoch 363/500 6/6 [==============================] - 0s 1ms/step - loss: 2.5465 Epoch 364/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5503 Epoch 365/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5606 Epoch 366/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5364 Epoch 367/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5414 Epoch 368/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5294 Epoch 369/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5307 Epoch 370/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5220 Epoch 371/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5289 Epoch 372/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5222 Epoch 373/500 6/6 [==============================] - 0s 2ms/step - loss: 2.5074 Epoch 374/500 6/6 [==============================] - 0s 1ms/step - loss: 2.5137 Epoch 375/500 6/6 [==============================] - 0s 2ms/step - loss: 
2.5182 Epoch 376/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4974 Epoch 377/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4956 Epoch 378/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4858 Epoch 379/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4862 Epoch 380/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4806 Epoch 381/500 6/6 [==============================] - 0s 1ms/step - loss: 2.4976 Epoch 382/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4698 Epoch 383/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4857 Epoch 384/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4666 Epoch 385/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4494 Epoch 386/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4514 Epoch 387/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4371 Epoch 388/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4458 Epoch 389/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4333 Epoch 390/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4278 Epoch 391/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4281 Epoch 392/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4148 Epoch 393/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4122 Epoch 394/500 6/6 [==============================] - 0s 2ms/step - loss: 2.4046 Epoch 395/500 6/6 [==============================] - ETA: 0s - loss: 2.261 - 0s 2ms/step - loss: 2.4192 Epoch 396/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3955 Epoch 397/500 6/6 [==============================] - 0s 1ms/step - loss: 2.3965 Epoch 398/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3867 Epoch 399/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3826 Epoch 400/500 6/6 
[==============================] - 0s 2ms/step - loss: 2.3748 Epoch 401/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3919 Epoch 402/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3718 Epoch 403/500 6/6 [==============================] - 0s 1ms/step - loss: 2.3772 Epoch 404/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3535 Epoch 405/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3671 Epoch 406/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3561 Epoch 407/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3449 Epoch 408/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3400 Epoch 409/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3426 Epoch 410/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3446 Epoch 411/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3215 Epoch 412/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3252 Epoch 413/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3258 Epoch 414/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3249 Epoch 415/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3150 Epoch 416/500 6/6 [==============================] - 0s 1ms/step - loss: 2.2958 Epoch 417/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3259 Epoch 418/500 6/6 [==============================] - 0s 2ms/step - loss: 2.3159 Epoch 419/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2866 Epoch 420/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2873 Epoch 421/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2761 Epoch 422/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2762 Epoch 423/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2845 Epoch 424/500 6/6 [==============================] - 0s 1ms/step - loss: 2.2680 Epoch 425/500 6/6 
[==============================] - 0s 1ms/step - loss: 2.2587 Epoch 426/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2687 Epoch 427/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2497 Epoch 428/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2648 Epoch 429/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2424 Epoch 430/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2558 Epoch 431/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2619 Epoch 432/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2573 Epoch 433/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2232 Epoch 434/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2154 Epoch 435/500 6/6 [==============================] - 0s 1ms/step - loss: 2.2141 Epoch 436/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2110 Epoch 437/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2101 Epoch 438/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2038 Epoch 439/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2084 Epoch 440/500 6/6 [==============================] - 0s 2ms/step - loss: 2.2077 Epoch 441/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1886 Epoch 442/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1859 Epoch 443/500 6/6 [==============================] - 0s 1ms/step - loss: 2.1832 Epoch 444/500 6/6 [==============================] - 0s 1ms/step - loss: 2.1899 Epoch 445/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1849 Epoch 446/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1730 Epoch 447/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1724 Epoch 448/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1671 Epoch 449/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1476 Epoch 450/500 6/6 
[==============================] - 0s 2ms/step - loss: 2.1632 Epoch 451/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1494 Epoch 452/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1416 Epoch 453/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1379 Epoch 454/500 6/6 [==============================] - 0s 1ms/step - loss: 2.1311 Epoch 455/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1308 Epoch 456/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1369 Epoch 457/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1246 Epoch 458/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1099 Epoch 459/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1161 Epoch 460/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1096 Epoch 461/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1046 Epoch 462/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1039 Epoch 463/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0930 Epoch 464/500 6/6 [==============================] - 0s 1ms/step - loss: 2.0957 Epoch 465/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0997 Epoch 466/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0986 Epoch 467/500 6/6 [==============================] - 0s 2ms/step - loss: 2.1142 Epoch 468/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0691 Epoch 469/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0793 Epoch 470/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0595 Epoch 471/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0985 Epoch 472/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0878 Epoch 473/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0547 Epoch 474/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0520 Epoch 475/500 6/6 
[==============================] - 0s 2ms/step - loss: 2.0429 Epoch 476/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0375 Epoch 477/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0357 Epoch 478/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0493 Epoch 479/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0155 Epoch 480/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0537 Epoch 481/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0320 Epoch 482/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0352 Epoch 483/500 6/6 [==============================] - 0s 1ms/step - loss: 2.0162 Epoch 484/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0154 Epoch 485/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9926 Epoch 486/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0211 Epoch 487/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9970 Epoch 488/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0029 Epoch 489/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9937 Epoch 490/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9871 Epoch 491/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9801 Epoch 492/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9872 Epoch 493/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9682 Epoch 494/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0296 Epoch 495/500 6/6 [==============================] - 0s 2ms/step - loss: 2.0032 Epoch 496/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9953 Epoch 497/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9638 Epoch 498/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9485 Epoch 499/500 6/6 [==============================] - 0s 2ms/step - loss: 1.9575 Epoch 500/500 6/6 
[==============================] - 0s 2ms/step - loss: 1.9401

In [28]:

# print(dir(history))

# print(dir(history.epoch))

# print(dir(history.history))

In [29]:

history.history.keys()

Out[29]:

dict_keys(['loss'])

In [30]:

# Plot epoch on the x-axis and loss on the y-axis

plt.plot(history.epoch,history.history.get('loss'))

Out[30]:

[<matplotlib.lines.Line2D at 0x2288bf67c88>]
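In Keras, `model.fit` returns a `History` object. Its `history` attribute is a plain dict that maps each recorded metric name to a list with one value per epoch. Its `epoch` attribute lists the epoch indices, starting at 0. Only `loss` was recorded here, so calling `.get` with any other key returns `None` instead of raising. For example, `'val_loss'` would only exist if validation data had been passed to `fit`. The sketch below uses a hypothetical stand-in dict holding the last five loss values from the training log above, so it runs without TensorFlow. It is not a real `History` object.

```python
# Hypothetical stand-in for history.history after 500 epochs; only the last
# five loss values from the training log above are included.
fake_history = {"loss": [1.9953, 1.9638, 1.9485, 1.9575, 1.9401]}
# History.epoch is 0-based, so "Epoch 500/500" in the log is index 499
fake_epoch = list(range(495, 500))

# dict.get returns None for a metric that was never recorded
loss = fake_history.get("loss")
val_loss = fake_history.get("val_loss")


def best_epoch(epochs, losses):
    """Return (epoch, loss) for the smallest loss value."""
    i = min(range(len(losses)), key=losses.__getitem__)
    return epochs[i], losses[i]


print(val_loss)                      # None
print(best_epoch(fake_epoch, loss))  # (499, 1.9401)
```

With a real `History` object, the same pattern is `history.history.get('loss')` together with `history.epoch`, as in the `plt.plot` cell above.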

5. Evaluate the model

In [11]:

##### scratch test #####

type(pridict_y)

Out[11]:

numpy.ndarray

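Flattening a multi-dimensional NumPy array to 1-D

reshape works, but flatten is more convenient. reshape(29,) hard-codes the number of rows, while flatten() always returns a 1-D copy. reshape(-1) is another option that also lets NumPy infer the length.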

In [15]:

##### scratch test #####

pridict_y.reshape(29,)

Out[15]:

array([14.394563 , 4.5585423, 10.817445 , 12.291978 , 26.076233 ,

20.033213 , 11.320534 , 14.528755 , 11.454205 , 9.153889 ,

12.769189 , 5.7419834, 25.451023 , 18.215645 , 21.743513 ,

8.488817 , 17.128687 , 17.53172 , 4.953989 , 11.3504 ,

7.5612407, 4.2715034, 20.316795 , 17.732632 , 4.2850647,

6.971166 , 11.657596 , 24.968727 , 13.93272 ], dtype=float32)

In [18]:

##### scratch test #####

pridict_y.flatten()

Out[18]:

array([14.394563 , 4.5585423, 10.817445 , 12.291978 , 26.076233 ,

20.033213 , 11.320534 , 14.528755 , 11.454205 , 9.153889 ,

12.769189 , 5.7419834, 25.451023 , 18.215645 , 21.743513 ,

8.488817 , 17.128687 , 17.53172 , 4.953989 , 11.3504 ,

7.5612407, 4.2715034, 20.316795 , 17.732632 , 4.2850647,

6.971166 , 11.657596 , 24.968727 , 13.93272 ], dtype=float32)
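Here `model.predict` returns a 2-D array of shape (29, 1), with one column because the model has a single output. The sketch below compares three ways to turn such a column into a 1-D array, using a small stand-in array instead of the real `pridict_y`:

- `reshape(-1)` infers the length instead of hard-coding 29.
- `flatten()` always returns a copy.
- `ravel()` returns a view when it can, so for a contiguous array it shares memory with the original.

```python
import numpy as np

# Small stand-in for pridict_y: a (3, 1) column, like the (29, 1) output of model.predict
pred = np.array([[14.39], [4.56], [10.82]], dtype=np.float32)

a = pred.reshape(-1)  # -1 lets NumPy infer the length, so 29 need not be hard-coded
b = pred.flatten()    # always a new copy
c = pred.ravel()      # a view when possible (pred is contiguous here)

print(a.shape, b.shape, c.shape)   # (3,) (3,) (3,)
print(np.shares_memory(pred, a))   # True  (view)
print(np.shares_memory(pred, b))   # False (copy)
print(np.shares_memory(pred, c))   # True  (view)

# Because b is a copy, modifying it leaves pred unchanged
b[0] = 0.0
print(pred[0, 0])
```

For computing errors, any of the three works. flatten() is the safest default when the result might be modified later, because it never aliases the model output.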

In [19]:

##### scratch test #####

type(test_y)

Out[19]:

pandas.core.series.Series

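Converting a pandas.Series to a NumPy ndarray

np.array() works directly. Series.to_numpy() gives the same result and is the conversion pandas itself recommends.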

In [21]:

##### scratch test #####

import numpy as np

np.array(test_y)

Out[21]:

array([14.5, 7.6, 11.7, 11.5, 27. , 20.2, 11.7, 11.8, 12.6, 10.5, 12.2,

8.7, 26.2, 17.6, 22.6, 10.3, 17.3, 15.9, 6.7, 10.8, 9.9, 5.9,

19.6, 17.3, 7.6, 9.7, 12.8, 25.5, 13.4])
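Both `np.array(test_y)` and `test_y.to_numpy()` drop the index (171 to 199 here) and keep only the values. Dropping the index matters for arithmetic. Subtracting one Series from another aligns them by index label. If the predictions were wrapped in a Series with the default 0-based index, no labels would match and every result would be `NaN`. The sketch below shows this with a small stand-in Series whose index starts at 171. The names `y_true` and `y_pred` are illustrative only.

```python
import numpy as np
import pandas as pd

# Stand-in for test_y: the test rows keep their original index labels (171, 172, ...)
y_true = pd.Series([14.5, 7.6, 11.7], index=[171, 172, 173], name="sales")
y_pred = np.array([14.39, 4.56, 10.82])

# Both conversions return a plain ndarray without the index
arr1 = np.array(y_true)
arr2 = y_true.to_numpy()
print(type(arr1), np.array_equal(arr1, arr2))

# Series minus ndarray is positional, so it works as expected
print((y_true - y_pred).round(2).tolist())

# Series minus Series aligns on labels: 171..173 vs 0..2 never match, so all NaN
misaligned = y_true - pd.Series(y_pred)
print(misaligned.isna().all(), len(misaligned))

# Resetting the index first avoids the mismatch
aligned = y_true.reset_index(drop=True) - pd.Series(y_pred)
print(aligned.round(2).tolist())
```

Converting both sides to plain NumPy arrays, as this post does, sidesteps index alignment entirely.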

In [22]:

# Check how well the model predicts on the test set

pridict_y=model.predict(test_x)

print(pridict_y)

print(test_y)

# print(test_y-pridict_y)

[[14.394563 ] [ 4.5585423] [10.817445 ] [12.291978 ] [26.076233 ] [20.033213 ] [11.320534 ] [14.528755 ] [11.454205 ] [ 9.153889 ] [12.769189 ] [ 5.7419834] [25.451023 ] [18.215645 ] [21.743513 ] [ 8.488817 ] [17.128687 ] [17.53172 ] [ 4.953989 ] [11.3504 ] [ 7.5612407] [ 4.2715034] [20.316795 ] [17.732632 ] [ 4.2850647] [ 6.971166 ] [11.657596 ] [24.968727 ] [13.93272 ]] 171 14.5 172 7.6 173 11.7 174 11.5 175 27.0 176 20.2 177 11.7 178 11.8 179 12.6 180 10.5 181 12.2 182 8.7 183 26.2 184 17.6 185 22.6 186 10.3 187 17.3 188 15.9 189 6.7 190 10.8 191 9.9 192 5.9 193 19.6 194 17.3 195 7.6 196 9.7 197 12.8 198 25.5 199 13.4 Name: sales, dtype: float64

The model's predictions are close to the true sales values for most of the test rows.
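Both arrays need to be 1-D before subtracting. Suppose `test_y` were converted with `np.array` but `pridict_y` were left as the (29, 1) array from `model.predict`. NumPy broadcasting would turn (29,) minus (29, 1) into a (29, 29) matrix of every pairwise difference, silently, instead of 29 element-wise errors. The sketch below reproduces this with three stand-in values.

```python
import numpy as np

y_true = np.array([14.5, 7.6, 11.7])           # shape (3,), like np.array(test_y)
y_pred = np.array([[14.39], [4.56], [10.82]])  # shape (3, 1), like model.predict output

wrong = y_true - y_pred            # broadcasts to (3, 3): every pairwise difference
right = y_true - y_pred.flatten()  # (3,): one error per sample

print(wrong.shape)  # (3, 3)
print(right.shape)  # (3,)

# A cheap guard before computing errors
assert y_true.shape == y_pred.flatten().shape
```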

In [23]:

# Convert pridict_y and test_y to 1-D NumPy arrays so the error can be computed element-wise

pridict_y=pridict_y.flatten()

test_y=np.array(test_y)

print(test_y)

print(pridict_y)

print(test_y-pridict_y)

[14.5 7.6 11.7 11.5 27. 20.2 11.7 11.8 12.6 10.5 12.2 8.7 26.2 17.6 22.6 10.3 17.3 15.9 6.7 10.8 9.9 5.9 19.6 17.3 7.6 9.7 12.8 25.5 13.4] [14.394563 4.5585423 10.817445 12.291978 26.076233 20.033213 11.320534 14.528755 11.454205 9.153889 12.769189 5.7419834 25.451023 18.215645 21.743513 8.488817 17.128687 17.53172 4.953989 11.3504 7.5612407 4.2715034 20.316795 17.732632 4.2850647 6.971166 11.657596 24.968727 13.93272 ] [ 0.10543728 3.04145775 0.8825552 -0.79197788 0.92376709 0.16678734 0.37946625 -2.72875519 1.14579544 1.3461113 -0.56918888 2.95801659 0.7489769 -0.61564484 0.85648689 1.81118279 0.1713131 -1.63171921 1.74601097 -0.55039997 2.33875933 1.62849655 -0.71679535 -0.43263168 3.3149353 2.72883387 1.14240437 0.53127289 -0.53272018]

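The errors are fairly small. 23 of the 29 absolute errors are below 2, and the largest is about 3.3, in the same units as sales.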
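To summarize the errors with a single number, mean absolute error (MAE) and root mean squared error (RMSE) are common choices. Both are in the same units as sales. The sketch below recomputes them from the 29 values printed above, together with the largest error and the count below 2. The values were copied from the printed output, so the float32 display rounding is ignored.

```python
import numpy as np

# True sales and predictions for the 29 test rows, copied from the printed output above
test_y = np.array([14.5, 7.6, 11.7, 11.5, 27.0, 20.2, 11.7, 11.8, 12.6, 10.5,
                   12.2, 8.7, 26.2, 17.6, 22.6, 10.3, 17.3, 15.9, 6.7, 10.8,
                   9.9, 5.9, 19.6, 17.3, 7.6, 9.7, 12.8, 25.5, 13.4])
pridict_y = np.array([14.394563, 4.5585423, 10.817445, 12.291978, 26.076233,
                      20.033213, 11.320534, 14.528755, 11.454205, 9.153889,
                      12.769189, 5.7419834, 25.451023, 18.215645, 21.743513,
                      8.488817, 17.128687, 17.53172, 4.953989, 11.3504,
                      7.5612407, 4.2715034, 20.316795, 17.732632, 4.2850647,
                      6.971166, 11.657596, 24.968727, 13.93272])

errors = test_y - pridict_y
mae = np.mean(np.abs(errors))         # mean absolute error
rmse = np.sqrt(np.mean(errors ** 2))  # root mean squared error
max_err = np.max(np.abs(errors))      # worst single prediction

print(f"MAE={mae:.3f}  RMSE={rmse:.3f}  max |error|={max_err:.3f}")
print("|error| < 2:", np.sum(np.abs(errors) < 2), "of", errors.size)
```

RMSE weights the few larger misses (around 3) more heavily than MAE does, which is why it comes out higher.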


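My free, largely non-profit programming video site, whose goal is to make sure you never forget what you have learned (mainly using the Ebbinghaus forgetting curve and other smart review algorithms):

【读书编程笔记】 (Reading & Programming Notes) fanrenyi.com, with courses on front end, back end, algorithms, big data, AI, and more.

Copyright notice: reposting is welcome, but please credit the source.

Some reference material in these posts is uncredited because the original source could no longer be found after a long time. If anything infringes your rights, please contact me and I will remove it.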

