PyTorch Deep Learning Practice, Lecture 9: Multi-class Classification (Part 2)

1. Code:

import torch
from torchvision import transforms  # image preprocessing
from torchvision import datasets
from torch.utils.data import DataLoader  # builds the mini-batch loader
import torch.nn.functional as F  # provides the relu activation function
import torch.optim as optim  # optimizer package

# 1. Prepare dataset

# Dataset and DataLoader are used, so a batch size has to be set

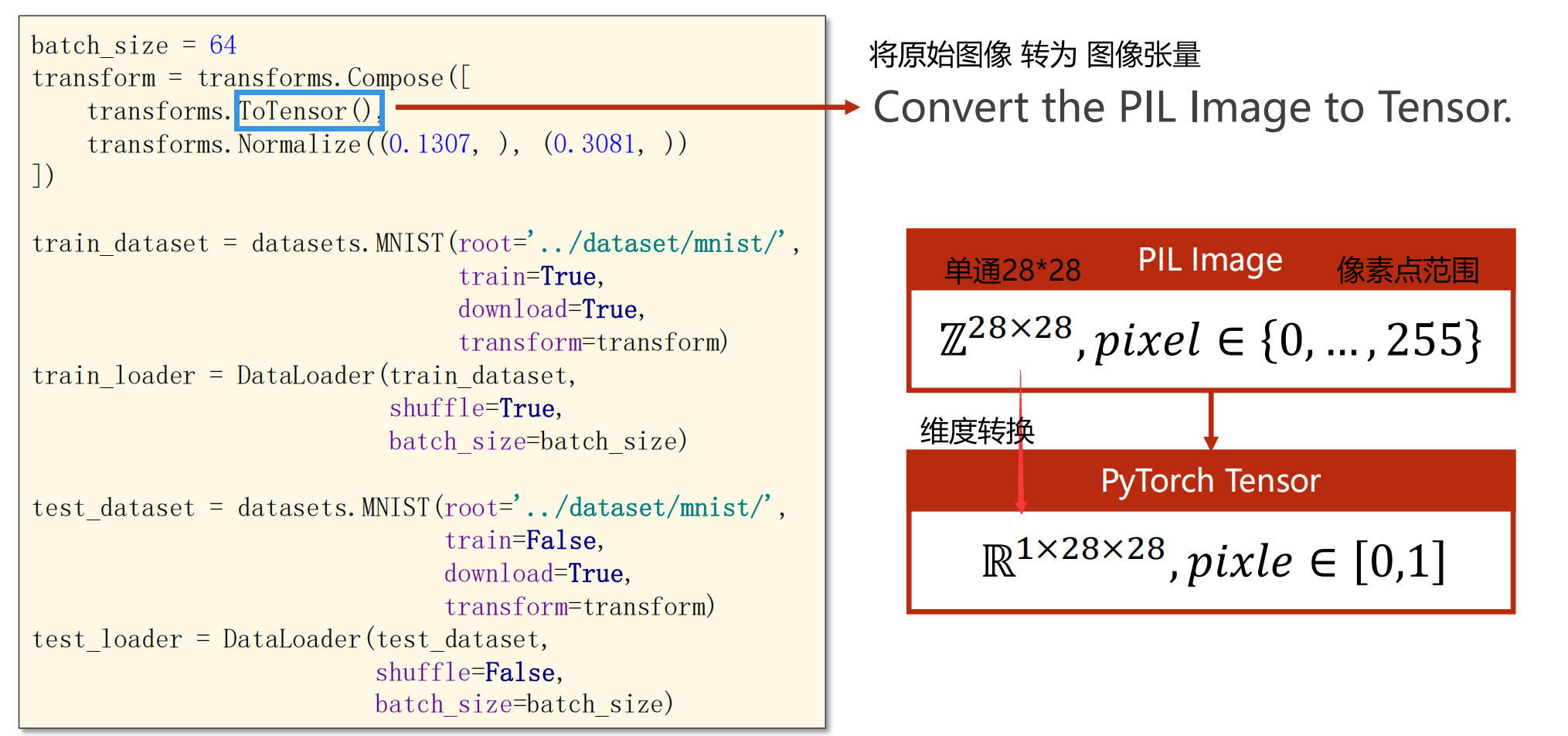

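# ToTensor converts the original image into an image tensor (a channel dimension is added, H x W -> C x H x W, and pixel values are scaled to [0, 1])

# Normalize((mean,), (std,)) standardizes the pixel values to roughly zero mean and unit standard deviation; 0.1307 and 0.3081 are the mean and standard deviation of the MNIST training pixels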

batch_size = 64

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

train_dataset = datasets.MNIST(root='../dataset/mnist/', train=True, download=True, transform=transform)

train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist/', train=False, download=True, transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

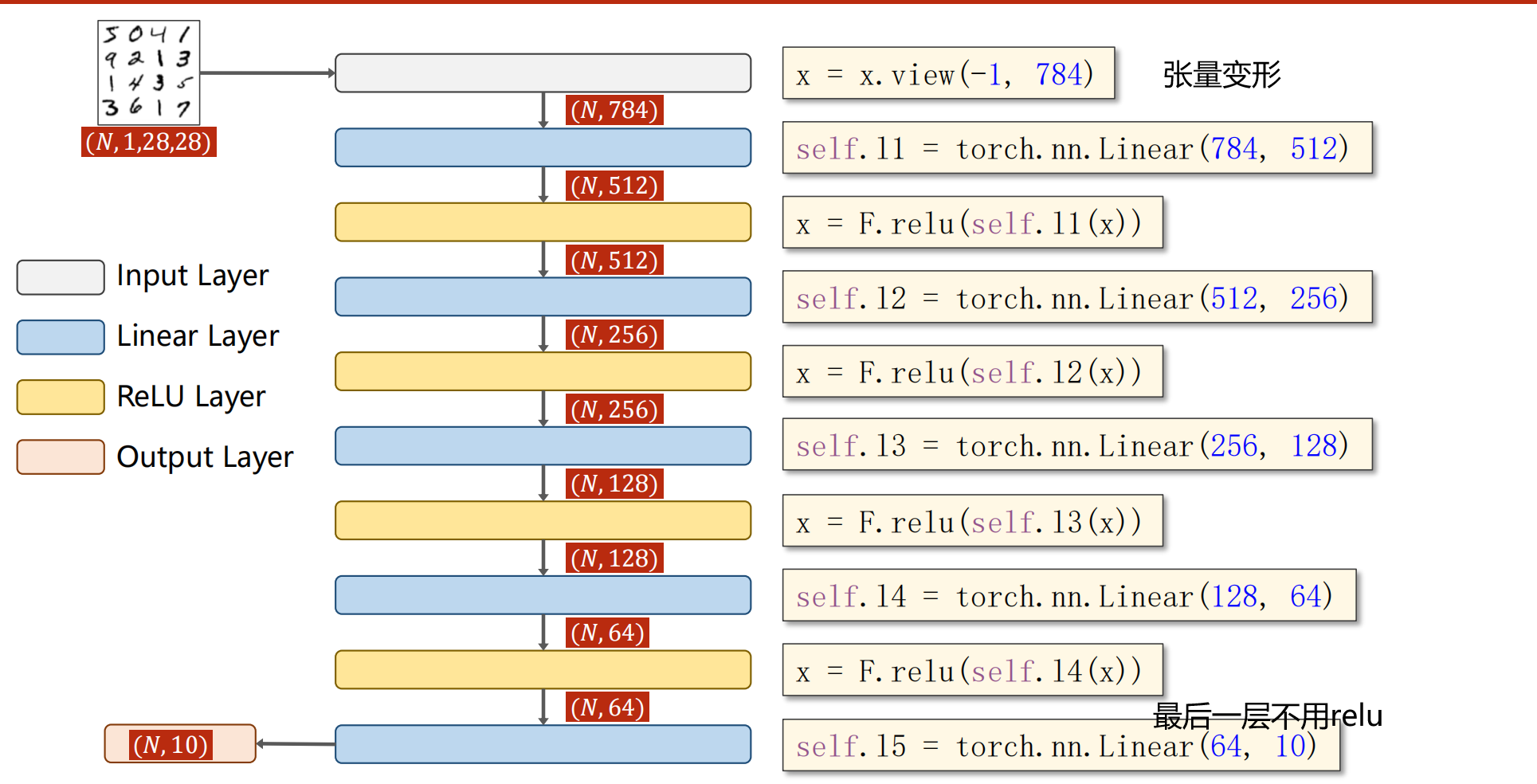

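# 2. Design model

# Build the network: __init__ defines the linear layers as (in_features, out_features)

# forward applies relu to the output of every linear layer except the last one, l5

# The raw output of l5 is passed to nn.CrossEntropyLoss, which applies log-softmax internally and returns the loss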

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.l1 = torch.nn.Linear(784, 512)
        self.l2 = torch.nn.Linear(512, 256)
        self.l3 = torch.nn.Linear(256, 128)
        self.l4 = torch.nn.Linear(128, 64)
        self.l5 = torch.nn.Linear(64, 10)

    def forward(self, x):
        x = x.view(-1, 784)  # flatten each 1 x 28 x 28 image into a 784-element row
        x = F.relu(self.l1(x))
        x = F.relu(self.l2(x))
        x = F.relu(self.l3(x))
        x = F.relu(self.l4(x))
        return self.l5(x)  # l5 is not activated; return the raw outputs

model = Net()  # instantiate the network as model
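As a quick sanity check on the layer sizes above, the sketch below pushes a random batch through the same five-layer architecture and counts its trainable parameters. It restates the network with `torch.nn.Sequential` only so that the snippet is self-contained; the names `net` and `dummy` and the random input are illustrative assumptions, not part of the lecture code.

```python
import torch

# Same layer sizes as Net, written with Sequential for brevity (an assumption
# made to keep this sketch self-contained).
net = torch.nn.Sequential(
    torch.nn.Flatten(),                      # (N, 1, 28, 28) -> (N, 784)
    torch.nn.Linear(784, 512), torch.nn.ReLU(),
    torch.nn.Linear(512, 256), torch.nn.ReLU(),
    torch.nn.Linear(256, 128), torch.nn.ReLU(),
    torch.nn.Linear(128, 64), torch.nn.ReLU(),
    torch.nn.Linear(64, 10),                 # no activation after the last layer
)

dummy = torch.randn(64, 1, 28, 28)           # one fake batch of MNIST-shaped images
out = net(dummy)
print(out.shape)                             # torch.Size([64, 10])

# Each Linear(in, out) layer holds in * out weights plus out biases.
n_params = sum(p.numel() for p in net.parameters())
print(n_params)                              # 575050
```

Each row of `out` holds ten raw scores, one per digit class.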

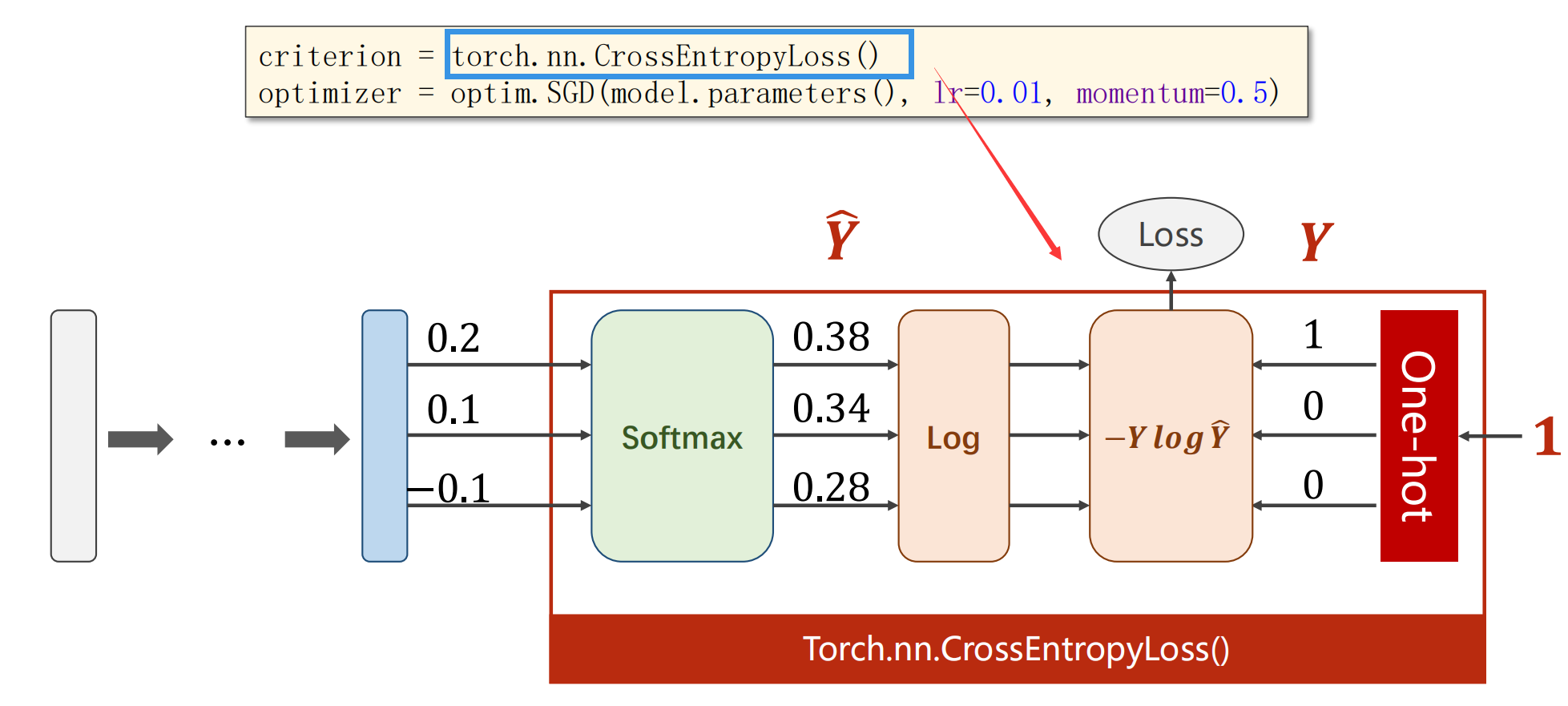

#3.construct Loss and Optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(),lr=0.01, momentum=0.5)
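The reason the last layer is left without an activation is that `torch.nn.CrossEntropyLoss` expects raw scores and applies log-softmax itself. The following minimal sketch, using made-up logits and targets, checks that it matches `log_softmax` followed by `nll_loss`.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)            # made-up raw scores for 4 samples, 10 classes
target = torch.tensor([3, 0, 9, 1])    # made-up class indices

# Built-in criterion, as used in the training script
loss_ce = torch.nn.CrossEntropyLoss()(logits, target)

# The same quantity computed in two explicit steps
loss_manual = F.nll_loss(F.log_softmax(logits, dim=1), target)

print(torch.allclose(loss_ce, loss_manual))  # True
```

Applying softmax or relu to the output of `l5` before this criterion would therefore normalize the scores twice or distort them.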

#4.Train and Test

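def train(epoch):  # one full pass over the training set
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data  # inputs x and labels y
        optimizer.zero_grad()  # clear gradients left over from the previous step

        # forward + backward + update
        outputs = model(inputs)  # forward pass: compute y_hat
        loss = criterion(outputs, target)  # loss between y_hat and y
        loss.backward()  # backpropagation: compute the current gradients
        optimizer.step()  # update the network parameters from the gradients

        running_loss += loss.item()  # accumulate the plain number, not the tensor, so no computation graph is built up
        if batch_idx % 300 == 299:
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0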
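The comment on `loss.item()` in `train` can be illustrated with a toy scalar loss. This is only a sketch with a hypothetical one-parameter "model" `w`; it shows that `.item()` yields a plain Python float, whereas adding the tensor itself would keep the result attached to the computation graph.

```python
import torch

w = torch.tensor(2.0, requires_grad=True)   # hypothetical single parameter
loss = (w * 3.0 - 1.0) ** 2                  # toy scalar loss, value 25.0

# Accumulating the extracted number: a plain float, no graph attached
running_loss = 0.0
running_loss += loss.item()
print(type(running_loss).__name__, running_loss)  # float 25.0

# Accumulating the tensor instead: the sum still tracks gradients
graph_sum = 0.0 + loss
print(graph_sum.requires_grad)                    # True
```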

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # no gradients are needed during evaluation
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            # dim=1 takes the maximum along each row; the second return value is its index, i.e. the predicted class
            _, predicted = torch.max(outputs.data, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set:%d %%' % (100 * correct / total))
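To make the `torch.max(..., dim=1)` step in `test` concrete, here is a small sketch with made-up scores for three samples and three classes (the real network outputs ten columns).

```python
import torch

# Made-up scores: one row per sample, one column per class
outputs = torch.tensor([[0.1, 2.5, -1.0],
                        [3.0, 0.2, 0.4],
                        [0.0, 0.1, 0.9]])
labels = torch.tensor([1, 0, 1])

# torch.max along dim=1 returns (row maxima, column index of each maximum)
values, predicted = torch.max(outputs, dim=1)
print(predicted.tolist())        # [1, 0, 2]

# Comparing with the labels gives a boolean tensor; its sum is the hit count
correct = (predicted == labels).sum().item()
print(correct, labels.size(0))   # 2 3
```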

# 5. Run: train for 10 epochs and evaluate after each one

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()

[1, 300] loss: 2.194

[1, 600] loss: 0.784

[1, 900] loss: 0.396

Accuracy on test set:89 %

[2, 300] loss: 0.310

[2, 600] loss: 0.266

[2, 900] loss: 0.229

Accuracy on test set:93 %

[3, 300] loss: 0.181

[3, 600] loss: 0.166

[3, 900] loss: 0.160

Accuracy on test set:95 %

[4, 300] loss: 0.127

[4, 600] loss: 0.129

[4, 900] loss: 0.116

Accuracy on test set:96 %

[5, 300] loss: 0.098

[5, 600] loss: 0.097

[5, 900] loss: 0.093

Accuracy on test set:96 %

[6, 300] loss: 0.079

[6, 600] loss: 0.079

[6, 900] loss: 0.069

Accuracy on test set:96 %

[7, 300] loss: 0.057

[7, 600] loss: 0.063

[7, 900] loss: 0.063

Accuracy on test set:97 %

[8, 300] loss: 0.050

[8, 600] loss: 0.050

[8, 900] loss: 0.048

Accuracy on test set:97 %

[9, 300] loss: 0.040

[9, 600] loss: 0.040

[9, 900] loss: 0.042

Accuracy on test set:97 %

[10, 300] loss: 0.031

[10, 600] loss: 0.030

[10, 900] loss: 0.036

Accuracy on test set:97 %
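The log shows exactly three loss lines per epoch. A short calculation explains why, assuming the standard MNIST training set size of 60,000 images and the `DataLoader` default of keeping the last, smaller batch.

```python
import math

n_train = 60000        # size of the MNIST training set
batch_size = 64        # same value as in the script

# With the last partial batch kept, the loader yields ceil(60000 / 64) batches
n_batches = math.ceil(n_train / batch_size)
print(n_batches)       # 938

# The script prints whenever batch_idx % 300 == 299
report_points = [i + 1 for i in range(n_batches) if i % 300 == 299]
print(report_points)   # [300, 600, 900]

# Batches after the last report are accumulated but never printed
unreported = n_batches - report_points[-1]
print(unreported)      # 38
```

Each printed value is the mean loss over the preceding 300 batches, and the final 38 batches of every epoch do not appear in the log.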

2. Code walkthrough

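ToTensor():

- Adds a channel dimension, turning the image matrix into a tensor (for MNIST, 28 x 28 -> 1 x 28 x 28)

- Rescales pixel values from [0, 255] to [0, 1]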

x.view():
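A minimal sketch of what `x.view(-1, 784)` does in `forward`, using a made-up batch of two images: the `-1` lets PyTorch infer the batch dimension, and the result shares memory with the original tensor rather than copying it.

```python
import torch

# Made-up batch of 2 single-channel 28 x 28 "images"
batch = torch.arange(2 * 1 * 28 * 28, dtype=torch.float32).reshape(2, 1, 28, 28)

# -1 means "infer this dimension": 2 * 1 * 28 * 28 / 784 = 2 rows
flat = batch.view(-1, 784)
print(flat.shape)                                  # torch.Size([2, 784])

# Each row is one image flattened in row-major order
print(torch.equal(flat[0], batch[0].flatten()))    # True

# view returns a new shape over the same storage, not a copy
print(flat.data_ptr() == batch.data_ptr())         # True
```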

For reference, some helpful explanations from other learners:

Understanding `for batch_idx (or i), data in enumerate(train_loader, 0):`
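The loop header can be tried on a tiny stand-in dataset. In this sketch, `toy_loader`, `xs`, and `ys` are hypothetical names; `enumerate(..., 0)` numbers the batches from 0, and each `data` item unpacks into a batch of inputs and a batch of targets.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Toy dataset: 10 samples with 3 features each (hypothetical stand-in for MNIST)
xs = torch.arange(30, dtype=torch.float32).reshape(10, 3)
ys = torch.arange(10)
toy_loader = DataLoader(TensorDataset(xs, ys), batch_size=4, shuffle=False)

seen = []
for batch_idx, data in enumerate(toy_loader, 0):  # 0 is the starting index
    inputs, target = data                         # one mini-batch of (x, y)
    seen.append((batch_idx, tuple(inputs.shape), target.tolist()))
    print(batch_idx, tuple(inputs.shape), target.tolist())
# 0 (4, 3) [0, 1, 2, 3]
# 1 (4, 3) [4, 5, 6, 7]
# 2 (2, 3) [8, 9]
```

The last batch is smaller because 10 is not a multiple of 4, and the same happens with MNIST's 60,000 images at a batch size of 64.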
