Deploying a highly available k8s v1.21.14 cluster with keepalived + haproxy

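Kubernetes version: v1.21.14

Cluster size: three masters, two workers

Cluster architecture: keepalived + haproxy in front of the API servers for high availability

Environment: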

10.50.209.162 k8s-master01

10.50.209.163 k8s-master02

10.50.209.164 k8s-master03

10.50.209.165 k8s-node01

10.50.209.166 k8s-node02

Change the root password on each server

passwd

Set up passwordless SSH from the master nodes to the worker nodes

ssh-keygen -t rsa

ssh-copy-id <node-ip> # repeat for each node's IP address

Run the following steps on every node

Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

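Disable SELinux

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config # permanent, takes effect after a reboot

setenforce 0 # temporary, for the running system

Disable swap

swapoff -a # temporary

sed -ri '/swap/s/^(.*)$/#\1/g' /etc/fstab # permanent: comments out every fstab line that mentions swap

Set the hostname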

hostnamectl set-hostname <hostname>

Add the hosts entries on the master:

cat >> /etc/hosts << EOF

10.50.209.162 k8s-master01

10.50.209.163 k8s-master02

10.50.209.164 k8s-master03

10.50.209.165 k8s-node01

10.50.209.166 k8s-node02

EOF
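A minimal sketch of how the hosts entries could be added idempotently, so that re-running the step does not duplicate lines. The node list follows the environment table at the top of this post, assuming the second worker is named k8s-node02; the function name `add_hosts_entries` and its optional file argument are illustrative only.

```shell
#!/bin/sh
# Node list from the environment table (k8s-node02 is an assumed name).
NODES="10.50.209.162 k8s-master01
10.50.209.163 k8s-master02
10.50.209.164 k8s-master03
10.50.209.165 k8s-node01
10.50.209.166 k8s-node02"

# add_hosts_entries [FILE]: append every "IP hostname" pair to FILE
# (default /etc/hosts) unless FILE already has a line for that hostname.
add_hosts_entries() {
  hosts_file="${1:-/etc/hosts}"
  printf '%s\n' "$NODES" | while read -r ip name; do
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file" 2>/dev/null; then
      printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
  done
}
```

Calling `add_hosts_entries` as root updates /etc/hosts; passing a scratch file such as `add_hosts_entries /tmp/hosts.preview` shows what would be written without touching the real file.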

Copy the hosts file to every other node with scp

scp /etc/hosts k8s-master02:/etc/hosts

......

Pass bridged IPv4 traffic to the iptables chains:

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

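modprobe br_netfilter # load the module that provides the net.bridge.* keys, if it is not loaded yet

sysctl --system # apply the settings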

Time synchronization:

yum install ntpdate -y

ntpdate time.windows.com

Deploy keepalived on the master nodes

Install keepalived

yum install -y keepalived

Replace the configuration file on Master01

vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id master01

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 3

weight -2

fall 10

rise 2

}

vrrp_instance VI_1 {

state MASTER

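interface eth0 # physical NIC that the virtual IP is bound to

virtual_router_id 51

# Priority: give each server a different value, e.g. 100, 90, 80; the node with the highest priority holds the VIP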

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 111

}

virtual_ipaddress {

10.50.209.169 # virtual IP exposed to clients

}

track_script {

check_haproxy

}

}

Replace the configuration file on Master02

vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id master02

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 3

weight -2

fall 10

rise 2

}

vrrp_instance VI_1 {

state BACKUP

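interface eth0 # physical NIC that the virtual IP is bound to

virtual_router_id 51

# Priority: give each server a different value, e.g. 100, 90, 80; the node with the highest priority holds the VIP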

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 111

}

virtual_ipaddress {

10.50.209.169 # virtual IP exposed to clients

}

track_script {

check_haproxy

}

}

Replace the configuration file on Master03

vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id master03

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 3

weight -2

fall 10

rise 2

}

vrrp_instance VI_1 {

state BACKUP

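interface eth1 # physical NIC that the virtual IP is bound to

virtual_router_id 51

# Priority: give each server a different value, e.g. 100, 90, 80; the node with the highest priority holds the VIP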

priority 80

advert_int 1

authentication {

auth_type PASS

auth_pass 111

}

virtual_ipaddress {

10.50.209.169 # virtual IP exposed to clients

}

track_script {

check_haproxy

}

}
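The interaction between `priority` and the `weight` of `check_haproxy` is easy to misjudge, so here is a small arithmetic sketch. It assumes keepalived's documented behaviour for a negative `weight`: once the check has failed `fall` times in a row, the weight is added to the node's priority. The function names are illustrative only.

```shell
#!/bin/sh
# effective_priority BASE WEIGHT: priority a node advertises after its
# tracked script has failed, for a negative WEIGHT (BASE + WEIGHT).
effective_priority() {
  echo $(( $1 + $2 ))
}

# fails_over MASTER_PRIO BACKUP_PRIO WEIGHT: exit 0 when the degraded master
# ends up below the healthy backup, so the backup takes over the VIP.
fails_over() {
  [ "$(effective_priority "$1" "$3")" -lt "$2" ]
}

effective_priority 100 -2                                    # prints 98
if fails_over 100 90 -2;  then echo "moves"; else echo "stays"; fi   # prints stays
if fails_over 100 90 -20; then echo "moves"; else echo "stays"; fi   # prints moves
```

Under that assumption, with `weight -2` a haproxy failure on master01 leaves it at 98, still above master02's 90, so the VIP would only move when keepalived itself stops. A weight whose magnitude exceeds the priority gap (for example -20) would let a failed haproxy check hand the VIP over.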

Start keepalived

systemctl start keepalived

Check whether the VIP has been assigned on master01

ip addr show eth0

Stop keepalived on master01 and check whether the virtual IP moves to master02; then do the same for master03, testing the nodes one at a time
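The failover check can be scripted along these lines. This is only a sketch: `has_vip` is a hypothetical helper that reads `ip addr` output on stdin, and the interface name eth0 is taken from the configurations above (master03 uses eth1 there).

```shell
#!/bin/sh
VIP="10.50.209.169"   # virtual IP from the keepalived configuration

# has_vip ADDRESS: read `ip addr` output on stdin and succeed (exit 0)
# when ADDRESS is listed as an IPv4 address.
has_vip() {
  grep -qF "inet $1/"
}

# Typical use on each master:
#   ip -4 addr show eth0 | has_vip "$VIP" && echo "VIP is on this node"
# After `systemctl stop keepalived` on master01, the same check should
# succeed on master02 and no longer succeed on master01.
```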

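Note: on China Mobile Cloud servers, a self-managed VIP must also be configured for the servers in the cloud console; otherwise the other nodes cannot ping the VIP

Deploy haproxy on the master nodes

yum install -y haproxy

Edit the configuration file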

vim /etc/haproxy/haproxy.cfg

#---------------------------------------------------------------------

# Global settings

#---------------------------------------------------------------------

global

# to have these messages end up in /var/log/haproxy.log you will

# need to:

#

# 1) configure syslog to accept network log events. This is done

# by adding the '-r' option to the SYSLOGD_OPTIONS in

# /etc/sysconfig/syslog

#

# 2) configure local2 events to go to the /var/log/haproxy.log

# file. A line like the following can be added to

# /etc/sysconfig/syslog

#

# local2.* /var/log/haproxy.log

#

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

# turn on stats unix socket

stats socket /var/lib/haproxy/stats

#---------------------------------------------------------------------

# common defaults that all the 'listen' and 'backend' sections will

# use if not designated in their block

#---------------------------------------------------------------------

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

#option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

#---------------------------------------------------------------------

# kubernetes apiserver frontend which proxys to the backends

#---------------------------------------------------------------------

frontend kubernetes-apiserver

mode tcp

bind *:6444 # port exposed to clients; must match the port in controlPlaneEndpoint of the kubeadm configuration

option tcplog

default_backend kubernetes-apiserver # name of the backend section

#---------------------------------------------------------------------

# round robin balancing between the various backends

#---------------------------------------------------------------------

backend kubernetes-apiserver

mode tcp

balance roundrobin

server k8s-master01 10.50.209.162:6443 check port 6443 inter 1000 maxconn 51200 # only the IP addresses need to be changed

server k8s-master02 10.50.209.163:6443 check port 6443 inter 1000 maxconn 51200

server k8s-master03 10.50.209.164:6443 check port 6443 inter 1000 maxconn 51200

Start haproxy

systemctl start haproxy

Note: the configuration is identical on all three masters, so you can simply scp it to the others

Install docker

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

yum -y install docker-ce-18.06.1.ce-3.el7

systemctl enable docker --now

docker --version

Change the docker registry mirror (to speed up image pulls)

vim /etc/docker/daemon.json

{

"registry-mirrors": ["https://docker.1panel.live"]

}

Restart docker

systemctl restart docker

Add the Kubernetes YUM repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install kubeadm, kubelet and kubectl

yum install -y kubeadm-1.21.14-0 kubelet-1.21.14-0 kubectl-1.21.14-0

Enable kubelet at boot

systemctl enable kubelet

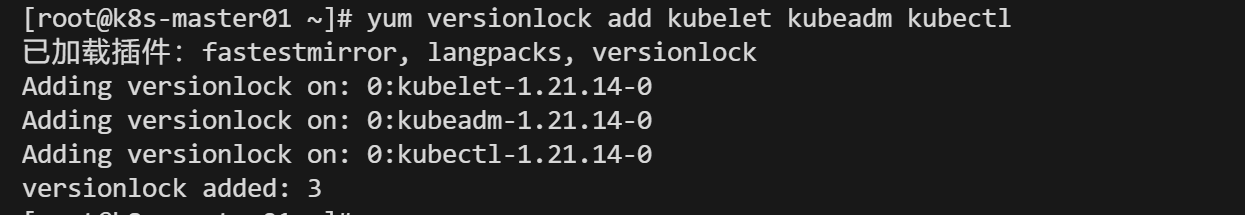

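Lock the package versions so they cannot be upgraded

Install the versionlock plugin (if it is not installed yet):

yum install -y yum-plugin-versionlock

Lock the versions of the k8s tools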

yum versionlock add kubelet kubeadm kubectl

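The following steps are performed on the master nodes

Generate the initialization file

kubeadm config print init-defaults > kubeadm-config.yaml

After editing, its content is as follows: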

apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 10.50.209.162
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  name: k8s-master01 # set the node name; otherwise the node shows up as "node" once the cluster is built
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta2
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: "10.50.209.169:6444" # VIP plus the haproxy port as the cluster's access point
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: 1.21.14
networking:
  dnsDomain: cluster.local
  podSubnet: 10.202.0.0/16
  serviceSubnet: 10.200.0.0/16
scheduler: {}

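### Change advertiseAddress to this machine's address

### Change imageRepository to a mirror inside China (registry.aliyuncs.com/google_containers); with a fast connection to the default registry you can leave it unchanged

### Set the podSubnet and serviceSubnet ranges; make sure they do not overlap with the hosts' own network, otherwise coredns reports errors and fails to start

Add controlPlaneEndpoint: "10.50.209.169:6444" to point the k8s cluster at the VIP; the port is the haproxy port

Change the node name to k8s-master01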
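The requirement that podSubnet and serviceSubnet stay clear of the hosts' network can be checked before running kubeadm. The sketch below does the CIDR arithmetic in plain shell; the helper names are illustrative, and the host network 10.50.209.0/24 is an assumption, since this post lists the node addresses but not their netmask.

```shell
#!/bin/sh
# ip_to_int A.B.C.D: print the IPv4 address as an integer.
ip_to_int() {
  saved_ifs=$IFS
  IFS=.
  set -- $1
  IFS=$saved_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# cidr_range CIDR: print "FIRST LAST" (as integers) for the block.
cidr_range() {
  base=$(ip_to_int "${1%/*}")
  size=$(( 1 << (32 - ${1#*/}) ))
  first=$(( $base / $size * $size ))
  echo "$first $(( $first + $size - 1 ))"
}

# cidrs_overlap A B: exit 0 when the two blocks share at least one address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

HOST_NET="10.50.209.0/24"   # assumed mask, not stated in this post
for subnet in 10.202.0.0/16 10.200.0.0/16; do
  if cidrs_overlap "$subnet" "$HOST_NET"; then
    echo "$subnet overlaps $HOST_NET"
  else
    echo "$subnet is clear of $HOST_NET"
  fi
done
```

Under that assumption both ranges used in kubeadm-config.yaml are reported as clear, whereas a choice such as 10.50.0.0/16 would be flagged as overlapping.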

Initialize the cluster

kubeadm init --config=kubeadm-config.yaml

When initialization finishes, it prints two commands: one for adding master nodes and one for adding worker nodes

Set up kubectl

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

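Before adding the other masters, copy the certificate files from master01 to master02 and master03

First create the directory on master02 and master03

mkdir -p /etc/kubernetes/pki/etcd

Copy the certificate files (run on master01)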

Master02:

scp /etc/kubernetes/admin.conf k8s-master02:/etc/kubernetes/admin.conf

scp /etc/kubernetes/pki/{ca.*,sa.*,front-proxy-ca.*} k8s-master02:/etc/kubernetes/pki

scp /etc/kubernetes/pki/etcd/ca.* k8s-master02:/etc/kubernetes/pki/etcd

Master03:

scp /etc/kubernetes/admin.conf k8s-master03:/etc/kubernetes/admin.conf

scp /etc/kubernetes/pki/{ca.*,sa.*,front-proxy-ca.*} k8s-master03:/etc/kubernetes/pki

scp /etc/kubernetes/pki/etcd/ca.* k8s-master03:/etc/kubernetes/pki/etcd

Run the master join command on master02 and master03 (the command is the same for both)

List the nodes

kubectl get node

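If the master join command has expired or been lost, regenerate it with the following commands

Upload the control-plane certificates again and print the certificate key (the value for --certificate-key)

kubeadm init phase upload-certs --upload-certs

Generate the join command

kubeadm token create --print-join-command

Append --control-plane and --certificate-key <key> to that join command, as in the following example (taken from a different cluster; here the endpoint would be 10.50.209.169:6444)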

kubeadm join 192.168.66.50:6443 --token h305n1.vpc223usk7yze0fm --discovery-token-ca-cert-hash sha256:5d73260eb588e2f31183bfa04b63fab12f614fa6cba8c382ceb9ea555b1e70e7 --control-plane --certificate-key 74890361532bc100970cb253c7cd6e939c985694324f5233b29c721b79e4e693
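Putting the two outputs together by hand is error-prone, so the concatenation can be scripted. This is a sketch only: `control_plane_join` is a hypothetical helper, and the commented lines assume that the certificate key is the last line printed by `upload-certs`.

```shell
#!/bin/sh
# control_plane_join JOIN_CMD CERT_KEY: print the worker join command with the
# two extra flags that turn it into a control-plane join.
control_plane_join() {
  printf '%s --control-plane --certificate-key %s\n' "$1" "$2"
}

# On master01 the inputs would come from the two commands above, e.g.:
#   join_cmd=$(kubeadm token create --print-join-command)
#   cert_key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
#   control_plane_join "$join_cmd" "$cert_key"

# Sample values copied from the example above:
control_plane_join \
  "kubeadm join 192.168.66.50:6443 --token h305n1.vpc223usk7yze0fm --discovery-token-ca-cert-hash sha256:5d73260eb588e2f31183bfa04b63fab12f614fa6cba8c382ceb9ea555b1e70e7" \
  "74890361532bc100970cb253c7cd6e939c985694324f5233b29c721b79e4e693"
```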

Deploy the flannel network plugin

Copy the YAML content from the following link

raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

If the URL no longer works, the file is also available on my network drive

Link: https://pan.baidu.com/s/1WcfqFJxZPy96qr-Ry-Sf0g?pwd=9ish

Extraction code: 9ish

Edit flannel.yaml and change the network range to the pod subnet (the podSubnet value, 10.202.0.0/16 here)
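The edit can also be done non-interactively. The sketch below assumes the manifest carries the pod network as a `"Network": "..."` entry in its net-conf.json section (10.244.0.0/16 in the upstream file, as far as I know); `set_flannel_network` is an illustrative helper name.

```shell
#!/bin/sh
# set_flannel_network FILE CIDR: rewrite the "Network" value inside the
# net-conf.json section of a flannel manifest.
set_flannel_network() {
  sed "s#\"Network\": \"[^\"]*\"#\"Network\": \"$2\"#" "$1" > "$1.tmp" &&
    mv "$1.tmp" "$1"
}

# Example, using the podSubnet from kubeadm-config.yaml:
#   set_flannel_network flannel.yaml 10.202.0.0/16
#   grep '"Network"' flannel.yaml
```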

Start the flannel plugin

kubectl create -f flannel.yaml

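Check the node status

Join the worker nodes to the cluster

Use the join command printed when initialization finished to add the worker nodes

Or

Run the following command to generate the worker join command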

kubeadm token create --print-join-command

Using kubectl on the worker nodes

Temporary (current shell only)

export KUBECONFIG=/etc/kubernetes/kubelet.conf

source /etc/profile # only needed if the export line was added to /etc/profile to make the setting permanent

