1 import os

2 import pandas as pd

3

4

5 # 修改工作路径到指定文件夹

6 os.chdir("C:\Users\86184\Desktop\文件集\data")

7

8 # 第一种连接方式

9 #from sqlalchemy import create_engine

10

11 #engine = create_engine('mysql+pymysql://root:123@192.168.31.140:3306/test?charset=utf8')

12 #sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)

13

14 # 第二种连接方式

15 import pymysql as pm

16

17 con = pm.connect('localhost','root','2262kwy','test',charset='utf8')

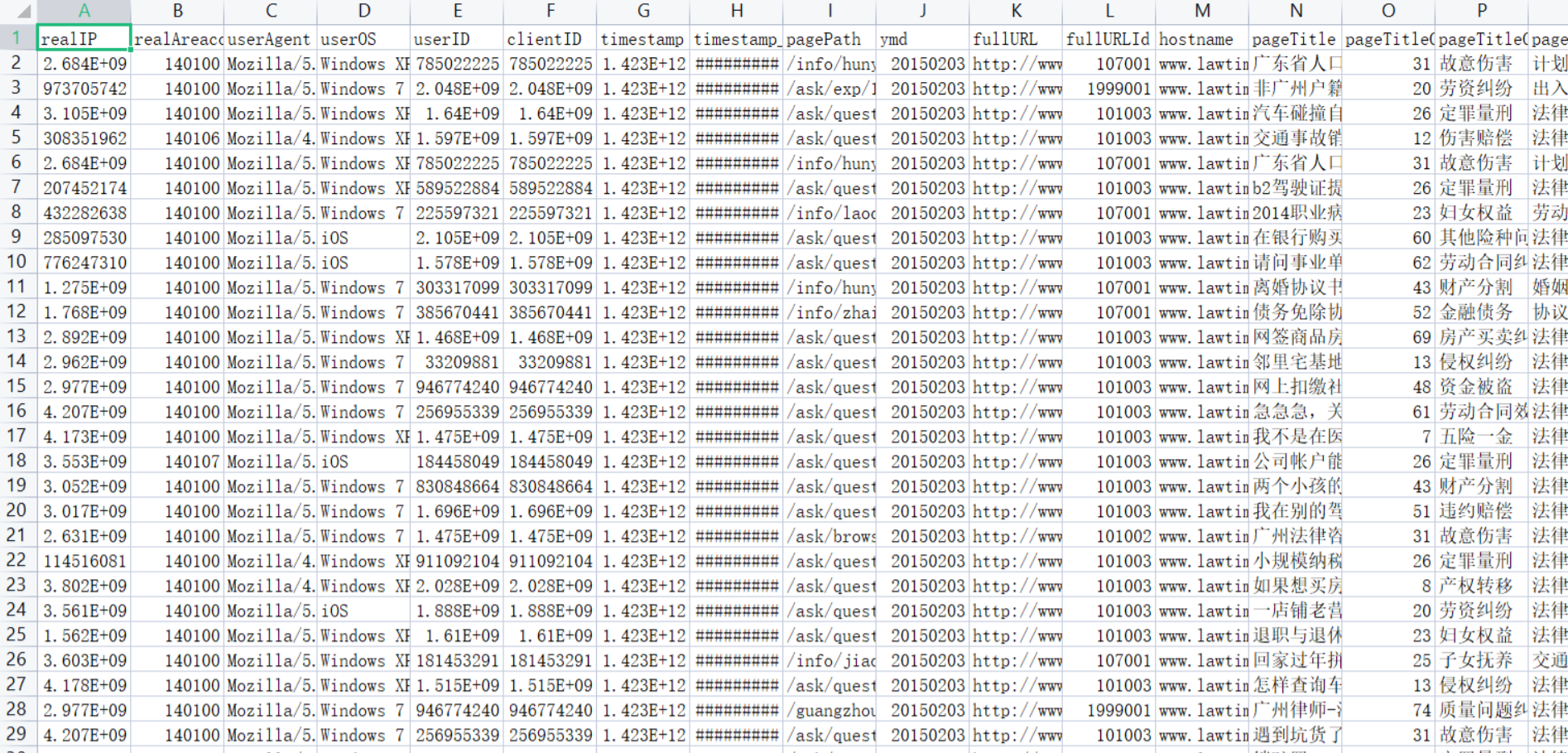

18 data = pd.read_sql('select * from all_gzdata',con=con)

19 con.close() #关闭连接

20

21 # 保存读取的数据

22 data.to_csv('C:\Users\86184\Desktop\文件集\data\all_gzdata.csv', index=False, encoding='utf-8')

![]()

1 import pandas as pd

2 from sqlalchemy import create_engine

3

4 engine = create_engine('mysql+pymysql://root:2262kwy@127.0.0.1:3306/test?charset=utf8')

5 sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)

6 # 分析网页类型

7 counts = [i['fullURLId'].value_counts() for i in sql] #逐块统计

8 counts = counts.copy()

9 counts = pd.concat(counts).groupby(level=0).sum() # 合并统计结果,把相同的统计项合并(即按index分组并求和)

10 counts = counts.reset_index() # 重新设置index,将原来的index作为counts的一列。

11 counts.columns = ['index', 'num'] # 重新设置列名,主要是第二列,默认为0

12 counts['type'] = counts['index'].str.extract('(\d{3})') # 提取前三个数字作为类别id

13 counts_ = counts[['type', 'num']].groupby('type').sum() # 按类别合并

14 counts_.sort_values(by='num', ascending=False, inplace=True) # 降序排列

15 counts_['ratio'] = counts_.iloc[:,0] / counts_.iloc[:,0].sum()

16 print(counts_)

17

18

19 # 因为只有107001一类,但是可以继续细分成三类:知识内容页、知识列表页、知识首页

20 def count107(i): #自定义统计函数

21 j = i[['fullURL']][i['fullURLId'].str.contains('107')].copy() # 找出类别包含107的网址

22 j['type'] = None # 添加空列

23 j['type'][j['fullURL'].str.contains('info/.+?/')]= '知识首页'

24 j['type'][j['fullURL'].str.contains('info/.+?/.+?')]= '知识列表页'

25 j['type'][j['fullURL'].str.contains('/\d+?_*\d+?\.html')]= '知识内容页'

26 return j['type'].value_counts()

27 # 注意:获取一次sql对象就需要重新访问一下数据库(!!!)

28 #engine = create_engine('mysql+pymysql://root:123456@127.0.0.1:3306/test?charset=utf8')

29 sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)

30

31 counts2 = [count107(i) for i in sql] # 逐块统计

32 counts2 = pd.concat(counts2).groupby(level=0).sum() # 合并统计结果

33 print(counts2)

34 #计算各个部分的占比

35 res107 = pd.DataFrame(counts2)

36 # res107.reset_index(inplace=True)

37 res107.index.name= '107类型'

38 res107.rename(columns={'type':'num'}, inplace=True)

39 res107['比例'] = res107['num'] / res107['num'].sum()

40 res107.reset_index(inplace = True)

41 print(res107)

42

43 # 代码11-4

44

45 def countquestion(i): # 自定义统计函数

46 j = i[['fullURLId']][i['fullURL'].str.contains('\?')].copy() # 找出类别包含107的网址

47 return j

48

49 #engine = create_engine('mysql+pymysql://root:123456@127.0.0.1:3306/test?charset=utf8')

50 sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)

51

52 counts3 = [countquestion(i)['fullURLId'].value_counts() for i in sql]

53 counts3 = pd.concat(counts3).groupby(level=0).sum()

54 print(counts3)

55

56 # 求各个类型的占比并保存数据

57 df1 = pd.DataFrame(counts3)

58 df1['perc'] = df1['fullURLId']/df1['fullURLId'].sum()*100

59 df1.sort_values(by='fullURLId',ascending=False,inplace=True)

60 print(df1.round(4))

61

62

63

64 # 代码11-5

65

66 def page199(i): #自定义统计函数

67 j = i[['fullURL','pageTitle']][(i['fullURLId'].str.contains('199')) &

68 (i['fullURL'].str.contains('\?'))]

69 j['pageTitle'].fillna('空',inplace=True)

70 j['type'] = '其他' # 添加空列

71 j['type'][j['pageTitle'].str.contains('法律快车-律师助手')]= '法律快车-律师助手'

72 j['type'][j['pageTitle'].str.contains('咨询发布成功')]= '咨询发布成功'

73 j['type'][j['pageTitle'].str.contains('免费发布法律咨询' )] = '免费发布法律咨询'

74 j['type'][j['pageTitle'].str.contains('法律快搜')] = '快搜'

75 j['type'][j['pageTitle'].str.contains('法律快车法律经验')] = '法律快车法律经验'

76 j['type'][j['pageTitle'].str.contains('法律快车法律咨询')] = '法律快车法律咨询'

77 j['type'][(j['pageTitle'].str.contains('_法律快车')) |

78 (j['pageTitle'].str.contains('-法律快车'))] = '法律快车'

79 j['type'][j['pageTitle'].str.contains('空')] = '空'

80

81 return j

82

83 # 注意:获取一次sql对象就需要重新访问一下数据库

84 #engine = create_engine('mysql+pymysql://root:2262kwy@127.0.0.1:3306/test?charset=utf8')

85 sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)# 分块读取数据库信息

86 #sql = pd.read_sql_query('select * from all_gzdata limit 10000', con=engine)

87

88 counts4 = [page199(i) for i in sql] # 逐块统计

89 counts4 = pd.concat(counts4)

90 d1 = counts4['type'].value_counts()

91 print(d1)

92 d2 = counts4[counts4['type']=='其他']

93 print(d2)

94 # 求各个部分的占比并保存数据

95 df1_ = pd.DataFrame(d1)

96 df1_['perc'] = df1_['type']/df1_['type'].sum()*100

97 df1_.sort_values(by='type',ascending=False,inplace=True)

98 print(df1_)

99

100

101 # 代码11-6

102

103 def xiaguang(i): #自定义统计函数

104 j = i.loc[(i['fullURL'].str.contains('\.html'))==False,

105 ['fullURL','fullURLId','pageTitle']]

106 return j

107

108 # 注意获取一次sql对象就需要重新访问一下数据库

109 #engine = create_engine('mysql+pymysql://root:123456@127.0.0.1:3306/test?charset=utf8')

110 sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)# 分块读取数据库信息

111

112 counts5 = [xiaguang(i) for i in sql]

113 counts5 = pd.concat(counts5)

114

115 xg1 = counts5['fullURLId'].value_counts()

116 print(xg1)

117 # 求各个部分的占比

118 xg_ = pd.DataFrame(xg1)

119 xg_.reset_index(inplace=True)

120 xg_.columns= ['index', 'num']

121 xg_['perc'] = xg_['num']/xg_['num'].sum()*100

122 xg_.sort_values(by='num',ascending=False,inplace=True)

123

124 xg_['type'] = xg_['index'].str.extract('(\d{3})') #提取前三个数字作为类别id

125

126 xgs_ = xg_[['type', 'num']].groupby('type').sum() #按类别合并

127 xgs_.sort_values(by='num', ascending=False,inplace=True) #降序排列

128 xgs_['percentage'] = xgs_['num']/xgs_['num'].sum()*100

129

130 print(xgs_.round(4))

131

132

133

134 # 代码11-7

135

136 # 分析网页点击次数

137 # 统计点击次数

138 #engine = create_engine('mysql+pymysql://root:2262kwy@127.0.0.1:3306/test?charset=utf8')

139 sql = pd.read_sql('all_gzdata', engine, chunksize = 10000)# 分块读取数据库信息

140

141 counts1 = [i['realIP'].value_counts() for i in sql] # 分块统计各个IP的出现次数

142 counts1 = pd.concat(counts1).groupby(level=0).sum() # 合并统计结果,level=0表示按照index分组

143 print(counts1)

144

145 counts1_ = pd.DataFrame(counts1)

146 counts1_

147 counts1['realIP'] = counts1.index.tolist()

148

149 counts1_[1]=1 # 添加1列全为1

150 hit_count = counts1_.groupby('realIP').sum() # 统计各个“不同点击次数”分别出现的次数

151 # 也可以使用counts1_['realIP'].value_counts()功能

152 hit_count.columns=['用户数']

153 hit_count.index.name = '点击次数'

154

155 # 统计1~7次、7次以上的用户人数

156 hit_count.sort_index(inplace = True)

157 hit_count_7 = hit_count.iloc[:7,:]

158 time = hit_count.iloc[7:,0].sum() # 统计点击次数7次以上的用户数

159 hit_count_7 = hit_count_7.append([{'用户数':time}], ignore_index=True)

160 hit_count_7.index = ['1','2','3','4','5','6','7','7次以上']

161 hit_count_7['用户比例'] = hit_count_7['用户数'] / hit_count_7['用户数'].sum()

162 print(hit_count_7)

163

164

165

166 # 代码11-8

167

168 # 分析浏览一次的用户行为

169

170 #engine = create_engine('mysql+pymysql://root:2262kwy@127.0.0.1:3306/test?charset=utf8')

171 all_gzdata = pd.read_sql_table('all_gzdata', con = engine) # 读取all_gzdata数据

172

173 #对realIP进行统计

174 # 提取浏览1次网页的数据

175 real_count = pd.DataFrame(all_gzdata.groupby("realIP")["realIP"].count())

176 real_count.columns = ["count"]

177 real_count["realIP"] = real_count.index.tolist()

178 user_one = real_count[(real_count["count"] == 1)] # 提取只登录一次的用户

179 # 通过realIP与原始数据合并

180 real_one = pd.merge(user_one, all_gzdata, right_on = 'realIP',left_index=True,how ='left')

181

182 # 统计浏览一次的网页类型

183 URL_count = pd.DataFrame(real_one.groupby("fullURLId")["fullURLId"].count())

184 URL_count.columns = ["count"]

185 URL_count.sort_values(by='count', ascending=False, inplace=True) # 降序排列

186 # 统计排名前4和其他的网页类型

187 URL_count_4 = URL_count.iloc[:4,:]

188 time = hit_count.iloc[4:,0].sum() # 统计其他的

189 URLindex = URL_count_4.index.values

190 URL_count_4 = URL_count_4.append([{'count':time}], ignore_index=True)

191 URL_count_4.index = [URLindex[0], URLindex[1], URLindex[2], URLindex[3],

192 '其他']

193 URL_count_4['比例'] = URL_count_4['count'] / URL_count_4['count'].sum()

194 print(URL_count_4)

195

196

197

198 # 代码11-9

199

200 # 在浏览1次的前提下, 得到的网页被浏览的总次数

201 fullURL_count = pd.DataFrame(real_one.groupby("fullURL")["fullURL"].count())

202 fullURL_count.columns = ["count"]

203 fullURL_count["fullURL"] = fullURL_count.index.tolist()

204 fullURL_count.sort_values(by='count', ascending=False, inplace=True) # 降序排列

num ratio

type

101 411665 0.491570

199 201426 0.240523

107 182900 0.218401

301 18430 0.022007

102 17357 0.020726

106 3957 0.004725

103 1715 0.002048

知识内容页 164243

知识列表页 9656

知识首页 9001

Name: type, dtype: int64

107类型 num 比例

0 知识内容页 164243 0.897993

1 知识列表页 9656 0.052794

2 知识首页 9001 0.049213

101003 47

102002 25

107001 346

1999001 64718

301001 356

Name: fullURLId, dtype: int64

fullURLId perc

1999001 64718 98.8182

301001 356 0.5436

107001 346 0.5283

101003 47 0.0718

102002 25 0.0382

法律快车-律师助手 49894

法律快车法律咨询 6421

咨询发布成功 5220

快搜 1943

法律快车 818

其他 359

法律快车法律经验 59

空 4

Name: type, dtype: int64

fullURL ... type

2631 http://www.lawtime.cn/spelawyer/index.php?py=g... ... 其他

2632 http://www.lawtime.cn/spelawyer/index.php?py=g... ... 其他

1677 http://m.baidu.com/from=844b/bd_page_type=1/ss... ... 其他

4303 http://m.baidu.com/from=0/bd_page_type=1/ssid=... ... 其他

3673 http://www.lawtime.cn/lawyer/lll25879862593080... ... 其他

... ... ...

4829 http://www.lawtime.cn/spelawyer/index.php?m=se... ... 其他

4837 http://www.lawtime.cn/spelawyer/index.php?m=se... ... 其他

4842 http://www.lawtime.cn/spelawyer/index.php?m=se... ... 其他

8302 http://www.lawtime.cn/spelawyer/index.php?m=se... ... 其他

5034 http://www.baidu.com/link?url=O7iBD2KmoJdkHWTZ... ... 其他

[359 rows x 3 columns]

type perc

法律快车-律师助手 49894 77.094471

法律快车法律咨询 6421 9.921506

咨询发布成功 5220 8.065762

快搜 1943 3.002256

法律快车 818 1.263945

其他 359 0.554714

法律快车法律经验 59 0.091165

空 4 0.006181

1999001 117124

107001 17843

102002 12021

101001 5603

106001 3957

102001 2129

102003 1235

301001 1018

101009 854

102007 538

102008 404

101008 378

102004 361

102005 271

102009 214

102006 184

101004 125

101006 107

101005 63

Name: fullURLId, dtype: int64

num percentage

type

199 117124 71.2307

107 17843 10.8515

102 17357 10.5559

101 7130 4.3362

106 3957 2.4065

301 1018 0.6191

82033 2

95502 1

103182 1

116010 2

136206 1

..

4294809358 2

4294811150 1

4294852154 3

4294865422 2

4294917690 1

Name: realIP, Length: 230149, dtype: int64

用户数 用户比例

1 132119 0.574059

2 44175 0.191941

3 17573 0.076355

4 10156 0.044128

5 5952 0.025862

6 4132 0.017954

7 2632 0.011436

7次以上 13410 0.058267

count 比例

101003 102560 0.649011

107001 19443 0.123037

1999001 9381 0.059364

301001 515 0.003259

其他 26126 0.165328

1 import os

2 import re

3 import pandas as pd

4 import pymysql as pm

5 from random import sample

6

7 # 修改工作路径到指定文件夹

8 os.chdir("C:\Users\86184\Desktop\文件集\data")

9

10 # 读取数据

11 con = pm.connect('localhost','root','2262kwy','test',charset='utf8')

12 data = pd.read_sql('select * from all_gzdata',con=con)

13 con.close() # 关闭连接

14

15 # 取出107类型数据

16 index107 = [re.search('107',str(i))!=None for i in data.loc[:,'fullURLId']]

17 data_107 = data.loc[index107,:]

18

19 # 在107类型中筛选出婚姻类数据

20 index = [re.search('hunyin',str(i))!=None for i in data_107.loc[:,'fullURL']]

21 data_hunyin = data_107.loc[index,:]

22

23 # 提取所需字段(realIP、fullURL)

24 info = data_hunyin.loc[:,['realIP','fullURL']]

25

26 # 去除网址中“?”及其后面内容

27 da = [re.sub('\?.*','',str(i)) for i in info.loc[:,'fullURL']]

28 info.loc[:,'fullURL'] = da # 将info中‘fullURL’那列换成da

29 # 去除无html网址

30 index = [re.search('\.html',str(i))!=None for i in info.loc[:,'fullURL']]

31 index.count(True) # True 或者 1 , False 或者 0

32 info1 = info.loc[index,:]

33

34

35

36 # 代码11-11

37

38 # 找出翻页和非翻页网址

39 index = [re.search('/\d+_\d+\.html',i)!=None for i in info1.loc[:,'fullURL']]

40 index1 = [i==False for i in index]

41 info1_1 = info1.loc[index,:] # 带翻页网址

42 info1_2 = info1.loc[index1,:] # 无翻页网址

43 # 将翻页网址还原

44 da = [re.sub('_\d+\.html','.html',str(i)) for i in info1_1.loc[:,'fullURL']]

45 info1_1.loc[:,'fullURL'] = da

46 # 翻页与非翻页网址合并

47 frames = [info1_1,info1_2]

48 info2 = pd.concat(frames)

49 # 或者

50 info2 = pd.concat([info1_1,info1_2],axis = 0) # 默认为0,即行合并

51 # 去重(realIP和fullURL两列相同)

52 info3 = info2.drop_duplicates()

53 # 将IP转换成字符型数据

54 info3.iloc[:,0] = [str(index) for index in info3.iloc[:,0]]

55 info3.iloc[:,1] = [str(index) for index in info3.iloc[:,1]]

56 len(info3)

57

58

59

60 # 代码11-12

61

62 # 筛选满足一定浏览次数的IP

63 IP_count = info3['realIP'].value_counts()

64 # 找出IP集合

65 IP = list(IP_count.index)

66 count = list(IP_count.values)

67 # 统计每个IP的浏览次数,并存放进IP_count数据框中,第一列为IP,第二列为浏览次数

68 IP_count = pd.DataFrame({'IP':IP,'count':count})

69 # 3.3筛选出浏览网址在n次以上的IP集合

70 n = 2

71 index = IP_count.loc[:,'count']>n

72 IP_index = IP_count.loc[index,'IP']

73

74

75

76 # 代码11-13

77

78 # 划分IP集合为训练集和测试集

79 index_tr = sample(range(0,len(IP_index)),int(len(IP_index)*0.8)) # 或者np.random.sample

80 index_te = [i for i in range(0,len(IP_index)) if i not in index_tr]

81 IP_tr = IP_index[index_tr]

82 IP_te = IP_index[index_te]

83 # 将对应数据集划分为训练集和测试集

84 index_tr = [i in list(IP_tr) for i in info3.loc[:,'realIP']]

85 index_te = [i in list(IP_te) for i in info3.loc[:,'realIP']]

86 data_tr = info3.loc[index_tr,:]

87 data_te = info3.loc[index_te,:]

88 print(len(data_tr))

89 IP_tr = data_tr.iloc[:,0] # 训练集IP

90 url_tr = data_tr.iloc[:,1] # 训练集网址

91 IP_tr = list(set(IP_tr)) # 去重处理

92 url_tr = list(set(url_tr)) # 去重处理

93 len(url_tr)

94

95 import pandas as pd

96 # 利用训练集数据构建模型

97 UI_matrix_tr = pd.DataFrame(0,index=IP_tr,columns=url_tr)

98 # 求用户-物品矩阵

99 for i in data_tr.index:

100 UI_matrix_tr.loc[data_tr.loc[i,'realIP'],data_tr.loc[i,'fullURL']] = 1

101 sum(UI_matrix_tr.sum(axis=1))

102

103 # 求物品相似度矩阵(因计算量较大,需要耗费的时间较久)

104 Item_matrix_tr = pd.DataFrame(0,index=url_tr,columns=url_tr)

105 for i in Item_matrix_tr.index:

106 for j in Item_matrix_tr.index:

107 a = sum(UI_matrix_tr.loc[:,[i,j]].sum(axis=1)==2)

108 b = sum(UI_matrix_tr.loc[:,[i,j]].sum(axis=1)!=0)

109 Item_matrix_tr.loc[i,j] = a/b

110

111 # 将物品相似度矩阵对角线处理为零

112 for i in Item_matrix_tr.index:

113 Item_matrix_tr.loc[i,i]=0

114

115 # 利用测试集数据对模型评价

116 IP_te = data_te.iloc[:,0]

117 url_te = data_te.iloc[:,1]

118 IP_te = list(set(IP_te))

119 url_te = list(set(url_te))

120

121 # 测试集数据用户物品矩阵

122 UI_matrix_te = pd.DataFrame(0,index=IP_te,columns=url_te)

123 for i in data_te.index:

124 UI_matrix_te.loc[data_te.loc[i,'realIP'],data_te.loc[i,'fullURL']] = 1

125

126 # 对测试集IP进行推荐

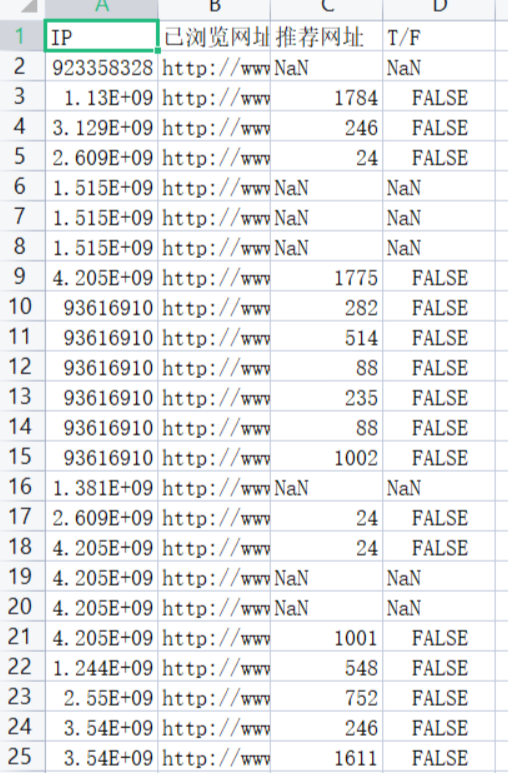

127 Res = pd.DataFrame('NaN',index=data_te.index,

128 columns=['IP','已浏览网址','推荐网址','T/F'])

129 Res.loc[:,'IP']=list(data_te.iloc[:,0])

130 Res.loc[:,'已浏览网址']=list(data_te.iloc[:,1])

131

132 # 开始推荐

133 for i in Res.index:

134 if Res.loc[i,'已浏览网址'] in list(Item_matrix_tr.index):

135 Res.loc[i,'推荐网址'] = Item_matrix_tr.loc[Res.loc[i,'已浏览网址'],

136 :].argmax()

137 if Res.loc[i,'推荐网址'] in url_te:

138 Res.loc[i,'T/F']=UI_matrix_te.loc[Res.loc[i,'IP'],

139 Res.loc[i,'推荐网址']]==1

140 else:

141 Res.loc[i,'T/F'] = False

142

143 # 保存推荐结果

144 Res.to_csv('C:\Users\86184\Desktop\文件集\data\Res.csv',index=False,encoding='utf8')

![]()

1 # 代码11-15

2

3 import pandas as pd

4 # 读取保存的推荐结果

5 Res = pd.read_csv('C:\Users\86184\Desktop\文件集\data\Res.csv',keep_default_na=False, encoding='utf8')

6

7 # 计算推荐准确率

8 Pre = round(sum(Res.loc[:,'T/F']=='False') / (len(Res.index)-sum(Res.loc[:,'T/F']=='NaN')), 3)

9

10 print(Pre)

11

12 # 计算推荐召回率

13 Rec = round(sum(Res.loc[:,'T/F']=='False') / (sum(Res.loc[:,'T/F']=='False')+sum(Res.loc[:,'T/F']=='NaN')), 3)

14

15 print(Rec)

16

17 # 计算F1指标

18 F1 = round(2*Pre*Rec/(Pre+Rec),3)

19 print(F1)

runfile('C:\Users\86184\Desktop\文件集\data\model_dianshan.py', wdir='C:\Users\86184\Desktop\文件集\data')

1.0

0.427

0.598

浙公网安备 33010602011771号

浙公网安备 33010602011771号