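Deploying k8s with kubeadm

1: Introduction and deployment planning:

Cloud native ecosystem:

http://dockone.io/article/3006

Latest CNCF landscape:

https://landscape.cncf.io/

Overview of the main CNCF cloud native projects: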

https://www.kubernetes.org.cn/5482.html

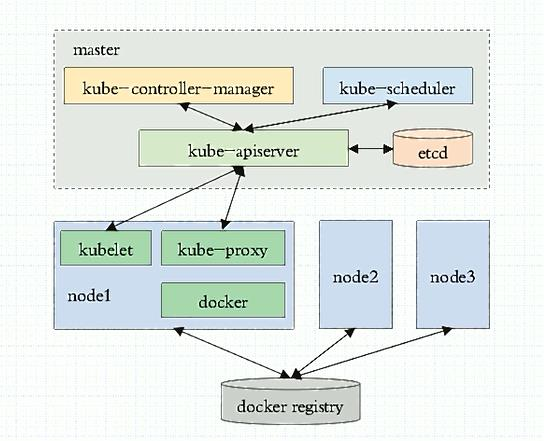

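Kubernetes design architecture:

https://www.kubernetes.org.cn/kubernetes设计架构

Core advantages of K8s:

Containers are created and deleted automatically from YAML files

Workloads scale out horizontally and elastically more quickly

Newly scaled-out containers are discovered dynamically and made available to users automatically

Application code upgrades and rollbacks are simpler and faster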

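1.1: k8s components:

https://k8smeetup.github.io/docs/admin/kube-apiserver/

kube-apiserver: The Kubernetes API server validates and configures data for API objects, including pods, services, replicationcontrollers and others. The API server provides REST operations and the frontend to the cluster's shared state, through which all other components interact.

https://k8smeetup.github.io/docs/admin/kube-scheduler/

kube-scheduler is a policy-rich, topology-aware, workload-specific component that has a large impact on cluster availability, performance and capacity. The scheduler needs to take into account individual and collective resource requirements, quality of service requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, deadlines and so on. Workload-specific requirements can be exposed through the API where necessary.

https://k8smeetup.github.io/docs/admin/kube-controller-manager/

kube-controller-manager: The Controller Manager is the management and control center inside the cluster. It manages Nodes, Pod replicas, service endpoints (Endpoint), namespaces (Namespace), service accounts (ServiceAccount) and resource quotas (ResourceQuota). When a Node goes down unexpectedly, the Controller Manager detects it promptly and runs the automated repair flow, so that the cluster stays in its expected working state.

https://k8smeetup.github.io/docs/admin/kube-proxy/

kube-proxy: The Kubernetes network proxy runs on each node. It reflects the services defined in the Kubernetes API on that node and can do simple TCP and UDP stream forwarding, or round-robin TCP and UDP forwarding, across a set of backends. The user must create a service through the apiserver API to configure the proxy. In other words, kube-proxy implements access to Kubernetes services by maintaining network rules on the host and performing connection forwarding.

https://k8smeetup.github.io/docs/admin/kubelet/

kubelet: the primary node agent. It watches the pods assigned to its node and does the following:

Reports the status of the node to the master

Accepts instructions and creates docker containers in Pods

Prepares the data volumes that Pods need

Returns the running status of pods

Runs container health checks on the node

https://github.com/etcd-io/etcd

etcd:

etcd, developed by CoreOS, is the key-value store that Kubernetes currently uses by default. It holds all cluster data and supports distributed clustering. In production, etcd data needs a regular backup mechanism.

https://kubernetes.io/zh/docs/concepts/overview/components/ # component overview for newer versions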

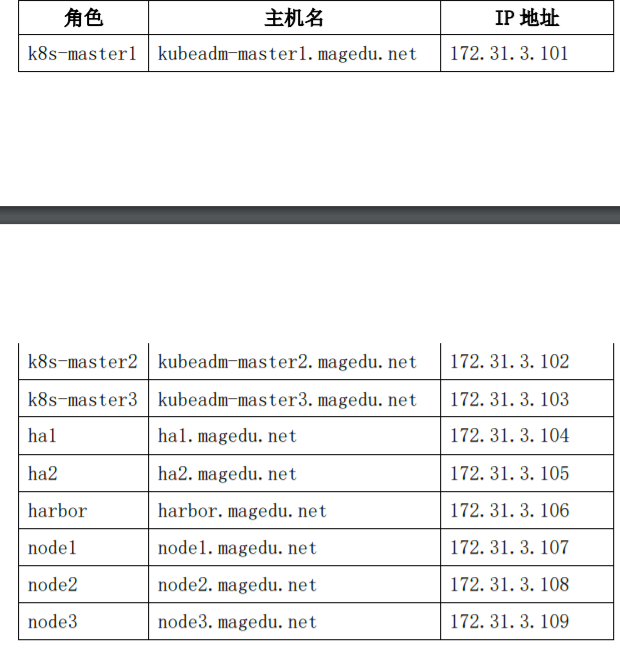

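1.2: Deployment plan diagram:

1.3: Installation methods:

1.3.1: Deployment tools:

Installation can be done with batch deployment tools (such as ansible or saltstack), manually from binaries, with kubeadm, or with apt-get/yum. The services run as daemons on the host and are started with service scripts, similar to Nginx.

1.3.2: kubeadm:

https://kubernetes.io/zh/docs/setup/independent/create-cluster-kubeadm/ # beta stage.

Automated installation with kubeadm, the deployment tool provided by the k8s project. docker and the other components must be installed on the master and node machines first; initialization then runs both the control plane services and the node services as pods.

1.3.3: kubeadm overview:

https://kubernetes.io/zh/docs/reference/setup-tools/kubeadm/kubeadm/

kubeadm design for v1.10:

https://github.com/kubernetes/kubeadm/blob/master/docs/design/design_v1.10.md

1.3.4: Installation notes:

Note: disable swap, selinux and iptables, and tune kernel parameters, e.g. net.ipv4.ip_forward = 1

Linux ip_forward packet forwarding: https://www.jianshu.com/p/134eeae69281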

vim /etc/sysctl.conf

net.ipv4.ip_forward = 1

sysctl -p

echo '* - nofile 65535' >> /etc/security/limits.conf

ulimit -SHn 65535

swapoff -a

vim /etc/fstab

# /swap
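The settings above can be checked before kubeadm is run. The following is a minimal sketch and not part of the original procedure: the function names `swap_is_off` and `ip_forward_is_on` are made up for illustration, and the file paths are parameters (defaulting to the usual /proc locations) so that the checks can be tried against sample files.

```shell
#!/bin/bash
# Pre-flight sketch: is swap off and is IPv4 forwarding on?
# Function names are illustrative, not part of kubeadm.

# Succeeds when the swaps table (default /proc/swaps) lists no device.
# The first line of /proc/swaps is a header, so only later lines count.
swap_is_off() {
    awk 'NR > 1 && NF { n++ } END { exit (n > 0) }' "${1:-/proc/swaps}"
}

# Succeeds when the ip_forward flag file contains 1.
ip_forward_is_on() {
    [ "$(cat "${1:-/proc/sys/net/ipv4/ip_forward}")" = "1" ]
}
```

A possible use on a node is `swap_is_off && ip_forward_is_on && echo "ready for kubeadm"`.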

1.4:kubernetes 部署过程:

具体步骤:

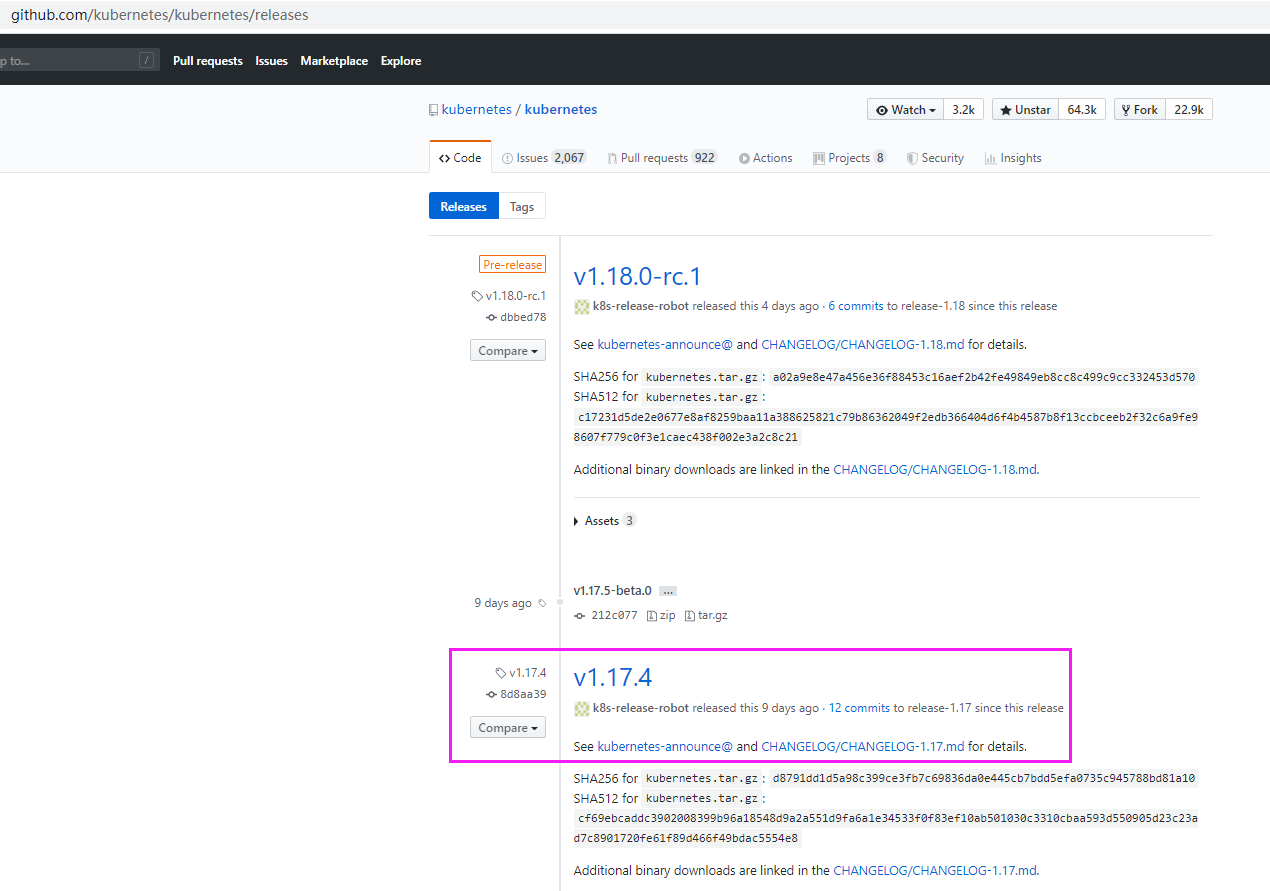

当前部署版本为当前次新版本,因为后面需要使用 kubeadm 做 kubernetes 版本升级演示。 目前官方最新版本为 1.17.4,因此本次先以 1.17.3 版本 kubernetes 为例。

1、 基础环境准备

2、 部署 harbor 及 haproxy 高可用反向代理

3、在所有 master 安装指定版本的 kubeadm 、kubelet、kubectl、docker

4、在所有 node 节点安装指定版本的 kubeadm 、kubelet、docker,在 node 节点 kubectl 为 可选安装,看是否需要在 node 执行 kubectl 命令进行集群管理及 pod 管理等操作。 5、master 节点运行 kubeadm init 初始化命令

6、验证 master 节点状态

7、在 node 节点使用 kubeadm 命令将自己加入 k8s master(需要使用 master 生成 token 认 证)

8、验证 node 节点状态

9、创建 pod 并测试网络通信

10、部署 web 服务 Dashboard

11、k8s 集群升级

1.4.1:基础环境准备:

服务器环境:

最小化安装基础系统,并关闭防火墙 selinux 和 swap,更新软件源、时间同步、安装常用命 令,重启后验证基础配置

1.4.2:harbor 及反向代理:

1.4.2.1:keepalived:

# apt update

# apt install keepalived

root@ha1:~# find / -name "keepalived.conf*"

/var/lib/dpkg/info/keepalived.conffiles

/usr/share/man/man5/keepalived.conf.5.gz

/usr/share/doc/keepalived/samples/keepalived.conf.vrrp

# cp /usr/share/doc/keepalived/samples/keepalived.conf.vrrp /etc/keepalived/keepalived.conf

root@ha1:~# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_instance VI_1 {

state MASTER

interface ens33

garp_master_delay 10

smtp_alert

virtual_router_id 56

priority 150

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.31.3.248 dev ens33 label ens33:1

}

}

root@ha2:~# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

garp_master_delay 10

smtp_alert

virtual_router_id 56

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.31.3.248 dev ens33 label ens33:1

}

}

# systemctl restart keepalived.service

ip a
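After keepalived restarts, `ip a` should show the VIP on the MASTER node only. A small sketch of that check follows; the function name `holds_vip` is an assumption for illustration, and it parses the one-line-per-address output of `ip -o -4 addr show` supplied on stdin.

```shell
#!/bin/bash
# Succeeds when the given IPv4 address appears in `ip -o -4 addr show`
# output read from stdin. In that format field 3 is "inet" and field 4
# is "address/prefix".
holds_vip() {
    awk -v vip="$1" '
        $3 == "inet" { split($4, a, "/"); if (a[1] == vip) found = 1 }
        END { exit !found }'
}

# Example on ha1 or ha2:
#   ip -o -4 addr show | holds_vip 172.31.3.248 && echo "this node holds the VIP"
```

The address is compared exactly after stripping the prefix length, so 172.31.3.24 would not match 172.31.3.248.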

1.4.2.2:haproxy:

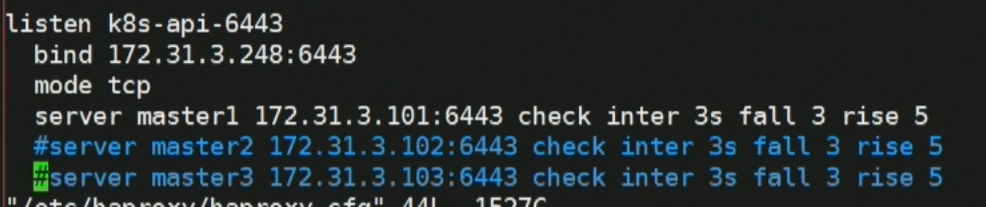

# vim /etc/haproxy/haproxy.cfg

listen k8s-api-6443

bind 172.31.3.248:6443

mode tcp

server master1 172.31.3.101:6443 check inter 3s fall 3 rise 5

server master2 172.31.3.102:6443 check inter 3s fall 3 rise 5

server master3 172.31.3.103:6443 check inter 3s fall 3 rise 5

1.4.2.3:harbor:

root@habor:~# bash docker-install.sh

apt install docker-compose

harbor-offline-installer-v1.7.6.tgz

root@habor:/usr/local/src/harbor# vim harbor.cfg

hostname = harbor.linux39.com

harbor_admin_password = 123456

./install.sh

Edit the hosts file on the Windows client (add an entry for harbor.linux39.com)

1.4.3: Install kubeadm and related components:

Install kubeadm, kubelet, kubectl, docker and related software on the master and node machines.

1.4.3.1: Install docker on all masters and nodes:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.17.md

downloads-for-v1174 # install a validated docker version

Install docker 19.03

# Install the required system tools

# sudo apt-get update

# apt-get -y install apt-transport-https ca-certificates curl software-properties-common

Install the GPG key

# curl -fsSL http://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add -

Add the package source

# sudo add-apt-repository "deb [arch=amd64] http://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"

Update the package index

# apt-get -y update

List the installable Docker versions

# apt-cache madison docker-ce docker-ce-cli

Install and start docker 19.03.8:

# apt install docker-ce=5:19.03.8~3-0~ubuntu-bionic docker-ce-cli=5:19.03.8~3-0~ubuntu-bionic -y

# systemctl start docker && systemctl enable docker

Verify the docker version:

# docker version

1.4.3.2: Configure the docker registry mirror on the master nodes:

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://rb55hpi7.mirror.aliyuncs.com"]

}

EOF

# sudo systemctl daemon-reload && sudo systemctl restart docker

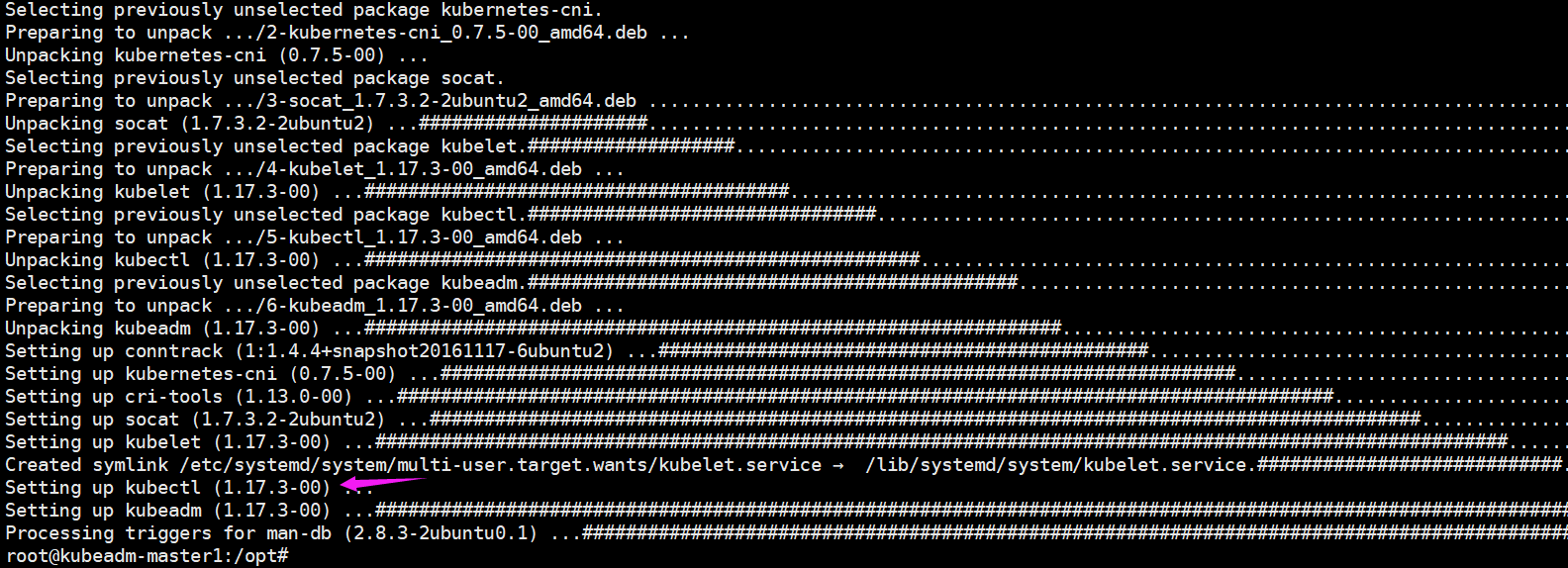

1.4.3.3: Install kubelet, kubeadm and kubectl on all nodes (masters and nodes):

https://developer.aliyun.com/mirror/kubernetes?spm=a2c6h.13651102.0.0.3e221b11jNU2W5

apt-get update && apt-get install -y apt-transport-https

curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

cat <<EOF >/etc/apt/sources.list.d/kubernetes.list

deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main

EOF

#手动输入 EOF

apt-get update

# kubeadm和k8s版本对应

apt-cache madison kubeadm

apt install kubeadm=1.17.2-00 kubectl=1.17.2-00 kubelet=1.17.2-00 -y

# node节点可以不装kubectl,如果不需要在node上管理k8s的话

启动并验证 kubelet

# systemctl start kubelet && systemctl enable kubelet && systemctl status kubelet

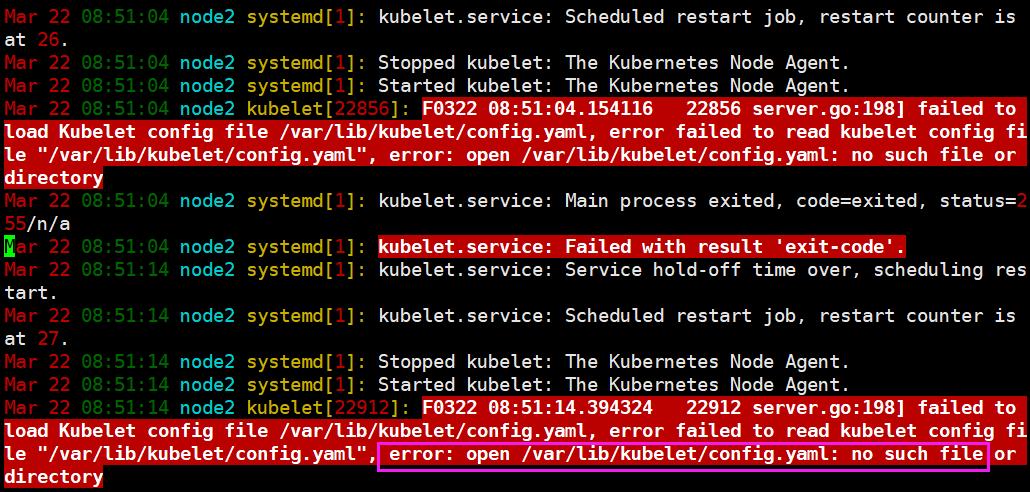

1.4.3.3.3:验证 master 节点 kubelet 服务:

目前启动 kbelet 以下报错:

1.4.5:master 节点运行 kubeadm init 初始化命令:

在三台 master 中任意一台 master 进行集群初始化,而且集群初始化只需要初始化一次。

1.4.5.1:kubeadm 命令使用:

--help

Available Commands:

alpha #kubeadm commands that are still in the testing stage

completion #bash command completion, requires bash-completion

#mkdir /data/scripts -p

#kubeadm completion bash > /data/scripts/kubeadm_completion.sh

#source /data/scripts/kubeadm_completion.sh

#vim /etc/profile

source /data/scripts/kubeadm_completion.sh

config #manage the configuration of a kubeadm cluster, which is kept in a ConfigMap in the cluster

#kubeadm config print init-defaults

help Help about any command

init #bootstrap a Kubernetes master node

join #join a node to an existing k8s master

reset #revert the changes made to the system by kubeadm init or kubeadm join

token #manage tokens

upgrade #upgrade the k8s version

version #show version information

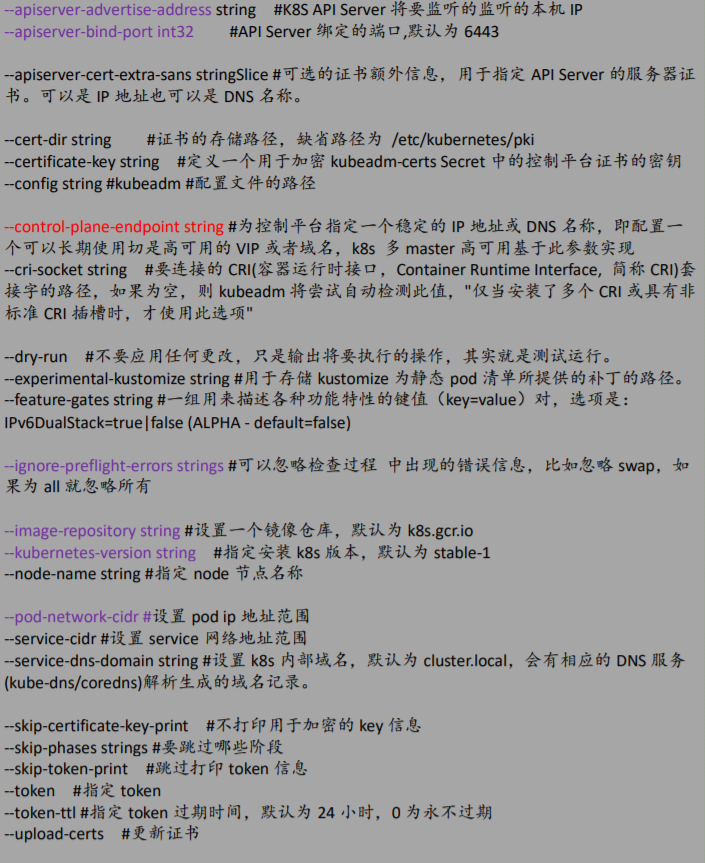

1.4.5.2:kubeadm init 命令简介:

命令使用:

https://kubernetes.io/zh/docs/reference/setup-tools/kubeadm/kubeadm/

集群初始化:

https://kubernetes.io/zh/docs/reference/setup-tools/kubeadm/kubeadm-init/

root@docker-node1:~# kubeadm init --help

1.4.5.3:验证当前 kubeadm 版本:

# kubeadm version #查看当前 kubeadm 版本

# kubeadm version

kubeadm version: &version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.2", GitCommit:"06ad960bfd03b39c8310aaf92d1e7c12ce618213", GitTreeState:"clean", BuildDate:"2020-02-11T18:12:12Z", GoVersion:"go1.13.6", Compiler:"gc", Platform:"linux/amd64"

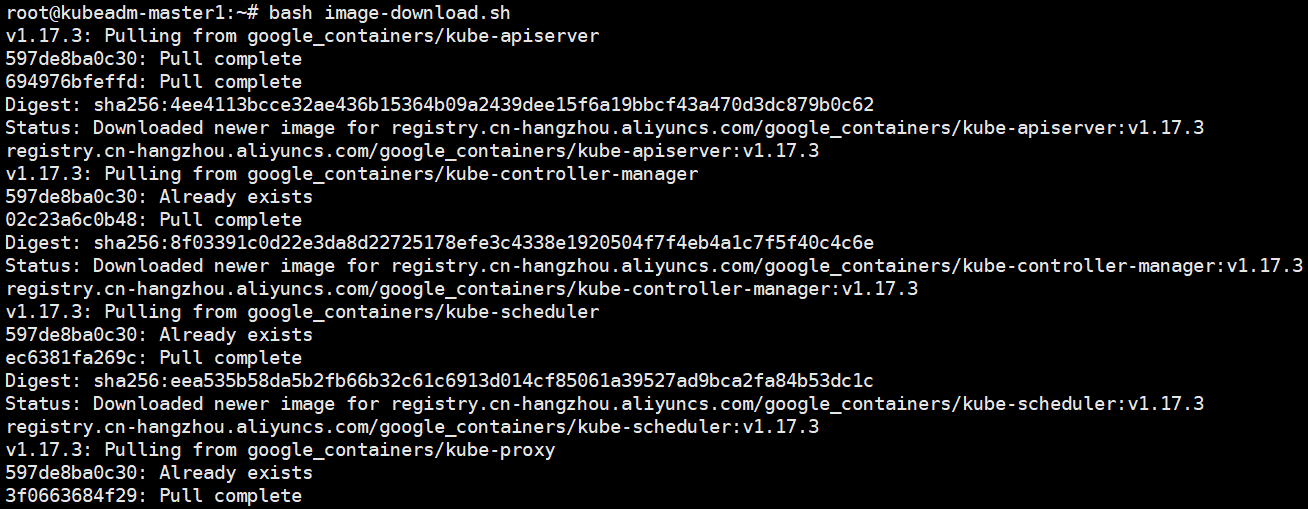

1.4.5.4:准备镜像:

查看安装指定版本 k8s 需要的镜像有哪些

# kubeadm config images list --kubernetes-version v1.17.2

k8s.gcr.io/kube-apiserver:v1.17.2

k8s.gcr.io/kube-controller-manager:v1.17.2

k8s.gcr.io/kube-scheduler:v1.17.2

k8s.gcr.io/kube-proxy:v1.17.2

k8s.gcr.io/pause:3.1

k8s.gcr.io/etcd:3.4.3-0

k8s.gcr.io/coredns:1.6.5

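1.4.5.5: Download the images on the master nodes:

Downloading the images on the master nodes in advance is recommended, because it shortens the wait during installation. The images default to Google's registry, which cannot be reached directly from mainland China, but they can be pulled ahead of time from the Aliyun registry. This avoids a k8s deployment failure caused by image pull errors later on.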

#!/bin/bash

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.17.2

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.17.2

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.17.2

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.17.2

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.6.5
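The pull list above mirrors the output of `kubeadm config images list` one image at a time. As a sketch of how the mapping could be derived instead of typed by hand, the helper below rewrites each image reference to the Aliyun repository used in this document. The function name `to_mirror` and the `MIRROR` variable are illustrative assumptions.

```shell
#!/bin/bash
# Repository prefix taken from the pull commands in this document.
MIRROR="registry.cn-hangzhou.aliyuncs.com/google_containers"

# Reads image references (one per line) on stdin and prints the same
# image name and tag under the mirror repository: everything up to the
# last "/" is replaced by $MIRROR.
to_mirror() {
    local image
    while IFS= read -r image; do
        if [ -n "$image" ]; then
            echo "${MIRROR}/${image##*/}"
        fi
    done
}

# Example (assumes kubeadm and docker are installed on the host):
#   kubeadm config images list --kubernetes-version v1.17.2 | to_mirror | xargs -n 1 docker pull
```

Because kubeadm is later pointed at the same repository with --image-repository, the pulled images need no re-tagging.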

1.4.5.6: Single-master initialization: (skipped; this document demonstrates high availability, so run the highly available initialization in 1.4.5.7 below)

# kubeadm init --apiserver-advertise-address=192.168.7.101 --apiserver-bind-port=6443 --kubernetes-version=v1.17.3 --pod-network-cidr=10.10.0.0/16 --service-cidr=10.20.0.0/16 --service-dns-domain=linux36.local --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers --ignore-preflight-errors=swap

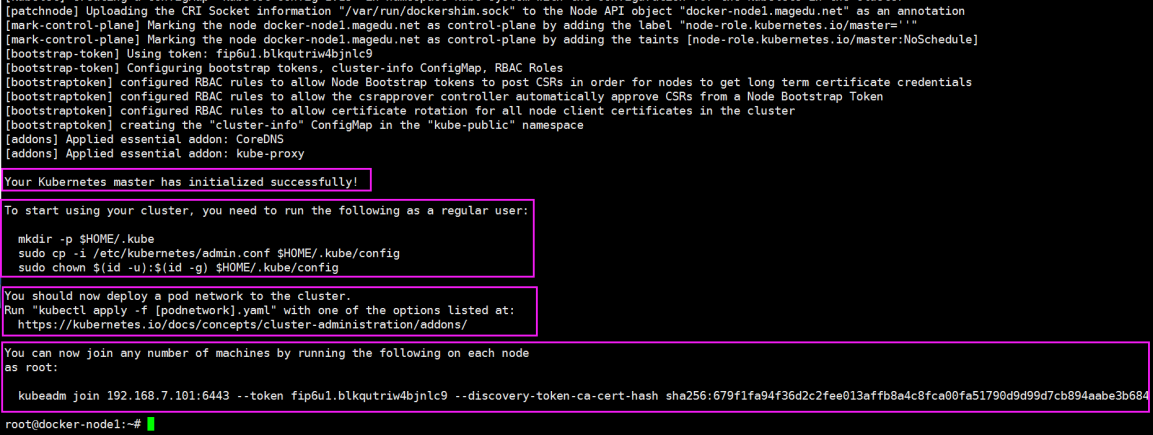

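1.4.5.6.1: Initialization result:

1.4.5.7: Highly available master initialization:

First provide a highly available VIP with keepalived, then make the three k8s masters highly available behind that VIP.

1.4.5.7.1: Initializing the highly available masters from the command line: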

$master1

kubeadm init --apiserver-advertise-address=172.31.3.101 --apiserver-bind-port=6443 --control-plane-endpoint=172.31.3.248 --ignore-preflight-errors=swap --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers/ --kubernetes-version=v1.17.2 --pod-network-cidr=10.10.0.0/16 --service-cidr=192.168.1.0/20 --service-dns-domain=linux39.local

Initialization failed on master1. Switching to master2 succeeded:

root@master2:~# kubeadm init --apiserver-advertise-address=172.31.3.102 --control-plane-endpoint=172.31.3.248 --apiserver-bind-port=6443 --kubernetes-version=v1.17.2 --pod-network-cidr=10.10.0.0/16 --service-cidr=172.26.0.0/16 --service-dns-domain=linux39.local --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers --ignore-preflight-errors=swap

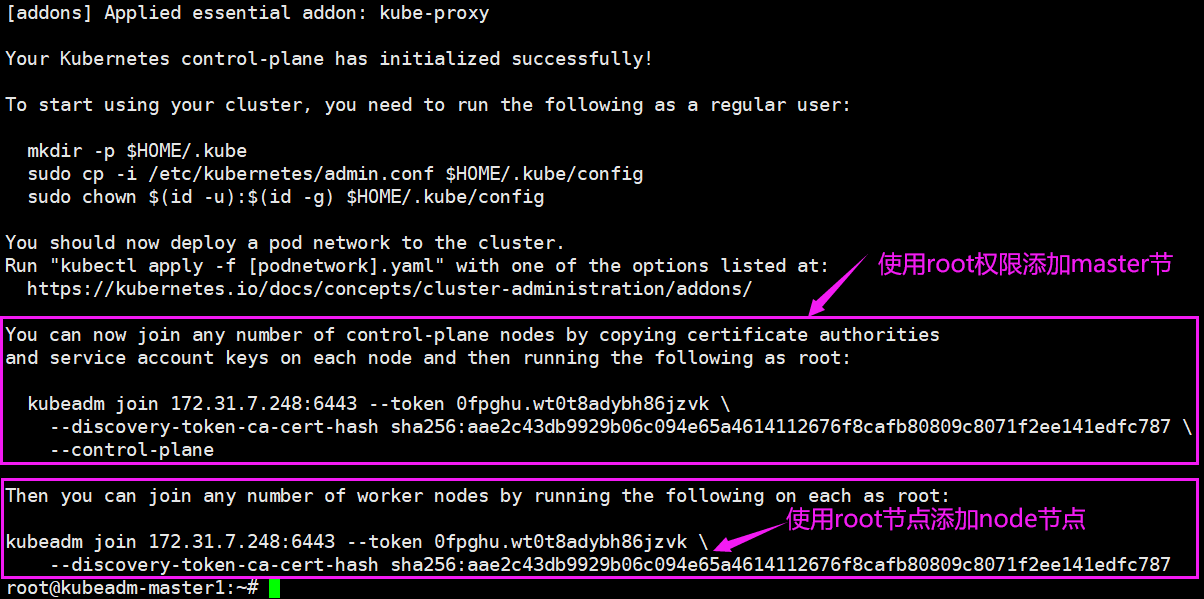

Success

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join 172.31.3.248:6443 --token w17ocw.41tbr749f4va31ir \

--discovery-token-ca-cert-hash sha256:3a75ac6d2f6340113e14c84dec36d0469e2f265796e29b61cffabcd1b5644b26 \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.31.3.248:6443 --token w17ocw.41tbr749f4va31ir \

--discovery-token-ca-cert-hash sha256:3a75ac6d2f6340113e14c84dec36d0469e2f265796e29b61cffabcd1b5644b26

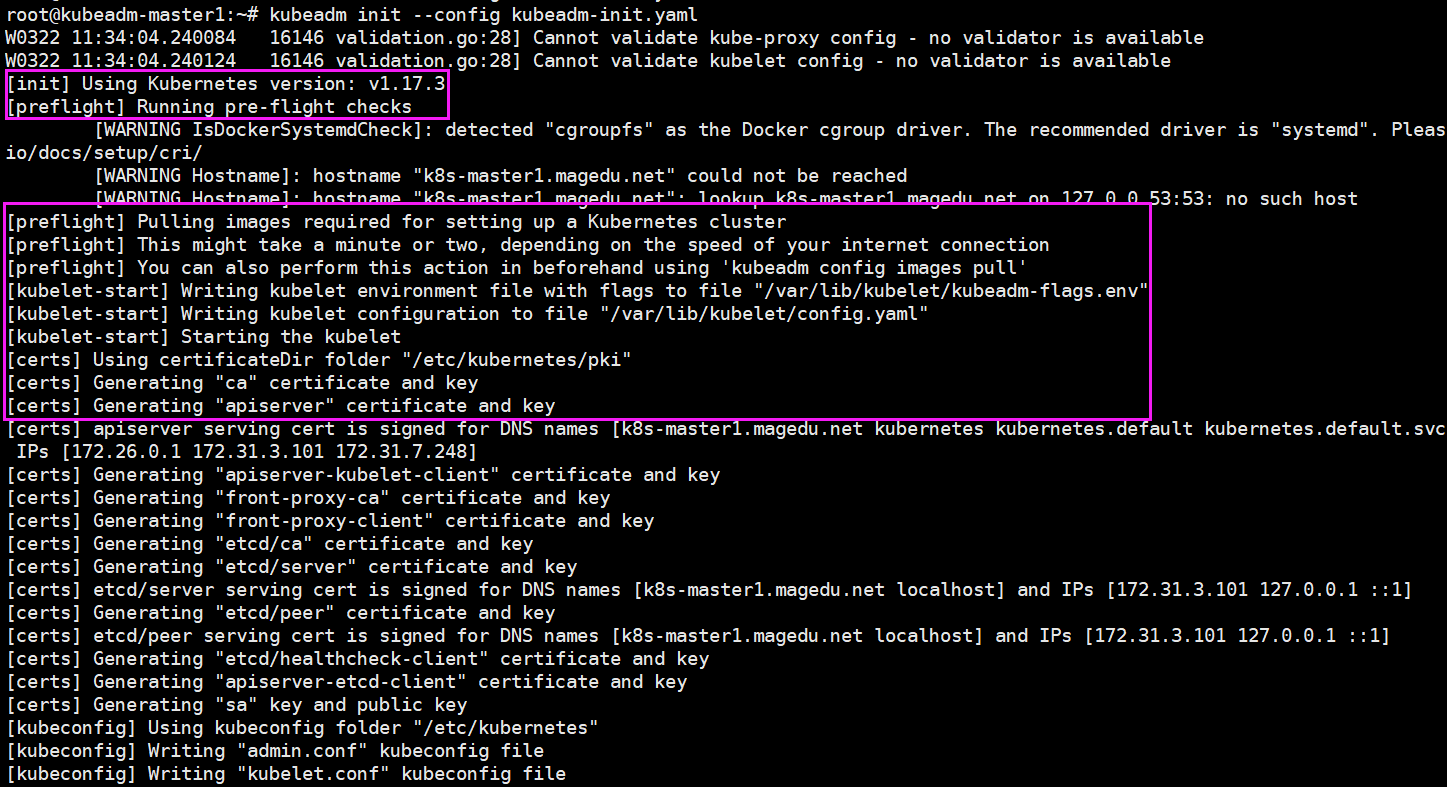

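1.4.5.7.2: Initializing the highly available masters from a file:

(Either method works; run kubeadm reset first and then try this one)

(The rest of this document is based on this method)

# kubeadm config print init-defaults > kubeadm-init.yaml #write the default configuration to a file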

root@master1:~# vim kubeadm-init.yaml

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 48h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 172.31.3.101

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: master1

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controlPlaneEndpoint: 172.31.3.248:6443

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers

kind: ClusterConfiguration

kubernetesVersion: v1.17.2

networking:

  dnsDomain: linux39.local

  podSubnet: 10.10.0.0/16

  serviceSubnet: 192.168.0.0/20

scheduler: {}

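kubeadm init --config kubeadm-init.yaml #initialize the k8s master from the file

Initializing from a file is the recommended method

(The screenshot is wrong; with the configuration above the initialized version is 1.17.2)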

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join 172.31.3.248:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:6c81f84a68c240e6196a05c6e4e16dc608764abdadb2195a38a6019125a0678e \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.31.3.248:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:6c81f84a68c240e6196a05c6e4e16dc608764abdadb2195a38a6019125a0678e

1.4.5.7.3: Configure the kube-config file

The kube-config file contains the kube-apiserver address and the related authentication information

# mkdir -p $HOME/.kube

# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

# sudo chown $(id -u):$(id -g) $HOME/.kube/config

# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master1.magedu.net NotReady master 10m v1.17.3

1.4.5.7.4: On the current master (master2, which initialized successfully), generate the certificate key used to add new control plane nodes:

# kubeadm init phase upload-certs --upload-certs

W0322 11:38:39.512764 18966 validation.go:28] Cannot validate kube-proxy config - no validator is available

W0322 11:38:39.512817 18966 validation.go:28] Cannot validate kubelet config - no validator is available

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

d463abc439854a1f42b0f4c5d811d67a8b1518b9ca675eb3dbd128dcd29b5b72

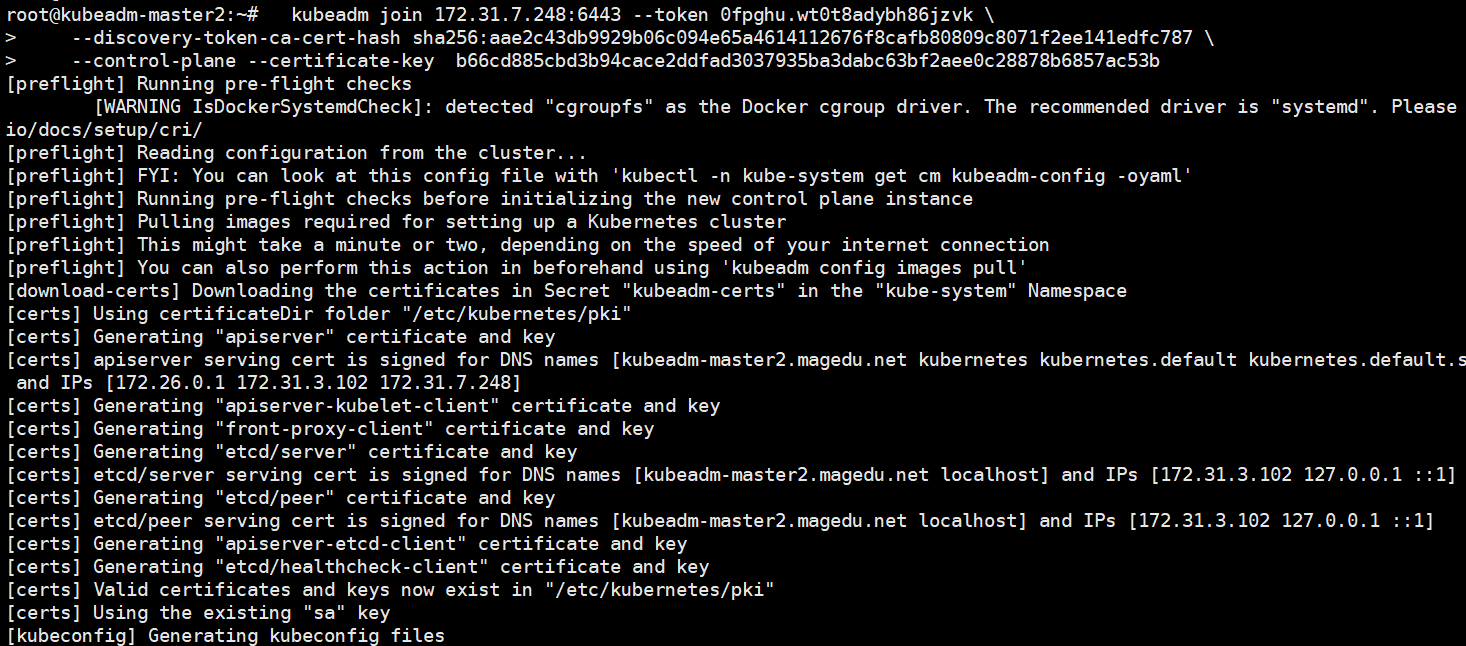

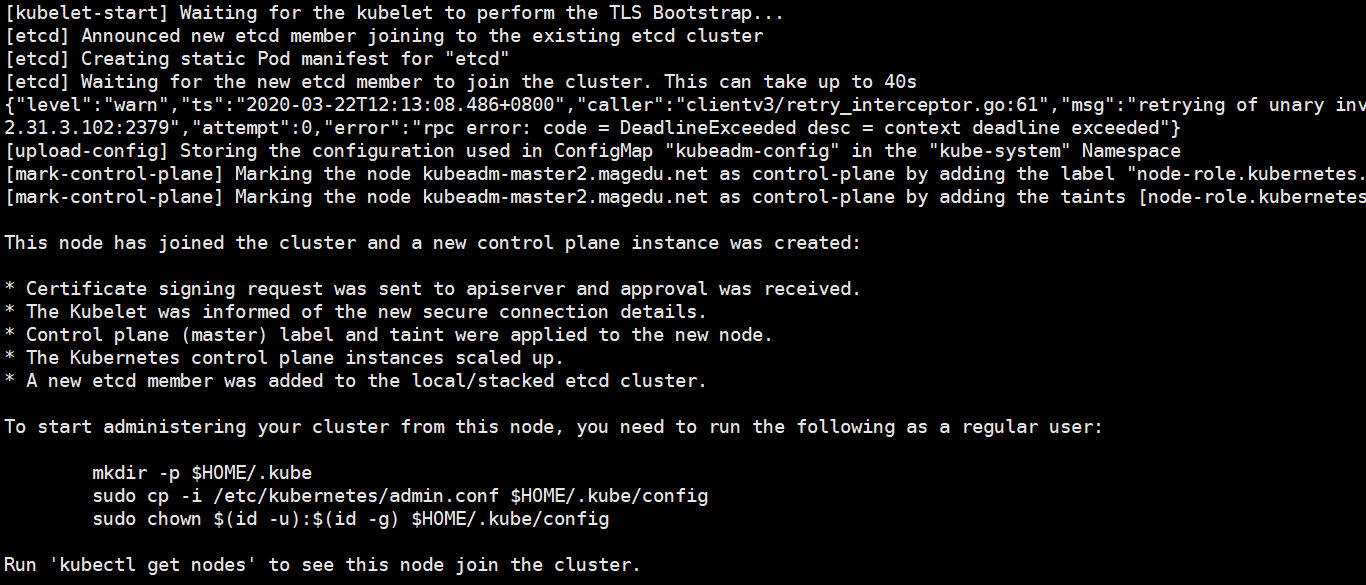

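1.4.5.7.5: Add new master nodes:

Run the following on another master that already has docker, kubeadm and kubelet installed:

(It is best to first remove the backends that are not up yet from haproxy, although it also seems to work without doing so)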

# master3

kubeadm join 172.31.3.248:6443 --token w17ocw.41tbr749f4va31ir \

--discovery-token-ca-cert-hash sha256:3a75ac6d2f6340113e14c84dec36d0469e2f265796e29b61cffabcd1b5644b26 \

--control-plane --certificate-key d463abc439854a1f42b0f4c5d811d67a8b1518b9ca675eb3dbd128dcd29b5b72

Join master1 as well

master1:~# kubeadm reset

kubeadm join 172.31.3.248:6443 --token w17ocw.41tbr749f4va31ir --discovery-token-ca-cert-hash sha256:3a75ac6d2f6340113e14c84dec36d0469e2f265796e29b61cffabcd1b5644b26 --control-plane --certificate-key d463abc439854a1f42b0f4c5d811d67a8b1518b9ca675eb3dbd128dcd29b5b72

root@master2:~# kubectl get node

NAME STATUS ROLES AGE VERSION

master1 STATUS master 50s v1.17.2

master2 NotReady master 10h v1.17.2

master3 NotReady master 6m56s v1.17.2

Install the network add-on

The nodes stay in NotReady status until a network add-on is installed

https://github.com/coreos/flannel

For Kubernetes v1.7+ :

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

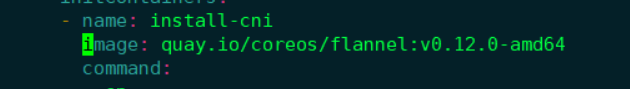

The default network in this file does not match the one used during initialization, so download the file and edit it

--service-cidr=172.26.0.0/16

vi kube-flannel.yml

net-conf.json: |

{

"Network": "10.10.0.0/16",

The Network range must be identical to the pod network (podSubnet in kubeadm-init.yaml)

If they differ, containers cannot ping out and flannel has to be reinstalled
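Since a mismatch only shows up later as broken pod networking, it can help to compare the two values before applying the manifest. The sketch below does that with sed; the function names are hypothetical, and it assumes the `"Network"` key in kube-flannel.yml and the `podSubnet` key in kubeadm-init.yaml each sit on a single line, as they do in the files shown in this document.

```shell
#!/bin/bash
# Prints the "Network" value from the net-conf.json section of a flannel manifest.
flannel_network() {
    sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Prints the podSubnet value from a kubeadm configuration file.
kubeadm_pod_subnet() {
    sed -n 's/^ *podSubnet: *//p' "$1" | head -n 1
}

# Succeeds only when both files name the same, non-empty pod network.
pod_networks_match() {
    local flannel kubeadm
    flannel=$(flannel_network "$1")
    kubeadm=$(kubeadm_pod_subnet "$2")
    [ -n "$flannel" ] && [ "$flannel" = "$kubeadm" ]
}

# Example:
#   pod_networks_match kube-flannel.yml kubeadm-init.yaml || echo "pod network mismatch"
```

The comparison is purely textual, so equivalent CIDRs written differently would be reported as a mismatch.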

root@master1:~# kubectl delete -f kube-flannel.yml

root@master1:~# kubectl get pod -A

Terminating...

root@master1:~# kubectl apply -f kube-flannel.yml

(Applying this file pulls the flannel image; if the image cannot be pulled, change the image address)

flannel image cannot be pulled in k8s: https://www.cnblogs.com/dalianpai/p/12070902.html

root@master2:~# kubectl apply -f kube-flannel.yml

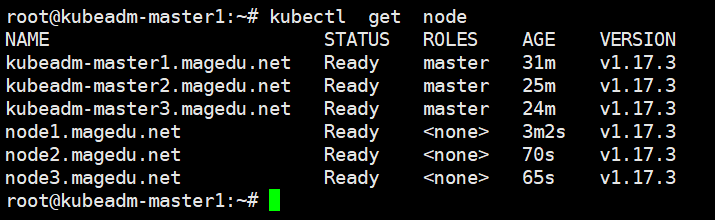

Verify the master node status:

# kubectl get node

NAME STATUS ROLES AGE VERSION

master1 Ready master 26m v1.17.2

master2 Ready master 11h v1.17.2

master3 Ready master 32m v1.17.2

1.4.5.7.6: How kubeadm init creates a k8s cluster:

https://k8smeetup.github.io/docs/reference/setup-tools/kubeadm/kubeadm-init/#init-workflow

1.4.6: Verify master status:

root@master1:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master1 Ready master 3h26m v1.17.2

master2 Ready master 3h10m v1.17.2

master3 Ready master 3h9m v1.17.2

node1 Ready <none> 3h5m v1.17.2

node2 Ready <none> 3h5m v1.17.2

node3 Ready <none> 3h5m v1.17.2

1.4.6.1: Verify the k8s cluster status:

root@master1:~# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

1.4.6.2: Current csr certificate status:

# kubectl get csr

NAME AGE REQUESTOR CONDITION

csr-7bzrk 14m system:bootstrap:0fpghu Approved,Issued

csr-jns87 20m system:node:kubeadm-master1.magedu.net Approved,Issued

csr-vcjdl 14m system:bootstrap:0fpghu Approved,Issued

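1.4.7: Add nodes to the k8s cluster:

Every node that is to join the k8s cluster needs docker, kubeadm and kubelet installed, so the installation steps must be repeated on each of them: configure the apt repository, configure the docker registry mirror, install the packages, and start the kubelet service.

- The join command is the one printed on the master when kubeadm init completes:

kubeadm join 172.31.7.248:6443 --token 0fpghu.wt0t8adybh86jzvk \

--discovery-token-ca-cert-hash sha256:aae2c43db9929b06c094e65a4614112676f8cafb80809c8071f2ee141edfc787

Note: the node joins the cluster automatically, pulls the images and starts flannel, until it finally shows as Ready on the master.

1.4.8: Verify node status:

(Pulling the images can be slow, so allow some time)

/var/log/syslog

flannel image cannot be pulled in k8s: https://www.cnblogs.com/dalianpai/p/12070902.html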

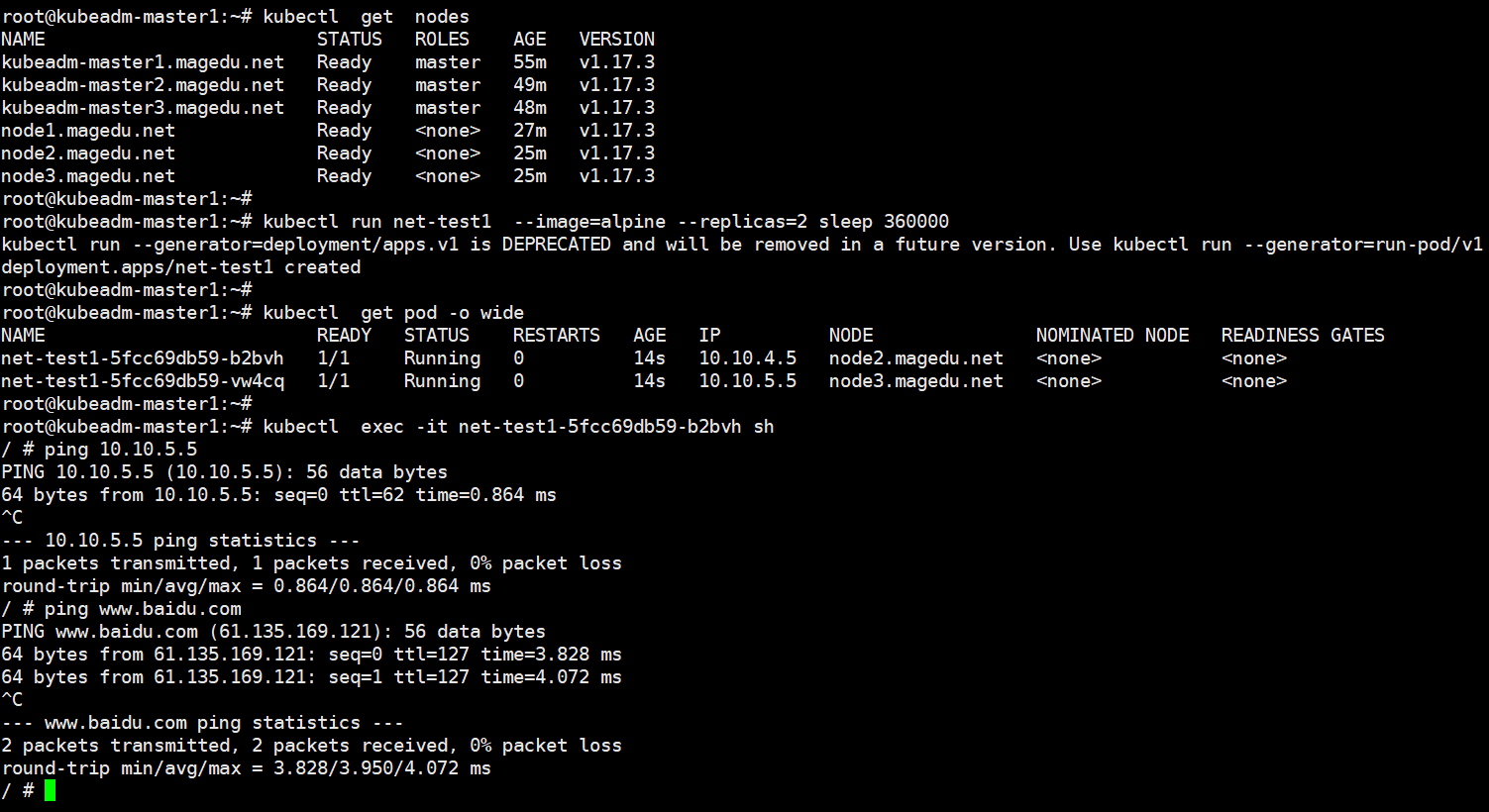

1.4.9:k8s 创建容器并测试网络:

创建测试容器,测试网络连接是否可以通信:

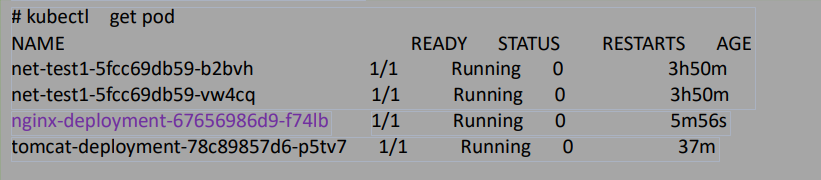

# kubectl run net-test1 --image=alpine --replicas=2 sleep 360000 (运行sleep命令)

重建dns

发现dns有问题,进入容器无法ping域名

root@master1:~# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

default net-test1-5fcc69db59-fzlzg 1/1 Running 1 2d3h

default net-test1-5fcc69db59-l5w6h 1/1 Running 0 4m34s

kube-system coredns-7f9c544f75-2bljh 1/1 Running 0 2d7h

kube-system coredns-7f9c544f75-4vrmb 1/1 Running 0 2d7h

Delete the pods, specifying the namespace

root@master1:~# kubectl delete pod coredns-7f9c544f75-2bljh -n kube-system

pod "coredns-7f9c544f75-2bljh" deleted

root@master1:~# kubectl delete pod coredns-7f9c544f75-4vrmb -n kube-system

pod "coredns-7f9c544f75-4vrmb" deleted

root@master1:~# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

default net-test1-5fcc69db59-fzlzg 1/1 Running 1 2d3h

default net-test1-5fcc69db59-l5w6h 1/1 Running 0 8m25s

kube-system coredns-7f9c544f75-hncbm 1/1 Running 0 38s

kube-system coredns-7f9c544f75-m2dwv 1/1 Running 0 65s

Name resolution works now

root@master1:~# kubectl get pod

NAME READY STATUS RESTARTS AGE

net-test1-5fcc69db59-fzlzg 1/1 Running 1 2d3h

net-test1-5fcc69db59-l5w6h 1/1 Running 0 8m51s

root@master1:~# kubectl exec -it net-test1-5fcc69db59-l5w6h sh

/ # cat /etc/resolv.conf

nameserver 192.168.0.10

search default.svc.linux39.local svc.linux39.local linux39.local

options ndots:5

/ # ping baidu.com

PING baidu.com (39.156.69.79): 56 data bytes

64 bytes from 39.156.69.79: seq=0 ttl=127 time=20.698 ms

(Possibly the network add-on had not come up in time, so the pods started without a working network)

1.4.10: Deploy the dashboard web service:

https://github.com/kubernetes/dashboard

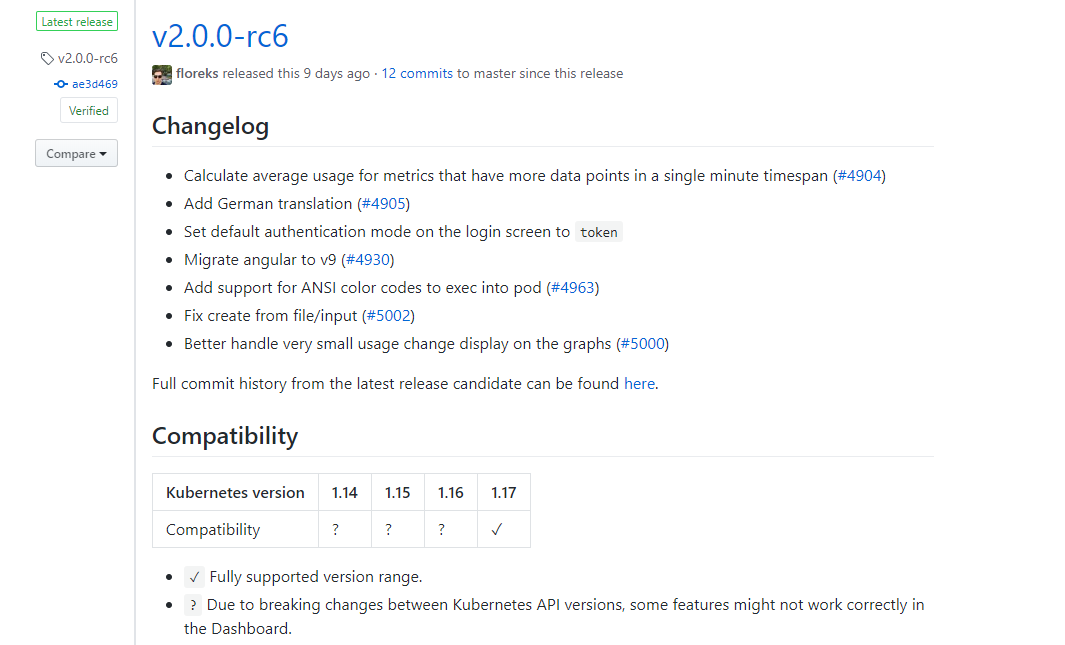

1.4.10.1: Deploy dashboard 2.0.0-rc6:

root@master1:~# vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --insecure-registry harbor.linux39.com

root@master1:~# systemctl daemon-reload

root@master1:~# systemctl restart docker

Add --insecure-registry harbor.linux39.com on the nodes as well

root@master1:~# docker login harbor.linux39.com

Username: admin

Password:

root@master1:~# docker pull kubernetesui/dashboard:v2.0.0-rc6

root@master1:~# docker tag cdc71b5a8a0e harbor.linux39.com/baseimages/dashboard:v2.0.0-rc6

docker push harbor.linux39.com/baseimages/dashboard:v2.0.0-rc6

root@master1:~# docker tag docker.io/kubernetesui/metrics-scraper:v1.0.3 harbor.linux39.com/baseimages/metrics-scraper:v1.0.3

root@master1:~# docker push harbor.linux39.com/baseimages/metrics-scraper:v1.0.3

(metrics-scraper是用来收集一些指标数据的)

文档里有yml文件

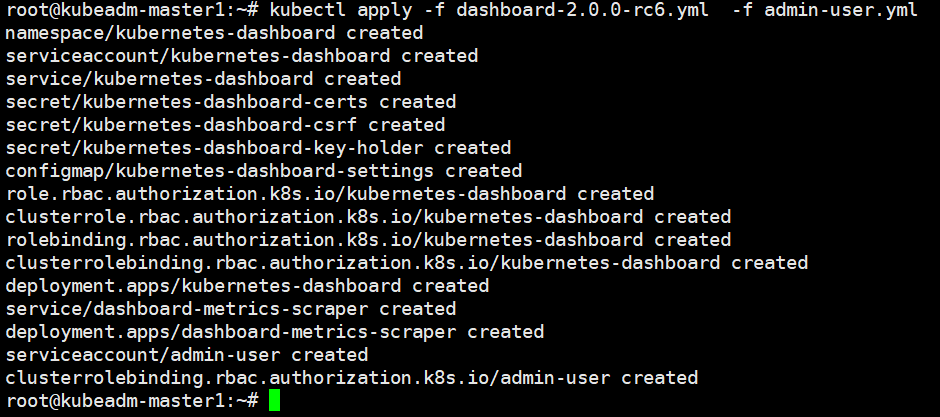

# root@master1:~# kubectl apply -f dash_board-2.0.0-rc6.yml

root@master1:~# kubectl apply -f ad_min-user.yml

[k8s] Steps for installing the kubernetes dashboard: https://www.jianshu.com/p/073577bdec98

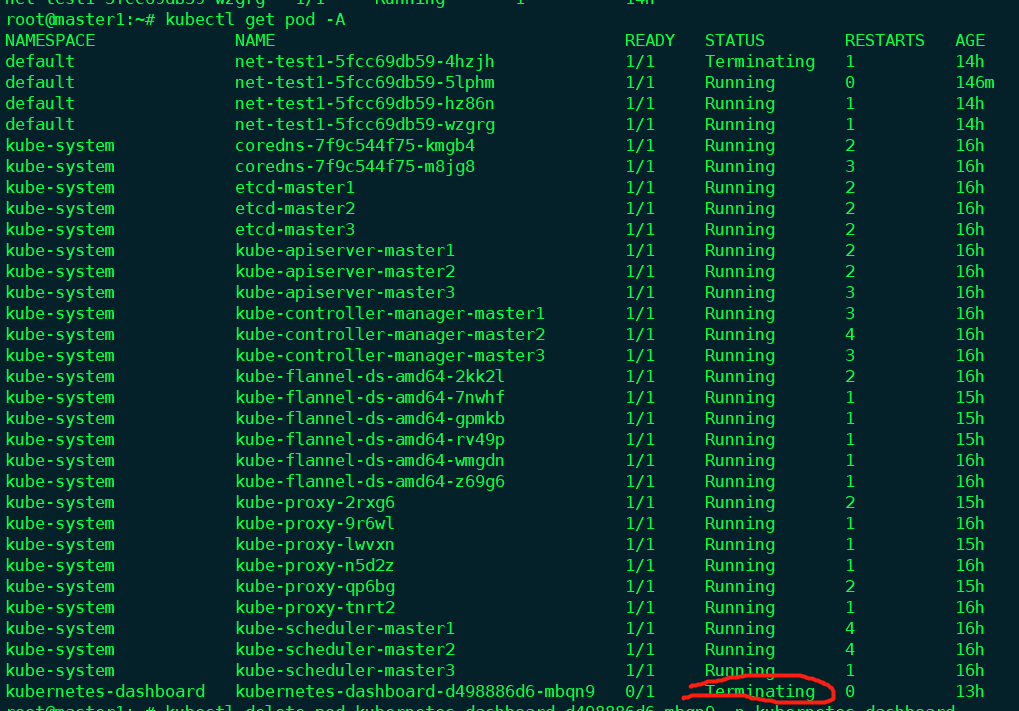

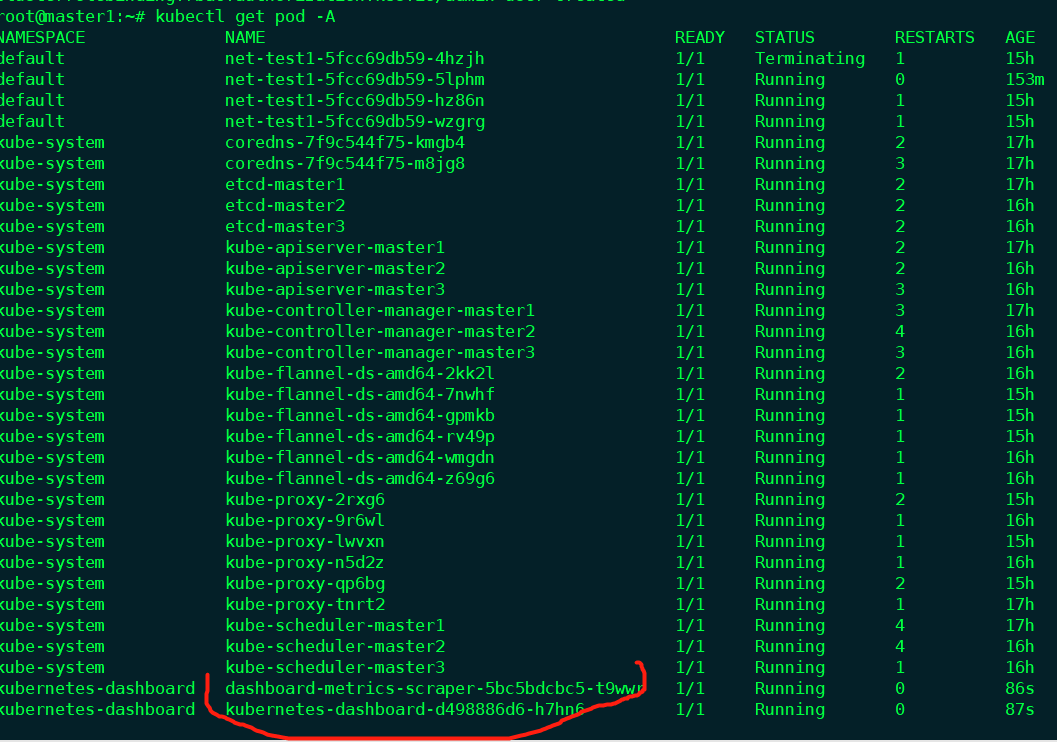

If a pod is stuck in Terminating, delete it

kubectl delete pod kubernetes-dashboard-d498886d6-mbqn9 -n kubernetes-dashboard

If it still cannot be deleted, force the deletion:

kubectl delete pod kubernetes-dashboard-d498886d6-mbqn9 -n kubernetes-dashboard --grace-period=0 --force

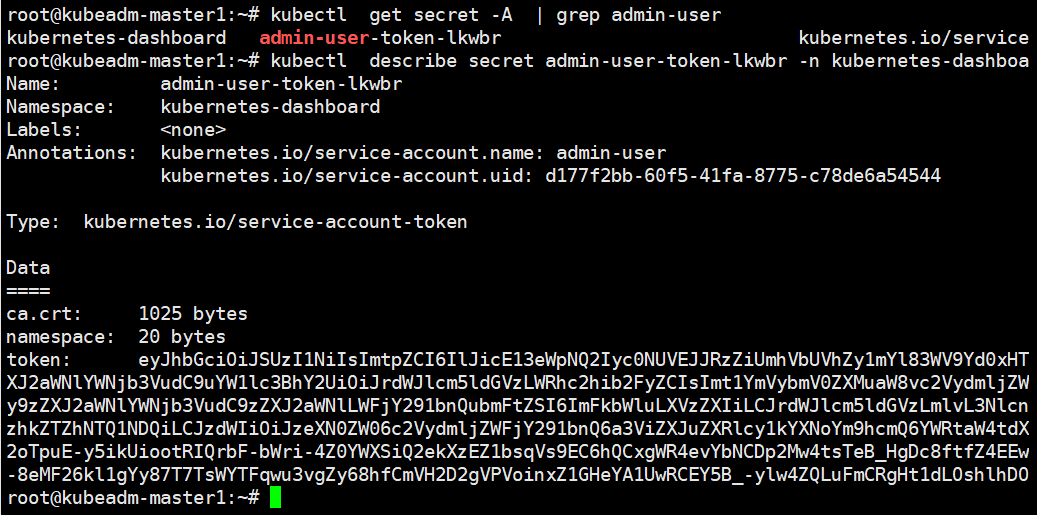

1.4.10.2: Get the login token:

# kubectl get secret -A | grep admin-user

kubernetes-dashboard admin-user-token-lkwbr kubernetes.io/service-account-token 3 3m15s

# kubectl describe secret admin-user-token-lkwbr -n kubernetes-dashboard

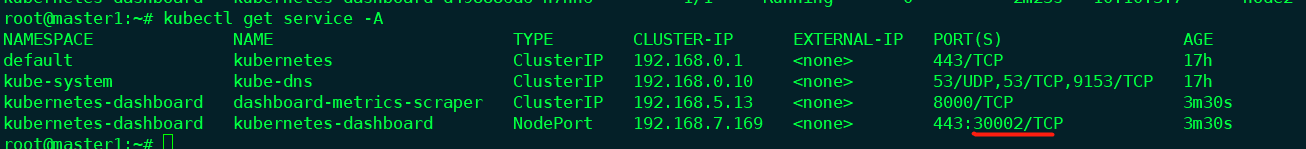

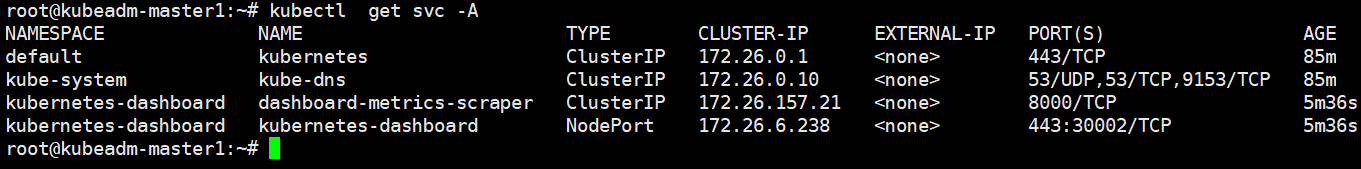

1.4.10.3:验证 NodePort:

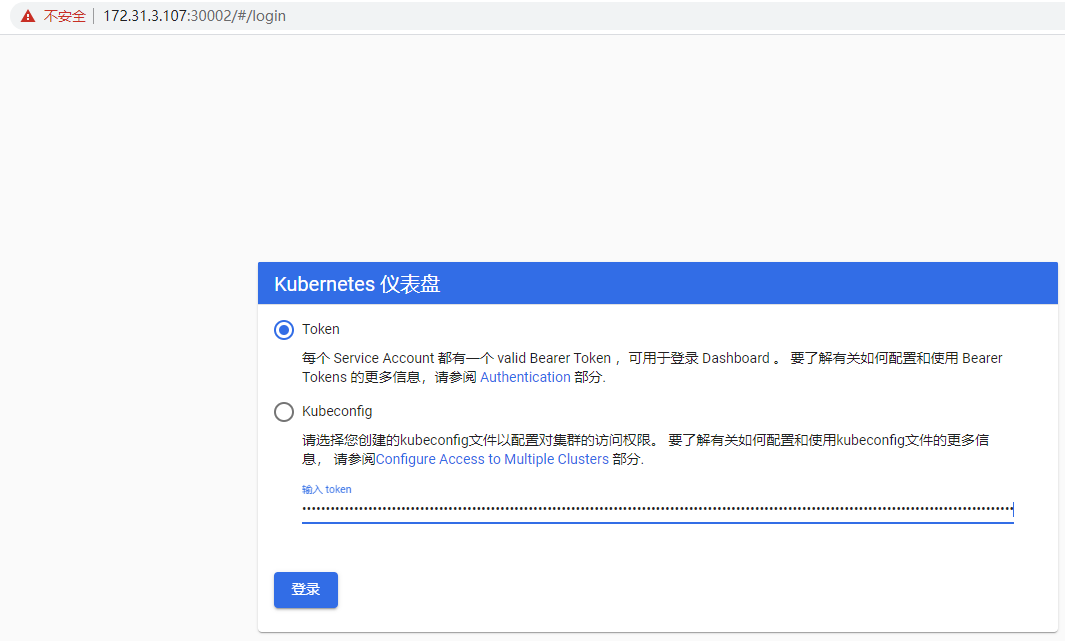

1.4.10.4:登录 dashboard:

(访问哪个node ip都行,因为nodeport端口会在任何node节点上都会监听)

https://172.31.3.107:30002/#/login

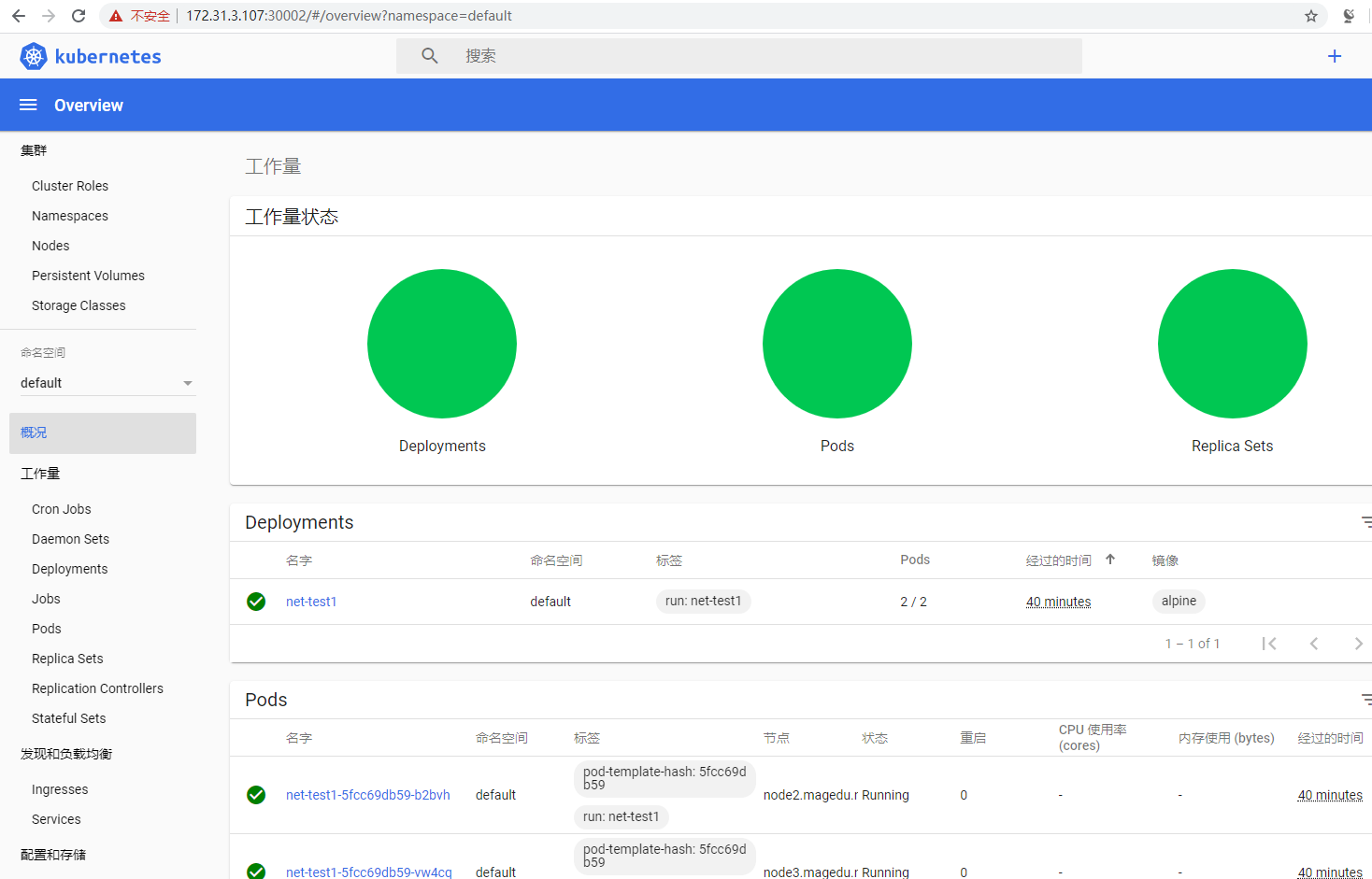

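1.4.10.5: Login successful:

1.4.11: k8s cluster upgrade:

To upgrade a k8s cluster, kubeadm must first be upgraded to the target k8s version. In other words, kubeadm is the "upgrade permit" for k8s.

1.4.11.1: Upgrade the k8s master services:

Run the upgrade on every k8s master. This upgrades the control plane services kube-controller-manager, kube-apiserver, kube-scheduler and kube-proxy.

1.4.11.1.1: Verify the current k8s version: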

# kubeadm version

kubeadm version: &version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.2", GitCommit:"59603c6e503c87169aea6106f57b9f242f64df89", GitTreeState:"clean", BuildDate:"2020-01-18T23:27:49Z", GoVersion:"go1.13.5", Compiler:"gc", Platform:"linux/amd64"}

1.4.11.1.2: Install the specified new kubeadm version on each master:

# apt-cache madison kubeadm #list the available k8s versions

# apt-get install kubeadm=1.17.4-00 #install the new kubeadm version

(run on master1, master2 and master3)

# kubeadm version #verify the kubeadm version

kubeadm version: &version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.4", GitCommit:"8d8aa39598534325ad77120c120a22b3a990b5ea", GitTreeState:"clean", BuildDate:"2020-03-12T21:01:11Z", GoVersion:"go1.13.8", Compiler:"gc", Platform:"linux/amd64"}

1.4.11.1.3: kubeadm upgrade command help:

# kubeadm upgrade --help

Upgrade your cluster smoothly to a newer version with this command

Usage:

kubeadm upgrade [flags]

kubeadm upgrade [command]

Available Commands:

apply Upgrade your Kubernetes cluster to the specified version

diff Show what differences would be applied to existing static pod manifests. See also: kubeadm upgrade apply --dry-run

node Upgrade commands for a node in the cluster

plan Check which versions are available to upgrade to and validate whether your current cluster is upgradeable. To skip the internet check, pass in the optional [version] parameter

1.4.11.1.4:升级计划:

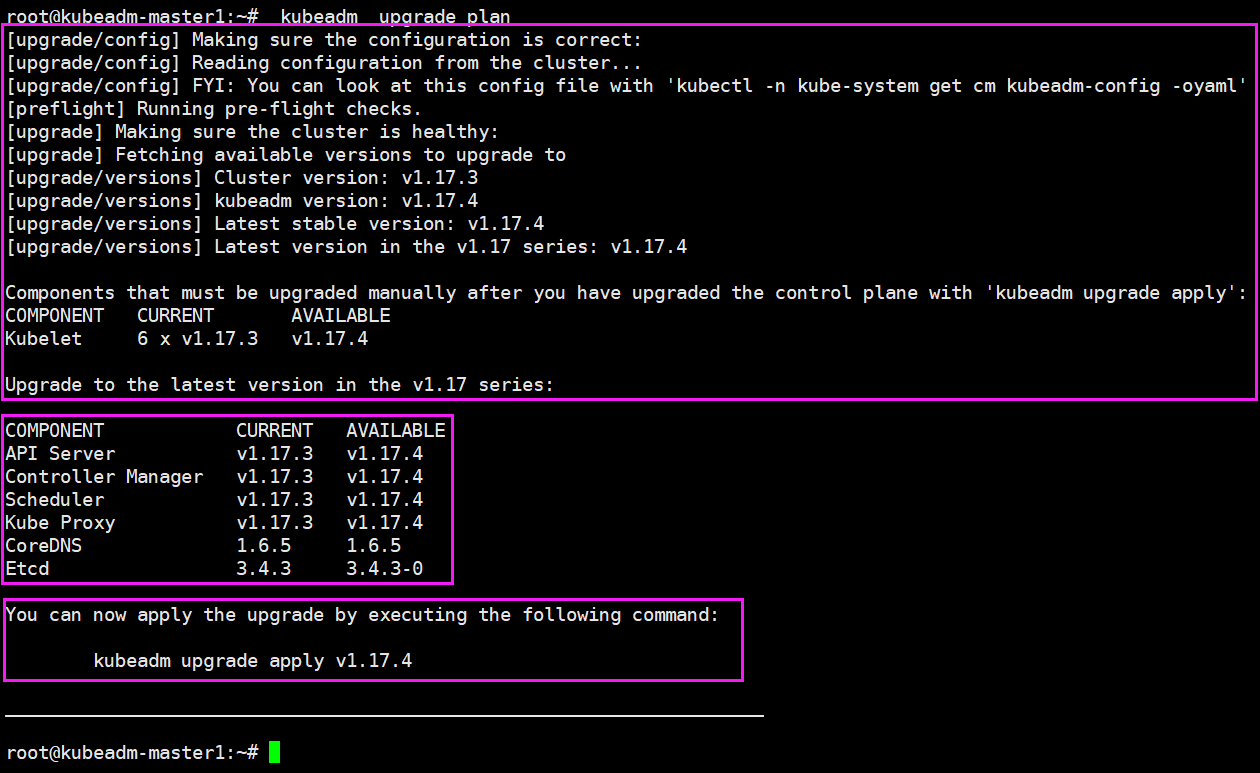

# kubeadm upgrade plan #查看升级计划

升级前最好在haproxy里把它摘下去,避免请求转发给正在升级的机器

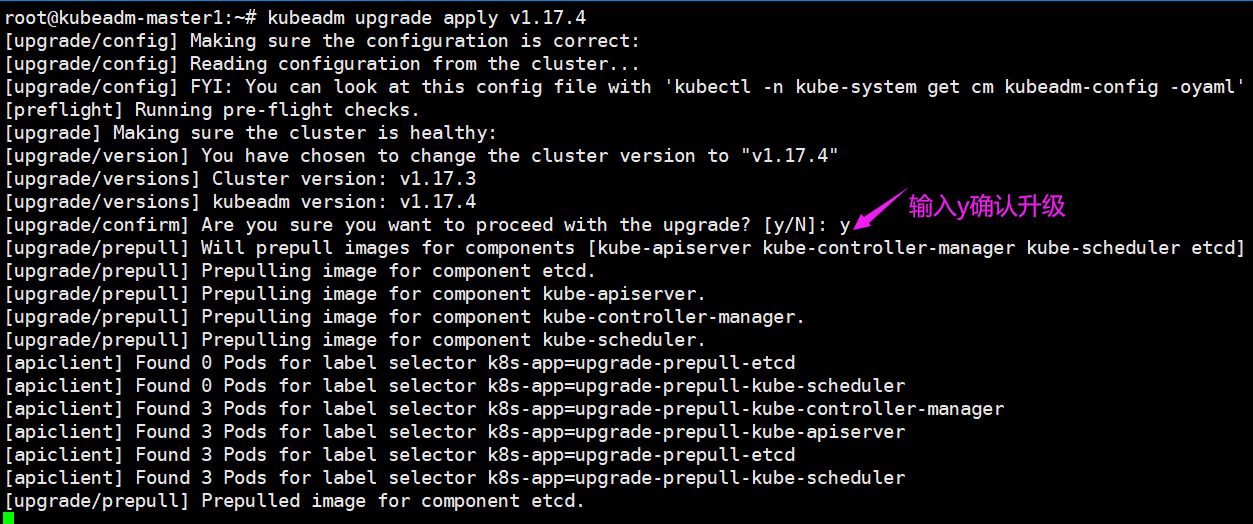

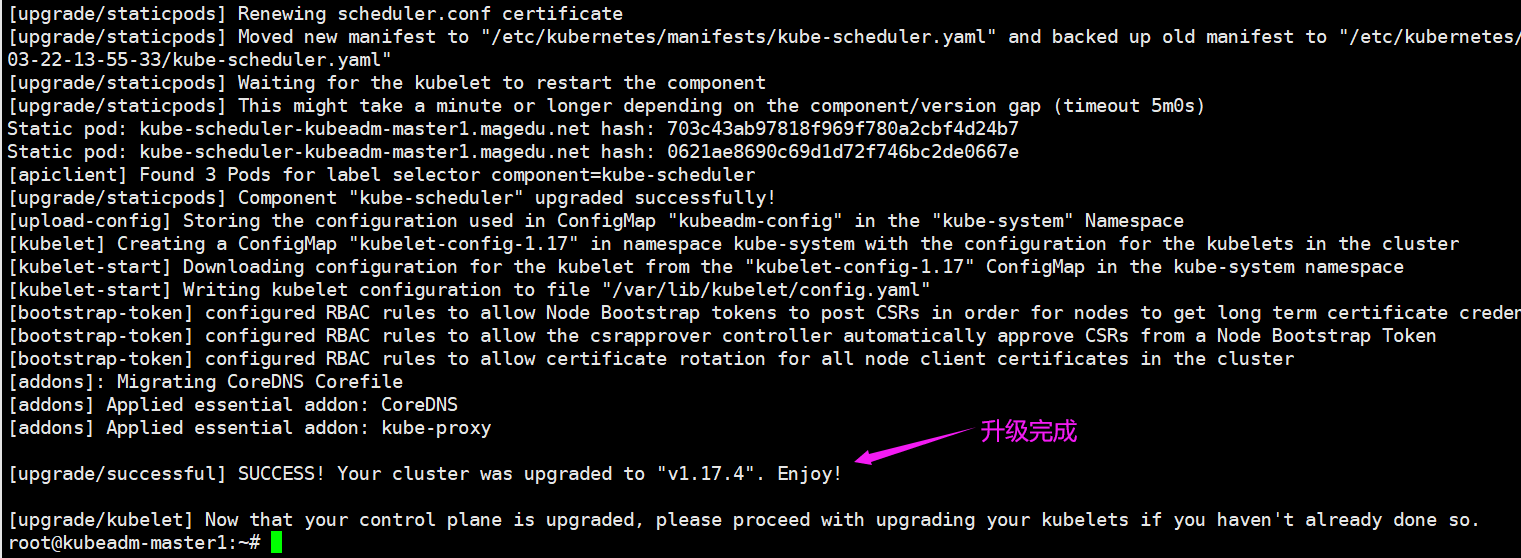

1.4.11.1.5: Start the upgrade:

Pulling the images is slow, so they can also be pulled from Aliyun first

#!/bin/bash

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.17.4

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.17.4

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.17.4

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.17.4

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.6.5

bash k8s-1.17.4-images-download.sh

#kubeadm upgrade apply v1.17.4 #master1

#kubeadm upgrade apply v1.17.4 #master2

#kubeadm upgrade apply v1.17.4 #master3

(不要同时升级)

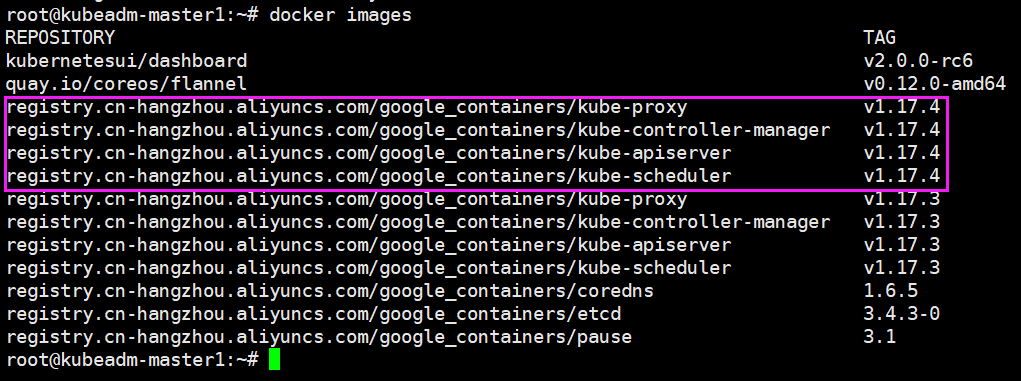

1.4.11.1.6:验证镜像:

1.4.11.2:升级 k8s node 服务:

升级客户端服务 kubectl kubelet

apt install kubectl=1.17.4-00 kubelet=1.17.4-00

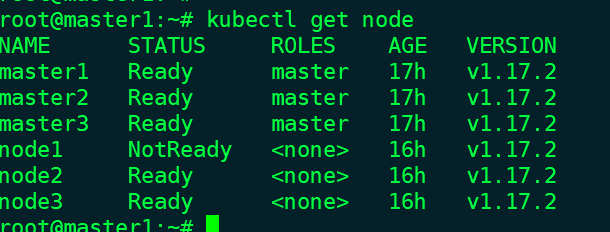

1.4.11.2.1: Verify the current node versions (the worker nodes are still on the old version):

root@master3:~# kubectl get node

NAME STATUS ROLES AGE VERSION

master1 Ready master 18h v1.17.4

master2 Ready master 18h v1.17.4

master3 Ready master 17h v1.17.4

node1 NotReady <none> 16h v1.17.2

node2 Ready <none> 16h v1.17.2

node3 Ready <none> 16h v1.17.2

1.4.11.2.2: Upgrade the configuration on each node:

# kubeadm upgrade node --kubelet-version 1.17.4

[upgrade] Reading configuration from the cluster...

[upgrade] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[upgrade] Skipping phase. Not a control plane node.

[upgrade] Using kubelet config version 1.17.4, while kubernetes-version is v1.17.4

[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.17" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[upgrade] The configuration for this node was successfully updated!

[upgrade] Now you should go ahead and upgrade the kubelet package using your package manager.

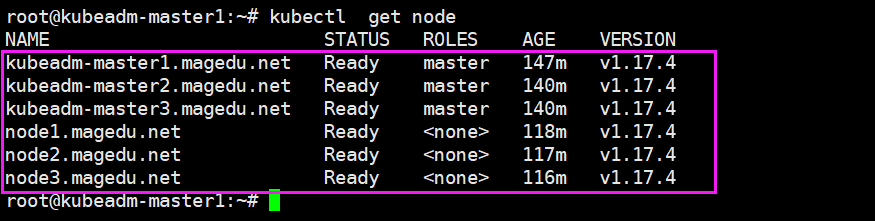

1.4.11.2.3: Upgrade the kubelet package on each node:

The kubectl and kubelet packages on the master nodes must be upgraded as well

apt install kubelet=1.17.4-00 kubeadm=1.17.4-00 kubectl=1.17.4-00

Verify the final versions:

1.5: Test-run Nginx+Tomcat:

https://kubernetes.io/zh/docs/concepts/workloads/controllers/deployment/

Test-run nginx, and finally implement dynamic/static separation

Official test yml:

apiVersion: apps/v1

kind: Deployment

metadata:

  name: nginx-deployment

  labels:

    app: nginx

spec:

  replicas: 3

  selector:

    matchLabels:

      app: nginx

  template:

    metadata:

      labels:

        app: nginx

    spec:

      containers:

      - name: nginx

        image: nginx:1.14.2

        ports:

        - containerPort: 80

1.5.1: Run Nginx:

root@master1:/opt/kubdadm-yaml/nginx# docker pull nginx:1.14.2

root@master1:/opt/kubdadm-yaml/nginx# docker tag nginx:1.14.2 harbor.linux39.com/baseimages/nginx:1.14.2

root@master1:/opt/kubdadm-yaml/nginx# docker push harbor.linux39.com/baseimages/nginx:1.14.2

# pwd

/opt/kubdadm-yaml

# cat nginx/nginx.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

labels:

app: nginx

spec:

replicas: 3

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: harbor.linux39.com/baseimages/nginx:1.14.2

ports:

- containerPort: 80

---

kind: Service

apiVersion: v1

metadata:

labels:

app: magedu-nginx-service-label

name: magedu-nginx-service

namespace: default

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 80

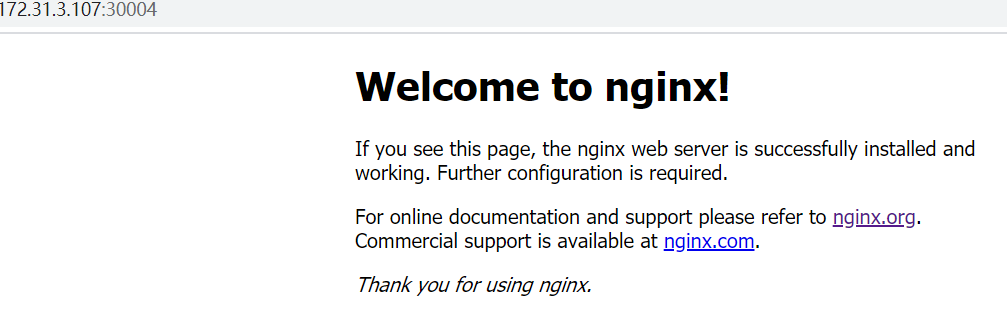

nodePort: 30004

selector:

app: nginx

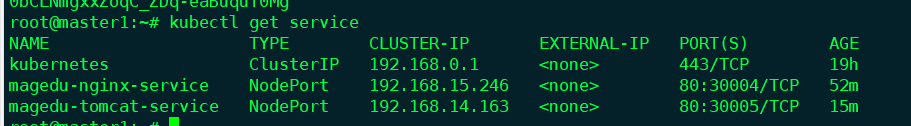

# kubectl apply -f nginx/nginx.yml

deployment.apps/nginx-deployment created

service/magedu-nginx-service created

# kubectl get pod

Enter each pod and write a distinct test page

root@master1:/opt/kubdadm-yaml/nginx# kubectl get pod

NAME READY STATUS RESTARTS AGE

net-test1-5fcc69db59-fzlzg 1/1 Running 2 4d1h

net-test1-5fcc69db59-l5w6h 1/1 Running 1 45h

nginx-deployment-66fc88798-s5w4m 1/1 Running 0 3m25s

nginx-deployment-66fc88798-vdpxl 1/1 Running 0 7m14s

nginx-deployment-66fc88798-w29zq 1/1 Running 0 3m25s

root@master1:/opt/kubdadm-yaml/nginx# kubectl exec -it nginx-deployment-66fc88798-vdpxl bash

root@nginx-deployment-66fc88798-vdpxl:/# cd /usr/share/nginx/html/

root@nginx-deployment-66fc88798-vdpxl:/usr/share/nginx/html# ls

50x.html index.html

root@nginx-deployment-66fc88798-vdpxl:/usr/share/nginx/html# echo pod2 > index.html

...

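Use haproxy and keepalived to provide a highly available reverse proxy that reaches the application Pods running in the kubernetes cluster.

Add another VIP, 172.31.3.249

haproxy load-balances across the backend nginx pods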

root@ha1:/etc/haproxy# vim haproxy.cfg

listen k8s-api-6443

bind 172.31.3.248:6443

mode tcp

server master1 172.31.3.101:6443 check inter 3s fall 3 rise 5

server master2 172.31.3.102:6443 check inter 3s fall 3 rise 5

server master3 172.31.3.103:6443 check inter 3s fall 3 rise 5

listen k8s-web-80

bind 172.31.3.249:80

mode tcp

balance roundrobin

server 172.31.3.107 172.31.3.107:30004 check inter 2s fall 3 rise 5

server 172.31.3.108 172.31.3.108:30004 check inter 2s fall 3 rise 5

server 172.31.3.109 172.31.3.109:30004 check inter 2s fall 3 rise 5

root@ha1:/etc/haproxy# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

}

vrrp_instance VI_1 {

state MASTER

interface ens33

garp_master_delay 10

smtp_alert

virtual_router_id 56

priority 150

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.31.3.248 dev ens33 label ens33:1

172.31.3.249 dev ens33 label ens33:2

}

}

172.31.3.249 www.linux39.com

1.5.2: Run tomcat:

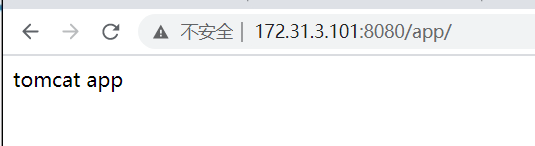

root@master1:~# docker pull tomcat

root@master1:~# docker run -it --rm -p 8080:8080 tomcat

root@master1:~# docker exec -it c89dbb6c7462 bash

root@c89dbb6c7462:/usr/local/tomcat# cd webapps

root@c89dbb6c7462:/usr/local/tomcat/webapps# mkdir app

root@c89dbb6c7462:/usr/local/tomcat/webapps# echo "tomcat app" > app/index.html

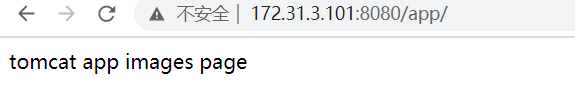

root@master1:/opt/kubdadm-yaml/tomcat# vim Dockerfile

FROM tomcat

ADD ./app /usr/local/tomcat/webapps/app/

root@master1:/opt/kubdadm-yaml/tomcat# mkdir app

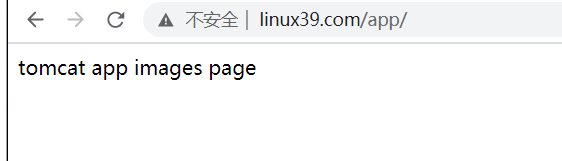

root@master1:/opt/kubdadm-yaml/tomcat# echo "tomcat app images page" > app/index.html

root@master1:/opt/kubdadm-yaml/tomcat# docker build -t harbor.linux39.com/linux39/tomcat:app .

root@master1:/opt/kubdadm-yaml/tomcat#

docker run -it --rm -p 8080:8080 harbor.linux39.com/linux39/tomcat:app

docker push harbor.linux39.com/linux39/tomcat:app

root@master1:/opt/kubdadm-yaml/tomcat# cp ../nginx/nginx.yml ./tomcat.yml

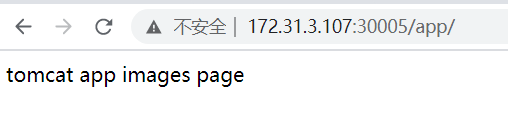

root@master1:/opt/kubdadm-yaml/tomcat# vim ./tomcat.yml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: tomcat-deployment

  labels:

    app: tomcat

spec:

  replicas: 3

  selector:

    matchLabels:

      app: tomcat

  template:

    metadata:

      labels:

        app: tomcat

    spec:

      containers:

      - name: tomcat

        image: harbor.linux39.com/linux39/tomcat:app

        ports:

        - containerPort: 8080 # container port

---

kind: Service

apiVersion: v1

metadata:

  labels:

    app: magedu-tomcat-service-label

  name: magedu-tomcat-service

  namespace: default

spec:

  type: NodePort

  ports:

  - name: http

    port: 80 # service port

    protocol: TCP

    targetPort: 8080 # container port to forward to

    nodePort: 30005

  selector:

    app: tomcat

root@master1:/opt/kubdadm-yaml/tomcat# kubectl apply -f tomcat.yml

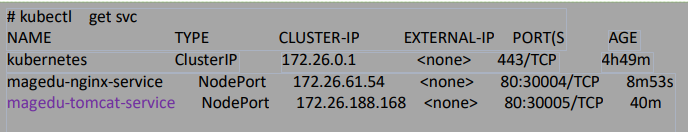

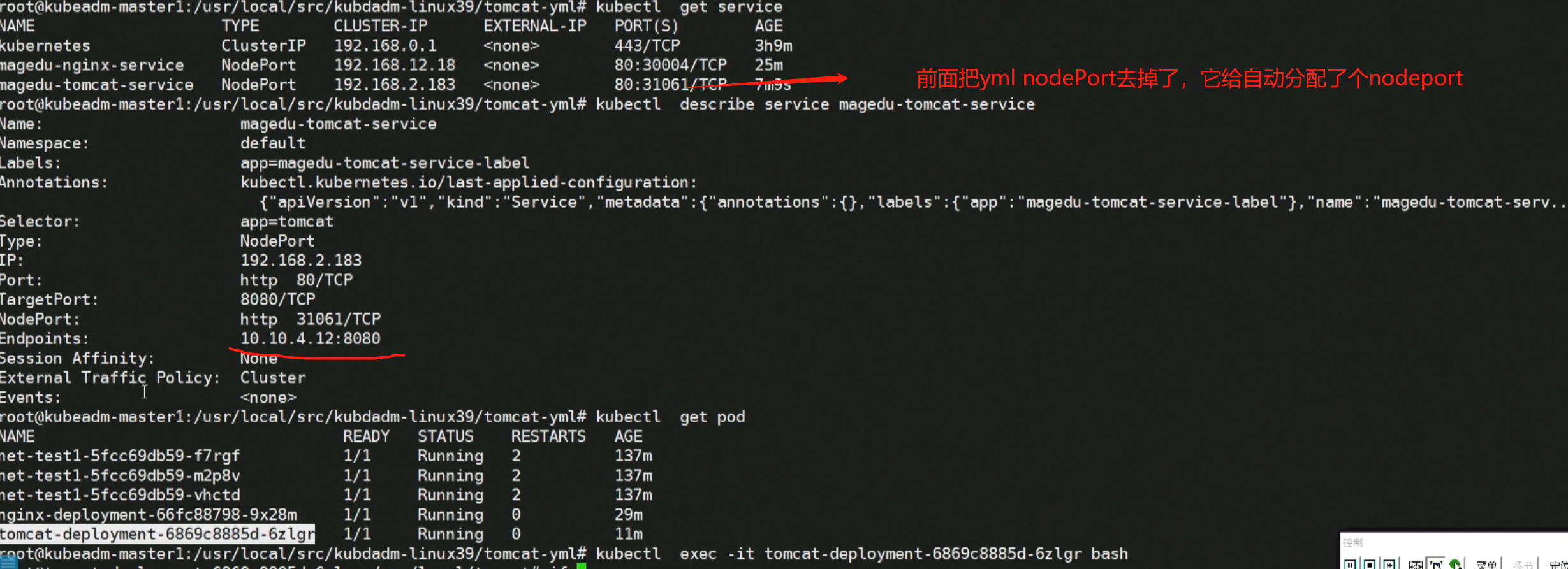

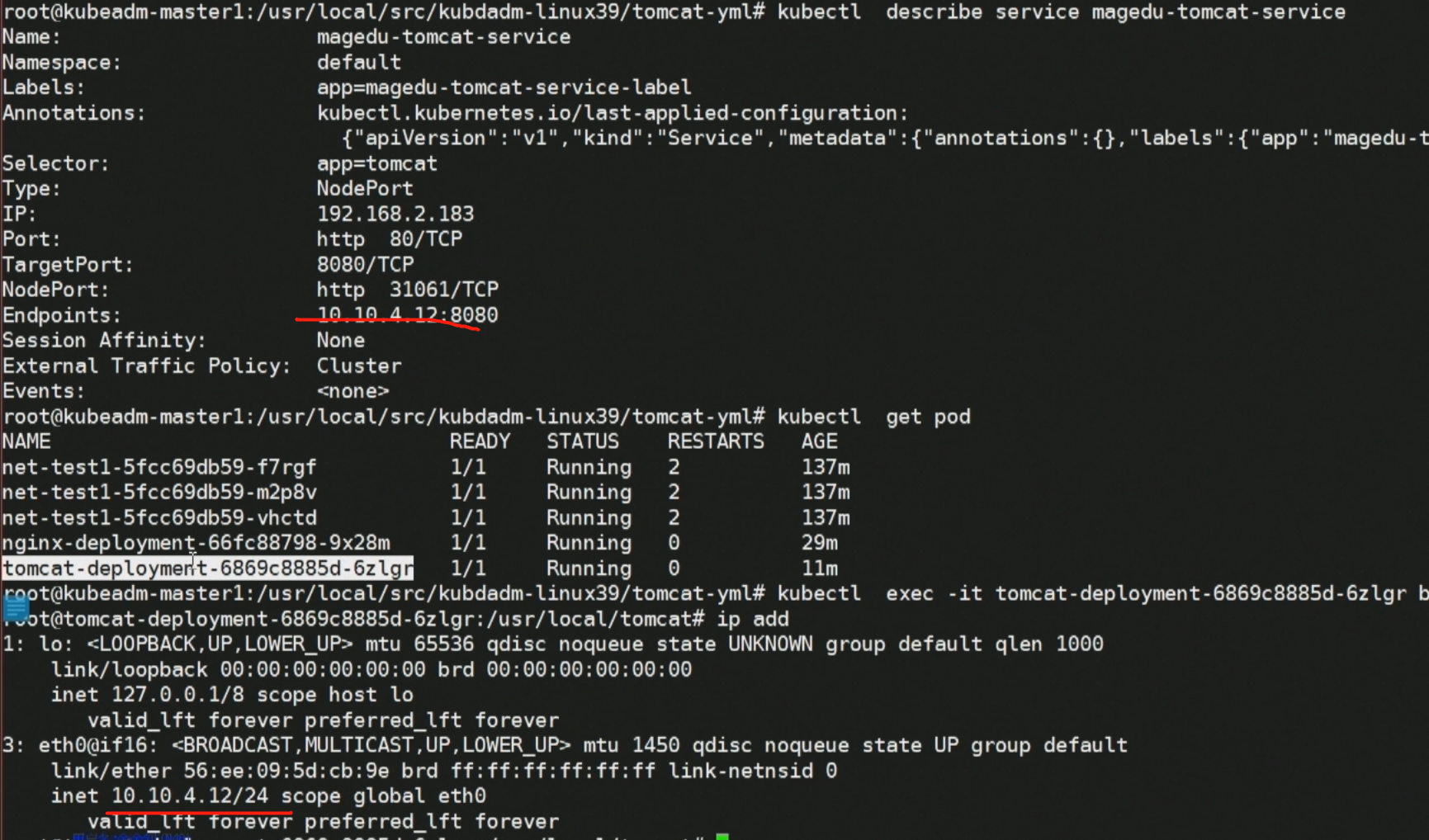

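Once tomcat is verified to work, disable nodePort: 30005 so that the service is no longer exposed externally

To make testing easier, set replicas: 1

Comment out

# nodePort: 30005

Then re-apply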

kubectl apply -f tomcat.yml

kubectl apply -f nginx.yml

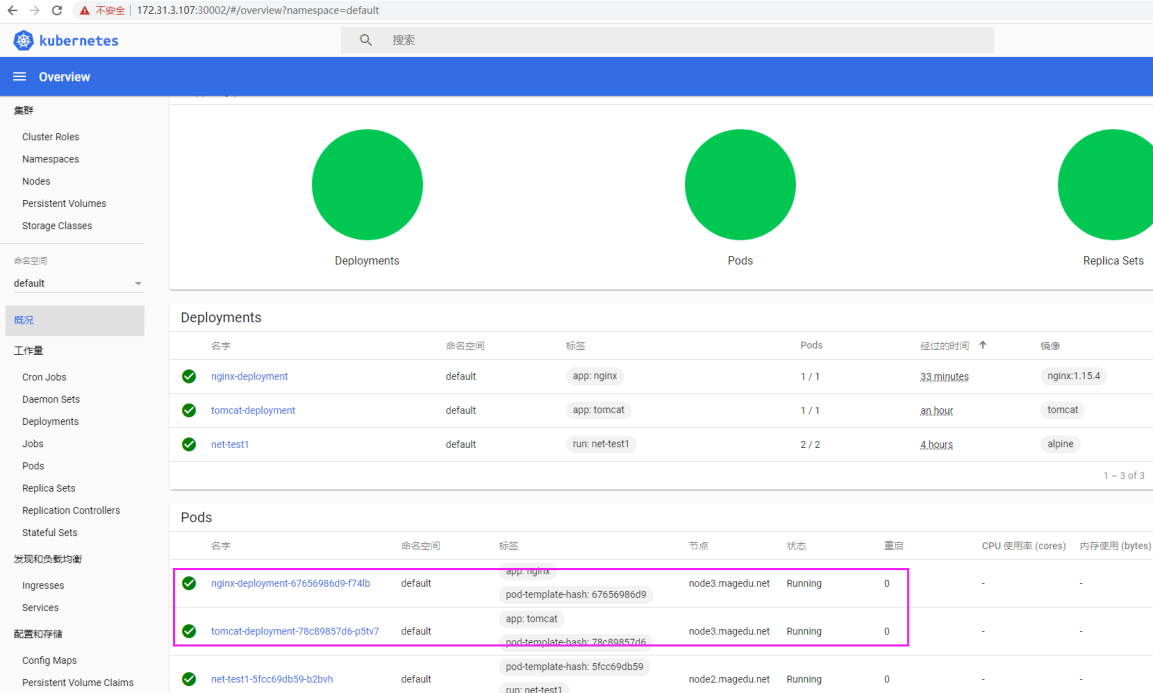

1.5.3:dashboard 验证 Pod:

1.5.3.1:web 界面验证 Pod:

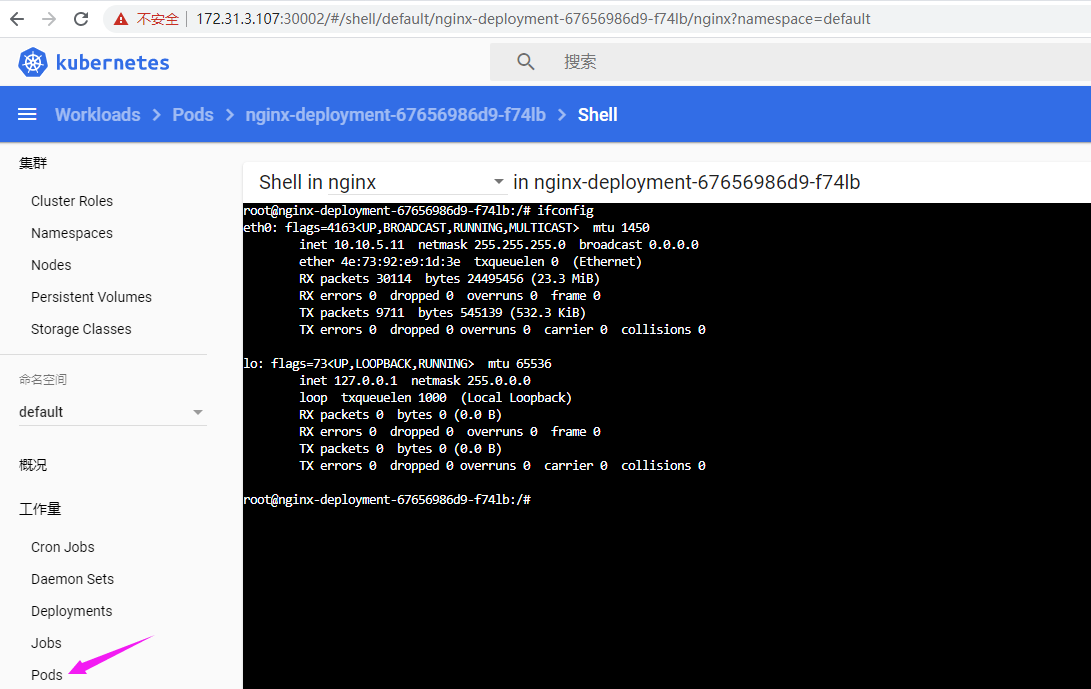

1.5.3.2:从 dashboard 进入容器:

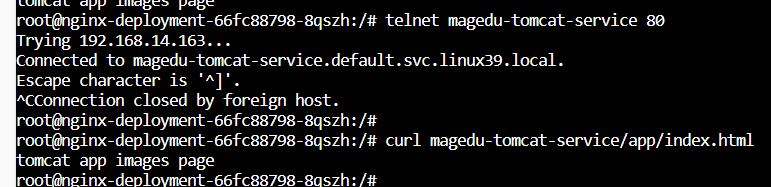

1.5.8:Nginx 实现动静分离:

进入到 nginx Pod (dashborad直接进也可)

# kubectl exec -it nginx-deployment-67656986d9-f74lb bash

# cat /etc/issue

Debian GNU/Linux 9 \n \l

Update the package sources and install basic commands

# apt update

# apt install procps vim iputils-ping net-tools curl -y

Test service name resolution

/# ping magedu-tomcat-service

PING magedu-tomcat-service.default.svc.magedu.local (172.26.188.168) 56(84) bytes of data.

From the nginx Pod, access the tomcat Pod through its service domain name:

/# curl magedu-tomcat-service/app/index.html

Tomcat ...

Modify the Nginx configuration to implement dynamic/static separation: whenever Nginx receives a URI that contains /app, it forwards the request to tomcat

# vim /etc/nginx/conf.d/default.conf

location /app {

proxy_pass http://magedu-tomcat-service;

}

Test the configuration file

/# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

Reload the configuration file

/# nginx -s reload

2020/03/22 09:10:49 [notice] 4057#4057: signal process started

haproxy VIP > NGINX > TOMCAT

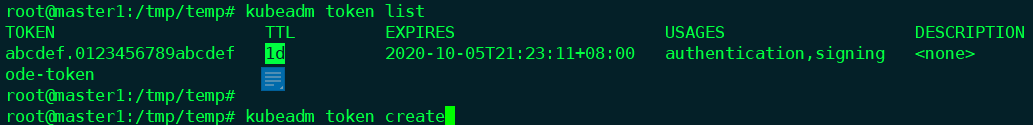

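1.6: Other kubeadm commands:

1.6.1: Token management:

# kubeadm token --help

create #create a token, valid for 24 hours by default

delete #delete a token

generate #generate and print a token without creating it on the server, so that it can be used for other operations

list #list all tokens on the server

If the token has expired and another master needs to be added, generate a new one with create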
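The tokens in this document, such as abcdef.0123456789abcdef, follow kubeadm's bootstrap token format: a 6-character ID and a 16-character secret, both lowercase alphanumeric, joined by a dot. As a small sketch, a pasted token can be sanity-checked before it is used in a join command; the function name `is_bootstrap_token` is an assumption for illustration.

```shell
#!/bin/bash
# Succeeds when the argument has the bootstrap token shape
# [a-z0-9]{6}.[a-z0-9]{16}. The C locale keeps the ranges ASCII-only.
is_bootstrap_token() {
    local LC_ALL=C
    [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

# Example:
#   is_bootstrap_token "w17ocw.41tbr749f4va31ir" && echo "token format looks valid"
```

This only validates the format; whether a token still exists and is unexpired is shown by `kubeadm token list`. If the installed kubeadm supports it, `kubeadm token create --print-join-command` prints a ready-made join command for a fresh token.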

1.6.2: reset command:

# kubeadm reset #revert the changes made by kubeadm

With a kubeadm deployment, a 3-master cluster can lose only 1 master, and a 5-master cluster can lose 2
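These numbers are consistent with majority quorum. Assuming the limit comes from etcd, which kubeadm runs on each master in its default stacked layout, a cluster of n members needs floor(n/2)+1 of them alive. The arithmetic can be sketched as follows; the function names are illustrative.

```shell
#!/bin/bash
# Members that must stay up for a majority in a cluster of size $1.
quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Members that may fail while a majority still remains.
tolerated_failures() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

# Example:
#   for n in 1 3 5; do echo "$n masters: quorum $(quorum "$n"), may lose $(tolerated_failures "$n")"; done
```

An even member count adds no tolerance (4 members still tolerate only 1 failure), which is one reason odd master counts such as 3 or 5 are the usual choice.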
