OmniParser for Pure Vision Based GUI Agent - Microsoft

Abstract

The recent success of large vision language models shows great potential for driving agent systems that operate on user interfaces.

However, we argue that the power of multimodal models like GPT-4V as general agents on multiple operating systems across different applications is largely underestimated, due to the lack of a robust screen parsing technique capable of:

1. reliably identifying interactable icons within the user interface, and

2. understanding the semantics of various elements in a screenshot and accurately associating the intended action with the corresponding region on the screen.

To fill these gaps, we introduce OMNIPARSER, a comprehensive method for parsing user interface screenshots into structured elements, which significantly enhances the ability of GPT-4V to generate actions that can be accurately grounded in the corresponding regions of the interface.

We first curated two datasets: an interactable icon detection dataset built from popular webpages, and an icon description dataset.

These datasets were used to fine-tune specialized models: a detection model to parse interactable regions on the screen and a caption model to extract the functional semantics of the detected elements.

OMNIPARSER significantly improves GPT-4V's performance on the ScreenSpot benchmark. On the Mind2Web and AITW benchmarks, OMNIPARSER with screenshot-only input outperforms the GPT-4V baselines that require additional information beyond the screenshot.

Examples of parsed screenshot images and local semantics produced by OmniParser. The inputs to OmniParser are a user task and a UI screenshot, from which it produces:

1. a parsed screenshot image with bounding boxes and numeric IDs overlaid, and

2. local semantics containing both the extracted text and the icon descriptions.
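The two outputs described above can be pictured as a list of ID-tagged elements plus a text rendering of their semantics. The following is a minimal sketch under stated assumptions: the `ParsedElement` class, its field names, and the "Text Box ID" / "Icon Box ID" line format are hypothetical illustrations, not OmniParser's actual data structures or prompt format.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ParsedElement:
    """One detected UI element (hypothetical structure, not OmniParser's API)."""
    box_id: int                              # numeric ID overlaid on the screenshot
    bbox: Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels
    kind: str                                # "text" for extracted text, "icon" for a described icon
    content: str                             # the extracted text or the icon description


def format_local_semantics(elements: List[ParsedElement]) -> str:
    """Render local semantics as one line per element, ordered by box ID."""
    lines = []
    for el in sorted(elements, key=lambda e: e.box_id):
        label = "Text Box" if el.kind == "text" else "Icon Box"
        lines.append(f"{label} ID {el.box_id}: {el.content}")
    return "\n".join(lines)


# Made-up example elements, for illustration only.
elements = [
    ParsedElement(1, (40, 12, 180, 36), "text", "Search settings"),
    ParsedElement(0, (8, 8, 32, 32), "icon", "a back navigation arrow"),
]
print(format_local_semantics(elements))
```

Keying every line by the same numeric ID that is drawn on the screenshot is what would let a vision language model refer to a region by ID instead of predicting raw coordinates.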

Curated Dataset for Interactable Region Detection and Icon Functionality Description

We curate an interactable icon detection dataset containing 67k unique screenshot images, each labeled with bounding boxes of interactable icons derived from the DOM tree. We first took a 100k uniform sample of popular, publicly available URLs from the ClueWeb dataset and collected the bounding boxes of each webpage's interactable regions from its DOM tree. We also collected 7k icon-description pairs for finetuning the caption model.
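As an illustration of deriving bounding boxes from a DOM tree, the sketch below filters a flattened list of DOM nodes down to interactable ones and clips their rectangles to the screenshot. Everything specific here is an assumption: the node dictionary layout, the `INTERACTABLE_TAGS` and `INTERACTABLE_ROLES` sets, and the clipping rule are hypothetical and are not taken from the authors' data pipeline.

```python
from typing import Dict, List, Tuple

# Assumption: which tags and roles count as "interactable" is illustrative only.
INTERACTABLE_TAGS = {"a", "button", "input", "select", "textarea"}
INTERACTABLE_ROLES = {"button", "link", "checkbox", "tab", "menuitem"}

BBox = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def interactable_boxes(nodes: List[Dict], width: int, height: int) -> List[BBox]:
    """Return clipped boxes for nodes whose tag or role looks interactable."""
    boxes = []
    for node in nodes:
        tag = node.get("tag", "").lower()
        role = node.get("role", "")
        if tag not in INTERACTABLE_TAGS and role not in INTERACTABLE_ROLES:
            continue
        x, y, w, h = node["rect"]  # left, top, width, height in pixels
        # Clip to the screenshot so off-screen elements do not produce labels.
        x0, y0 = max(0, x), max(0, y)
        x1, y1 = min(width, x + w), min(height, y + h)
        if x1 > x0 and y1 > y0:
            boxes.append((x0, y0, x1, y1))
    return boxes


# Hypothetical flattened DOM nodes for a 1280x720 screenshot.
nodes = [
    {"tag": "button", "rect": (10, 20, 80, 30)},
    {"tag": "div", "rect": (0, 0, 1280, 720)},                     # not interactable
    {"tag": "div", "role": "link", "rect": (1250, 700, 100, 40)},  # partly off-screen
    {"tag": "a", "rect": (0, 900, 50, 20)},                        # fully below the fold
]
boxes = interactable_boxes(nodes, 1280, 720)
print(boxes)
```

In practice the node list would come from a browser automation tool; that step is omitted here because the source does not say which tooling was used.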

Examples from the Interactable Region Detection dataset. The bounding boxes are based on the interactable regions extracted from the DOM tree of the webpage.

Results

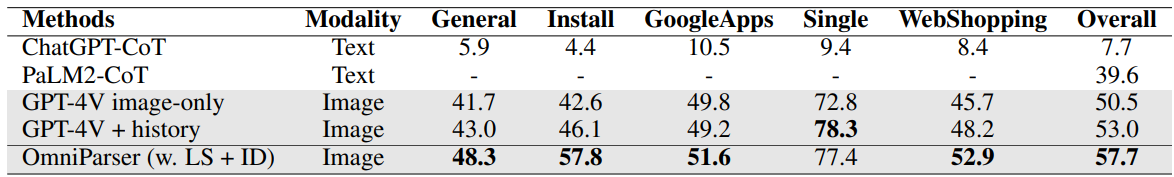

We evaluate our model on the ScreenSpot benchmark (introduced with SeeClick), Mind2Web, and AITW. Our model outperforms the GPT-4V baseline on all benchmarks. Moreover, with screenshot-only input, our model outperforms the GPT-4V baselines that require additional information beyond the screenshot.

Plugin-ready for Other Vision Language Models

To further demonstrate that OmniParser is a plugin choice for off-the-shelf vision language models, we show the performance of OmniParser combined with recently announced vision language models: Phi-3.5-V and Llama-3.2-V. As seen in the table, our finetuned interactable region detection (ID) model significantly improves task performance compared to the Grounding DINO model (w.o. ID) with local semantics, across all subcategories, for GPT-4V, Phi-3.5-V and Llama-3.2-V.

In addition, the local semantics of icon functionality significantly improve performance for every vision language model. In the table, LS is short for local semantics of icon functionality, and ID is short for the interactable region detection model we finetune. The setting w.o. ID means we replace the ID model with the original Grounding DINO model, not finetuned on our data, while keeping local semantics. The setting w.o. ID and w.o. LS means we use the Grounding DINO model and additionally omit the icon descriptions from the text prompt.
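The ablation settings above amount to two switches: which detector proposes the boxes, and whether icon descriptions enter the text prompt. The sketch below encodes them as a small configuration. The `AblationSetting` class, the `build_prompt` helper, and the prompt layout are hypothetical; in particular, listing bare box IDs when LS is off is an assumption, since the source only states that the icon descriptions are left out.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class AblationSetting:
    """Hypothetical encoding of one row of the ablation table."""
    name: str
    finetuned_id: bool     # True: finetuned ID model; False: original Grounding DINO
    local_semantics: bool  # True: icon descriptions are included in the text prompt


SETTINGS = [
    AblationSetting("full", finetuned_id=True, local_semantics=True),
    AblationSetting("w.o. ID", finetuned_id=False, local_semantics=True),
    AblationSetting("w.o. ID and w.o. LS", finetuned_id=False, local_semantics=False),
]


def detector_name(setting: AblationSetting) -> str:
    """Name the detector that proposes interactable regions in this setting."""
    return "finetuned ID model" if setting.finetuned_id else "Grounding DINO (not finetuned)"


def build_prompt(task: str, icons: List[Tuple[int, str]], setting: AblationSetting) -> str:
    """Build a text prompt; icon descriptions appear only when LS is enabled."""
    lines = [f"Task: {task}"]
    for box_id, description in icons:
        if setting.local_semantics:
            lines.append(f"Box {box_id}: {description}")
        else:
            lines.append(f"Box {box_id}")  # assumption: the ID is kept, the description dropped
    return "\n".join(lines)


for s in SETTINGS:
    print(f"[{s.name}] detector: {detector_name(s)}")
    print(build_prompt("Open settings", [(0, "a gear icon")], s))
```

Separating the two switches makes it clear that the w.o. ID row isolates the effect of the finetuned detector, while the last row removes both contributions at once.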

Demo of Mind2Web Tasks

Citation

@misc{lu2024omniparserpurevisionbased,
  title={OmniParser for Pure Vision Based GUI Agent},
  author={Yadong Lu and Jianwei Yang and Yelong Shen and Ahmed Awadallah},
  year={2024},
  eprint={2408.00203},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2408.00203},
}
