推荐系统实践—UserCF实现

参考:https://github.com/Lockvictor/MovieLens-RecSys/blob/master/usercf.py#L169

数据集

本文使用了MovieLens中的ml-100k小数据集,数据集的地址为:传送门

该数据集中包含了943个独立用户对1682部电影做的10000次评分。

首先看一下数据:

data = pd.read_csv('u.data', sep='\t', names=['user_id', 'item_id', 'rating', 'timestamp']) print(data)

完整代码

import numpy as np

import pandas as pd

import math

from collections import defaultdict

from operator import itemgetter

np.random.seed(1)

class UserCF(object):

def __init__(self):

self.train_set = {}

self.test_set = {}

self.movie_popularity = {}

self.tot_movie = 0

self.W = {} # 相似度矩阵

self.K = 20 # 最接近的K个用户

self.M = 10 # 推荐电影数

def split_data(self, data, ratio):

''' 按ratio的比例分成训练集和测试集 '''

for line in data.itertuples():

user, movie, rating = line[1], line[2], line[3]

if np.random.random() < ratio:

self.train_set.setdefault(user, {})

self.train_set[user][movie] = int(rating)

else:

self.test_set.setdefault(user, {})

self.test_set[user][movie] = int(rating)

print('数据预处理完成')

def user_similarity(self):

''' 计算用户相似度 '''

movie_users = {}

for user, items in self.train_set.items():

for movie in items.keys():

if movie not in movie_users:

movie_users[movie] = set()

movie_users[movie].add(user)

if movie not in self.movie_popularity: # 用于后面计算新颖度

self.movie_popularity[movie] = 0

self.movie_popularity[movie] += 1

print('倒排表完成')

self.tot_movie = len(movie_users) # 用于计算覆盖率

C, N = {}, {} # C记录u,v之间给相同电影打分的数量, N记录用户打分的电影数量

for movie, users in movie_users.items():

for u in users:

C.setdefault(u, defaultdict(int))

N.setdefault(u, 0)

N[u] += 1

for v in users:

if u == v:

continue

C[u][v] += 1

train_user_num = len(self.train_set) # 训练集用户数

count = 1

for u, related_users in C.items():

print('\r相似度计算进度:{:.2f}%'.format(count * 100 / train_user_num), end='')

count += 1

self.W.setdefault(u, {})

for v, cuv in related_users.items():

self.W[u][v] = float(cuv) / math.sqrt(N[u] * N[v])

print('\n相似度计算完成')

def recommend(self, u):

''' 通过与u最相似的K个用户推荐M部电影 '''

rank = {}

user_movies = self.train_set[u]

for v, similarity in sorted(self.W[u].items(), key=itemgetter(1), reverse=True)[0:self.K]:

for movie, rating in self.train_set[v].items():

if movie in user_movies:

continue

rank.setdefault(movie, 0)

rank[movie] += similarity * rating

return sorted(rank.items(), key=itemgetter(1), reverse=True)[0:self.M]

def evaluate(self):

''' 评测算法 '''

hit = 0

ret = 0

rec_tot = 0

pre_tot = 0

tot_rec_movies = set() # 推荐电影

for user in self.train_set:

test_movies = self.test_set.get(user, {})

rec_movies = self.recommend(user)

for movie, pui in rec_movies:

if movie in test_movies.keys():

hit += 1

tot_rec_movies.add(movie)

ret += math.log(1+self.movie_popularity[movie])

pre_tot += self.M

rec_tot += len(test_movies)

precision = hit / (1.0 * pre_tot)

recall = hit / (1.0 * rec_tot)

coverage = len(tot_rec_movies) / (1.0 * self.tot_movie)

ret /= 1.0 * pre_tot

print('precision=%.4f' % precision)

print('recall=%.4f' % recall)

print('coverage=%.4f' % coverage)

print('popularity=%.4f' % ret)

if __name__ == '__main__':

data = pd.read_csv('u.data', sep='\t', names=['user_id', 'item_id', 'rating', 'timestamp'])

usercf = UserCF()

usercf.split_data(data, 0.7)

usercf.user_similarity()

usercf.evaluate()

结果

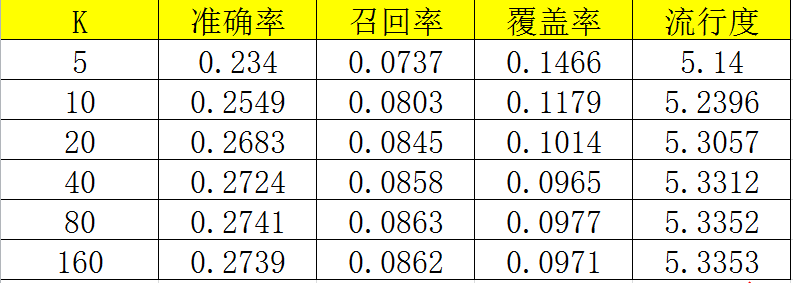

在不同的K值下运行的结果

相似度计算的改进

在现实中,很多人因为电影热门而去看它,此时也许这并不是他的兴趣所在,如果两个人同时看了相同的冷门电影,那么也许他们更有可能有更高的相似度。

对此,可以适当降低热门电影的加成比例,提高冷门电影的加成比例。

因此,只需对上述代码做此修改

C[u][v] += 1 / math.log(1 + len(users))

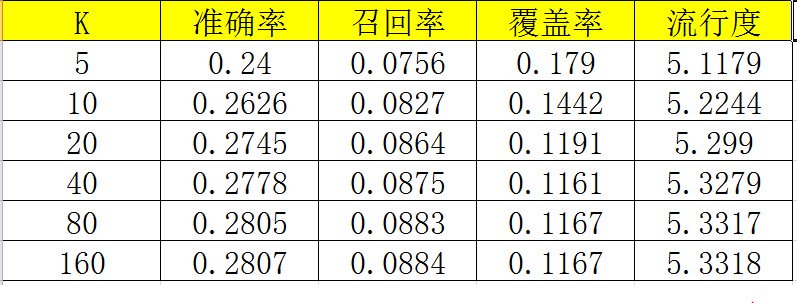

再重新进行评测,发现修改后在各项性能上都有所提高。

浙公网安备 33010602011771号

浙公网安备 33010602011771号