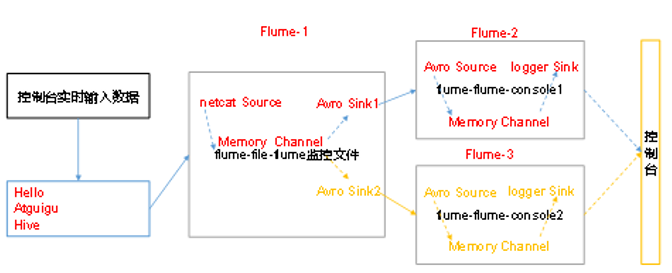

Flume (5): Single Data Source, Multiple Outlets

Single Source and Channel, Multiple Sinks (Load Balancing)

1. Case Requirements

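Flume-1 listens on a port (netcat source) and passes the data it receives to Flume-2 and Flume-3 through a load-balancing sink group, so each event is sent to only one of them. Flume-2 and Flume-3 print the events they receive to their local consoles.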

2. Requirements Analysis

3. Implementation Steps

0) Preparation

Create a group2 folder under the /opt/module/flume-1.9.0/job directory:

    [atguigu@hadoop102 job]$ mkdir group2
    [atguigu@hadoop102 job]$ cd group2

1) Create flume-netcat-flume.conf

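Configure one source that receives data (a netcat source listening on port 44445), one channel, and two sinks grouped into a load-balancing sink group. The two sinks deliver to flume-flume-console1 and flume-flume-console2 respectively.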

Create the configuration file and open it:

    [atguigu@hadoop102 group2]$ touch flume-netcat-flume.conf
    [atguigu@hadoop102 group2]$ vim flume-netcat-flume.conf

Add the following content:

    # Name the components on this agent
    a1.sources = r1
    a1.channels = c1
    a1.sinkgroups = g1
    a1.sinks = k1 k2

    # Describe/configure the source
    a1.sources.r1.type = netcat
    a1.sources.r1.bind = localhost
    a1.sources.r1.port = 44445

    # Configure the sink group: load balancing with round-robin selection
    a1.sinkgroups.g1.processor.type = load_balance
    # Back off from a sink that fails instead of retrying it immediately
    a1.sinkgroups.g1.processor.backoff = true
    a1.sinkgroups.g1.processor.selector = round_robin
    # Upper bound, in milliseconds, on the backoff time of a failed sink
    a1.sinkgroups.g1.processor.selector.maxTimeOut=10000

    # Describe the sink
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hadoop102
    a1.sinks.k1.port = 4141
    a1.sinks.k2.type = avro
    a1.sinks.k2.hostname = hadoop102
    a1.sinks.k2.port = 4142

    # Describe the channel
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinkgroups.g1.sinks = k1 k2
    a1.sinks.k1.channel = c1
    a1.sinks.k2.channel = c1
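To make `processor.selector = round_robin` and `processor.backoff = true` more concrete, here is a simplified Python simulation of the selection idea. It is not Flume's implementation: the class and function names are hypothetical, it dispatches one event at a time (real sinks take batches from the channel), and the backoff formula (doubling from 1 s, capped at `maxTimeOut`) is an assumption chosen only to illustrate the behavior.

```python
class RoundRobinBackoffSelector:
    """Hypothetical model of a round_robin selector with backoff (not Flume code)."""

    def __init__(self, sinks, max_timeout_ms=10000, base_backoff_ms=1000):
        self.sinks = list(sinks)
        self.max_timeout_ms = max_timeout_ms      # plays the role of selector.maxTimeOut
        self.base_backoff_ms = base_backoff_ms    # assumed first backoff step
        self.next_index = 0
        self.failures = {s: 0 for s in self.sinks}
        self.restore_at = {s: 0 for s in self.sinks}

    def candidates(self, now_ms):
        """Sinks to try, starting at the round-robin position, skipping backed-off ones."""
        n = len(self.sinks)
        start = self.next_index
        self.next_index = (self.next_index + 1) % n
        order = [self.sinks[(start + i) % n] for i in range(n)]
        return [s for s in order if self.restore_at[s] <= now_ms]

    def report_failure(self, sink, now_ms):
        # Doubling backoff, capped at max_timeout_ms.
        self.failures[sink] += 1
        delay = min(self.base_backoff_ms * 2 ** (self.failures[sink] - 1),
                    self.max_timeout_ms)
        self.restore_at[sink] = now_ms + delay

    def report_success(self, sink):
        self.failures[sink] = 0
        self.restore_at[sink] = 0


def dispatch(events, selector, is_up, now_ms=0):
    """Deliver each event to the first candidate sink that is up; return {sink: [events]}."""
    delivered = {s: [] for s in selector.sinks}
    for event in events:
        for sink in selector.candidates(now_ms):
            if is_up(sink):
                selector.report_success(sink)
                delivered[sink].append(event)
                break
            selector.report_failure(sink, now_ms)
        else:
            # No sink accepted the event (all down or all backed off).
            raise RuntimeError(f"no sink available for {event!r}")
        now_ms += 1  # advance the simulated clock by 1 ms per event
    return delivered


if __name__ == "__main__":
    sel = RoundRobinBackoffSelector(["k1", "k2"])
    print(dispatch(["e1", "e2", "e3", "e4"], sel, is_up=lambda s: True))
    # {'k1': ['e1', 'e3'], 'k2': ['e2', 'e4']}
```

With both sinks up, events alternate between k1 and k2. If k2 is down, the simulation puts it into backoff after its first failure and k1 receives every event until k2's backoff expires.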

Note: Avro is a language-neutral data serialization and RPC framework created by Doug Cutting, the creator of Hadoop.

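Note: RPC (Remote Procedure Call) is a protocol for requesting a service from a program on a remote computer over a network without needing to understand the underlying network technology.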

2) Create flume-flume-console1.conf

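Configure an avro source that receives the output of the upstream Flume agent (port 4141), and a logger sink that prints the events to the local console.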

Create the configuration file and open it:

    [atguigu@hadoop102 group2]$ touch flume-flume-console1.conf
    [atguigu@hadoop102 group2]$ vim flume-flume-console1.conf

Add the following content:

    # Name the components on this agent
    a2.sources = r1
    a2.sinks = k1
    a2.channels = c1

    # Describe/configure the source
    a2.sources.r1.type = avro
    a2.sources.r1.bind = hadoop102
    a2.sources.r1.port = 4141

    # Describe the sink
    a2.sinks.k1.type = logger

    # Describe the channel
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r1.channels = c1
    a2.sinks.k1.channel = c1

3) Create flume-flume-console2.conf

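Configure an avro source that receives the output of the upstream Flume agent (port 4142), and a logger sink that prints the events to the local console.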

Create the configuration file and open it:

    [atguigu@hadoop102 group2]$ touch flume-flume-console2.conf
    [atguigu@hadoop102 group2]$ vim flume-flume-console2.conf

Add the following content:

    # Name the components on this agent
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c2

    # Describe/configure the source
    a3.sources.r1.type = avro
    a3.sources.r1.bind = hadoop102
    a3.sources.r1.port = 4142

    # Describe the sink
    a3.sinks.k1.type = logger

    # Describe the channel
    a3.channels.c2.type = memory
    a3.channels.c2.capacity = 1000
    a3.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r1.channels = c2
    a3.sinks.k1.channel = c2
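Flume-1's two avro sinks only reach Flume-2 and Flume-3 if each sink's `hostname`/`port` matches a downstream avro source's `bind`/`port` (4141 and 4142 here). The hypothetical Python helper below is a quick sanity check for that: it parses the simple `key = value` lines used in these files (skipping blank lines and full-line `#` comments) and lists any upstream avro sink with no matching downstream source. It compares host strings literally, so a source bound to `0.0.0.0` would be reported as unmatched even though it would accept the connection.

```python
def parse_flume_properties(text):
    """Parse 'key = value' lines into a dict (simplified; hypothetical helper)."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def avro_endpoints(props, agent, kind):
    """Return {component: (host, port)} for the avro 'sources' or 'sinks' of one agent."""
    host_key = "bind" if kind == "sources" else "hostname"
    endpoints = {}
    for name in props.get(f"{agent}.{kind}", "").split():
        prefix = f"{agent}.{kind}.{name}"
        if props.get(f"{prefix}.type") == "avro":
            endpoints[name] = (props.get(f"{prefix}.{host_key}"), int(props[f"{prefix}.port"]))
    return endpoints


def unmatched_sinks(upstream_text, upstream_agent, downstream):
    """List upstream avro sinks whose (host, port) no downstream avro source listens on.

    downstream is a list of (config_text, agent_name) pairs.
    """
    sinks = avro_endpoints(parse_flume_properties(upstream_text), upstream_agent, "sinks")
    listening = set()
    for text, agent in downstream:
        listening.update(avro_endpoints(parse_flume_properties(text), agent, "sources").values())
    return sorted(name for name, endpoint in sinks.items() if endpoint not in listening)


# Trimmed-down copies of the relevant lines from the three files above.
A1 = """
a1.sinks = k1 k2
a1.sinks.k1.type = avro
a1.sinks.k1.hostname = hadoop102
a1.sinks.k1.port = 4141
a1.sinks.k2.type = avro
a1.sinks.k2.hostname = hadoop102
a1.sinks.k2.port = 4142
"""
A2 = """
a2.sources = r1
a2.sources.r1.type = avro
a2.sources.r1.bind = hadoop102
a2.sources.r1.port = 4141
"""
A3 = """
a3.sources = r1
a3.sources.r1.type = avro
a3.sources.r1.bind = hadoop102
a3.sources.r1.port = 4142
"""

if __name__ == "__main__":
    print(unmatched_sinks(A1, "a1", [(A2, "a2"), (A3, "a3")]))  # []
```

An empty list means every upstream sink has a listener; a typo such as port 4143 in the third file would make the check report `['k2']`.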

4) Run the configuration files

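Start one agent per configuration file, in this order: flume-flume-console2, flume-flume-console1, flume-netcat-flume. Starting the downstream agents first means their avro sources are already listening when Flume-1's avro sinks connect. Each command stays in the foreground, so run each one in its own terminal.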

    [atguigu@hadoop102 flume-1.9.0]$ bin/flume-ng agent --conf conf/ --name a3 --conf-file job/group2/flume-flume-console2.conf -Dflume.root.logger=INFO,console
    [atguigu@hadoop102 flume-1.9.0]$ bin/flume-ng agent --conf conf/ --name a2 --conf-file job/group2/flume-flume-console1.conf -Dflume.root.logger=INFO,console
    [atguigu@hadoop102 flume-1.9.0]$ bin/flume-ng agent --conf conf/ --name a1 --conf-file job/group2/flume-netcat-flume.conf

5) Use telnet to send data to port 44445 on the local machine

    [atguigu@hadoop102 ~]$ telnet localhost 44445

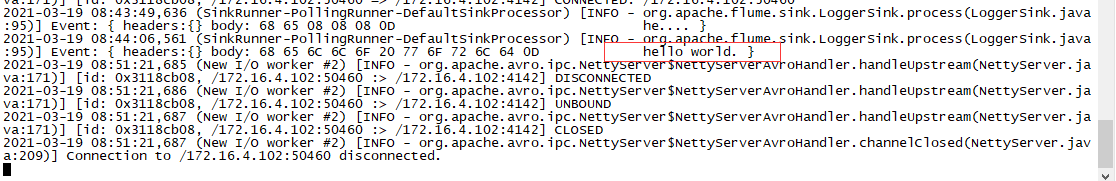
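If `telnet` is not installed, a few lines of Python can play the same role. This is a hypothetical helper, not part of Flume: it opens a TCP connection to the netcat source, sends each string as one newline-terminated line, and reads one reply line per event. It relies on the netcat source's default `ack-every-event = true` behavior of answering each event with `OK`; with that option turned off, `readline()` would block until the timeout.

```python
import socket


def send_lines(lines, host="localhost", port=44445, timeout=5.0):
    """Send each string as one line to a netcat-style TCP source and return its replies.

    Hypothetical helper: assumes the server answers every line with exactly one
    reply line (Flume's netcat source replies "OK" when ack-every-event is true).
    """
    replies = []
    with socket.create_connection((host, port), timeout=timeout) as sock:
        # Wrap the socket in a text reader so replies can be read line by line.
        with sock.makefile("r", encoding="utf-8", newline="\n") as reader:
            for line in lines:
                sock.sendall((line + "\n").encode("utf-8"))
                # Wait for the acknowledgement of this line before sending the next.
                replies.append(reader.readline().strip())
    return replies
```

With the three agents running, `send_lines(["hello", "flume"])` should return one `OK` per line, and the lines should then appear in the Flume-2 and Flume-3 console output.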

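Check the console logs printed by Flume-2 and Flume-3. Because the sink group load-balances rather than replicates, each event should appear on only one of the two consoles.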

Based on the atguigu video course.

浙公网安备 33010602011771号