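Deploying Kubernetes v1.18.0 with kubeadm

Deployment overview

Kubernetes officially provides three ways to deploy a cluster:

minikube: Minikube is a tool that quickly runs a single-node Kubernetes locally. It is meant only for users who want to try Kubernetes or use it for day-to-day development. Docs: https://kubernetes.io/docs/setup/minikube/

kubeadm: Kubeadm is also a tool. It provides kubeadm init and kubeadm join for quickly deploying a Kubernetes cluster. Docs: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

Binary packages: Download the release binaries from the official site and deploy each component by hand to assemble a Kubernetes cluster. Download: https://github.com/kubernetes/kubernetes/releases

kubeadm is currently the automated installation method that the community promotes most. It can be used for production, testing, and high-availability clusters, and it is convenient, simple, and fast.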

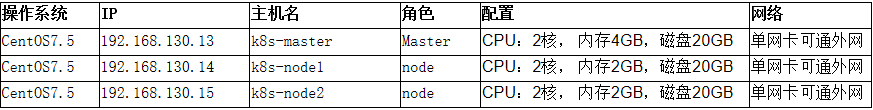

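The example environment is planned and configured as follows:

Deployment steps

Environment initialization

*Run the following on all nodes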

1. Disable the firewall and SELinux

# systemctl stop firewalld && systemctl disable firewalld
# sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config && setenforce 0

2. Disable swap (by default the kubelet refuses to start while swap is enabled, and swapping also hurts performance)

# swapoff -a
# sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
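Before moving on, it can help to confirm that swap is really off and will stay off after a reboot. The following is a minimal sketch, not part of the original procedure: the function names `swap_is_off` and `fstab_swap_entries` are hypothetical, and the code assumes the usual Linux `/proc/swaps` layout (one header line, then one line per active swap device) and the standard six-column `/etc/fstab` format.

```shell
#!/bin/sh
# swap_is_off [FILE]: succeed when FILE (default /proc/swaps) lists no active
# swap devices. The file has one header line followed by one line per device.
swap_is_off() {
    swaps_file="${1:-/proc/swaps}"
    # Count the non-empty lines that follow the header.
    active=$(awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file")
    [ "$active" -eq 0 ]
}

# fstab_swap_entries [FILE]: print the swap entries in FILE (default
# /etc/fstab) that are still uncommented and would re-enable swap at boot.
fstab_swap_entries() {
    awk '$1 !~ /^#/ && $3 == "swap"' "${1:-/etc/fstab}"
}
```

On a node, `swap_is_off && echo "swap is off"` checks the running system, and an empty result from `fstab_swap_entries` suggests the sed edit above commented out every swap line.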

3. Set the hostname and the hosts entries on every node

# hostnamectl set-hostname k8s-master   // run on 192.168.130.13
# hostnamectl set-hostname k8s-node1    // run on 192.168.130.14
# hostnamectl set-hostname k8s-node2    // run on 192.168.130.15

// run on all nodes

# cat >> /etc/hosts <<EOF
192.168.130.13 k8s-master
192.168.130.14 k8s-node1
192.168.130.15 k8s-node2
EOF
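Because `cat >>` appends unconditionally, rerunning the step above leaves duplicate lines in /etc/hosts. A small idempotent helper avoids that. This is only a sketch: `add_host_entry` is a hypothetical name, and it treats an entry as present only when a line has exactly that IP in the first column and that hostname in a later column.

```shell
#!/bin/sh
# add_host_entry IP NAME [FILE]: append "IP NAME" to FILE (default /etc/hosts)
# unless a line with that IP and that hostname already exists.
add_host_entry() {
    ip="$1"; name="$2"; hosts_file="${3:-/etc/hosts}"
    if awk -v ip="$ip" -v name="$name" \
        '$1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
         END { exit !found }' "$hosts_file" 2>/dev/null; then
        return 0   # already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Example calls for the three nodes in this post (run as root):
#   add_host_entry 192.168.130.13 k8s-master
#   add_host_entry 192.168.130.14 k8s-node1
#   add_host_entry 192.168.130.15 k8s-node2
```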

4. Tune kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

# cat <<EOF > /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

# sysctl --system
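A quick way to double-check the file written above is to parse it back. The sketch below uses hypothetical helper names (`conf_value`, `bridge_nf_enabled`) and only inspects the config file, not the live kernel. One assumption about the environment that the original steps do not mention: the net.bridge.* keys only exist once the br_netfilter kernel module is loaded (for example with `modprobe br_netfilter`), so `sysctl --system` may not apply them on a host where the module is missing.

```shell
#!/bin/sh
# conf_value KEY FILE: print the value assigned to KEY in a sysctl-style
# config file ("key = value"). Comment lines are skipped; the last
# assignment wins.
conf_value() {
    awk -F= -v key="$1" '
        /^[[:space:]]*[#;]/ { next }
        {
            k = $1; v = $2
            gsub(/[[:space:]]/, "", k); gsub(/[[:space:]]/, "", v)
            if (k == key) val = v
        }
        END { if (val != "") print val }' "$2"
}

# bridge_nf_enabled FILE: succeed when both bridge-nf-call keys are set to 1.
bridge_nf_enabled() {
    [ "$(conf_value net.bridge.bridge-nf-call-iptables "$1")" = "1" ] &&
    [ "$(conf_value net.bridge.bridge-nf-call-ip6tables "$1")" = "1" ]
}
```

For example, `bridge_nf_enabled /etc/sysctl.d/k8s.conf && echo ok` confirms the file contents.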

5. Use the Alibaba Cloud package mirror (for users in mainland China)

# cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes Repo
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
gpgcheck=0
enabled=1
EOF

Install Docker

1. Install gcc and related packages with yum

# yum -y install gcc gcc-c++

2. Install the required packages

# yum install -y yum-utils device-mapper-persistent-data lvm2

3. Add the stable repository

# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

4. Refresh the yum package index

# yum makecache fast

5. Install Docker CE

# yum -y install docker-ce

6. Start Docker

# systemctl start docker

7. Verify the installation

# docker version

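8. Configure a registry mirror

Pulling Docker images from mainland China can be very slow because of network conditions, so it helps to configure a registry mirror (accelerator). I use the mirror address from my own Alibaba Cloud account; you need to register and obtain one of your own. Visit https://dev.aliyun.com/search.html, log in, open Container Registry > Image Accelerator, and copy your own accelerator address.

sudo mkdir -p /etc/docker
sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://2scpgdgo.mirror.aliyuncs.com"]
}
EOF
sudo systemctl daemon-reload
sudo systemctl restart docker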
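The kubeadm init output recorded later in this post includes an IsDockerSystemdCheck warning: Docker is using the "cgroupfs" cgroup driver while "systemd" is recommended. One possible way to address it is to set the driver in the same daemon.json. The sketch below is an optional variation, not what the original setup ran: `write_daemon_json` is a hypothetical helper, and `exec-opts` with `native.cgroupdriver=systemd` is the Docker daemon option for the cgroup driver. It is best applied before `kubeadm init`, followed by a Docker restart.

```shell
#!/bin/sh
# write_daemon_json MIRROR_URL [FILE]: write a Docker daemon.json that sets the
# registry mirror and switches the cgroup driver to systemd.
# FILE defaults to /etc/docker/daemon.json and is overwritten.
write_daemon_json() {
    mirror="$1"; out="${2:-/etc/docker/daemon.json}"
    mkdir -p "$(dirname "$out")"
    cat > "$out" <<EOF
{
  "registry-mirrors": ["$mirror"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Example (run as root, then restart Docker):
#   write_daemon_json "https://2scpgdgo.mirror.aliyuncs.com"
#   systemctl daemon-reload && systemctl restart docker
```

Afterwards, `docker info` should report the cgroup driver in use.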

Install the Kubernetes components

1. Install kubeadm, kubelet, and kubectl

# yum install -y kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0   // releases are frequent, so version 1.18.0 is pinned here

2. Enable the services at boot

# systemctl enable --now docker
# systemctl enable --now kubelet

3. Deploy the Kubernetes master

kubeadm init \
--apiserver-advertise-address=192.168.130.13 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.18.0 \
--service-cidr=10.111.0.0/16 \
--pod-network-cidr=10.112.0.0/16

// Parameters:
// --apiserver-advertise-address=192.168.130.13   the address the API server advertises, i.e. the IP of the master host
// --image-repository registry.aliyuncs.com/google_containers   pull the control-plane images from Alibaba Cloud instead
// --kubernetes-version v1.18.0   the Kubernetes version to install; if omitted, the latest version is used by default
// --service-cidr=10.111.0.0/16   the address range used for Services created later
// --pod-network-cidr=10.112.0.0/16   the address range used for pods; it must match the pod subnet that Calico uses later
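The service and pod ranges must not overlap each other or the network the nodes live on. The sketch below checks that with plain shell arithmetic. The function names are hypothetical, and the node network 192.168.130.0/24 is an assumption inferred from the node IPs in this post (the real prefix length is not stated).

```shell
#!/bin/sh
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS; IFS=.
    set -- $1          # split the address on dots
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# cidr_range CIDR: print "first last" (as integers) for an IPv4 CIDR block.
cidr_range() {
    addr=${1%/*}; bits=${1#*/}
    base=$(ip_to_int "$addr")
    size=$(( 1 << (32 - bits) ))
    first=$(( base / size * size ))   # clear the host bits
    echo "$first $(( first + size - 1 ))"
}

# cidrs_overlap CIDR1 CIDR2: succeed when the two blocks share any address.
cidrs_overlap() {
    set -- $(cidr_range "$1") $(cidr_range "$2")
    [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Example: compare the ranges used in this post against the assumed node network.
#   cidrs_overlap 10.112.0.0/16 192.168.130.0/24 || echo "pod CIDR is clear"
```

This also shows why a pod range such as 192.168.0.0/16 (the value in the commented-out default inside calico.yaml) would be a poor fit here: it would contain the node addresses.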

Installation output

# kubeadm init \
> --apiserver-advertise-address=192.168.130.13 \
> --image-repository registry.aliyuncs.com/google_containers \
> --kubernetes-version v1.18.0 \
> --service-cidr=10.111.0.0/16 \
> --pod-network-cidr=10.112.0.0/16
W0513 13:45:30.441548 20978 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
[init] Using Kubernetes version: v1.18.0
[preflight] Running pre-flight checks
[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'
[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.6. Latest validated version: 19.03
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.111.0.1 192.168.130.13]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.130.13 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.130.13 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
W0513 14:02:25.753137 20978 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
[control-plane] Creating static Pod manifest for "kube-scheduler"
W0513 14:02:25.754305 20978 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 17.002431 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"
[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
[bootstrap-token] Using token: 2qispp.gg8714dxjdg9yob8
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.130.13:6443 --token 2qispp.gg8714dxjdg9yob8 \
    --discovery-token-ca-cert-hash sha256:c2e70b7d0faed212e92eeb2968091bca8f815ced6d3b2f1c2b858be8867a1f9b

*Run the following on the master node only

1. Configure the client

# mkdir -p $HOME/.kube
# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
# sudo chown $(id -u):$(id -g) $HOME/.kube/config

2. Allow pods to be scheduled on the master node (remove the master taint)

# kubectl taint nodes --all node-role.kubernetes.io/master-

Install the Calico network plugin

We install version v3.13.3.

Contents of calico.yaml

---
# Source: calico/templates/calico-config.yaml
# This ConfigMap is used to configure a self-hosted Calico installation.
kind: ConfigMap
apiVersion: v1
metadata:
  name: calico-config
  namespace: kube-system
data:
  # Typha is disabled.
  typha_service_name: "none"
  # Configure the backend to use.
  calico_backend: "bird"
  # Configure the MTU to use
  veth_mtu: "1440"
  # The CNI network configuration to install on each node. The special
  # values in this config will be automatically populated.
  cni_network_config: |-
    {
      "name": "k8s-pod-network",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "calico",
          "log_level": "info",
          "datastore_type": "kubernetes",
          "nodename": "__KUBERNETES_NODE_NAME__",
          "mtu": __CNI_MTU__,
          "ipam": {
              "type": "calico-ipam"
          },
          "policy": {
              "type": "k8s"
          },
          "kubernetes": {
              "kubeconfig": "__KUBECONFIG_FILEPATH__"
          }
        },
        {
          "type": "portmap",
          "snat": true,
          "capabilities": {"portMappings": true}
        },
        {
          "type": "bandwidth",
          "capabilities": {"bandwidth": true}
        }
      ]
    }
---
# Source: calico/templates/kdd-crds.yaml
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: bgpconfigurations.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: BGPConfiguration
    plural: bgpconfigurations
    singular: bgpconfiguration
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: bgppeers.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: BGPPeer
    plural: bgppeers
    singular: bgppeer
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: blockaffinities.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: BlockAffinity
    plural: blockaffinities
    singular: blockaffinity
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: clusterinformations.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: ClusterInformation
    plural: clusterinformations
    singular: clusterinformation
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: felixconfigurations.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: FelixConfiguration
    plural: felixconfigurations
    singular: felixconfiguration
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: globalnetworkpolicies.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: GlobalNetworkPolicy
    plural: globalnetworkpolicies
    singular: globalnetworkpolicy
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: globalnetworksets.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: GlobalNetworkSet
    plural: globalnetworksets
    singular: globalnetworkset
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: hostendpoints.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: HostEndpoint
    plural: hostendpoints
    singular: hostendpoint
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ipamblocks.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPAMBlock
    plural: ipamblocks
    singular: ipamblock
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ipamconfigs.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPAMConfig
    plural: ipamconfigs
    singular: ipamconfig
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ipamhandles.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPAMHandle
    plural: ipamhandles
    singular: ipamhandle
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ippools.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPPool
    plural: ippools
    singular: ippool
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: networkpolicies.crd.projectcalico.org
spec:
  scope: Namespaced
  group: crd.projectcalico.org
  version: v1
  names:
    kind: NetworkPolicy
    plural: networkpolicies
    singular: networkpolicy
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: networksets.crd.projectcalico.org
spec:
  scope: Namespaced
  group: crd.projectcalico.org
  version: v1
  names:
    kind: NetworkSet
    plural: networksets
    singular: networkset
---
---
# Source: calico/templates/rbac.yaml
# Include a clusterrole for the kube-controllers component,
# and bind it to the calico-kube-controllers serviceaccount.
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: calico-kube-controllers
rules:
  # Nodes are watched to monitor for deletions.
  - apiGroups: [""]
    resources:
      - nodes
    verbs:
      - watch
      - list
      - get
  # Pods are queried to check for existence.
  - apiGroups: [""]
    resources:
      - pods
    verbs:
      - get
  # IPAM resources are manipulated when nodes are deleted.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - ippools
    verbs:
      - list
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - blockaffinities
      - ipamblocks
      - ipamhandles
    verbs:
      - get
      - list
      - create
      - update
      - delete
  # Needs access to update clusterinformations.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - clusterinformations
    verbs:
      - get
      - create
      - update
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: calico-kube-controllers
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: calico-kube-controllers
subjects:
- kind: ServiceAccount
  name: calico-kube-controllers
  namespace: kube-system
---
# Include a clusterrole for the calico-node DaemonSet,
# and bind it to the calico-node serviceaccount.
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: calico-node
rules:
  # The CNI plugin needs to get pods, nodes, and namespaces.
  - apiGroups: [""]
    resources:
      - pods
      - nodes
      - namespaces
    verbs:
      - get
  - apiGroups: [""]
    resources:
      - endpoints
      - services
    verbs:
      # Used to discover service IPs for advertisement.
      - watch
      - list
      # Used to discover Typhas.
      - get
  # Pod CIDR auto-detection on kubeadm needs access to config maps.
  - apiGroups: [""]
    resources:
      - configmaps
    verbs:
      - get
  - apiGroups: [""]
    resources:
      - nodes/status
    verbs:
      # Needed for clearing NodeNetworkUnavailable flag.
      - patch
      # Calico stores some configuration information in node annotations.
      - update
  # Watch for changes to Kubernetes NetworkPolicies.
  - apiGroups: ["networking.k8s.io"]
    resources:
      - networkpolicies
    verbs:
      - watch
      - list
  # Used by Calico for policy information.
  - apiGroups: [""]
    resources:
      - pods
      - namespaces
      - serviceaccounts
    verbs:
      - list
      - watch
  # The CNI plugin patches pods/status.
  - apiGroups: [""]
    resources:
      - pods/status
    verbs:
      - patch
  # Calico monitors various CRDs for config.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - globalfelixconfigs
      - felixconfigurations
      - bgppeers
      - globalbgpconfigs
      - bgpconfigurations
      - ippools
      - ipamblocks
      - globalnetworkpolicies
      - globalnetworksets
      - networkpolicies
      - networksets
      - clusterinformations
      - hostendpoints
      - blockaffinities
    verbs:
      - get
      - list
      - watch
  # Calico must create and update some CRDs on startup.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - ippools
      - felixconfigurations
      - clusterinformations
    verbs:
      - create
      - update
  # Calico stores some configuration information on the node.
  - apiGroups: [""]
    resources:
      - nodes
    verbs:
      - get
      - list
      - watch
  # These permissions are only required for upgrade from v2.6, and can
  # be removed after upgrade or on fresh installations.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - bgpconfigurations
      - bgppeers
    verbs:
      - create
      - update
  # These permissions are required for Calico CNI to perform IPAM allocations.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - blockaffinities
      - ipamblocks
      - ipamhandles
    verbs:
      - get
      - list
      - create
      - update
      - delete
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - ipamconfigs
    verbs:
      - get
  # Block affinities must also be watchable by confd for route aggregation.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - blockaffinities
    verbs:
      - watch
  # The Calico IPAM migration needs to get daemonsets. These permissions can be
  # removed if not upgrading from an installation using host-local IPAM.
  - apiGroups: ["apps"]
    resources:
      - daemonsets
    verbs:
      - get
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: calico-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: calico-node
subjects:
- kind: ServiceAccount
  name: calico-node
  namespace: kube-system
---
# Source: calico/templates/calico-node.yaml
# This manifest installs the calico-node container, as well
# as the CNI plugins and network config on
# each master and worker node in a Kubernetes cluster.
kind: DaemonSet
apiVersion: apps/v1
metadata:
  name: calico-node
  namespace: kube-system
  labels:
    k8s-app: calico-node
spec:
  selector:
    matchLabels:
      k8s-app: calico-node
  updateStrategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  template:
    metadata:
      labels:
        k8s-app: calico-node
      annotations:
        # This, along with the CriticalAddonsOnly toleration below,
        # marks the pod as a critical add-on, ensuring it gets
        # priority scheduling and that its resources are reserved
        # if it ever gets evicted.
        scheduler.alpha.kubernetes.io/critical-pod: ''
    spec:
      nodeSelector:
        kubernetes.io/os: linux
      hostNetwork: true
      tolerations:
        # Make sure calico-node gets scheduled on all nodes.
        - effect: NoSchedule
          operator: Exists
        # Mark the pod as a critical add-on for rescheduling.
        - key: CriticalAddonsOnly
          operator: Exists
        - effect: NoExecute
          operator: Exists
      serviceAccountName: calico-node
      # Minimize downtime during a rolling upgrade or deletion; tell Kubernetes to do a "force
      # deletion": https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods.
      terminationGracePeriodSeconds: 0
      priorityClassName: system-node-critical
      initContainers:
        # This container performs upgrade from host-local IPAM to calico-ipam.
        # It can be deleted if this is a fresh installation, or if you have already
        # upgraded to use calico-ipam.
        - name: upgrade-ipam
          image: calico/cni:v3.13.3
          command: ["/opt/cni/bin/calico-ipam", "-upgrade"]
          env:
            - name: KUBERNETES_NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            - name: CALICO_NETWORKING_BACKEND
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: calico_backend
          volumeMounts:
            - mountPath: /var/lib/cni/networks
              name: host-local-net-dir
            - mountPath: /host/opt/cni/bin
              name: cni-bin-dir
          securityContext:
            privileged: true
        # This container installs the CNI binaries
        # and CNI network config file on each node.
        - name: install-cni
          image: calico/cni:v3.13.3
          command: ["/install-cni.sh"]
          env:
            # Name of the CNI config file to create.
            - name: CNI_CONF_NAME
              value: "10-calico.conflist"
            # The CNI network config to install on each node.
            - name: CNI_NETWORK_CONFIG
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: cni_network_config
            # Set the hostname based on the k8s node name.
            - name: KUBERNETES_NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            # CNI MTU Config variable
            - name: CNI_MTU
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: veth_mtu
            # Prevents the container from sleeping forever.
            - name: SLEEP
              value: "false"
          volumeMounts:
            - mountPath: /host/opt/cni/bin
              name: cni-bin-dir
            - mountPath: /host/etc/cni/net.d
              name: cni-net-dir
          securityContext:
            privileged: true
        # Adds a Flex Volume Driver that creates a per-pod Unix Domain Socket to allow Dikastes
        # to communicate with Felix over the Policy Sync API.
        - name: flexvol-driver
          image: calico/pod2daemon-flexvol:v3.13.3
          volumeMounts:
          - name: flexvol-driver-host
            mountPath: /host/driver
          securityContext:
            privileged: true
      containers:
        # Runs calico-node container on each Kubernetes node. This
        # container programs network policy and routes on each
        # host.
        - name: calico-node
          image: calico/node:v3.13.3
          env:
            # Use Kubernetes API as the backing datastore.
            - name: DATASTORE_TYPE
              value: "kubernetes"
            # Wait for the datastore.
            - name: WAIT_FOR_DATASTORE
              value: "true"
            # Set based on the k8s node name.
            - name: NODENAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            # Choose the backend to use.
            - name: CALICO_NETWORKING_BACKEND
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: calico_backend
            # Cluster type to identify the deployment type
            - name: CLUSTER_TYPE
              value: "k8s,bgp"
            # Auto-detect the BGP IP address.
            - name: IP
              value: "autodetect"
            # Enable IPIP
            - name: CALICO_IPV4POOL_IPIP
              value: "Always"
            # Set MTU for tunnel device used if ipip is enabled
            - name: FELIX_IPINIPMTU
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: veth_mtu
            # The default IPv4 pool to create on startup if none exists. Pod IPs will be
            # chosen from this range. Changing this value after installation will have
            # no effect. This should fall within `--cluster-cidr`.
            # - name: CALICO_IPV4POOL_CIDR
            #   value: "192.168.0.0/16"
            # Disable file logging so `kubectl logs` works.
            - name: CALICO_DISABLE_FILE_LOGGING
              value: "true"
            # Set Felix endpoint to host default action to ACCEPT.
            - name: FELIX_DEFAULTENDPOINTTOHOSTACTION
              value: "ACCEPT"
            # Disable IPv6 on Kubernetes.
            - name: FELIX_IPV6SUPPORT
              value: "false"
            # Set Felix logging to "info"
            - name: FELIX_LOGSEVERITYSCREEN
              value: "info"
            - name: FELIX_HEALTHENABLED
              value: "true"
          securityContext:
            privileged: true
          resources:
            requests:
              cpu: 250m
          livenessProbe:
            exec:
              command:
              - /bin/calico-node
              - -felix-live
              - -bird-live
            periodSeconds: 10
            initialDelaySeconds: 10
            failureThreshold: 6
          readinessProbe:
            exec:
              command:
              - /bin/calico-node
              - -felix-ready
              - -bird-ready
            periodSeconds: 10
          volumeMounts:
            - mountPath: /lib/modules
              name: lib-modules
              readOnly: true
            - mountPath: /run/xtables.lock
              name: xtables-lock
              readOnly: false
            - mountPath: /var/run/calico
              name: var-run-calico
              readOnly: false
            - mountPath: /var/lib/calico
              name: var-lib-calico
              readOnly: false
            - name: policysync
              mountPath: /var/run/nodeagent
      volumes:
        # Used by calico-node.
        - name: lib-modules
          hostPath:
            path: /lib/modules
        - name: var-run-calico
          hostPath:
            path: /var/run/calico
        - name: var-lib-calico
          hostPath:
            path: /var/lib/calico
        - name: xtables-lock
          hostPath:
            path: /run/xtables.lock
            type: FileOrCreate
        # Used to install CNI.
        - name: cni-bin-dir
          hostPath:
            path: /opt/cni/bin
        - name: cni-net-dir
          hostPath:
            path: /etc/cni/net.d
        # Mount in the directory for host-local IPAM allocations. This is
        # used when upgrading from host-local to calico-ipam, and can be removed
        # if not using the upgrade-ipam init container.
        - name: host-local-net-dir
          hostPath:
            path: /var/lib/cni/networks
        # Used to create per-pod Unix Domain Sockets
        - name: policysync
          hostPath:
            type: DirectoryOrCreate
            path: /var/run/nodeagent
        # Used to install Flex Volume Driver
        - name: flexvol-driver-host
          hostPath:
            type: DirectoryOrCreate
            path: /usr/libexec/kubernetes/kubelet-plugins/volume/exec/nodeagent~uds
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: calico-node
  namespace: kube-system
---
# Source: calico/templates/calico-kube-controllers.yaml
# See https://github.com/projectcalico/kube-controllers
apiVersion: apps/v1
kind: Deployment
metadata:
  name: calico-kube-controllers
  namespace: kube-system
  labels:
    k8s-app: calico-kube-controllers
spec:
  # The controllers can only have a single active instance.
  replicas: 1
  selector:
    matchLabels:
      k8s-app: calico-kube-controllers
  strategy:
    type: Recreate
  template:
    metadata:
      name: calico-kube-controllers
      namespace: kube-system
      labels:
        k8s-app: calico-kube-controllers
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
    spec:
      nodeSelector:
        kubernetes.io/os: linux
      tolerations:
        # Mark the pod as a critical add-on for rescheduling.
        - key: CriticalAddonsOnly
          operator: Exists
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      serviceAccountName: calico-kube-controllers
      priorityClassName: system-cluster-critical
      containers:
        - name: calico-kube-controllers
          image: calico/kube-controllers:v3.13.3
          env:
            # Choose which controllers to run.
            - name: ENABLED_CONTROLLERS
              value: node
            - name: DATASTORE_TYPE
              value: kubernetes
          readinessProbe:
            exec:
              command:
              - /usr/bin/check-status
              - -r
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: calico-kube-controllers
  namespace: kube-system
---
# Source: calico/templates/calico-etcd-secrets.yaml
---
# Source: calico/templates/calico-typha.yaml
---
# Source: calico/templates/configure-canal.yaml
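In the manifest above, CALICO_IPV4POOL_CIDR is left commented out, and one of the RBAC comments mentions pod CIDR auto-detection on kubeadm, so Calico may well pick up 10.112.0.0/16 by itself. If you would rather pin the pool explicitly to the value passed to `--pod-network-cidr`, a sed edit along these lines is one option to run before applying the file. It is a sketch: `set_calico_pool_cidr` is a hypothetical helper, and it assumes the two commented lines look exactly as they do in this v3.13.3 manifest.

```shell
#!/bin/sh
# set_calico_pool_cidr CIDR FILE: uncomment CALICO_IPV4POOL_CIDR in a Calico
# manifest and set its value to CIDR, keeping the original indentation.
set_calico_pool_cidr() {
    cidr="$1"; manifest="$2"
    sed -e 's|^\([[:space:]]*\)# - name: CALICO_IPV4POOL_CIDR|\1- name: CALICO_IPV4POOL_CIDR|' \
        -e 's|^\([[:space:]]*\)#   value: "192.168.0.0/16"|\1  value: "'"$cidr"'"|' \
        "$manifest" > "$manifest.tmp" && mv "$manifest.tmp" "$manifest"
}

# Example, using the pod CIDR from this post:
#   set_calico_pool_cidr 10.112.0.0/16 calico.yaml
```

The rewrite goes through a temporary file rather than `sed -i`, so the same function works with sed implementations that handle `-i` differently.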

Create Calico

# kubectl apply -f calico.yaml

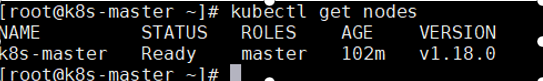

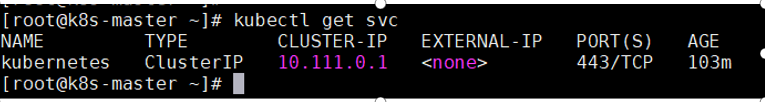

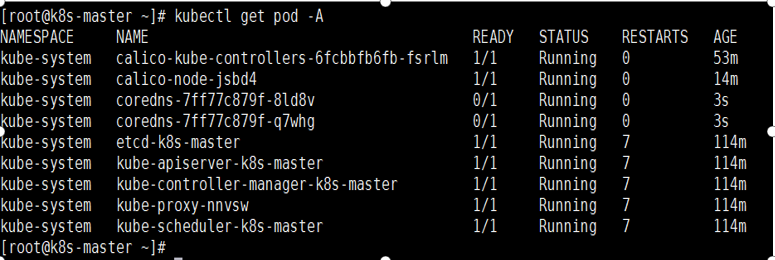

Verify that the deployment succeeded

# kubectl get nodes
# kubectl get svc
# kubectl get pods -A

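Add the worker nodes

Use kubeadm join to register each node with the master. The kubeadm join command was already printed by kubeadm init above; note that it points at the API server on the master (192.168.130.13:6443).

kubeadm join 192.168.130.13:6443 --token 2qispp.gg8714dxjdg9yob8 \
--discovery-token-ca-cert-hash sha256:c2e70b7d0faed212e92eeb2968091bca8f815ced6d3b2f1c2b858be8867a1f9b

A token is valid for 24 hours by default. Once it expires it can no longer be used, and a new one has to be generated:

kubeadm token create --print-join-command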
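When a join fails, a mistyped or truncated token is a common cause. The helpers below are a rough sketch for sanity-checking a saved join command before running it. The names `valid_bootstrap_token` and `join_token` are hypothetical; the check relies only on the bootstrap-token shape of six characters, a dot, and sixteen characters from [a-z0-9]. It cannot tell whether a token has expired; `kubeadm token list` on the master shows that.

```shell
#!/bin/sh
# valid_bootstrap_token TOKEN: succeed when TOKEN has the bootstrap-token
# shape "[a-z0-9]{6}.[a-z0-9]{16}".
valid_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# join_token: read a "kubeadm join ..." command on stdin (possibly split over
# several lines with backslashes) and print the value that follows --token.
join_token() {
    tr '\\\n' '  ' |
    awk '{ for (i = 1; i < NF; i++) if ($i == "--token") print $(i + 1) }'
}

# Example on the master:
#   token=$(kubeadm token create --print-join-command | join_token)
#   valid_bootstrap_token "$token" && echo "token looks well-formed"
```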

Verify the cluster state again

[root@k8s-master ~]# kubectl get nodes
NAME         STATUS     ROLES    AGE   VERSION
k8s-master   Ready      master   12d   v1.18.0
k8s-node1    Ready      <none>   12d   v1.18.0
k8s-node2    NotReady   <none>   12d   v1.18.0
[root@k8s-master ~]#
[root@k8s-master ~]# kubectl get svc -n kube-system
NAME       TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)                  AGE
kube-dns   ClusterIP   10.111.0.10   <none>        53/UDP,53/TCP,9153/TCP   12d
[root@k8s-master ~]#
[root@k8s-master ~]# kubectl get po -n kube-system
NAME                                       READY   STATUS    RESTARTS   AGE
calico-kube-controllers-6fcbbfb6fb-fsrlm   1/1     Running   11         12d
calico-node-5plbs                          1/1     Running   2          12d
calico-node-gsgjp                          0/1     Running   8          12d
calico-node-jsbd4                          0/1     Running   11         12d
coredns-7ff77c879f-8ld8v                   1/1     Running   11         12d
coredns-7ff77c879f-q7whg                   1/1     Running   11         12d
etcd-k8s-master                            1/1     Running   18         12d
kube-apiserver-k8s-master                  1/1     Running   22         12d
kube-controller-manager-k8s-master         1/1     Running   19         12d
kube-proxy-84q8x                           1/1     Running   8          12d
kube-proxy-nnvsw                           1/1     Running   18         12d
kube-proxy-rfv8n                           1/1     Running   2          12d
kube-scheduler-k8s-master                  1/1     Running   19         12d
[root@k8s-master ~]#
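In the output above, one node is NotReady and two calico-node pods show 0/1 in the READY column even though their status is Running. Filtering the tables makes such entries easier to spot. This is a small sketch with hypothetical function names that relies only on the default column layout of `kubectl get nodes` and of `kubectl get pods` for a single namespace.

```shell
#!/bin/sh
# not_ready_nodes: read `kubectl get nodes` output on stdin and print the name
# of every node whose STATUS column is not exactly "Ready".
not_ready_nodes() {
    awk 'NR > 1 && NF >= 2 && $2 != "Ready" { print $1 }'
}

# unready_pods: read `kubectl get pods -n NAMESPACE` output on stdin (columns
# NAME READY STATUS ...) and print pods whose READY value "x/y" has x != y.
unready_pods() {
    awk 'NR > 1 && NF >= 2 { split($2, r, "/"); if (r[1] != r[2]) print $1 }'
}

# Example on the master:
#   kubectl get nodes | not_ready_nodes
#   kubectl get pods -n kube-system | unready_pods
```

From there, `kubectl describe node` or `kubectl logs` on the listed names is the usual next step.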

