PyTorch Official Tutorial in Chinese (4): Training a Classifier

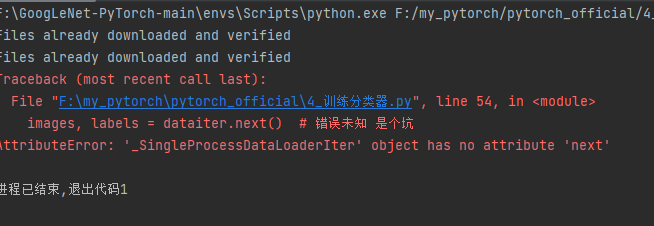

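1. Pitfalls in the code

images, labels = dataiter.next() # unknown error; this is a pitfall

Fix:

Replace images, labels = dataiter.next() with images, labels = next(dataiter).

It runs successfully!

Searching online for how Python iterators are written, I did not find the .next() form either. It is the Python 2 iterator method; in Python 3 an iterator is advanced with the built-in next().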
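The difference comes from the iterator protocol itself. The sketch below uses a plain Python list in place of the DataLoader (an assumption, so that it runs without PyTorch) to show that the built-in `next()` works on any Python 3 iterator, while a `.next()` method exists only if a class happens to define one.

```python
# Minimal sketch: a plain list stands in for the DataLoader (assumption).
batches = [("images_0", "labels_0"), ("images_1", "labels_1")]
dataiter = iter(batches)

# Python 3 style: the built-in next() calls dataiter.__next__().
images, labels = next(dataiter)
print(images, labels)

# Python 2 style: a Python 3 list iterator has no .next() method.
has_next_method = hasattr(dataiter, "next")
print(has_next_method)
```

Because `next(dataiter)` relies only on `__next__`, it does not depend on whether the installed library still provides a `.next()` alias.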

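2. The code

"""
We will do the following steps in order:

Load and normalize the CIFAR10 training and test datasets using torchvision
Define a convolutional neural network
Define a loss function
Train the network on the training data
Test the network on the test data
"""

"""1. Load and normalize CIFAR10"""
import torch
import torchvision
import torchvision.transforms as transforms

# The outputs of torchvision datasets are PILImage images in the range [0, 1].
# We transform them to tensors normalized to the range [-1, 1].
transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='.\\data', train=True,
                                        download=True, transform=transform)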

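# trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
#                                           shuffle=True, num_workers=2)
# Cause of the error: with num_workers > 0 the data is loaded in worker subprocesses.
# Linux starts them with fork, but Windows starts them with spawn, which re-imports
# this script, so the call fails unless the script body is guarded by
# if __name__ == '__main__':. On Windows, setting num_workers=0 (the default)
# avoids the problem.
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=0)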

testset = torchvision.datasets.CIFAR10(root='.\\data', train=False,
                                       download=True, transform=transform)
# testloader = torch.utils.data.DataLoader(testset, batch_size=4,
#                                          shuffle=False, num_workers=2)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=0)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

import matplotlib.pyplot as plt
import numpy as np

# functions to show an image
def imshow(img):
    img = img / 2 + 0.5  # unnormalize
    npimg = img.numpy()
    plt.imshow(np.transpose(npimg, (1, 2, 0)))
    plt.show()
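The `Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))` transform and the `img / 2 + 0.5` line in `imshow` undo each other. The sketch below reproduces the arithmetic on single pixel values in plain Python; the helper names `normalize` and `unnormalize` are made up for illustration and are not part of torchvision.

```python
def normalize(x, mean=0.5, std=0.5):
    # Same per-channel arithmetic as transforms.Normalize: (x - mean) / std.
    return (x - mean) / std


def unnormalize(y):
    # Same arithmetic as `img / 2 + 0.5` in imshow.
    return y / 2 + 0.5


for pixel in (0.0, 0.25, 1.0):
    scaled = normalize(pixel)
    print(pixel, '->', scaled, '->', unnormalize(scaled))
```

With mean 0.5 and standard deviation 0.5, the input range [0, 1] maps to [-1, 1], and `unnormalize` maps it back so matplotlib receives values in [0, 1].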

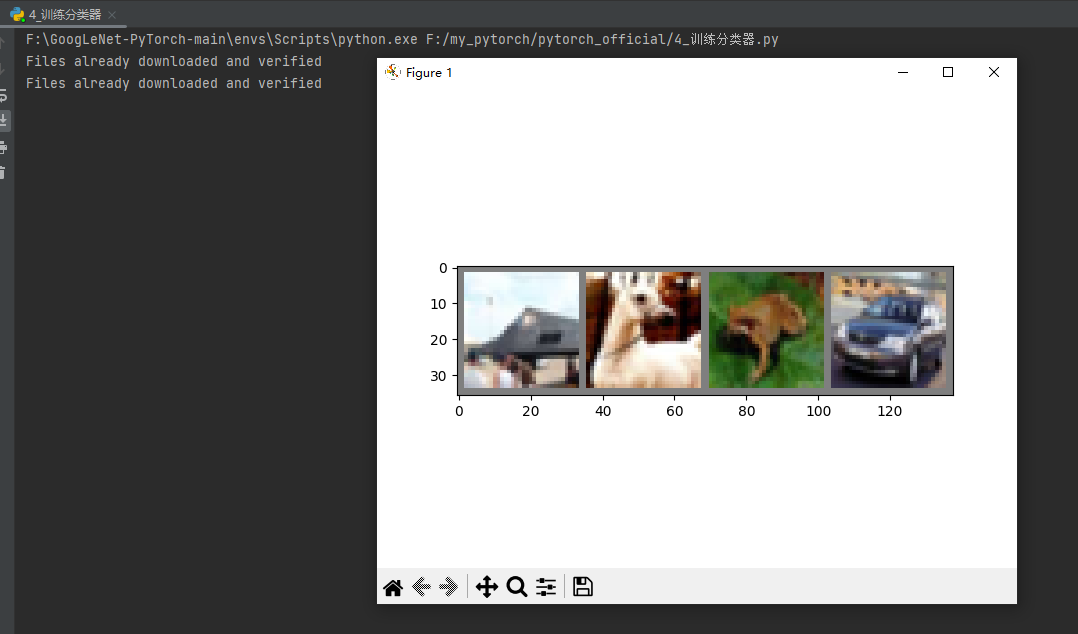

# get some random training images
dataiter = iter(trainloader)
# images, labels = dataiter.next()  # unknown error; this is a pitfall
images, labels = next(dataiter)

# show images
imshow(torchvision.utils.make_grid(images))
# print labels
print(' '.join('%5s' % classes[labels[j]] for j in range(4)))

"""2. Define a convolutional neural network"""
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 16 * 5 * 5)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x
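The `16 * 5 * 5` input size of `fc1` follows from how each layer shrinks a 32x32 CIFAR10 image. The sketch below traces the spatial size using the standard output-size formula for convolution and pooling windows; `conv_out` is a hypothetical helper written for this illustration, not a PyTorch function.

```python
def conv_out(size, kernel, stride=1, padding=0):
    # Output length of a convolution or pooling window along one dimension.
    return (size + 2 * padding - kernel) // stride + 1


size = 32                                    # CIFAR10 images are 32x32
size = conv_out(size, kernel=5)              # conv1: 32 -> 28
size = conv_out(size, kernel=2, stride=2)    # pool:  28 -> 14
size = conv_out(size, kernel=5)              # conv2: 14 -> 10
size = conv_out(size, kernel=2, stride=2)    # pool:  10 -> 5
flat_features = 16 * size * size             # 16 channels from conv2
print(size, flat_features)                   # prints: 5 400
```

If the kernel sizes or the input resolution change, the first argument of `fc1` and the `view` call in `forward` have to change with them.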

net = Net()

"""3. Define a loss function and optimizer"""
import torch.optim as optim

criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)

"""4. Train the network"""
is_train = True
if is_train:
    for epoch in range(10):  # loop over the dataset multiple times
        running_loss = 0.0
        for i, data in enumerate(trainloader, 0):
            # get the inputs; data is a list of [inputs, labels]
            inputs, labels = data

            # zero the parameter gradients
            optimizer.zero_grad()

            # forward + backward + optimize
            outputs = net(inputs)
            loss = criterion(outputs, labels)
            loss.backward()
            optimizer.step()

            # print statistics
            running_loss += loss.item()
            if i % 2000 == 1999:  # print every 2000 mini-batches
                print('[%d, %5d] loss: %.3f' %
                      (epoch + 1, i + 1, running_loss / 2000))
                running_loss = 0.0

    print('Finished Training')

    PATH = './cifar_net.pth'
    torch.save(net.state_dict(), PATH)

"""5. Test the network on the test data"""
dataiter = iter(testloader)
# images, labels = dataiter.next()
images, labels = next(dataiter)

# print images
imshow(torchvision.utils.make_grid(images))
print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))

net = Net()
PATH = './cifar_net.pth'
net.load_state_dict(torch.load(PATH))

outputs = net(images)
_, predicted = torch.max(outputs, 1)
print('Predicted: ', ' '.join('%5s' % classes[predicted[j]]
                              for j in range(4)))

# Let us look at how the network performs on the whole dataset.
correct = 0
total = 0
with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predicted = torch.max(outputs.data, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

print('Accuracy of the network on the 10000 test images: %d %%' % (
    100 * correct / total))
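The accuracy loop does two things: it takes the index of the largest score in each row as the predicted class, then counts matches with the labels. The sketch below does the same with plain Python lists; the scores and labels are made-up values for three classes, used only to make the arithmetic visible.

```python
# Hypothetical scores for 4 images over 3 classes (the network above has 10).
outputs = [
    [0.1, 2.0, -1.0],
    [1.5, 0.2, 0.3],
    [0.0, 0.1, 0.9],
    [2.2, 0.4, 0.1],
]
labels = [1, 0, 1, 0]

# Same role as `_, predicted = torch.max(outputs, 1)`:
# the index of the largest score in each row.
predicted = [row.index(max(row)) for row in outputs]

correct = sum(p == t for p, t in zip(predicted, labels))
total = len(labels)
print('Accuracy: %d %%' % (100 * correct / total))
```

Note that `%d` truncates the percentage to an integer, so a reported 62 % can stand for any value from 62.0 up to just under 63.0.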

# # Which classes performed well, and which did not:
# class_correct = list(0. for i in range(10))
# class_total = list(0. for i in range(10))
# with torch.no_grad():
#     for data in testloader:
#         images, labels = data
#         outputs = net(images)
#         _, predicted = torch.max(outputs, 1)
#         c = (predicted == labels).squeeze()
#         for i in range(4):
#             label = labels[i]
#             class_correct[label] += c[i].item()
#             class_total[label] += 1
#
# for i in range(10):
#     print('Accuracy of %5s : %2d %%' % (
#         classes[i], 100 * class_correct[i] / class_total[i]))
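The commented-out per-class code keeps one counter of correct predictions and one counter of samples for every class. A minimal sketch of that bookkeeping in plain Python follows; the three class names, labels and predictions are made-up illustration data, not results from the network.

```python
classes = ('cat', 'dog', 'ship')   # hypothetical subset of the class names
labels = [0, 0, 1, 2, 2, 2]        # made-up ground truth
predicted = [0, 1, 1, 2, 2, 0]     # made-up predictions

class_correct = [0] * len(classes)
class_total = [0] * len(classes)
for label, pred in zip(labels, predicted):
    class_correct[label] += int(label == pred)  # count a hit for the true class
    class_total[label] += 1                     # count every sample of that class

for name, c, t in zip(classes, class_correct, class_total):
    print('Accuracy of %5s : %2d %%' % (name, 100 * c / t))
```

Iterating over `zip(labels, predicted)` instead of `range(4)` also avoids assuming that every batch holds exactly four samples.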

# """
# Training on GPU
# """
# device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
#
# # Assuming that we are on a CUDA machine, this should print a CUDA device:
# print(device)
#
# # These methods then recursively go over all modules and convert their
# # parameters and buffers to CUDA tensors:
# net.to(device)
#
# # Remember that you also have to send the inputs and targets to the GPU at every step:
# inputs, labels = data[0].to(device), data[1].to(device)

3. Sample output

F:\GoogLeNet-PyTorch-main\envs\Scripts\python.exe F:/my_pytorch/pytorch_official/4_训练分类器.py

Files already downloaded and verified

Files already downloaded and verified

deer dog bird bird

[1, 2000] loss: 2.210

[1, 4000] loss: 1.897

[1, 6000] loss: 1.708

[1, 8000] loss: 1.609

[1, 10000] loss: 1.531

[1, 12000] loss: 1.463

[2, 2000] loss: 1.414

[2, 4000] loss: 1.392

[2, 6000] loss: 1.365

[2, 8000] loss: 1.321

[2, 10000] loss: 1.315

[2, 12000] loss: 1.283

[3, 2000] loss: 1.225

[3, 4000] loss: 1.208

[3, 6000] loss: 1.238

[3, 8000] loss: 1.207

[3, 10000] loss: 1.198

[3, 12000] loss: 1.214

[4, 2000] loss: 1.118

[4, 4000] loss: 1.128

[4, 6000] loss: 1.127

[4, 8000] loss: 1.113

[4, 10000] loss: 1.109

[4, 12000] loss: 1.149

[5, 2000] loss: 1.035

[5, 4000] loss: 1.050

[5, 6000] loss: 1.077

[5, 8000] loss: 1.037

[5, 10000] loss: 1.041

[5, 12000] loss: 1.058

[6, 2000] loss: 0.981

[6, 4000] loss: 1.002

[6, 6000] loss: 1.010

[6, 8000] loss: 0.993

[6, 10000] loss: 1.011

[6, 12000] loss: 0.984

[7, 2000] loss: 0.900

[7, 4000] loss: 0.966

[7, 6000] loss: 0.944

[7, 8000] loss: 0.952

[7, 10000] loss: 0.954

[7, 12000] loss: 0.962

[8, 2000] loss: 0.874

[8, 4000] loss: 0.901

[8, 6000] loss: 0.891

[8, 8000] loss: 0.899

[8, 10000] loss: 0.913

[8, 12000] loss: 0.927

[9, 2000] loss: 0.817

[9, 4000] loss: 0.845

[9, 6000] loss: 0.874

[9, 8000] loss: 0.874

[9, 10000] loss: 0.891

[9, 12000] loss: 0.903

[10, 2000] loss: 0.795

[10, 4000] loss: 0.813

[10, 6000] loss: 0.851

[10, 8000] loss: 0.854

[10, 10000] loss: 0.852

[10, 12000] loss: 0.866

Finished Training

GroundTruth: cat ship ship plane

Predicted: dog plane ship plane

Accuracy of the network on the 10000 test images: 62 %

Process finished with exit code 0
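In the log above, the loss lines of every epoch stop at 12000. This follows from the `i % 2000 == 1999` test in the training loop together with the batch size of 4. The sketch below redoes the count, assuming the standard CIFAR10 training set size of 50000 images.

```python
num_images = 50000    # size of the CIFAR10 training set (assumption stated above)
batch_size = 4
report_every = 2000

num_batches = num_images // batch_size
# 1-based batch numbers at which `i % 2000 == 1999` is true.
reported = [i + 1 for i in range(num_batches)
            if i % report_every == report_every - 1]
unreported = num_batches - reported[-1]
print(num_batches, reported, unreported)
```

Each epoch has 12500 mini-batches, so six averages are printed and the last 500 batches are never reported: `running_loss` is reset to 0.0 at the start of the next epoch.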

This article is from cnblogs (博客园), author: JaxonYe. When reposting, please credit the original link: https://www.cnblogs.com/yechangxin/articles/16861588.html

Infringement will be pursued.
