Deploying a Kubernetes Cluster on CentOS 7.9 (with the containerd Runtime)

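Preface

The main features of containerd:

- Supports the OCI image specification

- Supports the OCI runtime specification (runc is its reference implementation)

- Supports pulling images

- Supports container network management

- Multi-tenant storage

- Manages container runtimes and the container lifecycle

- Manages network namespaces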

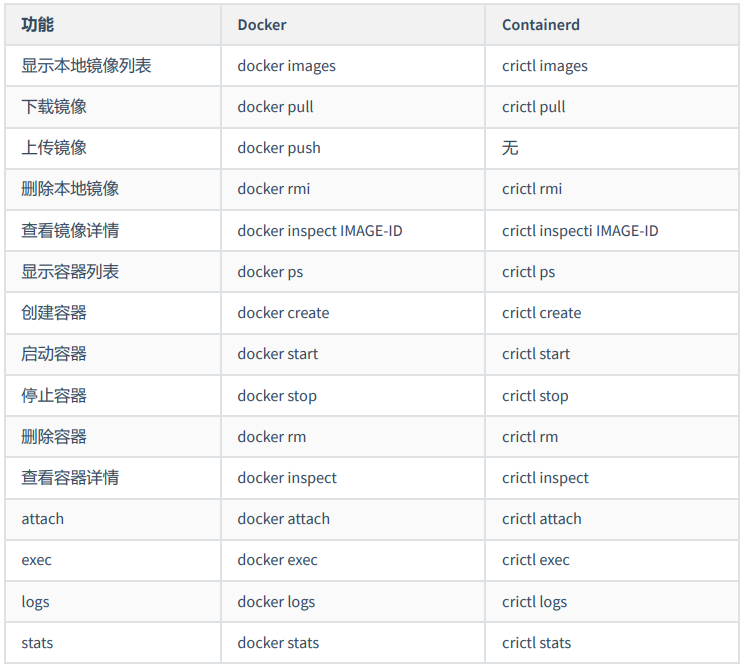

As the figure shows, the usage is largely the same.

The steps below install a Kubernetes cluster with kubeadm, using containerd as the container runtime.

1. Environment Overview

Host nodes

| OS | Node IP | Role | CPU/Memory | Hostname | Kernel |
|---|---|---|---|---|---|
| CentOS 7.9.2009 | 192.168.1.92 | Master (control plane) | 2 cores / 4 GB | master | 3.10.0 |
| CentOS 7.9.2009 | 192.168.1.93 | Worker 1 | 2 cores / 4 GB | node1 | 3.10.0 |
| CentOS 7.9.2009 | 192.168.1.94 | Worker 2 | 2 cores / 4 GB | node2 | 3.10.0 |

Software versions

| Software | Version |
|---|---|
| kubernetes | v1.25.0 |
| containerd | 1.6.10 |

2. Environment Preparation

Note: run the following steps on ALL nodes ------------------------ BEGIN ----------------------------

2.1 Set the hostname (run each command on its own node)

# on master
hostnamectl set-hostname master
# on node1
hostnamectl set-hostname node1
# on node2
hostnamectl set-hostname node2

2.2 Let the three machines resolve each other's hostnames (edit on all nodes)

[root@master ~]# cat >> /etc/hosts << EOF
192.168.1.92 master
192.168.1.93 node1
192.168.1.94 node2
EOF
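If you run the cat >> command twice, the entries are appended twice. Below is a minimal idempotent sketch. The helper name add_host_entry is hypothetical. It appends a mapping only when the hostname is not already present on an uncommented line:

```bash
# Hypothetical helper: append "IP NAME" to a hosts-format file unless NAME is
# already mapped on an uncommented line. The file path is a parameter so the
# function can be tried on a copy before touching /etc/hosts.
add_host_entry() {
    local file=$1 ip=$2 name=$3
    if [ -f "$file" ] && awk -v n="$name" '
            $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$file"; then
        return 0    # hostname already present: nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage (as root, on every node):
# add_host_entry /etc/hosts 192.168.1.92 master
# add_host_entry /etc/hosts 192.168.1.93 node1
# add_host_entry /etc/hosts 192.168.1.94 node2
```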

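2.3 Disable the firewall

systemctl stop firewalld && systemctl disable firewalld
# verify separately: systemctl status exits non-zero for an inactive unit, so chaining it with && would skip the next check
systemctl status firewalld
firewall-cmd --state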

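2.4 Disable SELinux

# switch to permissive mode immediately (lasts until reboot)
setenforce 0
# disable permanently (takes effect after reboot)
sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config && sestatus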
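The sed above rewrites the line only when it currently says enforcing, so a config set to permissive is left unchanged. The sketch below is slightly more general. It uses hypothetical helper names, sets SELINUX=disabled whatever the current value is, and reads the value back:

```bash
# Hypothetical helpers. Set SELINUX=disabled regardless of the current value
# (enforcing or permissive); SELINUXTYPE= lines are not touched.
disable_selinux_config() {
    local file=${1:-/etc/selinux/config}
    sed -i -E 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}

# Print the SELINUX= value from the config file (the mode used after the next boot).
selinux_config_mode() {
    local file=${1:-/etc/selinux/config}
    awk -F= '$1 == "SELINUX" { print $2 }' "$file"
}

# Usage (as root): disable_selinux_config && selinux_config_mode
```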

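2.5 Disable swap

- Turn swap off now with swapoff -a, then comment out the swap entry in /etc/fstab so it is not mounted again at boot. Confirm with free -m that swap is off.

swapoff -a
sed -i '/swap/s/^\(.*\)$/#\1/g' /etc/fstab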
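To confirm that swap is off now and will stay off, check both /proc/swaps (active swap) and /etc/fstab (swap mounted at boot). Below is a sketch with hypothetical helper names:

```bash
# Hypothetical helpers.
# Count active swap devices in a /proc/swaps-style file (the first line is a header).
active_swap_count() {
    local file=${1:-/proc/swaps}
    awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$file"
}

# Succeed (exit 0) if an fstab-style file still has an uncommented swap entry.
fstab_has_active_swap() {
    local file=${1:-/etc/fstab}
    awk '$1 !~ /^#/ && $3 == "swap" { found = 1 } END { exit !found }' "$file"
}

# Usage:
# [ "$(active_swap_count)" -eq 0 ] && ! fstab_has_active_swap && echo "swap is off"
```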

2.6 Flush the iptables rules and set the FORWARD policy to ACCEPT

iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat && iptables -P FORWARD ACCEPT

2.7 Set kernel parameters

- Set vm.swappiness = 0. Add this parameter even though swap is already off.

cat <<EOF > /etc/sysctl.d/k8s.conf
vm.swappiness = 0
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF

2.8 Apply the changes

# br_netfilter must be loaded first, otherwise the net.bridge.* keys do not exist
modprobe br_netfilter
sysctl -p /etc/sysctl.d/k8s.conf
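sysctl -p prints the values it sets. To confirm the live values later (for example after a reboot), read them back from /proc/sys. Below is a sketch with a hypothetical helper. Note that the net.bridge.* keys only exist while br_netfilter is loaded:

```bash
# Hypothetical helper: compare a kernel parameter with the expected value by reading
# /proc/sys directly. ROOT is normally "" (the real /proc); it is a parameter only so
# the function can be tried against a fake directory tree.
check_sysctl() {
    local root=$1 key=$2 expected=$3
    local rel path actual
    rel=$(printf '%s' "$key" | tr . /)          # net.ipv4.ip_forward -> net/ipv4/ip_forward
    path="$root/proc/sys/$rel"
    if [ ! -r "$path" ]; then
        echo "MISSING $key"                      # net.bridge.* is absent until br_netfilter loads
        return 1
    fi
    actual=$(< "$path")
    if [ "$actual" != "$expected" ]; then
        echo "WRONG $key=$actual (expected $expected)"
        return 1
    fi
    echo "OK $key=$actual"
}

# Usage:
# for kv in vm.swappiness=0 net.bridge.bridge-nf-call-iptables=1 \
#           net.bridge.bridge-nf-call-ip6tables=1 net.ipv4.ip_forward=1; do
#     check_sysctl "" "${kv%%=*}" "${kv#*=}"
# done
```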

2.9 Load the IPVS kernel modules

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

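The script above creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots. To check that the required kernel modules are loaded, run lsmod | grep -e ip_vs -e nf_conntrack_ipv4: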

[root@node1 ~]# lsmod | grep -e ip_vs -e nf_conntrack_ipv4
nf_conntrack_ipv4      15053  19
nf_defrag_ipv4         12729  1 nf_conntrack_ipv4
ip_vs_sh               12688  0
ip_vs_wrr              12697  0
ip_vs_rr               12600  4
ip_vs                 145458  10 ip_vs_rr,ip_vs_sh,ip_vs_wrr
nf_conntrack          139264  10 ip_vs,nf_nat,nf_nat_ipv4,nf_nat_ipv6,xt_conntrack,nf_nat_masquerade_ipv4,nf_nat_masquerade_ipv6,nf_conntrack_netlink,nf_conntrack_ipv4,nf_conntrack_ipv6
libcrc32c              12644  4 xfs,ip_vs,nf_nat,nf_conntrack
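On systemd-based systems such as CentOS 7, modules can also be loaded at boot from a file under /etc/modules-load.d/. systemd-modules-load.service reads these files, which list one module name per line. This approach also covers br_netfilter from 2.8, because the modprobe there does not persist across reboots. Below is a sketch with a hypothetical helper. The file name k8s.conf is an arbitrary choice:

```bash
# Hypothetical helper: write a modules-load.d file, one module name per line.
# systemd-modules-load.service reads /etc/modules-load.d/*.conf at boot.
write_modules_load_conf() {
    local file=$1
    shift
    {
        echo "# kernel modules needed by kube-proxy (ipvs mode) and bridge netfilter"
        printf '%s\n' "$@"
    } > "$file"
}

# Usage (as root):
# write_modules_load_conf /etc/modules-load.d/k8s.conf \
#     br_netfilter ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4
# systemctl restart systemd-modules-load    # load them now without rebooting
```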

3. Install the ipset Package

3.1 Install ipset

yum install ipset -y

To make the IPVS proxy rules easy to inspect, also install the management tool ipvsadm:

yum install ipvsadm -y

3.2 Synchronize server time

yum install chrony -y
systemctl enable chronyd
systemctl start chronyd
chronyc sources
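In chronyc sources output, a line starting with ^* marks the server that chronyd is currently synchronized to. Below is a small sketch that extracts it. The function name is hypothetical:

```bash
# Hypothetical helper: read `chronyc sources` output on stdin and print the source
# marked "^*" (the server chronyd is currently synchronized to). Returns non-zero
# when no source is selected yet, e.g. right after chronyd starts.
chrony_selected_source() {
    awk '/^\^\*/ { print $2; found = 1 } END { exit !found }'
}

# Usage: chronyc sources | chrony_selected_source || echo "not synchronized yet"
```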

4. Install containerd

4.1 Add the docker-ce yum repository (it provides the containerd.io package)

wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

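4.2 Install containerd

# list the available versions
[root@master ~]# yum list | grep containerd
containerd.io.x86_64    1.6.10-3.1.el7    @docker-ce-stable
# install (this installs the newest version in the repo; use containerd.io-1.6.10 to pin the version listed above)
[root@master ~]# yum -y install containerd.io
# verify
[root@master ~]# rpm -qa | grep containerd
containerd.io-1.6.10-3.1.el7.x86_64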

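4.3 Create the containerd configuration file

# create the directory and generate the default configuration
mkdir -p /etc/containerd
containerd config default > /etc/containerd/config.toml
# edit the configuration: use the systemd cgroup driver, and pull the pause (sandbox) image from the Alibaba Cloud mirror
sed -i 's#SystemdCgroup = false#SystemdCgroup = true#' /etc/containerd/config.toml
sed -i 's#sandbox_image = "registry.k8s.io/pause:3.6"#sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.6"#' /etc/containerd/config.toml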
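The second sed only takes effect if the generated default is exactly registry.k8s.io/pause:3.6. If your containerd build emits a different default, the sed silently changes nothing. The sketch below uses hypothetical function names. It rewrites whatever sandbox_image is currently set, then checks that both edits took effect:

```bash
# Hypothetical helpers.
# Replace the value of sandbox_image, whatever it currently is, keeping indentation.
set_sandbox_image() {
    local file=$1 image=$2
    sed -i -E "s#^([[:space:]]*sandbox_image = ).*#\1\"${image}\"#" "$file"
}

# Verify the two edits from 4.3; prints each failed check and returns non-zero.
check_containerd_config() {
    local file=$1 image=$2 rc=0
    grep -Eq '^[[:space:]]*SystemdCgroup = true' "$file" \
        || { echo "SystemdCgroup is not true"; rc=1; }
    grep -Fq "sandbox_image = \"${image}\"" "$file" \
        || { echo "sandbox_image is not ${image}"; rc=1; }
    return $rc
}

# Usage:
# img=registry.aliyuncs.com/google_containers/pause:3.6
# set_sandbox_image /etc/containerd/config.toml "$img"
# check_containerd_config /etc/containerd/config.toml "$img" && echo "config OK"
```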

4.4 Start containerd

systemctl enable containerd
systemctl start containerd
systemctl status containerd

4.5 Verify

[root@master ~]# ctr version
Client:
  Version:  1.6.10
  Revision: 770bd0108c32f3fb5c73ae1264f7e503fe7b2661
  Go version: go1.18.8

Server:
  Version:  1.6.10
  Revision: 770bd0108c32f3fb5c73ae1264f7e503fe7b2661
  UUID: 10b91012-6b24-4059-bf92-d71d269a5fbc

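5. Install the Three Core Components (kubelet, kubeadm, kubectl)

With containerd installed and the environment configuration above complete, we can now install kubeadm. Here it is installed from a dedicated yum repository.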

5.1 Add the Kubernetes yum repository

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

5.2 Install kubeadm, kubelet and kubectl (pinned to 1.25.0 here; set your own version if you need a different one)

yum install -y kubelet-1.25.0 kubeadm-1.25.0 kubectl-1.25.0

5.3 Point crictl at the containerd runtime

crictl config runtime-endpoint unix:///run/containerd/containerd.sock
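crictl config saves its settings in /etc/crictl.yaml. The sketch below writes the same file directly. It also sets image-endpoint, so that crictl image commands go to containerd too. The helper name is hypothetical and the timeout value is an arbitrary choice:

```bash
# Hypothetical helper: write a crictl configuration that points both the runtime
# and the image endpoints at containerd's socket.
write_crictl_config() {
    local file=${1:-/etc/crictl.yaml}
    local sock=${2:-unix:///run/containerd/containerd.sock}
    cat > "$file" <<EOF
runtime-endpoint: ${sock}
image-endpoint: ${sock}
timeout: 10
debug: false
EOF
}

# Usage (as root): write_crictl_config && crictl ps
```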

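5.4 We installed v1.25.0. Enable kubelet to start at boot. (Until kubeadm init or kubeadm join runs, kubelet has no configuration and restarts every few seconds. This is expected.)

systemctl daemon-reload
systemctl enable kubelet && systemctl start kubelet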

Note: run the steps above on ALL nodes ------------------------ END ----------------------------

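6. Initialize the Cluster

6.1 Generate the default configuration (run on master only)

kubeadm config print init-defaults > kubeadm.yaml

6.2 Edit the configuration

Next, adjust the configuration to your needs, for example by changing imageRepository and setting the kube-proxy mode to ipvs. containerd is the runtime here and was configured with SystemdCgroup = true in 4.3. The kubelet and the runtime must use the same cgroup driver, so cgroupDriver must be set to systemd when initializing the node.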

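- advertiseAddress: 192.168.1.92 # change to your master node's IP

- name: master # change to the master's hostname

- imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers # change to the Alibaba Cloud mirror

- kubernetesVersion: 1.25.0 # make sure this is the version you want to install; check it with kubelet --version

- podSubnet: 172.16.0.0/16 # add the Pod network under networking:

- mode: ipvs # after scheduler: {}, append a KubeProxyConfiguration document that sets the kube-proxy mode to ipvs

- cgroupDriver: systemd # append a KubeletConfiguration document that sets cgroupDriver to systemd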
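kubeadm config print init-defaults emits only the InitConfiguration and ClusterConfiguration documents. The kube-proxy and kubelet settings therefore have to be appended as extra YAML documents. Newer kubeadm versions can also print default component configs with --component-configs. The sketch below performs that append with a hypothetical helper. The address, hostname, imageRepository and podSubnet edits listed above are still made by hand:

```bash
# Hypothetical helper: append KubeProxyConfiguration (mode: ipvs) and
# KubeletConfiguration (cgroupDriver: systemd) documents to a kubeadm config file,
# unless a KubeProxyConfiguration document is already present.
append_component_configs() {
    local file=${1:-kubeadm.yaml}
    if grep -q '^kind: KubeProxyConfiguration' "$file"; then
        echo "component configs already present in $file"
        return 0
    fi
    cat >> "$file" <<'EOF'
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF
}

# Usage: append_component_configs kubeadm.yaml
```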

[root@master ~]# cat kubeadm.yaml
apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.1.92
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  name: master
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: 1.25.0
networking:
  dnsDomain: cluster.local
  podSubnet: 172.16.0.0/16
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
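podSubnet and serviceSubnet must not overlap each other or the node network. For the same reason, the Calico default of 192.168.0.0/16, mentioned in section 7, cannot be kept here: it contains the node addresses 192.168.1.92-94. Below is a pure-bash sketch for checking whether two IPv4 CIDR blocks overlap. The helper names are hypothetical:

```bash
# Hypothetical helpers.
# Convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeed if two IPv4 CIDR blocks overlap. Both arguments must be in a.b.c.d/len
# form (use /32 for a single host). Two blocks overlap exactly when they agree on
# the leading bits of the shorter prefix.
cidrs_overlap() {
    local net1=${1%/*} len1=${1#*/} net2=${2%/*} len2=${2#*/}
    local len=$(( len1 < len2 ? len1 : len2 ))
    local mask=$(( len == 0 ? 0 : (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
    (( ($(ip_to_int "$net1") & mask) == ($(ip_to_int "$net2") & mask) ))
}

# Usage:
# cidrs_overlap 172.16.0.0/16 10.96.0.0/12 && echo "podSubnet overlaps serviceSubnet"
# cidrs_overlap 172.16.0.0/16 192.168.1.92/32 && echo "podSubnet contains the master IP"
```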

6.3 Initialize the cluster with the configuration file above

kubeadm init --config=kubeadm.yaml

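Note: the node must have at least 2 CPU cores. Swap must be disabled both for the running system (swapoff -a) and permanently (/etc/fstab), as in 2.5.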

[init] Using Kubernetes version: v1.25.0
[preflight] Running pre-flight checks
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
.........
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.1.92:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:637dedda374472a68d5e3f58701a50527692ab281d50181a7d516751333ea8e8
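If kubeadm init stops at the preflight stage, the two requirements from the note above can be checked directly. Below is a minimal sketch with a hypothetical function name. It reads /proc/cpuinfo and /proc/meminfo by default, and accepts other paths for testing:

```bash
# Hypothetical helper mirroring the note above: at least 2 CPUs and swap off.
precheck_node() {
    local cpuinfo=${1:-/proc/cpuinfo} meminfo=${2:-/proc/meminfo}
    local rc=0 cpus swap_kb
    cpus=$(grep -c '^processor' "$cpuinfo" || true)       # grep -c exits 1 on zero matches
    swap_kb=$(awk '$1 == "SwapTotal:" { print $2 }' "$meminfo")
    if [ "$cpus" -lt 2 ]; then
        echo "only $cpus CPU(s); at least 2 are required"
        rc=1
    fi
    if [ "${swap_kb:-0}" -ne 0 ]; then
        echo "swap is still enabled (SwapTotal: ${swap_kb} kB); run swapoff -a"
        rc=1
    fi
    return $rc
}

# Usage: precheck_node && echo "CPU and swap requirements met"
```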

6.4 Copy the kubeconfig file

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

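6.5 Join the worker nodes (node1, node2)

- On each worker node, run the kubeadm join command printed at the end of your own kubeadm init output. The token and the CA certificate hash are specific to each cluster. Note that the hash in the node1 log below differs from the one printed above, so always use the values from your own cluster.

- The bootstrap token in kubeadm.yaml has ttl: 24h0m0s, so it expires after 24 hours. If you have lost the join command or the token has expired, regenerate it on master with kubeadm token create --print-join-command.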
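The --discovery-token-ca-cert-hash value is the SHA-256 digest of the cluster CA's public key in DER form. The openssl pipeline below follows the one given in the kubeadm join documentation, wrapped in a hypothetical function. It assumes the default RSA CA key that kubeadm generates:

```bash
# Hypothetical helper: print the hex digest kubeadm expects after "sha256:" in
# --discovery-token-ca-cert-hash, computed from the CA certificate's public key.
ca_cert_hash() {
    local crt=${1:-/etc/kubernetes/pki/ca.crt}
    openssl x509 -pubkey -noout -in "$crt" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}

# Usage (on master): echo "sha256:$(ca_cert_hash)"
```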

node1:

[root@node1 ~]# kubeadm join 192.168.1.92:6443 --token abcdef.0123456789abcdef \
> --discovery-token-ca-cert-hash sha256:d87669b0c3630a0c5f566097cedee190764712ee0c8d41fc2db00521fcf9f680
[preflight] Running pre-flight checks
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

node2:

[root@node2 ~]# kubeadm join 192.168.1.92:6443 --token abcdef.0123456789abcdef \
> --discovery-token-ca-cert-hash sha256:637dedda374472a68d5e3f58701a50527692ab281d50181a7d516751333ea8e8
[preflight] Running pre-flight checks
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

6.6 Check the cluster status:

[root@master ~]# kubectl get nodes
NAME     STATUS     ROLES           AGE     VERSION
master   NotReady   control-plane   2m57s   v1.25.0
node1    NotReady   <none>          47s     v1.25.0
node2    NotReady   <none>          29s     v1.25.0

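7. Install a Network Plugin

The nodes show NotReady because no network plugin is installed yet. You must deploy a Pod network add-on based on the Container Network Interface (CNI) so that Pods can communicate with each other. Cluster DNS (CoreDNS) will not start until a network is installed. You can choose a network plugin from either of the two pages below; I used the second. Here we install Calico.

- https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/create-cluster-kubeadm/

- https://projectcalico.docs.tigera.io/archive/v3.23/getting-started/kubernetes/self-managed-onprem/onpremises#install-calico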

7.1 Download the Calico manifest

[root@master ~]# curl https://projectcalico.docs.tigera.io/archive/v3.23/manifests/calico.yaml -O
  % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                 Dload  Upload   Total   Spent    Left  Speed
100  226k  100  226k    0     0   278k      0 --:--:-- --:--:-- --:--:--  278k

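7.2 Edit calico.yaml:

- Note: the default pool in the file is 192.168.0.0/16, which in this setup would also overlap the node network (192.168.1.x). podSubnet was set to 172.16.0.0/16 during init, so change the value to match. If the two lines are commented out in your copy, uncomment them first:

- name: CALICO_IPV4POOL_CIDR
  value: "172.16.0.0/16"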
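Below is a quick consistency check that calico.yaml and kubeadm.yaml agree on the Pod CIDR. Both helpers are hypothetical. They assume the layout shown in this article: podSubnet: on one line, and the value: line directly after the uncommented CALICO_IPV4POOL_CIDR entry:

```bash
# Hypothetical helpers; they assume the file layouts shown in this article.
# Print the podSubnet value from a kubeadm config file.
kubeadm_pod_subnet() {
    awk '$1 == "podSubnet:" { print $2; exit }' "$1"
}

# Print the value on the line right after an uncommented CALICO_IPV4POOL_CIDR entry.
calico_pool_cidr() {
    awk '/^[[:space:]]*- name: CALICO_IPV4POOL_CIDR/ {
             getline                  # the following line holds: value: "x.x.x.x/n"
             gsub(/"/, "", $2)
             print $2
             exit
         }' "$1"
}

# Usage:
# [ "$(kubeadm_pod_subnet kubeadm.yaml)" = "$(calico_pool_cidr calico.yaml)" ] \
#     && echo "Pod CIDR consistent" || echo "Pod CIDR mismatch"
```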

7.3 Install the Calico network plugin

[root@master ~]# kubectl apply -f calico.yaml
configmap/calico-config created
customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created
clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created
clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created
clusterrole.rbac.authorization.k8s.io/calico-node created
clusterrolebinding.rbac.authorization.k8s.io/calico-node created
daemonset.apps/calico-node created
serviceaccount/calico-node created
deployment.apps/calico-kube-controllers created
serviceaccount/calico-kube-controllers created
poddisruptionbudget.policy/calico-kube-controllers created

7.4 Watch the Pod status (refreshing every second)

[root@master ~]# watch -n 1 kubectl get pod -n kube-system
[root@master ~]# kubectl get pod -n kube-system
NAME                                      READY   STATUS    RESTARTS      AGE
calico-kube-controllers-d8b9b6478-2khtq   1/1     Running   0             110s
calico-node-f4t6r                         1/1     Running   0             110s
calico-node-f6xfz                         1/1     Running   0             110s
calico-node-mck5r                         1/1     Running   0             110s
coredns-7f8cbcb969-2ddsl                  1/1     Running   0             4d15h
coredns-7f8cbcb969-pm5s8                  1/1     Running   0             4d15h
etcd-master                               1/1     Running   1             4d15h
kube-apiserver-master                     1/1     Running   1             4d15h
kube-controller-manager-master            1/1     Running   1 (70s ago)   4d15h
kube-proxy-2hzkf                          1/1     Running   0             4d15h
kube-proxy-grx5m                          1/1     Running   0             4d15h
kube-proxy-klklc                          1/1     Running   0             4d15h
kube-scheduler-master                     1/1     Running   2 (73s ago)   4d15h
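Instead of watching the output by eye, you can filter it for Pods that are not ready yet. Below is a sketch with a hypothetical helper. kubectl wait --for=condition=Ready pod --all -n kube-system --timeout=300s is a built-in alternative that blocks until the Pods are ready:

```bash
# Hypothetical helper: read `kubectl get pod --no-headers` output (without -A, so the
# first column is NAME) on stdin and print every Pod whose containers are not all
# ready (READY x/y with x != y) or whose STATUS is not Running.
not_ready_pods() {
    awk '{
             split($2, r, "/")
             if (r[1] != r[2] || $3 != "Running") { print $1; bad = 1 }
         }
         END { exit bad }'
}

# Usage: kubectl get pod -n kube-system --no-headers | not_ready_pods && echo "all Pods ready"
```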

7.5 Check the cluster status

[root@master ~]# kubectl get nodes
NAME     STATUS   ROLES           AGE     VERSION
master   Ready    control-plane   4d15h   v1.25.0
node1    Ready    <none>          4d15h   v1.25.0
node2    Ready    <none>          4d15h   v1.25.0
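kubectl wait --for=condition=Ready node --all --timeout=300s blocks until every node reports Ready. Below is a sketch with a hypothetical helper that instead lists the nodes that are not yet Ready, based on kubectl get nodes output:

```bash
# Hypothetical helper: read `kubectl get nodes --no-headers` output on stdin and print
# every node whose STATUS is not exactly "Ready" (NotReady, and also a cordoned node
# shown as Ready,SchedulingDisabled). Returns non-zero if any node is listed.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1; bad = 1 } END { exit bad }'
}

# Usage: kubectl get nodes --no-headers | not_ready_nodes && echo "all nodes Ready"
```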

8. Test

- Start a Deployment in the cluster:

[root@master ~]# vim deploy-nginx.yaml
[root@master ~]# cat deploy-nginx.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 3 # tells the Deployment to run 3 Pods matching the template
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.14.2
        ports:
        - containerPort: 80

[root@master ~]# kubectl apply -f deploy-nginx.yaml
deployment.apps/nginx-deployment created
[root@master ~]# kubectl get pod
NAME                                READY   STATUS    RESTARTS   AGE
nginx-deployment-7fb96c846b-48h24   1/1     Running   0          14s
nginx-deployment-7fb96c846b-ms7c9   1/1     Running   0          14s
nginx-deployment-7fb96c846b-zpsf7   1/1     Running   0          14s

Check the status of all Pods:

[root@master ~]# kubectl get pod -A -o wide
NAMESPACE     NAME                                      READY   STATUS    RESTARTS       AGE     IP               NODE     NOMINATED NODE   READINESS GATES
default       nginx-deployment-7fb96c846b-48h24         1/1     Running   0              61s     172.16.104.3     node2    <none>           <none>
default       nginx-deployment-7fb96c846b-ms7c9         1/1     Running   0              61s     172.16.166.130   node1    <none>           <none>
default       nginx-deployment-7fb96c846b-zpsf7         1/1     Running   0              61s     172.16.166.131   node1    <none>           <none>
kube-system   calico-kube-controllers-d8b9b6478-2khtq   1/1     Running   0              6m46s   172.16.166.129   node1    <none>           <none>
kube-system   calico-node-f4t6r                         1/1     Running   0              6m46s   192.168.1.93     node1    <none>           <none>
kube-system   calico-node-f6xfz                         1/1     Running   0              6m46s   192.168.1.92     master   <none>           <none>
kube-system   calico-node-mck5r                         1/1     Running   0              6m46s   192.168.1.94     node2    <none>           <none>
kube-system   coredns-7f8cbcb969-2ddsl                  1/1     Running   0              4d15h   172.16.104.2     node2    <none>           <none>
kube-system   coredns-7f8cbcb969-pm5s8                  1/1     Running   0              4d15h   172.16.104.1     node2    <none>           <none>
kube-system   etcd-master                               1/1     Running   1              4d15h   192.168.1.92     master   <none>           <none>
kube-system   kube-apiserver-master                     1/1     Running   1              4d15h   192.168.1.92     master   <none>           <none>
kube-system   kube-controller-manager-master            1/1     Running   1 (6m6s ago)   4d15h   192.168.1.92     master   <none>           <none>
kube-system   kube-proxy-2hzkf                          1/1     Running   0              4d15h   192.168.1.94     node2    <none>           <none>
kube-system   kube-proxy-grx5m                          1/1     Running   0              4d15h   192.168.1.92     master   <none>           <none>
kube-system   kube-proxy-klklc                          1/1     Running   0              4d15h   192.168.1.93     node1    <none>           <none>
kube-system   kube-scheduler-master                     1/1     Running   2 (6m9s ago)   4d15h   192.168.1.92     master   <none>           <none>

Zhejiang Public Network Security Record No. 33010602011771
