2. Installing Spark and Python Practice

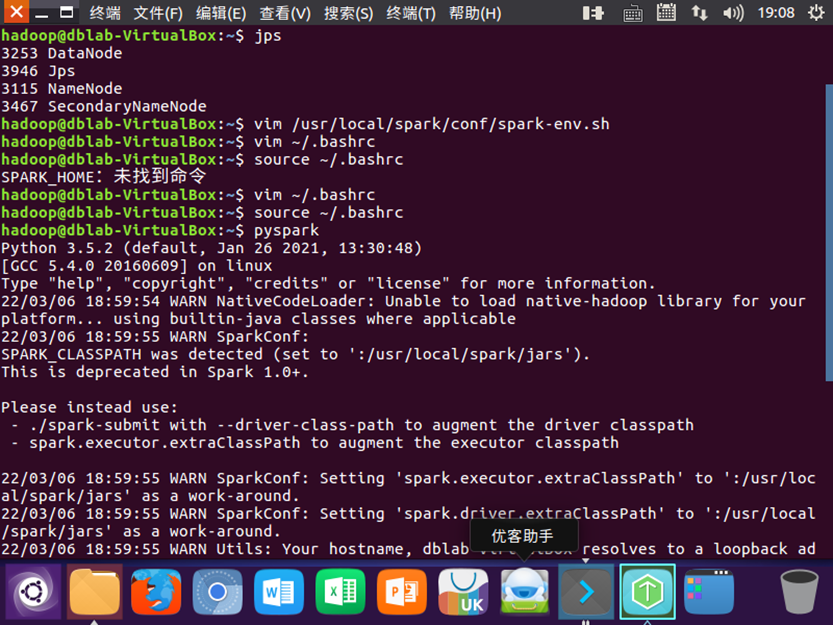

1. Check the base environment: Hadoop and JDK

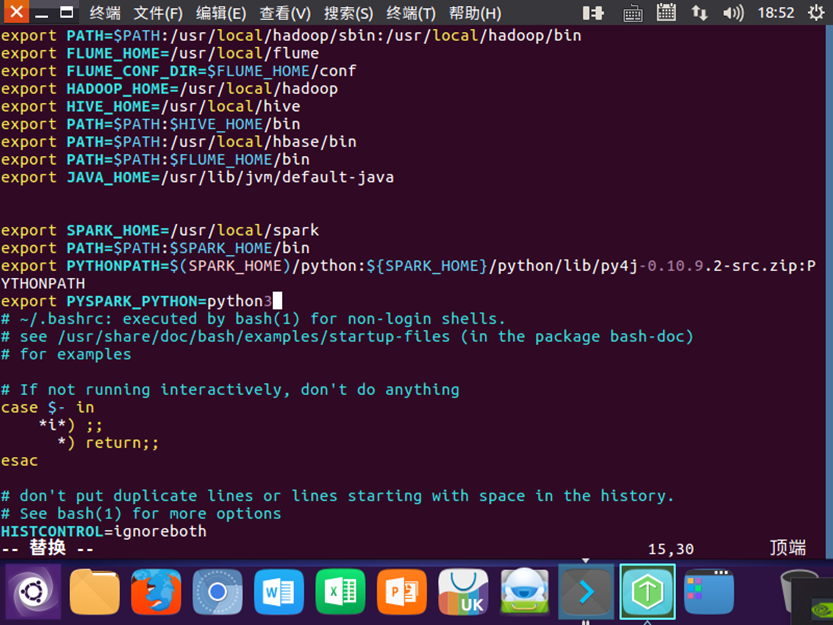
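On the command line this check is usually done with `java -version` and `hadoop version`. The same check can be sketched in Python, assuming the two commands are named `java` and `hadoop` and are expected on `PATH`. The helper names `find_tools` and `report` are illustrative and do not come from the original post.

```python
import shutil


def find_tools(names=("java", "hadoop")):
    """Map each command name to its full path on PATH, or None if absent."""
    return {name: shutil.which(name) for name in names}


def report(found):
    """Turn the lookup result into one readable line per tool."""
    return [
        f"{name}: {path if path else 'NOT FOUND on PATH'}"
        for name, path in found.items()
    ]


# Assumption: java and hadoop are the commands this setup relies on.
for line in report(find_tools()):
    print(line)
```

A tool reported as not found usually means its `bin` directory has not been added to `PATH` yet.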

2. Configuration

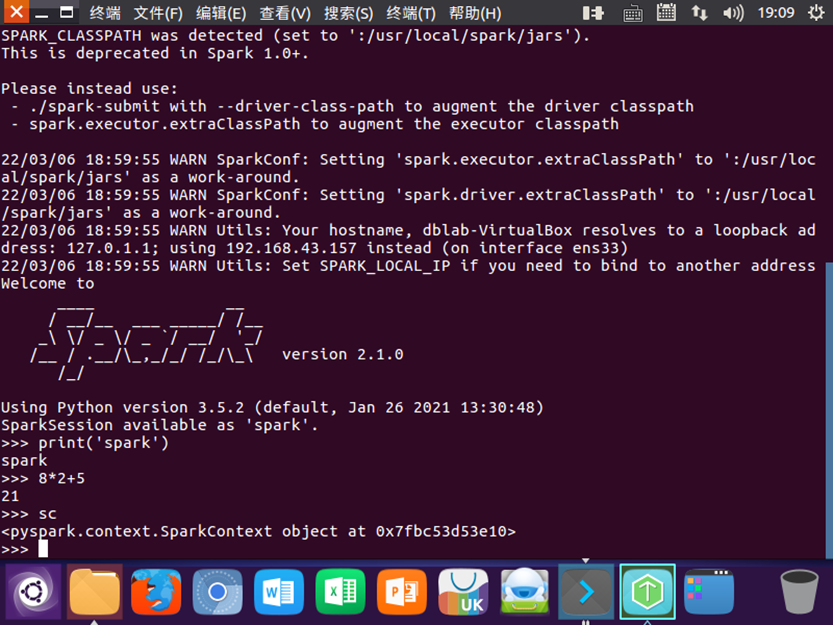
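The exact settings appear only in the screenshot, so the following is a rough sketch rather than the author's configuration. It assumes that the environment variables commonly used with Spark (`JAVA_HOME`, `HADOOP_HOME`, `SPARK_HOME`, `PYSPARK_PYTHON`) are the ones being configured, and reports which of them are still unset. The helper name `missing_vars` is hypothetical.

```python
import os

# Assumed variable names; the source does not list what was configured.
EXPECTED_VARS = ("JAVA_HOME", "HADOOP_HOME", "SPARK_HOME", "PYSPARK_PYTHON")


def missing_vars(env, expected=EXPECTED_VARS):
    """Return the expected variables that are unset or empty in env."""
    return [name for name in expected if not env.get(name)]


print("missing:", missing_vars(os.environ))
```

An empty list suggests the variables are visible to the current shell; variables added to `~/.bashrc` only take effect after the file is sourced again or a new terminal is opened.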

3. Test-run Python code

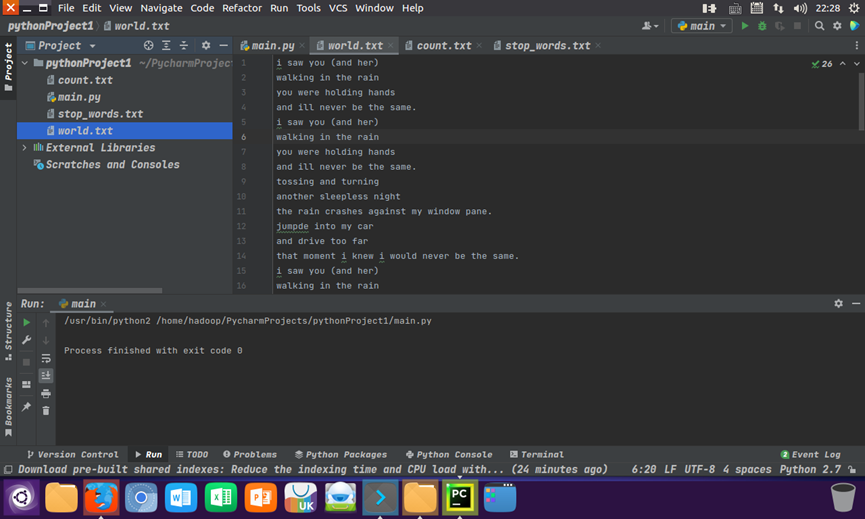
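The code that was actually run appears only in the screenshot. As a hedged stand-in, a small smoke test for the `pyspark` shell might look like the following. The function name `square_sum` is illustrative; it only relies on the RDD methods `parallelize`, `map`, and `sum`.

```python
def square_sum(sc, n=10):
    """Sum the squares of 0..n-1 on an RDD created from a SparkContext."""
    rdd = sc.parallelize(range(n))      # distribute the numbers
    return rdd.map(lambda x: x * x).sum()  # square each, then add up
```

In the `pyspark` shell, where a SparkContext named `sc` is already defined, `square_sum(sc)` should return 285 for the default `n=10`.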

II. Python programming exercise:

Prepare a text file: world.txt

Read the file and preprocess it (lowercase, strip punctuation, tokenize, remove stop words) in main.py:

    # Read the text and normalize it to lowercase.
    with open("Under the Red Dragon.txt", "r") as f:
        text = f.read()
    text = text.lower()
    # Replace punctuation with spaces so that words separate cleanly.
    for ch in '!@#$%^&*(_)-+=\\[]}{|;:\'\"`~,<.>?/':
        text = text.replace(ch, " ")
    words = text.split()  # split the text on whitespace
    # Load the stop words (one per line) into a set for fast lookup.
    with open('stop_words.txt', 'r') as f:
        stop_words = {line.strip() for line in f}
    # Keep only the words that are not stop words.
    afterwords = [w for w in words if w not in stop_words]

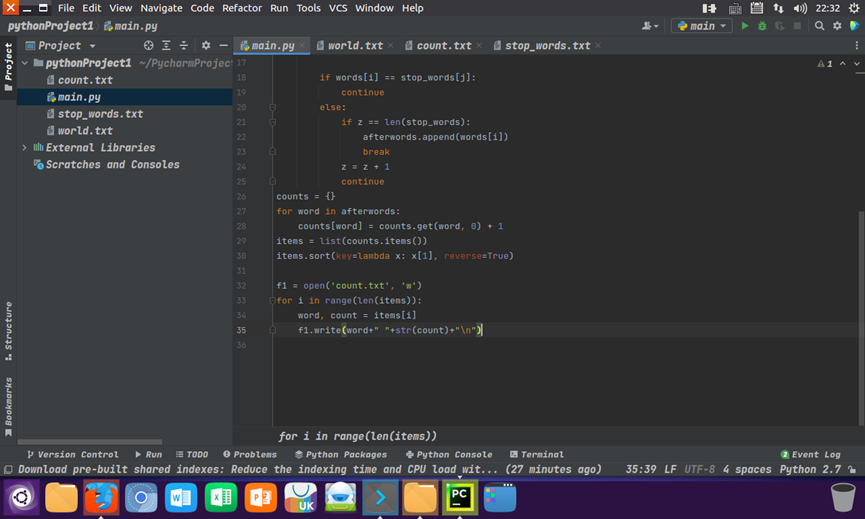

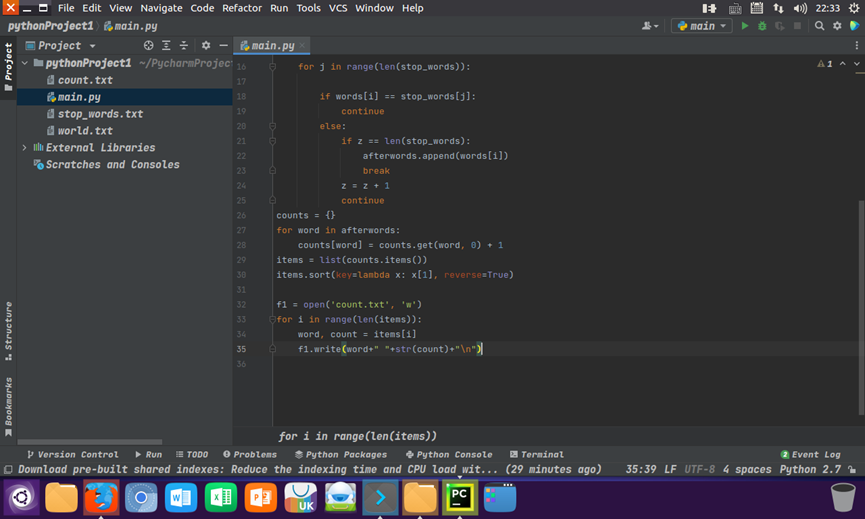
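The preprocessing steps above (lowercasing, punctuation removal, tokenizing, stop-word filtering) can also be sketched as a single function that works on an in-memory string, which makes them easy to try without the input files. The function name `preprocess` is illustrative; the punctuation set is the one used in main.py, and `str.translate` is swapped in for the character-by-character `replace` loop.

```python
# Same punctuation characters as in main.py.
PUNCTUATION = '!@#$%^&*(_)-+=\\[]}{|;:\'"`~,<.>?/'
# Translation table that maps every punctuation character to a space.
_TO_SPACE = str.maketrans(PUNCTUATION, " " * len(PUNCTUATION))


def preprocess(text, stop_words):
    """Lowercase, strip punctuation, split on whitespace, drop stop words."""
    words = text.lower().translate(_TO_SPACE).split()
    stop = set(stop_words)  # set membership is O(1) per word
    return [w for w in words if w not in stop]


print(preprocess("The Dragon, the RED dragon!", ["the"]))
# prints ['dragon', 'red', 'dragon']
```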

Count how many times each word occurs, sort by frequency, and write the results to a file (main.py):

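    # Count the occurrences of each remaining word.
    counts = {}
    for word in afterwords:
        counts[word] = counts.get(word, 0) + 1
    # Sort by frequency, highest first.
    items = list(counts.items())
    items.sort(key=lambda x: x[1], reverse=True)
    # Write one "word count" pair per line; the with-block closes the file.
    with open('count.txt', 'w') as f1:
        for word, count in items:
            f1.write(word + " " + str(count) + "\n")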

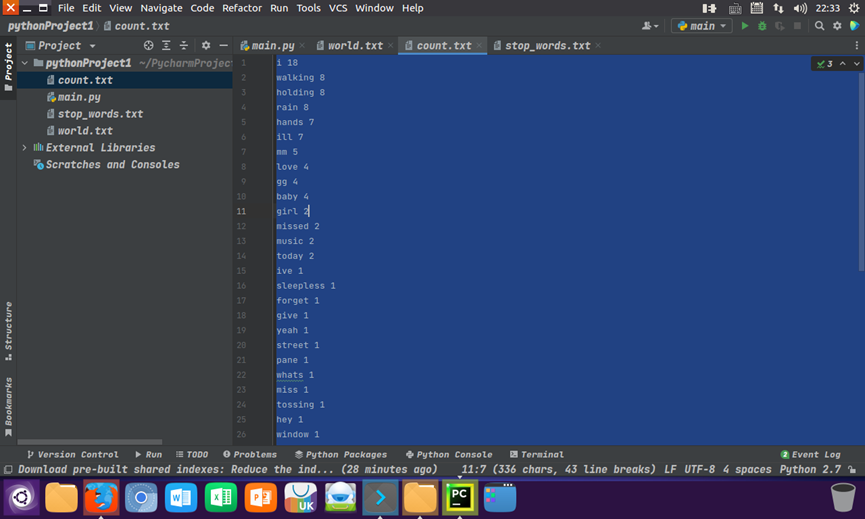

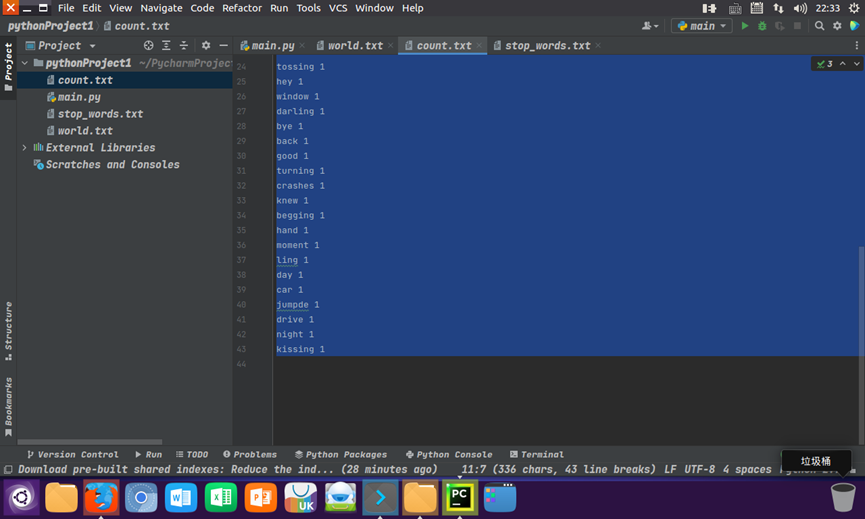
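As an alternative to the hand-written dictionary, the counting and sorting step can be sketched with `collections.Counter` from the standard library. The helper names `top_words` and `format_counts` are illustrative; the output format (`word count`, one pair per line) follows main.py.

```python
from collections import Counter


def top_words(words, n=None):
    """Return (word, count) pairs sorted by count, highest first."""
    return Counter(words).most_common(n)


def format_counts(pairs):
    """Render the pairs as 'word count' lines, as written to count.txt."""
    return "".join(f"{word} {count}\n" for word, count in pairs)


pairs = top_words(["dragon", "red", "dragon"])
print(format_counts(pairs), end="")
# prints:
# dragon 2
# red 1
```

Passing an integer as `n` keeps only the `n` most frequent words, which is handy when the full list is long.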

Output

III. Set up a programming environment with PyCharm: Ubuntu 16.04 + PyCharm + Spark
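The post does not show the PyCharm settings themselves. One common way to let a PyCharm interpreter import `pyspark` is to put Spark's bundled Python sources on `sys.path`, and the sketch below illustrates that under stated assumptions: `SPARK_HOME` points at the Spark installation, and the py4j archive sits under `python/lib` as in standard Spark distributions. The helper names are hypothetical.

```python
import glob
import os
import sys


def spark_python_paths(spark_home):
    """List the directories and archives that make pyspark importable."""
    python_dir = os.path.join(spark_home, "python")
    # The py4j version varies between Spark releases, hence the wildcard.
    py4j_zips = sorted(
        glob.glob(os.path.join(python_dir, "lib", "py4j-*-src.zip"))
    )
    return [python_dir] + py4j_zips


def add_spark_to_sys_path(spark_home):
    """Prepend the Spark Python paths to sys.path if not already present."""
    for path in spark_python_paths(spark_home):
        if path not in sys.path:
            sys.path.insert(0, path)
```

Calling `add_spark_to_sys_path(os.environ["SPARK_HOME"])` at the top of a script, before `import pyspark`, is one option; adding the same paths as content roots in the PyCharm project settings achieves a similar effect.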

Zhejiang Public Network Security Filing No. 33010602011771
