A Bare-Bones Novel Scraper in C#

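I'm new to C# and hacked together a simple novel scraper. It downloads a novel's content and writes it to a .txt file, and for now it only works on one specific site.

This is my first scraper. It touches network protocols and regular expressions, I fumbled my way through it, and it still runs slowly. I'll improve it over time.

Target: http://www.166xs.com/xiaoshuo/83/83557/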

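I. Fetch the page with HttpWebRequest

There are a couple of pitfalls here, briefly:

First, remember to crawl through a proxy IP. I forgot the first time and my IP got banned...

Second, check whether the response is compressed. I skipped this at first, and no matter which encoding I tried (GBK, UTF-8) the result was garbage. Decompressing the body before decoding fixed it.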

    using System;
    using System.IO;
    using System.IO.Compression;
    using System.Net;
    using System.Text;

    /// <summary>
    /// Fetches a page and decodes it to a string.
    /// </summary>
    /// <param name="url">Address of the page to fetch.</param>
    /// <param name="post_parament">Not used by this GET request.</param>
    /// <returns>The decoded HTML.</returns>
    public string HttpGet(string url, string post_parament)
    {
        HttpWebRequest Web_Request = (HttpWebRequest)WebRequest.Create(url);
        Web_Request.Timeout = 30000;
        Web_Request.Method = "GET";
        Web_Request.UserAgent = "Mozilla/4.0";
        // Advertise only gzip, because gzip is the only encoding decompressed below.
        Web_Request.Headers.Add("Accept-Encoding", "gzip");
        //Web_Request.Credentials = CredentialCache.DefaultCredentials;

        // Set the proxy on the request before it is sent.
        WebProxy proxy = new WebProxy("111.13.7.120", 80);
        Web_Request.Proxy = proxy;

        // using blocks release the response and its streams even if reading fails.
        using (HttpWebResponse Web_Response = (HttpWebResponse)Web_Request.GetResponse())
        using (Stream Stream_Receive = Web_Response.GetResponseStream())
        {
            // If the server used gzip, decompress first; otherwise read the body as-is.
            bool Is_Gzip = string.Equals(Web_Response.ContentEncoding, "gzip", StringComparison.OrdinalIgnoreCase);
            Stream Stream_Body = Is_Gzip
                ? new GZipStream(Stream_Receive, CompressionMode.Decompress)
                : Stream_Receive;

            // Encoding.Default is the system ANSI code page on .NET Framework (GBK on Chinese Windows).
            using (StreamReader Stream_Reader = new StreamReader(Stream_Body, Encoding.Default))
            {
                return Stream_Reader.ReadToEnd();
            }
        }
    }
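To make the "decompress first, then decode" pitfall easier to test without touching the network, the decoding step can be pulled out into a small helper. This is only a sketch under assumptions: `ResponseDecoder`, `Decode`, and `PickEncoding` are hypothetical names that are not part of the original project, and the caller is assumed to know (or read from the response) the charset name.

```csharp
using System;
using System.IO;
using System.IO.Compression;
using System.Text;

// Hypothetical helper, not part of the original project.
public static class ResponseDecoder
{
    // Turns a raw response body into text: gunzip if needed, then decode.
    public static string Decode(Stream body, string contentEncoding, string charset)
    {
        // Decompress only when the server reports a gzip-compressed body.
        bool isGzip = string.Equals(contentEncoding, "gzip", StringComparison.OrdinalIgnoreCase);
        Stream plain = isGzip ? new GZipStream(body, CompressionMode.Decompress) : body;

        using (var reader = new StreamReader(plain, PickEncoding(charset)))
        {
            return reader.ReadToEnd();
        }
    }

    // Falls back to UTF-8 when the charset is missing or unknown to the runtime.
    public static Encoding PickEncoding(string charset)
    {
        if (string.IsNullOrWhiteSpace(charset)) return Encoding.UTF8;
        try
        {
            return Encoding.GetEncoding(charset.Trim().Trim('"'));
        }
        catch (Exception ex) when (ex is ArgumentException || ex is NotSupportedException)
        {
            return Encoding.UTF8;
        }
    }
}
```

Inside `HttpGet` it would be called with the response stream, `Web_Response.ContentEncoding`, and a charset name such as "gbk". On .NET Core and later, GBK is only available after registering `CodePagesEncodingProvider.Instance`; without that, this sketch silently falls back to UTF-8. `HttpWebRequest.AutomaticDecompression` is another option if you would rather let the framework handle decompression.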

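II. Process the content with regular expressions. I'm not yet comfortable with regex, so there are too many repeated steps.

1. Fetch the page content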

    IWebHttpRepository webHttpRepository = new WebHttpRepository();
    string html = webHttpRepository.HttpGet(Url_Txt.Text, "");

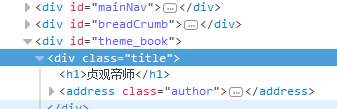

2. Get the book title and the chapter list

Book title

Chapter list

    string Novel_Name = Regex.Match(html, @"(?<=<h1>)([\S\s]*?)(?=</h1>)").Value; // book title
    Regex Regex_Menu = new Regex(@"(?is)(?<=<dl class=""book_list"">).+?(?=</dl>)");
    string Result_Menu = Regex_Menu.Match(html).Value; // inner HTML of the chapter list
    Regex Regex_List = new Regex(@"(?is)(?<=<dd>).+?(?=</dd>)");
    var Result_List = Regex_List.Matches(Result_Menu); // one match per <dd> entry
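The lookbehind/lookahead patterns above return only the text between the tags, not the tags themselves. A minimal sketch of that behavior on a made-up page follows. `MenuParser` and the sample HTML in the usage note are hypothetical, and the extra `Trim()` on the title is my addition rather than something the original code does.

```csharp
using System.Linq;
using System.Text.RegularExpressions;

// Hypothetical wrapper around the same three patterns.
public static class MenuParser
{
    // Text between <h1> and </h1>, with surrounding whitespace trimmed.
    public static string Title(string html)
    {
        return Regex.Match(html, @"(?<=<h1>)([\S\s]*?)(?=</h1>)").Value.Trim();
    }

    // Inner HTML of every <dd> inside <dl class="book_list">.
    public static string[] Entries(string html)
    {
        string menu = Regex.Match(html, @"(?is)(?<=<dl class=""book_list"">).+?(?=</dl>)").Value;
        return Regex.Matches(menu, @"(?is)(?<=<dd>).+?(?=</dd>)")
                    .Cast<Match>()
                    .Select(m => m.Value)
                    .ToArray();
    }
}
```

For a page such as `<h1> Demo Book </h1><dl class="book_list"><dd>intro</dd><dd><a href="1.html">Chapter 1</a></dd></dl>`, `Title` gives `Demo Book` and `Entries` gives two strings: `intro` and the whole `<a>` element. Note that the patterns depend on the exact attribute text `class="book_list"`, so any change in the site's markup breaks them.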

3. The chapter list is preceded by some extra <dd> entries, so drop them

    int i = 0; // entry counter
    string Menu_Content = ""; // all chapter entries, concatenated
    foreach (Match x in Result_List)
    {
        // The first four <dd> entries (i = 0..3) are not chapters, so skip them.
        if (i >= 4)
        {
            Menu_Content += x.Value;
        }
        i++;
    }
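The counter loop can also be written with LINQ, which avoids both the manual index and the repeated string concatenation. This is a sketch, not the original code: `ChapterFilter` and the `leadingExtras` parameter are hypothetical names, and the number of entries to skip is still something you have to find by inspecting the page.

```csharp
using System.Linq;
using System.Text.RegularExpressions;

// Hypothetical helper, not part of the original project.
public static class ChapterFilter
{
    // Concatenates every match after the first `leadingExtras` ones.
    public static string JoinChapters(MatchCollection entries, int leadingExtras)
    {
        return string.Concat(
            entries.Cast<Match>()          // MatchCollection is non-generic on .NET Framework
                   .Skip(leadingExtras)    // drop the non-chapter entries at the top
                   .Select(m => m.Value));
    }
}
```

With the variables from the steps above, `string Menu_Content = ChapterFilter.JoinChapters(Result_List, 4);` should produce the same string as the loop.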

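4. Extract each <a>'s href and innerHTML, visit every link to get the chapter content, pair it with the chapter title, clean it up, and write it to the .txt file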

    // Requires: using System.IO; using System.Text.RegularExpressions; using System.Windows.Forms;
    Regex Regex_Href = new Regex(@"(?is)<a[^>]*?href=(['""]?)(?<url>[^'""\s>]+)\1[^>]*>(?<text>(?:(?!</?a\b).)*)</a>");
    MatchCollection Result_Match_List = Regex_Href.Matches(Menu_Content); // href and innerHTML of every <a>

    // Build the patterns once instead of on every loop iteration.
    Regex Rege_Content = new Regex(@"(?is)(?<=<p class=""Book_Text"">).+?(?=</p>)");
    Regex Regex_Main = new Regex(@"( )(.*)");

    string Novel_Dir = Path.Combine(Directory.GetCurrentDirectory(), "Novel");
    Directory.CreateDirectory(Novel_Dir); // does nothing if the folder already exists
    string Novel_Path = Path.Combine(Novel_Dir, Novel_Name + ".txt"); // output file

    // new StreamWriter(path) creates or overwrites the file; the using block closes it.
    using (StreamWriter Write_Content = new StreamWriter(Novel_Path))
    {
        foreach (Match Result_Single in Result_Match_List)
        {
            string Url_Text = Result_Single.Groups["url"].Value;      // chapter link
            string Content_Text = Result_Single.Groups["text"].Value; // chapter title
            string Content_Html = webHttpRepository.HttpGet(Url_Txt.Text + Url_Text, ""); // chapter page
            string Result_Content = Rege_Content.Match(Content_Html).Value; // chapter block
            string Rsult_Main = Regex_Main.Match(Result_Content).Value;     // body text
            string Screen_Content = Rsult_Main.Replace(" ", "").Replace("<br />", "\r\n");
            Write_Content.WriteLine(Content_Text + "\r\n"); // write the title
            Write_Content.WriteLine(Screen_Content);        // write the body
        }
    }

    MessageBox.Show(Novel_Name + ".txt created successfully!");
    System.Diagnostics.Process.Start(Novel_Dir);
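Gluing the page URL and the href together with `+` only works when every href is a bare file name relative to the book page. A more forgiving sketch resolves each link with `new Uri(baseUri, relative)`, which handles relative, root-relative, and absolute hrefs alike. `ChapterLinks` and `Extract` are hypothetical names, and `example.com` in the usage note is a stand-in, not the real site.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical helper, not part of the original project.
public static class ChapterLinks
{
    // Same anchor pattern as above: named groups "url" and "text".
    private static readonly Regex Anchor = new Regex(
        @"(?is)<a[^>]*?href=(['""]?)(?<url>[^'""\s>]+)\1[^>]*>(?<text>(?:(?!</?a\b).)*)</a>");

    // Returns (chapter title, absolute chapter URL) pairs in page order.
    public static List<KeyValuePair<string, Uri>> Extract(string menuHtml, Uri pageUri)
    {
        var result = new List<KeyValuePair<string, Uri>>();
        foreach (Match m in Anchor.Matches(menuHtml))
        {
            // Resolves relative hrefs against the page and leaves absolute ones alone.
            Uri target = new Uri(pageUri, m.Groups["url"].Value);
            result.Add(new KeyValuePair<string, Uri>(m.Groups["text"].Value.Trim(), target));
        }
        return result;
    }
}
```

For example, with a page URI of `http://example.com/book/1/`, both `<a href="100.html">` and `<a href='/book/1/101.html'>` resolve under `http://example.com/book/1/`. In the loop above, `target.AbsoluteUri` could then be passed to `HttpGet` in place of `Url_Txt.Text + Url_Text`.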

III. The novel is written to the file successfully
