Posted on 2019-04-09 20:47 by 心默默言


PCA: Principal Component Analysis

Introduction

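PCA, short for Principal Component Analysis, is a widely used dimensionality-reduction method. It applies a linear transformation that maps the original data to a representation whose dimensions are mutually uncorrelated, and in this way extracts the main linear components of the data.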

$$z = w^{T}x$$

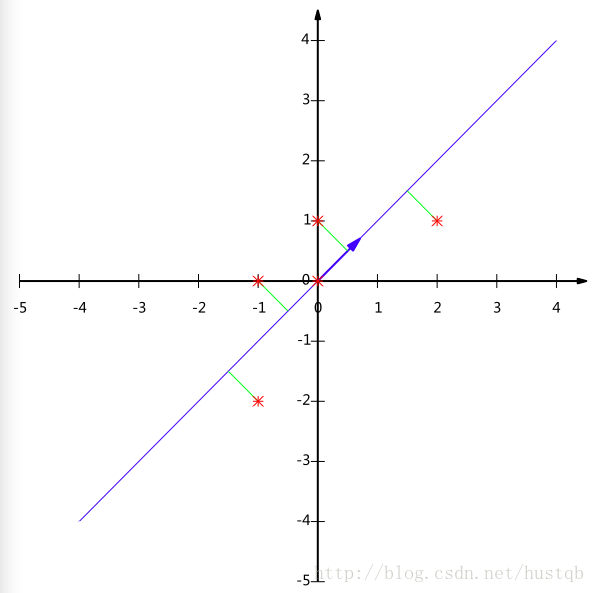

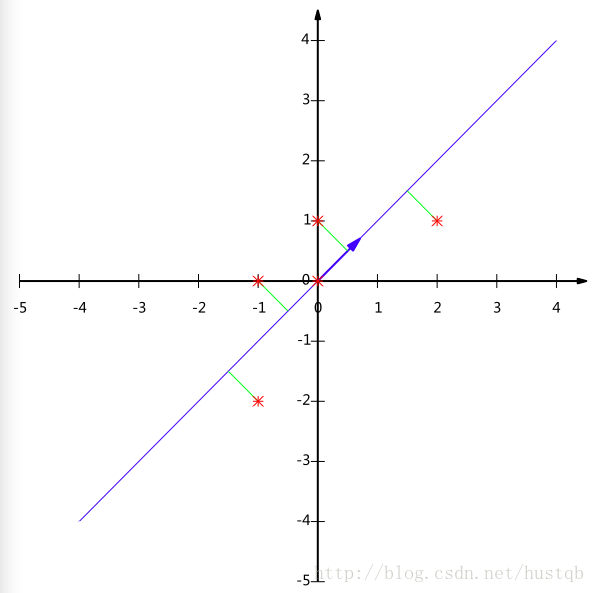

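Here z is the low-dimensional representation, x the high-dimensional data, and w the mapping between them. Suppose we have two-dimensional data (the original data has two feature axes, feature 1 and feature 2), as shown in the figure, where the samples fall within a blue ellipse tilted at 45°.

PCA treats the 45° direction as the principal linear component; the dashed line orthogonal to it is a secondary component, which is discarded to reduce the dimensionality.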
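The 45° picture can be reproduced numerically. The sketch below assumes synthetic data (not the data behind the figure): points spread widely along the 45° diagonal and narrowly across it. The unit eigenvector of the covariance matrix with the largest eigenvalue plays the role of w in z = w^T x; it should come out close to (1, 1)/√2, up to sign.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic 2-D data: wide spread along the 45° diagonal, narrow spread across it.
m = 500
along = rng.normal(scale=3.0, size=m)    # coordinate along the main axis
across = rng.normal(scale=0.5, size=m)   # coordinate along the orthogonal axis
diag = np.array([1.0, 1.0]) / np.sqrt(2)
ortho = np.array([-1.0, 1.0]) / np.sqrt(2)
X = np.outer(along, diag) + np.outer(across, ortho)   # shape (m, 2), one sample per row

# Center the data and form the 2 x 2 covariance matrix (features are columns).
Xc = X - X.mean(axis=0)
C = np.cov(Xc, rowvar=False)

# eigh handles symmetric matrices and returns eigenvalues in ascending order.
eigvals, eigvecs = np.linalg.eigh(C)
w = eigvecs[:, -1]   # unit vector along the direction of largest variance

# z = w^T x for every sample: a 1-D representation keeping the main component.
z = Xc @ w
print("principal direction w:", w)
print("variance kept:", z.var(ddof=1), "of total", np.trace(C))
```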

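Key points:

- Linear transformation => the new feature axes can be expressed as linear transformations of the original feature axes

- Linear independence => the constructed feature axes are orthogonal

- Principal linear component (principal component) => the direction of largest variance

- Solving PCA means finding the principal linear components and how to express them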

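Accordingly, since PCA works by explaining variance, it is very sensitive to outliers: a few points far from the center have a large effect on the variance, and therefore on the eigenvectors.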
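A minimal sketch of this sensitivity, on synthetic data that is an assumption for illustration: the principal direction of a cloud stretched along the x-axis, computed before and after adding one distant point. The helper `principal_direction` is a hypothetical name introduced here.

```python
import numpy as np

def principal_direction(X):
    """Unit eigenvector of the sample covariance with the largest eigenvalue (rows are samples)."""
    C = np.cov(X, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(C)   # eigenvalues in ascending order
    return eigvecs[:, -1]

rng = np.random.default_rng(1)
# Synthetic data stretched along the x-axis.
X = np.column_stack([rng.normal(scale=2.0, size=100),
                     rng.normal(scale=0.5, size=100)])
w_clean = principal_direction(X)

# Add a single point far from the center, on the y-axis.
X_outlier = np.vstack([X, [0.0, 60.0]])
w_outlier = principal_direction(X_outlier)

# Angle between the two directions; an eigenvector's sign is arbitrary, hence abs().
cos_angle = min(1.0, abs(float(w_clean @ w_outlier)))
angle = np.degrees(np.arccos(cos_angle))
print("without outlier:", w_clean)
print("with one outlier:", w_outlier)
print(f"rotation of the principal direction: {angle:.1f} degrees")
```

One point out of 101 is enough to swing the principal axis from the x-axis to roughly the y-axis.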

Linear transformation

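If multiplying a matrix by a column vector A yields a new column vector B, the matrix is called a linear transformation from A to B.

We want the projected values to be as spread out as possible, and this spread can be measured mathematically by the variance.

That is, we look for a one-dimensional basis such that, once all data points are expressed as coordinates on this basis, the variance of those coordinates is as large as possible.

Explanation: a larger variance means the data are more spread out. It is usually assumed that the more spread out the data are along a feature dimension, the more important that feature is.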
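For 2-D data the variance-maximization idea can be checked by brute force. The variance of the coordinates on a unit vector u equals u^T C u, so scanning directions and keeping the one with the largest projected variance should land on the top eigenvector of C. The covariance used to generate the data and the 0.1° grid are assumptions of this sketch.

```python
import numpy as np

rng = np.random.default_rng(2)
# Correlated synthetic 2-D data, one sample per row.
X = rng.multivariate_normal(mean=[0.0, 0.0], cov=[[3.0, 1.2], [1.2, 1.0]], size=1000)
Xc = X - X.mean(axis=0)
C = np.cov(Xc, rowvar=False)

best_angle, best_var = None, -np.inf
for deg in np.arange(0.0, 180.0, 0.1):
    theta = np.radians(deg)
    u = np.array([np.cos(theta), np.sin(theta)])   # unit-length candidate basis vector
    proj_var = np.var(Xc @ u, ddof=1)              # variance of the projected coordinates
    assert np.isclose(proj_var, u @ C @ u)         # same value in closed form: u^T C u
    if proj_var > best_var:
        best_angle, best_var = deg, proj_var

eigvals, eigvecs = np.linalg.eigh(C)
top = eigvecs[:, -1]
top_angle = np.degrees(np.arctan2(top[1], top[0])) % 180.0
print(f"grid search:     {best_angle:.1f} deg, variance {best_var:.4f}")
print(f"top eigenvector: {top_angle:.1f} deg, eigenvalue {eigvals[-1]:.4f}")
```

Scanning angles only works in two dimensions; the eigen-decomposition gives the same direction directly in any dimension.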

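Higher dimensions raise one more issue. Consider reducing three dimensions to two. As before, we first find the direction that maximizes the variance after projection, which fixes the first direction, and then choose a second projection direction. If we again simply took the direction of maximum variance, it would almost coincide with the first one, and such a dimension adds nothing. An additional constraint is therefore needed: orthogonality.

Explanation: intuitively, if two fields are to represent as much of the original information as possible, we do not want any (linear) correlation between them, because correlation means the two fields are not fully independent and necessarily carry redundant information.

In this article, a "field" means a feature axis of the reduced samples.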

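Mathematically, the correlation between two fields can be expressed by their covariance. For two fields a and b that have already been centered to zero mean, over m samples:

$$\mathrm{Cov}(a, b) = \frac{1}{m-1}\sum_{i=1}^{m} a_i b_i$$

Note: the sum is divided by m-1 (the unbiased sample estimate) rather than by m.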

A covariance of 0 means the two fields are linearly uncorrelated.
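The covariance formula translates into a few lines of NumPy. This sketch (the function name `covariance` and the sample values are illustrative assumptions) centers each field, divides by m - 1, and compares the result with `np.cov`, which uses the same m - 1 denominator by default.

```python
import numpy as np

def covariance(a, b):
    """Sample covariance of two fields, dividing by m - 1."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    m = a.size
    # Center each field so the zero-mean formula applies.
    a0 = a - a.mean()
    b0 = b - b.mean()
    return (a0 @ b0) / (m - 1)

a = [1.0, 2.0, 3.0, 4.0]
b = [2.0, 4.0, 6.0, 8.0]    # b = 2a: perfectly linearly related
c = [1.0, -1.0, -1.0, 1.0]  # chosen so that its covariance with a is exactly 0

print(covariance(a, b))     # positive: the two fields move together
print(covariance(a, c))     # 0: linearly uncorrelated
print(np.cov(a, b)[0, 1])   # NumPy gives the same value as covariance(a, b)
```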

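To summarize, the optimization objective of PCA is:

Reduce a set of N-dimensional vectors to K dimensions (0 < K < N) by choosing K orthonormal basis vectors such that, after the original data are transformed onto this basis, the covariance between every pair of fields is 0 and the variance of each field is as large as possible.

So the key quantities are the variance and the covariance.

Covariance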

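In statistics, covariance characterizes the correlation between two random variables and reflects their second-order statistical properties. For two random variables $X_i$ and $X_j$, the covariance is defined as:

$$\mathrm{Cov}(X_i, X_j) = E\left[(X_i - E[X_i])(X_j - E[X_j])\right]$$

Covariance matrix (for N random variables $X_1, \dots, X_N$):

$$C = \begin{pmatrix} \mathrm{Cov}(X_1, X_1) & \cdots & \mathrm{Cov}(X_1, X_N) \\ \vdots & \ddots & \vdots \\ \mathrm{Cov}(X_N, X_1) & \cdots & \mathrm{Cov}(X_N, X_N) \end{pmatrix}$$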

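Suppose we have m samples with 2 features each, where $a_1$ denotes feature a of sample 1. The data matrix is then:

$$X = \begin{pmatrix} a_1 & a_2 & \cdots & a_m \\ b_1 & b_2 & \cdots & b_m \end{pmatrix}$$

Further assume that both fields have mean 0. For this pair of zero-mean m-dimensional vectors (the two rows of X),

$$\frac{1}{m-1}XX^{T} = \begin{pmatrix} \frac{1}{m-1}\sum_{i=1}^{m} a_i^{2} & \frac{1}{m-1}\sum_{i=1}^{m} a_i b_i \\ \frac{1}{m-1}\sum_{i=1}^{m} a_i b_i & \frac{1}{m-1}\sum_{i=1}^{m} b_i^{2} \end{pmatrix}$$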

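The diagonal elements are the variances of the two fields and the off-diagonal elements are their covariance; both are unified in a single matrix, the covariance matrix.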
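With fields stored as rows and samples as columns (the layout of X above), the whole covariance matrix is a single matrix product once each row is centered. A sketch with made-up numbers, checked against `np.cov`, which treats rows as variables by default:

```python
import numpy as np

# Rows are fields (feature a, feature b); columns are m samples. Illustrative values.
X = np.array([[1.0, 3.0, 2.0, 6.0, 3.0],
              [2.0, 1.0, 4.0, 5.0, 3.0]])
m = X.shape[1]

# Make every field zero-mean, as assumed in the derivation.
Xc = X - X.mean(axis=1, keepdims=True)

# Diagonal: variance of each field; off-diagonal: covariance between the fields.
C = Xc @ Xc.T / (m - 1)
print(C)
print(np.cov(X))   # rows are variables by default; also divides by m - 1
```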

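Recall the goal of PCA stated earlier: maximize the variances and drive the covariances to zero.

Achieving this is equivalent to diagonalizing the covariance matrix: make every off-diagonal element 0 and arrange the diagonal elements from largest to smallest, top to bottom.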

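Let C be the covariance matrix of the original data matrix X (rows are fields, already centered), and let P be a matrix whose rows are a set of basis vectors. Let Y = PX; Y is then the data X expressed in the basis P. Let D be the covariance matrix of Y. The relationship between D and C follows directly:

$$D = \frac{1}{m-1}YY^{T} = \frac{1}{m-1}(PX)(PX)^{T} = \frac{1}{m-1}PXX^{T}P^{T} = P\left(\frac{1}{m-1}XX^{T}\right)P^{T} = PCP^{T}$$

Explanation: we want to transform the data set X into a data set Y via PCA such that the covariance matrix of Y is diagonal.

From the derivation above, if a matrix P diagonalizes the covariance matrix of X (that is, $PCP^{T}$ is diagonal), then P is the PCA transform we are looking for.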

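The optimization goal therefore becomes finding a matrix P such that $PCP^{T}$ is a diagonal matrix whose diagonal elements are in descending order. The first K rows of P are then the basis we seek: multiplying X by the matrix formed from the first K rows of P reduces X from N dimensions to K dimensions while satisfying the optimization conditions above.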
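The identity D = PCP^T holds for any basis matrix P, which is easy to confirm numerically. In this sketch the data are random and P is a random orthonormal matrix taken from a QR decomposition; both are assumptions made only for the check.

```python
import numpy as np

rng = np.random.default_rng(3)
n, m = 3, 200
# Rows are fields, columns are samples; each field gets a different spread.
X = rng.normal(size=(n, m)) * np.array([[3.0], [1.0], [0.3]])
X = X - X.mean(axis=1, keepdims=True)   # zero-mean fields

C = X @ X.T / (m - 1)                   # covariance of the original data

Q, _ = np.linalg.qr(rng.normal(size=(n, n)))
P = Q.T                                 # rows of P form an orthonormal basis

Y = P @ X                               # the data expressed in the new basis
D = Y @ Y.T / (m - 1)                   # covariance of the transformed data

print(np.allclose(D, P @ C @ P.T))      # True: D = P C P^T
```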

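Matrix diagonalization

First, the covariance matrix C of the original data matrix X is a real symmetric matrix, which has special mathematical properties:

- Eigenvectors of a real symmetric matrix that belong to different eigenvalues are necessarily orthogonal.

- If an eigenvalue λ has multiplicity r, there are exactly r linearly independent eigenvectors for λ, so these r eigenvectors can be orthonormalized.

Let P be the matrix whose rows are the unit-normalized eigenvectors of the covariance matrix, so that each row of P is an eigenvector of C and $PCP^{T} = \Lambda$, the diagonal matrix of eigenvalues. If the rows of P are ordered by their eigenvalues from largest to smallest, then multiplying the matrix formed by the first K rows of P with the original data matrix X gives the reduced data matrix Y we need.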
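Putting the pieces together, a minimal NumPy sketch of the procedure (the function name `pca` and the random test data are assumptions, not code from the original post). It uses `np.linalg.eigh`, which is meant for symmetric matrices and returns orthonormal eigenvectors with eigenvalues in ascending order, so the order is reversed before building P.

```python
import numpy as np

def pca(X, k):
    """Reduce X (rows: N fields, columns: m samples) to k dimensions.

    Centers each field, forms the covariance matrix C, stacks the unit
    eigenvectors of C as the rows of P ordered by decreasing eigenvalue,
    and keeps the first k rows.
    """
    m = X.shape[1]
    Xc = X - X.mean(axis=1, keepdims=True)
    C = Xc @ Xc.T / (m - 1)
    eigvals, eigvecs = np.linalg.eigh(C)   # C is real symmetric
    order = np.argsort(eigvals)[::-1]      # largest eigenvalue first
    P = eigvecs[:, order].T                # each row of P is an eigenvector of C
    Y = P[:k] @ Xc                         # k x m reduced data
    return Y, P, C, eigvals[order]

rng = np.random.default_rng(4)
X = rng.normal(size=(4, 300)) * np.array([[4.0], [2.0], [1.0], [0.5]])
Y, P, C, lam = pca(X, k=2)

D = P @ C @ P.T
print(np.round(D, 6))   # numerically diagonal, entries in descending order
print(Y.shape)          # (2, 300)
```

`D` comes out diagonal with the eigenvalues in descending order, which is exactly the condition stated above.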

Algorithm and example

PCA algorithm

A small example:

Illustration of the dimensionality-reduction process
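A small worked example can be computed directly. The 2 x 5 matrix below is a hypothetical toy data set chosen for illustration (not necessarily the numbers in the figure); each row is a field and is already zero-mean. The covariance uses 1/(m-1) as noted earlier; dividing by m instead would scale the eigenvalues but leave the eigenvectors, and hence the projection, unchanged.

```python
import numpy as np

# Hypothetical toy data: 2 fields (rows) x 5 samples (columns), zero-mean per row.
X = np.array([[-1.0, -1.0, 0.0, 2.0, 0.0],
              [-2.0,  0.0, 0.0, 1.0, 1.0]])
m = X.shape[1]

C = X @ X.T / (m - 1)                  # [[1.5, 1.0], [1.0, 1.5]]
eigvals, eigvecs = np.linalg.eigh(C)   # ascending: [0.5, 2.5]

w = eigvecs[:, -1]                     # eigenvector of the largest eigenvalue
if w[0] < 0:                           # eigenvector sign is arbitrary; fix it for a stable printout
    w = -w

Y = w @ X                              # 2-D -> 1-D: one coordinate per sample
print(C)
print(eigvals)
print(w)   # approx [0.7071, 0.7071]
print(Y)   # approx [-2.1213, -0.7071, 0.0, 2.1213, 0.7071]
```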

https://www.jianshu.com/u/1ebb0a071a9f

| | 5.1 | 3.5 | 1.4 | 0.2 | Iris-setosa |
|---|---|---|---|---|---|
| 0 | 4.9 | 3.0 | 1.4 | 0.2 | Iris-setosa |
| 1 | 4.7 | 3.2 | 1.3 | 0.2 | Iris-setosa |
| 2 | 4.6 | 3.1 | 1.5 | 0.2 | Iris-setosa |
| 3 | 5.0 | 3.6 | 1.4 | 0.2 | Iris-setosa |
| 4 | 5.4 | 3.9 | 1.7 | 0.4 | Iris-setosa |

| | sepal_len | sepal_wid | petal_len | petal_wid | class |
|---|---|---|---|---|---|
| 0 | 4.9 | 3.0 | 1.4 | 0.2 | Iris-setosa |
| 1 | 4.7 | 3.2 | 1.3 | 0.2 | Iris-setosa |
| 2 | 4.6 | 3.1 | 1.5 | 0.2 | Iris-setosa |
| 3 | 5.0 | 3.6 | 1.4 | 0.2 | Iris-setosa |
| 4 | 5.4 | 3.9 | 1.7 | 0.4 | Iris-setosa |

[[-1.1483555 -0.11805969 -1.35396443 -1.32506301]

[-1.3905423 0.34485856 -1.41098555 -1.32506301]

[-1.51163569 0.11339944 -1.29694332 -1.32506301]

[-1.02726211 1.27069504 -1.35396443 -1.32506301]

[-0.54288852 1.9650724 -1.18290109 -1.0614657 ]

[-1.51163569 0.8077768 -1.35396443 -1.19326436]

[-1.02726211 0.8077768 -1.29694332 -1.32506301]

[-1.75382249 -0.34951881 -1.35396443 -1.32506301]

[-1.1483555 0.11339944 -1.29694332 -1.45686167]

[-0.54288852 1.50215416 -1.29694332 -1.32506301]

[-1.2694489 0.8077768 -1.23992221 -1.32506301]

[-1.2694489 -0.11805969 -1.35396443 -1.45686167]

[-1.87491588 -0.11805969 -1.52502777 -1.45686167]

[-0.05851493 2.19653152 -1.46800666 -1.32506301]

[-0.17960833 3.122368 -1.29694332 -1.0614657 ]

[-0.54288852 1.9650724 -1.41098555 -1.0614657 ]

[-0.90616871 1.03923592 -1.35396443 -1.19326436]

[-0.17960833 1.73361328 -1.18290109 -1.19326436]

[-0.90616871 1.73361328 -1.29694332 -1.19326436]

[-0.54288852 0.8077768 -1.18290109 -1.32506301]

[-0.90616871 1.50215416 -1.29694332 -1.0614657 ]

[-1.51163569 1.27069504 -1.58204889 -1.32506301]

[-0.90616871 0.57631768 -1.18290109 -0.92966704]

[-1.2694489 0.8077768 -1.06885886 -1.32506301]

[-1.02726211 -0.11805969 -1.23992221 -1.32506301]

[-1.02726211 0.8077768 -1.23992221 -1.0614657 ]

[-0.78507531 1.03923592 -1.29694332 -1.32506301]

[-0.78507531 0.8077768 -1.35396443 -1.32506301]

[-1.3905423 0.34485856 -1.23992221 -1.32506301]

[-1.2694489 0.11339944 -1.23992221 -1.32506301]

[-0.54288852 0.8077768 -1.29694332 -1.0614657 ]

[-0.78507531 2.42799064 -1.29694332 -1.45686167]

[-0.42179512 2.65944976 -1.35396443 -1.32506301]

[-1.1483555 0.11339944 -1.29694332 -1.45686167]

[-1.02726211 0.34485856 -1.46800666 -1.32506301]

[-0.42179512 1.03923592 -1.41098555 -1.32506301]

[-1.1483555 0.11339944 -1.29694332 -1.45686167]

[-1.75382249 -0.11805969 -1.41098555 -1.32506301]

[-0.90616871 0.8077768 -1.29694332 -1.32506301]

[-1.02726211 1.03923592 -1.41098555 -1.19326436]

[-1.63272909 -1.73827353 -1.41098555 -1.19326436]

[-1.75382249 0.34485856 -1.41098555 -1.32506301]

[-1.02726211 1.03923592 -1.23992221 -0.79786838]

[-0.90616871 1.73361328 -1.06885886 -1.0614657 ]

[-1.2694489 -0.11805969 -1.35396443 -1.19326436]

[-0.90616871 1.73361328 -1.23992221 -1.32506301]

[-1.51163569 0.34485856 -1.35396443 -1.32506301]

[-0.66398191 1.50215416 -1.29694332 -1.32506301]

[-1.02726211 0.57631768 -1.35396443 -1.32506301]

[ 1.39460583 0.34485856 0.52773232 0.25652088]

[ 0.66804545 0.34485856 0.41369009 0.38831953]

[ 1.27351244 0.11339944 0.64177455 0.38831953]

[-0.42179512 -1.73827353 0.12858453 0.12472222]

[ 0.78913885 -0.58097793 0.47071121 0.38831953]

[-0.17960833 -0.58097793 0.41369009 0.12472222]

[ 0.54695205 0.57631768 0.52773232 0.52011819]

[-1.1483555 -1.50681441 -0.27056327 -0.27067375]

[ 0.91023225 -0.34951881 0.47071121 0.12472222]

[-0.78507531 -0.81243705 0.07156341 0.25652088]

[-1.02726211 -2.43265089 -0.15652104 -0.27067375]

[ 0.06257847 -0.11805969 0.24262675 0.38831953]

[ 0.18367186 -1.96973265 0.12858453 -0.27067375]

[ 0.30476526 -0.34951881 0.52773232 0.25652088]

[-0.30070172 -0.34951881 -0.09949993 0.12472222]

[ 1.03132564 0.11339944 0.35666898 0.25652088]

[-0.30070172 -0.11805969 0.41369009 0.38831953]

[-0.05851493 -0.81243705 0.18560564 -0.27067375]

[ 0.42585866 -1.96973265 0.41369009 0.38831953]

[-0.30070172 -1.27535529 0.07156341 -0.1388751 ]

[ 0.06257847 0.34485856 0.58475344 0.78371551]

[ 0.30476526 -0.58097793 0.12858453 0.12472222]

[ 0.54695205 -1.27535529 0.64177455 0.38831953]

[ 0.30476526 -0.58097793 0.52773232 -0.00707644]

[ 0.66804545 -0.34951881 0.29964787 0.12472222]

[ 0.91023225 -0.11805969 0.35666898 0.25652088]

[ 1.15241904 -0.58097793 0.58475344 0.25652088]

[ 1.03132564 -0.11805969 0.69879566 0.65191685]

[ 0.18367186 -0.34951881 0.41369009 0.38831953]

[-0.17960833 -1.04389617 -0.15652104 -0.27067375]

[-0.42179512 -1.50681441 0.0145423 -0.1388751 ]

[-0.42179512 -1.50681441 -0.04247882 -0.27067375]

[-0.05851493 -0.81243705 0.07156341 -0.00707644]

[ 0.18367186 -0.81243705 0.75581678 0.52011819]

[-0.54288852 -0.11805969 0.41369009 0.38831953]

[ 0.18367186 0.8077768 0.41369009 0.52011819]

[ 1.03132564 0.11339944 0.52773232 0.38831953]

[ 0.54695205 -1.73827353 0.35666898 0.12472222]

[-0.30070172 -0.11805969 0.18560564 0.12472222]

[-0.42179512 -1.27535529 0.12858453 0.12472222]

[-0.42179512 -1.04389617 0.35666898 -0.00707644]

[ 0.30476526 -0.11805969 0.47071121 0.25652088]

[-0.05851493 -1.04389617 0.12858453 -0.00707644]

[-1.02726211 -1.73827353 -0.27056327 -0.27067375]

[-0.30070172 -0.81243705 0.24262675 0.12472222]

[-0.17960833 -0.11805969 0.24262675 -0.00707644]

[-0.17960833 -0.34951881 0.24262675 0.12472222]

[ 0.42585866 -0.34951881 0.29964787 0.12472222]

[-0.90616871 -1.27535529 -0.44162661 -0.1388751 ]

[-0.17960833 -0.58097793 0.18560564 0.12472222]

[ 0.54695205 0.57631768 1.2690068 1.70630611]

[-0.05851493 -0.81243705 0.75581678 0.91551417]

[ 1.51569923 -0.11805969 1.21198569 1.17911148]

[ 0.54695205 -0.34951881 1.04092235 0.78371551]

[ 0.78913885 -0.11805969 1.15496457 1.31091014]

[ 2.12116622 -0.11805969 1.61113348 1.17911148]

[-1.1483555 -1.27535529 0.41369009 0.65191685]

[ 1.75788602 -0.34951881 1.44007014 0.78371551]

[ 1.03132564 -1.27535529 1.15496457 0.78371551]

[ 1.63679263 1.27069504 1.32602791 1.70630611]

[ 0.78913885 0.34485856 0.75581678 1.04731282]

[ 0.66804545 -0.81243705 0.869859 0.91551417]

[ 1.15241904 -0.11805969 0.98390123 1.17911148]

[-0.17960833 -1.27535529 0.69879566 1.04731282]

[-0.05851493 -0.58097793 0.75581678 1.57450745]

[ 0.66804545 0.34485856 0.869859 1.4427088 ]

[ 0.78913885 -0.11805969 0.98390123 0.78371551]

[ 2.24225961 1.73361328 1.6681546 1.31091014]

[ 2.24225961 -1.04389617 1.78219682 1.4427088 ]

[ 0.18367186 -1.96973265 0.69879566 0.38831953]

[ 1.27351244 0.34485856 1.09794346 1.4427088 ]

[-0.30070172 -0.58097793 0.64177455 1.04731282]

[ 2.24225961 -0.58097793 1.6681546 1.04731282]

[ 0.54695205 -0.81243705 0.64177455 0.78371551]

[ 1.03132564 0.57631768 1.09794346 1.17911148]

[ 1.63679263 0.34485856 1.2690068 0.78371551]

[ 0.42585866 -0.58097793 0.58475344 0.78371551]

[ 0.30476526 -0.11805969 0.64177455 0.78371551]

[ 0.66804545 -0.58097793 1.04092235 1.17911148]

[ 1.63679263 -0.11805969 1.15496457 0.52011819]

[ 1.87897942 -0.58097793 1.32602791 0.91551417]

[ 2.48444641 1.73361328 1.49709126 1.04731282]

[ 0.66804545 -0.58097793 1.04092235 1.31091014]

[ 0.54695205 -0.58097793 0.75581678 0.38831953]

[ 0.30476526 -1.04389617 1.04092235 0.25652088]

[ 2.24225961 -0.11805969 1.32602791 1.4427088 ]

[ 0.54695205 0.8077768 1.04092235 1.57450745]

[ 0.66804545 0.11339944 0.98390123 0.78371551]

[ 0.18367186 -0.11805969 0.58475344 0.78371551]

[ 1.27351244 0.11339944 0.92688012 1.17911148]

[ 1.03132564 0.11339944 1.04092235 1.57450745]

[ 1.27351244 0.11339944 0.75581678 1.4427088 ]

[-0.05851493 -0.81243705 0.75581678 0.91551417]

[ 1.15241904 0.34485856 1.21198569 1.4427088 ]

[ 1.03132564 0.57631768 1.09794346 1.70630611]

[ 1.03132564 -0.11805969 0.81283789 1.4427088 ]

[ 0.54695205 -1.27535529 0.69879566 0.91551417]

[ 0.78913885 -0.11805969 0.81283789 1.04731282]

[ 0.42585866 0.8077768 0.92688012 1.4427088 ]

[ 0.06257847 -0.11805969 0.75581678 0.78371551]]
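The matrix above is the standardized (z-scored) data. The notebook code that produced it is not shown, so the following is only a sketch of this step, run on the five rows from the table; its numbers therefore differ from the printed output, which covers the whole data set. Judging from the first table, the CSV also seems to have been read without `header=None`, so the first sample (5.1, 3.5, 1.4, 0.2) became the header row and the printed matrix has 149 rows rather than the 150 of the Iris data set; `pandas.read_csv(..., header=None)` keeps that row.

```python
import numpy as np

# Five rows from the table above (sepal_len, sepal_wid, petal_len, petal_wid).
X = np.array([[4.9, 3.0, 1.4, 0.2],
              [4.7, 3.2, 1.3, 0.2],
              [4.6, 3.1, 1.5, 0.2],
              [5.0, 3.6, 1.4, 0.2],
              [5.4, 3.9, 1.7, 0.4]])

# Standardize each feature (column) to zero mean and unit variance.
# ddof=0 (population standard deviation) is what scikit-learn's StandardScaler uses.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
print(X_std)
```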

Covariance matrix

[[ 1.00675676 -0.10448539 0.87716999 0.82249094]

[-0.10448539 1.00675676 -0.41802325 -0.35310295]

[ 0.87716999 -0.41802325 1.00675676 0.96881642]

[ 0.82249094 -0.35310295 0.96881642 1.00675676]]

NumPy covariance matrix:

[[ 1.00675676 -0.10448539 0.87716999 0.82249094]

[-0.10448539 1.00675676 -0.41802325 -0.35310295]

[ 0.87716999 -0.41802325 1.00675676 0.96881642]

[ 0.82249094 -0.35310295 0.96881642 1.00675676]]
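Both covariance matrices above can be computed as follows; again a sketch on the five table rows, not the original notebook code. Samples are rows here, so the product is (X - mean)^T (X - mean) / (m - 1), and `np.cov` needs the transpose because it treats rows as variables by default.

```python
import numpy as np

# Five rows from the table (sepal_len, sepal_wid, petal_len, petal_wid).
X = np.array([[4.9, 3.0, 1.4, 0.2],
              [4.7, 3.2, 1.3, 0.2],
              [4.6, 3.1, 1.5, 0.2],
              [5.0, 3.6, 1.4, 0.2],
              [5.4, 3.9, 1.7, 0.4]])
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
m = X_std.shape[0]

# Covariance written out explicitly, samples in rows.
mean_vec = X_std.mean(axis=0)
cov_mat = (X_std - mean_vec).T @ (X_std - mean_vec) / (m - 1)
print("Covariance matrix\n", cov_mat)

# np.cov treats rows as variables by default, hence the transpose.
print("NumPy covariance matrix:\n", np.cov(X_std.T))
```

Because the standardization uses the population standard deviation (ddof=0) while the covariance divides by m - 1, every diagonal entry equals m/(m - 1) instead of 1. With the 149 rows above that is 149/148 ≈ 1.00676, the value on the printed diagonal.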

Eigenvectors

[[ 0.52308496 -0.36956962 -0.72154279 0.26301409]

[-0.25956935 -0.92681168 0.2411952 -0.12437342]

[ 0.58184289 -0.01912775 0.13962963 -0.80099722]

[ 0.56609604 -0.06381646 0.63380158 0.52321917]]

Eigenvalues

[2.92442837 0.93215233 0.14946373 0.02098259]
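A sketch of the eigen-decomposition step on the same five rows. It uses `np.linalg.eigh`, which is specialized for symmetric matrices such as C and returns real eigenvalues (in ascending order) with orthonormal eigenvectors in the columns; `np.linalg.eig` also works but gives no ordering guarantee.

```python
import numpy as np

# Five rows from the table (sepal_len, sepal_wid, petal_len, petal_wid).
X = np.array([[4.9, 3.0, 1.4, 0.2],
              [4.7, 3.2, 1.3, 0.2],
              [4.6, 3.1, 1.5, 0.2],
              [5.0, 3.6, 1.4, 0.2],
              [5.4, 3.9, 1.7, 0.4]])
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
cov_mat = np.cov(X_std.T)

# Column i of eig_vecs is the unit eigenvector for eig_vals[i].
eig_vals, eig_vecs = np.linalg.eigh(cov_mat)
print("Eigenvectors\n", eig_vecs)
print("Eigenvalues\n", eig_vals)

# Each column satisfies C v = lambda v, i.e. C V = V diag(lambda).
residual = np.abs(cov_mat @ eig_vecs - eig_vecs * eig_vals).max()
print("max |C V - V Lambda| =", residual)
```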

[(2.92442836911111, array([ 0.52308496, -0.25956935, 0.58184289, 0.56609604])), (0.9321523302535064, array([-0.36956962, -0.92681168, -0.01912775, -0.06381646])), (0.14946373489813336, array([-0.72154279, 0.2411952 , 0.13962963, 0.63380158])), (0.020982592764270974, array([ 0.26301409, -0.12437342, -0.80099722, 0.52321917]))]

----------

Eigenvalues in descending order:

2.92442836911111

0.9321523302535064

0.14946373489813336

0.020982592764270974
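Pairing each eigenvalue with its eigenvector and sorting, as in the list above, can be sketched like this (same five-row assumption):

```python
import numpy as np

# Five rows from the table (sepal_len, sepal_wid, petal_len, petal_wid).
X = np.array([[4.9, 3.0, 1.4, 0.2],
              [4.7, 3.2, 1.3, 0.2],
              [4.6, 3.1, 1.5, 0.2],
              [5.0, 3.6, 1.4, 0.2],
              [5.4, 3.9, 1.7, 0.4]])
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
eig_vals, eig_vecs = np.linalg.eigh(np.cov(X_std.T))

# Pair each eigenvalue with its eigenvector (a column of eig_vecs).
eig_pairs = [(float(eig_vals[i]), eig_vecs[:, i]) for i in range(len(eig_vals))]

# Sort by eigenvalue only, largest first.
eig_pairs.sort(key=lambda pair: pair[0], reverse=True)

print("Eigenvalues in descending order:")
for value, _ in eig_pairs:
    print(value)
```

Sorting with an explicit key compares only the eigenvalues. Sorting the tuples directly would fall back to comparing the NumPy arrays whenever two eigenvalues tie, which raises a `ValueError`.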

[72.6200333269203, 23.14740685864416, 3.711515564584526, 0.521044249851025]


array([ 72.62003333, 95.76744019, 99.47895575, 100. ])

[1 2 3 4]

-----------

[ 1 3 6 10]
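The explained-variance percentages and their running total can be reproduced from the eigenvalues printed above. `np.cumsum` returns cumulative sums, which is what the `[1 2 3 4]` to `[ 1 3 6 10]` check illustrates. A sketch:

```python
import numpy as np

# Eigenvalues as printed in the output above.
eig_vals = np.array([2.92442836911111, 0.9321523302535064,
                     0.14946373489813336, 0.020982592764270974])

tot = eig_vals.sum()
var_exp = 100.0 * np.sort(eig_vals)[::-1] / tot   # percent of total variance per component
cum_var_exp = np.cumsum(var_exp)                  # running total of those percentages

print(var_exp)                   # approx [72.62, 23.15, 3.71, 0.52]
print(cum_var_exp)               # approx [72.62, 95.77, 99.48, 100.0]
print(np.cumsum([1, 2, 3, 4]))   # cumulative sums 1, 3, 6, 10
```

The first two components carry about 95.8% of the variance, which is presumably why W keeps two columns.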

Matrix W:

[[ 0.52308496 -0.36956962]

[-0.25956935 -0.92681168]

[ 0.58184289 -0.01912775]

[ 0.56609604 -0.06381646]]
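W stacks the eigenvectors of the two largest eigenvalues as columns. A sketch that rebuilds it from the eigenvectors and eigenvalues printed above (values copied from the output, so orthonormality holds only to about 8 decimal places):

```python
import numpy as np

# Eigenvectors as printed above: column i belongs to the i-th eigenvalue.
eig_vecs = np.array([[ 0.52308496, -0.36956962, -0.72154279,  0.26301409],
                     [-0.25956935, -0.92681168,  0.2411952 , -0.12437342],
                     [ 0.58184289, -0.01912775,  0.13962963, -0.80099722],
                     [ 0.56609604, -0.06381646,  0.63380158,  0.52321917]])
eig_vals = np.array([2.92442836911111, 0.9321523302535064,
                     0.14946373489813336, 0.020982592764270974])

# Keep the eigenvectors of the k largest eigenvalues as the columns of W (4 x k).
k = 2
order = np.argsort(eig_vals)[::-1][:k]
W = np.hstack([eig_vecs[:, [i]] for i in order])   # equivalent to eig_vecs[:, order]
print("Matrix W:\n", W)
```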


array([[-2.10795032, 0.64427554],

[-2.38797131, 0.30583307],

[-2.32487909, 0.56292316],

[-2.40508635, -0.687591 ],

[-2.08320351, -1.53025171],

[-2.4636848 , -0.08795413],

[-2.25174963, -0.25964365],

[-2.3645813 , 1.08255676],

[-2.20946338, 0.43707676],

[-2.17862017, -1.08221046],

[-2.34525657, -0.17122946],

[-2.24590315, 0.6974389 ],

[-2.66214582, 0.92447316],

[-2.2050227 , -1.90150522],

[-2.25993023, -2.73492274],

[-2.21591283, -1.52588897],

[-2.20705382, -0.52623535],

[-1.9077081 , -1.4415791 ],

[-2.35411558, -1.17088308],

[-1.93202643, -0.44083479],

[-2.21942518, -0.96477499],

[-2.79116421, -0.50421849],

[-1.83814105, -0.11729122],

[-2.24572458, -0.17450151],

[-1.97825353, 0.59734172],

[-2.06935091, -0.27755619],

[-2.18514506, -0.56366755],

[-2.15824269, -0.34805785],

[-2.28843932, 0.30256102],

[-2.16501749, 0.47232759],

[-1.8491597 , -0.45547527],

[-2.62023392, -1.84237072],

[-2.44885384, -2.1984673 ],

[-2.20946338, 0.43707676],

[-2.23112223, 0.17266644],

[-2.06147331, -0.6957435 ],

[-2.20946338, 0.43707676],

[-2.45783833, 0.86912843],

[-2.1884075 , -0.30439609],

[-2.30357329, -0.48039222],

[-1.89932763, 2.31759817],

[-2.57799771, 0.4400904 ],

[-1.98020921, -0.50889705],

[-2.14679556, -1.18365675],

[-2.09668176, 0.68061705],

[-2.39554894, -1.16356284],

[-2.41813611, 0.34949483],

[-2.24196231, -1.03745802],

[-2.22484727, -0.04403395],

[ 1.09225538, -0.86148748],

[ 0.72045861, -0.59920238],

[ 1.2299583 , -0.61280832],

[ 0.37598859, 1.756516 ],

[ 1.05729685, 0.21303055],

[ 0.36816104, 0.58896262],

[ 0.73800214, -0.77956125],

[-0.52021731, 1.84337921],

[ 0.9113379 , -0.02941906],

[-0.01292322, 1.02537703],

[-0.15020174, 2.65452146],

[ 0.42437533, 0.05686991],

[ 0.52894687, 1.77250558],

[ 0.70241525, 0.18484154],

[-0.05385675, 0.42901221],

[ 0.86277668, -0.50943908],

[ 0.33388091, 0.18785518],

[ 0.13504146, 0.7883247 ],

[ 1.19457128, 1.63549265],

[ 0.13677262, 1.30063807],

[ 0.72711201, -0.40394501],

[ 0.45564294, 0.41540628],

[ 1.21038365, 0.94282042],

[ 0.61327355, 0.4161824 ],

[ 0.68512164, 0.06335788],

[ 0.85951424, -0.25016762],

[ 1.23906722, 0.08500278],

[ 1.34575245, -0.32669695],

[ 0.64732915, 0.22336443],

[-0.06728496, 1.05414028],

[ 0.10033285, 1.56100021],

[-0.00745518, 1.57050182],

[ 0.2179082 , 0.77368423],

[ 1.04116321, 0.63744742],

[ 0.20719664, 0.27736006],

[ 0.42154138, -0.85764157],

[ 1.03691937, -0.52112206],

[ 1.015435 , 1.39413373],

[ 0.0519502 , 0.20903977],

[ 0.25582921, 1.32747797],

[ 0.25384813, 1.11700714],

[ 0.60915822, -0.02858679],

[ 0.31116522, 0.98711256],

[-0.39679548, 2.01314578],

[ 0.26536661, 0.85150613],

[ 0.07385897, 0.17160757],

[ 0.20854936, 0.37771566],

[ 0.55843737, 0.15286277],

[-0.47853403, 1.53421644],

[ 0.23545172, 0.59332536],

[ 1.8408037 , -0.86943848],

[ 1.13831104, 0.70171953],

[ 2.19615974, -0.54916658],

[ 1.42613827, 0.05187679],

[ 1.8575403 , -0.28797217],

[ 2.74511173, -0.78056359],

[ 0.34010583, 1.5568955 ],

[ 2.29180093, -0.40328242],

[ 1.98618025, 0.72876171],

[ 2.26382116, -1.91685818],

[ 1.35591821, -0.69255356],

[ 1.58471851, 0.43102351],

[ 1.87342402, -0.41054652],

[ 1.23656166, 1.16818977],

[ 1.45128483, 0.4451459 ],

[ 1.58276283, -0.67521526],

[ 1.45956552, -0.25105642],

[ 2.43560434, -2.55096977],

[ 3.29752602, 0.01266612],

[ 1.23377366, 1.71954411],

[ 2.03218282, -0.90334021],

[ 0.95980311, 0.57047585],

[ 2.88717988, -0.38895776],

[ 1.31405636, 0.48854962],

[ 1.69619746, -1.01153249],

[ 1.94868773, -0.99881497],

[ 1.1574572 , 0.31987373],

[ 1.007133 , -0.06550254],

[ 1.7733922 , 0.19641059],

[ 1.85327106, -0.55077372],

[ 2.4234788 , -0.2397454 ],

[ 2.31353522, -2.62038074],

[ 1.84800289, 0.18799967],

[ 1.09649923, 0.29708201],

[ 1.1812503 , 0.81858241],

[ 2.79178861, -0.83668445],

[ 1.57340399, -1.07118383],

[ 1.33614369, -0.420823 ],

[ 0.91061354, -0.01965942],

[ 1.84350913, -0.66872729],

[ 2.00701161, -0.60663655],

[ 1.89319854, -0.68227708],

[ 1.13831104, 0.70171953],

[ 2.03519535, -0.86076914],

[ 1.99464025, -1.04517619],

[ 1.85977129, -0.37934387],

[ 1.54200377, 0.90808604],

[ 1.50925493, -0.26460621],

[ 1.3690965 , -1.01583909],

[ 0.94680339, 0.02182097]])
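The array above is the projection of the standardized data onto W: with samples as rows, Y = X_std W, the row-wise form of z = w^T x. The sketch below applies this to the first three standardized rows and the matrix W printed above and reproduces the first three rows of the output, up to rounding of the printed inputs.

```python
import numpy as np

# First three rows of the standardized matrix printed above.
X_std = np.array([[-1.1483555 , -0.11805969, -1.35396443, -1.32506301],
                  [-1.3905423 ,  0.34485856, -1.41098555, -1.32506301],
                  [-1.51163569,  0.11339944, -1.29694332, -1.32506301]])

# Matrix W (4 x 2) as printed above: the top two eigenvectors as columns.
W = np.array([[ 0.52308496, -0.36956962],
              [-0.25956935, -0.92681168],
              [ 0.58184289, -0.01912775],
              [ 0.56609604, -0.06381646]])

# Samples are rows, so each row x^T is mapped to x^T W, i.e. (W^T x)^T.
Y = X_std @ W
print(Y)   # approx [[-2.108, 0.644], [-2.388, 0.306], [-2.325, 0.563]]
```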

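Further discussion

From the mathematical explanation of PCA above, we can see some of its capabilities and limitations. In essence, PCA takes the directions of largest variance as the main features and "decorrelates" the data along orthogonal directions, that is, it makes the data uncorrelated across the different orthogonal directions.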

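PCA therefore has some limitations. For example, it removes linear correlation well but can do nothing about higher-order dependencies. For data with higher-order dependencies, Kernel PCA is worth considering: a kernel function turns the nonlinear dependencies into linear ones. We will not go into that here. In addition, PCA assumes that the main features of the data lie along orthogonal directions; if there are several high-variance directions that are not mutually orthogonal, PCA becomes much less effective.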
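A small synthetic illustration of the first limitation (the data are an assumption, not from the post): points evenly spaced on the unit circle are fully determined by one parameter, the angle, and x and y are tightly linked by x² + y² = 1, yet their covariance is 0. The covariance matrix is then a multiple of the identity, so PCA finds no preferred direction and cannot reduce the data to one dimension.

```python
import numpy as np

# 360 points evenly spaced on the unit circle, one sample per row.
theta = np.linspace(0.0, 2.0 * np.pi, 360, endpoint=False)
X = np.column_stack([np.cos(theta), np.sin(theta)])

C = np.cov(X, rowvar=False)
eigvals = np.linalg.eigvalsh(C)
print(np.round(C, 6))   # off-diagonal entries are (numerically) zero
print(eigvals)          # two equal eigenvalues: no dominant direction
```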

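Finally, PCA is a parameter-free technique: given the same data, and setting data cleaning aside, anyone who runs it gets the same result, with no subjective parameters involved. This makes PCA easy to implement generically, but it also means PCA itself cannot be tuned for a particular use case.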


浙公网安备 33010602011771号
