Reading notes on 《深度学习入门-基于Python的理论与实现》, part 07

Four-dimensional arrays

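    import numpy as np

    x = np.random.rand(10, 1, 28, 28)  # 10 samples, 1 channel, 28x28 pixels

    # Access the first sample
    # print(x[0])

    # Access the spatial data of the first channel of the first sample
    print(x[0][0])  # a 28x28 array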

Unrolling with im2col

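im2col is a function that unrolls the input data into a layout that suits the filters (weights). Applying im2col to 3-dimensional input data turns it into a 2-dimensional matrix (strictly speaking, it converts 4-dimensional data, which includes the batch dimension, into 2-dimensional data).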

    import sys, os
    sys.path.append(os.pardir)  # so that files in the parent directory can be imported
    import numpy as np
    from common.util import im2col

    x1 = np.random.rand(1, 3, 7, 7)  # batch size, channels, height, width
    col1 = im2col(x1, 5, 5, stride=1, pad=0)  # filter height, filter width, stride, padding
    print(col1.shape)  # (9, 75)

    x2 = np.random.rand(10, 3, 7, 7)  # 10 samples
    col2 = im2col(x2, 5, 5, stride=1, pad=0)
    print(col2.shape)  # (90, 75)
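To make those shapes concrete, here is a minimal NumPy sketch of what an im2col-style function might do. The name `im2col_sketch` is hypothetical, and this is not necessarily how `common.util.im2col` is written; it only assumes the same argument order (filter height, filter width, stride, pad) and uses plain loops for readability rather than speed.

```python
import numpy as np

def im2col_sketch(x, filter_h, filter_w, stride=1, pad=0):
    """Hypothetical stand-in for common.util.im2col (illustration only)."""
    N, C, H, W = x.shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1

    # Zero-pad only the two spatial axes.
    img = np.pad(x, [(0, 0), (0, 0), (pad, pad), (pad, pad)], mode="constant")

    rows = []
    for n in range(N):
        for i in range(out_h):
            for j in range(out_w):
                top, left = i * stride, j * stride
                patch = img[n, :, top:top + filter_h, left:left + filter_w]
                rows.append(patch.reshape(-1))  # flatten (C, FH, FW) into one row
    return np.array(rows)

x1 = np.random.rand(1, 3, 7, 7)
col1 = im2col_sketch(x1, 5, 5)
print(col1.shape)  # (9, 75)

x2 = np.random.rand(10, 3, 7, 7)
col2 = im2col_sketch(x2, 5, 5)
print(col2.shape)  # (90, 75)
```

Each row holds one filter-sized patch (3 x 5 x 5 = 75 values), and there is one row per output position per sample (3 x 3 = 9 positions for a 7x7 input with a 5x5 filter).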

Implementing the convolution layer with im2col

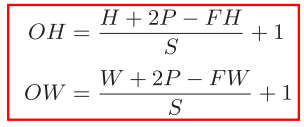

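Data with C channels, height H, and width W has shape (C, H, W). Filters are written in the same (channel, height, width) order: with C channels, filter height FH (Filter Height), and filter width FW (Filter Width), the filter shape is (C, FH, FW).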

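    import sys, os
    sys.path.append(os.pardir)  # so that files in the parent directory can be imported
    import numpy as np
    from common.util import im2col


    class Convolution:
        def __init__(self, W, b, stride=1, pad=0):
            self.W = W            # weights (filters)
            self.b = b            # bias
            self.stride = stride  # stride (must be at least 1)
            self.pad = pad        # padding

        def forward(self, x):
            FN, C, FH, FW = self.W.shape  # number of filters, channels, height, width
            N, C, H, W = x.shape          # batch size, channels, height, width

            # With input size (H, W), filter size (FH, FW), padding P and stride S,
            # the output size is OH = (H + 2P - FH) / S + 1, OW = (W + 2P - FW) / S + 1.
            out_h = int(1 + (H + 2 * self.pad - FH) / self.stride)
            out_w = int(1 + (W + 2 * self.pad - FW) / self.stride)

            # Compute the convolution as a product of 2-D arrays
            col = im2col(x, FH, FW, self.stride, self.pad)
            col_W = self.W.reshape(FN, -1).T  # unroll the filters
            out = np.dot(col, col_W) + self.b

            # Restore the output to a 4-D array of shape (N, FN, out_h, out_w)
            out = out.reshape(N, out_h, out_w, -1).transpose(0, 3, 1, 2)
            return out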
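The output-size formula used in `forward` can be checked by hand. The helper below is a small sketch (the name `conv_output_size` is made up for these notes). It uses integer division and raises an error when the filter does not tile the padded input evenly; that check is an assumption added here, not something the Convolution class enforces.

```python
def conv_output_size(input_size, filter_size, stride=1, pad=0):
    """Output size along one axis: (H + 2P - FH) / S + 1."""
    span = input_size + 2 * pad - filter_size
    if span < 0 or span % stride != 0:
        raise ValueError("filter, stride and pad do not fit the input evenly")
    return span // stride + 1

print(conv_output_size(7, 5))                   # 3
print(conv_output_size(28, 5))                  # 24
print(conv_output_size(7, 3, stride=2, pad=0))  # 3
print(conv_output_size(4, 3, stride=1, pad=1))  # 4
```

The first call matches the im2col example: a 7x7 input with a 5x5 filter gives a 3x3 output, that is, 9 rows per sample.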

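Passing -1 to reshape makes it work out the size of that dimension automatically so that the total number of elements stays the same. For example, an array of shape (10, 3, 5, 5) has 750 elements in total; after reshape(10, -1) it becomes an array of shape (10, 75).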
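A quick check of the reshape behaviour described above, using zero-filled arrays as placeholders (the variable names are only for illustration):

```python
import numpy as np

W = np.zeros((10, 3, 5, 5))  # 10 filters, 3 channels, 5x5 each
print(W.size)                # 750

flat = W.reshape(10, -1)     # NumPy infers 750 / 10 = 75
print(flat.shape)            # (10, 75)

# The convolution layer multiplies the unrolled input by the transposed filters.
col = np.zeros((9, 75))      # e.g. im2col output for one 3x7x7 sample
out = np.dot(col, flat.T)
print(out.shape)             # (9, 10)
```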

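    import sys, os
    sys.path.append(os.pardir)  # so that files in the parent directory can be imported
    import numpy as np
    from common.util import im2col


    class Pooling:
        def __init__(self, pool_h, pool_w, stride=1, pad=0):
            self.pool_h = pool_h
            self.pool_w = pool_w
            self.stride = stride
            self.pad = pad

        def forward(self, x):
            N, C, H, W = x.shape
            out_h = int(1 + (H - self.pool_h) / self.stride)
            out_w = int(1 + (W - self.pool_w) / self.stride)

            # 1. Unroll the input so that each row holds one pooling window
            col = im2col(x, self.pool_h, self.pool_w, self.stride, self.pad)
            col = col.reshape(-1, self.pool_h * self.pool_w)

            # 2. Take the maximum of each row
            out = np.max(col, axis=1)

            # 3. Reshape back to (N, C, out_h, out_w)
            out = out.reshape(N, out_h, out_w, C).transpose(0, 3, 1, 2)
            return out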
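As a cross-check on the im2col-based layer, max pooling for the common non-overlapping case can also be sketched with a plain reshape. This is a different technique from the one in these notes, and the function name `max_pool_nonoverlap` is hypothetical. It assumes the stride equals the window size, no padding, and that H and W are multiples of the window size.

```python
import numpy as np

def max_pool_nonoverlap(x, pool_h, pool_w):
    """Max pooling with stride == window size (illustration only)."""
    N, C, H, W = x.shape
    if H % pool_h or W % pool_w:
        raise ValueError("H and W must be multiples of the pooling window")
    # Split each spatial axis into (number of windows, window size) ...
    blocks = x.reshape(N, C, H // pool_h, pool_h, W // pool_w, pool_w)
    # ... then take the maximum inside every window.
    return blocks.max(axis=(3, 5))

x = np.arange(16, dtype=float).reshape(1, 1, 4, 4)
out = max_pool_nonoverlap(x, 2, 2)
print(out.shape)  # (1, 1, 2, 2)
print(out[0, 0])  # rows [5, 7] and [13, 15]
```

For the 4x4 input holding 0 to 15, each 2x2 window keeps its bottom-right value, which is the largest one in that window.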

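transpose(0, 3, 1, 2) specifies the new order of the axes: the new axis 0 is the old axis 0, the new axis 1 is the old axis 3, the new axis 2 is the old axis 1, and the new axis 3 is the old axis 2. An array of shape (N, out_h, out_w, C) therefore becomes (N, C, out_h, out_w).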
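The axis reordering can be verified on a small array. The sizes below are arbitrary values chosen for illustration:

```python
import numpy as np

N, out_h, out_w, C = 2, 4, 5, 3
x = np.arange(N * out_h * out_w * C).reshape(N, out_h, out_w, C)

y = x.transpose(0, 3, 1, 2)  # (N, out_h, out_w, C) -> (N, C, out_h, out_w)
print(y.shape)               # (2, 3, 4, 5)

# Element (n, c, h, w) of y is element (n, h, w, c) of x.
print(y[1, 2, 3, 4] == x[1, 3, 4, 2])  # True
```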

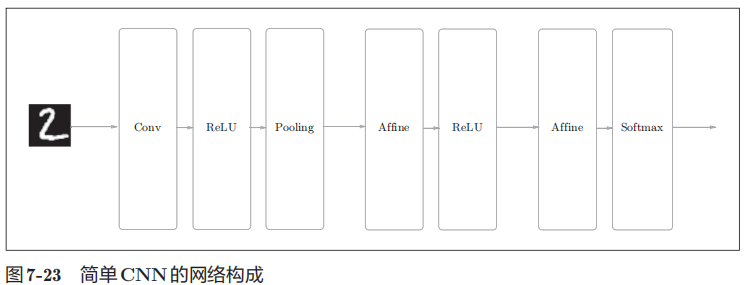

The network is composed of "Convolution - ReLU - Pooling - Affine - ReLU - Affine - Softmax", and we implement it as a class named SimpleConvNet.

First, look at the initializer (__init__) of SimpleConvNet, which takes the following arguments.

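Arguments

• input_dim: shape of the input data, as (channels, height, width)

• conv_param: hyperparameters of the convolution layer (a dictionary). Its keys are:

filter_num: number of filters

filter_size: size of the filters

stride: stride

pad: padding

• hidden_size: number of neurons in the hidden (fully connected) layer

• output_size: number of neurons in the output (fully connected) layer

• weight_init_std: standard deviation of the weights at initialization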
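To see how these arguments determine the layer sizes, the sketch below works through the arithmetic for the default values used in these notes (a 1x28x28 input, 30 filters of size 5, stride 1, no padding). It assumes the 2x2 pooling with stride 2 that SimpleConvNet uses, which halves the height and width.

```python
input_dim = (1, 28, 28)
conv_param = {"filter_num": 30, "filter_size": 5, "pad": 0, "stride": 1}
hidden_size = 100

input_size = input_dim[1]
conv_output_size = (
    (input_size - conv_param["filter_size"] + 2 * conv_param["pad"])
    // conv_param["stride"] + 1
)
# 2x2 pooling with stride 2 halves each spatial dimension.
pool_output_size = conv_param["filter_num"] * (conv_output_size // 2) ** 2

print(conv_output_size)                 # 24
print(pool_output_size)                 # 4320
print((pool_output_size, hidden_size))  # (4320, 100), the shape of W2
```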

    # coding: utf-8
    import sys, os
    sys.path.append(os.pardir)  # so that files in the parent directory can be imported
    import pickle
    import numpy as np
    from collections import OrderedDict
    from common.layers import *
    from common.gradient import numerical_gradient


    class SimpleConvNet:
        """A simple ConvNet

        conv - relu - pool - affine - relu - affine - softmax

        Parameters
        ----------
        input_dim : shape of the input data as (channels, height, width)
            ((1, 28, 28) for MNIST)
        conv_param : dict of convolution-layer hyperparameters
            (filter_num, filter_size, pad, stride)
        hidden_size : number of neurons in the hidden (fully connected) layer
        output_size : number of neurons in the output layer (10 for MNIST)
        weight_init_std : standard deviation of the initial weights (e.g. 0.01)
        """
        def __init__(self, input_dim=(1, 28, 28),
                     conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                     hidden_size=100, output_size=10, weight_init_std=0.01):
            filter_num = conv_param['filter_num']
            filter_size = conv_param['filter_size']
            filter_pad = conv_param['pad']
            filter_stride = conv_param['stride']
            input_size = input_dim[1]
            conv_output_size = (input_size - filter_size + 2*filter_pad) / filter_stride + 1
            pool_output_size = int(filter_num * (conv_output_size/2) * (conv_output_size/2))

            # Initialize the weights
            self.params = {}
            self.params['W1'] = weight_init_std * \
                                np.random.randn(filter_num, input_dim[0], filter_size, filter_size)
            self.params['b1'] = np.zeros(filter_num)
            self.params['W2'] = weight_init_std * \
                                np.random.randn(pool_output_size, hidden_size)
            self.params['b2'] = np.zeros(hidden_size)
            self.params['W3'] = weight_init_std * \
                                np.random.randn(hidden_size, output_size)
            self.params['b3'] = np.zeros(output_size)

            # Create the layers
            self.layers = OrderedDict()
            self.layers['Conv1'] = Convolution(self.params['W1'], self.params['b1'],
                                               conv_param['stride'], conv_param['pad'])
            self.layers['Relu1'] = Relu()
            self.layers['Pool1'] = Pooling(pool_h=2, pool_w=2, stride=2)
            self.layers['Affine1'] = Affine(self.params['W2'], self.params['b2'])
            self.layers['Relu2'] = Relu()
            self.layers['Affine2'] = Affine(self.params['W3'], self.params['b3'])

            self.last_layer = SoftmaxWithLoss()

        def predict(self, x):
            for layer in self.layers.values():
                x = layer.forward(x)

            return x

        def loss(self, x, t):
            """Compute the loss.

            x is the input data and t is the teacher label.
            """
            y = self.predict(x)
            return self.last_layer.forward(y, t)

        def accuracy(self, x, t, batch_size=100):
            if t.ndim != 1:
                t = np.argmax(t, axis=1)

            acc = 0.0

            for i in range(int(x.shape[0] / batch_size)):
                tx = x[i*batch_size:(i+1)*batch_size]
                tt = t[i*batch_size:(i+1)*batch_size]
                y = self.predict(tx)
                y = np.argmax(y, axis=1)
                acc += np.sum(y == tt)

            return acc / x.shape[0]

        def numerical_gradient(self, x, t):
            """Compute the gradients by numerical differentiation.

            Parameters
            ----------
            x : input data
            t : teacher labels

            Returns
            -------
            Dictionary holding the gradients of each layer
                grads['W1'], grads['W2'], ... are the weight gradients
                grads['b1'], grads['b2'], ... are the bias gradients
            """
            loss_w = lambda w: self.loss(x, t)

            grads = {}
            for idx in (1, 2, 3):
                grads['W' + str(idx)] = numerical_gradient(loss_w, self.params['W' + str(idx)])
                grads['b' + str(idx)] = numerical_gradient(loss_w, self.params['b' + str(idx)])

            return grads

        def gradient(self, x, t):
            """Compute the gradients by backpropagation.

            Parameters
            ----------
            x : input data
            t : teacher labels

            Returns
            -------
            Dictionary holding the gradients of each layer
                grads['W1'], grads['W2'], ... are the weight gradients
                grads['b1'], grads['b2'], ... are the bias gradients
            """
            # forward
            self.loss(x, t)

            # backward
            dout = 1
            dout = self.last_layer.backward(dout)

            layers = list(self.layers.values())
            layers.reverse()
            for layer in layers:
                dout = layer.backward(dout)

            # Collect the gradients
            grads = {}
            grads['W1'], grads['b1'] = self.layers['Conv1'].dW, self.layers['Conv1'].db
            grads['W2'], grads['b2'] = self.layers['Affine1'].dW, self.layers['Affine1'].db
            grads['W3'], grads['b3'] = self.layers['Affine2'].dW, self.layers['Affine2'].db

            return grads

        def save_params(self, file_name="params.pkl"):
            params = {}
            for key, val in self.params.items():
                params[key] = val
            with open(file_name, 'wb') as f:
                pickle.dump(params, f)

        def load_params(self, file_name="params.pkl"):
            with open(file_name, 'rb') as f:
                params = pickle.load(f)
            for key, val in params.items():
                self.params[key] = val

            for i, key in enumerate(['Conv1', 'Affine1', 'Affine2']):
                self.layers[key].W = self.params['W' + str(i+1)]
                self.layers[key].b = self.params['b' + str(i+1)]
