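keepalived High Availability

1. Introduction to keepalived

1.1 What is keepalived?

Keepalived was originally designed for the LVS load balancer, to manage and monitor the state of each service node in an LVS cluster. VRRP support for high availability was added later. As a result, besides managing LVS, Keepalived can also serve as a high-availability solution for other services such as Nginx, HAProxy, and MySQL.

Keepalived implements high availability mainly through VRRP, the Virtual Router Redundancy Protocol. VRRP was created to remove the single point of failure of static routing; it keeps the network running without interruption when an individual node goes down.

So Keepalived can configure and manage LVS and health-check the nodes behind it, and it can also provide high availability for network services on the system.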

keepalived official website

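1.2 Key functions of keepalived

keepalived has three key functions:

- Managing the LVS load balancer

- Health-checking the nodes of an LVS cluster

- Providing high availability (failover) for network services on the system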

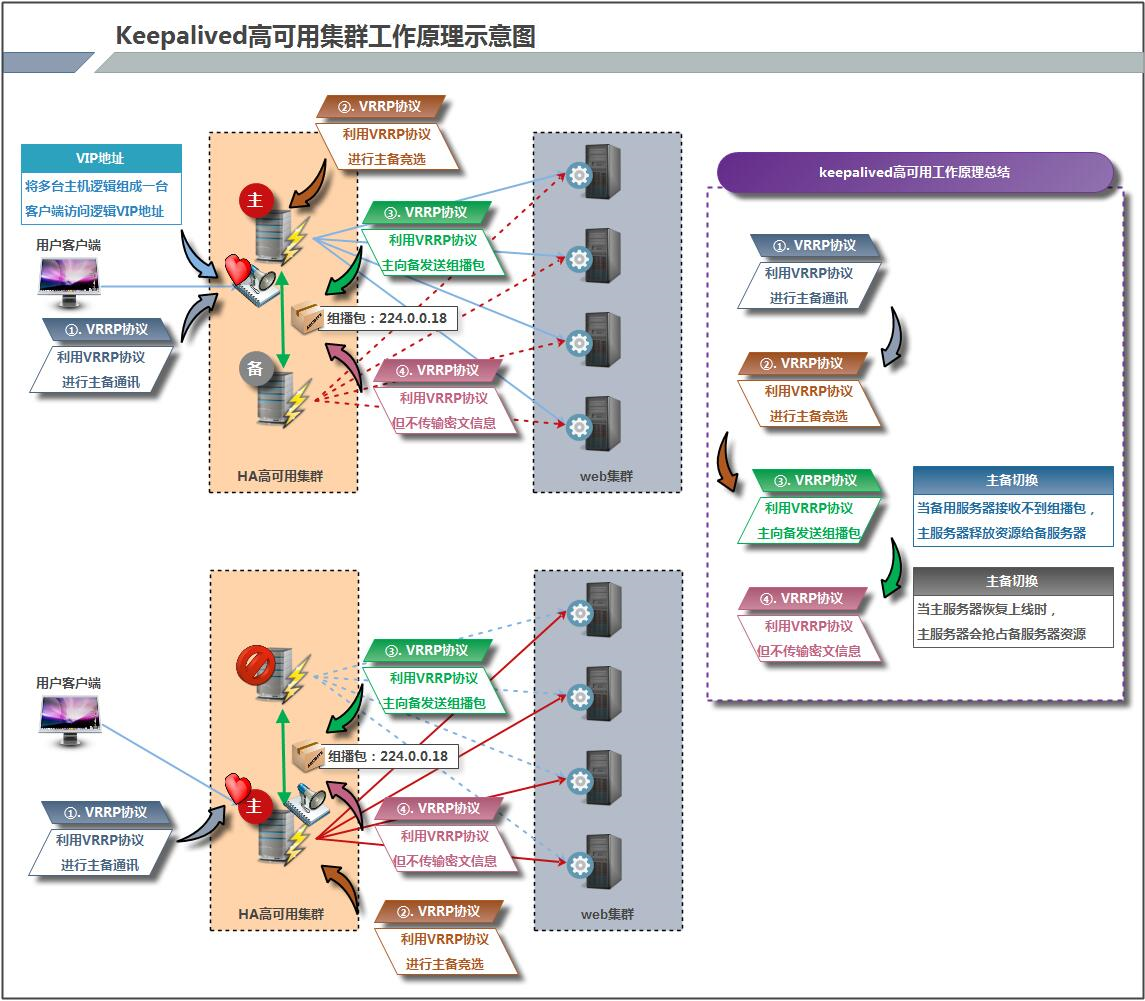

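1.3 How keepalived high-availability failover works

Failover between Keepalived high-availability nodes is implemented through VRRP (Virtual Router Redundancy Protocol).

While Keepalived is working normally, the Master node keeps sending heartbeat messages (by multicast) to the Backup node to say that it is still alive. When the Master fails, it can no longer send heartbeats; the Backup stops receiving them and runs its takeover routine to take over the Master's IP resources and services. When the Master recovers, the Backup releases the IP resources and services it took over during the failure and returns to its standby role.

So what is VRRP?

VRRP stands for Virtual Router Redundancy Protocol. It was created to solve the single point of failure of static routing, and it uses an election mechanism to hand the routing task to one of the VRRP routers.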

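1.4 How keepalived works

1.4.1 keepalived high-availability architecture diagram

1.4.2 Description of how keepalived works

Keepalived high-availability pairs communicate through VRRP, so we start with VRRP:

1) VRRP stands for Virtual Router Redundancy Protocol. It was created to solve the single point of failure of static routing.

2) VRRP uses an election protocol to hand the routing task to one of the VRRP routers.

3) VRRP uses IP multicast (default multicast address 224.0.0.18) for communication between the members of a high-availability pair.

4) In operation the master sends packets and the backup receives them. When the backup no longer receives packets from the master, it starts its takeover routine and takes over the master's resources. There can be several backups, elected by priority, but in day-to-day operations a Keepalived setup is usually a single pair.

5) VRRP can authenticate its packets (Keepalived offers the PASS and AH types), but this is not encryption of the data; Keepalived's documentation recommends the plain-text PASS type when configuring the authentication type and password.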
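The priority election in points 2) and 4) can be sketched in a few lines of shell. This only illustrates the selection rule and is not how keepalived is implemented; the helper name `elect_master` and the `name priority` input format are assumptions made for this example, and tie-breaking between equal priorities is ignored.

```bash
# Illustrative only: pick the node with the highest VRRP priority.
# Input (stdin): one "name priority" pair per line (hypothetical format).
elect_master() {
    sort -k2,2nr | head -n 1 | cut -d' ' -f1
}

# The two nodes used later in this article:
printf 'node1 100\nnode2 90\n' | elect_master   # prints node1
```

If node1 disappears from the input, the same function returns node2, which is the takeover case described in point 4).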

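With VRRP covered, here is how the Keepalived service itself works:

Keepalived high-availability nodes communicate over VRRP, and VRRP decides master and backup through an election. The master has a higher priority than the backup, so in normal operation the master holds all the resources and the backup waits. When the master goes down, the backup takes over its resources and serves clients in its place.

Between Keepalived nodes, only the master keeps sending VRRP advertisement packets (by multicast) to tell the backup that it is still alive, and the backup does not preempt the master. When the master becomes unavailable, that is, when the backup no longer hears the master's advertisements, the backup starts the relevant services and takes over the resources to keep the business running. At best, takeover can take less than 1 second.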
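How quickly the backup notices a dead master follows from the advertisement interval. As a rough sketch, assuming VRRPv2 timing as defined in RFC 3768 (the backup waits three advertisement intervals plus a priority-dependent skew time), the wait can be estimated as below. The function name `master_down_interval` is made up for this example.

```bash
# Estimate in seconds: 3 * advert_int + (256 - priority) / 256
# $1 = advertisement interval in seconds, $2 = priority of the backup
master_down_interval() {
    awk -v a="$1" -v p="$2" 'BEGIN { printf "%.3f\n", 3 * a + (256 - p) / 256 }'
}

# Backup node from this article: advert_int 1, priority 90
master_down_interval 1 90   # prints 3.648
```

With an advertisement interval of 1 second the estimate is about 3.6 seconds, so a sub-second takeover would need a shorter advertisement interval than the one configured in this article.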

2. keepalived configuration file walkthrough

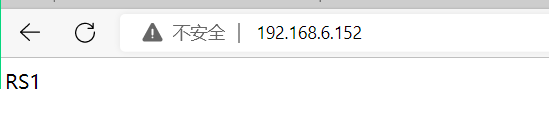

Master: 192.168.6.152, hostname: node1

Backup: 192.168.6.153, hostname: node2

    # Install nginx on the master
    [root@node1 ~]# dnf -y install nginx
    [root@node1 ~]# echo 'RS1' > /usr/share/nginx/html/index.html   # set the page content
    [root@node1 ~]# systemctl enable --now nginx                    # start now and enable at boot
    Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

    # Install nginx on the backup
    [root@node2 ~]# dnf -y install nginx
    [root@node2 ~]# echo 'RS2' > /usr/share/nginx/html/index.html
    [root@node2 ~]# systemctl enable --now nginx
    Created symlink /etc/systemd/system/multi-user.target.wants/nginx.service → /usr/lib/systemd/system/nginx.service.

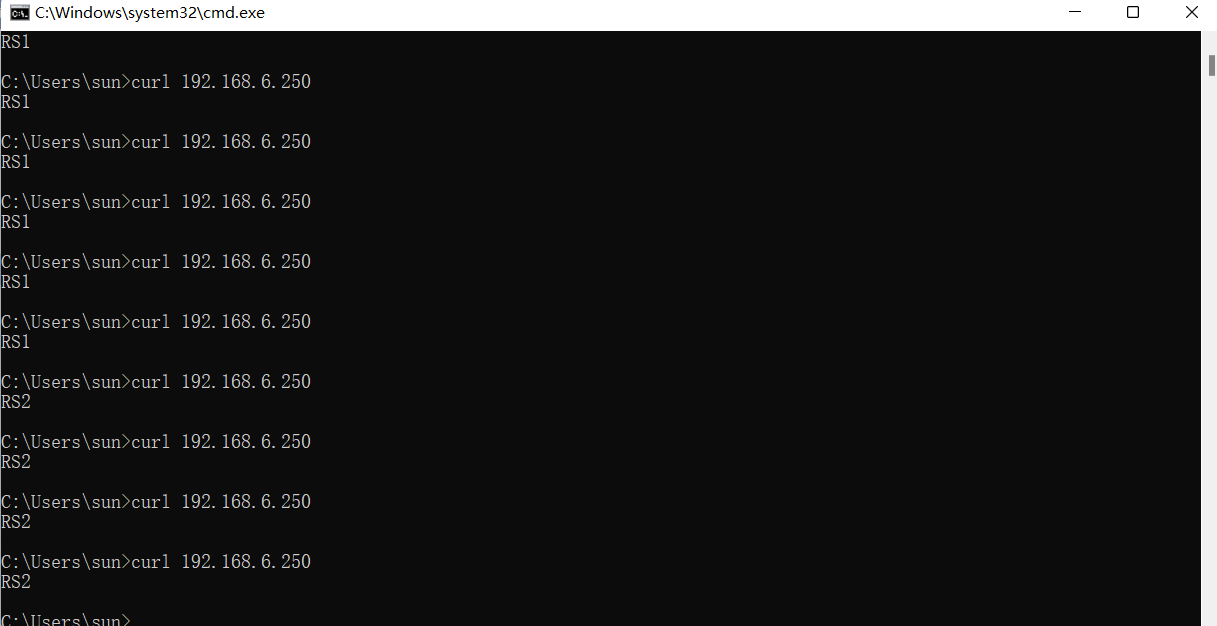

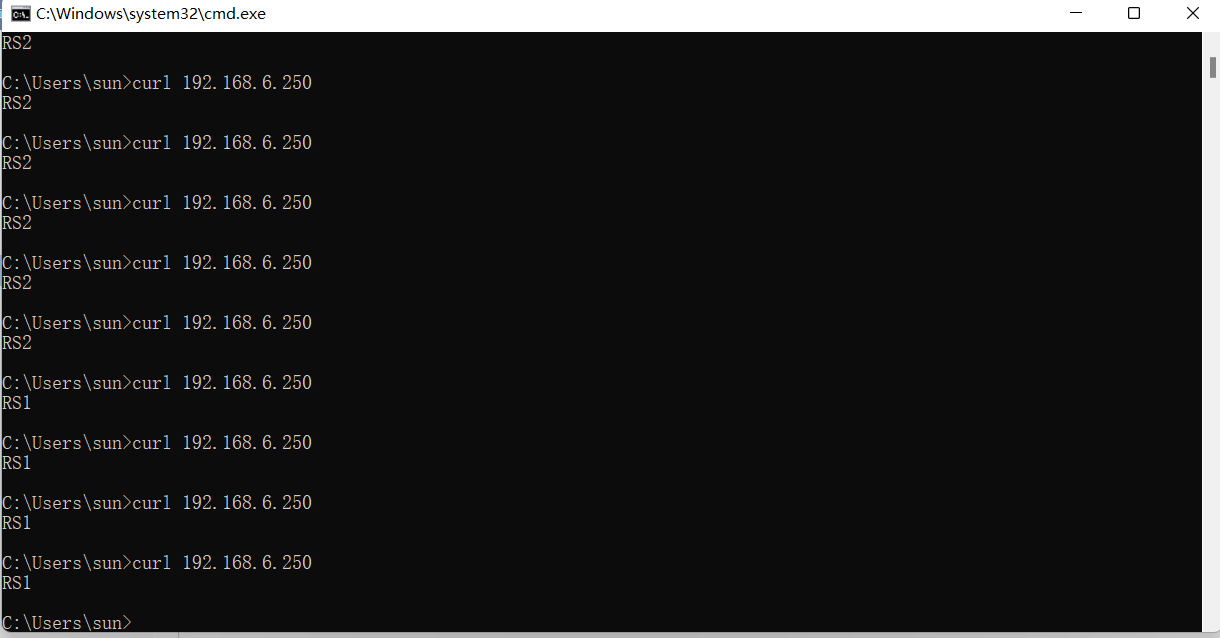

Access test

    # Install keepalived on both the master and the backup
    [root@node1 ~]# dnf -y install keepalived
    [root@node2 ~]# dnf -y install keepalived

    # List the files installed by the package
    [root@node1 ~]# rpm -ql keepalived
    /etc/keepalived
    /etc/keepalived/keepalived.conf                 # main configuration file
    /etc/sysconfig/keepalived
    /usr/bin/genhash
    /usr/lib/.build-id
    /usr/lib/.build-id/0a
    /usr/lib/.build-id/0a/410997e11c666114ca6d785e58ff0cc248744e
    /usr/lib/.build-id/6f
    /usr/lib/.build-id/6f/ba0d6bad6cb5ff7b074e703849ed93bebf4a0f
    /usr/lib/systemd/system/keepalived.service      # service unit file

4.3 keepalived configuration

4.3.1 Configuring the master keepalived

    [root@node1 keepalived]# cp keepalived.conf keepalived.conf123   # back up the original configuration file under another name
    [root@node1 keepalived]# ls
    keepalived.conf  keepalived.conf123
    [root@node1 keepalived]# > keepalived.conf      # empty the file
    [root@node1 keepalived]# vim keepalived.conf    # edit the main configuration file
    ! Configuration File for keepalived

    global_defs {                        # global settings
        router_id lb01
    }

    vrrp_instance VI_1 {                 # define a VRRP instance
        state MASTER                     # initial state of this node: MASTER or BACKUP
        interface ens160                 # network interface that VRRP binds to
        virtual_router_id 51             # virtual router ID; must be the same across the cluster
        priority 100                     # priority; decides the master/backup roles, higher wins
        advert_int 1                     # advertisement interval between master and backup, in seconds
        authentication {                 # authentication settings
            auth_type PASS               # authentication type: password
            auth_pass 023654             # password; change it
        }
        virtual_ipaddress {              # VIP address to use
            192.168.6.250                # change to your VIP
        }
    }

    virtual_server 192.168.6.250 80 {    # virtual server
        delay_loop 6                     # health-check interval, in seconds
        lb_algo rr                       # LVS scheduling algorithm
        lb_kind DR                       # LVS forwarding mode
        persistence_timeout 50           # persistence timeout, in seconds
        protocol TCP                     # layer-4 protocol

        real_server 192.168.6.152 80 {   # real server that actually handles requests
            weight 1                     # server weight; the default is 1
            TCP_CHECK {
                connect_port 80          # port to check
                connect_timeout 3        # connection timeout, in seconds
                nb_get_retry 3           # number of retries
                delay_before_retry 3     # delay before each retry, in seconds
            }
        }

        real_server 192.168.6.153 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }
    }
    [root@node1 keepalived]# systemctl enable --now keepalived
    Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.
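Because a misplaced brace is an easy mistake in a nested file like this, a quick sanity check before starting the service can help. The helper below is a hypothetical sketch (the name `braces_balanced` is not part of keepalived); it only counts `{` and `}` and does not validate any keyword or value.

```bash
# Return 0 if the file has as many "{" as "}", non-zero otherwise.
# $1 = path to the configuration file
braces_balanced() {
    open=$(tr -cd '{' < "$1" | wc -c)
    close=$(tr -cd '}' < "$1" | wc -c)
    [ $((open)) -eq $((close)) ]
}

# Typical use:
#   braces_balanced /etc/keepalived/keepalived.conf && echo "braces look balanced"
```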

Configuring the backup keepalived

    [root@node2 ~]# cd /etc/keepalived/
    [root@node2 keepalived]# ls
    keepalived.conf
    [root@node2 keepalived]# cp keepalived.conf keepalived.conf123
    [root@node2 keepalived]# > keepalived.conf
    [root@node2 keepalived]# vim /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived

    global_defs {
        router_id lb02
    }

    vrrp_instance VI_1 {
        state BACKUP
        interface ens160
        virtual_router_id 51
        priority 90
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 023654
        }
        virtual_ipaddress {
            192.168.6.250
        }
    }

    virtual_server 192.168.6.250 80 {
        delay_loop 6
        lb_algo rr
        lb_kind DR
        persistence_timeout 50
        protocol TCP

        real_server 192.168.6.152 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }

        real_server 192.168.6.153 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }
    }
    [root@node2 keepalived]# systemctl enable --now keepalived
    Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.

Checking where the VIP is

    # On the MASTER
    [root@node1 keepalived]# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:d7:b0:2d brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.152/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 1765sec preferred_lft 1765sec
        inet 192.168.6.250/32 scope global ens160      # the master holds the VIP
           valid_lft forever preferred_lft forever
        inet6 fe80::6e9:511e:b6a8:693c/64 scope link noprefixroute
           valid_lft forever preferred_lft forever

    # On the BACKUP
    [root@node2 keepalived]# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:42:d0:bc brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.153/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 1789sec preferred_lft 1789sec
        inet6 fe80::da77:9e16:6359:1029/64 scope link noprefixroute
           valid_lft forever preferred_lft forever
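To check for the VIP without reading the whole `ip a` listing, the output can be filtered. A minimal sketch, assuming the interface name and VIP used in this article; `has_vip` is a hypothetical helper that reads the address list on stdin, so the matching logic stays separate from the command that produces it.

```bash
# Succeeds if the address list on stdin contains the given IPv4 address.
# $1 = IPv4 address to look for, without the prefix length
has_vip() {
    grep -qF "inet $1/"
}

# Typical use on either node (requires iproute2):
#   ip -4 addr show dev ens160 | has_vip 192.168.6.250 && echo "VIP is here"
```

The fixed-string match on `inet <address>/` keeps an address such as 192.168.6.25 from matching 192.168.6.250.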

Having keepalived monitor the nginx load balancer

keepalived monitors the state of the nginx load balancer through scripts.

Writing the scripts on the master

    [root@node1 ~]# mkdir /scripts              # create a dedicated scripts directory, for consistency
    [root@node1 ~]# cd /scripts/
    [root@node1 scripts]# vim check_n.sh        # write the health-check script
    #!/bin/bash
    # if nginx has stopped, stop keepalived as well
    nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)
    if [ "$nginx_status" -lt 1 ];then
        systemctl stop keepalived
    fi
    [root@node1 scripts]# chmod +x check_n.sh   # make it executable
    [root@node1 scripts]# ll
    total 4
    -rwxr-xr-x 1 root root 142 Aug 30 23:28 check_n.sh
    [root@node1 scripts]# vim notify.sh
    #!/bin/bash
    case "$1" in
      master)
            nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)
            if [ "$nginx_status" -lt 1 ];then
                systemctl start nginx
            fi
            sendmail
      ;;
      backup)
            nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)
            if [ "$nginx_status" -gt 0 ];then
                systemctl stop nginx
            fi
      ;;
      *)
            echo "Usage:$0 master|backup VIP"
      ;;
    esac
    [root@node1 scripts]# chmod +x notify.sh
    [root@node1 scripts]# ll
    total 8
    -rwxr-xr-x 1 root root 142 Aug 30 23:28 check_n.sh
    -rwxr-xr-x 1 root root 444 Aug 30 23:32 notify.sh
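The check script above stops keepalived outright when nginx is gone. A common alternative, shown here only as a sketch and not as the method used in this article, is to let the script report health through its exit status and leave the decision to keepalived, which treats a non-zero exit status of a tracked script as a failure. `pgrep -x` matches the process name exactly, which avoids the `grep` filtering; the `PGREP` variable is a hypothetical hook added so the function can be tried on a machine without nginx.

```bash
# Exit status 0 if an nginx process exists, non-zero otherwise.
# PGREP is an illustrative override for testing, not a keepalived setting.
check_nginx() {
    "${PGREP:-pgrep}" -x nginx > /dev/null 2>&1
}

# In a real check script the last line would simply be:
#   check_nginx
```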

Writing the script on the backup

    [root@node2 ~]# mkdir /scripts              # create a dedicated scripts directory, for consistency
    [root@node2 ~]# cd /scripts/
    [root@node2 scripts]# vim notify.sh
    #!/bin/bash
    case "$1" in
      master)
            # when this node becomes master, start nginx
            nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)
            if [ "$nginx_status" -lt 1 ];then
                systemctl start nginx
            fi
            sendmail
      ;;
      backup)
            # when this node becomes backup, stop nginx to release resources
            nginx_status=$(ps -ef|grep -Ev "grep|$0"|grep '\bnginx\b'|wc -l)
            if [ "$nginx_status" -gt 0 ];then
                systemctl stop nginx
            fi
      ;;
      *)
            echo "Usage:$0 master|backup VIP"
      ;;
    esac
    [root@node2 scripts]# chmod +x notify.sh    # make it executable
    [root@node2 scripts]# ll
    total 4
    -rwxr-xr-x 1 root root 445 Aug 30 23:39 notify.sh

Adding the monitoring scripts to the keepalived configuration

Configuring the master keepalived

    [root@node1 scripts]# vim /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived

    global_defs {
        router_id lb01
    }

    vrrp_script nginx_check {
        script "/scripts/check_n.sh"
        interval 1
        weight -20
    }

    vrrp_instance VI_1 {
        state MASTER
        interface ens160
        virtual_router_id 51
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 023654
        }
        virtual_ipaddress {
            192.168.6.250
        }
        track_script {
            nginx_check
        }
        notify_master "/scripts/notify.sh master 192.168.6.250"
    }

    virtual_server 192.168.6.250 80 {
        delay_loop 6
        lb_algo rr
        lb_kind DR
        persistence_timeout 50
        protocol TCP

        real_server 192.168.6.152 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }

        real_server 192.168.6.153 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }
    }
    [root@node1 scripts]# systemctl restart keepalived
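The `weight -20` line only takes effect when the tracked script reports failure through a non-zero exit status; keepalived then lowers the instance's priority by that amount. Since `check_n.sh` stops keepalived itself rather than returning a failure, the weight probably never comes into play in this setup. A rough sketch of the arithmetic that would apply, using the priorities from this article (`effective_priority` is a made-up helper name, and the rule shown covers only a negative weight):

```bash
# Priority after a tracked script with a negative weight has been evaluated.
# $1 = configured priority, $2 = weight (negative), $3 = script exit status
effective_priority() {
    if [ "$3" -eq 0 ]; then
        echo "$1"
    else
        echo $(( $1 + $2 ))
    fi
}

effective_priority 100 -20 1   # master with a failing check: prints 80
```

80 is below the backup's priority of 90, so in that variant the backup would win the election without keepalived being stopped on the master.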

Configuring the backup keepalived

The backup does not need to check whether nginx is healthy; it starts nginx when promoted to MASTER and stops it when demoted to BACKUP.

    [root@node2 scripts]# vim /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived

    global_defs {
        router_id lb02
    }

    vrrp_instance VI_1 {
        state BACKUP
        interface ens160
        virtual_router_id 51
        priority 90
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 023654
        }
        virtual_ipaddress {
            192.168.6.250
        }
        notify_master "/scripts/notify.sh master 192.168.6.250"
        notify_backup "/scripts/notify.sh backup 192.168.6.250"
    }

    virtual_server 192.168.6.250 80 {
        delay_loop 6
        lb_algo rr
        lb_kind DR
        persistence_timeout 50
        protocol TCP

        real_server 192.168.6.152 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }

        real_server 192.168.6.153 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
                nb_get_retry 3
                delay_before_retry 3
            }
        }
    }
    [root@node2 scripts]# systemctl restart keepalived

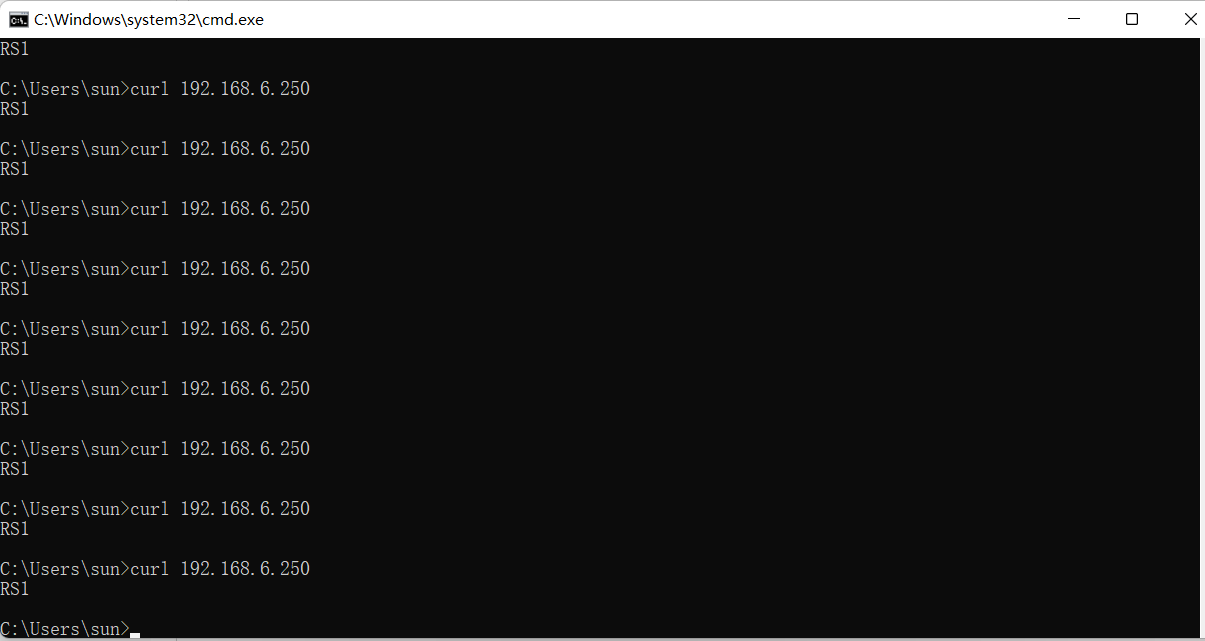

Simulating a failure on the master

    [root@node1 keepalived]# ss -antl        # port 80 is listening
    State    Recv-Q   Send-Q   Local Address:Port   Peer Address:Port   Process
    LISTEN   0        128      0.0.0.0:80           0.0.0.0:*
    LISTEN   0        128      0.0.0.0:22           0.0.0.0:*
    LISTEN   0        128      [::]:80              [::]:*
    LISTEN   0        128      [::]:22              [::]:*
    [root@node1 keepalived]# ip a            # the VIP is present
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:d7:b0:2d brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.152/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 1359sec preferred_lft 1359sec
        inet 192.168.6.250/32 scope global ens160
           valid_lft forever preferred_lft forever
        inet6 fe80::6e9:511e:b6a8:693c/64 scope link noprefixroute
           valid_lft forever preferred_lft forever
    [root@node1 keepalived]# systemctl status keepalived    # keepalived is running
    ● keepalived.service - LVS and VRRP High Availability Monitor
       Loaded: loaded (/usr/lib/systemd/system/keepalived.service; enabled; vendor preset: disa>
       Active: active (running) since Wed 2022-08-31 09:33:52 CST; 3min 16s ago
      Process: 13631 ExecStart=/usr/sbin/keepalived $KEEPALIVED_OPTIONS (code=exited, status=0/>
     Main PID: 13633 (keepalived)
        Tasks: 3 (limit: 11202)
       Memory: 6.4M
       CGroup: /system.slice/keepalived.service
               ├─13633 /usr/sbin/keepalived -D
               ├─13635 /usr/sbin/keepalived -D
               └─13636 /usr/sbin/keepalived -D

Stopping nginx on the master

    [root@node1 keepalived]# systemctl stop nginx    # simulate a master failure by stopping nginx
    [root@node1 keepalived]# ss -antl                # port 80 is gone
    State    Recv-Q   Send-Q   Local Address:Port   Peer Address:Port   Process
    LISTEN   0        128      0.0.0.0:22           0.0.0.0:*
    LISTEN   0        128      [::]:22              [::]:*
    [root@node1 keepalived]# ip a                    # the VIP is gone as well
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:d7:b0:2d brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.152/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 937sec preferred_lft 937sec
        inet6 fe80::6e9:511e:b6a8:693c/64 scope link noprefixroute
           valid_lft forever preferred_lft forever
    [root@node1 keepalived]# systemctl status keepalived    # keepalived has stopped too
    ● keepalived.service - LVS and VRRP High Availability Monitor
       Loaded: loaded (/usr/lib/systemd/system/keepalived.service; enabled; vendor preset: disa>
       Active: inactive (dead) since Wed 2022-08-31 09:40:39 CST; 31s ago
      Process: 13631 ExecStart=/usr/sbin/keepalived $KEEPALIVED_OPTIONS (code=exited, status=0/>
     Main PID: 13633 (code=exited, status=0/SUCCESS)

Checking the backup host

    [root@node2 keepalived]# ss -antl        # resources taken over immediately; port 80 is listening
    State    Recv-Q   Send-Q   Local Address:Port   Peer Address:Port   Process
    LISTEN   0        128      0.0.0.0:22           0.0.0.0:*
    LISTEN   0        128      0.0.0.0:80           0.0.0.0:*
    LISTEN   0        128      [::]:22              [::]:*
    LISTEN   0        128      [::]:80              [::]:*
    [root@node2 keepalived]# ip a            # the VIP is now on the backup
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:42:d0:bc brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.153/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 1723sec preferred_lft 1723sec
        inet 192.168.6.250/32 scope global ens160
           valid_lft forever preferred_lft forever
        inet6 fe80::da77:9e16:6359:1029/64 scope link noprefixroute
           valid_lft forever preferred_lft forever
    [root@node2 keepalived]# systemctl status keepalived    # keepalived is running
    ● keepalived.service - LVS and VRRP High Availability Monitor
       Loaded: loaded (/usr/lib/systemd/system/keepalived.service; enabled; vendor preset: disa>
       Active: active (running) since Wed 2022-08-31 09:29:30 CST; 13min ago
      Process: 16872 ExecStart=/usr/sbin/keepalived $KEEPALIVED_OPTIONS (code=exited, status=0/>
     Main PID: 16873 (keepalived)
        Tasks: 3 (limit: 11202)
       Memory: 5.2M
       CGroup: /system.slice/keepalived.service
               ├─16873 /usr/sbin/keepalived -D
               ├─16875 /usr/sbin/keepalived -D
               └─16876 /usr/sbin/keepalived -D

Requests to the VIP now reach RS2.
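To watch which node answers while the failover happens, the VIP can be polled in a loop from a client. This is a sketch that assumes `curl` is installed on the client; `probe_vip` and the `CURL` and `PROBE_INTERVAL` variables are hypothetical names introduced for this example.

```bash
# Fetch a URL a fixed number of times and print each response body.
# $1 = URL, $2 = number of probes
# CURL and PROBE_INTERVAL are illustrative overrides, mainly for testing.
probe_vip() {
    url=$1
    count=$2
    i=0
    while [ "$i" -lt "$count" ]; do
        # -s hides the progress meter; --max-time bounds each request
        body=$("${CURL:-curl}" -s --max-time 1 "$url") || body="unreachable"
        echo "$body"
        i=$((i + 1))
        # pause between probes, but not after the last one
        if [ "$i" -lt "$count" ]; then
            sleep "${PROBE_INTERVAL:-1}"
        fi
    done
}

# Typical use from a client machine:
#   probe_vip http://192.168.6.250/ 10
```

With the pages set up earlier, the output would be expected to switch from RS1 to RS2 around the moment nginx is stopped on node1, possibly with a few `unreachable` lines in between.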

Simulating the master being repaired: once restarted, it immediately preempts the resources and the VIP, and the backup stops nginx

    # Master
    [root@node1 keepalived]# systemctl start nginx keepalived
    [root@node1 keepalived]# ss -antl
    State    Recv-Q   Send-Q   Local Address:Port   Peer Address:Port   Process
    LISTEN   0        128      0.0.0.0:80           0.0.0.0:*
    LISTEN   0        128      0.0.0.0:22           0.0.0.0:*
    LISTEN   0        128      [::]:80              [::]:*
    LISTEN   0        128      [::]:22              [::]:*
    [root@node1 keepalived]# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:d7:b0:2d brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.152/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 1503sec preferred_lft 1503sec
        inet 192.168.6.250/32 scope global ens160
           valid_lft forever preferred_lft forever
        inet6 fe80::6e9:511e:b6a8:693c/64 scope link noprefixroute
           valid_lft forever preferred_lft forever
    [root@node1 keepalived]# systemctl status keepalived
    ● keepalived.service - LVS and VRRP High Availability Monitor
       Loaded: loaded (/usr/lib/systemd/system/keepalived.service; enabled; vendor preset: disa>
       Active: active (running) since Wed 2022-08-31 09:46:23 CST; 36s ago
      Process: 16114 ExecStart=/usr/sbin/keepalived $KEEPALIVED_OPTIONS (code=exited, status=0/>
     Main PID: 16115 (keepalived)
        Tasks: 3 (limit: 11202)
       Memory: 6.1M
       CGroup: /system.slice/keepalived.service
               ├─16115 /usr/sbin/keepalived -D
               ├─16116 /usr/sbin/keepalived -D
               └─16117 /usr/sbin/keepalived -D

    # Backup
    [root@node2 keepalived]# ss -antl
    State    Recv-Q   Send-Q   Local Address:Port   Peer Address:Port   Process
    LISTEN   0        128      0.0.0.0:22           0.0.0.0:*
    LISTEN   0        128      [::]:22              [::]:*
    [root@node2 keepalived]# systemctl status keepalived
    ● keepalived.service - LVS and VRRP High Availability Monitor
       Loaded: loaded (/usr/lib/systemd/system/keepalived.service; enabled; vendor preset: disa>
       Active: active (running) since Wed 2022-08-31 09:54:22 CST; 8min ago
      Process: 17022 ExecStart=/usr/sbin/keepalived $KEEPALIVED_OPTIONS (code=exited, status=0/>
     Main PID: 17023 (keepalived)
        Tasks: 3 (limit: 11202)
       Memory: 5.3M
       CGroup: /system.slice/keepalived.service
               ├─17023 /usr/sbin/keepalived -D
               ├─17024 /usr/sbin/keepalived -D
               └─17025 /usr/sbin/keepalived -D
    [root@node2 keepalived]# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:42:d0:bc brd ff:ff:ff:ff:ff:ff
        inet 192.168.6.153/24 brd 192.168.6.255 scope global dynamic noprefixroute ens160
           valid_lft 1285sec preferred_lft 1285sec
        inet6 fe80::da77:9e16:6359:1029/64 scope link noprefixroute
           valid_lft forever preferred_lft forever
