Canal notes (ZooKeeper, configuration files)

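canal.instance.filter.regex:

The tables that MySQL data parsing should watch, written as Perl-style regular expressions.

Separate multiple expressions with commas (,); the escape character must be written as a double backslash (\\).

Common examples:

1. All tables: .* or .*\\..*

2. All tables in the canal schema: canal\\..*

3. Tables in the canal schema whose names start with canal: canal\\.canal.*

4. A single table in the canal schema: canal\\.test1

5. Several rules combined: canal\\..*,mysql.test1,mysql.test2 (comma-separated)

canal.instance.filter.black.regex: the blacklist of tables for MySQL data parsing; its expressions follow the same rules as the whitelist.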
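To make the matching rules above concrete, here is a minimal sketch that splits a comma-separated rule string and tests `schema.table` names against it. `TableFilterSketch` is a hypothetical helper written for these notes, not canal's own filter class, and canal's real matching may differ in details. The rule string is shown as it looks after the properties file has been unescaped, so `canal\\..*` in the file corresponds to the regex `canal\..*`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Hypothetical helper for illustration; not part of canal. */
class TableFilterSketch {

    private final List<Pattern> patterns = new ArrayList<>();

    /** rules: comma-separated regexes, already unescaped (one backslash per escape). */
    TableFilterSketch(String rules) {
        // Assumption: a plain comma split, so a regex that itself contains a comma is not supported.
        for (String rule : rules.split(",")) {
            String trimmed = rule.trim();
            if (!trimmed.isEmpty()) {
                patterns.add(Pattern.compile(trimmed));
            }
        }
    }

    /** Returns true if "schema.table" fully matches at least one rule. */
    boolean accepts(String schema, String table) {
        String name = schema + "." + table;
        for (Pattern pattern : patterns) {
            if (pattern.matcher(name).matches()) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        // In Java source the backslash is doubled again: "canal\\..*" is the regex canal\..*
        TableFilterSketch filter = new TableFilterSketch("canal\\..*,mysql.test1,mysql.test2");
        System.out.println(filter.accepts("canal", "orders"));
        System.out.println(filter.accepts("mysql", "user"));
    }
}
```

Note that the unescaped dot in `mysql.test1` matches any character, so it would also accept a name such as `mysqlXtest1`; escaping it as `mysql\\.test1` in the properties file is stricter.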

https://github.com/alibaba/canal/wiki/AdminGuide

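Notes:

1. Start position when connecting to MySQL

canal.instance.master.journal.name + canal.instance.master.position: start from an exactly specified binlog position.

canal.instance.master.timestamp: specify a timestamp; canal scans the MySQL binlog automatically, finds the binlog position that corresponds to the timestamp, and starts from there.

Nothing specified: by default, start from the database's current position (show master status).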
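The three options can be read as a simple decision, sketched below. `StartPositionSketch` and its `Mode` enum are hypothetical names used only for illustration, and the precedence (explicit position first, then timestamp) is an assumption of this sketch rather than something the notes confirm about canal's internals.

```java
/** Hypothetical illustration of how a start mode could be chosen from the three settings. */
class StartPositionSketch {

    enum Mode { EXPLICIT_POSITION, TIMESTAMP, CURRENT_MASTER_STATUS }

    /**
     * journalName / position mirror canal.instance.master.journal.name and
     * canal.instance.master.position; timestamp mirrors canal.instance.master.timestamp.
     * A null argument means "not configured".
     */
    static Mode choose(String journalName, Long position, Long timestamp) {
        if (journalName != null && !journalName.isEmpty() && position != null) {
            return Mode.EXPLICIT_POSITION;       // exact binlog file + offset
        }
        if (timestamp != null && timestamp > 0) {
            return Mode.TIMESTAMP;               // scan binlogs for this timestamp
        }
        return Mode.CURRENT_MASTER_STATUS;       // fall back to "show master status"
    }

    public static void main(String[] args) {
        System.out.println(choose("mysql-bin.000001", 4L, null));
        System.out.println(choose(null, null, 1585193491000L));
        System.out.println(choose(null, null, null));
    }
}
```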

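2. Definition of the tables MySQL parsing watches

Standard Perl regular expressions; note that escaping requires a double backslash: \\

3. Encoding of the MySQL connection

The current canal version only supports one encoding per database. If a database contains multiple encodings, use filter.regex to split it into several canal instances and specify a different encoding for each instance.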

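Before introducing the instance configuration, first look at how canal maintains the relationship information for incremental subscription & consumption:

Parse position (the parse module records how far the binlog was last parsed; the corresponding component is CanalLogPositionManager)

Consume position (after the canal server receives a client's ack, it records the last position the client committed; the corresponding component is CanalMetaManager)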
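The distinction between the two positions can be pictured with a small in-memory model. This is only a sketch: `PositionTrackerSketch` and its methods are hypothetical names, and the real CanalLogPositionManager and CanalMetaManager have different interfaces and persistent back ends.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical in-memory model of "parse position" versus "consume position". */
class PositionTrackerSketch {

    /** A binlog position: file name plus byte offset. */
    static final class Position {
        final String journalName;
        final long offset;

        Position(String journalName, long offset) {
            this.journalName = journalName;
            this.offset = offset;
        }
    }

    // How far the parser has read (the role the notes assign to CanalLogPositionManager).
    private Position parsePosition;
    // Last acked position per client id (the role the notes assign to CanalMetaManager).
    private final Map<Short, Position> cursors = new HashMap<>();

    void onParsed(Position position) {
        parsePosition = position;
    }

    void onAck(short clientId, Position position) {
        cursors.put(clientId, position);
    }

    Position parsePosition() {
        return parsePosition;
    }

    /** Returns null if the client has never acked. */
    Position cursor(short clientId) {
        return cursors.get(clientId);
    }

    public static void main(String[] args) {
        PositionTrackerSketch tracker = new PositionTrackerSketch();
        tracker.onParsed(new Position("mysql-bin.000001", 500L));
        tracker.onAck((short) 1001, new Position("mysql-bin.000001", 200L));
        // The parser is usually ahead of what clients have acknowledged.
        System.out.println(tracker.parsePosition().offset + " vs " + tracker.cursor((short) 1001).offset);
    }
}
```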

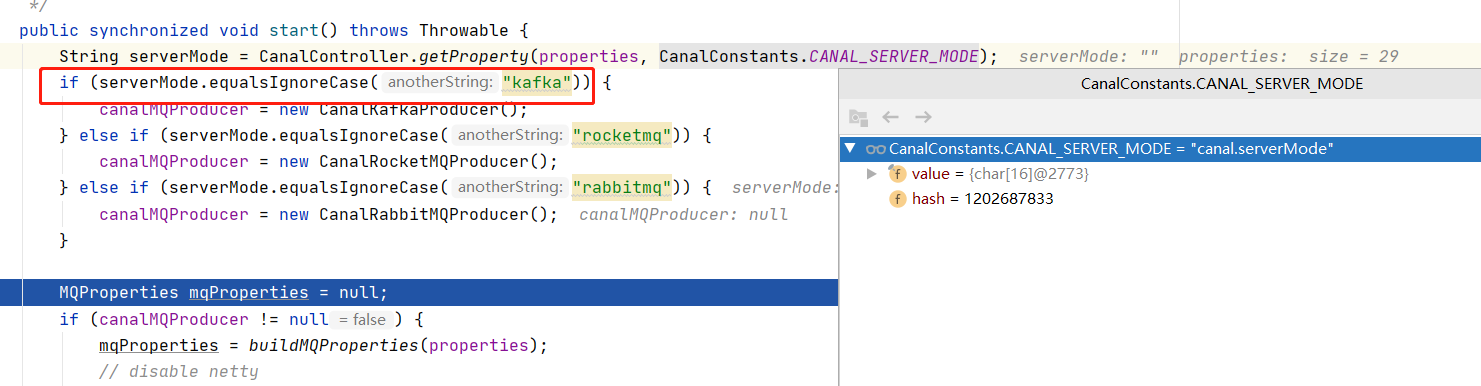

In canal-admin mode, canal.serverMode must be configured; otherwise an NPE (NullPointerException) is thrown.

2020-06-23 00:00:14.984 [destination = omssaps , address = mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 , EventParser] WARN c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - ---> begin to find start position, it will be long time for reset or first position
2020-06-23 00:00:15.024 [destination = omssaps , address = mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 , EventParser] WARN c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - prepare to find start position just last position
 {"identity":{"slaveId":-1,"sourceAddress":{"address":"mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local","port":3306}},"postion":{"gtid":"","included":false,"journalName":"mysql-uat-new-mysqlha-0-bin.001768","position":345523555,"serverId":100,"timestamp":1585193491000}}
2020-06-23 00:00:15.042 [destination = omssaps , address = mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 , EventParser] WARN c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - ---> find start position successfully, EntryPosition[included=false,journalName=mysql-uat-new-mysqlha-0-bin.001768,position=345523555,serverId=100,gtid=,timestamp=1585193491000] cost : 58ms , the next step is binlog dump
2020-06-23 00:00:15.056 [destination = omssaps , address = mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 , EventParser] ERROR c.a.o.canal.parse.inbound.mysql.dbsync.DirectLogFetcher - I/O error while reading from client socket
java.io.IOException: Received error packet: errno = 1236, sqlstate = HY000 errmsg = Could not find first log file name in binary log index file
    at com.alibaba.otter.canal.parse.inbound.mysql.dbsync.DirectLogFetcher.fetch(DirectLogFetcher.java:102) ~[canal.parse-1.1.3.jar:na]
    at com.alibaba.otter.canal.parse.inbound.mysql.MysqlConnection.dump(MysqlConnection.java:235) [canal.parse-1.1.3.jar:na]
    at com.alibaba.otter.canal.parse.inbound.AbstractEventParser$3.run(AbstractEventParser.java:257) [canal.parse-1.1.3.jar:na]
    at java.lang.Thread.run(Thread.java:748) [na:1.8.0_181]
2020-06-23 00:00:15.056 [destination = omssaps , address = mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 , EventParser] ERROR c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - dump address mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 has an error, retrying. caused by
java.io.IOException: Received error packet: errno = 1236, sqlstate = HY000 errmsg = Could not find first log file name in binary log index file
    at com.alibaba.otter.canal.parse.inbound.mysql.dbsync.DirectLogFetcher.fetch(DirectLogFetcher.java:102) ~[canal.parse-1.1.3.jar:na]
    at com.alibaba.otter.canal.parse.inbound.mysql.MysqlConnection.dump(MysqlConnection.java:235) ~[canal.parse-1.1.3.jar:na]
    at com.alibaba.otter.canal.parse.inbound.AbstractEventParser$3.run(AbstractEventParser.java:257) ~[canal.parse-1.1.3.jar:na]
    at java.lang.Thread.run(Thread.java:748) [na:1.8.0_181]
2020-06-23 00:00:15.057 [destination = omssaps , address = mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local/192.168.228.211:3306 , EventParser] ERROR com.alibaba.otter.canal.common.alarm.LogAlarmHandler - destination:omssaps[java.io.IOException: Received error packet: errno = 1236, sqlstate = HY000 errmsg = Could not find first log file name in binary log index file
    at com.alibaba.otter.canal.parse.inbound.mysql.dbsync.DirectLogFetcher.fetch(DirectLogFetcher.java:102)
    at com.alibaba.otter.canal.parse.inbound.mysql.MysqlConnection.dump(MysqlConnection.java:235)
    at com.alibaba.otter.canal.parse.inbound.AbstractEventParser$3.run(AbstractEventParser.java:257)
    at java.lang.Thread.run(Thread.java:748)
]

http://whitesock.iteye.com/blog/1329616

http://www.iteye.com/topic/1129002 Qifeng (七锋) has already fixed this in canal; the fix still needs to be merged into dbsync.

http://www.bitscn.com/pdb/mysql/201404/228411.html

http://mechanics.flite.com/blog/2014/04/29/disabling-binlog-checksum-for-mysql-5-dot-5-slash-5-dot-6-master-master-replication/

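According to the material found, errno 1236 is also reported in a checksum-related case when the MySQL version is 5.6:

That error typically appears when the master runs 5.6 and the slave runs an older version, because 5.6 uses CRC32 as the binlog checksum.

When an event is written to the binary log, its checksum is written along with it; after the event has been transferred over the network to the slave, the slave verifies it and writes it to its relay log.

Because the event and its checksum are recorded at every step, the source of a problem can be located quickly.

Note that the errmsg in the log above, "Could not find first log file name in binary log index file", usually means that the requested binlog file (mysql-uat-new-mysqlha-0-bin.001768 here) is not listed in the binlog index of the server canal connected to, which is a different cause from the checksum case.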
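As an illustration of the "write the checksum with the event, verify it on the other side" idea, the following sketch computes and checks a CRC32 with the JDK's `java.util.zip.CRC32`. `EventChecksumSketch` is a hypothetical name, and the sketch does not reproduce MySQL's actual binlog event layout; it only shows the verification principle on an arbitrary byte payload.

```java
import java.nio.charset.StandardCharsets;
import java.util.zip.CRC32;

/** Hypothetical illustration of checksum-then-verify; not MySQL's binlog event format. */
class EventChecksumSketch {

    /** Computes the CRC32 of a payload, as the writer side would before storing it. */
    static long crc32(byte[] payload) {
        CRC32 crc = new CRC32();
        crc.update(payload, 0, payload.length);
        return crc.getValue();
    }

    /** Recomputes the CRC32 on the receiving side and compares it with the stored value. */
    static boolean verify(byte[] payload, long storedChecksum) {
        return crc32(payload) == storedChecksum;
    }

    public static void main(String[] args) {
        byte[] event = "UPDATE t SET c = 1".getBytes(StandardCharsets.UTF_8);
        long stored = crc32(event);

        // An intact copy verifies; a copy with one flipped byte does not.
        byte[] corrupted = event.clone();
        corrupted[0] ^= 0x01;
        System.out.println(verify(event, stored));
        System.out.println(verify(corrupted, stored));
    }
}
```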

Set binlog_checksum = NONE on master1:

mysql> show variables like "%sum%";
+---------------------------+--------+
| Variable_name             | Value  |
+---------------------------+--------+
| binlog_checksum           | CRC32  |
| innodb_checksum_algorithm | innodb |
| innodb_checksums          | ON     |
| master_verify_checksum    | OFF    |
| slave_sql_verify_checksum | ON     |
+---------------------------+--------+
5 rows in set (0.00 sec)

mysql> set global binlog_checksum='NONE';
Query OK, 0 rows affected (0.09 sec)

mysql> show variables like "%sum%";
+---------------------------+--------+
| Variable_name             | Value  |
+---------------------------+--------+
| binlog_checksum           | NONE   |
| innodb_checksum_algorithm | innodb |
| innodb_checksums          | ON     |
| master_verify_checksum    | OFF    |
| slave_sql_verify_checksum | ON     |
+---------------------------+--------+

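----------------

Copyright notice: the excerpt above is an original article by CSDN blogger "arkblue", licensed under CC 4.0 BY-SA; when reproducing it, include the original source link and this notice.

Original link: https://blog.csdn.net/arkblue/article/details/44280647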

2020-06-23 20:36:04.987 [destination = omssaps , address = mysql.zkh360.com/120.27.222.64:13306 , EventParser] ERROR com.alibaba.otter.canal.common.alarm.LogAlarmHandler - destination:omssaps[com.google.common.collect.ComputationException: com.alibaba.fastjson.JSONException: deserialize inet adress error
    at com.google.common.collect.MapMaker$ComputingMapAdapter.get(MapMaker.java:889)
    at com.alibaba.otter.canal.meta.MemoryMetaManager.getCursor(MemoryMetaManager.java:91)
    at com.alibaba.otter.canal.meta.PeriodMixedMetaManager.getCursor(PeriodMixedMetaManager.java:150)
    at com.alibaba.otter.canal.parse.index.MetaLogPositionManager.getLatestIndexBy(MetaLogPositionManager.java:57)
    at com.alibaba.otter.canal.parse.index.FailbackLogPositionManager.getLatestIndexBy(FailbackLogPositionManager.java:68)
    at com.alibaba.otter.canal.parse.inbound.mysql.MysqlEventParser.findStartPositionInternal(MysqlEventParser.java:424)
    at com.alibaba.otter.canal.parse.inbound.mysql.MysqlEventParser.findStartPosition(MysqlEventParser.java:366)
    at com.alibaba.otter.canal.parse.inbound.AbstractEventParser$3.run(AbstractEventParser.java:186)
    at java.lang.Thread.run(Thread.java:748)
Caused by: com.alibaba.fastjson.JSONException: deserialize inet adress error
    at com.alibaba.fastjson.serializer.MiscCodec.deserialze(MiscCodec.java:316)
    at com.alibaba.fastjson.parser.DefaultJSONParser.parseObject(DefaultJSONParser.java:642)
    at com.alibaba.fastjson.parser.DefaultJSONParser.parseObject(DefaultJSONParser.java:610)
    at com.alibaba.fastjson.serializer.MiscCodec.deserialze(MiscCodec.java:189)
    at com.alibaba.fastjson.parser.deserializer.FastjsonASMDeserializer_3_LogIdentity.deserialze(Unknown Source)
    at com.alibaba.fastjson.parser.deserializer.JavaBeanDeserializer.deserialze(JavaBeanDeserializer.java:184)
    at com.alibaba.fastjson.parser.deserializer.FastjsonASMDeserializer_2_LogPosition.deserialze(Unknown Source)
    at com.alibaba.fastjson.parser.deserializer.JavaBeanDeserializer.deserialze(JavaBeanDeserializer.java:184)
    at com.alibaba.fastjson.parser.deserializer.JavaBeanDeserializer.deserialze(JavaBeanDeserializer.java:557)
    at com.alibaba.fastjson.parser.deserializer.JavaBeanDeserializer.deserialze(JavaBeanDeserializer.java:188)
    at com.alibaba.fastjson.parser.deserializer.JavaBeanDeserializer.deserialze(JavaBeanDeserializer.java:184)
    at com.alibaba.fastjson.parser.DefaultJSONParser.parseObject(DefaultJSONParser.java:642)
    at com.alibaba.fastjson.JSON.parseObject(JSON.java:350)
    at com.alibaba.fastjson.JSON.parseObject(JSON.java:318)
    at com.alibaba.fastjson.JSON.parseObject(JSON.java:281)
    at com.alibaba.fastjson.JSON.parseObject(JSON.java:381)
    at com.alibaba.fastjson.JSON.parseObject(JSON.java:361)
    at com.alibaba.otter.canal.common.utils.JsonUtils.unmarshalFromByte(JsonUtils.java:36)
    at com.alibaba.otter.canal.meta.ZooKeeperMetaManager.getCursor(ZooKeeperMetaManager.java:153)
    at com.alibaba.otter.canal.meta.PeriodMixedMetaManager$3.apply(PeriodMixedMetaManager.java:65)
    at com.alibaba.otter.canal.meta.PeriodMixedMetaManager$3.apply(PeriodMixedMetaManager.java:62)
    at com.google.common.collect.ComputingConcurrentHashMap$ComputingValueReference.compute(ComputingConcurrentHashMap.java:356)
    at com.google.common.collect.ComputingConcurrentHashMap$ComputingSegment.compute(ComputingConcurrentHashMap.java:182)
    at com.google.common.collect.ComputingConcurrentHashMap$ComputingSegment.getOrCompute(ComputingConcurrentHashMap.java:151)
    at com.google.common.collect.ComputingConcurrentHashMap.getOrCompute(ComputingConcurrentHashMap.java:67)
    at com.google.common.collect.MapMaker$ComputingMapAdapter.get(MapMaker.java:885)
    ... 8 more
Caused by: java.net.UnknownHostException: mysql-uat-new-mysqlha-readonly.zkh-uat.svc.cluster.local: Name or service not known
    at java.net.Inet4AddressImpl.lookupAllHostAddr(Native Method)
    at java.net.InetAddress$2.lookupAllHostAddr(InetAddress.java:928)
    at java.net.InetAddress.getAddressesFromNameService(InetAddress.java:1323)
    at java.net.InetAddress.getAllByName0(InetAddress.java:1276)
    at java.net.InetAddress.getAllByName(InetAddress.java:1192)
    at java.net.InetAddress.getAllByName(InetAddress.java:1126)
    at java.net.InetAddress.getByName(InetAddress.java:1076)
    at com.alibaba.fastjson.serializer.MiscCodec.deserialze(MiscCodec.java:314)
    ... 33 more
]

canal and otter share one ZooKeeper, so the nodes under /otter/canal/destinations/ are shared and reused by both.

How otter marks canal's nodes in ZooKeeper:

com.alibaba.otter.shared.arbitrate.impl.ArbitrateViewServiceImpl

/**
 * Queries views of the current arbitrator's running state
 *
 * @author jianghang 2011-9-27 05:27:38 PM
 * @version 4.0.0
 */
public class ArbitrateViewServiceImpl implements ArbitrateViewService {

    private static final String CANAL_PATH        = "/otter/canal/destinations/%s";
    private static final String CANAL_DATA_PATH   = CANAL_PATH + "/%s";
    private static final String CANAL_CURSOR_PATH = CANAL_PATH + "/%s/cursor";
    private ZkClientx           zookeeper         = ZooKeeperClient.getInstance();

    public MainStemEventData mainstemData(Long channelId, Long pipelineId) {
        String path = ManagePathUtils.getMainStem(channelId, pipelineId);
        try {
            byte[] bytes = zookeeper.readData(path);
            return JsonUtils.unmarshalFromByte(bytes, MainStemEventData.class);
        } catch (ZkException e) {
            return null;
        }
    }

    public Long getNextProcessId(Long channelId, Long pipelineId) {
        String processRoot = ManagePathUtils.getProcessRoot(channelId, pipelineId);
        IZkConnection connection = zookeeper.getConnection();
        // zkclient separates fetching stat info from normal operations, so use the native zk client as an optimization
        ZooKeeper orginZk = ((ZooKeeperx) connection).getZookeeper();

        Stat processParentStat = new Stat();
        // fetch the list of all processes
        try {
            orginZk.getChildren(processRoot, false, processParentStat);
            return (Long) ((processParentStat.getCversion() + processParentStat.getNumChildren()) / 2L);
        } catch (Exception e) {
            return -1L;
        }
    }

    public List<ProcessStat> listProcesses(Long channelId, Long pipelineId) {
        List<ProcessStat> processStats = new ArrayList<ProcessStat>();
        String processRoot = ManagePathUtils.getProcessRoot(channelId, pipelineId);
        IZkConnection connection = zookeeper.getConnection();
        // zkclient separates fetching stat info from normal operations, so use the native zk client as an optimization
        ZooKeeper orginZk = ((ZooKeeperx) connection).getZookeeper();

        // fetch the list of all processes
        List<String> processNodes = zookeeper.getChildren(processRoot);
        List<Long> processIds = new ArrayList<Long>();
        for (String processNode : processNodes) {
            processIds.add(ManagePathUtils.getProcessId(processNode));
        }

        Collections.sort(processIds);

        for (int i = 0; i < processIds.size(); i++) {
            Long processId = processIds.get(i);
            // the current process may change
            ProcessStat processStat = new ProcessStat();
            processStat.setPipelineId(pipelineId);
            processStat.setProcessId(processId);

            List<StageStat> stageStats = new ArrayList<StageStat>();
            processStat.setStageStats(stageStats);
            try {
                String processPath = ManagePathUtils.getProcess(channelId, pipelineId, processId);
                Stat zkProcessStat = new Stat();
                List<String> stages = orginZk.getChildren(processPath, false, zkProcessStat);
                Collections.sort(stages, new StageComparator());

                StageStat prev = null;
                for (String stage : stages) {// loop over every stage under the process
                    String stagePath = processPath + "/" + stage;
                    Stat zkStat = new Stat();

                    StageStat stageStat = new StageStat();
                    stageStat.setPipelineId(pipelineId);
                    stageStat.setProcessId(processId);

                    byte[] bytes = orginZk.getData(stagePath, false, zkStat);
                    if (bytes != null && bytes.length > 0) {
                        // Special handling of the data stored in zookeeper: the manager has no object for the
                        // node's PipeKey, so deserialization would fail; strip the '@' character to work around it
                        String json = StringUtils.remove(new String(bytes, "UTF-8"), '@');
                        EtlEventData data = JsonUtils.unmarshalFromString(json, EtlEventData.class);
                        stageStat.setNumber(data.getNumber());
                        stageStat.setSize(data.getSize());

                        Map exts = new HashMap();
                        if (!CollectionUtils.isEmpty(data.getExts())) {
                            exts.putAll(data.getExts());
                        }
                        exts.put("currNid", data.getCurrNid());
                        exts.put("nextNid", data.getNextNid());
                        exts.put("desc", data.getDesc());
                        stageStat.setExts(exts);
                    }
                    if (prev != null) {// the start time is the end time of the previous node
                        stageStat.setStartTime(prev.getEndTime());
                    } else {
                        stageStat.setStartTime(zkProcessStat.getMtime()); // last-modified time of the process; the USED
                                                                          // flag is set after select await succeeds
                    }
                    stageStat.setEndTime(zkStat.getMtime());
                    if (ArbitrateConstants.NODE_SELECTED.equals(stage)) {
                        stageStat.setStage(StageType.SELECT);
                    } else if (ArbitrateConstants.NODE_EXTRACTED.equals(stage)) {
                        stageStat.setStage(StageType.EXTRACT);
                    } else if (ArbitrateConstants.NODE_TRANSFORMED.equals(stage)) {
                        stageStat.setStage(StageType.TRANSFORM);
                        // } else if
                        // (ArbitrateConstants.NODE_LOADED.equals(stage)) {
                        // stageStat.setStage(StageType.LOAD);
                    }

                    prev = stageStat;
                    stageStats.add(stageStat);
                }

                // add the stage that is currently being processed
                StageStat currentStageStat = new StageStat();
                currentStageStat.setPipelineId(pipelineId);
                currentStageStat.setProcessId(processId);
                if (prev == null) {
                    byte[] bytes = orginZk.getData(processPath, false, zkProcessStat);
                    if (bytes == null || bytes.length == 0) {
                        continue; // treat it as unused and ignore it
                    }

                    ProcessNodeEventData nodeData = JsonUtils.unmarshalFromByte(bytes, ProcessNodeEventData.class);
                    if (nodeData.getStatus().isUnUsed()) {// the process is unused, ignore it
                        continue; // skip this process
                    } else {
                        currentStageStat.setStage(StageType.SELECT);// select operation
                        currentStageStat.setStartTime(zkProcessStat.getMtime());
                    }
                } else {
                    // look at the previous node to determine the current stage
                    StageType stage = prev.getStage();
                    if (stage.isSelect()) {
                        currentStageStat.setStage(StageType.EXTRACT);
                    } else if (stage.isExtract()) {
                        currentStageStat.setStage(StageType.TRANSFORM);
                    } else if (stage.isTransform()) {
                        currentStageStat.setStage(StageType.LOAD);
                    } else if (stage.isLoad()) {// already the last node
                        continue;
                    }

                    currentStageStat.setStartTime(prev.getEndTime());// start time is the end time of the previous node
                }

                if (currentStageStat.getStage().isLoad()) {// load must be the first process node
                    if (i == 0) {
                        stageStats.add(currentStageStat);
                    }
                } else {
                    stageStats.add(currentStageStat);// add it in all other cases
                }

            } catch (NoNodeException e) {
                // ignore
            } catch (KeeperException e) {
                throw new ArbitrateException(e);
            } catch (InterruptedException e) {
                // ignore
            } catch (UnsupportedEncodingException e) {
                // ignore
            }

            processStats.add(processStat);
        }

        return processStats;
    }

    public PositionEventData getCanalCursor(String destination, short clientId) {
        String path = String.format(CANAL_CURSOR_PATH, destination, String.valueOf(clientId));
        try {
            IZkConnection connection = zookeeper.getConnection();
            // zkclient separates fetching stat info from normal operations, so use the native zk client as an optimization
            ZooKeeper orginZk = ((ZooKeeperx) connection).getZookeeper();
            Stat stat = new Stat();
            byte[] bytes = orginZk.getData(path, false, stat);
            PositionEventData eventData = new PositionEventData();
            eventData.setCreateTime(new Date(stat.getCtime()));
            eventData.setModifiedTime(new Date(stat.getMtime()));
            eventData.setPosition(new String(bytes, "UTF-8"));
            return eventData;
        } catch (Exception e) {
            return null;
        }
    }

    public void removeCanalCursor(String destination, short clientId) {
        String path = String.format(CANAL_CURSOR_PATH, destination, String.valueOf(clientId));
        zookeeper.delete(path);
    }

    @Override
    public void removeCanal(String destination, short clientId) {
        String path = String.format(CANAL_DATA_PATH, destination, String.valueOf(clientId));
        zookeeper.deleteRecursive(path);
    }

    public void removeCanal(String destination) {
        String path = String.format(CANAL_PATH, destination);
        zookeeper.deleteRecursive(path);
    }
}

canal's own identifiers in ZooKeeper:

/**
 * Storage layout:
 *
 * <pre>
 * /otter
 *    canal
 *      cluster
 *      destinations
 *        dest1
 *          running (EPHEMERAL)
 *          cluster
 *          client1
 *            running (EPHEMERAL)
 *            cluster
 *            filter
 *            cursor
 *            mark
 *              1
 *              2
 *              3
 * </pre>
 *
 * @author zebin.xuzb @ 2012-6-21
 * @version 1.0.0
 */
public class ZookeeperPathUtils {

    public static final String ZOOKEEPER_SEPARATOR = "/";

    public static final String OTTER_ROOT_NODE = ZOOKEEPER_SEPARATOR + "otter";

    public static final String CANAL_ROOT_NODE = OTTER_ROOT_NODE + ZOOKEEPER_SEPARATOR + "canal";

    public static final String DESTINATION_ROOT_NODE = CANAL_ROOT_NODE + ZOOKEEPER_SEPARATOR + "destinations";

    public static final String FILTER_NODE = "filter";

    public static final String BATCH_MARK_NODE = "mark";

    public static final String PARSE_NODE = "parse";

    public static final String CURSOR_NODE = "cursor";

    public static final String RUNNING_NODE = "running";

    public static final String CLUSTER_NODE = "cluster";

    public static final String DESTINATION_NODE = DESTINATION_ROOT_NODE + ZOOKEEPER_SEPARATOR + "{0}";

    public static final String DESTINATION_PARSE_NODE = DESTINATION_NODE + ZOOKEEPER_SEPARATOR + PARSE_NODE;

    public static final String DESTINATION_CLIENTID_NODE = DESTINATION_NODE + ZOOKEEPER_SEPARATOR + "{1}";

    public static final String DESTINATION_CURSOR_NODE = DESTINATION_CLIENTID_NODE + ZOOKEEPER_SEPARATOR
                                                         + CURSOR_NODE;

    public static final String DESTINATION_CLIENTID_FILTER_NODE = DESTINATION_CLIENTID_NODE + ZOOKEEPER_SEPARATOR
                                                                  + FILTER_NODE;

    public static final String DESTINATION_CLIENTID_BATCH_MARK_NODE = DESTINATION_CLIENTID_NODE
                                                                      + ZOOKEEPER_SEPARATOR + BATCH_MARK_NODE;

    public static final String DESTINATION_CLIENTID_BATCH_MARK_WITH_ID_PATH = DESTINATION_CLIENTID_BATCH_MARK_NODE
                                                                              + ZOOKEEPER_SEPARATOR + "{2}";

    /**
     * The running node of the server that is currently serving the destination
     */
    public static final String DESTINATION_RUNNING_NODE = DESTINATION_NODE + ZOOKEEPER_SEPARATOR + RUNNING_NODE;

    /**
     * The running node of the client that is currently working
     */
    public static final String DESTINATION_CLIENTID_RUNNING_NODE = DESTINATION_CLIENTID_NODE + ZOOKEEPER_SEPARATOR
                                                                   + RUNNING_NODE;

    /**
     * The cluster list of all canal servers
     */
    public static final String CANAL_CLUSTER_ROOT_NODE = CANAL_ROOT_NODE + ZOOKEEPER_SEPARATOR + CLUSTER_NODE;

    public static final String CANAL_CLUSTER_NODE = CANAL_CLUSTER_ROOT_NODE + ZOOKEEPER_SEPARATOR + "{0}";

    /**
     * The cluster list working for a particular destination
     */
    public static final String DESTINATION_CLUSTER_ROOT = DESTINATION_NODE + ZOOKEEPER_SEPARATOR + CLUSTER_NODE;

    public static final String DESTINATION_CLUSTER_NODE = DESTINATION_CLUSTER_ROOT + ZOOKEEPER_SEPARATOR + "{1}";

    public static String getDestinationPath(String destinationName) {
        return MessageFormat.format(DESTINATION_NODE, destinationName);
    }

    public static String getClientIdNodePath(String destinationName, short clientId) {
        return MessageFormat.format(DESTINATION_CLIENTID_NODE, destinationName, String.valueOf(clientId));
    }

    public static String getFilterPath(String destinationName, short clientId) {
        return MessageFormat.format(DESTINATION_CLIENTID_FILTER_NODE, destinationName, String.valueOf(clientId));
    }

    public static String getBatchMarkPath(String destinationName, short clientId) {
        return MessageFormat.format(DESTINATION_CLIENTID_BATCH_MARK_NODE, destinationName, String.valueOf(clientId));
    }

    public static String getBatchMarkWithIdPath(String destinationName, short clientId, Long batchId) {
        return MessageFormat.format(DESTINATION_CLIENTID_BATCH_MARK_WITH_ID_PATH,
            destinationName,
            String.valueOf(clientId),
            getBatchMarkNode(batchId));
    }

    public static String getCursorPath(String destination, short clientId) {
        return MessageFormat.format(DESTINATION_CURSOR_NODE, destination, String.valueOf(clientId));
    }

    public static String getCanalClusterNode(String node) {
        return MessageFormat.format(CANAL_CLUSTER_NODE, node);
    }

    /**
     * The running node of the server that is currently serving the destination
     */
    public static String getDestinationServerRunning(String destination) {
        return MessageFormat.format(DESTINATION_RUNNING_NODE, destination);
    }

    /**
     * The running node of the client that is currently working
     */
    public static String getDestinationClientRunning(String destination, short clientId) {
        return MessageFormat.format(DESTINATION_CLIENTID_RUNNING_NODE, destination, String.valueOf(clientId));
    }

    public static String getDestinationClusterNode(String destination, String node) {
        return MessageFormat.format(DESTINATION_CLUSTER_NODE, destination, node);
    }

    public static String getDestinationClusterRoot(String destination) {
        return MessageFormat.format(DESTINATION_CLUSTER_ROOT, destination);
    }

    public static String getParsePath(String destination) {
        return MessageFormat.format(DESTINATION_PARSE_NODE, destination);
    }

    /**
     * Converts a client node name to a short client id
     */
    public static short getClientId(String clientNode) {
        return Short.valueOf(clientNode);
    }

    /**
     * Converts a batch mark node name to a long
     */
    public static long getBatchMarkId(String batchMarkNode) {
        return Long.valueOf(batchMarkNode);
    }

    /**
     * Converts a batchId to the node name used in zookeeper
     */
    public static String getBatchMarkNode(Long batchId) {
        return StringUtils.leftPad(String.valueOf(batchId.intValue()), 10, '0');
    }
}

com.alibaba.otter.canal.common.zookeeper.ZookeeperPathUtils
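To see what paths these templates produce, here is a small self-contained sketch that rebuilds three of them with `java.text.MessageFormat`. `CanalZkPathSketch` is a hypothetical stand-in written for these notes; the destination name comes from the logs above and the client id 1001 is just an example value. The zero-padding uses `String.format` instead of the `StringUtils.leftPad` call in the class above.

```java
import java.text.MessageFormat;

/** Hypothetical re-creation of a few path templates, for illustration only. */
class CanalZkPathSketch {

    static final String DESTINATION_ROOT = "/otter/canal/destinations";

    /** /otter/canal/destinations/{destination}/{clientId}/cursor */
    static String cursorPath(String destination, short clientId) {
        // The id is passed as a String so MessageFormat does not apply number formatting to it.
        return MessageFormat.format(DESTINATION_ROOT + "/{0}/{1}/cursor", destination, String.valueOf(clientId));
    }

    /** /otter/canal/destinations/{destination}/running */
    static String serverRunningPath(String destination) {
        return MessageFormat.format(DESTINATION_ROOT + "/{0}/running", destination);
    }

    /** Batch ids become 10-digit, zero-padded node names. */
    static String batchMarkNode(long batchId) {
        return String.format("%010d", batchId);
    }

    public static void main(String[] args) {
        System.out.println(cursorPath("omssaps", (short) 1001));
        System.out.println(serverRunningPath("omssaps"));
        System.out.println(batchMarkNode(3L));
    }
}
```

Because otter's ArbitrateViewServiceImpl formats the same `/otter/canal/destinations/%s/%s/cursor` layout, both projects end up reading and writing the same cursor node when they share one ZooKeeper.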

Retry logic when a node connects to the manager at startup:

/**
 * Default implementation of the communication client
 *
 * @author jianghang
 */
public class DefaultCommunicationClientImpl implements CommunicationClient {

    private static final Logger            logger     = LoggerFactory.getLogger(DefaultCommunicationClientImpl.class);

    private CommunicationConnectionFactory factory    = null;
    private int                            poolSize   = 10;
    private ExecutorService                executor   = null;
    private int                            retry      = 3;
    private int                            retryDelay = 1000;
    private boolean                        discard    = false;

    public DefaultCommunicationClientImpl(){
    }

    public DefaultCommunicationClientImpl(CommunicationConnectionFactory factory){
        this.factory = factory;
    }

    public void initial() {
        RejectedExecutionHandler handler = null;
        if (discard) {
            handler = new ThreadPoolExecutor.DiscardPolicy();
        } else {
            handler = new ThreadPoolExecutor.AbortPolicy();
        }

        executor = new ThreadPoolExecutor(poolSize,
            poolSize,
            60 * 1000L,
            TimeUnit.MILLISECONDS,
            new LinkedBlockingQueue<Runnable>(10 * 1000),
            new NamedThreadFactory("communication-async"),
            handler);
    }

    public void destory() {
        executor.shutdown();
    }

    public Object call(final String addr, final Event event) {
        Assert.notNull(this.factory, "No factory specified");
        CommunicationParam params = buildParams(addr);
        CommunicationConnection connection = null;
        int count = 0;
        Throwable ex = null;
        while (count++ < retry) {
            try {
                connection = factory.createConnection(params);
                return connection.call(event);
            } catch (Exception e) {
                logger.error(String.format("call[%s] , retry[%s]", addr, count), e);
                try {
                    Thread.sleep(count * retryDelay);
                } catch (InterruptedException e1) {
                    // ignore
                }
                ex = e;
            } finally {
                if (connection != null) {
                    connection.close();
                }
            }
        }

        logger.error("call[{}] failed , event[{}]!", addr, event.toString());
        throw new CommunicationException("call[" + addr + "] , Event[" + event.toString() + "]", ex);
    }

    public void call(final String addr, final Event event, final Callback callback) {
        Assert.notNull(this.factory, "No factory specified");
        submit(new Runnable() {

            @Override
            public void run() {
                Object obj = call(addr, event);
                callback.call(obj);
            }
        });
    }

    public Object call(final String[] addrs, final Event event) {
        Assert.notNull(this.factory, "No factory specified");
        if (addrs == null || addrs.length == 0) {
            throw new IllegalArgumentException("addrs example: 127.0.0.1:1099");
        }

        ExecutorCompletionService completionService = new ExecutorCompletionService(executor);
        List<Future<Object>> futures = new ArrayList<Future<Object>>(addrs.length);
        List result = new ArrayList(10);
        for (final String addr : addrs) {
            futures.add(completionService.submit((new Callable<Object>() {

                @Override
                public Object call() throws Exception {
                    return DefaultCommunicationClientImpl.this.call(addr, event);
                }
            })));
        }

        Exception ex = null;
        int errorIndex = 0;
        while (errorIndex < futures.size()) {
            try {
                Future future = completionService.take();// it may also be interrupted
                future.get();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                ex = e;
                break;
            } catch (ExecutionException e) {
                ex = e;
                break;
            }

            errorIndex++;
        }

        if (errorIndex < futures.size()) {
            for (int index = 0; index < futures.size(); index++) {
                Future<Object> future = futures.get(index);
                if (future.isDone() == false) {
                    future.cancel(true);
                }
            }
        } else {
            for (int index = 0; index < futures.size(); index++) {
                Future<Object> future = futures.get(index);
                try {
                    result.add(future.get());
                } catch (InterruptedException e) {
                    // ignore
                    Thread.currentThread().interrupt();
                } catch (ExecutionException e) {
                    // ignore
                }
            }
        }

        if (ex != null) {
            throw new CommunicationException(String.format("call addr[%s] error by %s",
                addrs[errorIndex],
                ex.getMessage()), ex);
        } else {
            return result;
        }
    }

    public void call(final String[] addrs, final Event event, final Callback callback) {
        Assert.notNull(this.factory, "No factory specified");
        if (addrs == null || addrs.length == 0) {
            throw new IllegalArgumentException("addrs example: 127.0.0.1:1099");
        }
        submit(new Runnable() {

            @Override
            public void run() {
                Object obj = call(addrs, event);
                callback.call(obj);
            }
        });
    }

    /**
     * Submits an asynchronous task directly
     */
    public Future submit(Runnable call) {
        Assert.notNull(this.factory, "No factory specified");
        return executor.submit(call);
    }

    /**
     * Submits an asynchronous task directly
     */
    public Future submit(Callable call) {
        Assert.notNull(this.factory, "No factory specified");
        return executor.submit(call);
    }

    // ===================== helper method ==================

    private CommunicationParam buildParams(String addr) {
        CommunicationParam params = new CommunicationParam();
        String[] strs = StringUtils.split(addr, ":");
        if (strs == null || strs.length != 2) {
            throw new IllegalArgumentException("addr example: 127.0.0.1:1099");
        }
        InetAddress address = null;
        try {
            address = InetAddress.getByName(strs[0]);
        } catch (UnknownHostException e) {
            throw new CommunicationException("addr_error", "addr[" + addr + "] is unknow!");
        }
        params.setIp(address.getHostAddress());
        params.setPort(Integer.valueOf(strs[1]));
        return params;
    }
}

com.alibaba.otter.shared.communication.core.impl.DefaultCommunicationClientImpl
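The retry loop in `call(addr, event)` boils down to "try up to `retry` times, sleeping `count * retryDelay` milliseconds after each failure". The sketch below isolates that pattern with plain JDK types. `RetrySketch` is a hypothetical name, and it deliberately differs from the class above in one respect: on interruption it restores the interrupt flag and stops retrying instead of ignoring the interrupt.

```java
import java.util.concurrent.Callable;

/** Hypothetical, stripped-down version of the linear back-off retry used above. */
class RetrySketch {

    /**
     * Runs the action up to 'retry' times. After the n-th failure it sleeps
     * n * retryDelayMillis, so with the defaults above (3 tries, 1000 ms) the waits are 1 s, 2 s, 3 s.
     */
    static <T> T callWithRetry(Callable<T> action, int retry, long retryDelayMillis) {
        Exception last = null;
        for (int attempt = 1; attempt <= retry; attempt++) {
            try {
                return action.call();
            } catch (Exception e) {
                last = e;
                try {
                    Thread.sleep(attempt * retryDelayMillis);
                } catch (InterruptedException interrupted) {
                    Thread.currentThread().interrupt(); // keep the interrupt visible to callers
                    break;
                }
            }
        }
        throw new IllegalStateException("call failed after " + retry + " attempts", last);
    }

    public static void main(String[] args) {
        int[] calls = { 0 };
        String result = callWithRetry(() -> {
            if (++calls[0] < 3) {
                throw new IllegalStateException("Connection refused");
            }
            return "connected";
        }, 3, 10L);
        System.out.println(result + " after " + calls[0] + " calls");
    }
}
```

With only three attempts and a few seconds of total waiting, a node that starts before the manager is reachable runs out of retries quickly, which is consistent with the `retry[2]` and `Connection refused` lines in the log that follows.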

2020-08-04 17:48:35.353 [main] ERROR c.a.o.s.c.core.impl.DefaultCommunicationClientImpl - call[otter-manager.pro.svc.cluster.local:1099] , retry[2]
com.alibaba.dubbo.rpc.RpcException: Failed to invoke remote method: acceptEvent, provider: dubbo://172.25.3.187:1099/endpoint?acceptEvent.timeout=50000&client=netty&codec=dubbo&connections=30&iothreads=4&lazy=true&payload=8388608&serialization=java&threads=50, cause: client(url: dubbo://172.25.3.187:1099/endpoint?acceptEvent.timeout=50000&client=netty&codec=dubbo&connections=30&heartbeat=60000&iothreads=4&lazy=true&payload=8388608&send.reconnect=true&serialization=java&threads=50) failed to connect to server /172.25.3.187:1099, error message is:Connection refused
    at com.alibaba.dubbo.rpc.protocol.dubbo.DubboInvoker.doInvoke(DubboInvoker.java:101) ~[dubbo-2.5.3.jar:2.5.3]
    at com.alibaba.dubbo.rpc.protocol.AbstractInvoker.invoke(AbstractInvoker.java:144) ~[dubbo-2.5.3.jar:2.5.3]
    at com.alibaba.dubbo.rpc.proxy.InvokerInvocationHandler.invoke(InvokerInvocationHandler.java:52) ~[dubbo-2.5.3.jar:2.5.3]
    at com.alibaba.dubbo.common.bytecode.proxy0.acceptEvent(proxy0.java) ~[na:2.5.3]

last position (where is the runtime position stored?)

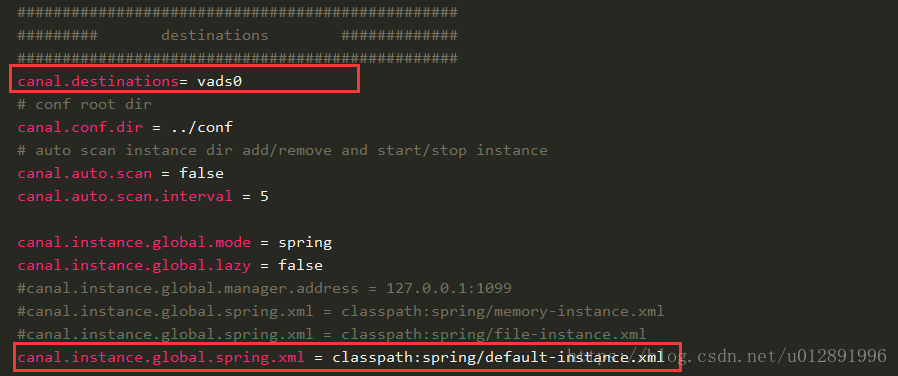

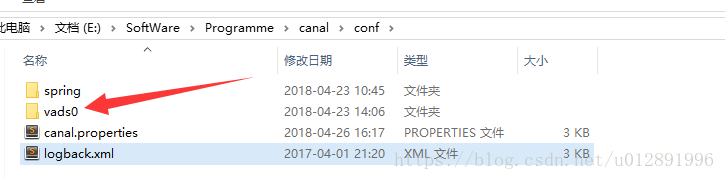

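① destinations: the directory each node (instance) is read from. Create a directory under conf; the node created here is named vads0.

② The default configuration is file-instance.xml, which records the various pieces of information in files. I chose default-instance.xml instead, because I do not want to go and read files. default-instance.xml contains a line of configuration that records the cursor on the ZK service.

The difference is whether the cursor is recorded in ZK or not.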

https://blog.csdn.net/u012891996/article/details/83061381

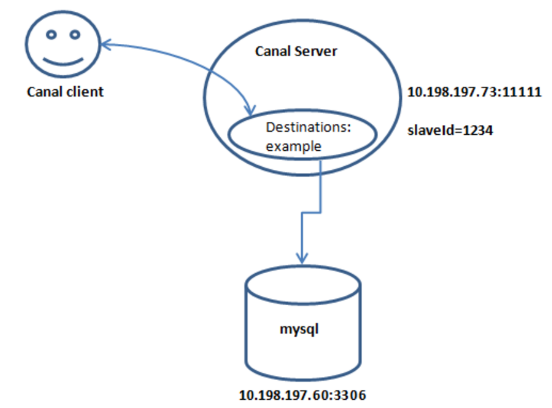

21. Connection modes (reference: http://www.importnew.com/25189.html)

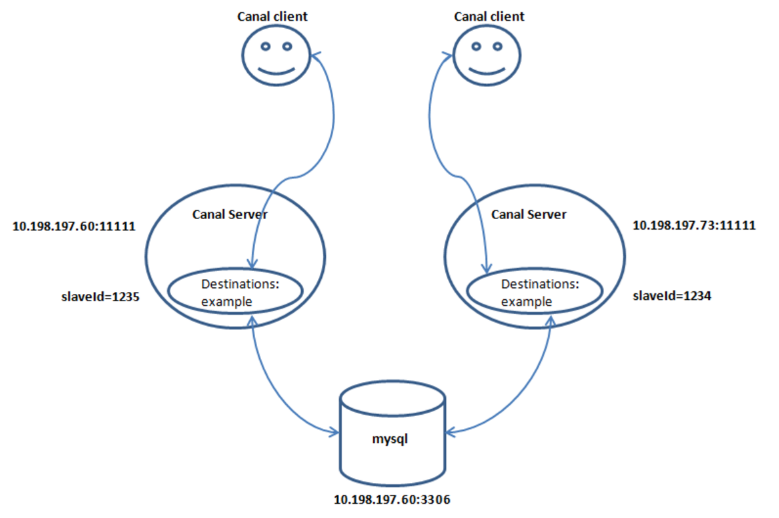

1. HA configuration architecture diagram

2. Single connection

3. Two clients + two instances + one MySQL

When MySQL changes, both clients receive the change.

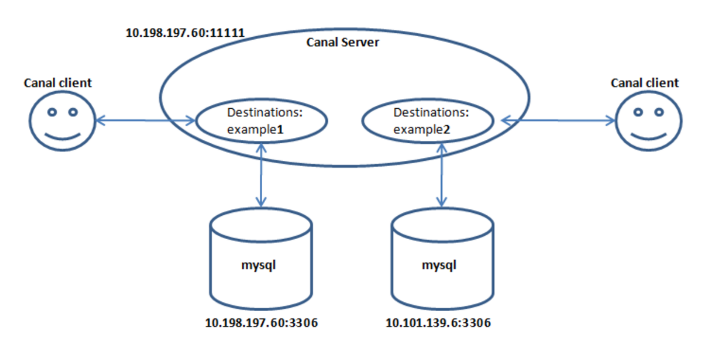

4. One server + two instances + two MySQL servers + two clients

5. Standby configuration of an instance

Standby: the backup database

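22. Summary

Here is a summary of some points about Canal, for reference only:

- Principle: canal emulates the MySQL slave interaction protocol, disguises itself as a MySQL slave, and sends a dump request to the MySQL master; on receiving the dump request, the master starts pushing the binary log to the slave (that is, canal); canal then parses the binary log objects (originally a byte stream).

- Duplicate consumption: handled on the consumer side.

- Uses the open-source open-replicator to parse the binlog.

- canal has to maintain an EventStore, which can be kept in memory, in a file, or in zk.

- canal has to maintain client state; at any moment an instance can be consumed by only one consumer.

- Data transfer format: protobuf.

- Supported binlog formats: statement, row, mixed. Many additional features, such as otter, only work with row.

- The binlog position can be stored in memory, in a file, or in zk.

- Instance startup modes: rpc/http; embedded.

- Has an ACK mechanism.

- No alerting and no monitoring; both need to be hooked up to external systems.

- Quick and easy to deploy.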
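Since the list says duplicate consumption is resolved on the consumer side, one common way to do that is to make the consumer idempotent by remembering the highest binlog offset it has already applied. The sketch below is an assumption-laden illustration, not canal client code: `IdempotentConsumerSketch` is a hypothetical name, and it assumes entries of one binlog file arrive in increasing offset order.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical consumer-side de-duplication keyed by binlog file and offset. */
class IdempotentConsumerSketch {

    // binlog file name -> highest offset already applied
    private final Map<String, Long> appliedOffsets = new HashMap<>();

    /**
     * Applies the change only if this (file, offset) has not been applied yet.
     * Returns true if the change ran, false if it was recognised as a redelivery.
     */
    boolean apply(String journalName, long offset, Runnable change) {
        Long last = appliedOffsets.get(journalName);
        if (last != null && offset <= last) {
            return false; // already seen, e.g. redelivered after a missing ack
        }
        change.run();
        appliedOffsets.put(journalName, offset);
        return true;
    }

    public static void main(String[] args) {
        IdempotentConsumerSketch consumer = new IdempotentConsumerSketch();
        int[] writes = { 0 };
        consumer.apply("mysql-bin.000001", 100L, () -> writes[0]++);
        consumer.apply("mysql-bin.000001", 100L, () -> writes[0]++); // redelivery, skipped
        consumer.apply("mysql-bin.000001", 200L, () -> writes[0]++);
        System.out.println("writes applied: " + writes[0]);
    }
}
```

In a real consumer the applied offset would need to be stored together with the target data (for example in the same transaction), otherwise a crash between the write and the bookkeeping could still produce a duplicate.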

23. Location of the code I debugged successfully

https://gitee.com/zhiqishao/canal-client

https://www.cnblogs.com/shaozhiqi/p/11534658.html
