1 -单变量高斯分布

单变量高斯分布概率密度函数定义为:

\[p(x)=\frac{1}{\sqrt{2\pi\sigma}}exp\{-\frac{1}{2}(\frac{x-\mu}{\sigma})^2\} \tag{1.1}

\]

式中\(\mu\)为随机变量\(x\)的期望,\(\sigma^2\)为\(x\)的方差,\(\sigma\)称为标准差:

\[\mu=E(x)=\int_{-\infty}^\infty xp(x)dx \tag{1.2}

\]

\[\sigma^2=\int_{-\infty}^\infty(x-\mu)^2p(x)dx \tag{1.3}

\]

可以看出,该概率分布函数,由期望和方差就能完全确定。高斯分布的样本主要都集中在均值附近,且分散程度可以通过标准差来表示,其越大,分散程度也越大,且约有95%的样本落在区间\((\mu-2\sigma,\mu+2\sigma)\)

2 - 多元高斯分布

多元高斯分布的概率密度函数。多元高斯分布的概率密度函数定义:

\[p({\bf x})=\frac{1}{(2\pi)^{\frac{d}{2}}|\Sigma|^\frac{1}{2}}exp\{-\frac{1}{2}({\bf x-\mu})^T{\Sigma}^{-1}({\bf x-\mu})\} \tag{2.1}

\]

其中\({\bf x}=[x_1,x_2,...,x_d]^T\)是\(d\)维的列向量;

\({\bf \mu}=[\mu_1,\mu_2,...,\mu_d]^T\)是\(d\)维均值的列向量;

\(\Sigma\)是\(d\times d\)维的协方差矩阵;

\({\Sigma}^{-1}\)是\(\Sigma\)的逆矩阵;

\(|\Sigma|\)是\(\Sigma\)的行列式;

\((\bf x-\mu)^T\)是\((\bf x-\mu)\)的转置,且

\[\mu=E(\bf x) \tag{2.2}

\]

\[\Sigma=E\{(\bf x-\bf \mu)(\bf x - \mu)^T\}\tag{2.3}

\]

其中\(\mu,\Sigma\)分别是向量\(\bf x\)和矩阵\((\bf x -\mu)(\bf x -\mu)^T\)的期望,诺\(x_i\)是\(\bf x\)的第\(i\)个分量,\(\mu_i\)是\(\mu\)的第\(i\)个分量,\(\sigma_{ij}^2\)是\(\sum\)的第\(i,j\)个元素。则:

\[\mu_i=E(x_i)=\int_{-\infty}^\infty x_ip(x_i)dx_i \tag{2.4}

\]

其中\(p(x_i)\)为边缘分布:

\[p(x_i)=\int_{-\infty}^\infty\cdot\cdot\cdot\int_{-\infty}^\infty p({\bf x})dx_1dx_2 \cdot\cdot\cdot dx_d \tag{2.5}

\]

而

\[\begin{eqnarray}\sigma_{ij}^2

&=&E[(x_i-\mu_i)(x_j-\mu_j)]\\

&=&\int_{-\infty}^\infty\int_{-\infty}^\infty(x_i-\mu_i)(x_j-\mu_j)p(x_i,x_j)dx_idx_j

\end{eqnarray} \tag{2.6}\]

不难证明,协方差矩阵总是对称非负定矩阵,且可表示为:

\[\Sigma=

\begin{bmatrix}

\sigma_{11}^2 & \sigma_{12}^2 \cdot\cdot\cdot \sigma_{1d}^2 \\

\sigma_{12}^2 & \sigma_{22}^2 \cdot\cdot\cdot \sigma_{2d}^2\\

\cdot\cdot\cdot &\cdot\cdot\cdot\\

\sigma_{1d}^2 & \sigma_{2d}^2 \cdot\cdot\cdot \sigma_{dd}^2

\end{bmatrix}\]

对角线上的元素\(\sigma_{ii}^2\)为\(x_i\)的方差,非对角线上的元素\(\sigma_{ij}^2\)为\(x_i\)和\(x_j\)的协方差。

由上面可以看出,均值向量\(\mu\)有\(d\)个参数,协方差矩阵\(\sum\)因为对称,所以有\(d(d+1)/2\)个参数,所以多元高斯分布一共由\(d+d(d+1)/2\)个参数决定。

从多元高斯分布中抽取的样本大部分落在由\(\mu\)和\(\Sigma\)所确定的一个区域里,该区域的中心由向量\(\mu\)决定,区域大小由协方差矩阵\(\Sigma\)决定。且从式子(2.1)可以看出,当指数项为常数时,密度\(p(\bf x)\)值不变,因此等密度点是使指数项为常数的点,即满足:

\[({\bf x}-\mu)^T{\Sigma}^{-1}({\bf x-\mu})=常数 \tag{2.7}

\]

上式的解是一个超椭圆面,且其主轴方向由\(\sum\)的特征向量所决定,主轴的长度与相应的协方差矩阵\(\Sigma\)的特征值成正比。

在数理统计中,式子(2.7)所表示的数量:

\[\gamma^2=({\bf x}-\mu)^T{\Sigma}^{-1}({\bf x}-\mu)

\]

称为\(\bf x\)到\(\mu\)的Mahalanobis距离的平方。所以等密度点轨迹是\(\bf x\)到\(\mu\)的Mahalanobis距离为常数的超椭球面。这个超椭球体大小是样本对于均值向量的离散度度量。对应的M式距离为\(\gamma\)的超椭球体积为:

\[V=V_d|\Sigma|^{\frac{1}{2}}\gamma^d

\]

其中\(V_d\)是d维单位超球体的体积:

\[V_d=\begin{cases}\frac{\pi^{\frac{d}{2}}}{(\frac{d}{2})!},&d 为偶数\\

\frac{2^d\pi^{(\frac{d-1}{2})}(\frac{d-1}{2})!}{d!},d为奇数

\end{cases}\]

如果多元高斯随机向量\(\bf x\)的协方差矩阵是对角矩阵,则\(\bf x\)的分量是相互独立的高斯分布随机变量。

2.1 - 多变量高斯分布中马氏距离的2维表示

上面式2.7是样本点\(\bf x\)与均值向量\(\bf \mu\)之间的马氏距离。我们首先对\(\Sigma\)进行特征分解,即\(\Sigma=\bf U\Lambda U^T\),这里\(\bf U\)是一个正交矩阵,且\(\bf U^TU=I\),\(\bf\Lambda\)是特征值的对角矩阵。且:

\[{\bf\Sigma}^{-1}={\bf U^{-T}\Lambda^{-1}U^{-1}}={\bf U\Lambda^{-1}U^T}=\sum_{i=1}^d\frac{1}{\lambda_i}{\bf u}_i{\bf u}_i^T

\]

这里\({\bf u}_i\)是\(\bf U\)的第\(i\)列,包含了第\(i\)个特征向量。因此可以重写成:

\[\begin{eqnarray}({\bf x-\mu})^T{\Sigma}^{-1}({\bf x-\mu})

&=&({\bf x-\mu})^T\left(\sum_{i=1}^d\frac{1}{\lambda_i}{\bf u}_i{\bf u}_i^T\right)({\bf x-\mu})\\

&=&\sum_{i=1}^d\frac{1}{\lambda_i}({\bf x-\mu})^T{\bf u}_i{\bf u}_i^T({\bf x-\mu})\\

&=&\sum_{i=1}^d\frac{y_i^2}{\lambda_i}

\end{eqnarray}\]

这里\(y_i={\bf u}_i^T(\bf x-\mu)\),可以看出,当只选择两个维度时,即可得到椭圆公式 :

\[\frac{y_1^2}{\lambda_1}+\frac{y_2^2}{\lambda_2}=1

\]

其中该椭圆的长轴与短轴的方向由特征向量而定,轴的长短由特征值大小而定。

ps:所以得出结论,马氏距离就是欧式距离先通过\(\bf \mu\)中心化,然后基于\(\bf U\)旋转得到的。

2.2多变量高斯分布的最大似然估计

假设有\(N\)个iid的高斯分布的样本即${\bf x}_i \(~\) \cal N(\bf \mu,\Sigma)$,则该分布的期望和方差(这里是协方差):

\[\hat\mu=\frac{1}{N}\sum_{i=1}^N{\bf x}_i=\overline{\bf x}\tag{2.2.1}

\]

\[\begin{eqnarray}\hat{\Sigma}

&=&\frac{1}{N}\sum_{i=1}^N({\bf x}_i-{\bf\overline x})({\bf x}_i-{\bf\overline x})^T\\

&=&\frac{1}{N}\sum_{i=1}^N\left({\bf x}_i{\bf x}_i^T-{\bf x}_i{\bf \overline x}^T-{\bf \overline x}{\bf x}_i^T+{\bf \overline x}{\bf \overline x}^T\right)\\

&=&\frac{1}{N}\sum_{i=1}^N\left({\bf x}_i{\bf x}_i^T\right)-2{\bf \overline x}{\bf \overline x}^T+{\bf \overline x}{\bf \overline x}^T\\

&=&\frac{1}{N}\sum_{i=1}^N\left({\bf x}_i{\bf x}_i^T\right)-{\bf \overline x}{\bf \overline x}^T

\end{eqnarray}\tag{2.2.2}\]

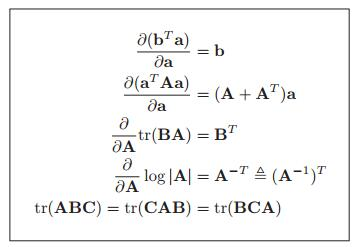

为了求得他们的最大似然估计,需要预先知道如下知识:

![]()

图2.2.1 书mlapp上公式4.10

\[{\bf x^TAx}=tr({\bf x^TAx})=tr({\bf xx^TA})=tr({\bf Axx^T})\tag{2.2.3}

\]

因为多元高斯分布可写成:

\[p(d|\mu,\Sigma)= \frac{1}{{2\pi}^{d/2}}*|\Sigma^{-1}|^{1/2}*\exp\left[-\frac{1}{2}({\bf x-\mu})^T{\Sigma}^{-1}({\bf x-\mu})\right]\tag{2.2.4}

\]

\[\begin{eqnarray}

\scr L({\bf \mu},\Sigma)

&=&\log p(d|{\bf \mu},\Sigma)\\

&=&0+\frac{N}{2}\log|{\bf \Lambda}|-\frac{1}{2}\sum_{i=1}^N({{\bf x}_i-\mu})^T{\bf \Lambda}({{\bf x}_i-\mu})

\end{eqnarray}\tag{2.2.5}\]

这里\(\bf \Lambda=\Sigma^{-1}\)是协方差矩阵的逆矩阵,也就是精度矩阵。

并假设\({\bf y}_i={\bf x}_i-\mu\),采用链式求导法则,且按照图2.2.1第二个公式,得:

\[\begin{eqnarray}

\frac{d}{d\mu}\left(\frac{1}{2}({{\bf x}_i-\mu})^T{\Sigma}^{-1}({{\bf x}_i-\mu})\right)

&=&\frac{d}{d{\bf y}_i}\left({\bf y}_i^T\Sigma^{-1}{\bf y}_i\right)\frac{d{\bf y}_i}{d\mu}\\

&=&(\Sigma^{-1}+\Sigma^{-T}){\bf y}_i(-1)\\

&=&-(\Sigma^{-1}+\Sigma^{-T}){\bf y}_i

\end{eqnarray}\]

且\(\Sigma\)是对称矩阵,所以:

\[\begin{eqnarray}

\frac{d}{d\mu}{\scr L}(\mu,\Sigma)

&=&0+\frac{d}{d\mu}\left(-\frac{1}{2}\sum_{i=1}^N({{\bf x}_i-\mu})^T{\bf \Lambda}({{\bf x}_i-\mu})\right)\\

&=&-\frac{1}{2}\sum_{i=1}^N\left(-(\Sigma^{-1}+\Sigma^{-T}){\bf y}_i\right)\\

&=&\sum_{i=1}^N\Sigma^{-1}{\bf y}_i\\

&=&\Sigma^{-1}\sum_{i=1}^N({\bf x}_i-\mu)=0

\end{eqnarray}\]

从而,多元高斯分布的期望为:\(\hat \mu=\frac{1}{N}\sum_{i=1}^N{\bf x}_i\)

因为

\(\bf A_1B+A_2B=(A_1+A_2)B\)

\(tr({\bf A})+tr({\bf B})=tr(\bf A+B)\)

所以

\(tr({\bf A_1 B})+tr({\bf A_2 B})=tr[(\bf A_1+A_2)B]\)

通过公式2.2.3,且假定\({\bf S}_\mu=\sum_{i=1}^N({{\bf x}_i-\mu})({{\bf x}_i-\mu})^T\)可知公式2.2.5可表示成:

\[\begin{eqnarray}

\scr L({\bf \mu},\Sigma)

&=&\log p(d|{\bf \mu},\Sigma)\\

&=&0+\frac{N}{2}\log|{\bf \Lambda}|-\frac{1}{2}\sum_{i=1}^Ntr[({{\bf x}_i-\mu})({{\bf x}_i-\mu})^T{\bf \Lambda}]\\

&=&\frac{N}{2}\log|{\bf \Lambda}|-\frac{1}{2}tr({\bf S_\mu}{\bf \Lambda})

\end{eqnarray}\tag{2.2.5}\]

所以:

\[\frac{d\scr L(\mu,\Sigma)}{d{\bf \Lambda}}=\frac{N}{2}{\bf \Lambda^{-T}}-\frac{1}{2}{\bf S}_\mu^T=0

\]

\[{\bf \Lambda^{-T}}={\bf \Lambda^{-1}}=\Sigma=\frac{1}{N}{\bf S}_\mu

\]

最后得到了多元高斯分布协方差的期望值为:

\(\hat{\Sigma}

=\frac{1}{N}\sum_{i=1}^N({\bf x}_i-{\bf\mu})({\bf x}_i-{\bf\mu})^T\)

2.3 基于多元变量高斯分布的分类方法

1 - 各个类别的协方差都相等\(\Sigma_{c_k}=\Sigma\):

并且可以直观的知道:

\[p(X={\bf x}|Y=c_k,{\bf \theta}) = {\cal N}({\bf x|\mu}_{c_k},\Sigma_{c_k})\tag{3.1}

\]

ps:基于第\(k\)类基础上关于变量\(\bf x\)的概率,就是先挑选出所有\(k\)类的样本,然后再计算其多元高斯概率。且如果\(\Sigma_{c_k}\)是对角矩阵(即不同特征之间相互独立),则其就等于朴素贝叶斯。

且可知对于多分类问题,给定一个测试样本其特征向量,预测结果为选取概率最大的那个类别:

\[\begin{eqnarray}\hat y({\bf x})

&=&arg\max_{c_k}P(Y={c_k}|X={\bf x})\\

&=&arg\max_{c_k}\frac{P(Y={c_k},X={\bf x})}{P(X={\bf x})}

\end{eqnarray}\tag{3.2}\]

因为对于每个类别计算当前测试样本概率时,分母都是相同的,故省略,比较分子大的就行,也就是联合概率大的那个,从而式子3.2等价于:

\[\hat y({\bf x})=arg\max_{c_k}P(X={\bf x}|Y={c_k})P(Y={c_k})

\]

而所谓LDA,就是当每个类别的协方差都相等,即\(\Sigma_{c_k}=\Sigma\),所以:

\(P(X={\bf x}|Y={c_k})=\frac{1}{(2\pi)^{d/2}|\Sigma|^{1/2}}\exp[-\frac{1}{2}({\bf x-\mu}_{c_k})^T\Sigma^{-1}({\bf x-\mu}_{c_k})]\)

\(P(Y={c_k})=\pi_{c_k}\)

从而,可发现:

\[\begin{eqnarray}P(Y={c_k}|X={\bf x}) \quad

&正比于& \pi_{c_k}\exp[-\frac{1}{2}({\bf x-\mu}_{c_k})^T\Sigma^{-1}({\bf x-\mu}_{c_k})]\\

&=&\pi_{c_k}\exp[-\frac{1}{2}{\bf x}^T\Sigma^{-1}{\bf x}+\frac{1}{2}{\bf x}^T\Sigma^{-1}{\bf \mu}_{c_k}+\frac{1}{2}{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf x}-\frac{1}{2}{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf \mu}_{c_k}]\\

&=&\pi_{c_k}\exp[-\frac{1}{2}{\bf x}^T\Sigma^{-1}{\bf x}+{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf x}-\frac{1}{2}{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf \mu}_{c_k}]\\

&=&exp[{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf x}-\frac{1}{2}{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf \mu}_{c_k}+\log\pi_{c_k}]exp[-\frac{1}{2}{\bf x}^T\Sigma^{-1}{\bf x}]\\

&=&\frac{exp[{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf x}-\frac{1}{2}{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf \mu}_{c_k}+\log\pi_{c_k}]}{exp[\frac{1}{2}{\bf x}^T\Sigma^{-1}{\bf x}]}

\end{eqnarray}\]

从而上式的分母又可以省略

假定\(\gamma_{c_k}=-\frac{1}{2}{\bf \mu}_{c_k}^T\Sigma^{-1}{\bf \mu}_{c_k}+\log\pi_{c_k}\),而\(\beta_{c_k}=\Sigma^{-1}{\bf \mu}_{c_k}\)

从而:

\[P(Y={c_k}|X={\bf x})=\frac{exp({\beta_{c_k}^T{\bf x}+\gamma_{c_k})}}{\sum_{k=1}^{|c|}exp({\beta_{c_k}^T{\bf x}+\gamma_{c_k})}}=S(\eta)_{c_k}

\]

这里\(\eta=[{\beta_{c_1}^T{\bf x}+\gamma_{c_1}},{\beta_{c_2}^T{\bf x}+\gamma_{c_2}},...,{\beta_{c_|c|}^T{\bf x}+\gamma_{c_|c|}}]\),可以发现它就是一个softmax函数,即:

\[S(\eta)_{c_k}=\frac{exp(\eta_{c_k})}{\sum_{k=1}^{|c|}exp(\eta_{c_k})}

\]

softmax之所以这样命名就是因为它有点像max函数。

对于LDA模型,假设将样本空间划分成n个互相独立的空间,则线性分类面,就是该分类面两边的类别预测概率相等的时候,即:

\(P(Y={c_k}|X={\bf x})=P(Y={c_k'}|X={\bf x})\)

\(\beta_{c_k}^T{\bf x}+\gamma_{c_k}=\beta_{c_k'}^T{\bf x}+\gamma_{c_k'}\)

\({\bf x}^T(\beta_{c_k'}-\beta_{c_k})=\eta_{c_k'}-\eta_{c_k}\)

参考资料:

[] 边肇祺。模式识别 第二版

[] Machine learning A Probabilistic Perspective

[] William.Feller, 概率论及其应用(第1卷)

浙公网安备 33010602011771号

浙公网安备 33010602011771号