Deep Residual Network + Adaptively Parametric ReLU Activation Function (Hyperparameter Tuning Log 2)

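Continued from the previous post:

Deep Residual Network + Adaptively Parametric ReLU Activation Function (Hyperparameter Tuning Log 1)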

https://www.cnblogs.com/shisuzanian/p/12906954.html

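This post continues testing the deep residual network with the adaptively parametric ReLU (APReLU) activation function. The number of residual blocks is increased to 27 and everything else is left unchanged: the convolutional layers still use 8, 16, and 32 filters across the three stages. The model is again evaluated on the Cifar10 dataset.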
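As a quick sanity check on the depth, the stage layout used by the script further down (9 + 1 + 8 + 1 + 8 blocks) can be tallied in a few lines. This is only a bookkeeping sketch: the `stages` list and the `summarize` helper are hypothetical names introduced here, and the sizes assume a 32×32 Cifar10 input with a single stride-2 block at each of the two transitions.

```python
# Hypothetical bookkeeping helper; each tuple mirrors one residual_block(...)
# call in the script: (number of blocks, output channels, downsample?).
stages = [
    (9, 8, False),
    (1, 16, True),
    (8, 16, False),
    (1, 32, True),
    (8, 32, False),
]

def summarize(stages, input_size=32):
    """Return (total residual blocks, final spatial size, final channels)."""
    total_blocks = 0
    size = input_size
    channels = None
    for nb_blocks, out_channels, downsample in stages:
        total_blocks += nb_blocks
        if downsample:
            # Assumes one block per downsampling stage: a stride-2 convolution
            # with 'same' padding halves the height and width once.
            size = (size + 1) // 2
        channels = out_channels
    return total_blocks, size, channels

total_blocks, final_size, final_channels = summarize(stages)
print(total_blocks, final_size, final_channels)  # 27 8 32
```

So the 27 blocks end with an 8×8 feature map of 32 channels, which is what the final global average pooling layer receives.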

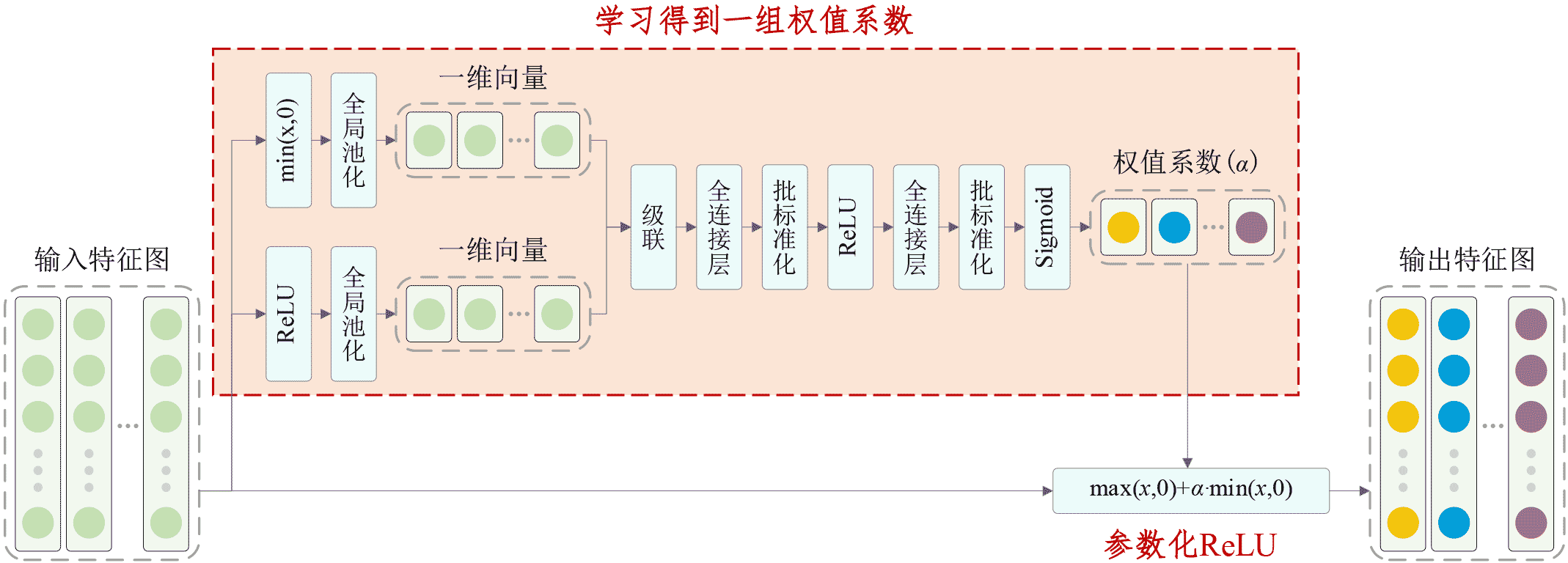

自适应参数化ReLU激活函数是Parametric ReLU的一种改进,基本原理见下图:
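To make the idea concrete, here is a minimal NumPy sketch of the forward pass, written under simplifying assumptions: batch normalization is left out, and the weights `w1`, `b1`, `w2`, `b2` are random placeholders rather than trained values. The function name `aprelu_forward` is hypothetical. The point it illustrates is that, unlike Parametric ReLU where the negative slope is a learned constant, here the slope is computed from the input itself by a small subnetwork.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def aprelu_forward(x, w1, b1, w2, b2):
    """Simplified APReLU forward pass for x of shape (batch, height, width, channels).

    Batch normalization is omitted; w1, b1, w2, b2 are the weights of the two
    small fully connected layers that produce one slope per sample and channel.
    """
    pos = np.maximum(x, 0.0)  # positive part of the features
    neg = np.minimum(x, 0.0)  # negative part of the features
    # Global average pooling of each part, concatenated to (batch, 2 * channels).
    stats = np.concatenate([neg.mean(axis=(1, 2)), pos.mean(axis=(1, 2))], axis=1)
    hidden = np.maximum(stats @ w1 + b1, 0.0)  # (batch, channels // 4), ReLU
    scales = sigmoid(hidden @ w2 + b2)         # (batch, channels), each in (0, 1)
    # Positive features pass through; negative ones are multiplied by the slope.
    return pos + scales[:, None, None, :] * neg

rng = np.random.default_rng(0)
channels = 8
x = rng.standard_normal((2, 4, 4, channels))
w1 = 0.1 * rng.standard_normal((2 * channels, channels // 4))
b1 = np.zeros(channels // 4)
w2 = 0.1 * rng.standard_normal((channels // 4, channels))
b2 = np.zeros(channels)

y = aprelu_forward(x, w1, b1, w2, b2)
print(y.shape)
```

Because the sigmoid keeps every slope between 0 and 1, negative inputs are shrunk toward zero but never flipped in sign, while positive inputs are left untouched.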

The Keras code is as follows:

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
"""
Created on Tue Apr 14 04:17:45 2020
Implemented using TensorFlow 1.10.0 and Keras 2.2.1

Minghang Zhao, Shisheng Zhong, Xuyun Fu, Baoping Tang, Shaojiang Dong, Michael Pecht,
Deep Residual Networks with Adaptively Parametric Rectifier Linear Units for Fault Diagnosis,
IEEE Transactions on Industrial Electronics, 2020, DOI: 10.1109/TIE.2020.2972458

@author: Minghang Zhao
"""

from __future__ import print_function
import keras
import numpy as np
from keras.datasets import cifar10
from keras.layers import Dense, Conv2D, BatchNormalization, Activation, Minimum
from keras.layers import AveragePooling2D, Input, GlobalAveragePooling2D, Concatenate, Reshape
from keras.regularizers import l2
from keras import backend as K
from keras.models import Model
from keras import optimizers
from keras.preprocessing.image import ImageDataGenerator
from keras.callbacks import LearningRateScheduler

# Set the backend learning phase to training mode (1) before the model is built
K.set_learning_phase(1)

# The data, split between train and test sets
(x_train, y_train), (x_test, y_test) = cifar10.load_data()

# Scale pixel values to [0, 1], then subtract the training-set mean from both sets.
# x_test is centered first, while np.mean(x_train) is still the uncentered mean.
x_train = x_train.astype('float32') / 255.
x_test = x_test.astype('float32') / 255.
x_test = x_test-np.mean(x_train)
x_train = x_train-np.mean(x_train)
print('x_train shape:', x_train.shape)
print(x_train.shape[0], 'train samples')
print(x_test.shape[0], 'test samples')

# convert class vectors to binary class matrices
y_train = keras.utils.to_categorical(y_train, 10)
y_test = keras.utils.to_categorical(y_test, 10)

# Schedule the learning rate: multiply it by 0.1 every 400 epochs
# (relies on the global `model` defined further down)
def scheduler(epoch):
    if epoch % 400 == 0 and epoch != 0:
        lr = K.get_value(model.optimizer.lr)
        K.set_value(model.optimizer.lr, lr * 0.1)
        print("lr changed to {}".format(lr * 0.1))
    return K.get_value(model.optimizer.lr)

# An adaptively parametric rectifier linear unit (APReLU)
def aprelu(inputs):
    # get the number of channels
    channels = inputs.get_shape().as_list()[-1]
    # get a zero feature map
    zeros_input = keras.layers.subtract([inputs, inputs])
    # get a feature map with only positive features
    pos_input = Activation('relu')(inputs)
    # get a feature map with only negative features
    neg_input = Minimum()([inputs,zeros_input])
    # define a network to obtain the scaling coefficients
    scales_p = GlobalAveragePooling2D()(pos_input)
    scales_n = GlobalAveragePooling2D()(neg_input)
    scales = Concatenate()([scales_n, scales_p])
    scales = Dense(channels//4, activation='linear', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(scales)
    scales = BatchNormalization()(scales)
    scales = Activation('relu')(scales)
    scales = Dense(channels, activation='linear', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(scales)
    scales = BatchNormalization()(scales)
    scales = Activation('sigmoid')(scales)
    scales = Reshape((1,1,channels))(scales)
    # apply a parametric relu: scale the negative part and add the positive part
    neg_part = keras.layers.multiply([scales, neg_input])
    return keras.layers.add([pos_input, neg_part])

# Residual Block
def residual_block(incoming, nb_blocks, out_channels, downsample=False,
                   downsample_strides=2):

    residual = incoming
    in_channels = incoming.get_shape().as_list()[-1]

    for i in range(nb_blocks):

        identity = residual

        if not downsample:
            downsample_strides = 1

        residual = BatchNormalization()(residual)
        residual = aprelu(residual)
        residual = Conv2D(out_channels, 3, strides=(downsample_strides, downsample_strides),
                          padding='same', kernel_initializer='he_normal',
                          kernel_regularizer=l2(1e-4))(residual)

        residual = BatchNormalization()(residual)
        residual = aprelu(residual)
        residual = Conv2D(out_channels, 3, padding='same', kernel_initializer='he_normal',
                          kernel_regularizer=l2(1e-4))(residual)

        # Downsampling: subsample the identity path with stride 2
        if downsample_strides > 1:
            identity = AveragePooling2D(pool_size=(1,1), strides=(2,2))(identity)

        # Zero padding to match channels (doubles the channel count of the identity path)
        if in_channels != out_channels:
            zeros_identity = keras.layers.subtract([identity, identity])
            identity = keras.layers.concatenate([identity, zeros_identity])
            in_channels = out_channels

        residual = keras.layers.add([residual, identity])

    return residual


# define and train a model
inputs = Input(shape=(32, 32, 3))
net = Conv2D(8, 3, padding='same', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(inputs)
net = residual_block(net, 9, 8, downsample=False)
net = residual_block(net, 1, 16, downsample=True)
net = residual_block(net, 8, 16, downsample=False)
net = residual_block(net, 1, 32, downsample=True)
net = residual_block(net, 8, 32, downsample=False)
net = BatchNormalization()(net)
net = aprelu(net)
net = GlobalAveragePooling2D()(net)
outputs = Dense(10, activation='softmax', kernel_initializer='he_normal', kernel_regularizer=l2(1e-4))(net)
model = Model(inputs=inputs, outputs=outputs)
sgd = optimizers.SGD(lr=0.1, decay=0., momentum=0.9, nesterov=True)
model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

# data augmentation
datagen = ImageDataGenerator(
    # randomly rotate images by up to 30 degrees
    rotation_range=30,
    # randomly flip images horizontally
    horizontal_flip=True,
    # randomly shift images horizontally (fraction of total width)
    width_shift_range=0.125,
    # randomly shift images vertically (fraction of total height)
    height_shift_range=0.125)

reduce_lr = LearningRateScheduler(scheduler)
# fit the model on the batches generated by datagen.flow().
model.fit_generator(datagen.flow(x_train, y_train, batch_size=100),
                    validation_data=(x_test, y_test), epochs=1000,
                    verbose=1, callbacks=[reduce_lr], workers=4)

# get results (switch the learning phase to test mode first)
K.set_learning_phase(0)
DRSN_train_score1 = model.evaluate(x_train, y_train, batch_size=100, verbose=0)
print('Train loss:', DRSN_train_score1[0])
print('Train accuracy:', DRSN_train_score1[1])
DRSN_test_score1 = model.evaluate(x_test, y_test, batch_size=100, verbose=0)
print('Test loss:', DRSN_test_score1[0])
print('Test accuracy:', DRSN_test_score1[1])
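The `scheduler` function in the listing reads and writes `model.optimizer.lr` through a global variable. The same step schedule can also be expressed as a stateless function of the epoch index, which is easier to inspect in isolation. The sketch below is an illustration, not part of the original script; `step_decay` and its parameter names are hypothetical, and it assumes the 0-based epoch index that Keras passes to learning-rate callbacks.

```python
def step_decay(epoch, base_lr=0.1, factor=0.1, step=400):
    """Learning rate for a 0-based epoch index: base_lr * factor ** (epoch // step)."""
    return base_lr * factor ** (epoch // step)

# Print the rate just before and just after each scheduled drop.
for epoch in (0, 399, 400, 799, 800):
    print(epoch, step_decay(epoch))
```

With a 0-based index, the first drop happens at index 400, which the console reports as "Epoch 401". If such a function were handed to `LearningRateScheduler`, it would be safest to wrap it so that only the epoch index is passed, since some Keras versions also pass the current learning rate as a second argument.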

Part of the experimental output is shown below (the results of the first 271 epochs were no longer displayed in the Spyder console window):

Epoch 272/1000
53s 105ms/step - loss: 0.6071 - acc: 0.8711 - val_loss: 0.6295 - val_acc: 0.8667
Epoch 273/1000
53s 105ms/step - loss: 0.6078 - acc: 0.8705 - val_loss: 0.6373 - val_acc: 0.8678
Epoch 274/1000
53s 106ms/step - loss: 0.6043 - acc: 0.8714 - val_loss: 0.6245 - val_acc: 0.8686
Epoch 275/1000
52s 105ms/step - loss: 0.6056 - acc: 0.8720 - val_loss: 0.6228 - val_acc: 0.8713
Epoch 276/1000
52s 105ms/step - loss: 0.6059 - acc: 0.8730 - val_loss: 0.6104 - val_acc: 0.8730
Epoch 277/1000
52s 105ms/step - loss: 0.5980 - acc: 0.8756 - val_loss: 0.6265 - val_acc: 0.8671
Epoch 278/1000
52s 105ms/step - loss: 0.6093 - acc: 0.8716 - val_loss: 0.6363 - val_acc: 0.8617
Epoch 279/1000
53s 105ms/step - loss: 0.6051 - acc: 0.8716 - val_loss: 0.6355 - val_acc: 0.8650
Epoch 280/1000
53s 105ms/step - loss: 0.6062 - acc: 0.8725 - val_loss: 0.6227 - val_acc: 0.8669
Epoch 281/1000
52s 105ms/step - loss: 0.6025 - acc: 0.8731 - val_loss: 0.6156 - val_acc: 0.8723
Epoch 282/1000
53s 105ms/step - loss: 0.6031 - acc: 0.8725 - val_loss: 0.6450 - val_acc: 0.8630
Epoch 283/1000
52s 104ms/step - loss: 0.6030 - acc: 0.8745 - val_loss: 0.6282 - val_acc: 0.8688
Epoch 284/1000
52s 104ms/step - loss: 0.6049 - acc: 0.8717 - val_loss: 0.6213 - val_acc: 0.8693
Epoch 285/1000
53s 105ms/step - loss: 0.6005 - acc: 0.8709 - val_loss: 0.6208 - val_acc: 0.8682
Epoch 286/1000
52s 104ms/step - loss: 0.6049 - acc: 0.8718 - val_loss: 0.6420 - val_acc: 0.8647
Epoch 287/1000
53s 105ms/step - loss: 0.6040 - acc: 0.8728 - val_loss: 0.6188 - val_acc: 0.8694
Epoch 288/1000
53s 105ms/step - loss: 0.6011 - acc: 0.8741 - val_loss: 0.6548 - val_acc: 0.8577
Epoch 289/1000
53s 105ms/step - loss: 0.6060 - acc: 0.8731 - val_loss: 0.6163 - val_acc: 0.8717
Epoch 290/1000
53s 105ms/step - loss: 0.6047 - acc: 0.8717 - val_loss: 0.6172 - val_acc: 0.8733
Epoch 291/1000
53s 105ms/step - loss: 0.6029 - acc: 0.8728 - val_loss: 0.6319 - val_acc: 0.8639
Epoch 292/1000
52s 105ms/step - loss: 0.6011 - acc: 0.8742 - val_loss: 0.6237 - val_acc: 0.8664
Epoch 293/1000
53s 105ms/step - loss: 0.5998 - acc: 0.8741 - val_loss: 0.6410 - val_acc: 0.8646
Epoch 294/1000
52s 105ms/step - loss: 0.6001 - acc: 0.8736 - val_loss: 0.6435 - val_acc: 0.8644
Epoch 295/1000
53s 106ms/step - loss: 0.6022 - acc: 0.8730 - val_loss: 0.6233 - val_acc: 0.8657
Epoch 296/1000
53s 106ms/step - loss: 0.6015 - acc: 0.8746 - val_loss: 0.6224 - val_acc: 0.8665
Epoch 297/1000
52s 105ms/step - loss: 0.5995 - acc: 0.8750 - val_loss: 0.6471 - val_acc: 0.8613
Epoch 298/1000
53s 106ms/step - loss: 0.5992 - acc: 0.8735 - val_loss: 0.6436 - val_acc: 0.8635
Epoch 299/1000
53s 106ms/step - loss: 0.6040 - acc: 0.8716 - val_loss: 0.6273 - val_acc: 0.8674
Epoch 300/1000
52s 105ms/step - loss: 0.6008 - acc: 0.8736 - val_loss: 0.6543 - val_acc: 0.8603
Epoch 301/1000
52s 104ms/step - loss: 0.6023 - acc: 0.8732 - val_loss: 0.6420 - val_acc: 0.8633
Epoch 302/1000
52s 105ms/step - loss: 0.5992 - acc: 0.8747 - val_loss: 0.6125 - val_acc: 0.8712
Epoch 303/1000
52s 105ms/step - loss: 0.6016 - acc: 0.8743 - val_loss: 0.6402 - val_acc: 0.8660
Epoch 304/1000
53s 105ms/step - loss: 0.5998 - acc: 0.8742 - val_loss: 0.6256 - val_acc: 0.8663
Epoch 305/1000
53s 105ms/step - loss: 0.5998 - acc: 0.8736 - val_loss: 0.6193 - val_acc: 0.8713
Epoch 306/1000
52s 105ms/step - loss: 0.5977 - acc: 0.8760 - val_loss: 0.6219 - val_acc: 0.8686
Epoch 307/1000
52s 104ms/step - loss: 0.6000 - acc: 0.8743 - val_loss: 0.6643 - val_acc: 0.8539
Epoch 308/1000
53s 105ms/step - loss: 0.6022 - acc: 0.8740 - val_loss: 0.6308 - val_acc: 0.8671
Epoch 309/1000
52s 104ms/step - loss: 0.6083 - acc: 0.8737 - val_loss: 0.6168 - val_acc: 0.8730
Epoch 310/1000
52s 104ms/step - loss: 0.6008 - acc: 0.8727 - val_loss: 0.6165 - val_acc: 0.8751
Epoch 311/1000
53s 105ms/step - loss: 0.6046 - acc: 0.8731 - val_loss: 0.6369 - val_acc: 0.8639
Epoch 312/1000
53s 106ms/step - loss: 0.5976 - acc: 0.8753 - val_loss: 0.6246 - val_acc: 0.8695
Epoch 313/1000
53s 105ms/step - loss: 0.6037 - acc: 0.8738 - val_loss: 0.6266 - val_acc: 0.8691
Epoch 314/1000
52s 105ms/step - loss: 0.6007 - acc: 0.8732 - val_loss: 0.6520 - val_acc: 0.8631
Epoch 315/1000
52s 105ms/step - loss: 0.5993 - acc: 0.8751 - val_loss: 0.6436 - val_acc: 0.8632
Epoch 316/1000
52s 105ms/step - loss: 0.5996 - acc: 0.8750 - val_loss: 0.6413 - val_acc: 0.8589
Epoch 317/1000
53s 105ms/step - loss: 0.5998 - acc: 0.8740 - val_loss: 0.6406 - val_acc: 0.8621
Epoch 318/1000
52s 105ms/step - loss: 0.5992 - acc: 0.8753 - val_loss: 0.6364 - val_acc: 0.8614
Epoch 319/1000
53s 105ms/step - loss: 0.5983 - acc: 0.8748 - val_loss: 0.6275 - val_acc: 0.8650
Epoch 320/1000
53s 105ms/step - loss: 0.5987 - acc: 0.8766 - val_loss: 0.6207 - val_acc: 0.8724
Epoch 321/1000
52s 105ms/step - loss: 0.5979 - acc: 0.8756 - val_loss: 0.6266 - val_acc: 0.8711
Epoch 322/1000
52s 105ms/step - loss: 0.5981 - acc: 0.8748 - val_loss: 0.6461 - val_acc: 0.8627
Epoch 323/1000
53s 105ms/step - loss: 0.5966 - acc: 0.8757 - val_loss: 0.6235 - val_acc: 0.8696
Epoch 324/1000
52s 105ms/step - loss: 0.5940 - acc: 0.8758 - val_loss: 0.6141 - val_acc: 0.8750
Epoch 325/1000
52s 105ms/step - loss: 0.6007 - acc: 0.8757 - val_loss: 0.6513 - val_acc: 0.8610
Epoch 326/1000
53s 105ms/step - loss: 0.5988 - acc: 0.8760 - val_loss: 0.6219 - val_acc: 0.8724
Epoch 327/1000
52s 105ms/step - loss: 0.6003 - acc: 0.8744 - val_loss: 0.6115 - val_acc: 0.8693
Epoch 328/1000
53s 105ms/step - loss: 0.5942 - acc: 0.8762 - val_loss: 0.6358 - val_acc: 0.8660
Epoch 329/1000
52s 105ms/step - loss: 0.5923 - acc: 0.8769 - val_loss: 0.6340 - val_acc: 0.8672
Epoch 330/1000
53s 105ms/step - loss: 0.5954 - acc: 0.8781 - val_loss: 0.6246 - val_acc: 0.8688
Epoch 331/1000
52s 105ms/step - loss: 0.6015 - acc: 0.8747 - val_loss: 0.6194 - val_acc: 0.8710
Epoch 332/1000
52s 104ms/step - loss: 0.5980 - acc: 0.8764 - val_loss: 0.6311 - val_acc: 0.8685
Epoch 333/1000
52s 105ms/step - loss: 0.6019 - acc: 0.8748 - val_loss: 0.6095 - val_acc: 0.8733
Epoch 334/1000
53s 106ms/step - loss: 0.5964 - acc: 0.8760 - val_loss: 0.6515 - val_acc: 0.8623
Epoch 335/1000
53s 106ms/step - loss: 0.5973 - acc: 0.8765 - val_loss: 0.6300 - val_acc: 0.8702
Epoch 336/1000
53s 105ms/step - loss: 0.5953 - acc: 0.8776 - val_loss: 0.6297 - val_acc: 0.8656
Epoch 337/1000
53s 105ms/step - loss: 0.6005 - acc: 0.8752 - val_loss: 0.6252 - val_acc: 0.8711
Epoch 338/1000
53s 105ms/step - loss: 0.5949 - acc: 0.8778 - val_loss: 0.6175 - val_acc: 0.8693
Epoch 339/1000
52s 105ms/step - loss: 0.5996 - acc: 0.8749 - val_loss: 0.6215 - val_acc: 0.8688
Epoch 340/1000
52s 104ms/step - loss: 0.5921 - acc: 0.8777 - val_loss: 0.6239 - val_acc: 0.8713
Epoch 341/1000
52s 105ms/step - loss: 0.5910 - acc: 0.8776 - val_loss: 0.6327 - val_acc: 0.8684
Epoch 342/1000
52s 104ms/step - loss: 0.5952 - acc: 0.8778 - val_loss: 0.6083 - val_acc: 0.8767
Epoch 343/1000
53s 105ms/step - loss: 0.5965 - acc: 0.8763 - val_loss: 0.6312 - val_acc: 0.8696
Epoch 344/1000
53s 105ms/step - loss: 0.5965 - acc: 0.8771 - val_loss: 0.6204 - val_acc: 0.8707
Epoch 345/1000
52s 105ms/step - loss: 0.5932 - acc: 0.8764 - val_loss: 0.6211 - val_acc: 0.8709
Epoch 346/1000
53s 105ms/step - loss: 0.5900 - acc: 0.8785 - val_loss: 0.6422 - val_acc: 0.8663
Epoch 347/1000
53s 106ms/step - loss: 0.5919 - acc: 0.8775 - val_loss: 0.6437 - val_acc: 0.8646
Epoch 348/1000
53s 105ms/step - loss: 0.6001 - acc: 0.8753 - val_loss: 0.6184 - val_acc: 0.8709
Epoch 349/1000
53s 105ms/step - loss: 0.5952 - acc: 0.8778 - val_loss: 0.6410 - val_acc: 0.8626
Epoch 350/1000
53s 105ms/step - loss: 0.5946 - acc: 0.8768 - val_loss: 0.6321 - val_acc: 0.8660
Epoch 351/1000
53s 106ms/step - loss: 0.5931 - acc: 0.8770 - val_loss: 0.6444 - val_acc: 0.8655
Epoch 352/1000
52s 105ms/step - loss: 0.5969 - acc: 0.8757 - val_loss: 0.6205 - val_acc: 0.8710
Epoch 353/1000
53s 105ms/step - loss: 0.5978 - acc: 0.8754 - val_loss: 0.6287 - val_acc: 0.8672
Epoch 354/1000
53s 105ms/step - loss: 0.5925 - acc: 0.8778 - val_loss: 0.6314 - val_acc: 0.8664
Epoch 355/1000
53s 105ms/step - loss: 0.5942 - acc: 0.8765 - val_loss: 0.6392 - val_acc: 0.8658
Epoch 356/1000
52s 104ms/step - loss: 0.5961 - acc: 0.8786 - val_loss: 0.6316 - val_acc: 0.8675
Epoch 357/1000
52s 105ms/step - loss: 0.5945 - acc: 0.8766 - val_loss: 0.6536 - val_acc: 0.8619
Epoch 358/1000
53s 105ms/step - loss: 0.5957 - acc: 0.8769 - val_loss: 0.6112 - val_acc: 0.8748
Epoch 359/1000
52s 105ms/step - loss: 0.5992 - acc: 0.8750 - val_loss: 0.6291 - val_acc: 0.8677
Epoch 360/1000
53s 106ms/step - loss: 0.5935 - acc: 0.8778 - val_loss: 0.6283 - val_acc: 0.8691
Epoch 361/1000
53s 106ms/step - loss: 0.5886 - acc: 0.8795 - val_loss: 0.6396 - val_acc: 0.8654
Epoch 362/1000
53s 105ms/step - loss: 0.5900 - acc: 0.8774 - val_loss: 0.6273 - val_acc: 0.8699
Epoch 363/1000
52s 105ms/step - loss: 0.5952 - acc: 0.8769 - val_loss: 0.6017 - val_acc: 0.8798
Epoch 364/1000
52s 105ms/step - loss: 0.5928 - acc: 0.8771 - val_loss: 0.6156 - val_acc: 0.8729
Epoch 365/1000
52s 104ms/step - loss: 0.5997 - acc: 0.8761 - val_loss: 0.6384 - val_acc: 0.8662
Epoch 366/1000
52s 105ms/step - loss: 0.5946 - acc: 0.8771 - val_loss: 0.6245 - val_acc: 0.8714
Epoch 367/1000
53s 105ms/step - loss: 0.5958 - acc: 0.8769 - val_loss: 0.6280 - val_acc: 0.8660
Epoch 368/1000
52s 105ms/step - loss: 0.5917 - acc: 0.8786 - val_loss: 0.6152 - val_acc: 0.8727
Epoch 369/1000
53s 105ms/step - loss: 0.5895 - acc: 0.8784 - val_loss: 0.6376 - val_acc: 0.8654
Epoch 370/1000
53s 105ms/step - loss: 0.5948 - acc: 0.8779 - val_loss: 0.6222 - val_acc: 0.8692
Epoch 371/1000
52s 105ms/step - loss: 0.5895 - acc: 0.8788 - val_loss: 0.6430 - val_acc: 0.8652
Epoch 372/1000
52s 105ms/step - loss: 0.5891 - acc: 0.8801 - val_loss: 0.6184 - val_acc: 0.8750
Epoch 373/1000
52s 105ms/step - loss: 0.5912 - acc: 0.8784 - val_loss: 0.6222 - val_acc: 0.8687
Epoch 374/1000
52s 104ms/step - loss: 0.5899 - acc: 0.8784 - val_loss: 0.6184 - val_acc: 0.8711
Epoch 375/1000
53s 105ms/step - loss: 0.5921 - acc: 0.8778 - val_loss: 0.6091 - val_acc: 0.8736
Epoch 376/1000
53s 105ms/step - loss: 0.5927 - acc: 0.8778 - val_loss: 0.6492 - val_acc: 0.8604
Epoch 377/1000
53s 105ms/step - loss: 0.5969 - acc: 0.8762 - val_loss: 0.6185 - val_acc: 0.8708
Epoch 378/1000
53s 105ms/step - loss: 0.5901 - acc: 0.8778 - val_loss: 0.6314 - val_acc: 0.8681
Epoch 379/1000
52s 104ms/step - loss: 0.5936 - acc: 0.8767 - val_loss: 0.6159 - val_acc: 0.8733
Epoch 380/1000
52s 105ms/step - loss: 0.5941 - acc: 0.8771 - val_loss: 0.6361 - val_acc: 0.8674
Epoch 381/1000
52s 104ms/step - loss: 0.5910 - acc: 0.8778 - val_loss: 0.6542 - val_acc: 0.8600
Epoch 382/1000
52s 105ms/step - loss: 0.5915 - acc: 0.8785 - val_loss: 0.6324 - val_acc: 0.8675
Epoch 383/1000
53s 105ms/step - loss: 0.5905 - acc: 0.8770 - val_loss: 0.6428 - val_acc: 0.8629
Epoch 384/1000
53s 105ms/step - loss: 0.5887 - acc: 0.8786 - val_loss: 0.6285 - val_acc: 0.8663
Epoch 385/1000
52s 105ms/step - loss: 0.5908 - acc: 0.8779 - val_loss: 0.6417 - val_acc: 0.8616
Epoch 386/1000
52s 105ms/step - loss: 0.5887 - acc: 0.8790 - val_loss: 0.6283 - val_acc: 0.8680
Epoch 387/1000
52s 105ms/step - loss: 0.5864 - acc: 0.8783 - val_loss: 0.6315 - val_acc: 0.8660
Epoch 388/1000
52s 105ms/step - loss: 0.5842 - acc: 0.8793 - val_loss: 0.6250 - val_acc: 0.8676
Epoch 389/1000
52s 104ms/step - loss: 0.5876 - acc: 0.8796 - val_loss: 0.6333 - val_acc: 0.8685
Epoch 390/1000
52s 105ms/step - loss: 0.5907 - acc: 0.8784 - val_loss: 0.6327 - val_acc: 0.8655
Epoch 391/1000
53s 105ms/step - loss: 0.5887 - acc: 0.8790 - val_loss: 0.6402 - val_acc: 0.8676
Epoch 392/1000
52s 105ms/step - loss: 0.5937 - acc: 0.8767 - val_loss: 0.6210 - val_acc: 0.8708
Epoch 393/1000
52s 104ms/step - loss: 0.5870 - acc: 0.8801 - val_loss: 0.6186 - val_acc: 0.8750
Epoch 394/1000
52s 104ms/step - loss: 0.5937 - acc: 0.8774 - val_loss: 0.6369 - val_acc: 0.8652
Epoch 395/1000
52s 104ms/step - loss: 0.5891 - acc: 0.8805 - val_loss: 0.6279 - val_acc: 0.8700
Epoch 396/1000
52s 105ms/step - loss: 0.5955 - acc: 0.8776 - val_loss: 0.6179 - val_acc: 0.8702
Epoch 397/1000
52s 104ms/step - loss: 0.5877 - acc: 0.8793 - val_loss: 0.6340 - val_acc: 0.8660
Epoch 398/1000
52s 105ms/step - loss: 0.5899 - acc: 0.8787 - val_loss: 0.5990 - val_acc: 0.8802
Epoch 399/1000
52s 105ms/step - loss: 0.5899 - acc: 0.8802 - val_loss: 0.6270 - val_acc: 0.8694
Epoch 400/1000
53s 105ms/step - loss: 0.5942 - acc: 0.8774 - val_loss: 0.6336 - val_acc: 0.8639
Epoch 401/1000
lr changed to 0.010000000149011612
52s 105ms/step - loss: 0.5041 - acc: 0.9091 - val_loss: 0.5454 - val_acc: 0.8967
Epoch 402/1000
53s 105ms/step - loss: 0.4483 - acc: 0.9265 - val_loss: 0.5314 - val_acc: 0.8978
Epoch 403/1000
53s 105ms/step - loss: 0.4280 - acc: 0.9327 - val_loss: 0.5212 - val_acc: 0.9015
Epoch 404/1000
52s 105ms/step - loss: 0.4145 - acc: 0.9351 - val_loss: 0.5156 - val_acc: 0.9033
Epoch 405/1000
53s 105ms/step - loss: 0.4053 - acc: 0.9367 - val_loss: 0.5152 - val_acc: 0.9042
Epoch 406/1000
53s 105ms/step - loss: 0.3948 - acc: 0.9398 - val_loss: 0.5083 - val_acc: 0.9021
Epoch 407/1000
53s 105ms/step - loss: 0.3880 - acc: 0.9389 - val_loss: 0.5085 - val_acc: 0.9031
Epoch 408/1000
53s 105ms/step - loss: 0.3771 - acc: 0.9433 - val_loss: 0.5094 - val_acc: 0.8987
Epoch 409/1000
52s 105ms/step - loss: 0.3694 - acc: 0.9441 - val_loss: 0.5006 - val_acc: 0.9039
Epoch 410/1000
52s 105ms/step - loss: 0.3669 - acc: 0.9432 - val_loss: 0.4927 - val_acc: 0.9054
Epoch 411/1000
52s 105ms/step - loss: 0.3564 - acc: 0.9466 - val_loss: 0.4973 - val_acc: 0.9034
Epoch 412/1000
52s 104ms/step - loss: 0.3508 - acc: 0.9476 - val_loss: 0.4929 - val_acc: 0.9032
Epoch 413/1000
52s 104ms/step - loss: 0.3464 - acc: 0.9468 - val_loss: 0.4919 - val_acc: 0.9024
Epoch 414/1000
52s 105ms/step - loss: 0.3394 - acc: 0.9487 - val_loss: 0.4842 - val_acc: 0.9032
Epoch 415/1000
52s 105ms/step - loss: 0.3329 - acc: 0.9498 - val_loss: 0.4827 - val_acc: 0.9059
Epoch 416/1000
53s 105ms/step - loss: 0.3317 - acc: 0.9494 - val_loss: 0.4873 - val_acc: 0.9024
Epoch 417/1000
52s 105ms/step - loss: 0.3281 - acc: 0.9485 - val_loss: 0.4812 - val_acc: 0.9074
Epoch 418/1000
53s 105ms/step - loss: 0.3205 - acc: 0.9514 - val_loss: 0.4796 - val_acc: 0.9038
Epoch 419/1000
52s 105ms/step - loss: 0.3207 - acc: 0.9497 - val_loss: 0.4775 - val_acc: 0.9039
Epoch 420/1000
52s 104ms/step - loss: 0.3140 - acc: 0.9518 - val_loss: 0.4753 - val_acc: 0.9052
Epoch 421/1000
53s 105ms/step - loss: 0.3094 - acc: 0.9520 - val_loss: 0.4840 - val_acc: 0.9020
Epoch 422/1000
52s 104ms/step - loss: 0.3091 - acc: 0.9513 - val_loss: 0.4684 - val_acc: 0.9064
Epoch 423/1000
52s 105ms/step - loss: 0.3055 - acc: 0.9515 - val_loss: 0.4629 - val_acc: 0.9065
Epoch 424/1000
52s 104ms/step - loss: 0.2973 - acc: 0.9526 - val_loss: 0.4696 - val_acc: 0.9044
Epoch 425/1000
53s 105ms/step - loss: 0.2965 - acc: 0.9529 - val_loss: 0.4659 - val_acc: 0.9030
Epoch 426/1000
52s 105ms/step - loss: 0.2955 - acc: 0.9531 - val_loss: 0.4584 - val_acc: 0.9067
Epoch 427/1000
52s 105ms/step - loss: 0.2948 - acc: 0.9519 - val_loss: 0.4514 - val_acc: 0.9071
Epoch 428/1000
53s 105ms/step - loss: 0.2871 - acc: 0.9542 - val_loss: 0.4584 - val_acc: 0.9081
Epoch 429/1000
52s 105ms/step - loss: 0.2849 - acc: 0.9541 - val_loss: 0.4684 - val_acc: 0.9037
Epoch 430/1000
53s 105ms/step - loss: 0.2832 - acc: 0.9537 - val_loss: 0.4588 - val_acc: 0.9049
Epoch 431/1000
53s 106ms/step - loss: 0.2785 - acc: 0.9553 - val_loss: 0.4595 - val_acc: 0.9063
Epoch 432/1000
53s 106ms/step - loss: 0.2777 - acc: 0.9546 - val_loss: 0.4516 - val_acc: 0.9059
Epoch 433/1000
53s 106ms/step - loss: 0.2788 - acc: 0.9528 - val_loss: 0.4521 - val_acc: 0.9031
Epoch 434/1000
53s 107ms/step - loss: 0.2743 - acc: 0.9555 - val_loss: 0.4679 - val_acc: 0.9015
Epoch 435/1000
53s 106ms/step - loss: 0.2739 - acc: 0.9540 - val_loss: 0.4512 - val_acc: 0.9053
Epoch 436/1000
53s 105ms/step - loss: 0.2701 - acc: 0.9555 - val_loss: 0.4622 - val_acc: 0.9034
Epoch 437/1000
53s 105ms/step - loss: 0.2697 - acc: 0.9547 - val_loss: 0.4585 - val_acc: 0.9015
Epoch 438/1000
53s 105ms/step - loss: 0.2663 - acc: 0.9552 - val_loss: 0.4556 - val_acc: 0.9027
Epoch 439/1000
53s 106ms/step - loss: 0.2641 - acc: 0.9553 - val_loss: 0.4538 - val_acc: 0.9023
Epoch 440/1000
53s 106ms/step - loss: 0.2649 - acc: 0.9548 - val_loss: 0.4458 - val_acc: 0.9047
Epoch 441/1000
53s 106ms/step - loss: 0.2601 - acc: 0.9561 - val_loss: 0.4499 - val_acc: 0.9032
Epoch 442/1000
53s 106ms/step - loss: 0.2610 - acc: 0.9549 - val_loss: 0.4533 - val_acc: 0.9042
Epoch 443/1000
53s 105ms/step - loss: 0.2608 - acc: 0.9543 - val_loss: 0.4542 - val_acc: 0.9054
Epoch 444/1000
53s 105ms/step - loss: 0.2606 - acc: 0.9547 - val_loss: 0.4585 - val_acc: 0.9003
Epoch 445/1000
53s 105ms/step - loss: 0.2567 - acc: 0.9554 - val_loss: 0.4549 - val_acc: 0.8993
Epoch 446/1000
53s 105ms/step - loss: 0.2551 - acc: 0.9555 - val_loss: 0.4653 - val_acc: 0.8983
Epoch 447/1000
53s 106ms/step - loss: 0.2554 - acc: 0.9558 - val_loss: 0.4561 - val_acc: 0.9000
Epoch 448/1000
53s 106ms/step - loss: 0.2565 - acc: 0.9540 - val_loss: 0.4562 - val_acc: 0.9002
Epoch 449/1000
53s 106ms/step - loss: 0.2528 - acc: 0.9551 - val_loss: 0.4515 - val_acc: 0.8996
Epoch 450/1000
53s 106ms/step - loss: 0.2545 - acc: 0.9545 - val_loss: 0.4475 - val_acc: 0.9015
Epoch 451/1000
53s 106ms/step - loss: 0.2554 - acc: 0.9543 - val_loss: 0.4460 - val_acc: 0.9059
Epoch 452/1000
53s 105ms/step - loss: 0.2506 - acc: 0.9545 - val_loss: 0.4526 - val_acc: 0.8997
Epoch 453/1000
53s 106ms/step - loss: 0.2517 - acc: 0.9542 - val_loss: 0.4442 - val_acc: 0.8999
Epoch 454/1000
53s 105ms/step - loss: 0.2517 - acc: 0.9543 - val_loss: 0.4523 - val_acc: 0.9001
Epoch 455/1000
53s 106ms/step - loss: 0.2458 - acc: 0.9560 - val_loss: 0.4329 - val_acc: 0.9029
Epoch 456/1000
53s 106ms/step - loss: 0.2495 - acc: 0.9546 - val_loss: 0.4407 - val_acc: 0.9026
Epoch 457/1000
53s 105ms/step - loss: 0.2451 - acc: 0.9553 - val_loss: 0.4378 - val_acc: 0.9025
Epoch 458/1000
53s 106ms/step - loss: 0.2472 - acc: 0.9543 - val_loss: 0.4403 - val_acc: 0.9026
Epoch 459/1000
53s 106ms/step - loss: 0.2461 - acc: 0.9550 - val_loss: 0.4359 - val_acc: 0.9041
Epoch 460/1000
53s 105ms/step - loss: 0.2475 - acc: 0.9531 - val_loss: 0.4423 - val_acc: 0.9021
Epoch 461/1000
53s 106ms/step - loss: 0.2450 - acc: 0.9537 - val_loss: 0.4392 - val_acc: 0.9019
Epoch 462/1000
53s 105ms/step - loss: 0.2452 - acc: 0.9543 - val_loss: 0.4408 - val_acc: 0.8996
Epoch 463/1000
53s 106ms/step - loss: 0.2441 - acc: 0.9545 - val_loss: 0.4495 - val_acc: 0.8999
Epoch 464/1000
53s 106ms/step - loss: 0.2439 - acc: 0.9539 - val_loss: 0.4413 - val_acc: 0.9029
Epoch 465/1000
53s 105ms/step - loss: 0.2406 - acc: 0.9555 - val_loss: 0.4503 - val_acc: 0.8977
Epoch 466/1000
53s 106ms/step - loss: 0.2445 - acc: 0.9541 - val_loss: 0.4388 - val_acc: 0.9025
Epoch 467/1000
53s 105ms/step - loss: 0.2402 - acc: 0.9547 - val_loss: 0.4306 - val_acc: 0.9027
Epoch 468/1000
53s 105ms/step - loss: 0.2402 - acc: 0.9565 - val_loss: 0.4391 - val_acc: 0.9040
Epoch 469/1000
53s 105ms/step - loss: 0.2419 - acc: 0.9546 - val_loss: 0.4442 - val_acc: 0.8987
Epoch 470/1000
53s 105ms/step - loss: 0.2409 - acc: 0.9537 - val_loss: 0.4414 - val_acc: 0.9007
Epoch 471/1000
53s 106ms/step - loss: 0.2446 - acc: 0.9527 - val_loss: 0.4478 - val_acc: 0.8971
Epoch 472/1000
53s 106ms/step - loss: 0.2374 - acc: 0.9553 - val_loss: 0.4522 - val_acc: 0.8967
Epoch 473/1000
53s 106ms/step - loss: 0.2418 - acc: 0.9528 - val_loss: 0.4440 - val_acc: 0.8983
Epoch 474/1000
53s 106ms/step - loss: 0.2394 - acc: 0.9552 - val_loss: 0.4418 - val_acc: 0.9000
Epoch 475/1000
52s 105ms/step - loss: 0.2403 - acc: 0.9539 - val_loss: 0.4379 - val_acc: 0.9031
Epoch 476/1000
52s 105ms/step - loss: 0.2379 - acc: 0.9543 - val_loss: 0.4358 - val_acc: 0.8999
Epoch 477/1000
52s 105ms/step - loss: 0.2409 - acc: 0.9529 - val_loss: 0.4433 - val_acc: 0.9006
Epoch 478/1000
53s 106ms/step - loss: 0.2389 - acc: 0.9533 - val_loss: 0.4410 - val_acc: 0.9009
Epoch 479/1000
53s 105ms/step - loss: 0.2385 - acc: 0.9544 - val_loss: 0.4427 - val_acc: 0.9007
Epoch 480/1000
53s 106ms/step - loss: 0.2399 - acc: 0.9538 - val_loss: 0.4248 - val_acc: 0.9018
Epoch 481/1000
53s 106ms/step - loss: 0.2367 - acc: 0.9540 - val_loss: 0.4425 - val_acc: 0.9005
Epoch 482/1000
53s 106ms/step - loss: 0.2376 - acc: 0.9544 - val_loss: 0.4424 - val_acc: 0.9010
Epoch 483/1000
53s 105ms/step - loss: 0.2400 - acc: 0.9537 - val_loss: 0.4414 - val_acc: 0.8987
Epoch 484/1000
52s 105ms/step - loss: 0.2367 - acc: 0.9539 - val_loss: 0.4423 - val_acc: 0.8994
Epoch 485/1000
53s 105ms/step - loss: 0.2356 - acc: 0.9547 - val_loss: 0.4297 - val_acc: 0.9013
Epoch 486/1000
53s 105ms/step - loss: 0.2378 - acc: 0.9543 - val_loss: 0.4286 - val_acc: 0.9039
Epoch 487/1000
53s 107ms/step - loss: 0.2347 - acc: 0.9551 - val_loss: 0.4304 - val_acc: 0.9018
Epoch 488/1000
87/500 [====>.........................] - ETA: 42s - loss: 0.2317 - acc: 0.9568
Traceback (most recent call last):

  File "C:\Users\hitwh\.spyder-py3\temp.py", line 148, in <module>
    verbose=1, callbacks=[reduce_lr], workers=4)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\keras\legacy\interfaces.py", line 91, in wrapper
    return func(*args, **kwargs)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\keras\engine\training.py", line 1415, in fit_generator
    initial_epoch=initial_epoch)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\keras\engine\training_generator.py", line 213, in fit_generator
    class_weight=class_weight)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\keras\engine\training.py", line 1215, in train_on_batch
    outputs = self.train_function(ins)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\keras\backend\tensorflow_backend.py", line 2666, in __call__
    return self._call(inputs)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\keras\backend\tensorflow_backend.py", line 2636, in _call
    fetched = self._callable_fn(*array_vals)

  File "C:\Users\hitwh\Anaconda3\envs\Initial\lib\site-packages\tensorflow\python\client\session.py", line 1382, in __call__
    run_metadata_ptr)

KeyboardInterrupt
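A console log in this format can be summarised programmatically rather than by eye. The following is a small sketch, assuming the log has been saved as text; `best_val_acc` and `EPOCH_RE` are hypothetical names, and the three sample records are copied from the output above. It pairs each "Epoch N/1000" marker with the next `val_acc` value, so it assumes every epoch in the text that is followed by another epoch did finish.

```python
import re

# Matches "Epoch N/1000 ... val_acc: X"; DOTALL lets the match span the line
# break between the "Epoch" line and the metrics line.
EPOCH_RE = re.compile(r"Epoch (\d+)/\d+.*?val_acc: (\d+\.\d+)", re.DOTALL)

def best_val_acc(log_text):
    """Return (epoch, val_acc) for the highest val_acc found in the log text."""
    records = [(int(epoch), float(acc)) for epoch, acc in EPOCH_RE.findall(log_text)]
    return max(records, key=lambda record: record[1])

sample_log = """\
Epoch 427/1000
52s 105ms/step - loss: 0.2948 - acc: 0.9519 - val_loss: 0.4514 - val_acc: 0.9071
Epoch 428/1000
53s 105ms/step - loss: 0.2871 - acc: 0.9542 - val_loss: 0.4584 - val_acc: 0.9081
Epoch 429/1000
52s 105ms/step - loss: 0.2849 - acc: 0.9541 - val_loss: 0.4684 - val_acc: 0.9037
"""

print(best_val_acc(sample_log))  # (428, 0.9081)
```

An interrupted final epoch that has no `val_acc` entry simply produces no record, so it does not affect the result.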

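I accidentally pressed Ctrl+C and interrupted the program, so it did not finish. It was set to run for 1000 epochs but was stopped during epoch 488. Accuracy on the validation set (the Cifar10 test set in this script) had already reached about 90%: 90.18% at epoch 487, the last completed epoch, with a peak of 90.81% at epoch 428.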
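One common way to keep a stray Ctrl+C from discarding the rest of a script is to catch `KeyboardInterrupt` around the training loop and then carry on with evaluation. The sketch below shows the pattern with a stand-in training function; `run_epochs` and `fake_epoch` are hypothetical names, and nothing here is taken from the original script or from the Keras API.

```python
def run_epochs(train_one_epoch, num_epochs):
    """Call train_one_epoch(epoch) for each epoch and stop cleanly on Ctrl+C.

    Returns the number of epochs that finished, so the caller can still
    evaluate or save the model after an interruption.
    """
    completed = 0
    try:
        for epoch in range(num_epochs):
            train_one_epoch(epoch)
            completed += 1
    except KeyboardInterrupt:
        print("Interrupted during epoch {}; continuing with evaluation.".format(completed + 1))
    return completed

def fake_epoch(epoch):
    # Stand-in for real training; simulates Ctrl+C during the fourth epoch.
    if epoch == 3:
        raise KeyboardInterrupt

finished = run_epochs(fake_epoch, num_epochs=10)
print(finished)  # 3
```

With this structure the code after the loop, such as the final train and test evaluation, would still run on the partially trained model.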

Minghang Zhao, Shisheng Zhong, Xuyun Fu, Baoping Tang, Shaojiang Dong, Michael Pecht, Deep Residual Networks with Adaptively Parametric Rectifier Linear Units for Fault Diagnosis, IEEE Transactions on Industrial Electronics, 2020, DOI: 10.1109/TIE.2020.2972458
