ZooKeeper Learning Path (9): Building a Hadoop HA Cluster with ZooKeeper

Overview of How Hadoop HA Works

Why does Hadoop need an HA mechanism?

HA: High Availability

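Before Hadoop 2.0, the NameNode was a single point of failure (SPOF) in an HDFS cluster. In a cluster with only one NameNode, if the NameNode machine fails (for example, it crashes or is taken down for a software or hardware upgrade), the whole cluster is unusable until the NameNode is restarted.

How is this solved?

The HDFS HA feature addresses the problem by configuring two NameNodes, one Active and one Standby, so that the cluster has a hot standby for the NameNode. If a failure occurs, such as a machine crash or a machine that needs upgrade maintenance, the NameNode role can be switched quickly to the other machine.

In a typical HDFS HA cluster, two separate machines are configured as NameNodes. At any point in time only one NameNode is in the Active state and the other is in the Standby state. The Active NameNode handles all client operations in the cluster, while the Standby NameNode only acts as a backup, ready to take over quickly if the Active NameNode fails.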

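To keep the metadata of the Active and Standby NameNodes in sync (in practice, the edit log), a shared storage system is required; this can be NFS or QJM (Quorum Journal Manager). ZooKeeper itself does not store the edit log; in this setup it coordinates automatic failover. The Active NameNode writes edits to the shared storage, and the Standby watches it. Whenever new edits appear, the Standby reads them and applies them to its own in-memory state, so that its state stays essentially consistent with the Active NameNode and it can switch to Active quickly in an emergency. Fast failover also requires the Standby to have up-to-date block information for the cluster, so each DataNode is configured with the addresses of both NameNodes and sends block reports and heartbeats to both.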
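When QJM is the shared storage, edit log writes must reach a majority of the JournalNodes, so the number of JournalNodes determines how many failures the edit log can survive. The arithmetic can be sketched as follows; the function names `jn_majority` and `jn_tolerated_failures` are made up for this illustration.

```shell
# Majority needed among n JournalNodes, and how many JournalNode
# failures that majority rule can tolerate.
# (Hypothetical helper names, used only in this sketch.)
jn_majority() { echo $(( $1 / 2 + 1 )); }
jn_tolerated_failures() { echo $(( ($1 - 1) / 2 )); }

for n in 3 5; do
  echo "JournalNodes=$n majority=$(jn_majority "$n") tolerated_failures=$(jn_tolerated_failures "$n")"
done
```

With the three JournalNodes configured later in this article (hadoop1, hadoop2, hadoop3), the edit log stays writable as long as any two of them are up.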

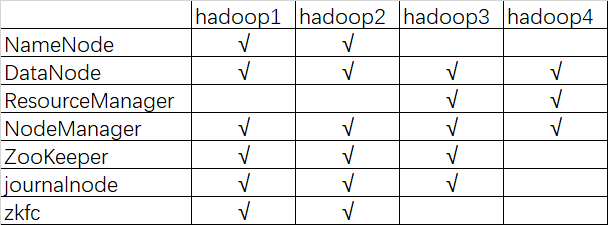

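Cluster planning

Description: a Hadoop HA cluster depends on ZooKeeper, so three of the hosts are used as the ZooKeeper cluster. Four hosts are prepared in total: hadoop1, hadoop2, hadoop3, and hadoop4. hadoop1 and hadoop2 run the active/standby NameNodes, and hadoop3 and hadoop4 run the active/standby ResourceManagers.

Four machines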

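Preparing the cluster servers

1. Change the hostname

2. Change the IP address

3. Add hostname-to-IP mappings

4. Add a regular user named hadoop and grant it sudo privileges

5. Set the system run level

6. Disable the firewall and SELinux

7. Install the JDK

There are two ways to prepare the machines:

1. Configure every node individually. This is tedious; in a production environment you can write a script for it.

2. In a virtual machine environment, complete the seven steps above on one machine and then clone it.

3. After that, configure passwordless SSH login and time synchronization for the cluster.

8. Configure passwordless SSH login

9. Synchronize the server clocks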

For the detailed steps, see the ordinary distributed cluster setup guide: http://www.cnblogs.com/qingyunzong/p/8496127.html
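Step 8 can be sketched as follows. This is only an illustration under a few assumptions: the key is an RSA key without a passphrase at the default path (the same `/home/hadoop/.ssh/id_rsa` that the sshfence configuration later in this article points to), and the `run` and `setup_ssh` helpers are hypothetical names. By default the sketch only prints the commands; set `DRY_RUN=0` to execute them.

```shell
# Hosts from the cluster plan in this article.
HOSTS="hadoop1 hadoop2 hadoop3 hadoop4"

# Print commands by default; set DRY_RUN=0 to really execute them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

setup_ssh() {
  # Create the key pair once (assumption: RSA, empty passphrase, default path).
  [ -f "$HOME/.ssh/id_rsa" ] || run ssh-keygen -t rsa -N "" -f "$HOME/.ssh/id_rsa"
  # Install the public key for the hadoop user on every node, including this one.
  for h in $HOSTS; do
    run ssh-copy-id "hadoop@$h"
  done
}

setup_ssh
```

Running it on each node as the hadoop user gives every node passwordless access to every other node, which both the start scripts and the sshfence method rely on.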

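Cluster installation

1. Install the ZooKeeper cluster

For the installation steps, see the earlier article: http://www.cnblogs.com/qingyunzong/p/8619184.html

2. Install the Hadoop cluster

(1) Get the installation package

Download it from the official site or a mirror:

http://mirrors.hust.edu.cn/apache/

(2) Upload and extract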

    [hadoop@hadoop1 ~]$ ls
    apps  hadoop-2.7.5-centos-6.7.tar.gz  movie2.jar  users.dat  zookeeper.out
    data  log  output2  zookeeper-3.4.10.tar.gz
    [hadoop@hadoop1 ~]$ tar -zxvf hadoop-2.7.5-centos-6.7.tar.gz -C apps/

(3) Edit the configuration files

Configuration directory: /home/hadoop/apps/hadoop-2.7.5/etc/hadoop

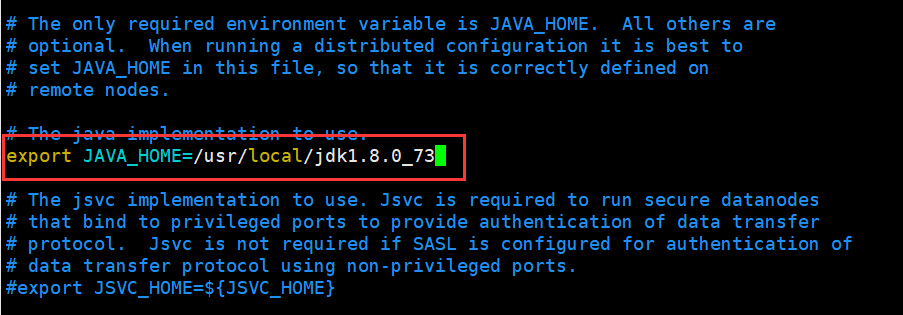

Edit hadoop-env.sh

    [hadoop@hadoop1 ~]$ cd apps/hadoop-2.7.5/etc/hadoop/
    [hadoop@hadoop1 hadoop]$ echo $JAVA_HOME
    /usr/local/jdk1.8.0_73
    [hadoop@hadoop1 hadoop]$ vi hadoop-env.sh
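The path printed by `echo $JAVA_HOME` is what goes into hadoop-env.sh. A minimal sketch of that edit, done with sed on a scratch copy rather than in vi; it assumes the file contains a line beginning with `export JAVA_HOME=`, as the stock Hadoop 2.x file does.

```shell
# Work on a scratch copy so the sketch is safe to run anywhere.
conf=$(mktemp)
printf '%s\n' '# The java implementation to use.' 'export JAVA_HOME=${JAVA_HOME}' > "$conf"

# Replace the JAVA_HOME line with the explicit JDK path from this article.
jdk=/usr/local/jdk1.8.0_73
sed "s|^export JAVA_HOME=.*|export JAVA_HOME=$jdk|" "$conf" > "$conf.new" && mv "$conf.new" "$conf"

# Show the resulting line.
grep '^export JAVA_HOME=' "$conf"
```

Writing the path out explicitly is commonly recommended because daemons launched over SSH may not inherit the environment of your login shell.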

Edit core-site.xml

[hadoop@hadoop1 hadoop]$ vi core-site.xml

    <configuration>
      <!-- Set the HDFS nameservice to myha01 -->
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://myha01/</value>
      </property>

      <!-- Hadoop temporary directory -->
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/home/hadoop/data/hadoopdata/</value>
      </property>

      <!-- ZooKeeper addresses -->
      <property>
        <name>ha.zookeeper.quorum</name>
        <value>hadoop1:2181,hadoop2:2181,hadoop3:2181,hadoop4:2181</value>
      </property>

      <!-- Timeout for Hadoop's connection to ZooKeeper -->
      <property>
        <name>ha.zookeeper.session-timeout.ms</name>
        <value>1000</value>
        <description>ms</description>
      </property>
    </configuration>

Edit hdfs-site.xml

[hadoop@hadoop1 hadoop]$ vi hdfs-site.xml

    <configuration>

      <!-- Replication factor -->
      <property>
        <name>dfs.replication</name>
        <value>2</value>
      </property>

      <!-- Working (data storage) directories of the NameNode and DataNode -->
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>/home/hadoop/data/hadoopdata/dfs/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>/home/hadoop/data/hadoopdata/dfs/data</value>
      </property>

      <!-- Enable WebHDFS -->
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>

      <!-- Set the HDFS nameservice to myha01; it must match the value in core-site.xml.
           dfs.ha.namenodes.[nameservice id] assigns a unique identifier to each NameNode in the nameservice.
           Configure a comma-separated list of NameNode IDs; DataNodes use it to identify all the NameNodes.
           For example, with "myha01" as the nameservice ID, "nn1" and "nn2" are used as the NameNode identifiers.
      -->
      <property>
        <name>dfs.nameservices</name>
        <value>myha01</value>
      </property>

      <!-- myha01 has two NameNodes: nn1 and nn2 -->
      <property>
        <name>dfs.ha.namenodes.myha01</name>
        <value>nn1,nn2</value>
      </property>

      <!-- RPC address of nn1 -->
      <property>
        <name>dfs.namenode.rpc-address.myha01.nn1</name>
        <value>hadoop1:9000</value>
      </property>

      <!-- HTTP address of nn1 -->
      <property>
        <name>dfs.namenode.http-address.myha01.nn1</name>
        <value>hadoop1:50070</value>
      </property>

      <!-- RPC address of nn2 -->
      <property>
        <name>dfs.namenode.rpc-address.myha01.nn2</name>
        <value>hadoop2:9000</value>
      </property>

      <!-- HTTP address of nn2 -->
      <property>
        <name>dfs.namenode.http-address.myha01.nn2</name>
        <value>hadoop2:50070</value>
      </property>

      <!-- Shared storage location for the NameNode edits, i.e. the JournalNode list.
           URL format: qjournal://host1:port1;host2:port2;host3:port3/journalId
           Using the nameservice as the journalId is recommended; the default port is 8485 -->
      <property>
        <name>dfs.namenode.shared.edits.dir</name>
        <value>qjournal://hadoop1:8485;hadoop2:8485;hadoop3:8485/myha01</value>
      </property>

      <!-- Local disk directory where the JournalNode stores its data -->
      <property>
        <name>dfs.journalnode.edits.dir</name>
        <value>/home/hadoop/data/journaldata</value>
      </property>

      <!-- Enable automatic NameNode failover -->
      <property>
        <name>dfs.ha.automatic-failover.enabled</name>
        <value>true</value>
      </property>

      <!-- Implementation used for client-side automatic failover -->
      <property>
        <name>dfs.client.failover.proxy.provider.myha01</name>
        <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
      </property>

      <!-- Fencing methods; separate multiple methods with newlines, one method per line -->
      <property>
        <name>dfs.ha.fencing.methods</name>
        <value>
          sshfence
          shell(/bin/true)
        </value>
      </property>

      <!-- The sshfence method requires passwordless SSH -->
      <property>
        <name>dfs.ha.fencing.ssh.private-key-files</name>
        <value>/home/hadoop/.ssh/id_rsa</value>
      </property>

      <!-- Connection timeout for the sshfence method -->
      <property>
        <name>dfs.ha.fencing.ssh.connect-timeout</name>
        <value>30000</value>
      </property>

      <property>
        <name>ha.failover-controller.cli-check.rpc-timeout.ms</name>
        <value>60000</value>
      </property>
    </configuration>
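The comment in hdfs-site.xml requires `dfs.nameservices` to match the nameservice used in `fs.defaultFS` in core-site.xml. A rough way to check this from the shell is sketched below. The `get_prop` helper is a hypothetical name, and it is not a real XML parser: it assumes each `<value>` sits on the line directly after its `<name>`, as in the listings in this article. The check runs against scratch copies of the two relevant properties.

```shell
# Print the <value> that follows <name>KEY</name> in a Hadoop *-site.xml file.
# Assumption: <name> and <value> are on consecutive lines.
get_prop() {
  key=$(printf '%s' "$2" | sed 's/\./\\./g')   # escape dots for the regex
  sed -n "/<name>$key<\/name>/{n;s/.*<value>\(.*\)<\/value>.*/\1/p;}" "$1"
}

# Scratch copies holding only the two properties being compared.
dir=$(mktemp -d)
cat > "$dir/core-site.xml" <<'EOF'
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://myha01/</value>
  </property>
</configuration>
EOF
cat > "$dir/hdfs-site.xml" <<'EOF'
<configuration>
  <property>
    <name>dfs.nameservices</name>
    <value>myha01</value>
  </property>
</configuration>
EOF

fs=$(get_prop "$dir/core-site.xml" fs.defaultFS)
ns=$(get_prop "$dir/hdfs-site.xml" dfs.nameservices)

# Strip the scheme and the trailing slash: hdfs://myha01/ -> myha01
fs_ns=${fs#hdfs://}
fs_ns=${fs_ns%/}

if [ "$fs_ns" = "$ns" ]; then
  echo "OK: nameservice '$ns' matches in both files"
else
  echo "MISMATCH: core-site.xml uses '$fs_ns', hdfs-site.xml uses '$ns'"
fi
```

To run the same check against the real files, point `get_prop` at /home/hadoop/apps/hadoop-2.7.5/etc/hadoop/core-site.xml and hdfs-site.xml instead of the scratch copies.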

Edit mapred-site.xml

    [hadoop@hadoop1 hadoop]$ cp mapred-site.xml.template mapred-site.xml
    [hadoop@hadoop1 hadoop]$ vi mapred-site.xml

    <configuration>
      <!-- Run MapReduce on YARN -->
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>

      <!-- Address of the MapReduce JobHistory server -->
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>hadoop1:10020</value>
      </property>

      <!-- Web address of the JobHistory server -->
      <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>hadoop1:19888</value>
      </property>
    </configuration>

Edit yarn-site.xml

[hadoop@hadoop1 hadoop]$ vi yarn-site.xml

    <configuration>
      <!-- Enable ResourceManager high availability -->
      <property>
        <name>yarn.resourcemanager.ha.enabled</name>
        <value>true</value>
      </property>

      <!-- Cluster ID of the ResourceManagers -->
      <property>
        <name>yarn.resourcemanager.cluster-id</name>
        <value>yrc</value>
      </property>

      <!-- Logical names of the ResourceManagers -->
      <property>
        <name>yarn.resourcemanager.ha.rm-ids</name>
        <value>rm1,rm2</value>
      </property>

      <!-- Host of each ResourceManager -->
      <property>
        <name>yarn.resourcemanager.hostname.rm1</name>
        <value>hadoop3</value>
      </property>

      <property>
        <name>yarn.resourcemanager.hostname.rm2</name>
        <value>hadoop4</value>
      </property>

      <!-- ZooKeeper cluster addresses -->
      <property>
        <name>yarn.resourcemanager.zk-address</name>
        <value>hadoop1:2181,hadoop2:2181,hadoop3:2181</value>
      </property>

      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>

      <property>
        <name>yarn.log-aggregation-enable</name>
        <value>true</value>
      </property>

      <property>
        <name>yarn.log-aggregation.retain-seconds</name>
        <value>86400</value>
      </property>

      <!-- Enable automatic recovery -->
      <property>
        <name>yarn.resourcemanager.recovery.enabled</name>
        <value>true</value>
      </property>

      <!-- Store the ResourceManager state in the ZooKeeper cluster -->
      <property>
        <name>yarn.resourcemanager.store.class</name>
        <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
      </property>
    </configuration>

Edit slaves

[hadoop@hadoop1 hadoop]$ vi slaves

hadoop1

hadoop2

hadoop3

hadoop4

(4) Distribute the Hadoop installation to the other cluster nodes

Important: the Hadoop installation directory must be the same on every server, and the configuration inside it must be identical as well.

    [hadoop@hadoop1 apps]$ scp -r hadoop-2.7.5/ hadoop2:$PWD
    [hadoop@hadoop1 apps]$ scp -r hadoop-2.7.5/ hadoop3:$PWD
    [hadoop@hadoop1 apps]$ scp -r hadoop-2.7.5/ hadoop4:$PWD
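Because every node must carry identical configuration, it can help to compare a checksum of the configuration files after copying. The sketch below shows the idea on two scratch directories that stand in for two nodes; `conf_fingerprint` is a hypothetical helper name, and `cksum` is used only because it is available on most systems.

```shell
# Checksum over all *.xml files in a configuration directory.
# The shell expands the glob in sorted order, so the result is stable.
conf_fingerprint() {
  ( cd "$1" && cat ./*.xml | cksum )
}

# Two scratch directories standing in for the config directories of two nodes.
a=$(mktemp -d)
b=$(mktemp -d)
echo '<configuration/>' > "$a/core-site.xml"
cp "$a/core-site.xml" "$b/core-site.xml"

if [ "$(conf_fingerprint "$a")" = "$(conf_fingerprint "$b")" ]; then
  echo "configs match"
else
  echo "configs differ"
fi
```

On the real cluster the same pipeline can be run remotely, for example `ssh hadoop2 'cat /home/hadoop/apps/hadoop-2.7.5/etc/hadoop/*.xml | cksum'`, and compared with the local result.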

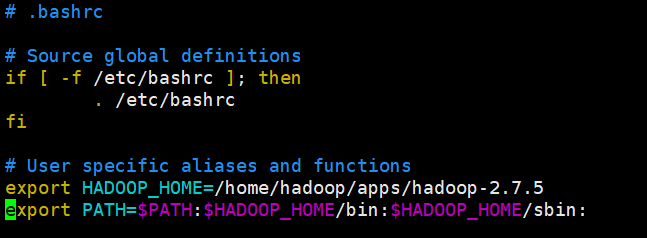

(5) Configure the Hadoop environment variables

Important:

1. If you install as root, edit /etc/profile (system-wide variables).

2. If you install as a regular user, edit ~/.bashrc (per-user variables).

I installed as the hadoop user.

[hadoop@hadoop1 ~]$ vi .bashrc

    export HADOOP_HOME=/home/hadoop/apps/hadoop-2.7.5
    export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Apply the environment variables

[hadoop@hadoop1 bin]$ source ~/.bashrc

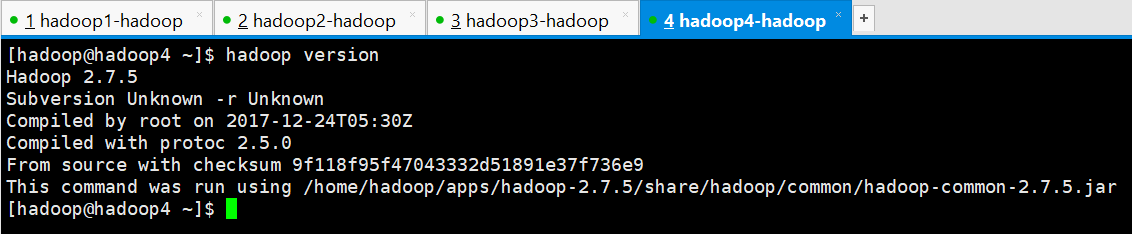

(6) Check the Hadoop version

    [hadoop@hadoop4 ~]$ hadoop version
    Hadoop 2.7.5
    Subversion Unknown -r Unknown
    Compiled by root on 2017-12-24T05:30Z
    Compiled with protoc 2.5.0
    From source with checksum 9f118f95f47043332d51891e37f736e9
    This command was run using /home/hadoop/apps/hadoop-2.7.5/share/hadoop/common/hadoop-common-2.7.5.jar
    [hadoop@hadoop4 ~]$

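Initializing the Hadoop HA cluster

Important: follow the steps below in order, one at a time.

1. Start ZooKeeper

Start the ZooKeeper service on all four servers (as the status output shows, hadoop4 joins as an observer).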

hadoop1

    [hadoop@hadoop1 conf]$ zkServer.sh start
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [hadoop@hadoop1 conf]$ jps
    2674 Jps
    2647 QuorumPeerMain
    [hadoop@hadoop1 conf]$ zkServer.sh status
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Mode: follower
    [hadoop@hadoop1 conf]$

hadoop2

    [hadoop@hadoop2 conf]$ zkServer.sh start
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [hadoop@hadoop2 conf]$ jps
    2592 QuorumPeerMain
    2619 Jps
    [hadoop@hadoop2 conf]$ zkServer.sh status
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Mode: follower
    [hadoop@hadoop2 conf]$

hadoop3

    [hadoop@hadoop3 conf]$ zkServer.sh start
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [hadoop@hadoop3 conf]$ jps
    16612 QuorumPeerMain
    16647 Jps
    [hadoop@hadoop3 conf]$ zkServer.sh status
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Mode: leader
    [hadoop@hadoop3 conf]$

hadoop4

    [hadoop@hadoop4 conf]$ zkServer.sh start
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [hadoop@hadoop4 conf]$ jps
    3596 Jps
    3567 QuorumPeerMain
    [hadoop@hadoop4 conf]$ zkServer.sh status
    ZooKeeper JMX enabled by default
    Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
    Mode: observer
    [hadoop@hadoop4 conf]$
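The line that matters in each status output is `Mode:`; a healthy cluster reports exactly one leader, with the remaining servers as followers or observers. A small sketch for pulling that value out of the status output; `zk_mode` is a hypothetical helper name, and the sample text is the hadoop3 output from this article.

```shell
# Extract the role from `zkServer.sh status` output read on stdin.
zk_mode() { sed -n 's/^Mode: //p'; }

# Sample status output for hadoop3, as recorded in this article.
sample='ZooKeeper JMX enabled by default
Using config: /home/hadoop/apps/zookeeper-3.4.10/bin/../conf/zoo.cfg
Mode: leader'

echo "hadoop3 is a $(printf '%s\n' "$sample" | zk_mode)"
```

On a live node the same filter can be fed directly, for example `zkServer.sh status 2>&1 | zk_mode`.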

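2. Start the JournalNode process on each node you configured as a JournalNode

Following the plan above, I start it on hadoop1, hadoop2, and hadoop3 with the following command: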

hadoop1

    [hadoop@hadoop1 conf]$ hadoop-daemon.sh start journalnode
    starting journalnode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-journalnode-hadoop1.out
    [hadoop@hadoop1 conf]$ jps
    2739 JournalNode
    2788 Jps
    2647 QuorumPeerMain
    [hadoop@hadoop1 conf]$

hadoop2

    [hadoop@hadoop2 conf]$ hadoop-daemon.sh start journalnode
    starting journalnode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-journalnode-hadoop2.out
    [hadoop@hadoop2 conf]$ jps
    2592 QuorumPeerMain
    3049 JournalNode
    3102 Jps
    [hadoop@hadoop2 conf]$

hadoop3

    [hadoop@hadoop3 conf]$ hadoop-daemon.sh start journalnode
    starting journalnode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-journalnode-hadoop3.out
    [hadoop@hadoop3 conf]$ jps
    16612 QuorumPeerMain
    16712 JournalNode
    16766 Jps
    [hadoop@hadoop3 conf]$
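Before formatting the NameNode it is worth confirming that a JournalNode process really appears in `jps` on each of the three hosts. A sketch of that check; `has_proc` is a hypothetical helper name, and the sample listing is the hadoop1 output from this article.

```shell
# Succeeds if the jps listing on stdin contains a process with the given name.
has_proc() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# jps output for hadoop1, as recorded in this article.
jps_out='2739 JournalNode
2788 Jps
2647 QuorumPeerMain'

if printf '%s\n' "$jps_out" | has_proc JournalNode; then
  echo "JournalNode is running"
else
  echo "JournalNode is NOT running"
fi
```

On a live node, `jps | has_proc JournalNode` performs the same check.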

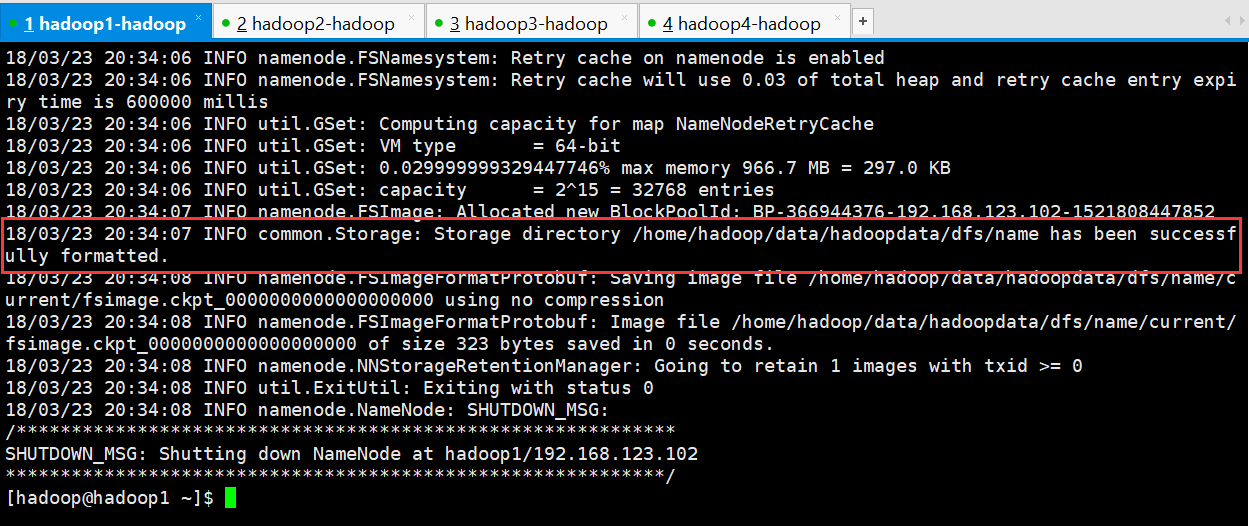

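3. Format the NameNode

First pick one NameNode (hadoop1) and format it. The command output below notes that running this through the hadoop script is deprecated in favor of the hdfs command.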

[hadoop@hadoop1 ~]$ hadoop namenode -format

    DEPRECATED: Use of this script to execute hdfs command is deprecated.
    Instead use the hdfs command for it.

    18/03/23 20:34:05 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG: host = hadoop1/192.168.123.102
    STARTUP_MSG: args = [-format]
    STARTUP_MSG: version = 2.7.5
    STARTUP_MSG: classpath = /home/hadoop/apps/hadoop-2.7.5/etc/hadoop:/home/hadoop/apps/hadoop-2.7.5/share/hadoop/common/lib/paranamer-2.3.jar:...
    STARTUP_MSG: build = Unknown -r Unknown; compiled by 'root' on 2017-12-24T05:30Z
    STARTUP_MSG: java = 1.8.0_73
    ************************************************************/
    18/03/23 20:34:05 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]
    18/03/23 20:34:05 INFO namenode.NameNode: createNameNode [-format]
    18/03/23 20:34:05 WARN common.Util: Path /home/hadoop/data/hadoopdata/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.
    18/03/23 20:34:05 WARN common.Util: Path /home/hadoop/data/hadoopdata/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.
    Formatting using clusterid: CID-5fa14c27-b311-4a35-8640-f32749a3c7c5
    18/03/23 20:34:06 INFO namenode.FSNamesystem: No KeyProvider found.
    18/03/23 20:34:06 INFO namenode.FSNamesystem: fsLock is fair: true
    18/03/23 20:34:06 INFO namenode.FSNamesystem: Detailed lock hold time metrics enabled: false
    18/03/23 20:34:06 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000
    18/03/23 20:34:06 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: The block deletion will start around 2018 三月 23 20:34:06
    18/03/23 20:34:06 INFO util.GSet: Computing capacity for map BlocksMap
    18/03/23 20:34:06 INFO util.GSet: VM type = 64-bit
    18/03/23 20:34:06 INFO util.GSet: 2.0% max memory 966.7 MB = 19.3 MB
    18/03/23 20:34:06 INFO util.GSet: capacity = 2^21 = 2097152 entries
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: defaultReplication = 2
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: maxReplication = 512
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: minReplication = 1
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: maxReplicationStreams = 2
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: encryptDataTransfer = false
    18/03/23 20:34:06 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000
    18/03/23 20:34:06 INFO namenode.FSNamesystem: fsOwner = hadoop (auth:SIMPLE)
    18/03/23 20:34:06 INFO namenode.FSNamesystem: supergroup = supergroup
    18/03/23 20:34:06 INFO namenode.FSNamesystem: isPermissionEnabled = true
    18/03/23 20:34:06 INFO namenode.FSNamesystem: Determined nameservice ID: myha01
    18/03/23 20:34:06 INFO namenode.FSNamesystem: HA Enabled: true
    18/03/23 20:34:06 INFO namenode.FSNamesystem: Append Enabled: true
    18/03/23 20:34:06 INFO util.GSet: Computing capacity for map INodeMap
    18/03/23 20:34:06 INFO util.GSet: VM type = 64-bit
    18/03/23 20:34:06 INFO util.GSet: 1.0% max memory 966.7 MB = 9.7 MB
    18/03/23 20:34:06 INFO util.GSet: capacity = 2^20 = 1048576 entries
    18/03/23 20:34:06 INFO namenode.FSDirectory: ACLs enabled? false
    18/03/23 20:34:06 INFO namenode.FSDirectory: XAttrs enabled? true
    18/03/23 20:34:06 INFO namenode.FSDirectory: Maximum size of an xattr: 16384
    18/03/23 20:34:06 INFO namenode.NameNode: Caching file names occuring more than 10 times
    18/03/23 20:34:06 INFO util.GSet: Computing capacity for map cachedBlocks
    18/03/23 20:34:06 INFO util.GSet: VM type = 64-bit
    18/03/23 20:34:06 INFO util.GSet: 0.25% max memory 966.7 MB = 2.4 MB
    18/03/23 20:34:06 INFO util.GSet: capacity = 2^18 = 262144 entries
    18/03/23 20:34:06 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033
    18/03/23 20:34:06 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0
    18/03/23 20:34:06 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000
    18/03/23 20:34:06 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10
    18/03/23 20:34:06 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10
    18/03/23 20:34:06 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25
    18/03/23 20:34:06 INFO namenode.FSNamesystem: Retry cache on namenode is enabled
    18/03/23 20:34:06 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis
    18/03/23 20:34:06 INFO util.GSet: Computing capacity for map NameNodeRetryCache
    18/03/23 20:34:06 INFO util.GSet: VM type = 64-bit
    18/03/23 20:34:06 INFO util.GSet: 0.029999999329447746% max memory 966.7 MB = 297.0 KB
    18/03/23 20:34:06 INFO util.GSet: capacity = 2^15 = 32768 entries
    18/03/23 20:34:07 INFO namenode.FSImage: Allocated new BlockPoolId: BP-366944376-192.168.123.102-1521808447852
    18/03/23 20:34:07 INFO common.Storage: Storage directory /home/hadoop/data/hadoopdata/dfs/name has been successfully formatted.
    18/03/23 20:34:08 INFO namenode.FSImageFormatProtobuf: Saving image file /home/hadoop/data/hadoopdata/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression
    18/03/23 20:34:08 INFO namenode.FSImageFormatProtobuf: Image file /home/hadoop/data/hadoopdata/dfs/name/current/fsimage.ckpt_0000000000000000000 of size 323 bytes saved in 0 seconds.
    18/03/23 20:34:08 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
    18/03/23 20:34:08 INFO util.ExitUtil: Exiting with status 0
    18/03/23 20:34:08 INFO namenode.NameNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at hadoop1/192.168.123.102
    ************************************************************/
    [hadoop@hadoop1 ~]$
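In a log this long, the two lines that indicate success are "has been successfully formatted" and "Exiting with status 0". A sketch of checking a saved log for both; `format_ok` is a hypothetical helper name, and the sample lines are taken from the output of this article's run.

```shell
# Succeeds if the saved format log contains both success markers.
format_ok() {
  grep -q 'has been successfully formatted' "$1" &&
    grep -q 'Exiting with status 0' "$1"
}

# Scratch log holding the two relevant lines from the run in this article.
log=$(mktemp)
cat > "$log" <<'EOF'
18/03/23 20:34:07 INFO common.Storage: Storage directory /home/hadoop/data/hadoopdata/dfs/name has been successfully formatted.
18/03/23 20:34:08 INFO util.ExitUtil: Exiting with status 0
EOF

if format_ok "$log"; then
  echo "format succeeded"
else
  echo "format failed"
fi
```

To capture a real log for this check, the format command can be run as `hdfs namenode -format 2>&1 | tee format.log`.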

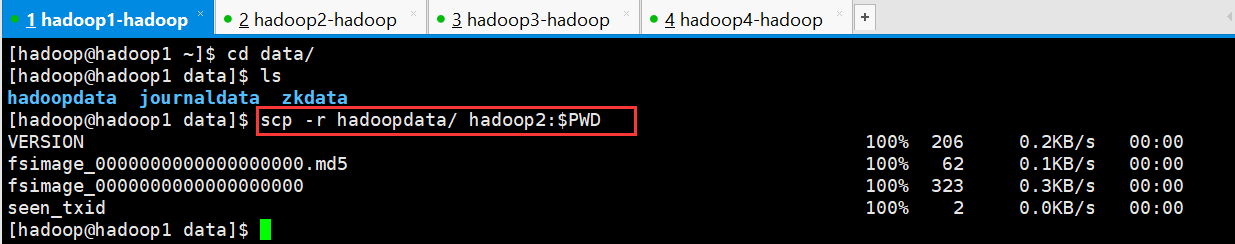

4. Copy the metadata generated on hadoop1 to the other NameNode host (hadoop2)

[hadoop@hadoop1 ~]$ cd data/

[hadoop@hadoop1 data]$ ls

hadoopdata journaldata zkdata

[hadoop@hadoop1 data]$ scp -r hadoopdata/ hadoop2:$PWD

VERSION 100% 206 0.2KB/s 00:00

fsimage_0000000000000000000.md5 100% 62 0.1KB/s 00:00

fsimage_0000000000000000000 100% 323 0.3KB/s 00:00

seen_txid 100% 2 0.0KB/s 00:00

[hadoop@hadoop1 data]$
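After the copy, both NameNodes should carry the same `clusterID` in their `VERSION` file (the first file in the scp output above). A minimal sketch of that comparison, assuming the file lives under `dfs/name/current/VERSION` in the directory that was formatted; the helper names `cluster_id` and `same_cluster_id` and the temporary path are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: compare the clusterID recorded in two NameNode VERSION files.
# cluster_id and same_cluster_id are hypothetical helpers.
cluster_id() {
  # Print the value of the "clusterID=..." line of a VERSION file.
  sed -n 's/^clusterID=//p' "$1"
}

same_cluster_id() {
  a=$(cluster_id "$1")
  b=$(cluster_id "$2")
  # Succeed only if an ID was found and both files agree.
  [ -n "$a" ] && [ "$a" = "$b" ]
}

# Example use on hadoop1 (not executed here; paths assumed from the log above):
#   v=/home/hadoop/data/hadoopdata/dfs/name/current/VERSION
#   ssh hadoop2 cat "$v" > /tmp/VERSION.hadoop2
#   same_cluster_id "$v" /tmp/VERSION.hadoop2 && echo "metadata matches"
```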

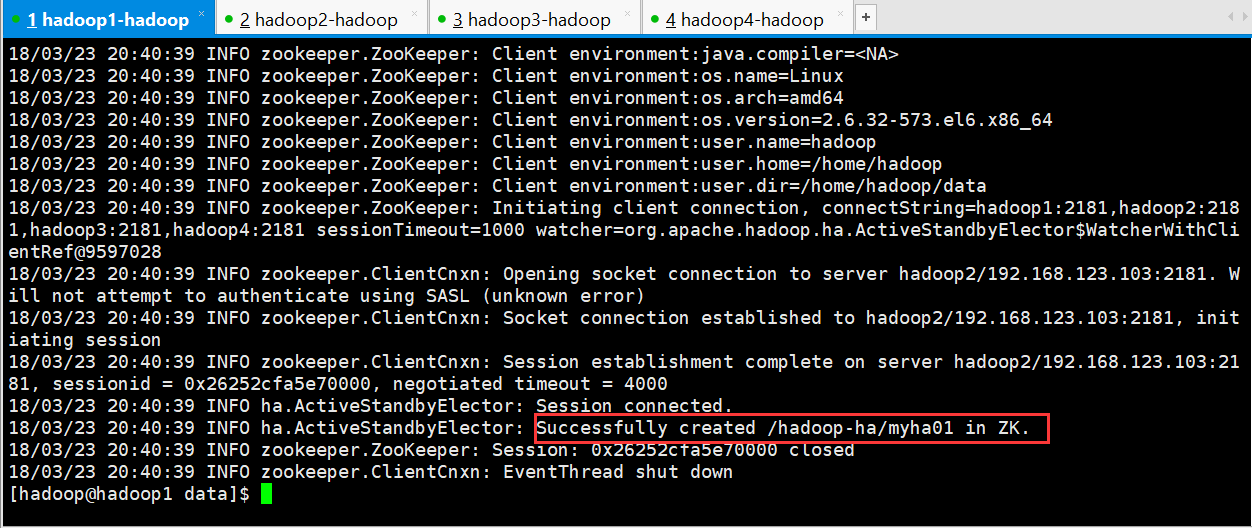

5. Format ZKFC

Important: run this step only on a NameNode host.

[hadoop@hadoop1 data]$ hdfs zkfc -formatZK

18/03/23 20:40:38 INFO tools.DFSZKFailoverController: Failover controller configured for NameNode NameNode at hadoop1/192.168.123.102:9000
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:zookeeper.version=3.4.6-1569965, built on 02/20/2014 09:09 GMT
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:host.name=hadoop1
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.version=1.8.0_73
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.vendor=Oracle Corporation
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.home=/usr/local/jdk1.8.0_73/jre
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.class.path=/home/hadoop/apps/hadoop-2.7.5/etc/hadoop:/home/hadoop/apps/hadoop-2.7.5/share/hadoop/common/lib/paranamer-2.3.jar:[... several hundred Hadoop jar entries under share/hadoop/{common,hdfs,yarn,mapreduce} omitted ...]:/home/hadoop/apps/hadoop-2.7.5/contrib/capacity-scheduler/*.jar
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.library.path=/home/hadoop/apps/hadoop-2.7.5/lib/native
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.io.tmpdir=/tmp
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:java.compiler=<NA>
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:os.name=Linux
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:os.arch=amd64
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:os.version=2.6.32-573.el6.x86_64
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:user.name=hadoop
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:user.home=/home/hadoop
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Client environment:user.dir=/home/hadoop/data
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Initiating client connection, connectString=hadoop1:2181,hadoop2:2181,hadoop3:2181,hadoop4:2181 sessionTimeout=1000 watcher=org.apache.hadoop.ha.ActiveStandbyElector$WatcherWithClientRef@9597028
18/03/23 20:40:39 INFO zookeeper.ClientCnxn: Opening socket connection to server hadoop2/192.168.123.103:2181. Will not attempt to authenticate using SASL (unknown error)
18/03/23 20:40:39 INFO zookeeper.ClientCnxn: Socket connection established to hadoop2/192.168.123.103:2181, initiating session
18/03/23 20:40:39 INFO zookeeper.ClientCnxn: Session establishment complete on server hadoop2/192.168.123.103:2181, sessionid = 0x26252cfa5e70000, negotiated timeout = 4000
18/03/23 20:40:39 INFO ha.ActiveStandbyElector: Session connected.
18/03/23 20:40:39 INFO ha.ActiveStandbyElector: Successfully created /hadoop-ha/myha01 in ZK.
18/03/23 20:40:39 INFO zookeeper.ZooKeeper: Session: 0x26252cfa5e70000 closed
18/03/23 20:40:39 INFO zookeeper.ClientCnxn: EventThread shut down
[hadoop@hadoop1 data]$
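The line that matters in this output is "Successfully created /hadoop-ha/myha01 in ZK.", which names the znode that the failover controllers will use. A minimal sketch of pulling that path out of a saved log, assuming the output was captured to a file; the helper name `created_ha_znode` and the file name `zkfc-format.log` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: extract the znode path that `hdfs zkfc -formatZK` reports creating.
# created_ha_znode is a hypothetical helper; the message text is from the log above.
created_ha_znode() {
  # Prints the path (for example /hadoop-ha/myha01), or nothing if the
  # success message is absent from the log file.
  sed -n 's|.*Successfully created \(/[^ ]*\) in ZK\..*|\1|p' "$1"
}

# Example use (not executed here):
#   hdfs zkfc -formatZK 2>&1 | tee zkfc-format.log
#   created_ha_znode zkfc-format.log
```

The same path can also be inspected from the ZooKeeper command-line client with `ls /hadoop-ha`, assuming `zkCli.sh` from the ZooKeeper installation is on the PATH.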

Starting the cluster

1. Start HDFS

The startup output shows which processes were started.

[hadoop@hadoop1 ~]$ start-dfs.sh
Starting namenodes on [hadoop1 hadoop2]
hadoop2: starting namenode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-namenode-hadoop2.out
hadoop1: starting namenode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-namenode-hadoop1.out
hadoop3: starting datanode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-datanode-hadoop3.out
hadoop4: starting datanode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-datanode-hadoop4.out
hadoop2: starting datanode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-datanode-hadoop2.out
hadoop1: starting datanode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-datanode-hadoop1.out
Starting journal nodes [hadoop1 hadoop2 hadoop3]
hadoop3: journalnode running as process 16712. Stop it first.
hadoop2: journalnode running as process 3049. Stop it first.
hadoop1: journalnode running as process 2739. Stop it first.
Starting ZK Failover Controllers on NN hosts [hadoop1 hadoop2]
hadoop2: starting zkfc, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-zkfc-hadoop2.out
hadoop1: starting zkfc, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-zkfc-hadoop1.out
[hadoop@hadoop1 ~]$
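The startup output follows a regular "host: starting daemon, logging to ..." pattern, so it can be condensed into a host/daemon list. A minimal sketch, assuming the output is piped in on stdin; the helper name `started_daemons` is hypothetical. JournalNodes do not show up for the run above because they were already running ("Stop it first.").

```shell
#!/usr/bin/env bash
# Sketch: summarize which daemon was started on which host.
# started_daemons is a hypothetical helper that reads start-dfs.sh output on stdin.
started_daemons() {
  # Keep only lines shaped like "hadoop2: starting namenode, logging to ...".
  awk '$2 == "starting" {
    host = $1; sub(/:$/, "", host)      # drop the colon after the host name
    daemon = $3; sub(/,$/, "", daemon)  # drop the comma after the daemon name
    print host, daemon
  }'
}

# Example use (not executed here):
#   start-dfs.sh 2>&1 | started_daemons
```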

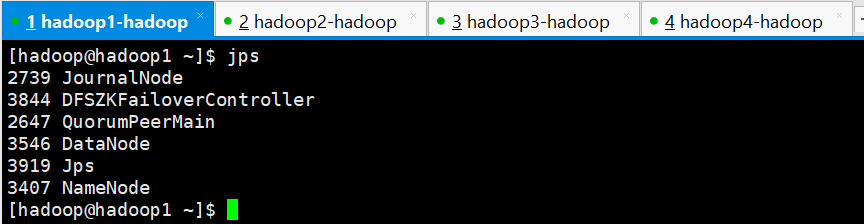

Check that the processes on each node are running normally

hadoop1

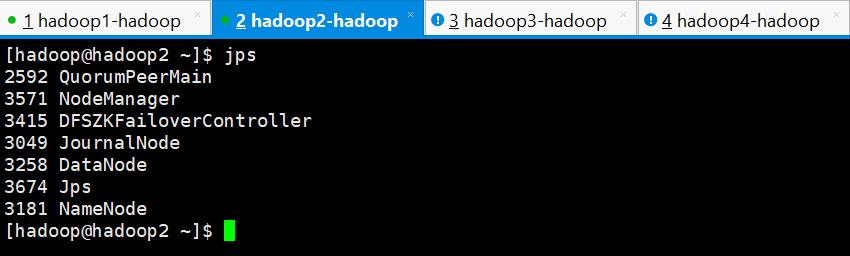

hadoop2

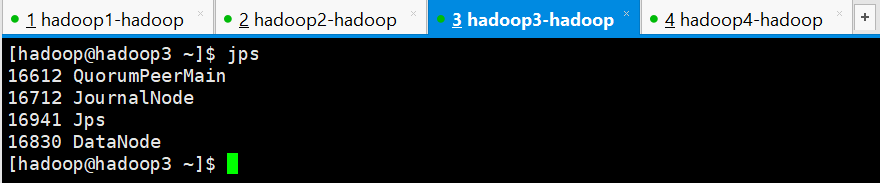

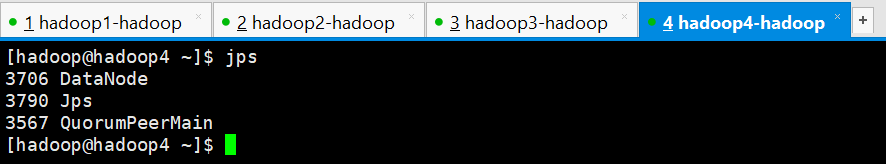

hadoop3

hadoop4

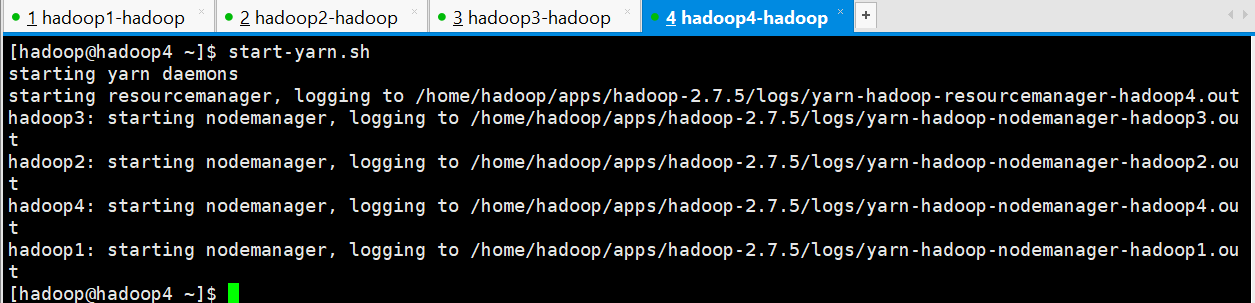
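Comparing `jps` output against the daemons each host is supposed to run is easy to script. A minimal sketch, assuming `jps` output is piped in on stdin; the helper name `missing_daemons` is hypothetical, and the daemon list in the usage line is only an example for a NameNode host in this layout.

```shell
#!/usr/bin/env bash
# Sketch: report which expected daemons are absent from `jps` output.
# missing_daemons is a hypothetical helper.
# Usage: jps | missing_daemons NameNode DataNode ...
missing_daemons() {
  jps_out=$(cat)   # jps prints one "<pid> <MainClass>" pair per line
  for name in "$@"; do
    # Exact match on the second column, so NameNode does not match
    # SecondaryNameNode.
    printf '%s\n' "$jps_out" |
      awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' ||
      printf '%s\n' "$name"
  done
}

# Example use (not executed here; assumes jps is on the remote PATH):
#   ssh hadoop1 jps | missing_daemons NameNode DataNode JournalNode DFSZKFailoverController QuorumPeerMain
```

Empty output means every expected daemon was found.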

2. Start YARN

Start it on either of the two ResourceManager hosts (active or standby)

[hadoop@hadoop4 ~]$ start-yarn.sh
starting yarn daemons
starting resourcemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-resourcemanager-hadoop4.out
hadoop3: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-nodemanager-hadoop3.out
hadoop2: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-nodemanager-hadoop2.out
hadoop4: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-nodemanager-hadoop4.out
hadoop1: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-nodemanager-hadoop1.out
[hadoop@hadoop4 ~]$

Once startup completes, check the processes on each node

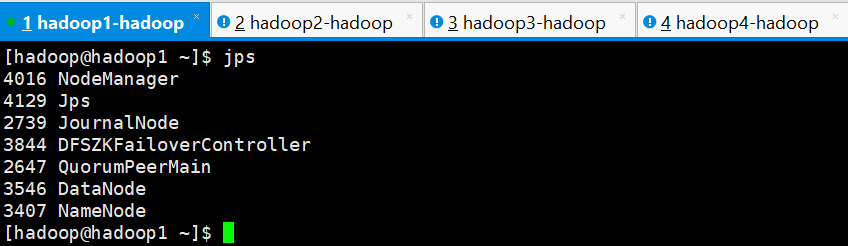

hadoop1

hadoop2

hadoop3

hadoop4

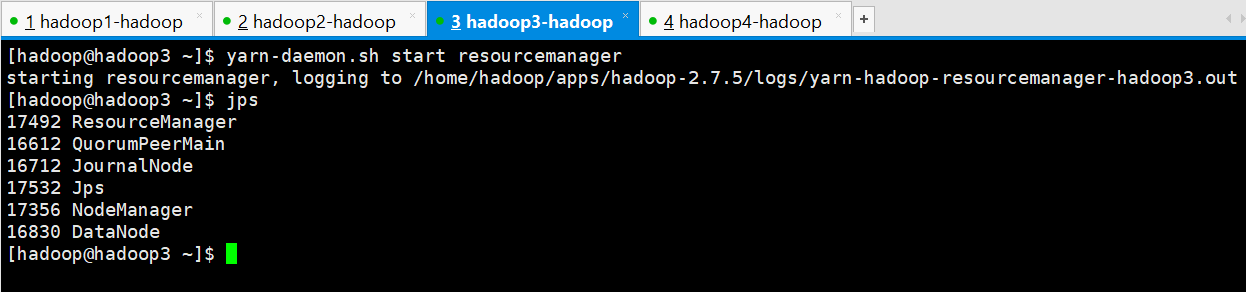

If the ResourceManager on the standby node did not start (the start-yarn.sh output lists only the one on hadoop4), start it manually. Here it is started by hand on hadoop3:

[hadoop@hadoop3 ~]$ yarn-daemon.sh start resourcemanager
starting resourcemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-resourcemanager-hadoop3.out
[hadoop@hadoop3 ~]$ jps
17492 ResourceManager
16612 QuorumPeerMain
16712 JournalNode
17532 Jps
17356 NodeManager
16830 DataNode
[hadoop@hadoop3 ~]$

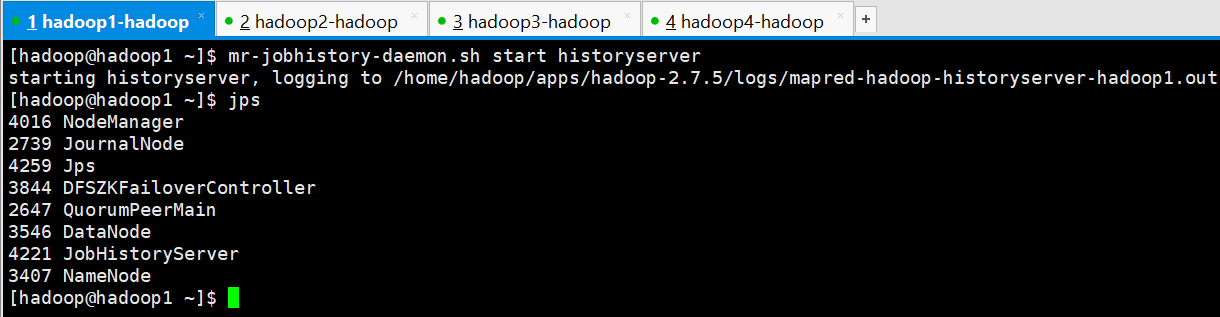

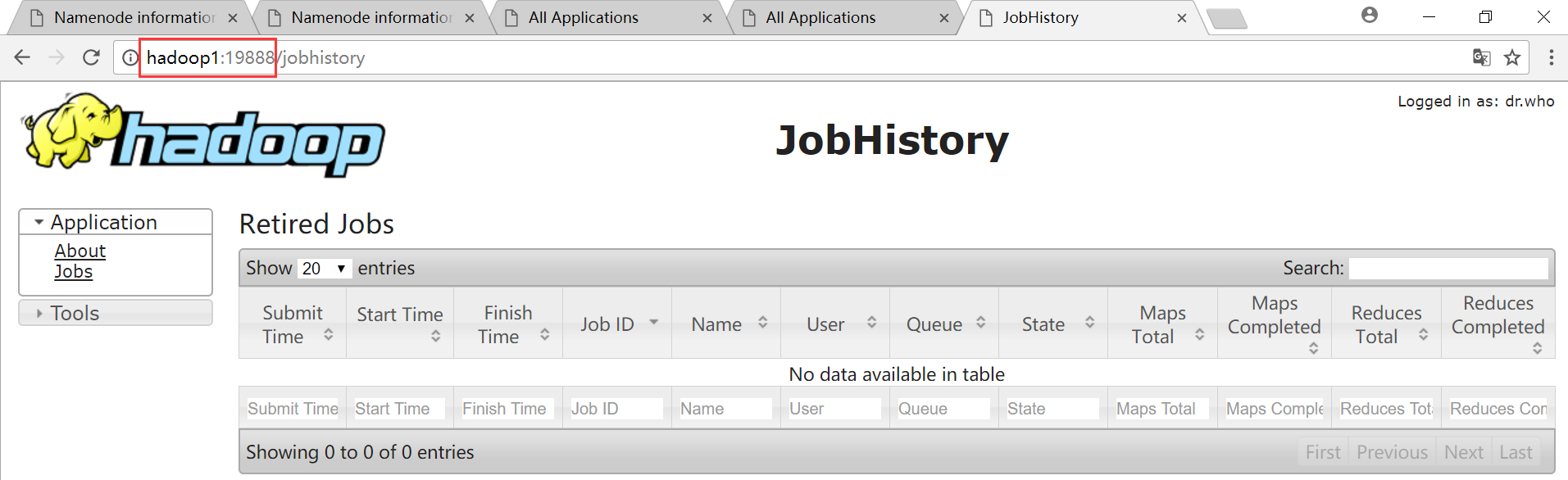

3. Start the MapReduce JobHistory server

[hadoop@hadoop1 ~]$ mr-jobhistory-daemon.sh start historyserver
starting historyserver, logging to /home/hadoop/apps/hadoop-2.7.5/logs/mapred-hadoop-historyserver-hadoop1.out
[hadoop@hadoop1 ~]$ jps
4016 NodeManager
2739 JournalNode
4259 Jps
3844 DFSZKFailoverController
2647 QuorumPeerMain
3546 DataNode
4221 JobHistoryServer
3407 NameNode
[hadoop@hadoop1 ~]$

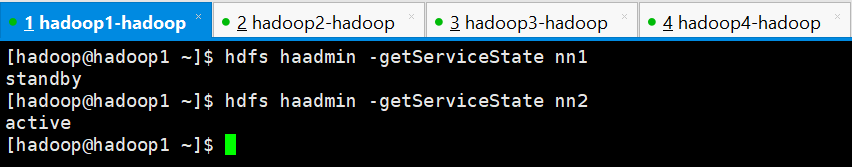

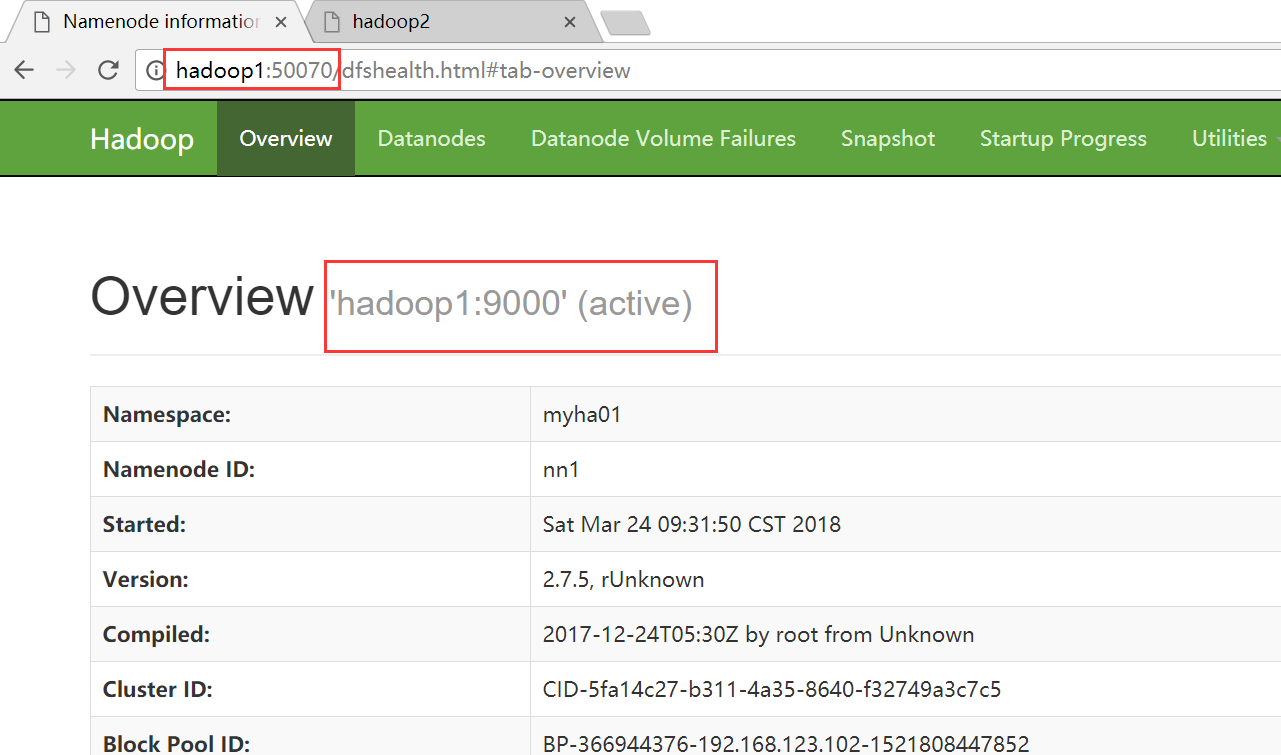

4. Check the state of each master node

HDFS

[hadoop@hadoop1 ~]$ hdfs haadmin -getServiceState nn1
standby
[hadoop@hadoop1 ~]$ hdfs haadmin -getServiceState nn2
active
[hadoop@hadoop1 ~]$

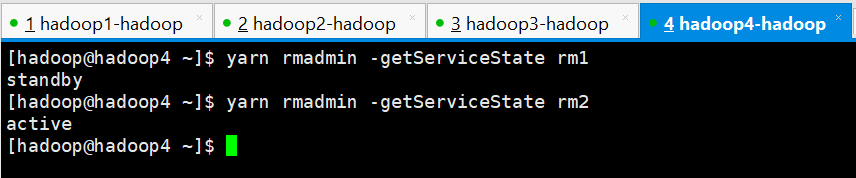

YARN

[hadoop@hadoop4 ~]$ yarn rmadmin -getServiceState rm1
standby
[hadoop@hadoop4 ~]$ yarn rmadmin -getServiceState rm2
active
[hadoop@hadoop4 ~]$
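Both `hdfs haadmin -getServiceState` and `yarn rmadmin -getServiceState` print a single word per service ID, and a healthy HA pair has exactly one `active` member. A minimal sketch of that check, assuming the states have been collected as "id state" pairs; the helper name `count_active` is hypothetical, and the service IDs nn1, nn2, rm1, rm2 are the ones used in this article.

```shell
#!/usr/bin/env bash
# Sketch: count how many members of an HA pair report "active".
# count_active is a hypothetical helper.
count_active() {
  # stdin: one "<service-id> <state>" pair per line.
  awk '$2 == "active" { n++ } END { print n + 0 }'
}

# Example use (not executed here):
#   for id in nn1 nn2; do
#     echo "$id $(hdfs haadmin -getServiceState "$id")"
#   done | count_active
#   for id in rm1 rm2; do
#     echo "$id $(yarn rmadmin -getServiceState "$id")"
#   done | count_active
```

A result of 1 is the expected state; 0 suggests no member has been elected yet, and 2 would indicate a split-brain condition that needs investigation.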

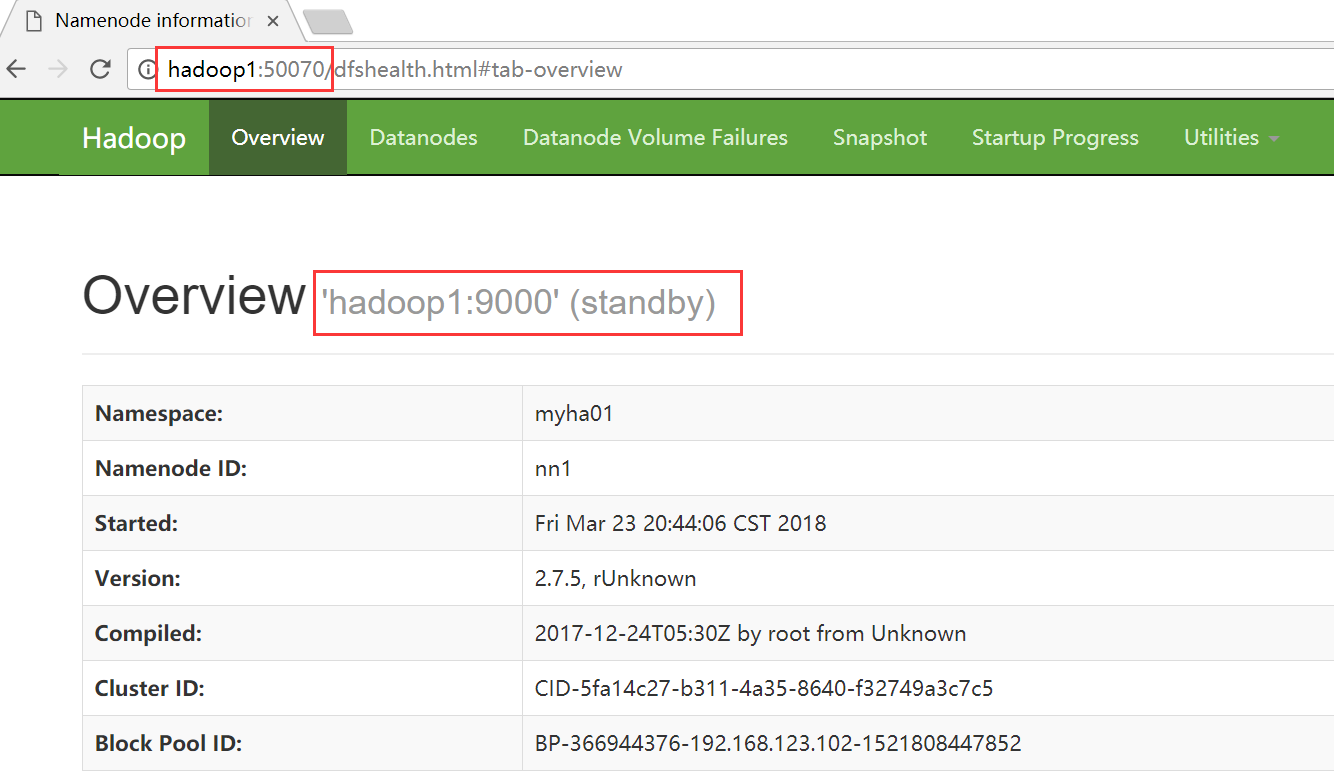

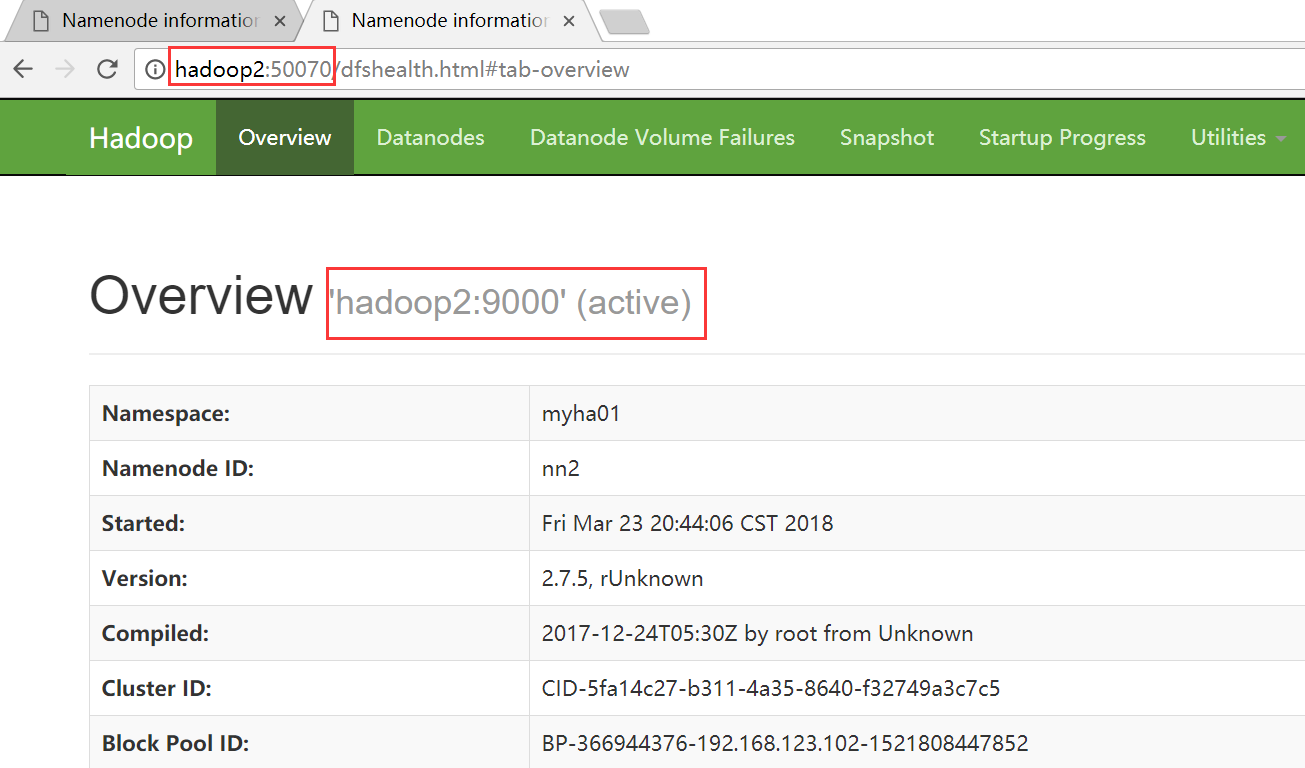

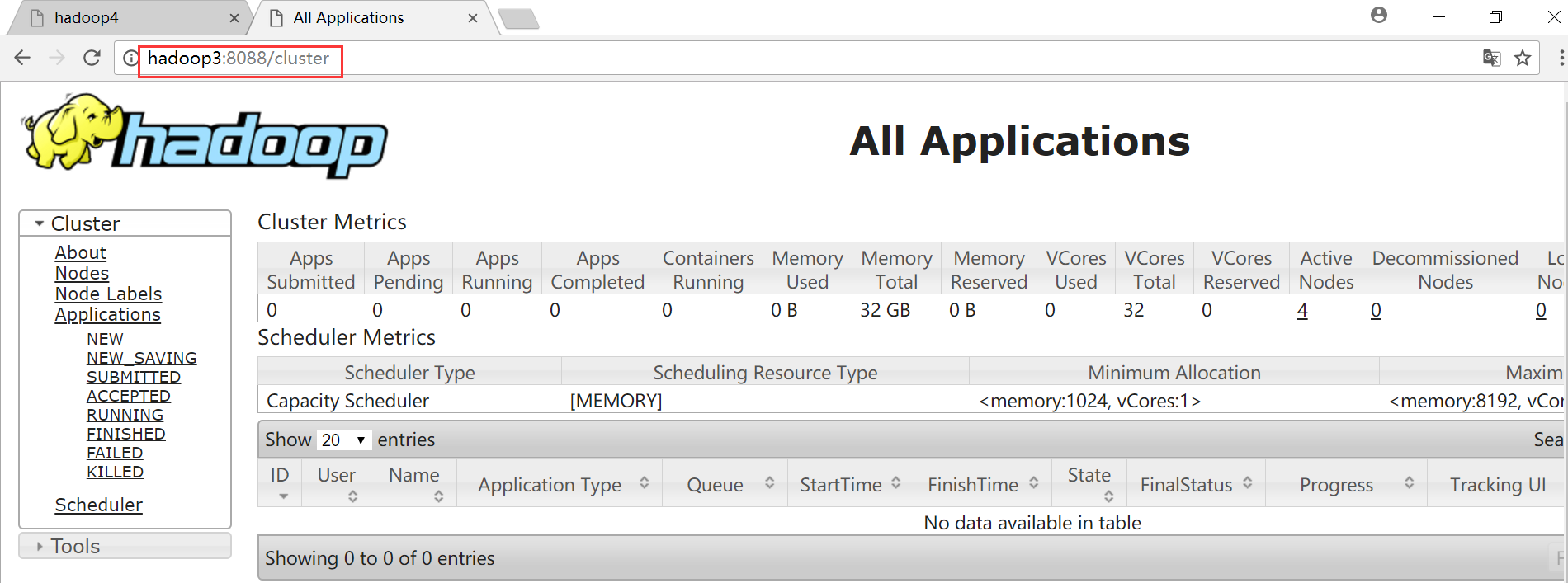

5. Check the web UIs

HDFS

hadoop1

hadoop2

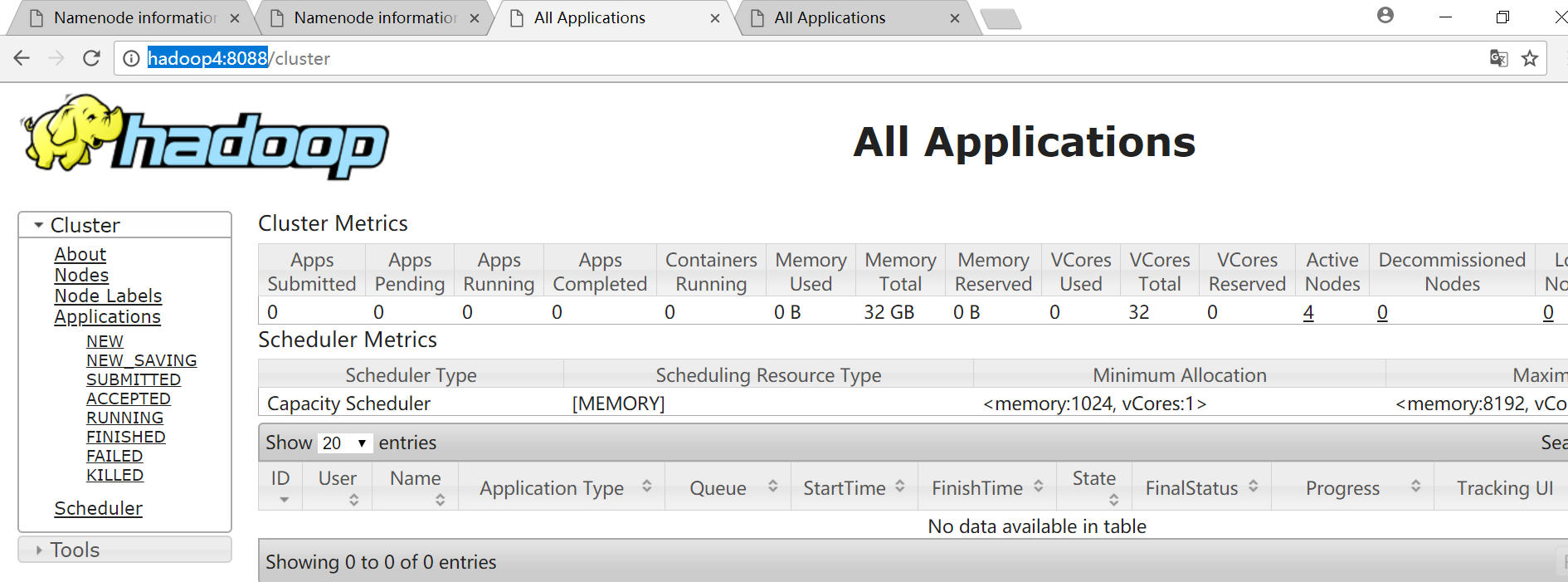

YARN

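Opening the standby node's page redirects automatically to the active node

MapReduce JobHistory server web UI

Cluster failover tests

1. Kill the active NameNode and see how the cluster reacts

The NameNode on hadoop2 is currently active. Kill its process and check whether the standby NameNode on hadoop1 switches to active automatically.

[hadoop@hadoop2 ~]$ jps
4032 QuorumPeerMain
4400 DFSZKFailoverController
4546 NodeManager
4198 DataNode
4745 Jps
4122 NameNode
4298 JournalNode
[hadoop@hadoop2 ~]$ kill -9 4122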

hadoop2

hadoop1

The automatic failover succeeded.
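Instead of refreshing the web UI, the switch can be observed by polling the state command until the surviving NameNode reports `active`. A minimal sketch, assuming nn1 is the NameNode on hadoop1 (as the earlier `-getServiceState` output suggests); the helper name `wait_until_active` and the retry values are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: poll a state command until it prints "active" or retries run out.
# wait_until_active is a hypothetical helper.
# Usage: wait_until_active <max_tries> <delay_seconds> <command...>
wait_until_active() {
  tries="$1"
  delay="$2"
  shift 2
  i=0
  while [ "$i" -lt "$tries" ]; do
    # Errors from the command (for example while the NameNode is down)
    # are treated the same as "not active yet".
    state=$("$@" 2>/dev/null)
    [ "$state" = "active" ] && return 0
    sleep "$delay"
    i=$((i + 1))
  done
  return 1
}

# Example use after killing the NameNode on hadoop2 (not executed here):
#   wait_until_active 30 1 hdfs haadmin -getServiceState nn1 && echo "nn1 took over"
```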

2. Kill the active NameNode during a file upload and see what happens

First, restart the NameNode on hadoop2 manually

[hadoop@hadoop2 ~]$ hadoop-daemon.sh start namenode
starting namenode, logging to /home/hadoop/apps/hadoop-2.7.5/logs/hadoop-hadoop-namenode-hadoop2.out
[hadoop@hadoop2 ~]$ jps
4032 QuorumPeerMain
4400 DFSZKFailoverController
4546 NodeManager
4198 DataNode
4823 NameNode
4298 JournalNode
4908 Jps
[hadoop@hadoop2 ~]$

Pick a fairly large file and start uploading it; about 5 seconds in, kill the active NameNode and see whether the upload still succeeds

Run the upload on hadoop2

[hadoop@hadoop2 ~]$ ll
总用量 194368
drwxrwxr-x 4 hadoop hadoop 4096 3月 23 19:48 apps
drwxrwxr-x 5 hadoop hadoop 4096 3月 23 20:38 data
-rw-rw-r-- 1 hadoop hadoop 199007110 3月 24 09:51 hadoop-2.7.5-centos-6.7.tar.gz
drwxrwxr-x 3 hadoop hadoop 4096 3月 21 19:47 log
-rw-rw-r-- 1 hadoop hadoop 9935 3月 24 09:48 zookeeper.out
[hadoop@hadoop2 ~]$ hadoop fs -put hadoop-2.7.5-centos-6.7.tar.gz /hadoop/

On hadoop1, be ready to kill the NameNode at any moment

[hadoop@hadoop1 ~]$ jps
4128 DataNode
4498 DFSZKFailoverController
3844 QuorumPeerMain
4327 JournalNode
5095 Jps
4632 NodeManager
4814 JobHistoryServer
4015 NameNode
[hadoop@hadoop1 ~]$ kill -9 4015

Output on hadoop2: when the NameNode process on hadoop1 is killed, the client on hadoop2 reports errors

[hadoop@hadoop2 ~]$ hadoop fs -put hadoop-2.7.5-centos-6.7.tar.gz /hadoop/
18/03/24 09:54:34 INFO retry.RetryInvocationHandler: Exception while invoking addBlock of class ClientNamenodeProtocolTranslatorPB over hadoop1/192.168.123.102:9000. Trying to fail over immediately.
java.io.EOFException: End of File Exception between local host is: "hadoop2/192.168.123.103"; destination host is: "hadoop1":9000; : java.io.EOFException; For more details see: http://wiki.apache.org/hadoop/EOFException
    at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)
    at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)
    at java.lang.reflect.Constructor.newInstance(Constructor.java:422)
    at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:792)
    at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:765)
    at org.apache.hadoop.ipc.Client.call(Client.java:1480)
    at org.apache.hadoop.ipc.Client.call(Client.java:1413)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229)
    at com.sun.proxy.$Proxy10.addBlock(Unknown Source)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:418)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:191)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)
    at com.sun.proxy.$Proxy11.addBlock(Unknown Source)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.locateFollowingBlock(DFSOutputStream.java:1588)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1373)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:554)
Caused by: java.io.EOFException
    at java.io.DataInputStream.readInt(DataInputStream.java:392)
    at org.apache.hadoop.ipc.Client$Connection.receiveRpcResponse(Client.java:1085)
    at org.apache.hadoop.ipc.Client$Connection.run(Client.java:980)
18/03/24 09:54:34 INFO retry.RetryInvocationHandler: Exception while invoking addBlock of class ClientNamenodeProtocolTranslatorPB over hadoop2/192.168.123.103:9000 after 1 fail over attempts. Trying to fail over after sleeping for 1454ms.
org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.ipc.StandbyException): Operation category READ is not supported in state standby
    at org.apache.hadoop.hdfs.server.namenode.ha.StandbyState.checkOperation(StandbyState.java:87)
    at org.apache.hadoop.hdfs.server.namenode.NameNode$NameNodeHAContext.checkOperation(NameNode.java:1802)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkOperation(FSNamesystem.java:1321)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getNewBlockTargets(FSNamesystem.java:3084)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:3051)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:725)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:493)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:982)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2217)
    at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2213)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1754)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2213)

    at org.apache.hadoop.ipc.Client.call(Client.java:1476)
    at org.apache.hadoop.ipc.Client.call(Client.java:1413)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229)
    at com.sun.proxy.$Proxy10.addBlock(Unknown Source)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:418)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:191)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)
    at com.sun.proxy.$Proxy11.addBlock(Unknown Source)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.locateFollowingBlock(DFSOutputStream.java:1588)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1373)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:554)
18/03/24 09:54:36 INFO retry.RetryInvocationHandler: Exception while invoking addBlock of class ClientNamenodeProtocolTranslatorPB over hadoop1/192.168.123.102:9000 after 2 fail over attempts. Trying to fail over after sleeping for 1109ms.
java.net.ConnectException: Call From hadoop2/192.168.123.103 to hadoop1:9000 failed on connection exception: java.net.ConnectException: 拒绝连接; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused
    at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)
    at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)
    at java.lang.reflect.Constructor.newInstance(Constructor.java:422)
    at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:792)
    at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:732)
    at org.apache.hadoop.ipc.Client.call(Client.java:1480)
    at org.apache.hadoop.ipc.Client.call(Client.java:1413)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229)
    at com.sun.proxy.$Proxy10.addBlock(Unknown Source)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:418)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:497)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:191)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)
    at com.sun.proxy.$Proxy11.addBlock(Unknown Source)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.locateFollowingBlock(DFSOutputStream.java:1588)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1373)
    at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:554)
Caused by: java.net.ConnectException: 拒绝连接
    at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)
    at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)
    at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)
    at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)
    at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:495)
    at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:615)
    at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:713)
    at org.apache.hadoop.ipc.Client$Connection.access$2900(Client.java:376)
    at org.apache.hadoop.ipc.Client.getConnection(Client.java:1529)
    at org.apache.hadoop.ipc.Client.call(Client.java:1452)
    ... 14 more
[hadoop@hadoop2 ~]$
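The stack traces are long, but the story is in the three `RetryInvocationHandler` lines: the client tried hadoop1, then hadoop2 (still standby), then hadoop1 again, before the call finally went through. A minimal sketch that lists the NameNode each failed attempt targeted, assuming the client output is piped in on stdin; the helper name `failover_targets` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: list the NameNode address each failed RPC attempt was sent to.
# failover_targets is a hypothetical helper that reads client log text on stdin.
failover_targets() {
  awk '/RetryInvocationHandler: Exception while invoking/ {
    # The address is the word right after the first standalone "over".
    for (i = 1; i < NF; i++) {
      if ($i == "over") {
        target = $(i + 1)
        sub(/\.$/, "", target)   # first attempt ends with a period
        print target
        break
      }
    }
  }'
}

# Example use (not executed here):
#   hadoop fs -put hadoop-2.7.5-centos-6.7.tar.gz /hadoop/ 2>&1 | failover_targets
```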

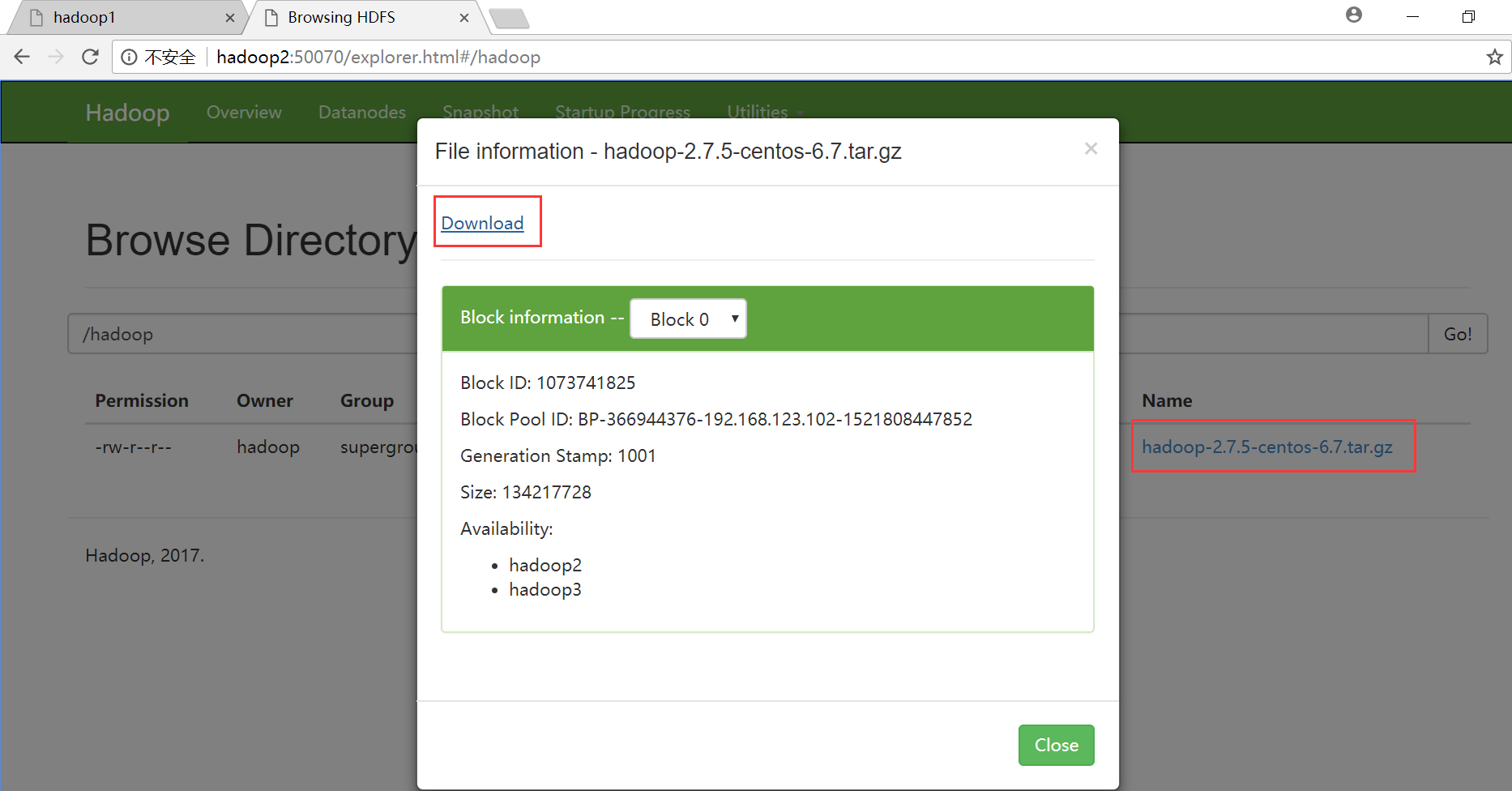

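Check in HDFS or in the web UI whether the upload succeeded

Check from the command line

[hadoop@hadoop1 ~]$ hadoop fs -ls /hadoop/
Found 1 items
-rw-r--r-- 2 hadoop supergroup 199007110 2018-03-24 09:54 /hadoop/hadoop-2.7.5-centos-6.7.tar.gz
[hadoop@hadoop1 ~]$

Download from the web UI

The file in HDFS has the same size as the file we uploaded, 199007110 bytes, so the upload still succeeded even though the active NameNode was killed midway. HA is working.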
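The size comparison can be done without reading the numbers by eye. A minimal sketch, assuming the `hadoop fs -ls` column layout shown above (size in the fifth column); the helper name `hdfs_ls_size` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: read the size column from `hadoop fs -ls` output.
# hdfs_ls_size is a hypothetical helper that reads the listing on stdin.
hdfs_ls_size() {
  # Skip the "Found N items" header and print the 5th field (size in
  # bytes) of the first entry line.
  awk '$1 != "Found" && NF >= 8 { print $5; exit }'
}

# Example use on the host that holds the local copy (not executed here):
#   remote=$(hadoop fs -ls /hadoop/hadoop-2.7.5-centos-6.7.tar.gz | hdfs_ls_size)
#   local_size=$(wc -c < hadoop-2.7.5-centos-6.7.tar.gz)
#   [ "$remote" -eq "$local_size" ] && echo "sizes match"
```

Equal sizes are the same evidence the article relies on; comparing checksums of the local file and a downloaded copy would be a stronger check.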

3. Kill the active ResourceManager and see how the cluster reacts

The ResourceManager on hadoop4 is currently active. Kill its process and observe what happens.

[hadoop@hadoop4 ~]$ jps
3248 ResourceManager
3028 QuorumPeerMain
3787 Jps
3118 DataNode
3358 NodeManager
[hadoop@hadoop4 ~]$ kill -9 3248

The web UI on hadoop4 can no longer be opened

Open the YARN web UI on hadoop3: the ResourceManager on hadoop3 has become active

4. Kill the active ResourceManager while a job is running and see how the cluster reacts

Upload a fairly large file to HDFS

[hadoop@hadoop1 output2]$ hadoop fs -mkdir -p /words/input/
[hadoop@hadoop1 output2]$ ll
总用量 82068
-rw-r--r--. 1 hadoop hadoop 84034300 3月 21 22:18 part-r-00000
-rw-r--r--. 1 hadoop hadoop 0 3月 21 22:18 _SUCCESS
[hadoop@hadoop1 output2]$ hadoop fs -put part-r-00000 /words/input/words.txt
[hadoop@hadoop1 output2]$

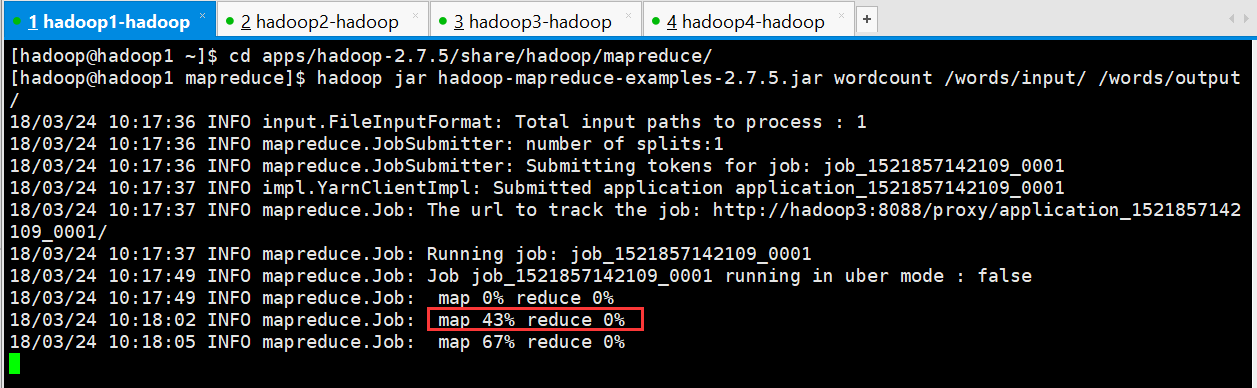

Run wordcount to count the words, kill the active ResourceManager during the map phase, and observe what changes.

First restart the ResourceManager process on hadoop4, which was killed in the previous test

    [hadoop@hadoop4 ~]$ yarn-daemon.sh start resourcemanager
    starting resourcemanager, logging to /home/hadoop/apps/hadoop-2.7.5/logs/yarn-hadoop-resourcemanager-hadoop4.out
    [hadoop@hadoop4 ~]$ jps
    3028 QuorumPeerMain
    3847 ResourceManager
    3884 Jps
    3118 DataNode
    3358 NodeManager
    [hadoop@hadoop4 ~]$

Run the word count on hadoop1

    [hadoop@hadoop1 ~]$ cd apps/hadoop-2.7.5/share/hadoop/mapreduce/
    [hadoop@hadoop1 mapreduce]$ hadoop jar hadoop-mapreduce-examples-2.7.5.jar wordcount /words/input/ /words/output/

On hadoop3, be ready to kill the ResourceManager process at any moment

    [hadoop@hadoop3 ~]$ jps
    3488 JournalNode
    3601 NodeManager
    4378 Jps
    3291 QuorumPeerMain
    3389 DataNode
    3757 ResourceManager
    [hadoop@hadoop3 ~]$ kill -9 3757

Kill the ResourceManager process when the map phase reaches 43%

    [hadoop@hadoop1 mapreduce]$ hadoop jar hadoop-mapreduce-examples-2.7.5.jar wordcount /words/input/ /words/output/
    18/03/24 10:17:36 INFO input.FileInputFormat: Total input paths to process : 1
    18/03/24 10:17:36 INFO mapreduce.JobSubmitter: number of splits:1
    18/03/24 10:17:36 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1521857142109_0001
    18/03/24 10:17:37 INFO impl.YarnClientImpl: Submitted application application_1521857142109_0001
    18/03/24 10:17:37 INFO mapreduce.Job: The url to track the job: http://hadoop3:8088/proxy/application_1521857142109_0001/
    18/03/24 10:17:37 INFO mapreduce.Job: Running job: job_1521857142109_0001
    18/03/24 10:17:49 INFO mapreduce.Job: Job job_1521857142109_0001 running in uber mode : false
    18/03/24 10:17:49 INFO mapreduce.Job: map 0% reduce 0%
    18/03/24 10:18:02 INFO mapreduce.Job: map 43% reduce 0%
    18/03/24 10:18:05 INFO mapreduce.Job: map 67% reduce 0%
    18/03/24 10:18:11 INFO mapreduce.Job: map 100% reduce 0%
    18/03/24 10:19:21 INFO mapreduce.Job: map 100% reduce 100%
    18/03/24 10:19:21 INFO mapreduce.Job: Job job_1521857142109_0001 completed successfully
    18/03/24 10:19:21 INFO mapreduce.Job: Counters: 49
        File System Counters
            FILE: Number of bytes read=153040486
            FILE: Number of bytes written=229809001
            FILE: Number of read operations=0
            FILE: Number of large read operations=0
            FILE: Number of write operations=0
            HDFS: Number of bytes read=84034400
            HDFS: Number of bytes written=69223279
            HDFS: Number of read operations=6
            HDFS: Number of large read operations=0
            HDFS: Number of write operations=2
        Job Counters
            Launched map tasks=1
            Launched reduce tasks=1
            Data-local map tasks=1
            Total time spent by all maps in occupied slots (ms)=19896
            Total time spent by all reduces in occupied slots (ms)=10751
            Total time spent by all map tasks (ms)=19896
            Total time spent by all reduce tasks (ms)=10751
            Total vcore-milliseconds taken by all map tasks=19896
            Total vcore-milliseconds taken by all reduce tasks=10751
            Total megabyte-milliseconds taken by all map tasks=20373504
            Total megabyte-milliseconds taken by all reduce tasks=11009024
        Map-Reduce Framework
            Map input records=1000209
            Map output records=3875230
            Map output bytes=99535220
            Map output materialized bytes=76520240
            Input split bytes=100
            Combine input records=3875230
            Combine output records=1782760
            Reduce input groups=1776439
            Reduce shuffle bytes=76520240
            Reduce input records=1782760
            Reduce output records=1776439
            Spilled Records=5348280
            Shuffled Maps =1
            Failed Shuffles=0
            Merged Map outputs=1
            GC time elapsed (ms)=746
            CPU time spent (ms)=16660
            Physical memory (bytes) snapshot=290443264
            Virtual memory (bytes) snapshot=4132728832
            Total committed heap usage (bytes)=141328384
        Shuffle Errors
            BAD_ID=0
            CONNECTION=0
            IO_ERROR=0
            WRONG_LENGTH=0
            WRONG_MAP=0
            WRONG_REDUCE=0
        File Input Format Counters
            Bytes Read=84034300
        File Output Format Counters
            Bytes Written=69223279
    [hadoop@hadoop1 mapreduce]$

The computation finished without any errors, and the web UI also shows that the job succeeded.
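When the failover test is repeated, the success check can be automated by scanning the client log for the completion line seen in the output above. The sketch below assumes the log has been saved to a file or piped in; `job_completed` is a hypothetical helper name, and the pattern is based only on the log format shown in this run.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read the MapReduce client log on stdin and succeed
# only if it contains a "completed successfully" line for some job.
job_completed() {
  grep -Eq 'mapreduce\.Job: Job job_[0-9_]+ completed successfully'
}

# Example using two lines from the log captured above.
sample='18/03/24 10:19:21 INFO mapreduce.Job: map 100% reduce 100%
18/03/24 10:19:21 INFO mapreduce.Job: Job job_1521857142109_0001 completed successfully'
if printf '%s\n' "$sample" | job_completed; then
  echo "job succeeded"
fi
```

As a second check on a live cluster, `hadoop fs -test -e /words/output/_SUCCESS` exits with status 0 if the `_SUCCESS` marker file exists; MapReduce writes that marker into the output directory by default when a job finishes successfully.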
