MapReduce Case Study 7: Merging Small Files

I. Sample Data

- File 1: one.txt

pangtouyu yinain yutang chaojidan

pikaqiu Dalen study let me happy

- File 2: two.txt

longlong fanfan

mazong kailun yuhang yixin

longlong fanfan

mazong kailun yuhang yixin

- File 3: three.txt

shuaige changmo zhenqiang

dongli lingu xuanxuan

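II. Requirements

- Small files hurt efficiency in both HDFS and MapReduce, yet in practice it is hard to avoid scenarios that involve processing large numbers of them, so a solution is needed. Here, multiple small files are merged into a single SequenceFile. The SequenceFile stores many files as key-value pairs: the key is the file path plus file name, and the value is the file content.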
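The key-value layout described above can be illustrated without Hadoop. The following is only a minimal sketch of the idea: the class `KeyValueContainerSketch`, the length-prefixed record layout, and the `/input/...` paths are all assumptions made for illustration, and the byte layout is not the real SequenceFile on-disk format.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

// Simplified stand-in for a SequenceFile (hypothetical, NOT the real format).
class KeyValueContainerSketch {

    // Writes a record count, then each record as: key string, value length, value bytes.
    static byte[] pack(Map<String, byte[]> files) throws IOException {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.writeInt(files.size());
            for (Map.Entry<String, byte[]> entry : files.entrySet()) {
                out.writeUTF(entry.getKey());
                out.writeInt(entry.getValue().length);
                out.write(entry.getValue());
            }
        }
        return buffer.toByteArray();
    }

    // Reads the records back in the order they were written.
    static Map<String, byte[]> unpack(byte[] container) throws IOException {
        Map<String, byte[]> files = new LinkedHashMap<>();
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(container))) {
            int count = in.readInt();
            for (int i = 0; i < count; i++) {
                String key = in.readUTF();
                byte[] value = new byte[in.readInt()];
                in.readFully(value);
                files.put(key, value);
            }
        }
        return files;
    }

    public static void main(String[] args) throws IOException {
        Map<String, byte[]> files = new LinkedHashMap<>();
        // Hypothetical paths; the contents are taken from the sample data.
        files.put("/input/one.txt", "pangtouyu yinain yutang chaojidan".getBytes(StandardCharsets.UTF_8));
        files.put("/input/three.txt", "shuaige changmo zhenqiang".getBytes(StandardCharsets.UTF_8));

        byte[] merged = pack(files);
        for (Map.Entry<String, byte[]> entry : unpack(merged).entrySet()) {
            System.out.println(entry.getKey() + " -> " + new String(entry.getValue(), StandardCharsets.UTF_8));
        }
    }
}
```

A real SequenceFile adds a header, sync markers, and optional compression on top of this basic idea, so the sketch should be read as a mental model only.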

III. Analysis

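- Small files can generally be optimized in one of the following ways:

(1) At data collection time, merge small files or small batches of data into large files before uploading them to HDFS.

(2) Before business processing, run a MapReduce program on HDFS to merge the small files.

(3) During MapReduce processing, use CombineTextInputFormat to improve efficiency.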
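To see why option (3) helps, recall that a file-based input format normally produces at least one split, and therefore one map task, per file. The sketch below is a deliberately simplified model of packing several small files into one split. It is not the actual CombineTextInputFormat algorithm, which also takes data locality into account, and the class name, file sizes, and size limit are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model of combining small files into splits (hypothetical class).
class SplitPackingSketch {

    // Greedily packs files, in order, into splits of at most maxSplitBytes.
    // A file larger than the limit ends up in a split of its own in this model.
    static List<List<Long>> pack(long[] fileSizes, long maxSplitBytes) {
        List<List<Long>> splits = new ArrayList<>();
        List<Long> current = new ArrayList<>();
        long currentBytes = 0;
        for (long size : fileSizes) {
            // Start a new split when adding this file would exceed the limit.
            if (!current.isEmpty() && currentBytes + size > maxSplitBytes) {
                splits.add(current);
                current = new ArrayList<>();
                currentBytes = 0;
            }
            current.add(size);
            currentBytes += size;
        }
        if (!current.isEmpty()) {
            splits.add(current);
        }
        return splits;
    }

    public static void main(String[] args) {
        long[] sizes = {1_000, 2_000, 3_000, 500, 4_000}; // hypothetical file sizes in bytes
        long maxSplitBytes = 4_096;                        // hypothetical limit

        System.out.println("one split per file: " + sizes.length + " map tasks");
        System.out.println("packed splits:      " + pack(sizes, maxSplitBytes).size() + " map tasks");
    }
}
```

Fewer splits means fewer map tasks to schedule and start, which is where the efficiency gain comes from when the input consists of many tiny files.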

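- This section handles small input files with a custom InputFormat:

(1) Define a class that extends FileInputFormat.

(2) Write a custom RecordReader that reads one complete file at a time and wraps it as a key-value pair.

(3) Use SequenceFileOutputFormat to write the merged output file.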
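The heart of step (2) is a reader that yields exactly one record per file. As a rough plain-Java analogy, the sketch below mimics that contract with `java.nio` instead of the Hadoop types. The class `WholeFileReaderSketch` is hypothetical and only borrows the method names of the RecordReader contract for readability.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

// Plain-Java analogy of a whole-file record reader (hypothetical, no Hadoop types).
class WholeFileReaderSketch {
    private final Path file;
    private boolean processed = false;
    private byte[] value = new byte[0];

    WholeFileReaderSketch(Path file) {
        this.file = file;
    }

    // Returns true exactly once: the single record is the entire file.
    boolean nextKeyValue() throws IOException {
        if (processed) {
            return false;
        }
        value = Files.readAllBytes(file);
        processed = true;
        return true;
    }

    byte[] getCurrentValue() {
        return value;
    }

    // Progress is all or nothing because there is only one record.
    float getProgress() {
        return processed ? 1.0f : 0.0f;
    }

    public static void main(String[] args) throws IOException {
        Path tmp = Files.createTempFile("one", ".txt");
        try {
            Files.write(tmp, "pikaqiu Dalen study let me happy".getBytes(StandardCharsets.UTF_8));
            WholeFileReaderSketch reader = new WholeFileReaderSketch(tmp);
            int records = 0;
            while (reader.nextKeyValue()) {
                records++;
                System.out.println(tmp.getFileName() + " -> " + reader.getCurrentValue().length + " bytes");
            }
            System.out.println("records read: " + records);
        } finally {
            Files.deleteIfExists(tmp);
        }
    }
}
```

The `processed` flag is what makes the loop terminate after one record; the Hadoop version in the next section relies on the same flag.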

IV. Implementation

- 1. Create the WholeRecordReader class:

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

// Reads an entire file as a single record: the key is empty and the value holds the file's bytes.
public class WholeRecordReader extends RecordReader<NullWritable, BytesWritable> {

    private Configuration configuration;
    private FileSplit split;
    private boolean processed = false;
    private BytesWritable value = new BytesWritable();

    @Override
    public void initialize(InputSplit split, TaskAttemptContext context) throws IOException, InterruptedException {
        this.split = (FileSplit) split;
        configuration = context.getConfiguration();
    }

    @Override
    public boolean nextKeyValue() throws IOException, InterruptedException {
        if (!processed) {
            // 1. Allocate a buffer the size of the file (the int cast assumes the file is smaller than 2 GB)
            byte[] contents = new byte[(int) split.getLength()];
            FSDataInputStream fis = null;
            try {
                // 2. Get the file system that owns the split's path
                Path path = split.getPath();
                FileSystem fs = path.getFileSystem(configuration);
                // 3. Open an input stream on the file
                fis = fs.open(path);
                // 4. Read the whole file into the buffer
                IOUtils.readFully(fis, contents, 0, contents.length);
                // 5. Store the contents as the current value
                value.set(contents, 0, contents.length);
            } finally {
                // Read errors propagate to the framework instead of being silently swallowed
                IOUtils.closeStream(fis);
            }
            processed = true;
            return true;
        }
        return false;
    }

    @Override
    public NullWritable getCurrentKey() throws IOException, InterruptedException {
        return NullWritable.get();
    }

    @Override
    public BytesWritable getCurrentValue() throws IOException, InterruptedException {
        return value;
    }

    @Override
    public float getProgress() throws IOException, InterruptedException {
        // There is only one record, so progress is either 0 or 1
        return processed ? 1.0f : 0.0f;
    }

    @Override
    public void close() throws IOException {
        // Nothing to release here: the stream is closed in nextKeyValue()
    }
}
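One detail of the reader is worth a note: the buffer is sized with `(int) split.getLength()`, and a plain cast from `long` to `int` silently wraps around for lengths above `Integer.MAX_VALUE`. That is unlikely to matter for genuinely small files, but a guard makes the assumption explicit. The helper below is a hypothetical sketch and not part of the original code.

```java
// Hypothetical helper that makes the "file fits in one array" assumption explicit.
class BufferSizeSketch {

    // Converts a file length to an array size, failing loudly instead of overflowing.
    static int toBufferSize(long fileLength) {
        if (fileLength < 0 || fileLength > Integer.MAX_VALUE) {
            throw new IllegalArgumentException(
                    "File cannot be buffered in a single array: " + fileLength + " bytes");
        }
        return (int) fileLength;
    }

    public static void main(String[] args) {
        System.out.println(toBufferSize(1_024L));

        long threeGiB = 3L * 1024 * 1024 * 1024;
        // A plain cast wraps around to a negative number instead of failing.
        System.out.println((int) threeGiB);
        try {
            toBufferSize(threeGiB);
        } catch (IllegalArgumentException e) {
            System.out.println("rejected: " + e.getMessage());
        }
    }
}
```

In the reader, such a check would sit just before the buffer allocation, for example `new byte[toBufferSize(split.getLength())]`, assuming the helper is made accessible from that class.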

- 2. Create the WholeFileInputformat class:

import java.io.IOException;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.JobContext;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

// Custom input format that extends FileInputFormat
public class WholeFileInputformat extends FileInputFormat<NullWritable, BytesWritable> {

    @Override
    protected boolean isSplitable(JobContext context, Path filename) {
        // Never split a file, so that each file is read as one whole record
        return false;
    }

    @Override
    public RecordReader<NullWritable, BytesWritable> createRecordReader(InputSplit split, TaskAttemptContext context)
            throws IOException, InterruptedException {
        // Create the reader that returns each file as a single record
        WholeRecordReader recordReader = new WholeRecordReader();
        recordReader.initialize(split, context);
        return recordReader;
    }
}

- 3. Create the SequenceFileMapper class:

import java.io.IOException;

import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

public class SequenceFileMapper extends Mapper<NullWritable, BytesWritable, Text, BytesWritable> {

    // Output key, reused for the single record of this split
    private final Text k = new Text();

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        // 1. Get the split that this mapper is processing
        FileSplit inputSplit = (FileSplit) context.getInputSplit();
        // 2. Get the full path (directory plus file name) of the file behind the split
        String name = inputSplit.getPath().toString();
        // 3. Use the path as the output key
        k.set(name);
    }

    @Override
    protected void map(NullWritable key, BytesWritable value, Context context)
            throws IOException, InterruptedException {
        // Emit the file path as the key and the file content as the value
        context.write(k, value);
    }
}

- 4. Create the SequenceFileReducer class:

import java.io.IOException;

import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class SequenceFileReducer extends Reducer<Text, BytesWritable, Text, BytesWritable> {

    @Override
    protected void reduce(Text key, Iterable<BytesWritable> values, Context context)
            throws IOException, InterruptedException {
        // Each key is a distinct file path with exactly one value, so write the first value
        context.write(key, values.iterator().next());
    }
}

- 5. Create the SequenceFileDriver class:

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.BytesWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.output.SequenceFileOutputFormat;

public class SequenceFileDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // Hard-coded local paths for a test run; remove this line to pass the paths on the command line
        args = new String[] { "e:/input/inputinputformat", "e:/output1" };

        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        job.setJarByClass(SequenceFileDriver.class);
        job.setMapperClass(SequenceFileMapper.class);
        job.setReducerClass(SequenceFileReducer.class);

        // Set the input format to the custom whole-file input format
        job.setInputFormatClass(WholeFileInputformat.class);
        // Set the output format so that the result is written as a SequenceFile
        job.setOutputFormatClass(SequenceFileOutputFormat.class);

        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(BytesWritable.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(BytesWritable.class);

        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // Report success or failure through the process exit code
        boolean succeeded = job.waitForCompletion(true);
        System.exit(succeeded ? 0 : 1);
    }
}

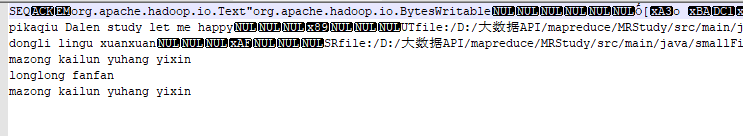

- 结果图:

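Author: 落花桂

Copyright of this article is shared by the author and 博客园 (cnblogs). Reposting is welcome, but unless the author agrees otherwise, this notice must be retained and a link to the original article must be displayed prominently on the page; otherwise the author reserves the right to pursue legal liability.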
