MapReduce Case Study 4: Common Friends

I. Data Format

person:friend1,friend2...

A:B,C,D,F,E,O

B:A,C,E,K

C:F,A,D,I

D:A,E,F,L

E:B,C,D,M,L

F:A,B,C,D,E,O,M

G:A,C,D,E,F

H:A,C,D,E,O

I:A,O

J:B,O

K:A,C,D

L:D,E,F

M:E,F,G

O:A,H,I,J

II. Requirement

Find which pairs of people have friends in common, and who those common friends are.

III. Analysis

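- 1. First, work out whose friend each of A, B, C, ... is. The input has the form person:friend1,friend2...; invert it into the form friend--person1,person2.... In other words, for each friend, find all the people who have that friend in common.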

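- 2. Use the friend--person1,person2... output as the input of the second MapReduce job, then find the common friends of every pair of people, in the form person1-person2 friend1 friend2..., person1-person3 friend1 friend2..., and so on.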
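The two passes above can be sketched in plain Java, without Hadoop, to check the logic on a small input. This is only a minimal in-memory sketch, not the MapReduce implementation: the class name `CommonFriendsSketch`, the methods `invert` and `commonFriends`, and the three-line sample input are all made up for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.TreeSet;

// Hypothetical in-memory sketch of the two-pass idea; not Hadoop code.
class CommonFriendsSketch {

    // Pass 1: turn "person:friend1,friend2..." lines into friend -> people who list that friend.
    static Map<String, TreeSet<String>> invert(List<String> lines) {
        Map<String, TreeSet<String>> friendToPersons = new TreeMap<>();
        for (String line : lines) {
            String[] fields = line.split(":");
            String person = fields[0];
            for (String friend : fields[1].split(",")) {
                friendToPersons.computeIfAbsent(friend, k -> new TreeSet<>()).add(person);
            }
        }
        return friendToPersons;
    }

    // Pass 2: any two people listed under the same friend share that friend.
    static Map<String, TreeSet<String>> commonFriends(Map<String, TreeSet<String>> friendToPersons) {
        Map<String, TreeSet<String>> pairToFriends = new TreeMap<>();
        for (Map.Entry<String, TreeSet<String>> entry : friendToPersons.entrySet()) {
            // TreeSet iterates in sorted order, so each pair key is built smaller-name-first.
            List<String> persons = new ArrayList<>(entry.getValue());
            for (int i = 0; i < persons.size() - 1; i++) {
                for (int j = i + 1; j < persons.size(); j++) {
                    String pair = persons.get(i) + "-" + persons.get(j);
                    pairToFriends.computeIfAbsent(pair, k -> new TreeSet<>()).add(entry.getKey());
                }
            }
        }
        return pairToFriends;
    }

    public static void main(String[] args) {
        // Made-up sample input, smaller than the data set in section I.
        List<String> lines = Arrays.asList("A:B,C,D", "B:A,C,E", "C:A,D");
        System.out.println(commonFriends(invert(lines)));
    }
}
```

In the MapReduce version, the grouping done here with `computeIfAbsent` is what the shuffle phase does between each Mapper and its Reducer.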

IV. Implementation

- 1. First Mapper: create the OneShareFriendsMapper class:

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

public class OneShareFriendsMapper extends Mapper<LongWritable, Text, Text, Text>{

@Override

protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, Text>.Context context)

throws IOException, InterruptedException {

// 1. Read one line, e.g. A:B,C,D,F,E,O

String line = value.toString();

// 2. Split the person from the friend list

String[] fields = line.split(":");

// 3. Extract the person and the friends

String person = fields[0];

String[] friends = fields[1].split(",");

// 4. Emit the records

for(String friend: friends){

// Emit <friend, person>

context.write(new Text(friend), new Text(person));

}

}

}

- 2. First Reducer: create the OneShareFriendsReducer class:

import java.io.IOException;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

public class OneShareFriendsReducer extends Reducer<Text, Text, Text, Text>{

@Override

protected void reduce(Text key, Iterable<Text> values, Context context)

throws IOException, InterruptedException {

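StringBuilder sb = new StringBuilder();

// 1. Concatenate every person who has this friend (each followed by a comma)

for(Text person: values){

sb.append(person).append(",");

}

// 2. Emit <friend, "person1,person2,...">

context.write(key, new Text(sb.toString()));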

}

}
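The reducer above leaves a trailing comma on every value (for example `I,K,C,`). The second job still parses this correctly, because Java's `String.split(",")` discards trailing empty strings. The following sketch checks that behaviour in isolation; the class name `TrailingCommaSketch` and the method `join` are hypothetical and only mimic the reducer's concatenation loop.

```java
import java.util.Arrays;

// Hypothetical stand-alone check; mirrors the reducer's loop without Hadoop types.
class TrailingCommaSketch {

    // Same concatenation as the first reducer: each person followed by a comma.
    static String join(String[] persons) {
        StringBuilder sb = new StringBuilder();
        for (String person : persons) {
            sb.append(person).append(",");
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        String joined = join(new String[]{"I", "K", "C"});
        System.out.println(joined);                              // I,K,C,
        // split(",") drops the empty string after the last comma.
        System.out.println(Arrays.toString(joined.split(","))); // [I, K, C]
    }
}
```

Note also that the default text output format writes each record as key, a tab character, then value, which is why the second Mapper splits each line on `"\t"`.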

- 3. First Driver: create the OneShareFriendsDriver class:

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class OneShareFriendsDriver {

public static void main(String[] args) throws Exception {

args = new String[]{"D:\\大数据API\\friends.txt", "D:\\大数据API\\dataone"};

// 1. Get the job object

Configuration configuration = new Configuration();

Job job = Job.getInstance(configuration);

// 2. Set the class used to locate the jar

job.setJarByClass(OneShareFriendsDriver.class);

// 3. Set the Mapper and Reducer classes

job.setMapperClass(OneShareFriendsMapper.class);

job.setReducerClass(OneShareFriendsReducer.class);

// 4. Set the map output key/value types

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Text.class);

// 5. Set the final output key/value types

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

// 6. Set the job's input and output paths

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

// 7. Submit the job and wait for it to finish

boolean result = job.waitForCompletion(true);

// Exit code 0 means success, non-zero means failure

System.exit(result ? 0 : 1);

}

}

- 4. Second Mapper: create the TwoShareFriendsMapper class:

import java.io.IOException;

import java.util.Arrays;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

public class TwoShareFriendsMapper extends Mapper<LongWritable, Text, Text, Text>{

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

// Input line, e.g.: A I,K,C,B,G,F,H,O,D,

// Format: friend <tab> person,person,person

String line = value.toString();

String[] friend_persons = line.split("\t");

String friend = friend_persons[0];

String[] persons = friend_persons[1].split(",");

Arrays.sort(persons);

for (int i = 0; i < persons.length - 1; i++) {

for (int j = i + 1; j < persons.length; j++) {

// Emit <person-person, friend>, so that all friends of the same "person-person" pair reach the same reduce call

context.write(new Text(persons[i] + "-" + persons[j]), new Text(friend));

}

}

}

}
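The `Arrays.sort(persons)` call in the Mapper above matters: without it, the same two people could be emitted as both `A-B` and `B-A`, and their common friends would be split across two reduce keys. The pair generation can be tried on its own with a small sketch; the class name `PairKeySketch` and the method `pairKeys` are hypothetical names used only here.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical stand-alone version of the pair-key loop in the second Mapper.
class PairKeySketch {

    // Returns every "person-person" key, always with the smaller name first.
    static List<String> pairKeys(String[] persons) {
        String[] sorted = persons.clone(); // leave the caller's array untouched
        Arrays.sort(sorted);
        List<String> keys = new ArrayList<>();
        for (int i = 0; i < sorted.length - 1; i++) {
            for (int j = i + 1; j < sorted.length; j++) {
                keys.add(sorted[i] + "-" + sorted[j]);
            }
        }
        return keys;
    }

    public static void main(String[] args) {
        // Unsorted input still yields canonical keys.
        System.out.println(pairKeys(new String[]{"C", "A", "B"})); // [A-B, A-C, B-C]
    }
}
```

For n people under one friend this emits n(n-1)/2 keys, so a friend that very many people list produces a correspondingly large amount of map output.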

- 5. Second Reducer: create the TwoShareFriendsReducer class:

import java.io.IOException;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

public class TwoShareFriendsReducer extends Reducer<Text, Text, Text, Text>{

@Override

protected void reduce(Text key, Iterable<Text> values, Context context)

throws IOException, InterruptedException {

StringBuffer sb = new StringBuffer();

for (Text friend : values) {

sb.append(friend).append(" ");

}

context.write(key, new Text(sb.toString()));

}

}

- 6. Second Driver: create the TwoShareFriendsDriver class:

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class TwoShareFriendsDriver {

public static void main(String[] args) throws Exception {

args = new String[]{"D:\\大数据API\\dataone", "D:\\大数据API\\datatwo"};

// 1. Get the job object

Configuration configuration = new Configuration();

Job job = Job.getInstance(configuration);

// 2. Set the class used to locate the jar

job.setJarByClass(TwoShareFriendsDriver.class);

// 3. Set the Mapper and Reducer classes

job.setMapperClass(TwoShareFriendsMapper.class);

job.setReducerClass(TwoShareFriendsReducer.class);

// 4. Set the map output key/value types

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Text.class);

// 5. Set the final output key/value types

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

// 6. Set the job's input and output paths (the input is the first job's output directory)

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

// 7. Submit the job and wait for it to finish

boolean result = job.waitForCompletion(true);

// Exit code 0 means success, non-zero means failure

System.exit(result ? 0 : 1);

}

}

- Additional notes

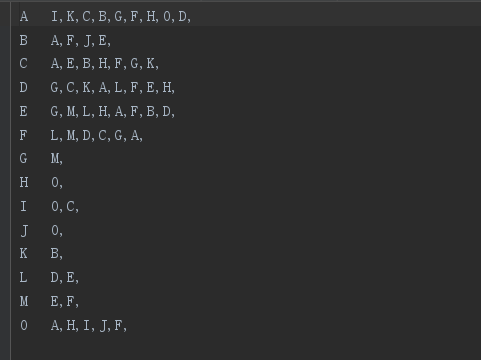

Result of the first MapReduce job:

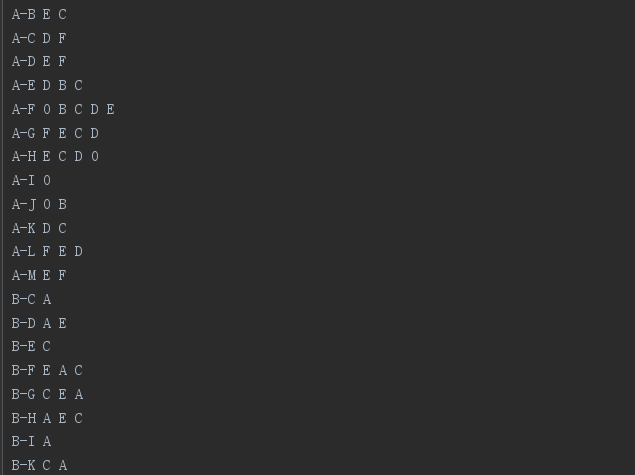

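Result of the second MapReduce job:

V. Chaining the Two Jobs

If you would rather not write a separate driver for each job, you can chain the two jobs in a single driver so that the second runs after the first. The code is as follows: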

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.jobcontrol.ControlledJob;

import org.apache.hadoop.mapreduce.lib.jobcontrol.JobControl;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class AllShareFriendsReducer {

public static void main(String[] args) throws IOException {

args = new String[]{"D:\\大数据API\\friends.txt","D:\\大数据API\\dataone1","D:\\大数据API\\datatwo1"};

Configuration conf = new Configuration();

Job job1 = Job.getInstance(conf);

job1.setMapperClass(OneShareFriendsMapper.class);

job1.setReducerClass(OneShareFriendsReducer.class);

job1.setMapOutputKeyClass(Text.class);

job1.setMapOutputValueClass(Text.class);

job1.setOutputKeyClass(Text.class);

job1.setOutputValueClass(Text.class);

FileInputFormat.setInputPaths(job1, new Path(args[0]));

FileOutputFormat.setOutputPath(job1, new Path(args[1]));

Job job2 = Job.getInstance(conf);

job2.setMapperClass(TwoShareFriendsMapper.class);

job2.setReducerClass(TwoShareFriendsReducer.class);

job2.setMapOutputKeyClass(Text.class);

job2.setMapOutputValueClass(Text.class);

job2.setOutputKeyClass(Text.class);

job2.setOutputValueClass(Text.class);

FileInputFormat.setInputPaths(job2, new Path(args[1]));

FileOutputFormat.setOutputPath(job2, new Path(args[2]));

JobControl control = new JobControl("Andy");

ControlledJob ajob = new ControlledJob(job1.getConfiguration());

ControlledJob bjob = new ControlledJob(job2.getConfiguration());

bjob.addDependingJob(ajob);

control.addJob(ajob);

control.addJob(bjob);

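Thread thread = new Thread(control);

thread.start();

// JobControl.run() keeps looping until stop() is called, so wait for both jobs and then stop it

while (!control.allFinished()) {

try {

Thread.sleep(500);

} catch (InterruptedException e) {

Thread.currentThread().interrupt();

break;

}

}

control.stop();

System.exit(control.getFailedJobList().isEmpty() ? 0 : 1);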

}

}

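Author: 落花桂

Copyright of this article is shared by the author and 博客园 (cnblogs). Reposting is welcome, but unless the author agrees otherwise, this notice must be retained and a link to the original article must be placed in a clearly visible position on the page; otherwise the author reserves the right to pursue legal liability.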
