测试神经网络

- 使用Keras进行自动验证

- 使用Keras进行手工验证

- 使用Keras进行K折交叉验证

1 分割数据

数据量大和网络复杂会造成训练时间很长,所以需要将数据分成训练、测试或验证数据集。Keras提供两种办法:

- 自动验证

- 手工验证

Keras可以将数据自动分出一部分,每次训练后进行验证。在训练时用validation_split参数可以指定验证数据的比例,一般是总数据的20%或者33%。

1 下面的代码加入了自动验证:

import os os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2' # MLP with automatic validation set from keras.models import Sequential from keras.layers import Dense import numpy # fix random seed for reproducibility seed = 7 numpy.random.seed(seed) # load pima indians dataset dataset = numpy.loadtxt("pima-indians-diabetes.csv", delimiter=",") # split into input (X) and output (Y) variables X = dataset[:,0:8] Y = dataset[:,8] # create model model = Sequential() model.add(Dense(12, input_dim=8, kernel_initializer='uniform', activation='relu')) model.add(Dense(8, kernel_initializer='uniform', activation='relu')) model.add(Dense(1, kernel_initializer='uniform', activation='sigmoid')) # Compile model model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy']) # Fit the model model.fit(X, Y, validation_split=0.33, epochs=150, batch_size=10)

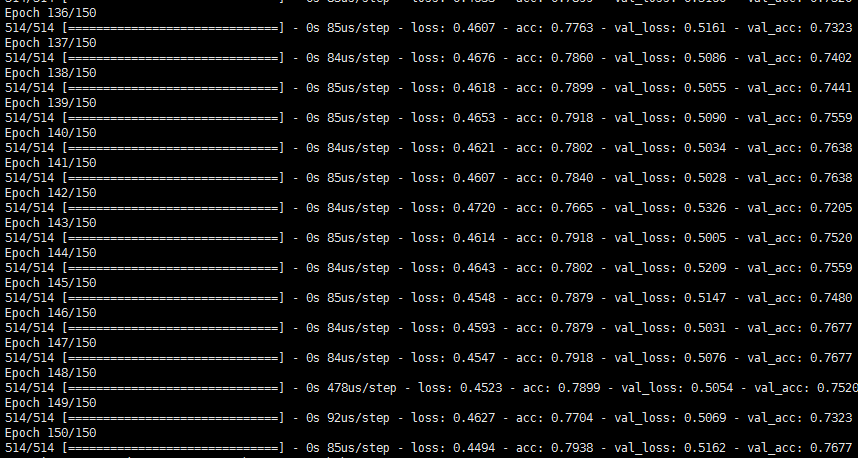

训练时,每轮会显示训练和测试数据的数据:

2 手工验证

Keras也可以手工进行验证。我们定义一个train_test_split函数,将数据分成2:1的测试和验证数据集。在调用fit()方法时需要加入validation_data参数作为验证数据,数组的项目分别是输入和输出数据。

import os os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2' # MLP with manual validation set from keras.models import Sequential from keras.layers import Dense # from sklearn.cross_validation import train_test_split # 由于cross_validation将要被移除This module will be removed in 0.20.所以使用下面的model_selection来导入train_test_split from sklearn.model_selection import train_test_split import numpy # fix random seed for reproducibility seed = 7 numpy.random.seed(seed) # load pima indians dataset dataset = numpy.loadtxt("pima-indians-diabetes.csv", delimiter="\t") # split into input (X) and output (Y) variables X = dataset[:,0:8] Y = dataset[:,8] # split into 67% for train and 33% for test X_train, X_test, y_train, y_test = train_test_split(X, Y, test_size=0.33, random_state=seed) # create model model = Sequential() model.add(Dense(12, input_dim=8, kernel_initializer='uniform', activation='relu')) model.add(Dense(8, kernel_initializer='uniform', activation='relu')) model.add(Dense(1, kernel_initializer='uniform', activation='sigmoid')) # Compile model model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy']) # Fit the model model.fit(X_train, y_train, validation_data=(X_test,y_test), epochs=150, batch_size=10)

和自动化验证一样,每轮训练后,Keras会输出训练和验证结果:

3 手工K折交叉验证

机器学习的金科玉律是K折验证,以验证模型对未来数据的预测能力。K折验证的方法是:将数据分成K组,留下1组验证,其他数据用作训练,直到每种分发的性能一致。

深度学习一般不用交叉验证,因为对算力要求太高。例如,K折的次数一般是5或者10折:每组都需要训练并验证,训练时间成倍上升。然而,如果数据量小,交叉验证的效果更好,误差更小。

scikit-learn有StratifiedKFold类,我们用它把数据分成10组。抽样方法是分层抽样,尽可能保证每组数据量一致。然后我们在每组上训练模型,使用verbose=0参数关闭每轮的输出。

训练后,Keras会输出模型的性能,并存储模型。最终,Keras输出性能的平均值和标准差,为性能估算提供更准确的估计:

import os os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2' # MLP for Pima Indians Dataset with 10-fold cross validation from keras.models import Sequential from keras.layers import Dense from sklearn.cross_validation import StratifiedKFold #from sklearn.model_selection import StratifiedKFold import numpy # fix random seed for reproducibility seed = 7 numpy.random.seed(seed) # load pima indians dataset dataset = numpy.loadtxt("pima-indians-diabetes.csv", delimiter="\t") # split into input (X) and output (Y) variables X = dataset[:,0:8] Y = dataset[:,8] # define 10-fold cross validation test harness kfold = StratifiedKFold(y=Y,n_folds=10, shuffle=True, random_state=seed) cvscores = [] for i, (train, test) in enumerate(kfold): # create model model = Sequential() model.add(Dense(12, input_dim=8, kernel_initializer='uniform', activation='relu')) model.add(Dense(8, kernel_initializer='uniform', activation='relu')) model.add(Dense(1, kernel_initializer='uniform', activation='sigmoid')) # Compile model model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy']) # Fit the model model.fit(X[train], Y[train], epochs=150, batch_size=10, verbose=0) # evaluate the model scores = model.evaluate(X[test], Y[test], verbose=0) print("%s: %.2f%%" % (model.metrics_names[1], scores[1]*100)) cvscores.append(scores[1] * 100) print("%.2f%% (+/- %.2f%%)" % (numpy.mean(cvscores), numpy.std(cvscores)))

输出是:

浙公网安备 33010602011771号

浙公网安备 33010602011771号