Machine_Learning_in_Action06 - SVM

SVM

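Introducing SVM

Using the SMO optimization algorithm

Using kernel functions to map data into a different space

Comparison with other classifiers

- SVMs can be implemented in several ways; this chapter covers the most common one, the sequential minimal optimization (SMO) algorithm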

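Separating data with the maximum margin

- Pros

  - Low generalization error, computationally inexpensive, easy to interpret results

- Cons

  - Sensitive to the choice of parameters and kernel function; without modification, the basic classifier only handles binary classification

- Works with: numeric and nominal values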

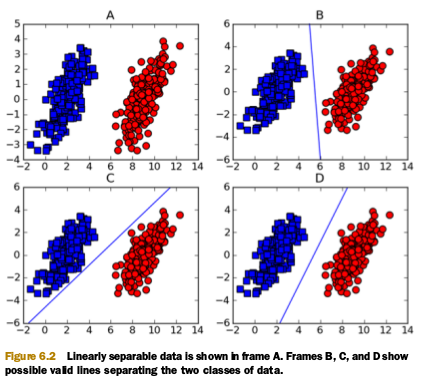

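For linearly separable data, the boundary that separates the two classes is called the separating hyperplane.

We want the data points to lie as far from the separating hyperplane as possible. In other words, we want to maximize the margin, so that a classifier trained on a limited dataset is as robust as possible.

The points closest to the separating hyperplane are called support vectors. Our task is to maximize the distance between the support vectors and the separating hyperplane.

Finding the maximum margin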

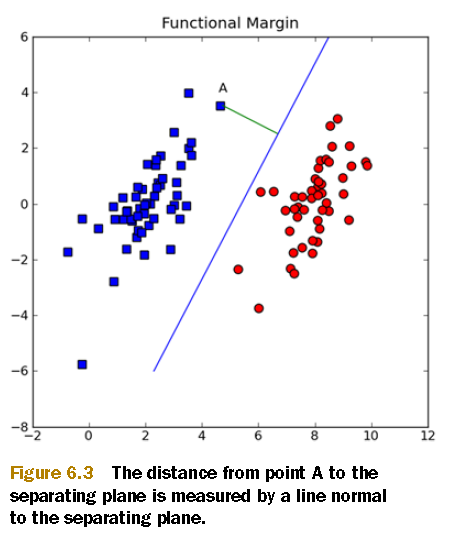

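The separating hyperplane is described by \(\mathbf{w}^T\mathbf{x} + b = 0\), and the distance from a point A to the hyperplane is \(|\mathbf{w}^T\mathbf{x} + b| / \|\mathbf{w}\|\), where \(\mathbf{x}\) is the position of A. The constant b plays the same role as \(w_0\) in logistic regression. Together, \(\mathbf{w}\) and b define the separating hyperplane.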
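As a quick numeric check of the distance formula, the sketch below evaluates \(|\mathbf{w}^T\mathbf{x} + b| / \|\mathbf{w}\|\) with NumPy. The function name `distance_to_hyperplane` and the sample values of `w`, `b`, and the point `A` are made up for illustration; they do not come from the chapter's dataset.

```python
import numpy as np

def distance_to_hyperplane(w, b, x):
    """Distance from point x to the hyperplane w^T x + b = 0."""
    w = np.asarray(w, dtype=float)
    x = np.asarray(x, dtype=float)
    return abs(w @ x + b) / np.linalg.norm(w)

# Hypothetical hyperplane 3*x1 + 4*x2 - 5 = 0 and a sample point A
w = [3.0, 4.0]
b = -5.0
A = [3.0, 4.0]
print(distance_to_hyperplane(w, b, A))  # |9 + 16 - 5| / 5 = 4.0
```

A point that lies on the hyperplane itself, such as (1, 0.5) here, gives a distance of 0.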

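Framing the optimization problem in terms of the classifier

Understanding how the classifier works helps in understanding the optimization problem. In logistic regression, the input was passed through the sigmoid function to produce a class label of 0 or 1. Here we replace the sigmoid with a step function applied to \(\mathbf{w}^T\mathbf{x}+b\): it outputs -1 when its input is less than 0 and +1 otherwise.

Why switch the labels from 0 and 1 to -1 and +1? Because it keeps the math manageable: -1 and +1 differ only by sign. A single expression, \(label \cdot (\mathbf{w}^T\mathbf{x}+b)\), then measures how far a point lies on the correct side of the separating hyperplane for both classes (dividing it by \(\|\mathbf{w}\|\) gives the geometric distance). If a point is far from the hyperplane on the positive side, \(\mathbf{w}^T\mathbf{x}+b\) is a large positive number, and so is \(label \cdot (\mathbf{w}^T\mathbf{x}+b)\). If a point is far away on the negative side and has label -1, \(\mathbf{w}^T\mathbf{x}+b\) is a large negative number, so the product is again a large positive number.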
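The sign argument can be checked numerically. The sketch below implements the step-function classifier and the product \(label \cdot (\mathbf{w}^T\mathbf{x}+b)\); the names `classify` and `functional_margin`, as well as the weights and points, are hypothetical values chosen only to illustrate the idea.

```python
import numpy as np

def classify(w, b, x):
    """Step-function classifier: -1 if w^T x + b < 0, otherwise +1."""
    return -1 if float(np.dot(w, x) + b) < 0 else 1

def functional_margin(label, w, b, x):
    """label * (w^T x + b): positive when x lies on the side its label says."""
    return label * float(np.dot(w, x) + b)

w = np.array([1.0, 1.0])   # hypothetical weights
b = -2.0                   # hypothetical offset

pos_point = np.array([4.0, 3.0])    # far on the positive side
neg_point = np.array([-1.0, -2.0])  # far on the negative side

print(classify(w, b, pos_point), functional_margin(+1, w, b, pos_point))  # 1 5.0
print(classify(w, b, neg_point), functional_margin(-1, w, b, neg_point))  # -1 5.0
```

Both points produce the same positive value even though they sit on opposite sides of the hyperplane, which is the reason for using -1 and +1 as labels.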

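The goal now is to find the \(\mathbf{w}\) and b that define the classifier. To do this we need to find the points with the smallest margin (the support vectors) and then maximize that margin:

\[\arg\max_{\mathbf{w},b}\left\{\min_{n}\left(label^{(n)} \cdot (\mathbf{w}^T\mathbf{x}^{(n)}+b)\right) \cdot \frac{1}{\|\mathbf{w}\|}\right\}\]

Solving this problem directly is hard, so we convert it to another form. Look at the part inside the curly braces: optimizing a product of several terms at once is difficult, so we hold one part fixed and maximize the other. If we set \(label \cdot (\mathbf{w}^T\mathbf{x} + b)\) to 1 for the support vectors, we only need to maximize \(\|\mathbf{w}\|^{-1}\). Not every \(label \cdot (\mathbf{w}^T\mathbf{x} + b)\) equals 1; only the points closest to the separating hyperplane reach that value, and the product is larger for points farther away.

The problem has become a constrained optimization problem: to obtain the best values, \(label \cdot (\mathbf{w}^T\mathbf{x} + b)\) is required to be greater than or equal to 1. Constrained problems of this kind are commonly solved with Lagrange multipliers.

The derivation above assumes that the dataset is 100% linearly separable, which is rarely true in practice. We can therefore introduce slack variables, which allow some data points to fall on the wrong side of the separating hyperplane.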
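For reference, applying Lagrange multipliers to this constrained problem leads to the standard soft-margin dual form shown below, written in terms of one multiplier \(\alpha_i\) per training point. This is the usual textbook formulation rather than something derived in these notes; m denotes the number of training points, and the constant C, which bounds each \(\alpha_i\), controls how much slack is tolerated.

```latex
\[
\max_{\alpha}\ \left[\sum_{i=1}^{m}\alpha_i
  -\frac{1}{2}\sum_{i=1}^{m}\sum_{j=1}^{m}
   label^{(i)}\,label^{(j)}\,\alpha_i\,\alpha_j\,
   \langle \mathbf{x}^{(i)},\mathbf{x}^{(j)}\rangle\right]
\]
\[
\text{subject to}\quad 0\le\alpha_i\le C,
\qquad \sum_{i=1}^{m}\alpha_i\,label^{(i)}=0
\]
```

Because the data enters only through the inner products \(\langle \mathbf{x}^{(i)},\mathbf{x}^{(j)}\rangle\), this form is also what makes kernel functions possible.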

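The general framework of SVM

General approach to SVM:

- Collect the data

- Prepare: numeric values are required

- Analyze: visualize the data to get a sense of the separating hyperplane

- Train

- Test

- Use

  - As presented, SVM is a binary classifier; applying it to multi-class problems requires modifying the code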

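Efficient optimization with the SMO algorithm

Before SMO, people solved this optimization problem with quadratic programming solvers, which are complex and consume a lot of computing resources.

We will now use the SMO algorithm, starting with a simplified version that shows how it works. The simplified version can handle small datasets. In the next section we use the full version, which runs much faster than the simplified one.

Platt's SMO algorithm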

In 1998 John Platt published the SMO algorithm for training SVMs. SMO stands for Sequential Minimal Optimization. It breaks a large optimization problem into many small ones.

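SMO works as follows: on each iteration it picks two alphas to optimize. Once a suitable pair is found, it increases one and decreases the other. "Suitable" means the pair meets two conditions: both alphas must lie outside their margin boundary, and neither has already been clamped or bounded.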
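To make the "increase one, decrease the other" step concrete, here is a minimal sketch of how a pair of alphas can be moved together. It assumes the box constraint 0 ≤ α ≤ C and the equality constraint Σ α_i·label^(i) = 0 of the standard SVM dual. The function names `pair_bounds` and `update_pair` and all numbers are illustrative only.

```python
def pair_bounds(alpha_i, alpha_j, y_i, y_j, C):
    """Range [L, H] that alpha_j may take so both alphas stay in [0, C]."""
    if y_i != y_j:
        return max(0.0, alpha_j - alpha_i), min(C, C + alpha_j - alpha_i)
    return max(0.0, alpha_j + alpha_i - C), min(C, alpha_j + alpha_i)

def update_pair(alpha_i, alpha_j, y_i, y_j, C, proposed_alpha_j):
    """Clip the proposed alpha_j to [L, H], then shift alpha_i to compensate."""
    L, H = pair_bounds(alpha_i, alpha_j, y_i, y_j, C)
    new_j = min(max(proposed_alpha_j, L), H)
    # alpha_i moves by the same amount, in the direction that keeps
    # y_i*alpha_i + y_j*alpha_j unchanged
    new_i = alpha_i + y_i * y_j * (alpha_j - new_j)
    return new_i, new_j

C = 0.6
a_i, a_j, y_i, y_j = 0.2, 0.3, 1, -1
new_i, new_j = update_pair(a_i, a_j, y_i, y_j, C, proposed_alpha_j=0.9)
print(new_i, new_j)
# The weighted sum is the same before and after (up to rounding)
print(y_i * a_i + y_j * a_j, y_i * new_i + y_j * new_j)
```

The proposed value 0.9 exceeds the upper bound, so `alpha_j` is clipped to C and `alpha_i` absorbs the difference.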

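Solving small datasets with the simplified SMO

The full Platt SMO algorithm needs more code. The simplified version is shorter but takes much longer to run. The outer loop of the Platt SMO algorithm determines the best alphas to optimize, and the simplified version skips this step. It first loops over every alpha in the dataset and then picks a second alpha at random from the remaining ones to form a pair. One important point is that the two alphas must be changed at the same time, because they are subject to the constraint \(\sum{\alpha_i \cdot label^{(i)}} = 0\). Changing a single alpha would violate this constraint, so we always change two together.

To do this, we create one helper function that randomly selects an integer from a range, and another that clips a value that falls outside a given range.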

import numpy as np

def loadDataSet(fileName):
    """Read tab-separated rows of the form x1, x2, label."""
    dataMat = []; labelMat = []
    with open(fileName) as fr:
        for line in fr:
            lineArr = line.strip().split('\t')
            dataMat.append([float(lineArr[0]), float(lineArr[1])])
            labelMat.append(float(lineArr[2]))
    return dataMat, labelMat

def selectJrand(i, m):
    """Return a random index j in [0, m) with j != i."""
    j = i
    while j == i:
        j = int(np.random.uniform(0, m))
    return j

def clipAlpha(aj, H, L):
    """Clip aj so that L <= aj <= H."""
    if aj > H:
        aj = H
    if L > aj:
        aj = L
    return aj

def smoSimple(dataMatIn, classLabels, C, toler, maxIter):
    # np.asmatrix is used in place of the np.mat alias, which newer NumPy releases no longer provide
    dataMatrix = np.asmatrix(dataMatIn); labelMat = np.asmatrix(classLabels).transpose()
    b = 0; m, n = np.shape(dataMatrix)
    alphas = np.asmatrix(np.zeros((m, 1)))
    iter = 0  # counts consecutive passes in which no alpha pair changed
    while iter < maxIter:
        alphaPairsChanged = 0
        for i in range(m):
            # prediction and error for sample i
            fXi = float(np.multiply(alphas, labelMat).T*(dataMatrix*dataMatrix[i,:].T)) + b
            Ei = fXi - float(labelMat[i])
            # optimize alphas[i] only if its error exceeds the tolerance and it can still move
            if ((labelMat[i]*Ei < -toler) and (alphas[i] < C)) or \
               ((labelMat[i]*Ei > toler) and (alphas[i] > 0)):
                j = selectJrand(i, m)
                fXj = float(np.multiply(alphas, labelMat).T*(dataMatrix*dataMatrix[j,:].T)) + b
                Ej = fXj - float(labelMat[j])
                alphaIold = alphas[i].copy()
                alphaJold = alphas[j].copy()
                # L and H bound alphas[j] so that both alphas stay within [0, C]
                if labelMat[i] != labelMat[j]:
                    L = max(0, alphas[j] - alphas[i])
                    H = min(C, C + alphas[j] - alphas[i])
                else:
                    L = max(0, alphas[j] + alphas[i] - C)
                    H = min(C, alphas[j] + alphas[i])
                if L == H:
                    print("L==H")
                    continue
                # eta = 2*<xi,xj> - <xi,xi> - <xj,xj>; it must be negative for the update below
                eta = 2.0 * dataMatrix[i,:]*dataMatrix[j,:].T - \
                      dataMatrix[i,:]*dataMatrix[i,:].T - \
                      dataMatrix[j,:]*dataMatrix[j,:].T
                if eta >= 0:
                    print("eta>=0")
                    continue
                alphas[j] -= labelMat[j]*(Ei - Ej)/eta
                alphas[j] = clipAlpha(alphas[j], H, L)
                if abs(alphas[j] - alphaJold) < 0.00001:
                    print("j not moving enough")
                    continue
                # move alphas[i] by the same amount in the opposite direction
                alphas[i] += labelMat[j]*labelMat[i]*\
                             (alphaJold - alphas[j])
                # candidate thresholds derived from each of the two updated alphas
                b1 = b - Ei - labelMat[i]*(alphas[i]-alphaIold)*\
                     dataMatrix[i,:]*dataMatrix[i,:].T - \
                     labelMat[j]*(alphas[j]-alphaJold)*\
                     dataMatrix[i,:]*dataMatrix[j,:].T
                b2 = b - Ej - labelMat[i]*(alphas[i]-alphaIold)*\
                     dataMatrix[i,:]*dataMatrix[j,:].T - \
                     labelMat[j]*(alphas[j]-alphaJold)*\
                     dataMatrix[j,:]*dataMatrix[j,:].T
                if (0 < alphas[i]) and (C > alphas[i]): b = b1
                elif (0 < alphas[j]) and (C > alphas[j]): b = b2
                else: b = (b1 + b2)/2.0
                alphaPairsChanged += 1
                print("iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
        # stop only after maxIter consecutive passes without any change
        if alphaPairsChanged == 0: iter += 1
        else: iter = 0
        print("iteration number: %d" % iter)
    return b, alphas

if __name__ == '__main__':
    dataArr, labelArr = loadDataSet('testSet.txt')
    print(dataArr)
    b, alphas = smoSimple(dataArr, labelArr, 0.6, 0.01, 40)
    print(b, alphas[alphas>0])
    # print the support vectors (points with non-zero alpha)
    for i in range(len(dataArr)):
        if alphas[i] > 0.0: print(dataArr[i], labelArr[i])

Speeding up optimization with the full Platt SMO algorithm

import numpy as np

def loadDataSet(fileName):
    """Read tab-separated rows of the form x1, x2, label."""
    dataMat = []; labelMat = []
    with open(fileName) as fr:
        for line in fr:
            lineArr = line.strip().split('\t')
            dataMat.append([float(lineArr[0]), float(lineArr[1])])
            labelMat.append(float(lineArr[2]))
    return dataMat, labelMat

class optStruct:
    """Holds all values shared by the SMO helper functions."""
    def __init__(self, dataMatIn, classLabels, C, toler):
        self.X = dataMatIn
        self.labelMat = classLabels
        self.C = C
        self.tol = toler
        self.m = np.shape(dataMatIn)[0]
        # np.asmatrix is used in place of the np.mat alias, which newer NumPy releases no longer provide
        self.alphas = np.asmatrix(np.zeros((self.m,1)))
        self.b = 0
        # error cache: column 0 is a "valid" flag, column 1 is the cached error
        self.eCache = np.asmatrix(np.zeros((self.m,2)))

def calcEk(oS, k):
    """Error of the current model on sample k, returned as a plain float."""
    fXk = float(np.multiply(oS.alphas,oS.labelMat).T*\
                (oS.X*oS.X[k,:].T) + oS.b)
    Ek = fXk - float(oS.labelMat[k])
    return Ek

def selectJ(i, oS, Ei):
    """Choose the second alpha: the cached entry that maximizes |Ei - Ek|."""
    maxK = -1; maxDeltaE = 0; Ej = 0
    oS.eCache[i] = [1,Ei]
    validEcacheList = np.nonzero(oS.eCache[:,0].A)[0]
    if len(validEcacheList) > 1:
        for k in validEcacheList:
            if k == i: continue
            Ek = calcEk(oS, k)
            deltaE = abs(Ei - Ek)
            if deltaE > maxDeltaE:
                maxK = k; maxDeltaE = deltaE; Ej = Ek
        return maxK, Ej
    else:
        # first pass: no cached errors yet, so pick j at random
        j = selectJrand(i, oS.m)
        Ej = calcEk(oS, j)
    return j, Ej

def updateEk(oS, k):
    """Recompute the error for sample k and store it in the cache."""
    Ek = calcEk(oS, k)
    oS.eCache[k] = [1,Ek]

def selectJrand(i, m):
    """Return a random index j in [0, m) with j != i."""
    j = i
    while j == i:
        j = int(np.random.uniform(0, m))
    return j

def clipAlpha(aj, H, L):
    """Clip aj so that L <= aj <= H."""
    if aj > H:
        aj = H
    if L > aj:
        aj = L
    return aj

def innerL(i, oS):
    """Try to optimize the pair (i, j); return 1 if the pair changed, else 0."""
    Ei = calcEk(oS, i)
    if ((oS.labelMat[i]*Ei < -oS.tol) and (oS.alphas[i] < oS.C)) or\
       ((oS.labelMat[i]*Ei > oS.tol) and (oS.alphas[i] > 0)):
        j, Ej = selectJ(i, oS, Ei)
        alphaIold = oS.alphas[i].copy(); alphaJold = oS.alphas[j].copy()
        # L and H bound alphas[j] so that both alphas stay within [0, C]
        if oS.labelMat[i] != oS.labelMat[j]:
            L = max(0, oS.alphas[j] - oS.alphas[i])
            H = min(oS.C, oS.C + oS.alphas[j] - oS.alphas[i])
        else:
            L = max(0, oS.alphas[j] + oS.alphas[i] - oS.C)
            H = min(oS.C, oS.alphas[j] + oS.alphas[i])
        if L == H:
            print("L==H")
            return 0
        eta = 2.0 * oS.X[i,:]*oS.X[j,:].T - oS.X[i,:]*oS.X[i,:].T - \
              oS.X[j,:]*oS.X[j,:].T
        if eta >= 0:
            print("eta>=0")
            return 0
        oS.alphas[j] -= oS.labelMat[j]*(Ei - Ej)/eta
        oS.alphas[j] = clipAlpha(oS.alphas[j], H, L)
        updateEk(oS, j)
        if abs(oS.alphas[j] - alphaJold) < 0.00001:
            print("j not moving enough")
            return 0
        # move alphas[i] by the same amount in the opposite direction
        oS.alphas[i] += oS.labelMat[j]*oS.labelMat[i]*\
                        (alphaJold - oS.alphas[j])
        updateEk(oS, i)
        b1 = oS.b - Ei - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*\
             oS.X[i,:]*oS.X[i,:].T - oS.labelMat[j]*\
             (oS.alphas[j]-alphaJold)*oS.X[i,:]*oS.X[j,:].T
        b2 = oS.b - Ej - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*\
             oS.X[i,:]*oS.X[j,:].T - oS.labelMat[j]*\
             (oS.alphas[j]-alphaJold)*oS.X[j,:]*oS.X[j,:].T
        if (0 < oS.alphas[i]) and (oS.C > oS.alphas[i]): oS.b = b1
        elif (0 < oS.alphas[j]) and (oS.C > oS.alphas[j]): oS.b = b2
        else: oS.b = (b1 + b2)/2.0
        return 1
    else:
        return 0

def smoP(dataMatIn, classLabels, C, toler, maxIter, kTup=('lin', 0)):
    # kTup is unused here; it keeps the signature identical to the kernel version
    oS = optStruct(np.asmatrix(dataMatIn), np.asmatrix(classLabels).transpose(), C, toler)
    iter = 0
    entireSet = True; alphaPairsChanged = 0
    # alternate between a pass over all samples and passes over non-bound alphas
    while (iter < maxIter) and ((alphaPairsChanged > 0) or (entireSet)):
        alphaPairsChanged = 0
        if entireSet:
            for i in range(oS.m):
                alphaPairsChanged += innerL(i, oS)
                print("fullSet, iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
            iter += 1
        else:
            nonBoundIs = np.nonzero((oS.alphas.A > 0) * (oS.alphas.A < C))[0]
            for i in nonBoundIs:
                alphaPairsChanged += innerL(i, oS)
                print("non-bound, iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
            iter += 1
        if entireSet: entireSet = False
        elif alphaPairsChanged == 0: entireSet = True
        print("iteration number: %d" % iter)
    return oS.b, oS.alphas

if __name__ == '__main__':
    dataArr, labelArr = loadDataSet('testSet.txt')
    print(dataArr)
    b, alphas = smoP(dataArr, labelArr, 0.6, 0.01, 40)
    print(b, alphas[alphas>0])
    # print the support vectors (points with non-zero alpha)
    for i in range(len(dataArr)):
        if alphas[i] > 0.0: print(dataArr[i], labelArr[i])
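Once the alphas are known, the weight vector of a linear SVM can be recovered as \(\mathbf{w} = \sum_i \alpha_i \cdot label^{(i)} \cdot \mathbf{x}^{(i)}\), and a new point is classified by the sign of \(\mathbf{w}^T\mathbf{x} + b\). The helper `calc_ws` below is a sketch that is not part of the listing above, and the tiny dataset, alphas, and b are made-up values that keep the example self-contained.

```python
import numpy as np

def calc_ws(alphas, data, labels):
    """Return w = sum_i alpha_i * label_i * x_i as a 1-D array."""
    X = np.asarray(data, dtype=float)             # shape (m, n)
    y = np.asarray(labels, dtype=float).ravel()   # shape (m,)
    a = np.asarray(alphas, dtype=float).ravel()   # shape (m,)
    return (a * y) @ X

# Hypothetical values: only the first two points have non-zero alpha
data = [[1.0, 1.0], [3.0, 3.0], [0.0, 0.5]]
labels = [-1.0, 1.0, -1.0]
alphas = [0.25, 0.25, 0.0]
b = -2.0

w = calc_ws(alphas, data, labels)
print(w)                                       # [0.5 0.5]
print(np.sign(w @ np.array([4.0, 4.0]) + b))   # 1.0
```

Only the points with non-zero alpha (the support vectors) contribute to the sum, which is why the third point has no effect on `w`.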

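Using kernels for more complex data

Kernel functions let us handle data that is not linearly separable. The radial basis function (RBF) is one commonly used kernel.

Using the radial basis function as a kernel

A radial basis function is a function that operates on the distance between vectors. Its Gaussian version is:

\[k(\mathbf{x},\mathbf{y}) = \exp\left(\frac{-\|\mathbf{x}-\mathbf{y}\|^2}{2\sigma^2}\right)\]

where \(\sigma\) is a user-defined parameter that determines the reach, that is, how quickly the function value falls off to 0. Note that the `kernelTrans` function in this section's listing divides by \(\sigma^2\) rather than \(2\sigma^2\), which only rescales \(\sigma\).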
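To illustrate how \(\sigma\) controls the reach, the sketch below evaluates the Gaussian kernel \(\exp(-\|\mathbf{x}-\mathbf{y}\|^2 / (2\sigma^2))\) for one pair of points at several values of \(\sigma\). The function name `rbf_kernel` and the sample points are assumptions made for this example.

```python
import numpy as np

def rbf_kernel(x, y, sigma):
    """Gaussian RBF kernel: exp(-||x - y||^2 / (2 * sigma^2))."""
    diff = np.asarray(x, dtype=float) - np.asarray(y, dtype=float)
    return float(np.exp(-(diff @ diff) / (2.0 * sigma ** 2)))

x = [0.0, 0.0]
y = [1.0, 1.0]   # squared distance to x is 2
for sigma in (0.1, 1.0, 10.0):
    print(sigma, rbf_kernel(x, y, sigma))
```

With a small \(\sigma\) the kernel value is practically 0 even for nearby points, while a large \(\sigma\) keeps it close to 1, so each support vector influences a wider region.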

import numpy as np

def loadDataSet(fileName):
    """Read tab-separated rows of the form x1, x2, label."""
    dataMat = []; labelMat = []
    with open(fileName) as fr:
        for line in fr:
            lineArr = line.strip().split('\t')
            dataMat.append([float(lineArr[0]), float(lineArr[1])])
            labelMat.append(float(lineArr[2]))
    return dataMat, labelMat

def selectJ(i, oS, Ei):
    """Choose the second alpha: the cached entry that maximizes |Ei - Ek|."""
    maxK = -1; maxDeltaE = 0; Ej = 0
    oS.eCache[i] = [1,Ei]
    validEcacheList = np.nonzero(oS.eCache[:,0].A)[0]
    if len(validEcacheList) > 1:
        for k in validEcacheList:
            if k == i: continue
            Ek = calcEk(oS, k)
            deltaE = abs(Ei - Ek)
            if deltaE > maxDeltaE:
                maxK = k; maxDeltaE = deltaE; Ej = Ek
        return maxK, Ej
    else:
        # first pass: no cached errors yet, so pick j at random
        j = selectJrand(i, oS.m)
        Ej = calcEk(oS, j)
    return j, Ej

def selectJrand(i, m):
    """Return a random index j in [0, m) with j != i."""
    j = i
    while j == i:
        j = int(np.random.uniform(0, m))
    return j

def clipAlpha(aj, H, L):
    """Clip aj so that L <= aj <= H."""
    if aj > H:
        aj = H
    if L > aj:
        aj = L
    return aj

def updateEk(oS, k):
    """Recompute the error for sample k and store it in the cache."""
    Ek = calcEk(oS, k)
    oS.eCache[k] = [1,Ek]

def smoP(dataMatIn, classLabels, C, toler, maxIter, kTup=('lin', 0)):
    # np.asmatrix is used in place of the np.mat alias, which newer NumPy releases no longer provide
    oS = optStruct(np.asmatrix(dataMatIn), np.asmatrix(classLabels).transpose(), C, toler, kTup)
    iter = 0
    entireSet = True; alphaPairsChanged = 0
    # alternate between a pass over all samples and passes over non-bound alphas
    while (iter < maxIter) and ((alphaPairsChanged > 0) or (entireSet)):
        alphaPairsChanged = 0
        if entireSet:
            for i in range(oS.m):
                alphaPairsChanged += innerL(i, oS)
                print("fullSet, iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
            iter += 1
        else:
            nonBoundIs = np.nonzero((oS.alphas.A > 0) * (oS.alphas.A < C))[0]
            for i in nonBoundIs:
                alphaPairsChanged += innerL(i, oS)
                print("non-bound, iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
            iter += 1
        if entireSet: entireSet = False
        elif alphaPairsChanged == 0: entireSet = True
        print("iteration number: %d" % iter)
    return oS.b, oS.alphas

def kernelTrans(X, A, kTup):
    """Kernel values between every row of X and the single row A, as an m x 1 matrix."""
    m, n = np.shape(X)
    K = np.asmatrix(np.zeros((m,1)))
    if kTup[0] == 'lin':
        K = X * A.T
    elif kTup[0] == 'rbf':
        for j in range(m):
            deltaRow = X[j,:] - A
            K[j] = deltaRow*deltaRow.T
        # kTup[1] is sigma; this divides by sigma^2 (no factor of 2)
        K = np.exp(K/(-1*kTup[1]**2))
    else:
        raise NameError('Houston We Have a Problem -- That Kernel is not recognized')
    return K

class optStruct:
    """Holds all values shared by the SMO helper functions, including the kernel matrix."""
    def __init__(self, dataMatIn, classLabels, C, toler, kTup):
        self.X = dataMatIn
        self.labelMat = classLabels
        self.C = C
        self.tol = toler
        self.m = np.shape(dataMatIn)[0]
        self.alphas = np.asmatrix(np.zeros((self.m,1)))
        self.b = 0
        # error cache: column 0 is a "valid" flag, column 1 is the cached error
        self.eCache = np.asmatrix(np.zeros((self.m,2)))
        # precompute the full m x m kernel matrix once
        self.K = np.asmatrix(np.zeros((self.m,self.m)))
        for i in range(self.m):
            self.K[:,i] = kernelTrans(self.X, self.X[i,:], kTup)

def innerL(i, oS):
    """Try to optimize the pair (i, j); return 1 if the pair changed, else 0."""
    Ei = calcEk(oS, i)
    if ((oS.labelMat[i]*Ei < -oS.tol) and (oS.alphas[i] < oS.C)) or\
       ((oS.labelMat[i]*Ei > oS.tol) and (oS.alphas[i] > 0)):
        j, Ej = selectJ(i, oS, Ei)
        alphaIold = oS.alphas[i].copy(); alphaJold = oS.alphas[j].copy()
        # L and H bound alphas[j] so that both alphas stay within [0, C]
        if oS.labelMat[i] != oS.labelMat[j]:
            L = max(0, oS.alphas[j] - oS.alphas[i])
            H = min(oS.C, oS.C + oS.alphas[j] - oS.alphas[i])
        else:
            L = max(0, oS.alphas[j] + oS.alphas[i] - oS.C)
            H = min(oS.C, oS.alphas[j] + oS.alphas[i])
        if L == H:
            print("L==H")
            return 0
        # inner products are replaced by entries of the kernel matrix
        eta = 2.0 * oS.K[i,j] - oS.K[i,i] - oS.K[j,j]
        if eta >= 0:
            print("eta>=0")
            return 0
        oS.alphas[j] -= oS.labelMat[j]*(Ei - Ej)/eta
        oS.alphas[j] = clipAlpha(oS.alphas[j], H, L)
        updateEk(oS, j)
        if abs(oS.alphas[j] - alphaJold) < 0.00001:
            print("j not moving enough")
            return 0
        oS.alphas[i] += oS.labelMat[j]*oS.labelMat[i]*\
                        (alphaJold - oS.alphas[j])
        updateEk(oS, i)
        b1 = oS.b - Ei - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*oS.K[i,i] -\
             oS.labelMat[j]*(oS.alphas[j]-alphaJold)*oS.K[i,j]
        b2 = oS.b - Ej - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*oS.K[i,j] -\
             oS.labelMat[j]*(oS.alphas[j]-alphaJold)*oS.K[j,j]
        if (0 < oS.alphas[i]) and (oS.C > oS.alphas[i]): oS.b = b1
        elif (0 < oS.alphas[j]) and (oS.C > oS.alphas[j]): oS.b = b2
        else: oS.b = (b1 + b2)/2.0
        return 1
    else:
        return 0

def calcEk(oS, k):
    """Error of the current model on sample k, using the cached kernel column."""
    fXk = float(np.multiply(oS.alphas,oS.labelMat).T*oS.K[:,k] + oS.b)
    Ek = fXk - float(oS.labelMat[k])
    return Ek

def testRbf(k1=1.3):
    dataArr, labelArr = loadDataSet('testSetRBF.txt')
    b, alphas = smoP(dataArr, labelArr, 200, 0.0001, 10000, ('rbf', k1))
    datMat = np.asmatrix(dataArr); labelMat = np.asmatrix(labelArr).transpose()
    # keep only the support vectors; they are all that prediction needs
    svInd = np.nonzero(alphas.A>0)[0]
    sVs = datMat[svInd]
    labelSV = labelMat[svInd]
    print("there are %d Support Vectors" % np.shape(sVs)[0])
    m, n = np.shape(datMat)
    errorCount = 0
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], ('rbf', k1))
        predict = kernelEval.T * np.multiply(labelSV, alphas[svInd]) + b
        if np.sign(predict) != np.sign(labelArr[i]): errorCount += 1
    print("the training error rate is: %f" % (float(errorCount)/m))
    # evaluate on the held-out test set with the same support vectors
    dataArr, labelArr = loadDataSet('testSetRBF2.txt')
    errorCount = 0
    datMat = np.asmatrix(dataArr); labelMat = np.asmatrix(labelArr).transpose()
    m, n = np.shape(datMat)
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], ('rbf', k1))
        predict = kernelEval.T * np.multiply(labelSV, alphas[svInd]) + b
        if np.sign(predict) != np.sign(labelArr[i]): errorCount += 1
    print("the test error rate is: %f" % (float(errorCount)/m))

if __name__ == '__main__':
    testRbf()

Example: revisiting handwritten digit classification

import numpy as np
import os

def img2vector(filename):
    """Flatten a 32x32 text image of 0/1 characters into a 1x1024 vector."""
    returnVect = np.zeros((1,1024))
    with open(filename) as fr:
        for i in range(32):
            lineStr = fr.readline()
            for j in range(32):
                returnVect[0,32*i+j] = int(lineStr[j])
    return returnVect

def smoP(dataMatIn, classLabels, C, toler, maxIter, kTup=('lin', 0)):
    # np.asmatrix is used in place of the np.mat alias, which newer NumPy releases no longer provide
    oS = optStruct(np.asmatrix(dataMatIn), np.asmatrix(classLabels).transpose(), C, toler, kTup)
    iter = 0
    entireSet = True; alphaPairsChanged = 0
    # alternate between a pass over all samples and passes over non-bound alphas
    while (iter < maxIter) and ((alphaPairsChanged > 0) or (entireSet)):
        alphaPairsChanged = 0
        if entireSet:
            for i in range(oS.m):
                alphaPairsChanged += innerL(i, oS)
                print("fullSet, iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
            iter += 1
        else:
            nonBoundIs = np.nonzero((oS.alphas.A > 0) * (oS.alphas.A < C))[0]
            for i in nonBoundIs:
                alphaPairsChanged += innerL(i, oS)
                print("non-bound, iter: %d i:%d, pairs changed %d" % (iter, i, alphaPairsChanged))
            iter += 1
        if entireSet: entireSet = False
        elif alphaPairsChanged == 0: entireSet = True
        print("iteration number: %d" % iter)
    return oS.b, oS.alphas

def kernelTrans(X, A, kTup):
    """Kernel values between every row of X and the single row A, as an m x 1 matrix."""
    m, n = np.shape(X)
    K = np.asmatrix(np.zeros((m,1)))
    if kTup[0] == 'lin':
        K = X * A.T
    elif kTup[0] == 'rbf':
        for j in range(m):
            deltaRow = X[j,:] - A
            K[j] = deltaRow*deltaRow.T
        # kTup[1] is sigma; this divides by sigma^2 (no factor of 2)
        K = np.exp(K/(-1*kTup[1]**2))
    else:
        raise NameError('Houston We Have a Problem -- That Kernel is not recognized')
    return K

class optStruct:
    """Holds all values shared by the SMO helper functions, including the kernel matrix."""
    def __init__(self, dataMatIn, classLabels, C, toler, kTup):
        self.X = dataMatIn
        self.labelMat = classLabels
        self.C = C
        self.tol = toler
        self.m = np.shape(dataMatIn)[0]
        self.alphas = np.asmatrix(np.zeros((self.m,1)))
        self.b = 0
        # error cache: column 0 is a "valid" flag, column 1 is the cached error
        self.eCache = np.asmatrix(np.zeros((self.m,2)))
        # precompute the full m x m kernel matrix once
        self.K = np.asmatrix(np.zeros((self.m,self.m)))
        for i in range(self.m):
            self.K[:,i] = kernelTrans(self.X, self.X[i,:], kTup)

def innerL(i, oS):
    """Try to optimize the pair (i, j); return 1 if the pair changed, else 0."""
    Ei = calcEk(oS, i)
    if ((oS.labelMat[i]*Ei < -oS.tol) and (oS.alphas[i] < oS.C)) or\
       ((oS.labelMat[i]*Ei > oS.tol) and (oS.alphas[i] > 0)):
        j, Ej = selectJ(i, oS, Ei)
        alphaIold = oS.alphas[i].copy(); alphaJold = oS.alphas[j].copy()
        # L and H bound alphas[j] so that both alphas stay within [0, C]
        if oS.labelMat[i] != oS.labelMat[j]:
            L = max(0, oS.alphas[j] - oS.alphas[i])
            H = min(oS.C, oS.C + oS.alphas[j] - oS.alphas[i])
        else:
            L = max(0, oS.alphas[j] + oS.alphas[i] - oS.C)
            H = min(oS.C, oS.alphas[j] + oS.alphas[i])
        if L == H:
            print("L==H")
            return 0
        eta = 2.0 * oS.K[i,j] - oS.K[i,i] - oS.K[j,j]
        if eta >= 0:
            print("eta>=0")
            return 0
        oS.alphas[j] -= oS.labelMat[j]*(Ei - Ej)/eta
        oS.alphas[j] = clipAlpha(oS.alphas[j], H, L)
        updateEk(oS, j)
        if abs(oS.alphas[j] - alphaJold) < 0.00001:
            print("j not moving enough")
            return 0
        oS.alphas[i] += oS.labelMat[j]*oS.labelMat[i]*\
                        (alphaJold - oS.alphas[j])
        updateEk(oS, i)
        b1 = oS.b - Ei - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*oS.K[i,i] -\
             oS.labelMat[j]*(oS.alphas[j]-alphaJold)*oS.K[i,j]
        b2 = oS.b - Ej - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*oS.K[i,j] -\
             oS.labelMat[j]*(oS.alphas[j]-alphaJold)*oS.K[j,j]
        if (0 < oS.alphas[i]) and (oS.C > oS.alphas[i]): oS.b = b1
        elif (0 < oS.alphas[j]) and (oS.C > oS.alphas[j]): oS.b = b2
        else: oS.b = (b1 + b2)/2.0
        return 1
    else:
        return 0

def calcEk(oS, k):
    """Error of the current model on sample k, using the cached kernel column."""
    fXk = float(np.multiply(oS.alphas,oS.labelMat).T*oS.K[:,k] + oS.b)
    Ek = fXk - float(oS.labelMat[k])
    return Ek

def selectJ(i, oS, Ei):
    """Choose the second alpha: the cached entry that maximizes |Ei - Ek|."""
    maxK = -1; maxDeltaE = 0; Ej = 0
    oS.eCache[i] = [1,Ei]
    validEcacheList = np.nonzero(oS.eCache[:,0].A)[0]
    if len(validEcacheList) > 1:
        for k in validEcacheList:
            if k == i: continue
            Ek = calcEk(oS, k)
            deltaE = abs(Ei - Ek)
            if deltaE > maxDeltaE:
                maxK = k; maxDeltaE = deltaE; Ej = Ek
        return maxK, Ej
    else:
        # first pass: no cached errors yet, so pick j at random
        j = selectJrand(i, oS.m)
        Ej = calcEk(oS, j)
    return j, Ej

def selectJrand(i, m):
    """Return a random index j in [0, m) with j != i."""
    j = i
    while j == i:
        j = int(np.random.uniform(0, m))
    return j

def clipAlpha(aj, H, L):
    """Clip aj so that L <= aj <= H."""
    if aj > H:
        aj = H
    if L > aj:
        aj = L
    return aj

def updateEk(oS, k):
    """Recompute the error for sample k and store it in the cache."""
    Ek = calcEk(oS, k)
    oS.eCache[k] = [1,Ek]

def loadImages(dirName):
    """Load every digit file in dirName; files are named <digit>_<index>.txt."""
    hwLabels = []
    trainingFileList = os.listdir(dirName)
    m = len(trainingFileList)
    trainingMat = np.zeros((m,1024))
    for i in range(m):
        fileNameStr = trainingFileList[i]
        fileStr = fileNameStr.split('.')[0]
        classNumStr = int(fileStr.split('_')[0])
        # binary problem: digit 9 is labeled -1, every other digit +1
        if classNumStr == 9: hwLabels.append(-1)
        else: hwLabels.append(1)
        trainingMat[i,:] = img2vector('%s/%s' % (dirName, fileNameStr))
    return trainingMat, hwLabels

def testDigits(kTup=('rbf', 10)):
    dataArr, labelArr = loadImages('digits/trainingDigits')
    b, alphas = smoP(dataArr, labelArr, 200, 0.0001, 300, kTup)
    datMat = np.asmatrix(dataArr); labelMat = np.asmatrix(labelArr).transpose()
    # keep only the support vectors; they are all that prediction needs
    svInd = np.nonzero(alphas.A>0)[0]
    sVs = datMat[svInd]
    labelSV = labelMat[svInd]
    print("there are %d Support Vectors" % np.shape(sVs)[0])
    m, n = np.shape(datMat)
    errorCount = 0
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], kTup)
        predict = kernelEval.T * np.multiply(labelSV, alphas[svInd]) + b
        if np.sign(predict) != np.sign(labelArr[i]): errorCount += 1
    print("the training error rate is: %f" % (float(errorCount)/m))
    # evaluate on the test digits with the same support vectors
    dataArr, labelArr = loadImages('digits/testDigits')
    errorCount = 0
    datMat = np.asmatrix(dataArr); labelMat = np.asmatrix(labelArr).transpose()
    m, n = np.shape(datMat)
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], kTup)
        predict = kernelEval.T * np.multiply(labelSV, alphas[svInd]) + b
        if np.sign(predict) != np.sign(labelArr[i]): errorCount += 1
    print("the test error rate is: %f" % (float(errorCount)/m))

if __name__ == '__main__':
    testDigits()
