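Timing Analysis of the TCP/IP Protocol Stack in the Linux Kernel

I. Research Requirements

Building on a solid understanding of Linux kernel task scheduling mechanisms (interrupt handling, softirq, tasklet, workqueue (wq), kernel threads, etc.), analyze the runtime task entities of the TCP/IP protocol stack that take part in send and recv, and how they cooperate with each other over time.

Cover compilation, deployment, execution, evaluation, principles, source-code analysis, tracing and debugging, etc.;

Sequence diagrams should be included.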

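II. Overview of Linux

1. Overview of the Linux Kernel

1.1 Tasks of the Linux Kernel

1. Technically, the kernel is an intermediate layer between hardware and software. It passes requests from applications to the hardware and acts as the low-level driver, addressing the various devices and components in the system.

2. From the application's point of view, applications have no direct contact with the hardware, only with the kernel; the kernel is the lowest layer an application knows about. In practice, the kernel abstracts away the hardware details.

3. The kernel is a resource manager. It allocates the available shared resources (CPU time, disk space, network connections, etc.) among the processes in the system.

4. The kernel also acts like a library that provides a set of system-oriented commands. To an application, a system call looks just like an ordinary function call.

1.2 Structure of the Linux Kernel

As shown in the figure above, the Linux kernel consists mainly of five subsystems:

1. Process scheduling subsystem: controls processes' access to the CPU and uses an appropriate scheduling policy so that processes share the CPU reasonably.

2. Memory management subsystem: allows multiple processes to share memory safely. Linux memory management supports virtual memory: the total size of a running program's code, data and stack may exceed the physical memory, because the operating system keeps only the program blocks currently in use in memory and keeps the rest on disk. When necessary, the operating system swaps program blocks between disk and memory.

3. Virtual file system subsystem: hides the details of the various hardware devices and provides a uniform interface to all of them, which lets the kernel support many file-system formats, including those compatible with other operating systems.

4. Network interface subsystem: provides access to various network standards and support for various network hardware.

5. Inter-process communication subsystem: supports the various mechanisms for communication between processes.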

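2. Introduction to Protocol Stacks

Two layered models are commonly used for computer networks: the OSI model and the TCP/IP model.

2.1 The OSI Model

The figure above shows the OSI model. It has seven layers; from top to bottom they are the application, presentation, session, transport, network, data link and physical layers, and the figure briefly describes the role of each. OSI is a well-defined set of protocol specifications with many optional parts that accomplish similar tasks. It defines the layered structure of open systems, the relationships between the layers and the tasks each layer may perform, and serves as a framework for coordinating and organizing the services the layers provide. However, the OSI reference model does not say how to implement anything; it describes concepts used to coordinate the development of standards for inter-process communication. In other words, the OSI reference model is not itself a standard but a conceptual framework used when developing standards.

2.2 The TCP/IP Model

The figure above shows how the TCP/IP model corresponds to the OSI model. From top to bottom, the TCP/IP model consists of the application layer, transport layer, network layer and network interface layer; the network interface layer is sometimes further split into the data link and physical layers, giving a five-layer form. The TCP/IP application layer corresponds to the OSI application, presentation and session layers, and the network interface layer corresponds to the OSI data link and physical layers. The remaining layers correspond to their OSI counterparts.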

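3 The Linux Kernel Protocol Stack

The Linux network stack is also designed in layers. It can be divided into five layers:

- System call interface layer: provides the socket system calls;

- Protocol-independent interface layer: the socket layer, which hides the differences between the underlying protocols so that the interface to the system call layer can stay simple;

- Network protocol implementation layer: the concrete implementations of the various network protocols;

- Device-independent driver interface layer: provides a set of common functions that low-level network device drivers use to interact with the upper-layer protocol stack;

- Device driver layer: communicates with the underlying physical device.

The structure is shown in the figure above.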

III. Experiments

1 Code Used in This Study

1.1 Server

```c
#include <stdio.h>      /* perror, printf, fflush */
#include <stdlib.h>     /* exit */
#include <string.h>     /* memset */
#include <unistd.h>     /* fork, close */
#include <sys/types.h>
#include <sys/wait.h>   /* waitpid, WNOHANG */
#include <sys/socket.h>
#include <netinet/in.h> /* struct sockaddr_in, INADDR_ANY */
#include <arpa/inet.h>  /* htons, inet_ntoa */

#define MYPORT 3490     /* port to listen on */
#define BACKLOG 10      /* length of the listen() pending-connection queue */

int main(void)
{
    int sockfd, new_fd;             /* listening socket, connected socket */
    struct sockaddr_in sa;          /* our own address */
    struct sockaddr_in their_addr;  /* address of the connecting peer */
    socklen_t sin_size;

    if ((sockfd = socket(PF_INET, SOCK_STREAM, 0)) == -1) {
        perror("socket");
        exit(1);
    }

    sa.sin_family = AF_INET;
    sa.sin_port = htons(MYPORT);        /* network byte order */
    sa.sin_addr.s_addr = INADDR_ANY;    /* accept connections on any local IP */
    memset(&(sa.sin_zero), 0, 8);       /* zero the rest of the struct */

    if (bind(sockfd, (struct sockaddr *)&sa, sizeof(sa)) == -1) {
        perror("bind");
        exit(1);
    }

    if (listen(sockfd, BACKLOG) == -1) {
        perror("listen");
        exit(1);
    }

    /* main loop */
    while (1) {
        sin_size = sizeof(struct sockaddr_in);
        new_fd = accept(sockfd, (struct sockaddr *)&their_addr, &sin_size);
        if (new_fd == -1) {
            perror("accept");
            continue;
        }
        printf("Got connection from %s\n", inet_ntoa(their_addr.sin_addr));
        fflush(stdout);     /* avoid the child re-flushing a copied stdio buffer */

        if (fork() == 0) {
            /* child process: it does not need the listening socket */
            close(sockfd);
            if (send(new_fd, "Hello, world!\n", 14, 0) == -1)
                perror("send");
            close(new_fd);
            exit(0);
        }
        close(new_fd);      /* the parent does not use the connected socket */

        /* reap all child processes that have already exited */
        while (waitpid(-1, NULL, WNOHANG) > 0)
            ;
    }

    close(sockfd);
    return 0;
}
```

1.2 Client

```c
#include <stdio.h>      /* printf, fprintf, perror */
#include <stdlib.h>     /* exit */
#include <string.h>     /* memset */
#include <unistd.h>     /* close */
#include <sys/types.h>
#include <sys/socket.h>
#include <netinet/in.h> /* struct sockaddr_in */
#include <arpa/inet.h>  /* htons */
#include <netdb.h>      /* gethostbyname, herror */

#define PORT 3490           /* server port */
#define MAXDATASIZE 100     /* maximum number of bytes read at once */

int main(int argc, char *argv[])
{
    int sockfd, numbytes;
    char buf[MAXDATASIZE];
    struct hostent *he;             /* host information */
    struct sockaddr_in server_addr; /* peer address */

    if (argc != 2) {
        fprintf(stderr, "usage: client hostname\n");
        exit(1);
    }

    /* get the host info */
    if ((he = gethostbyname(argv[1])) == NULL) {
        /* gethostbyname reports errors through h_errno, not errno, so
         * herror is used instead of perror. Both functions are obsolete;
         * new code should prefer getaddrinfo. */
        herror("gethostbyname");
        exit(1);
    }

    if ((sockfd = socket(PF_INET, SOCK_STREAM, 0)) == -1) {
        perror("socket");
        exit(1);
    }

    server_addr.sin_family = AF_INET;
    server_addr.sin_port = htons(PORT);     /* short, network byte order */
    server_addr.sin_addr = *((struct in_addr *)he->h_addr_list[0]);
    memset(&(server_addr.sin_zero), 0, 8);  /* zero the rest of the struct */

    if (connect(sockfd, (struct sockaddr *)&server_addr,
                sizeof(server_addr)) == -1) {
        perror("connect");
        exit(1);
    }

    /* read at most MAXDATASIZE - 1 bytes to leave room for the '\0' */
    if ((numbytes = recv(sockfd, buf, MAXDATASIZE - 1, 0)) == -1) {
        perror("recv");
        exit(1);
    }
    buf[numbytes] = '\0';
    printf("Received: %s", buf);

    close(sockfd);
    return 0;
}
```

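2 The send and recv Functions

2.1 send

Declaration:

```c
ssize_t send(int sockfd, const void *buff, size_t nbytes, int flags);
```

Parameters:

sockfd: descriptor of the sending socket

buff: buffer holding the data to send

nbytes: number of bytes to send

flags: usually 0

Procedure (blocking TCP socket on Linux):

send does not transmit anything itself. It only copies the data in buff into the socket's send buffer (the kernel's queue of sk_buffs for this socket); the TCP protocol transmits that data to the peer later.

If the send buffer has enough free space for nbytes, send copies the data and returns. Otherwise it copies as much as fits, sleeps until the protocol frees space (as the peer acknowledges data), and continues until all nbytes have been copied. A request larger than the whole send buffer therefore does not fail; it is simply copied in several rounds. (On a non-blocking socket, send instead returns the number of bytes copied so far, or -1 with errno set to EAGAIN/EWOULDBLOCK if nothing could be copied.)

On success, send returns the number of bytes copied. On error it returns -1 (the value Winsock calls SOCKET_ERROR) and sets errno. If the connection fails while send is waiting for buffer space, send returns -1 if nothing has been copied yet, and otherwise the number of bytes already copied (see the do_error path of tcp_sendmsg_locked below).

When send returns, the data is in the send buffer but has not necessarily reached the other end. If a network error occurs later during transmission, it is reported by a subsequent socket call, which then returns -1.

On Unix systems, if send is called on a connection that the peer has already closed or reset, the calling process receives a SIGPIPE signal, whose default action is to terminate the process. (Passing MSG_NOSIGNAL or ignoring SIGPIPE avoids this; send then fails with EPIPE.)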
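Because a blocking send can be interrupted by a signal and a non-blocking send may copy only part of the buffer, applications usually wrap send in a loop. The following is a minimal sketch; the helper name send_all is our own choice, not a standard API, and it assumes a connected stream socket.

```c
#include <errno.h>
#include <stddef.h>
#include <sys/types.h>
#include <sys/socket.h>

/* Hypothetical helper: keep calling send() until all len bytes have been
 * copied into the socket's send buffer. Returns 0 on success and -1 on
 * error, leaving errno as set by send(). */
int send_all(int sockfd, const void *buf, size_t len)
{
    const char *p = buf;

    while (len > 0) {
        ssize_t n = send(sockfd, p, len, 0);
        if (n == -1) {
            if (errno == EINTR)
                continue;       /* interrupted by a signal: retry */
            return -1;          /* EPIPE, ECONNRESET, EAGAIN, ... */
        }
        p += n;                 /* n more bytes are now in the kernel send buffer */
        len -= (size_t)n;
    }
    return 0;
}
```

A successful return only means the bytes were copied into the kernel; as described above, delivery errors surface on a later call.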

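2.2 recv

Declaration:

```c
ssize_t recv(int sockfd, void *buff, size_t nbytes, int flags);
```

Parameters:

sockfd: descriptor of the receiving socket

buff: buffer into which the received data is copied

nbytes: size of buff, i.e. the maximum number of bytes to receive

flags: usually 0

Procedure (blocking TCP socket on Linux):

recv does not receive data itself. The protocol receives data in the kernel (in softirq context, as traced in section 4 below) and queues it in the socket's receive buffer; recv only copies it from there into buff. recv does not wait for the socket's send buffer to drain first.

If the receive buffer is empty, recv sleeps until data arrives, the peer closes the connection, or an error occurs. It then copies at most nbytes bytes into buff. If more data is queued than buff can hold, the rest stays in the receive buffer and further recv calls are needed to read it. Conversely, recv may return fewer bytes than requested, because TCP is a byte stream and does not preserve the boundaries of the sender's send calls.

recv returns the number of bytes copied. It returns 0 when the peer has closed the connection in an orderly way (a FIN was received and all data before it has been read), and -1 with errno set on error, for example ECONNRESET if the connection was reset.

recv does not raise SIGPIPE; on Unix systems that signal is generated only when writing (send/write) to a connection that has been closed.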
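Since recv may return fewer bytes than requested and returns 0 on orderly shutdown, a client that wants the whole response typically loops until recv returns 0. A minimal sketch, assuming a connected stream socket; recv_until_eof is a hypothetical helper name:

```c
#include <errno.h>
#include <stddef.h>
#include <sys/types.h>
#include <sys/socket.h>

/* Hypothetical helper: read from sockfd until the peer closes the
 * connection (recv returns 0) or buf is full. Returns the number of bytes
 * read, or -1 on error. */
ssize_t recv_until_eof(int sockfd, char *buf, size_t cap)
{
    size_t total = 0;

    while (total < cap) {
        ssize_t n = recv(sockfd, buf + total, cap - total, 0);
        if (n == 0)
            break;              /* orderly shutdown: the peer sent FIN */
        if (n == -1) {
            if (errno == EINTR)
                continue;       /* interrupted by a signal: retry */
            return -1;          /* e.g. ECONNRESET */
        }
        total += (size_t)n;     /* short reads are normal on a byte stream */
    }
    return (ssize_t)total;
}
```

With the sample server, which sends "Hello, world!\n" and then closes the connection, this loop would return 14.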

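3 Kernel Call Flow of send

The server and client communicate through sockets. When the send system call is used, the data passes from top to bottom through the application layer, the transport layer, the network layer and the network interface (data link) layer. We examine each in turn below.

3.1 Application Layer

1 The network application calls the socket API socket(int family, int type, int protocol) to create a socket. This enters the kernel through the socket() system call, which calls the kernel's sock_create() to create the socket and then returns a file descriptor for it. For every socket created by a user-space application, the kernel has a corresponding struct socket and struct sock. struct sock has three queues, rx, tx and err (sk_receive_queue, sk_write_queue and sk_error_queue), which are initialized together with the sock structure; while data is being sent and received, each queue holds the sk_buff instances (skb) of the packets to be sent or received. struct socket is defined as follows: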

```c
/*
 * state: socket state
 * type:  socket type
 * flags: socket flags
 * ops:   protocol-specific socket operations
 * file:  back pointer to the file associated with this socket
 * sk:    protocol-specific representation (struct sock); keeping it
 *        separate makes this structure independent of the protocol
 * wq:    wait queue
 */
struct socket {
    socket_state            state;

    kmemcheck_bitfield_begin(type);
    short                   type;
    kmemcheck_bitfield_end(type);

    unsigned long           flags;
    struct socket_wq __rcu  *wq;
    struct file             *file;
    struct sock             *sk;
    const struct proto_ops  *ops;
};
```

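2 For a TCP socket, the application calls the connect() API so that the client and server establish a virtual connection through the socket; during this, the TCP stack sets up the TCP connection with the three-way handshake. By default (on a blocking socket), connect() returns only after the handshake has completed and the connection is established. An important step while setting up the connection is agreeing on the Maximum Segment Size (MSS) used by both sides. UDP is connectionless, so it needs no such step.

3 The application calls the send or write API on the socket to send a message to the receiver.

4 The system call (for send, __sys_sendto) uses the socket descriptor to look up the socket structure, builds the message header (struct msghdr) and, if present, the socket control message, and then calls sock_sendmsg.

5 The kernel then calls the sending function of the socket's protocol: TCP uses tcp_sendmsg. For UDP, the application may use any of the three system calls send()/sendto()/sendmsg(), all of which eventually reach the kernel's udp_sendmsg().

In short, the sender first creates a socket and then sends data with a send-family system call. At the source level, data is sent through send, sendto or sendmsg; send is implemented on top of __sys_sendto.

The __sys_sendto function: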

```c
int __sys_sendto(int fd, void __user *buff, size_t len, unsigned int flags,
                 struct sockaddr __user *addr, int addr_len)
{
    struct socket *sock;
    struct sockaddr_storage address;
    int err;
    struct msghdr msg;
    struct iovec iov;
    int fput_needed;

    err = import_single_range(WRITE, buff, len, &iov, &msg.msg_iter);
    if (unlikely(err))
        return err;
    sock = sockfd_lookup_light(fd, &err, &fput_needed);
    if (!sock)
        goto out;

    msg.msg_name = NULL;
    msg.msg_control = NULL;
    msg.msg_controllen = 0;
    msg.msg_namelen = 0;
    if (addr) {
        err = move_addr_to_kernel(addr, addr_len, &address);
        if (err < 0)
            goto out_put;
        msg.msg_name = (struct sockaddr *)&address;
        msg.msg_namelen = addr_len;
    }
    if (sock->file->f_flags & O_NONBLOCK)
        flags |= MSG_DONTWAIT;
    msg.msg_flags = flags;
    err = sock_sendmsg(sock, &msg);

out_put:
    fput_light(sock->file, fput_needed);
out:
    return err;
}
```

__sys_sendto does three things:

1. Looks up the struct socket corresponding to fd.

2. Builds a struct msghdr describing the data to be sent.

3. Calls sock_sendmsg to perform the actual send.
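The struct msghdr that __sys_sendto builds is the same structure user space passes to sendmsg(2); send and sendto are essentially its single-buffer special case. As a user-space illustration (send_two_parts is a hypothetical helper, assuming a connected stream socket), one sendmsg call can hand the kernel two buffers, which it then sees as a msghdr whose iterator covers two iovecs:

```c
#include <string.h>
#include <sys/types.h>
#include <sys/socket.h>
#include <sys/uio.h>

/* Hypothetical helper: send a header and a body with a single sendmsg()
 * call. On a connected socket msg_name stays NULL, just as __sys_sendto
 * leaves it NULL when no destination address is given. */
ssize_t send_two_parts(int sockfd, const char *hdr, const char *body)
{
    struct iovec iov[2];
    struct msghdr msg;

    iov[0].iov_base = (void *)hdr;
    iov[0].iov_len = strlen(hdr);
    iov[1].iov_base = (void *)body;
    iov[1].iov_len = strlen(body);

    memset(&msg, 0, sizeof(msg));   /* no address, no control data */
    msg.msg_iov = iov;
    msg.msg_iovlen = 2;

    return sendmsg(sockfd, &msg, 0);
}
```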

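Once __sys_sendto has built these structures, it calls sock_sendmsg to continue the send path. sock_sendmsg calls sock_sendmsg_nosec(), which calls struct socket->ops->sendmsg, i.e. the sendmsg() of the socket's address family. For IPv4 sockets (both TCP and UDP) this is inet_sendmsg, which first checks whether the local port is bound, binds it automatically if necessary, and then calls the sendmsg function of the specific transport protocol.

The sock_sendmsg function: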

```c
int sock_sendmsg(struct socket *sock, struct msghdr *msg)
{
    int err = security_socket_sendmsg(sock, msg,
                                      msg_data_left(msg));

    return err ?: sock_sendmsg_nosec(sock, msg);
}
EXPORT_SYMBOL(sock_sendmsg);
```

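sock_sendmsg() first runs the LSM security hook security_socket_sendmsg(); only if it succeeds does it call sock_sendmsg_nosec(), which performs the send without a further security check. (When sendmmsg() sends several messages to the same address, it calls the _nosec variant directly for the later messages so that the check is not repeated each time.)

The sock_sendmsg_nosec function: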

```c
static inline int sock_sendmsg_nosec(struct socket *sock, struct msghdr *msg)
{
    int ret = INDIRECT_CALL_INET(sock->ops->sendmsg, inet6_sendmsg,
                                 inet_sendmsg, sock, msg,
                                 msg_data_left(msg));
    BUG_ON(ret == -EIOCBQUEUED);
    return ret;
}
```

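Following this function further shows that, for an IPv4 socket, the call ends up in inet_sendmsg. (INDIRECT_CALL_INET compares the function pointer with inet6_sendmsg and inet_sendmsg and calls a match directly, which avoids the cost of an indirect call under retpoline mitigations.)

The inet_sendmsg function: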

```c
int inet_sendmsg(struct socket *sock, struct msghdr *msg, size_t size)
{
    struct sock *sk = sock->sk;

    if (unlikely(inet_send_prepare(sk)))
        return -EAGAIN;

    return INDIRECT_CALL_2(sk->sk_prot->sendmsg, tcp_sendmsg, udp_sendmsg,
                           sk, msg, size);
}
EXPORT_SYMBOL(inet_sendmsg);
```

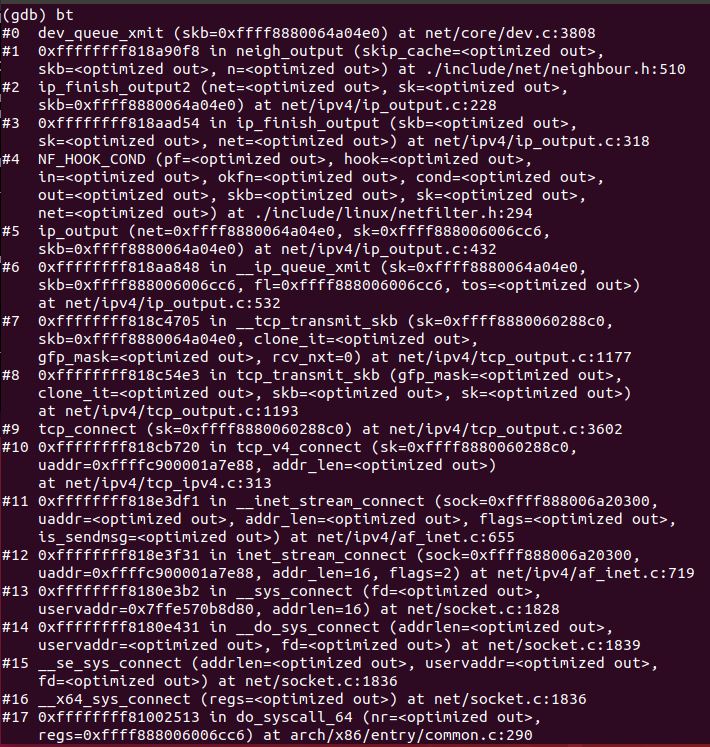

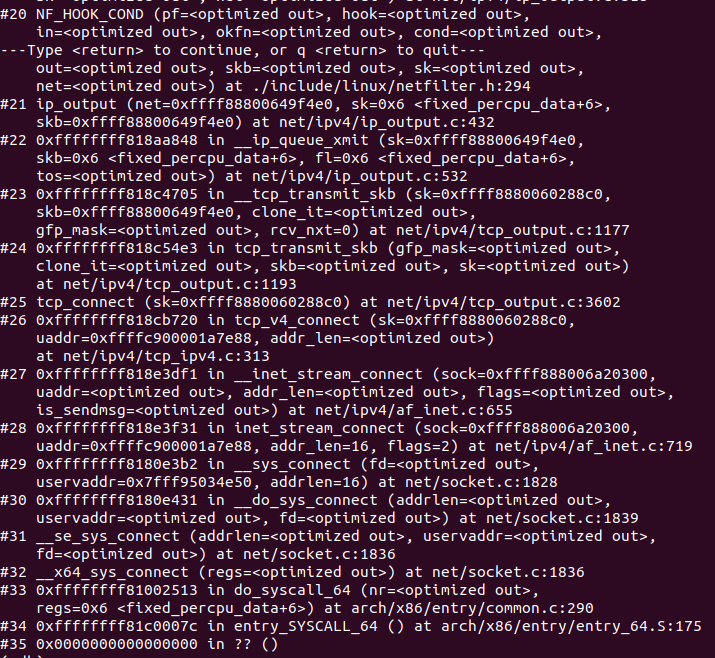

Verified with gdb.
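The two levels of indirection traced above, where struct socket->ops->sendmsg selects the address-family handler (inet_sendmsg) and sk->sk_prot->sendmsg selects the transport protocol (tcp_sendmsg or udp_sendmsg), can be modeled in user space with tables of function pointers. This is a simplified, hypothetical model: the model_* names only echo the kernel's names and are not kernel code.

```c
#include <stddef.h>
#include <string.h>

/* Simplified stand-ins for struct proto (transport level) and
 * struct proto_ops (address-family level). */
struct model_sock;
struct model_proto {
    const char *name;
    int (*sendmsg)(struct model_sock *sk, const char *data, size_t len);
};

struct model_sock {
    const struct model_proto *sk_prot;  /* like sk->sk_prot */
    const char *last_proto;             /* records which handler ran */
};

struct model_socket;
struct model_proto_ops {
    int (*sendmsg)(struct model_socket *sock, const char *data, size_t len);
};

struct model_socket {
    const struct model_proto_ops *ops;  /* like sock->ops */
    struct model_sock *sk;              /* like sock->sk */
};

static int model_tcp_sendmsg(struct model_sock *sk, const char *d, size_t len)
{
    (void)d;
    sk->last_proto = "tcp";
    return (int)len;
}

static int model_udp_sendmsg(struct model_sock *sk, const char *d, size_t len)
{
    (void)d;
    sk->last_proto = "udp";
    return (int)len;
}

/* Like inet_sendmsg: forward to the transport protocol of the sock. */
static int model_inet_sendmsg(struct model_socket *sock, const char *d, size_t len)
{
    return sock->sk->sk_prot->sendmsg(sock->sk, d, len);
}

const struct model_proto model_tcp_prot = { "tcp", model_tcp_sendmsg };
const struct model_proto model_udp_prot = { "udp", model_udp_sendmsg };
const struct model_proto_ops model_inet_ops = { model_inet_sendmsg };

/* Like sock_sendmsg_nosec: first level of dispatch through sock->ops. */
int model_sock_sendmsg(struct model_socket *sock, const char *d, size_t len)
{
    return sock->ops->sendmsg(sock, d, len);
}
```

Swapping sk_prot from the TCP table to the UDP table changes which handler runs without touching the socket-level code, which is the point of this split in the kernel.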

3.2 Transport Layer

The entry point is tcp_sendmsg, called from the layer above. The process is as follows:

1 tcp_sendmsg() first locks the socket and then calls tcp_sendmsg_locked(). Source:

```c
// net/ipv4/tcp.c
int tcp_sendmsg(struct sock *sk, struct msghdr *msg, size_t size)
{
    int ret;

    lock_sock(sk);
    ret = tcp_sendmsg_locked(sk, msg, size);
    release_sock(sk);

    return ret;
}
EXPORT_SYMBOL(tcp_sendmsg);
```

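As the code shows, sending is done with the socket lock held (lock_sock/release_sock); the actual work is done by tcp_sendmsg_locked.

2 tcp_sendmsg_locked() stores the user data: it organizes all of it into the send queue and then calls tcp_push(). Source (excerpt):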

```c
// net/ipv4/tcp.c
int tcp_sendmsg_locked(struct sock *sk, struct msghdr *msg, size_t size)
{
    struct tcp_sock *tp = tcp_sk(sk);
    struct ubuf_info *uarg = NULL;
    struct sk_buff *skb;
    struct sockcm_cookie sockc;
    int flags, err, copied = 0;
    int mss_now = 0, size_goal, copied_syn = 0;
    int process_backlog = 0;
    bool zc = false;
    long timeo;

    ......

wait_for_sndbuf:
        set_bit(SOCK_NOSPACE, &sk->sk_socket->flags);
wait_for_memory:
        // if some data has already been copied into the send queue,
        // push it out first, then wait for memory to be freed
        if (copied)
            tcp_push(sk, flags & ~MSG_MORE, mss_now,
                     TCP_NAGLE_PUSH, size_goal);

        err = sk_stream_wait_memory(sk, &timeo);
        if (err != 0)
            goto do_error;

        mss_now = tcp_send_mss(sk, &size_goal, flags);
    }

out:
    if (copied) {
        tcp_tx_timestamp(sk, sockc.tsflags);
        tcp_push(sk, flags, mss_now, tp->nonagle, size_goal);
    }
out_nopush:
    sock_zerocopy_put(uarg);
    return copied + copied_syn;

do_error:
    skb = tcp_write_queue_tail(sk);
do_fault:
    tcp_remove_empty_skb(sk, skb);

    if (copied + copied_syn)
        goto out;
out_err:
    sock_zerocopy_put_abort(uarg, true);
    err = sk_stream_error(sk, flags, err);
    /* make sure we wake any epoll edge trigger waiter */
    if (unlikely(tcp_rtx_and_write_queues_empty(sk) && err == -EAGAIN)) {
        sk->sk_write_space(sk);
        tcp_chrono_stop(sk, TCP_CHRONO_SNDBUF_LIMITED);
    }
    return err;
}
```

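The main logic (partly in the elided code) is: first check that the connection is in the TCPF_ESTABLISHED or TCPF_CLOSE_WAIT state; if it is not, wait for the connection to be established or handle the error. Then determine how to segment the data (obtaining the current MSS and size_goal), copy the data into skbs on the send queue, and push them out. If the send buffer is full, the function pushes what it has already copied and sleeps in sk_stream_wait_memory().

3 tcp_push(). Source: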

```c
// net/ipv4/tcp.c
static void tcp_push(struct sock *sk, int flags, int mss_now,
                     int nonagle, int size_goal)
{
    struct tcp_sock *tp = tcp_sk(sk);
    struct sk_buff *skb;

    // get the last skb in the send queue
    skb = tcp_write_queue_tail(sk);
    if (!skb)
        return;
    // decide whether the PSH flag needs to be set
    if (!(flags & MSG_MORE) || forced_push(tp))
        tcp_mark_push(tp, skb);

    // if MSG_OOB was given, record the urgent pointer
    tcp_mark_urg(tp, flags);

    // autocorking: decide whether to hold back small segments so that
    // several of them can be merged into one skb and sent together
    if (tcp_should_autocork(sk, skb, size_goal)) {

        /* avoid atomic op if TSQ_THROTTLED bit is already set */
        // set the TSQ_THROTTLED flag
        if (!test_bit(TSQ_THROTTLED, &sk->sk_tsq_flags)) {
            NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPAUTOCORKING);
            set_bit(TSQ_THROTTLED, &sk->sk_tsq_flags);
        }
        /* It is possible TX completion already happened
         * before we set TSQ_THROTTLED.
         */
        // the NIC's tx ring may have finished transmitting before
        // TSQ_THROTTLED was set, so re-check to avoid holding data back wrongly
        if (refcount_read(&sk->sk_wmem_alloc) > skb->truesize)
            return;
    }

    // MSG_MORE tells the transport layer that more small writes will follow;
    // corking lets them be combined into full-sized segments
    if (flags & MSG_MORE)
        nonagle = TCP_NAGLE_CORK;

    // TCP-level processing is not finished yet; continue
    __tcp_push_pending_frames(sk, mss_now, nonagle);
}
```

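This function handles the corking of small packets (autocorking). It also decides whether the last skb needs the PSH flag and, if so, sets PSH in its TCP header; it then calls __tcp_push_pending_frames().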
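Whether a small segment leaves now or waits is ultimately decided by the Nagle/cork test in tcp_write_xmit (tcp_nagle_test). The following is a simplified, hypothetical model of that decision: it ignores urgent data, FIN and the Minshall refinement the kernel applies, and the enum values are our own stand-ins for the nonagle settings (TCP_NAGLE_CORK, TCP_NAGLE_PUSH, TCP_NODELAY).

```c
#include <stdbool.h>

/* Hypothetical stand-ins for the nonagle modes. */
enum nagle_mode { NAGLE_ON, NAGLE_OFF /* TCP_NODELAY */, NAGLE_CORK, NAGLE_PUSH };

/* Returns true if a segment of seg_len bytes may be sent now. */
bool nagle_allows_send(unsigned seg_len, unsigned mss,
                       unsigned packets_in_flight, enum nagle_mode mode)
{
    bool partial = seg_len < mss;   /* a "small" segment */

    if (mode == NAGLE_PUSH)
        return true;                /* an explicit push overrides everything */
    if (!partial)
        return true;                /* full-sized segments always go */
    if (mode == NAGLE_CORK)
        return false;               /* corked: wait for more data */
    if (mode == NAGLE_ON && packets_in_flight > 0)
        return false;               /* Nagle: hold small segments while data is unacknowledged */
    return true;
}
```

This is why MSG_MORE (which tcp_push turns into TCP_NAGLE_CORK) holds back a short tail, while the final tcp_push after the copy loop can force it out.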

4 __tcp_push_pending_frames(). Source:

```c
/* net/ipv4/tcp_output.c
 * Push out any pending frames which were held back due to
 * TCP_CORK or attempt at coalescing tiny packets.
 * The socket must be locked by the caller.
 */
void __tcp_push_pending_frames(struct sock *sk, unsigned int cur_mss,
                               int nonagle)
{
    /* If we are closed, the bytes will have to remain here.
     * In time closedown will finish, we empty the write queue and
     * all will be happy.
     */
    // if the connection is closed, return without doing anything
    if (unlikely(sk->sk_state == TCP_CLOSE))
        return;

    // tcp_write_xmit decides again whether to defer sending (Nagle algorithm)
    if (tcp_write_xmit(sk, cur_mss, nonagle, 0,
                       sk_gfp_mask(sk, GFP_ATOMIC)))
        // data is pending but nothing is in flight: check whether the
        // zero-window probe timer needs to be started
        tcp_check_probe_timer(sk);
}
```

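This function pushes out any frames that were held back because of TCP_CORK or an attempt to coalesce small packets; the transmission itself is done by tcp_write_xmit().

5 tcp_write_xmit(). Source (excerpt):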

```c
// net/ipv4/tcp_output.c
static bool tcp_write_xmit(struct sock *sk, unsigned int mss_now, int nonagle,
                           int push_one, gfp_t gfp)
{
    struct tcp_sock *tp = tcp_sk(sk);
    struct sk_buff *skb;
    unsigned int tso_segs, sent_pkts;
    int cwnd_quota;
    int result;
    bool is_cwnd_limited = false, is_rwnd_limited = false;
    u32 max_segs;

    // number of packets sent so far
    sent_pkts = 0;

    tcp_mstamp_refresh(tp);
    // when pushing only one segment, skip MTU probing
    if (!push_one) {
        /* Do MTU probing. */
        result = tcp_mtu_probe(sk);
        if (!result) {
            return false;
        } else if (result > 0) {
            sent_pkts = 1;
        }
    }

    max_segs = tcp_tso_segs(sk, mss_now);
    // loop while there are skbs waiting at the head of the send queue
    while ((skb = tcp_send_head(sk))) {
        unsigned int limit;

        if (unlikely(tp->repair) && tp->repair_queue == TCP_SEND_QUEUE) {
            /* "skb_mstamp_ns" is used as a start point for the retransmit timer */
            skb->skb_mstamp_ns = tp->tcp_wstamp_ns = tp->tcp_clock_cache;
            list_move_tail(&skb->tcp_tsorted_anchor, &tp->tsorted_sent_queue);
            tcp_init_tso_segs(skb, mss_now);
            goto repair; /* Skip network transmission */
        }

        if (tcp_pacing_check(sk))
            break;

        tso_segs = tcp_init_tso_segs(skb, mss_now);
        BUG_ON(!tso_segs);

        // check the congestion-window quota
        cwnd_quota = tcp_cwnd_test(tp, skb);
        if (!cwnd_quota) {
            if (push_one == 2)
                /* Force out a loss probe pkt. */
                cwnd_quota = 1;
            else
                break;
        }

        // check the receiver's advertised window
        if (unlikely(!tcp_snd_wnd_test(tp, skb, mss_now))) {
            is_rwnd_limited = true;
            break;
        }

        if (tso_segs == 1) {
            if (unlikely(!tcp_nagle_test(tp, skb, mss_now,
                                         (tcp_skb_is_last(sk, skb) ?
                                          nonagle : TCP_NAGLE_PUSH))))
                break;
        } else {
            if (!push_one &&
                tcp_tso_should_defer(sk, skb, &is_cwnd_limited,
                                     &is_rwnd_limited, max_segs))
                break;
        }

        limit = mss_now;
        if (tso_segs > 1 && !tcp_urg_mode(tp))
            limit = tcp_mss_split_point(sk, skb, mss_now,
                                        min_t(unsigned int,
                                              cwnd_quota,
                                              max_segs),
                                        nonagle);

        if (skb->len > limit &&
            unlikely(tso_fragment(sk, skb, limit, mss_now, gfp)))
            break;

        if (tcp_small_queue_check(sk, skb, 0))
            break;

        /* Argh, we hit an empty skb(), presumably a thread
         * is sleeping in sendmsg()/sk_stream_wait_memory().
         * We do not want to send a pure-ack packet and have
         * a strange looking rtx queue with empty packet(s).
         */
        if (TCP_SKB_CB(skb)->end_seq == TCP_SKB_CB(skb)->seq)
            break;

        if (unlikely(tcp_transmit_skb(sk, skb, 1, gfp)))
            break;

        ......
}
```

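This function performs the actual transmission. For each skb at the head of the send queue it checks the congestion window (tcp_cwnd_test), the receiver's window (tcp_snd_wnd_test) and the Nagle/TSO deferral conditions, and then transmits the skb by calling tcp_transmit_skb.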
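The two window checks can be illustrated in user space. TCP sequence numbers wrap around at 2^32, so they are compared through a signed 32-bit difference, the same idea as the kernel's before()/after() macros (also used in __tcp_transmit_skb below). The struct and functions here are a simplified, hypothetical model; the field names are illustrative and are not the kernel's struct tcp_sock.

```c
#include <stdbool.h>
#include <stdint.h>

/* Wraparound-safe sequence comparison, like the kernel's before()/after(). */
static bool seq_before(uint32_t seq1, uint32_t seq2)
{
    return (int32_t)(seq1 - seq2) < 0;
}

static bool seq_after(uint32_t seq1, uint32_t seq2)
{
    return seq_before(seq2, seq1);
}

/* Hypothetical model of the state the two checks look at. */
struct model_tcp {
    uint32_t snd_una;     /* oldest unacknowledged sequence number */
    uint32_t snd_wnd;     /* window advertised by the receiver, in bytes */
    uint32_t snd_cwnd;    /* congestion window, in segments */
    uint32_t in_flight;   /* segments sent but not yet acknowledged */
};

/* Congestion-window check: how many more segments may be in flight. */
uint32_t cwnd_quota(const struct model_tcp *tp)
{
    return tp->in_flight < tp->snd_cwnd ? tp->snd_cwnd - tp->in_flight : 0;
}

/* Receive-window check: the segment must end within snd_una + snd_wnd. */
bool snd_wnd_ok(const struct model_tcp *tp, uint32_t end_seq)
{
    return !seq_after(end_seq, tp->snd_una + tp->snd_wnd);
}
```

The signed difference makes 0xFFFFFFF0 compare as "before" 0x10, which a plain unsigned comparison would get wrong right after a wraparound.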

6 tcp_transmit_skb(). Source:

```c
// net/ipv4/tcp_output.c
static int tcp_transmit_skb(struct sock *sk, struct sk_buff *skb, int clone_it,
                            gfp_t gfp_mask)
{
    return __tcp_transmit_skb(sk, skb, clone_it, gfp_mask,
                              tcp_sk(sk)->rcv_nxt);
}
```

As can be seen, it calls __tcp_transmit_skb.

7 __tcp_transmit_skb(). Source (excerpt):

```c
// net/ipv4/tcp_output.c
/* This routine actually transmits TCP packets queued in by
 * tcp_do_sendmsg().  This is used by both the initial
 * transmission and possible later retransmissions.
 * All SKB's seen here are completely headerless.  It is our
 * job to build the TCP header, and pass the packet down to
 * IP so it can do the same plus pass the packet off to the
 * device.
 *
 * We are working here with either a clone of the original
 * SKB, or a fresh unique copy made by the retransmit engine.
 */
static int __tcp_transmit_skb(struct sock *sk, struct sk_buff *skb,
                              int clone_it, gfp_t gfp_mask, u32 rcv_nxt)
{
    const struct inet_connection_sock *icsk = inet_csk(sk);
    struct inet_sock *inet;
    struct tcp_sock *tp;
    struct tcp_skb_cb *tcb;
    struct tcp_out_options opts;
    unsigned int tcp_options_size, tcp_header_size;
    struct sk_buff *oskb = NULL;
    struct tcp_md5sig_key *md5;
    struct tcphdr *th;
    u64 prior_wstamp;
    int err;

    ......

    /* Build TCP header and checksum it. */
    th = (struct tcphdr *)skb->data;
    th->source = inet->inet_sport;
    th->dest = inet->inet_dport;
    th->seq = htonl(tcb->seq);
    th->ack_seq = htonl(rcv_nxt);
    *(((__be16 *)th) + 6) = htons(((tcp_header_size >> 2) << 12) |
                                  tcb->tcp_flags);

    th->check = 0;
    th->urg_ptr = 0;

    /* The urg_mode check is necessary during a below snd_una win probe */
    if (unlikely(tcp_urg_mode(tp) && before(tcb->seq, tp->snd_up))) {
        if (before(tp->snd_up, tcb->seq + 0x10000)) {
            th->urg_ptr = htons(tp->snd_up - tcb->seq);
            th->urg = 1;
        } else if (after(tcb->seq + 0xFFFF, tp->snd_nxt)) {
            th->urg_ptr = htons(0xFFFF);
            th->urg = 1;
        }
    }

    tcp_options_write((__be32 *)(th + 1), tp, &opts);
    skb_shinfo(skb)->gso_type = sk->sk_gso_type;
    if (likely(!(tcb->tcp_flags & TCPHDR_SYN))) {
        th->window = htons(tcp_select_window(sk));
        tcp_ecn_send(sk, skb, th, tcp_header_size);
    } else {
        /* RFC1323: The window in SYN & SYN/ACK segments
         * is never scaled.
         */
        th->window = htons(min(tp->rcv_wnd, 65535U));
    }
#ifdef CONFIG_TCP_MD5SIG
    /* Calculate the MD5 hash, as we have all we need now */
    if (md5) {
        sk_nocaps_add(sk, NETIF_F_GSO_MASK);
        tp->af_specific->calc_md5_hash(opts.hash_location,
                                       md5, sk, skb);
    }
#endif

    icsk->icsk_af_ops->send_check(sk, skb);

    if (likely(tcb->tcp_flags & TCPHDR_ACK))
        tcp_event_ack_sent(sk, tcp_skb_pcount(skb), rcv_nxt);

    if (skb->len != tcp_header_size) {
        tcp_event_data_sent(tp, sk);
        tp->data_segs_out += tcp_skb_pcount(skb);
        tp->bytes_sent += skb->len - tcp_header_size;
    }

    if (after(tcb->end_seq, tp->snd_nxt) || tcb->seq == tcb->end_seq)
        TCP_ADD_STATS(sock_net(sk), TCP_MIB_OUTSEGS,
                      tcp_skb_pcount(skb));

    tp->segs_out += tcp_skb_pcount(skb);
    /* OK, its time to fill skb_shinfo(skb)->gso_{segs|size} */
    skb_shinfo(skb)->gso_segs = tcp_skb_pcount(skb);
    skb_shinfo(skb)->gso_size = tcp_skb_mss(skb);

    /* Leave earliest departure time in skb->tstamp (skb->skb_mstamp_ns) */

    /* Cleanup our debris for IP stacks */
    memset(skb->cb, 0, max(sizeof(struct inet_skb_parm),
                           sizeof(struct inet6_skb_parm)));

    tcp_add_tx_delay(skb, tp);

    err = icsk->icsk_af_ops->queue_xmit(sk, skb, &inet->cork.fl);

    if (unlikely(err > 0)) {
        tcp_enter_cwr(sk);
        err = net_xmit_eval(err);
    }
    if (!err && oskb) {
        tcp_update_skb_after_send(sk, oskb, prior_wstamp);
        tcp_rate_skb_sent(sk, oskb);
    }
    return err;
}
```

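This function builds the TCP header (ports, sequence and acknowledgment numbers, header length and flags, window, options), has the checksum prepared through icsk_af_ops->send_check, and then hands the packet to the network layer through icsk->icsk_af_ops->queue_xmit, which for IPv4 is ip_queue_xmit.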
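The line `*(((__be16 *)th) + 6) = htons(((tcp_header_size >> 2) << 12) | tcb->tcp_flags);` fills the 16-bit word at byte offset 12 of the TCP header: the top 4 bits hold the header length in 32-bit words and the low byte holds the flags. The sketch below reproduces that packing in user space. The TCP_FLAG_* macro names are our own, although their values match the standard flag bit positions (the kernel's TCPHDR_* constants).

```c
#include <stdint.h>
#include <arpa/inet.h>

/* Flag bits in the low byte of TCP header word 6 (names are ours). */
#define TCP_FLAG_FIN 0x01
#define TCP_FLAG_SYN 0x02
#define TCP_FLAG_RST 0x04
#define TCP_FLAG_PSH 0x08
#define TCP_FLAG_ACK 0x10
#define TCP_FLAG_URG 0x20

/* Build the data-offset/flags word as __tcp_transmit_skb() does;
 * header_size is in bytes, the result is in network byte order. */
uint16_t tcp_doff_flags_word(unsigned header_size, uint8_t flags)
{
    return htons((uint16_t)(((header_size >> 2) << 12) | flags));
}
```

For example, a 20-byte header carrying ACK|PSH yields 0x5018, and a 32-byte header (20 bytes plus 12 bytes of timestamp options) carrying ACK yields 0x8010.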

Verified with gdb.

3.3 Network Layer

The network layer starts at ip_queue_xmit(). The process is as follows:

1 ip_queue_xmit(). Source:

```c
// include/net/ip.h
static inline int ip_queue_xmit(struct sock *sk, struct sk_buff *skb,
                                struct flowi *fl)
{
    return __ip_queue_xmit(sk, skb, fl, inet_sk(sk)->tos);
}
```

This function calls __ip_queue_xmit().

2 __ip_queue_xmit(). Source:

```c
// net/ipv4/ip_output.c
/* Note: skb->sk can be different from sk, in case of tunnels */
int __ip_queue_xmit(struct sock *sk, struct sk_buff *skb, struct flowi *fl,
                    __u8 tos)
{
    struct inet_sock *inet = inet_sk(sk);
    struct net *net = sock_net(sk);
    struct ip_options_rcu *inet_opt;
    struct flowi4 *fl4;
    struct rtable *rt;
    struct iphdr *iph;
    int res;

    /* Skip all of this if the packet is already routed,
     * f.e. by something like SCTP.
     */
    rcu_read_lock();
    inet_opt = rcu_dereference(inet->inet_opt);
    fl4 = &fl->u.ip4;
    // get the route already attached to the skb, if any
    rt = skb_rtable(skb);
    // if there is one, jump straight to packet_routed; otherwise look up a
    // route below (first the socket's cached route, then ip_route_output_ports)
    if (rt)
        goto packet_routed;

    /* Make sure we can route this packet. */
    // check the route cached in the socket
    rt = (struct rtable *)__sk_dst_check(sk, 0);
    // no valid cached route (none, or it has expired)
    if (!rt) {
        __be32 daddr;

        /* Use correct destination address if we have options. */
        daddr = inet->inet_daddr;
        if (inet_opt && inet_opt->opt.srr)
            daddr = inet_opt->opt.faddr;

        /* If this fails, retransmit mechanism of transport layer will
         * keep trying until route appears or the connection times
         * itself out.
         */
        // look up the route
        rt = ip_route_output_ports(net, fl4, sk,
                                   daddr, inet->inet_saddr,
                                   inet->inet_dport,
                                   inet->inet_sport,
                                   sk->sk_protocol,
                                   RT_CONN_FLAGS_TOS(sk, tos),
                                   sk->sk_bound_dev_if);
        // lookup failed
        if (IS_ERR(rt))
            goto no_route;
        // cache the route in the socket
        sk_setup_caps(sk, &rt->dst);
    }
    // attach the route to the skb
    skb_dst_set_noref(skb, &rt->dst);

packet_routed:
    if (inet_opt && inet_opt->opt.is_strictroute && rt->rt_uses_gateway)
        goto no_route;

    /* OK, we know where to send it, allocate and build IP header. */
    skb_push(skb, sizeof(struct iphdr) + (inet_opt ? inet_opt->opt.optlen : 0));
    skb_reset_network_header(skb);
    iph = ip_hdr(skb);
    *((__be16 *)iph) = htons((4 << 12) | (5 << 8) | (tos & 0xff));
    if (ip_dont_fragment(sk, &rt->dst) && !skb->ignore_df)
        iph->frag_off = htons(IP_DF);
    else
        iph->frag_off = 0;
    iph->ttl      = ip_select_ttl(inet, &rt->dst);
    iph->protocol = sk->sk_protocol;
    ip_copy_addrs(iph, fl4);

    /* Transport layer set skb->h.foo itself. */

    if (inet_opt && inet_opt->opt.optlen) {
        iph->ihl += inet_opt->opt.optlen >> 2;
        ip_options_build(skb, &inet_opt->opt, inet->inet_daddr, rt, 0);
    }

    ip_select_ident_segs(net, skb, sk,
                         skb_shinfo(skb)->gso_segs ?: 1);

    /* TODO : should we use skb->sk here instead of sk ? */
    skb->priority = sk->sk_priority;
    skb->mark = sk->sk_mark;

    res = ip_local_out(net, sk, skb);
    rcu_read_unlock();
    return res;

no_route:
    // no route to the destination
    rcu_read_unlock();
    IP_INC_STATS(net, IPSTATS_MIB_OUTNOROUTES);
    kfree_skb(skb);
    return -EHOSTUNREACH;
}
```

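This function resolves the route (reusing the route already attached to the skb or cached in the socket when available), fills in the IP header, and then calls ip_local_out() to send the packet.

3 ip_local_out(). Source: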

```c
// net/ipv4/ip_output.c
int ip_local_out(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    int err;

    err = __ip_local_out(net, sk, skb);
    if (likely(err == 1))
        err = dst_output(net, sk, skb);

    return err;
}
```

It calls __ip_local_out().

4 __ip_local_out(). Source:

```c
// net/ipv4/ip_output.c
int __ip_local_out(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    struct iphdr *iph = ip_hdr(skb);

    // set the total length of the IP packet
    iph->tot_len = htons(skb->len);
    // compute the IP header checksum
    ip_send_check(iph);

    /* if egress device is enslaved to an L3 master device pass the
     * skb to its handler for processing
     */
    skb = l3mdev_ip_out(sk, skb);
    if (unlikely(!skb))
        return 0;

    // set the protocol
    skb->protocol = htons(ETH_P_IP);

    // pass through netfilter's NF_INET_LOCAL_OUT hook
    return nf_hook(NFPROTO_IPV4, NF_INET_LOCAL_OUT,
                   net, sk, skb, NULL, skb_dst(skb)->dev,
                   dst_output);
}
```

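This function sets the total length and the header checksum of the IP packet and the protocol, then passes the packet through netfilter's NF_INET_LOCAL_OUT hook. If the hook accepts the packet (nf_hook returns 1), ip_local_out calls dst_output to continue sending it.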
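ip_send_check() stores the standard Internet checksum (RFC 1071) of the IPv4 header in iph->check: the ones'-complement of the ones'-complement sum of the header's 16-bit words, computed with the check field set to zero. A portable user-space sketch follows; the function name is ours and the code is not the kernel's optimized implementation. Note that the first header word, 0x4500 for TOS 0, is exactly what `htons((4 << 12) | (5 << 8) | (tos & 0xff))` in __ip_queue_xmit produces.

```c
#include <stddef.h>
#include <stdint.h>

/* RFC 1071 checksum over an IPv4 header given as raw network-order bytes.
 * The check field must be zero while computing; len is a multiple of 4
 * for IPv4 headers, so there is no odd trailing byte. The result is a
 * host-order value to be stored big-endian at bytes 10..11. */
uint16_t ipv4_header_checksum(const uint8_t *hdr, size_t len)
{
    uint32_t sum = 0;

    for (size_t i = 0; i + 1 < len; i += 2)
        sum += (uint32_t)(hdr[i] << 8 | hdr[i + 1]);   /* 16-bit big-endian words */

    while (sum >> 16)
        sum = (sum & 0xFFFF) + (sum >> 16);             /* fold carries back in */

    return (uint16_t)~sum;
}
```

A receiver can verify a header by running the same function over it with the checksum in place; a valid header yields 0.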

5 dst_output(). Source:

```c
// include/net/dst.h
/* Output packet to network from transport. */
static inline int dst_output(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    return skb_dst(skb)->output(net, sk, skb);
}
```

6 ip_output(). For a locally generated unicast packet, skb_dst(skb)->output points to this function. Source:

```c
// net/ipv4/ip_output.c
int ip_output(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    struct net_device *dev = skb_dst(skb)->dev;

    IP_UPD_PO_STATS(net, IPSTATS_MIB_OUT, skb->len);

    skb->dev = dev;
    skb->protocol = htons(ETH_P_IP);

    return NF_HOOK_COND(NFPROTO_IPV4, NF_INET_POST_ROUTING,
                        net, sk, skb, NULL, dev,
                        ip_finish_output,
                        !(IPCB(skb)->flags & IPSKB_REROUTED));
}
```

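After the netfilter NF_INET_POST_ROUTING hook (skipped if the packet has already been rerouted), this function calls ip_finish_output(), which runs the cgroup BPF egress program and then calls __ip_finish_output().

7 ip_finish_output() and __ip_finish_output(). Source: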

```c
// net/ipv4/ip_output.c
static int ip_finish_output(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    int ret;

    ret = BPF_CGROUP_RUN_PROG_INET_EGRESS(sk, skb);
    switch (ret) {
    case NET_XMIT_SUCCESS:
        return __ip_finish_output(net, sk, skb);
    case NET_XMIT_CN:
        return __ip_finish_output(net, sk, skb) ? : ret;
    default:
        kfree_skb(skb);
        return ret;
    }
}

// net/ipv4/ip_output.c
static int __ip_finish_output(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    unsigned int mtu;

#if defined(CONFIG_NETFILTER) && defined(CONFIG_XFRM)
    /* Policy lookup after SNAT yielded a new policy */
    if (skb_dst(skb)->xfrm) {
        IPCB(skb)->flags |= IPSKB_REROUTED;
        return dst_output(net, sk, skb);
    }
#endif
    // get the MTU
    mtu = ip_skb_dst_mtu(sk, skb);
    if (skb_is_gso(skb))
        return ip_finish_output_gso(net, sk, skb, mtu);

    // fragment if necessary; otherwise call ip_finish_output2() directly
    if (skb->len > mtu || (IPCB(skb)->flags & IPSKB_FRAG_PMTU))
        return ip_fragment(net, sk, skb, mtu, ip_finish_output2);

    return ip_finish_output2(net, sk, skb);
}
```

When neither GSO nor fragmentation applies, ip_finish_output2 is called directly.
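When the fragmentation branch is taken, IPv4 requires every fragment except the last to carry a multiple of 8 payload bytes, because the fragment-offset field counts 8-byte units. The sketch below only plans the split; the struct and function are hypothetical, and it assumes a 20-byte header without options, total_len > 20 and mtu >= 28. For TCP traffic this branch is rarely taken, since TCP sizes its segments to the path MTU and normally sets DF.

```c
#include <stddef.h>

/* Hypothetical description of one IPv4 fragment. */
struct frag_info {
    unsigned offset_units;   /* fragment offset field (8-byte units) */
    unsigned payload_len;    /* payload bytes in this fragment */
    int more_fragments;      /* MF flag */
};

/* Plan how a packet of total_len bytes would be split for the given MTU.
 * Returns the number of fragments written to out (at most max_out). */
size_t plan_fragments(unsigned total_len, unsigned mtu,
                      struct frag_info *out, size_t max_out)
{
    const unsigned hlen = 20;
    unsigned payload = total_len - hlen;
    unsigned per_frag = (mtu - hlen) & ~7u;   /* round down to a multiple of 8 */
    unsigned done = 0;
    size_t n = 0;

    while (done < payload && n < max_out) {
        unsigned len = payload - done > per_frag ? per_frag : payload - done;
        out[n].offset_units = done / 8;
        out[n].payload_len = len;
        out[n].more_fragments = (done + len < payload);
        done += len;
        n++;
    }
    return n;
}
```

For a 4000-byte packet and a 1500-byte MTU this gives three fragments carrying 1480, 1480 and 1020 payload bytes at offsets 0, 185 and 370.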

8 ip_finish_output2(). Source:

```c
// net/ipv4/ip_output.c
static int ip_finish_output2(struct net *net, struct sock *sk, struct sk_buff *skb)
{
    struct dst_entry *dst = skb_dst(skb);
    struct rtable *rt = (struct rtable *)dst;
    struct net_device *dev = dst->dev;
    unsigned int hh_len = LL_RESERVED_SPACE(dev);
    struct neighbour *neigh;
    bool is_v6gw = false;

    if (rt->rt_type == RTN_MULTICAST) {
        IP_UPD_PO_STATS(net, IPSTATS_MIB_OUTMCAST, skb->len);
    } else if (rt->rt_type == RTN_BROADCAST)
        IP_UPD_PO_STATS(net, IPSTATS_MIB_OUTBCAST, skb->len);

    /* Be paranoid, rather than too clever. */
    if (unlikely(skb_headroom(skb) < hh_len && dev->header_ops)) {
        struct sk_buff *skb2;

        skb2 = skb_realloc_headroom(skb, LL_RESERVED_SPACE(dev));
        if (!skb2) {
            kfree_skb(skb);
            return -ENOMEM;
        }
        if (skb->sk)
            skb_set_owner_w(skb2, skb->sk);
        consume_skb(skb);
        skb = skb2;
    }

    if (lwtunnel_xmit_redirect(dst->lwtstate)) {
        int res = lwtunnel_xmit(skb);

        if (res < 0 || res == LWTUNNEL_XMIT_DONE)
            return res;
    }

    rcu_read_lock_bh();
    // neighbour (ARP) resolution starts here
    neigh = ip_neigh_for_gw(rt, skb, &is_v6gw);
    if (!IS_ERR(neigh)) {
        int res;

        sock_confirm_neigh(skb, neigh);
        /* if crossing protocols, can not use the cached header */
        res = neigh_output(neigh, skb, is_v6gw);
        rcu_read_unlock_bh();
        return res;
    }
    rcu_read_unlock_bh();

    net_dbg_ratelimited("%s: No header cache and no neighbour!\n",
                        __func__);
    kfree_skb(skb);
    return -EINVAL;
}
```

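This function checks whether the skb has enough headroom for the link-layer header and, if not, reallocates it with skb_realloc_headroom(); it then looks up the next-hop neighbour and outputs the packet through the neighbour subsystem's neigh_output().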
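The headroom check makes sense once one sees how headers are added on the way down: each layer moves skb->data backwards into the space reserved in front of the payload (the TCP header in the elided part of __tcp_transmit_skb, the IP header with skb_push() in __ip_queue_xmit, the link-layer header with __skb_push() in neigh_hh_output). The toy buffer below is a hypothetical model of sk_buff's head/data/tail pointers, not the real structure, and it assumes the caller keeps within the 256-byte buffer.

```c
#include <stddef.h>
#include <string.h>

/* Toy buffer modeled on sk_buff's head/data/tail pointers. */
struct toy_skb {
    unsigned char buf[256];
    unsigned char *head, *data, *tail;
};

/* Start empty with `headroom` bytes reserved in front, like skb_reserve(). */
void toy_init(struct toy_skb *skb, size_t headroom)
{
    skb->head = skb->buf;
    skb->data = skb->tail = skb->buf + headroom;
}

size_t toy_headroom(const struct toy_skb *skb)
{
    return (size_t)(skb->data - skb->head);
}

size_t toy_len(const struct toy_skb *skb)
{
    return (size_t)(skb->tail - skb->data);
}

/* Append payload at the tail, like skb_put(). */
void toy_put(struct toy_skb *skb, const void *src, size_t len)
{
    memcpy(skb->tail, src, len);
    skb->tail += len;
}

/* Prepend space for a header, like skb_push(). Returns NULL when the
 * headroom is too small, the case ip_finish_output2 avoids by
 * reallocating the skb beforehand. */
unsigned char *toy_push(struct toy_skb *skb, size_t len)
{
    if (toy_headroom(skb) < len)
        return NULL;
    skb->data -= len;
    return skb->data;
}
```

With 64 bytes of headroom, pushing a 20-byte TCP header, a 20-byte IP header and a 14-byte Ethernet header in front of the 14-byte "Hello, world!\n" payload leaves a 68-byte frame and 10 bytes of headroom.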

9 neigh_output(). Source:

```c
// include/net/neighbour.h
static inline int neigh_output(struct neighbour *n, struct sk_buff *skb,
                               bool skip_cache)
{
    const struct hh_cache *hh = &n->hh;

    if ((n->nud_state & NUD_CONNECTED) && hh->hh_len && !skip_cache)
        return neigh_hh_output(hh, skb);
    else
        return n->output(n, skb);
}
```

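Output takes one of two paths depending on whether a cached link-layer header exists. If it does (and the neighbour is in the NUD_CONNECTED state), neigh_hh_output() performs fast output; otherwise the neighbour's output callback n->output performs slow output, which may first have to resolve the address with ARP.

10 neigh_hh_output(). Source: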

```c
// include/net/neighbour.h
static inline int neigh_hh_output(const struct hh_cache *hh, struct sk_buff *skb)
{
    unsigned int hh_alen = 0;
    unsigned int seq;
    unsigned int hh_len;

    // copy the cached link-layer header into the skb headroom
    do {
        seq = read_seqbegin(&hh->hh_lock);
        hh_len = READ_ONCE(hh->hh_len);
        if (likely(hh_len <= HH_DATA_MOD)) {
            hh_alen = HH_DATA_MOD;

            /* skb_push() would proceed silently if we have room for
             * the unaligned size but not for the aligned size:
             * check headroom explicitly.
             */
            if (likely(skb_headroom(skb) >= HH_DATA_MOD)) {
                /* this is inlined by gcc */
                memcpy(skb->data - HH_DATA_MOD, hh->hh_data,
                       HH_DATA_MOD);
            }
        } else {
            hh_alen = HH_DATA_ALIGN(hh_len);

            if (likely(skb_headroom(skb) >= hh_alen)) {
                memcpy(skb->data - hh_alen, hh->hh_data,
                       hh_alen);
            }
        }
    } while (read_seqretry(&hh->hh_lock, seq));

    if (WARN_ON_ONCE(skb_headroom(skb) < hh_alen)) {
        kfree_skb(skb);
        return NET_XMIT_DROP;
    }

    __skb_push(skb, hh_len);
    return dev_queue_xmit(skb);
}
```

This function ends by handing the packet to dev_queue_xmit(), which completes the trace of the network layer.

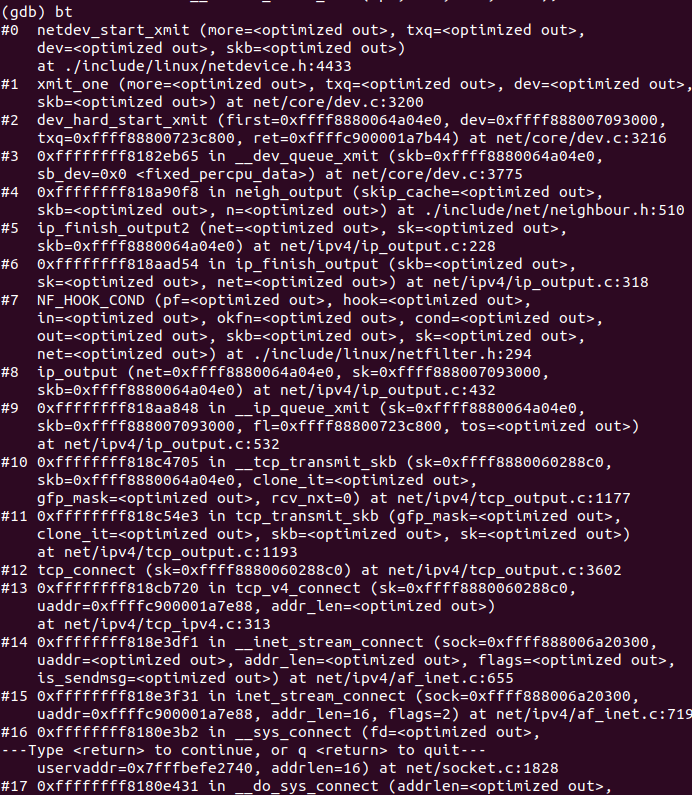

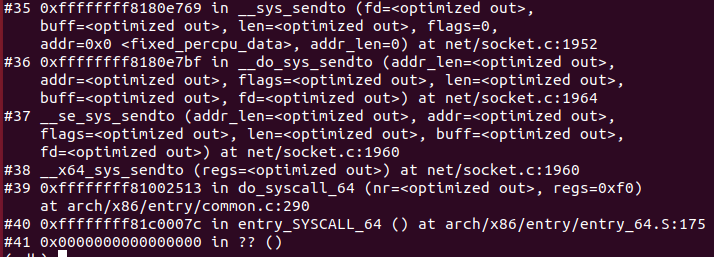

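Verified with gdb.

3.4 Network Interface Layer

This is the lowest layer of the TCP/IP model, corresponding to the data link and physical layers of the OSI model.

The entry function is dev_queue_xmit(). The process is as follows:

1 dev_queue_xmit(). Source: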

```c
// net/core/dev.c
int dev_queue_xmit(struct sk_buff *skb)
{
    return __dev_queue_xmit(skb, NULL);
}
```

This function calls __dev_queue_xmit().

2 __dev_queue_xmit(). Source:

```c
// net/core/dev.c
static int __dev_queue_xmit(struct sk_buff *skb, struct net_device *sb_dev)
{
    struct net_device *dev = skb->dev;
    struct netdev_queue *txq;
    struct Qdisc *q;
    int rc = -ENOMEM;
    bool again = false;

    skb_reset_mac_header(skb);

    if (unlikely(skb_shinfo(skb)->tx_flags & SKBTX_SCHED_TSTAMP))
        __skb_tstamp_tx(skb, NULL, skb->sk, SCM_TSTAMP_SCHED);

    /* Disable soft irqs for various locks below. Also
     * stops preemption for RCU.
     */
    rcu_read_lock_bh();

    skb_update_prio(skb);

    qdisc_pkt_len_init(skb);
#ifdef CONFIG_NET_CLS_ACT
    skb->tc_at_ingress = 0;
# ifdef CONFIG_NET_EGRESS
    if (static_branch_unlikely(&egress_needed_key)) {
        skb = sch_handle_egress(skb, &rc, dev);
        if (!skb)
            goto out;
    }
# endif
#endif
    /* If device/qdisc don't need skb->dst, release it right now while
     * its hot in this cpu cache.
     */
    if (dev->priv_flags & IFF_XMIT_DST_RELEASE)
        skb_dst_drop(skb);
    else
        skb_dst_force(skb);

    txq = netdev_core_pick_tx(dev, skb, sb_dev);
    q = rcu_dereference_bh(txq->qdisc);

    trace_net_dev_queue(skb);
    if (q->enqueue) {
        rc = __dev_xmit_skb(skb, q, dev, txq);
        goto out;
    }

    /* The device has no queue. Common case for software devices:
     * loopback, all the sorts of tunnels...
     *
     * Really, it is unlikely that netif_tx_lock protection is necessary
     * here.  (f.e. loopback and IP tunnels are clean ignoring statistics
     * counters.)
     * However, it is possible, that they rely on protection
     * made by us here.
     *
     * Check this and shot the lock. It is not prone from deadlocks.
     * Either shot noqueue qdisc, it is even simpler 8)
     */
    if (dev->flags & IFF_UP) {
        int cpu = smp_processor_id(); /* ok because BHs are off */

        if (txq->xmit_lock_owner != cpu) {
            if (dev_xmit_recursion())
                goto recursion_alert;

            skb = validate_xmit_skb(skb, dev, &again);
            if (!skb)
                goto out;

            HARD_TX_LOCK(dev, txq, cpu);

            if (!netif_xmit_stopped(txq)) {
                dev_xmit_recursion_inc();
                skb = dev_hard_start_xmit(skb, dev, txq, &rc);
                dev_xmit_recursion_dec();
                if (dev_xmit_complete(rc)) {
                    HARD_TX_UNLOCK(dev, txq);
                    goto out;
                }
            }
            HARD_TX_UNLOCK(dev, txq);
            net_crit_ratelimited("Virtual device %s asks to queue packet!\n",
                                 dev->name);
        } else {
            /* Recursion is detected! It is possible,
             * unfortunately
             */
recursion_alert:
            net_crit_ratelimited("Dead loop on virtual device %s, fix it urgently!\n",
                                 dev->name);
        }
    }

    rc = -ENETDOWN;
    rcu_read_unlock_bh();

    atomic_long_inc(&dev->tx_dropped);
    kfree_skb_list(skb);
    return rc;
out:
    rcu_read_unlock_bh();
    return rc;
}
```

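This function performs the various link-layer checks and selects a transmit queue. If that queue has a queueing discipline (q->enqueue), the packet is handed to the qdisc through __dev_xmit_skb(); otherwise (typically loopback and tunnel devices) dev_hard_start_xmit is called directly. In both cases the packet eventually reaches dev_hard_start_xmit, which sends the data down to the driver.

3 dev_hard_start_xmit(). Source: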

//net/core/dev.c

struct sk_buff *dev_hard_start_xmit(struct sk_buff *first, struct net_device *dev,

struct netdev_queue *txq, int *ret)

{

struct sk_buff *skb = first;

int rc = NETDEV_TX_OK;

while (skb) {

struct sk_buff *next = skb->next; //取出skb的下一个数据单元

skb_mark_not_on_list(skb);

//将此数据包送到driver Tx函数,因为dequeue的数据也会从这里发送,所以会有next

rc = xmit_one(skb, dev, txq, next != NULL);

//如果发送不成功,next还原到skb->next 退出

if (unlikely(!dev_xmit_complete(rc))) {

skb->next = next;

goto out;

}

// on success move on to next; usually next is NULL and we return, otherwise keep sending

skb = next;

// if the txq has been stopped while skbs remain to be sent, report TX busy

if (netif_tx_queue_stopped(txq) && skb) {

rc = NETDEV_TX_BUSY;

break;

}

}

out:

*ret = rc;

return skb;

}

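This function walks the skb list and calls xmit_one() for each packet, so it can send one or several packets. It returns the unsent remainder of the list and stores the last driver status in *ret.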
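The loop's contract (send until the driver refuses or the queue stops, then hand back the rest of the list) can be modelled in user space. The sketch below is a simplified illustration, not kernel code: `struct pkt`, `struct fake_dev`, `fake_xmit()` and the `TX_OK`/`TX_BUSY` values are hypothetical stand-ins for sk_buff, a driver's ndo_start_xmit and NETDEV_TX_OK/NETDEV_TX_BUSY, and dev_xmit_complete() is reduced to a comparison with `TX_OK`.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-ins for NETDEV_TX_OK / NETDEV_TX_BUSY. */
enum { TX_OK = 0, TX_BUSY = 0x10 };

/* Hypothetical stand-in for struct sk_buff: just a list link and an id. */
struct pkt {
    struct pkt *next;
    int id;
};

/* Hypothetical device: a TX ring with a fixed number of free slots. */
struct fake_dev {
    int free_slots;
    bool queue_stopped;   /* models netif_tx_queue_stopped(txq) */
    int sent;             /* packets accepted by the "driver" */
};

/* Models a driver's ndo_start_xmit(): accept the packet if a slot is free,
 * and stop the queue once the ring becomes full. */
static int fake_xmit(struct fake_dev *dev, struct pkt *p)
{
    (void)p;
    if (dev->free_slots == 0)
        return TX_BUSY;
    dev->free_slots--;
    dev->sent++;
    if (dev->free_slots == 0)
        dev->queue_stopped = true;
    return TX_OK;
}

/* Mirrors the loop in dev_hard_start_xmit(): returns the packets that were
 * not sent (NULL if all were) and stores the last status in *ret. */
static struct pkt *hard_start_xmit_model(struct pkt *first,
                                         struct fake_dev *dev, int *ret)
{
    struct pkt *p = first;
    int rc = TX_OK;

    assert(dev != NULL && ret != NULL);
    while (p) {
        struct pkt *next = p->next;
        p->next = NULL;                  /* skb_mark_not_on_list() */
        rc = fake_xmit(dev, p);
        if (rc != TX_OK) {               /* simplified !dev_xmit_complete(rc) */
            p->next = next;              /* relink and hand the rest back */
            break;
        }
        p = next;
        if (dev->queue_stopped && p) {   /* stopped queue, packets left over */
            rc = TX_BUSY;
            break;
        }
    }
    *ret = rc;
    return p;
}
```

With two free ring slots and three packets, the model sends two, sees the stopped queue and returns the third with `TX_BUSY`, which corresponds to the `netif_tx_queue_stopped(txq) && skb` branch above.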

4 xmit_one(), source code:

//net/core/dev.c

static int xmit_one(struct sk_buff *skb, struct net_device *dev,

struct netdev_queue *txq, bool more)

{

unsigned int len;

int rc;

if (dev_nit_active(dev))

dev_queue_xmit_nit(skb, dev);

len = skb->len;

trace_net_dev_start_xmit(skb, dev);

rc = netdev_start_xmit(skb, dev, txq, more);

trace_net_dev_xmit(skb, rc, dev, len);

return rc;

}

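xmit_one() first passes a copy of the packet to any registered packet taps (dev_queue_xmit_nit(), which is how tools such as tcpdump see outgoing traffic), then calls netdev_start_xmit() to hand the packet to the device; netdev_start_xmit() in turn calls __netdev_start_xmit().

5 netdev_start_xmit(), source code: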

//include/linux/netdevice.h

static inline netdev_tx_t netdev_start_xmit(struct sk_buff *skb, struct net_device *dev,

struct netdev_queue *txq, bool more)

{

const struct net_device_ops *ops = dev->netdev_ops;

netdev_tx_t rc;

rc = __netdev_start_xmit(ops, skb, dev, more);

if (rc == NETDEV_TX_OK)

txq_trans_update(txq);

return rc;

}

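This function calls __netdev_start_xmit() and, when the driver returns NETDEV_TX_OK, refreshes the queue's transmit timestamp with txq_trans_update().

6 __netdev_start_xmit(), source code: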

//include/linux/netdevice.h

static inline netdev_tx_t __netdev_start_xmit(const struct net_device_ops *ops,

struct sk_buff *skb, struct net_device *dev,

bool more)

{

__this_cpu_write(softnet_data.xmit.more, more);

return ops->ndo_start_xmit(skb, dev);

}

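This function stores the xmit_more hint in the per-CPU softnet_data and calls the driver's ndo_start_xmit callback, which hands the data to the NIC.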
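The ops-table dispatch is what keeps this layer device-independent: generic code only ever calls through dev->netdev_ops. A minimal user-space sketch of that pattern follows; `struct model_ops`, `struct model_dev`, `dummy_xmit()` and `start_xmit_model()` are hypothetical names, and txq_trans_update() is simplified to storing a caller-supplied timestamp.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

enum { TX_OK = 0, TX_BUSY = 0x10 };   /* hypothetical stand-ins */

struct model_dev;

/* Models struct net_device_ops: each driver fills in its own callbacks. */
struct model_ops {
    int (*ndo_start_xmit)(void *pkt, struct model_dev *dev);
};

struct model_dev {
    const struct model_ops *ops;
    unsigned long trans_start;   /* models txq->trans_start */
    bool xmit_more;              /* models softnet_data.xmit.more */
    int tx_packets;
};

/* A hypothetical driver callback: "transmits" by counting the packet. */
static int dummy_xmit(void *pkt, struct model_dev *dev)
{
    assert(pkt != NULL);
    dev->tx_packets++;
    return TX_OK;
}

static const struct model_ops dummy_ops = { .ndo_start_xmit = dummy_xmit };

/* Mirrors __netdev_start_xmit() + netdev_start_xmit(): record the "more"
 * hint, call the driver through its ops table, and update the timestamp
 * on success (simplified txq_trans_update()). */
static int start_xmit_model(void *pkt, struct model_dev *dev, bool more,
                            unsigned long now)
{
    dev->xmit_more = more;
    int rc = dev->ops->ndo_start_xmit(pkt, dev);
    if (rc == TX_OK)
        dev->trans_start = now;
    return rc;
}
```

Swapping `dummy_ops` for another driver's table changes the device without touching `start_xmit_model()`, which is the point of the indirection.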

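This completes the trace of the network interface layer.

Verification with gdb:

4 Kernel call flow of recv

The receive path runs bottom-up, through the network interface layer, the network layer, the transport layer and the application layer in turn.

4.1 Network interface layer

The entry point is net_rx_action(), the handler of the NET_RX_SOFTIRQ softirq. The execution flow is as follows:

1 net_rx_action(), source code: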

//net/core/dev.c

static __latent_entropy void net_rx_action(struct softirq_action *h)

{

struct softnet_data *sd = this_cpu_ptr(&softnet_data);

unsigned long time_limit = jiffies +

usecs_to_jiffies(netdev_budget_usecs);

int budget = netdev_budget;

LIST_HEAD(list);

LIST_HEAD(repoll);

local_irq_disable();

list_splice_init(&sd->poll_list, &list);

local_irq_enable();

for (;;) {

struct napi_struct *n;

if (list_empty(&list)) {

if (!sd_has_rps_ipi_waiting(sd) && list_empty(&repoll))

goto out;

break;

}

n = list_first_entry(&list, struct napi_struct, poll_list);

budget -= napi_poll(n, &repoll);

/* If softirq window is exhausted then punt.

* Allow this to run for 2 jiffies since which will allow

* an average latency of 1.5/HZ.

*/

if (unlikely(budget <= 0 ||

time_after_eq(jiffies, time_limit))) {

sd->time_squeeze++;

break;

}

}

local_irq_disable();

list_splice_tail_init(&sd->poll_list, &list);

list_splice_tail(&repoll, &list);

list_splice(&list, &sd->poll_list);

if (!list_empty(&sd->poll_list))

__raise_softirq_irqoff(NET_RX_SOFTIRQ);

net_rps_action_and_irq_enable(sd);

out:

__kfree_skb_flush();

}

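This function takes the NAPI instances queued on this CPU's poll list and calls napi_poll() on each, within a packet budget (netdev_budget) and a time limit (netdev_budget_usecs). napi_poll() invokes the driver's poll callback, which converts received frames into skbs the kernel can process and passes them to napi_gro_receive(). If the budget or time runs out while work remains, time_squeeze is incremented and NET_RX_SOFTIRQ is raised again so that processing continues in a later softirq run.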
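The budget logic can be illustrated with a small user-space model. It is a simplification under stated assumptions: each hypothetical `struct napi_model` just counts packets waiting in a ring, every instance uses a weight of 64 (the value of the kernel's NAPI_POLL_WEIGHT), a device leaves the poll list as soon as its ring is empty, and the time limit is omitted. The return value says whether NET_RX_SOFTIRQ would have to be raised again.

```c
#include <assert.h>
#include <stdbool.h>

#define MODEL_NAPI_WEIGHT 64   /* same value as the kernel's NAPI_POLL_WEIGHT */

/* Hypothetical per-device NAPI state: packets waiting in the RX ring. */
struct napi_model {
    int pending;
};

/* Models napi_poll() -> driver poll(): process at most `weight` packets. */
static int napi_poll_model(struct napi_model *n, int weight)
{
    int work = n->pending < weight ? n->pending : weight;
    n->pending -= work;
    return work;
}

/* Models one softirq run of net_rx_action() over `count` devices.
 * Returns true if work is left, i.e. NET_RX_SOFTIRQ would be re-raised. */
static bool net_rx_action_model(struct napi_model *devs, int count,
                                int budget, int *time_squeeze)
{
    assert(budget > 0);
    for (int i = 0; i < count; i++) {
        if (devs[i].pending == 0)
            continue;                       /* not on the poll list */
        budget -= napi_poll_model(&devs[i], MODEL_NAPI_WEIGHT);
        if (budget <= 0) {                  /* softirq window exhausted */
            (*time_squeeze)++;
            break;
        }
    }
    for (int i = 0; i < count; i++)
        if (devs[i].pending > 0)
            return true;                    /* still on sd->poll_list */
    return false;
}
```

In a first run with 100 and 10 pending packets, the first device uses its full weight and keeps 36 packets, so the model reports that the softirq must run again; the real function defers such instances through its repoll list in the same way.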

2 napi_gro_receive(), source code:

//net/core/dev.c

gro_result_t napi_gro_receive(struct napi_struct *napi, struct sk_buff *skb)

{

gro_result_t ret;

skb_mark_napi_id(skb, napi);

trace_napi_gro_receive_entry(skb);

skb_gro_reset_offset(skb);

ret = napi_skb_finish(dev_gro_receive(napi, skb), skb);

trace_napi_gro_receive_exit(ret);

return ret;

}

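After GRO processing in dev_gro_receive(), napi_skb_finish() passes packets that were not merged (GRO_NORMAL) on to the core receive path. The intermediate helpers differ between kernel versions, but they all end in __netif_receive_skb_core(); netif_receive_skb_core(), shown next, is an exported wrapper around the same routine.

3 netif_receive_skb_core(), source code: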

//net/core/dev.c

int netif_receive_skb_core(struct sk_buff *skb)

{

int ret;

rcu_read_lock();

ret = __netif_receive_skb_one_core(skb, false);

rcu_read_unlock();

return ret;

}

EXPORT_SYMBOL(netif_receive_skb_core);

It calls __netif_receive_skb_one_core() under rcu_read_lock() to pass the data up to the network layer.

4 __netif_receive_skb_one_core(), source code:

//net/core/dev.c

static int __netif_receive_skb_one_core(struct sk_buff *skb, bool pfmemalloc)

{

struct net_device *orig_dev = skb->dev;

struct packet_type *pt_prev = NULL;

int ret;

ret = __netif_receive_skb_core(skb, pfmemalloc, &pt_prev);

if (pt_prev)

ret = INDIRECT_CALL_INET(pt_prev->func, ipv6_rcv, ip_rcv, skb,

skb->dev, pt_prev, orig_dev);

return ret;

}

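__netif_receive_skb_core() finds the packet_type handler registered for the frame's protocol and returns it in pt_prev; for IPv4 this is ip_rcv() (for IPv6, ipv6_rcv()), which INDIRECT_CALL_INET calls directly. This completes the trace of the network interface layer.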
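The protocol demultiplexing step can be sketched as a table lookup keyed by EtherType. This is a simplified user-space model: the real kernel keeps packet_type entries in hash lists (ptype_base) registered with dev_add_pack() and also matches on the device, whereas here `struct ptype_model`, `ptype_lookup()` and the stub handlers are hypothetical and only IPv4 and IPv6 are registered.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MODEL_ETH_P_IP   0x0800   /* EtherType for IPv4 */
#define MODEL_ETH_P_IPV6 0x86DD   /* EtherType for IPv6 */

typedef int (*rx_handler_fn)(const void *pkt);

/* Hypothetical analogue of struct packet_type: EtherType -> handler. */
struct ptype_model {
    uint16_t type;
    rx_handler_fn func;
};

/* Stubs standing in for ip_rcv() and ipv6_rcv(); they return the IP version. */
static int ip_rcv_stub(const void *pkt)   { (void)pkt; return 4; }
static int ipv6_rcv_stub(const void *pkt) { (void)pkt; return 6; }

static const struct ptype_model ptype_table[] = {
    { MODEL_ETH_P_IP,   ip_rcv_stub },
    { MODEL_ETH_P_IPV6, ipv6_rcv_stub },
};

/* Returns the handler entry for an EtherType, or NULL if none is
 * registered (the kernel would then drop the frame). */
static const struct ptype_model *ptype_lookup(uint16_t type)
{
    for (size_t i = 0; i < sizeof ptype_table / sizeof ptype_table[0]; i++)
        if (ptype_table[i].type == type)
            return &ptype_table[i];
    return NULL;
}
```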

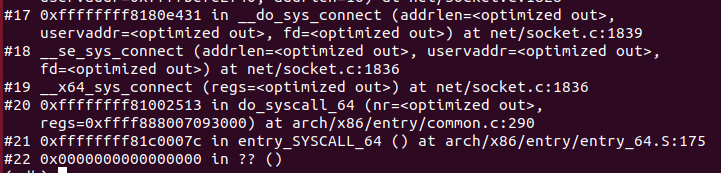

Verification with gdb:

4.2 Network layer

The entry point is ip_rcv(). The execution flow is as follows:

1 ip_rcv(), source code:

//net/ipv4/ip_input.c

int ip_rcv(struct sk_buff *skb, struct net_device *dev, struct packet_type *pt,

struct net_device *orig_dev)

{

struct net *net = dev_net(dev);

skb = ip_rcv_core(skb, net);

if (skb == NULL)

return NET_RX_DROP;

return NF_HOOK(NFPROTO_IPV4, NF_INET_PRE_ROUTING,

net, NULL, skb, dev, NULL,

ip_rcv_finish);

}

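ip_rcv_core() validates the IP header and drops malformed packets; the packet then passes through the netfilter hook NF_INET_PRE_ROUTING, and if it is accepted, ip_rcv_finish() is called.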
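NF_HOOK follows a simple pattern: run the hook functions registered at that point in priority order, and call the continuation (here ip_rcv_finish()) only if every one of them accepts the packet. A minimal user-space sketch follows; `nf_hook_model()`, the example hooks and the port-number "packet" are hypothetical, only NF_DROP and NF_ACCEPT are modelled, and a drop returns -EPERM, which is the default error the kernel reports for NF_DROP.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>

/* Same numeric values as the kernel's NF_DROP / NF_ACCEPT verdicts. */
enum verdict { V_DROP = 0, V_ACCEPT = 1 };

/* In this model a "packet" is just its destination port. */
typedef enum verdict (*hook_fn)(int dport);
typedef int (*okfn_t)(int dport);

static enum verdict accept_all(int dport)  { (void)dport; return V_ACCEPT; }
static enum verdict drop_telnet(int dport) { return dport == 23 ? V_DROP : V_ACCEPT; }
static int deliver(int dport)              { return dport; }  /* stands in for ip_rcv_finish() */

/* Models NF_HOOK(): okfn runs only if every hook returns ACCEPT. */
static int nf_hook_model(const hook_fn *hooks, size_t n, int dport, okfn_t okfn)
{
    assert(okfn != NULL);
    for (size_t i = 0; i < n; i++)
        if (hooks[i](dport) != V_ACCEPT)
            return -EPERM;   /* the kernel also frees the skb here */
    return okfn(dport);
}
```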

2 ip_rcv_finish(), source code:

//net/ipv4/ip_input.c

static int ip_rcv_finish(struct net *net, struct sock *sk, struct sk_buff *skb)

{

struct net_device *dev = skb->dev;

int ret;

/* if ingress device is enslaved to an L3 master device pass the

* skb to its handler for processing

*/

skb = l3mdev_ip_rcv(skb);

if (!skb)

return NET_RX_SUCCESS;

ret = ip_rcv_finish_core(net, sk, skb, dev);

if (ret != NET_RX_DROP)

ret = dst_input(skb);

return ret;

}

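This function performs the routing lookup: ip_rcv_finish_core() attaches a route (dst entry) to the skb, which decides whether the packet is forwarded, dropped or delivered to an upper-layer protocol. If the packet was not dropped, dst_input() is called.

3 dst_input(), source code: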

//include/net/dst.h

static inline int dst_input(struct sk_buff *skb)

{

return skb_dst(skb)->input(skb);

}

4 ip_local_deliver(), source code:

//net/ipv4/ip_input.c

/*

* Deliver IP Packets to the higher protocol layers.

*/

int ip_local_deliver(struct sk_buff *skb)

{

/*

* Reassemble IP fragments.

*/

struct net *net = dev_net(skb->dev);

if (ip_is_fragment(ip_hdr(skb))) {

if (ip_defrag(net, skb, IP_DEFRAG_LOCAL_DELIVER))

return 0;

}

return NF_HOOK(NFPROTO_IPV4, NF_INET_LOCAL_IN,

net, NULL, skb, skb->dev, NULL,

ip_local_deliver_finish);

}

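After routing, this function checks whether the packet is a fragment. Fragments are reassembled first: ip_defrag() returns non-zero while the datagram is still incomplete, and the function returns early. A packet destined for this host then passes through another hook, NF_INET_LOCAL_IN.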
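The fragment test is a bit check on the IPv4 frag_off field: a packet is a fragment if the More Fragments flag is set or the fragment offset is non-zero. The sketch below reproduces the expression used by the kernel's ip_is_fragment() in user space; the helper names and the MODEL_* constants are local to this example (their values match the kernel's IP_MF and IP_OFFSET).

```c
#include <arpa/inet.h>
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define MODEL_IP_MF     0x2000  /* "more fragments" flag */
#define MODEL_IP_OFFSET 0x1FFF  /* fragment offset mask, in 8-byte units */

/* frag_off_be is the 16-bit frag_off field as it appears on the wire
 * (network byte order), like iph->frag_off in the kernel. */
static bool is_fragment(uint16_t frag_off_be)
{
    return (frag_off_be & htons(MODEL_IP_MF | MODEL_IP_OFFSET)) != 0;
}

/* Byte offset of this fragment's payload within the original datagram. */
static unsigned frag_byte_offset(uint16_t frag_off_be)
{
    return (ntohs(frag_off_be) & MODEL_IP_OFFSET) * 8u;
}
```

A packet with only DF set (0x4000) is not a fragment; the second fragment of a datagram split for a 1500-byte MTU has offset field 185, that is byte offset 1480, and counts as a fragment even when MF is clear.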

5 ip_local_deliver_finish(), source code:

//net/ipv4/ip_input.c

static int ip_local_deliver_finish(struct net *net, struct sock *sk, struct sk_buff *skb)

{

__skb_pull(skb, skb_network_header_len(skb));

rcu_read_lock();

ip_protocol_deliver_rcu(net, skb, ip_hdr(skb)->protocol);

rcu_read_unlock();

return 0;

}

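This function runs once the packet has passed the hook. It strips the IP header with __skb_pull() and dispatches on the protocol field through ip_protocol_deliver_rcu().

6 ip_protocol_deliver_rcu(), source code: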

//net/ipv4/ip_input.c

void ip_protocol_deliver_rcu(struct net *net, struct sk_buff *skb, int protocol)

{

const struct net_protocol *ipprot;

int raw, ret;

resubmit:

raw = raw_local_deliver(skb, protocol);

ipprot = rcu_dereference(inet_protos[protocol]);

if (ipprot) {

if (!ipprot->no_policy) {

if (!xfrm4_policy_check(NULL, XFRM_POLICY_IN, skb)) {

kfree_skb(skb);

return;

}

nf_reset_ct(skb);

}

//call the handler registered for this protocol (tcp_v4_rcv() for TCP)

ret = INDIRECT_CALL_2(ipprot->handler, tcp_v4_rcv, udp_rcv,

skb);

if (ret < 0) {

protocol = -ret;

goto resubmit;

}

__IP_INC_STATS(net, IPSTATS_MIB_INDELIVERS);

} else {

if (!raw) {

if (xfrm4_policy_check(NULL, XFRM_POLICY_IN, skb)) {

__IP_INC_STATS(net, IPSTATS_MIB_INUNKNOWNPROTOS);

icmp_send(skb, ICMP_DEST_UNREACH,

ICMP_PROT_UNREACH, 0);

}

kfree_skb(skb);

} else {

__IP_INC_STATS(net, IPSTATS_MIB_INDELIVERS);

consume_skb(skb);

}

}

}

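This function first hands a copy to any matching raw sockets, then looks up the handler for the IP protocol number in inet_protos[] and calls it. A negative return value asks for the packet to be resubmitted as protocol -ret. For TCP the handler is tcp_v4_rcv(), where the transport layer begins. If there is neither a handler nor a raw socket, the packet is dropped and normally answered with an ICMP protocol-unreachable message.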
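The table-driven demultiplexing, including the resubmit convention for negative return values, can be modelled as follows. This is a user-space sketch: `inet_protos_model[]`, the stub handlers and `protocol_deliver_model()` are hypothetical, and protocol number 253 (reserved for experimentation) is used for an imaginary decapsulating handler that asks for its payload to be resubmitted as TCP.

```c
#include <assert.h>
#include <stddef.h>

#define MODEL_MAX_INET_PROTOS 256
#define MODEL_IPPROTO_TCP 6
#define MODEL_IPPROTO_UDP 17
#define MODEL_IPPROTO_EXP 253   /* experimental protocol number */

typedef int (*l4_handler)(int pkt);

static int tcp_stub(int pkt)   { (void)pkt; return 0; }
static int udp_stub(int pkt)   { (void)pkt; return 0; }
/* Imaginary decapsulator: asks for the inner payload to be handled as TCP. */
static int encap_stub(int pkt) { (void)pkt; return -MODEL_IPPROTO_TCP; }

/* Hypothetical analogue of inet_protos[]: indexed by IP protocol number. */
static const l4_handler inet_protos_model[MODEL_MAX_INET_PROTOS] = {
    [MODEL_IPPROTO_TCP] = tcp_stub,
    [MODEL_IPPROTO_UDP] = udp_stub,
    [MODEL_IPPROTO_EXP] = encap_stub,
};

/* Models ip_protocol_deliver_rcu(): returns the protocol that finally
 * consumed the packet, or -1 if nothing is registered for it. */
static int protocol_deliver_model(int protocol, int pkt)
{
    for (;;) {
        assert(protocol >= 0 && protocol < MODEL_MAX_INET_PROTOS);
        l4_handler h = inet_protos_model[protocol];
        if (h == NULL)
            return -1;
        int ret = h(pkt);
        if (ret < 0) {          /* "resubmit": treat as protocol -ret */
            protocol = -ret;
            continue;
        }
        return protocol;
    }
}
```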

Verification with gdb:

4.3 Transport layer

The entry point is tcp_v4_rcv(). The execution flow is as follows:

1 tcp_v4_rcv(), source code:

//net/ipv4/tcp_ipv4.c

int tcp_v4_rcv(struct sk_buff *skb)

{

struct net *net = dev_net(skb->dev);

struct sk_buff *skb_to_free;

int sdif = inet_sdif(skb);

const struct iphdr *iph;

const struct tcphdr *th;

bool refcounted;

struct sock *sk;

int ret;

.....

if (sk->sk_state == TCP_LISTEN) {

ret = tcp_v4_do_rcv(sk, skb);

goto put_and_return;

}

sk_incoming_cpu_update(sk);

bh_lock_sock_nested(sk);

tcp_segs_in(tcp_sk(sk), skb);

ret = 0;

if (!sock_owned_by_user(sk)) {

skb_to_free = sk->sk_rx_skb_cache;

sk->sk_rx_skb_cache = NULL;

ret = tcp_v4_do_rcv(sk, skb);

} else {

if (tcp_add_backlog(sk, skb))

goto discard_and_relse;

skb_to_free = NULL;

}

.......

}

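The function (1) fills in TCP_SKB_CB, (2) looks up the socket (control block), (3) handles the TCP_TIME_WAIT, TCP_NEW_SYN_RECV and TCP_LISTEN states specially, and (4) receives the TCP segment. For step (4), if no user process currently owns the socket, the segment is processed at once in softirq context by tcp_v4_do_rcv(); otherwise tcp_add_backlog() appends it to the socket backlog, which is processed later when the process releases the socket lock (release_sock()).

tcp_v4_do_rcv() is therefore called both for listening sockets and for sockets not currently owned by a user process.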
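This split between immediate processing and the backlog is the key timing point between the softirq and the process: a segment that arrives while recv() holds the socket lock is parked and only processed when the process calls release_sock(). The user-space sketch below models this with a plain flag instead of the real socket spinlock and owner field; `struct sock_model` and all its functions are hypothetical, and the backlog limit of 8 segments is arbitrary (the kernel bounds the backlog in bytes relative to the socket buffer sizes).

```c
#include <assert.h>
#include <stdbool.h>

#define MODEL_BACKLOG_MAX 8     /* arbitrary; the kernel limits by bytes */
#define MODEL_PROCESSED_MAX 16

struct sock_model {
    bool owned_by_user;                 /* models sock_owned_by_user() */
    int backlog[MODEL_BACKLOG_MAX];     /* models sk->sk_backlog */
    int backlog_len;
    int processed[MODEL_PROCESSED_MAX]; /* segments handled by "tcp_v4_do_rcv" */
    int processed_len;
};

/* Stands in for tcp_v4_do_rcv(): record the segment as processed. */
static void do_rcv_model(struct sock_model *sk, int seg)
{
    assert(sk->processed_len < MODEL_PROCESSED_MAX);
    sk->processed[sk->processed_len++] = seg;
}

/* Softirq side (tcp_v4_rcv()): process now, or park in the backlog.
 * Returns false if the segment had to be dropped. */
static bool softirq_rcv_model(struct sock_model *sk, int seg)
{
    if (!sk->owned_by_user) {
        do_rcv_model(sk, seg);
        return true;
    }
    if (sk->backlog_len == MODEL_BACKLOG_MAX)
        return false;                   /* tcp_add_backlog() failed */
    sk->backlog[sk->backlog_len++] = seg;
    return true;
}

/* Process side: lock_sock(), e.g. in tcp_recvmsg(), marks the owner... */
static void lock_sock_model(struct sock_model *sk)
{
    sk->owned_by_user = true;
}

/* ...and release_sock() drains the backlog through the same handler. */
static void release_sock_model(struct sock_model *sk)
{
    for (int i = 0; i < sk->backlog_len; i++)
        do_rcv_model(sk, sk->backlog[i]);
    sk->backlog_len = 0;
    sk->owned_by_user = false;
}
```

Segment 1 arrives while the socket is free and is processed in "softirq" context; segments 2 and 3 arrive while the process holds the lock and are only processed, in order, when it releases the socket.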

2 tcp_v4_do_rcv(), source code:

//net/ipv4/tcp_ipv4.c

/* The socket must have it's spinlock held when we get

* here, unless it is a TCP_LISTEN socket.

*

* We have a potential double-lock case here, so even when

* doing backlog processing we use the BH locking scheme.

* This is because we cannot sleep with the original spinlock

* held.

*/

int tcp_v4_do_rcv(struct sock *sk, struct sk_buff *skb)

{

struct sock *rsk;

if (sk->sk_state == TCP_ESTABLISHED) { /* Fast path */

struct dst_entry *dst = sk->sk_rx_dst;

sock_rps_save_rxhash(sk, skb);

sk_mark_napi_id(sk, skb);

if (dst) {

if (inet_sk(sk)->rx_dst_ifindex != skb->skb_iif ||

!dst->ops->check(dst, 0)) {

dst_release(dst);

sk->sk_rx_dst = NULL;

}

}

tcp_rcv_established(sk, skb);

return 0;

}

if (tcp_checksum_complete(skb))

goto csum_err;

if (sk->sk_state == TCP_LISTEN) {

struct sock *nsk = tcp_v4_cookie_check(sk, skb);

if (!nsk)

goto discard;

if (nsk != sk) {

if (tcp_child_process(sk, nsk, skb)) {

rsk = nsk;

goto reset;

}

return 0;

}

} else

sock_rps_save_rxhash(sk, skb);

if (tcp_rcv_state_process(sk, skb)) {

rsk = sk;

goto reset;

}

return 0;

reset:

tcp_v4_send_reset(rsk, skb);

discard:

kfree_skb(skb);

/* Be careful here. If this function gets more complicated and

* gcc suffers from register pressure on the x86, sk (in %ebx)

* might be destroyed here. This current version compiles correctly,

* but you have been warned.

*/

return 0;

csum_err:

TCP_INC_STATS(sock_net(sk), TCP_MIB_CSUMERRORS);

TCP_INC_STATS(sock_net(sk), TCP_MIB_INERRS);

goto discard;

}

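tcp_v4_do_rcv() checks the socket state: an ESTABLISHED socket takes the fast path to tcp_rcv_established(), while other states (including LISTEN) go through tcp_rcv_state_process().

3 tcp_rcv_established(), source code: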

//net/ipv4/tcp_input.c

void tcp_rcv_established(struct sock *sk, struct sk_buff *skb)

{

const struct tcphdr *th = (const struct tcphdr *)skb->data;

struct tcp_sock *tp = tcp_sk(sk);

unsigned int len = skb->len;

/* TCP congestion window tracking */

trace_tcp_probe(sk, skb);

tcp_mstamp_refresh(tp);

if (unlikely(!sk->sk_rx_dst))

inet_csk(sk)->icsk_af_ops->sk_rx_dst_set(sk, skb);

if ((tcp_flag_word(th) & TCP_HP_BITS) == tp->pred_flags &&

TCP_SKB_CB(skb)->seq == tp->rcv_nxt &&

!after(TCP_SKB_CB(skb)->ack_seq, tp->snd_nxt)) {

int tcp_header_len = tp->tcp_header_len;

/* Timestamp header prediction: tcp_header_len

* is automatically equal to th->doff*4 due to pred_flags

* match.

*/

/* Check timestamp */

if (tcp_header_len == sizeof(struct tcphdr) + TCPOLEN_TSTAMP_ALIGNED) {

/* No? Slow path! */

if (!tcp_parse_aligned_timestamp(tp, th))

goto slow_path;

/* If PAWS failed, check it more carefully in slow path */

if ((s32)(tp->rx_opt.rcv_tsval - tp->rx_opt.ts_recent) < 0)

goto slow_path;

/* DO NOT update ts_recent here, if checksum fails

* and timestamp was corrupted part, it will result

* in a hung connection since we will drop all

* future packets due to the PAWS test.

*/

}

if (len <= tcp_header_len) {

/* Bulk data transfer: sender */

if (len == tcp_header_len) {

/* Predicted packet is in window by definition.

* seq == rcv_nxt and rcv_wup <= rcv_nxt.

* Hence, check seq<=rcv_wup reduces to:

*/

if (tcp_header_len ==

(sizeof(struct tcphdr) + TCPOLEN_TSTAMP_ALIGNED) &&

tp->rcv_nxt == tp->rcv_wup)

tcp_store_ts_recent(tp);

/* We know that such packets are checksummed

* on entry.

*/

tcp_ack(sk, skb, 0);

__kfree_skb(skb);

tcp_data_snd_check(sk);

/* When receiving pure ack in fast path, update

* last ts ecr directly instead of calling

* tcp_rcv_rtt_measure_ts()

*/

tp->rcv_rtt_last_tsecr = tp->rx_opt.rcv_tsecr;

return;

} else { /* Header too small */

TCP_INC_STATS(sock_net(sk), TCP_MIB_INERRS);

goto discard;

}

} else {

int eaten = 0;

bool fragstolen = false;

if (tcp_checksum_complete(skb))

goto csum_error;

if ((int)skb->truesize > sk->sk_forward_alloc)

goto step5;

/* Predicted packet is in window by definition.

* seq == rcv_nxt and rcv_wup <= rcv_nxt.

* Hence, check seq<=rcv_wup reduces to:

*/

if (tcp_header_len ==

(sizeof(struct tcphdr) + TCPOLEN_TSTAMP_ALIGNED) &&

tp->rcv_nxt == tp->rcv_wup)

tcp_store_ts_recent(tp);

tcp_rcv_rtt_measure_ts(sk, skb);

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPHPHITS);

/* Bulk data transfer: receiver */

__skb_pull(skb, tcp_header_len);

eaten = tcp_queue_rcv(sk, skb, &fragstolen);

tcp_event_data_recv(sk, skb);

if (TCP_SKB_CB(skb)->ack_seq != tp->snd_una) {

/* Well, only one small jumplet in fast path... */

tcp_ack(sk, skb, FLAG_DATA);

tcp_data_snd_check(sk);

if (!inet_csk_ack_scheduled(sk))

goto no_ack;

}

__tcp_ack_snd_check(sk, 0);

........

}

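On the header-prediction fast path shown above (the flags match pred_flags, seq == rcv_nxt, and the ACK does not acknowledge unsent data), in-order data is queued directly with tcp_queue_rcv(). Segments that fail the prediction take the slow path (elided above), which calls tcp_data_queue().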
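The fast-path test relies on TCP sequence-number arithmetic modulo 2^32. The sketch below reproduces the kernel's before()/after() definitions from include/net/tcp.h and combines them into the three-part header-prediction check; `struct tp_model` and `fast_path_ok()` are hypothetical, and the flag word is assumed to be already masked with TCP_HP_BITS.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Same definitions as before()/after() in include/net/tcp.h:
 * compare 32-bit sequence numbers modulo 2^32. */
static inline bool seq_before(uint32_t seq1, uint32_t seq2)
{
    return (int32_t)(seq1 - seq2) < 0;
}
#define seq_after(seq2, seq1) seq_before(seq1, seq2)

/* Hypothetical subset of struct tcp_sock. */
struct tp_model {
    uint32_t pred_flags;   /* expected (masked) flag word */
    uint32_t rcv_nxt;      /* next sequence number we expect */
    uint32_t snd_nxt;      /* next sequence number we will send */
};

/* The header-prediction condition from tcp_rcv_established(). */
static bool fast_path_ok(const struct tp_model *tp, uint32_t flag_word,
                         uint32_t seq, uint32_t ack_seq)
{
    return flag_word == tp->pred_flags &&
           seq == tp->rcv_nxt &&
           !seq_after(ack_seq, tp->snd_nxt);
}
```

The wraparound case shows why a plain `<` comparison would be wrong: an ACK of 0xFFFFFFF0 is still "before" snd_nxt = 5 once the sequence space has wrapped.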

4 tcp_data_queue(), source code:

//net/ipv4/tcp_input.c

static void tcp_data_queue(struct sock *sk, struct sk_buff *skb)

{

struct tcp_sock *tp = tcp_sk(sk);

bool fragstolen;

int eaten;

if (TCP_SKB_CB(skb)->seq == TCP_SKB_CB(skb)->end_seq) {

__kfree_skb(skb);

return;

}

skb_dst_drop(skb);

__skb_pull(skb, tcp_hdr(skb)->doff * 4);

tcp_ecn_accept_cwr(sk, skb);

tp->rx_opt.dsack = 0;

/* Queue data for delivery to the user.

* Packets in sequence go to the receive queue.

* Out of sequence packets to the out_of_order_queue.

*/

if (TCP_SKB_CB(skb)->seq == tp->rcv_nxt) {

if (tcp_receive_window(tp) == 0) {

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPZEROWINDOWDROP);

goto out_of_window;

}

/* Ok. In sequence. In window. */

queue_and_out:

if (skb_queue_len(&sk->sk_receive_queue) == 0)

sk_forced_mem_schedule(sk, skb->truesize);

else if (tcp_try_rmem_schedule(sk, skb, skb->truesize)) {

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPRCVQDROP);

goto drop;

}

eaten = tcp_queue_rcv(sk, skb, &fragstolen);

if (skb->len)

tcp_event_data_recv(sk, skb);

if (TCP_SKB_CB(skb)->tcp_flags & TCPHDR_FIN)

tcp_fin(sk);

if (!RB_EMPTY_ROOT(&tp->out_of_order_queue)) {

tcp_ofo_queue(sk);

/* RFC5681. 4.2. SHOULD send immediate ACK, when

* gap in queue is filled.

*/

if (RB_EMPTY_ROOT(&tp->out_of_order_queue))

inet_csk(sk)->icsk_ack.pending |= ICSK_ACK_NOW;

}

if (tp->rx_opt.num_sacks)

tcp_sack_remove(tp);

tcp_fast_path_check(sk);

if (eaten > 0)

kfree_skb_partial(skb, fragstolen);

if (!sock_flag(sk, SOCK_DEAD))

tcp_data_ready(sk);

return;

}

......

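For an in-order, in-window segment, this function appends the data to the receive queue with tcp_queue_rcv(), handles a FIN, and moves any now-contiguous segments over from out_of_order_queue (the out-of-order path itself is elided above). Unless the socket is dead, it finally calls tcp_data_ready().

5 tcp_queue_rcv(), source code: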

//net/ipv4/tcp_input.c

static int __must_check tcp_queue_rcv(struct sock *sk, struct sk_buff *skb,

bool *fragstolen)

{

int eaten;

struct sk_buff *tail = skb_peek_tail(&sk->sk_receive_queue);

eaten = (tail &&

tcp_try_coalesce(sk, tail,

skb, fragstolen)) ? 1 : 0;

tcp_rcv_nxt_update(tcp_sk(sk), TCP_SKB_CB(skb)->end_seq);

if (!eaten) {

__skb_queue_tail(&sk->sk_receive_queue, skb);

skb_set_owner_r(skb, sk);

}

return eaten;

}

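This function first tries to merge the skb into the tail of sk_receive_queue (tcp_try_coalesce()); if that is not possible, it appends the skb to the queue and charges it to the socket. Either way it advances rcv_nxt. Control then returns to tcp_data_queue(), which calls tcp_data_ready().

6 tcp_data_ready(), source code: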

//net/ipv4/tcp_input.c

void tcp_data_ready(struct sock *sk)

{

const struct tcp_sock *tp = tcp_sk(sk);

int avail = tp->rcv_nxt - tp->copied_seq;

if (avail < sk->sk_rcvlowat && !sock_flag(sk, SOCK_DONE))

return;

sk->sk_data_ready(sk);

}

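If at least sk_rcvlowat bytes are now readable (or the peer has finished sending, SOCK_DONE), it calls sk->sk_data_ready() to signal a readable event on the socket; the default callback, sock_def_readable(), wakes processes sleeping on the socket, for example one blocked in recv().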
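The wake-up condition can be written as a small predicate. In this hypothetical helper, `rcv_nxt - copied_seq` is computed in unsigned 32-bit arithmetic, as in the kernel, so it stays correct across sequence wraparound; `sock_done` stands for the SOCK_DONE flag. The default sk_rcvlowat is 1 and can be raised with the SO_RCVLOWAT socket option.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Mirrors the test in tcp_data_ready(): wake the reader unless fewer than
 * rcvlowat bytes are available and the peer has not finished sending. */
static bool should_wake_reader(uint32_t rcv_nxt, uint32_t copied_seq,
                               int rcvlowat, bool sock_done)
{
    assert(rcvlowat >= 1);
    int avail = (int)(rcv_nxt - copied_seq);   /* unread, in-order bytes */
    return !(avail < rcvlowat && !sock_done);
}
```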

传输层追踪完毕。

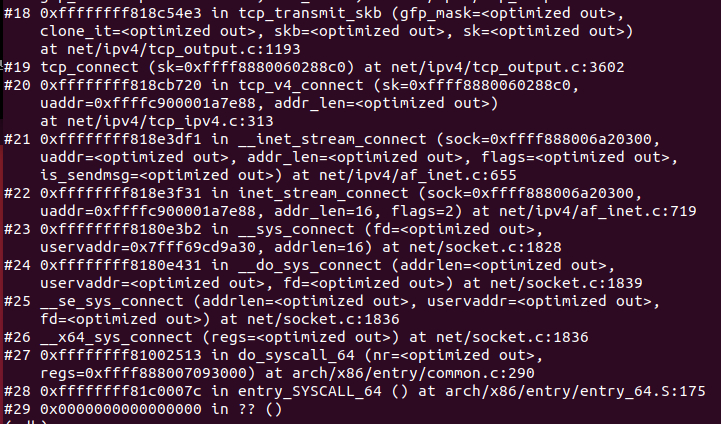

使用gdb进行验证

4.4 应用层

入口函数recv,执行过程如下:

1 recv函数,源码如下

//net/socket.c

SYSCALL_DEFINE6(recvfrom, int, fd, void __user *, ubuf, size_t, size,

unsigned int, flags, struct sockaddr __user *, addr,

int __user *, addr_len)

{

return __sys_recvfrom(fd, ubuf, size, flags, addr, addr_len);

}

/*

* Receive a datagram from a socket.

*/

SYSCALL_DEFINE4(recv, int, fd, void __user *, ubuf, size_t, size,

unsigned int, flags)

{

return __sys_recvfrom(fd, ubuf, size, flags, NULL, NULL);

}

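Both the recvfrom and the recv system calls are thin wrappers around the internal helper __sys_recvfrom(); recv simply passes NULL for the address arguments.

2 __sys_recvfrom(), source code: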

//net/socket.c

/*

* Receive a frame from the socket and optionally record the address of the

* sender. We verify the buffers are writable and if needed move the

* sender address from kernel to user space.

*/

int __sys_recvfrom(int fd, void __user *ubuf, size_t size, unsigned int flags,

struct sockaddr __user *addr, int __user *addr_len)

{

struct socket *sock;

struct iovec iov;

struct msghdr msg;

struct sockaddr_storage address;

int err, err2;

int fput_needed;

err = import_single_range(READ, ubuf, size, &iov, &msg.msg_iter);

if (unlikely(err))

return err;

sock = sockfd_lookup_light(fd, &err, &fput_needed);

if (!sock)

goto out;

msg.msg_control = NULL;

msg.msg_controllen = 0;

/* Save some cycles and don't copy the address if not needed */

msg.msg_name = addr ? (struct sockaddr *)&address : NULL;

/* We assume all kernel code knows the size of sockaddr_storage */

msg.msg_namelen = 0;

msg.msg_iocb = NULL;

msg.msg_flags = 0;

if (sock->file->f_flags & O_NONBLOCK)

flags |= MSG_DONTWAIT;

err = sock_recvmsg(sock, &msg, flags);

if (err >= 0 && addr != NULL) {

err2 = move_addr_to_user(&address,

msg.msg_namelen, addr, addr_len);

if (err2 < 0)

err = err2;

}

fput_light(sock->file, fput_needed);

out:

return err;

}

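This function describes the user buffer with an iovec and a msghdr, looks up the socket from the file descriptor, adds MSG_DONTWAIT if the file is non-blocking (O_NONBLOCK), and calls sock_recvmsg(). If the caller asked for the sender's address, it is copied back to user space afterwards.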
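The O_NONBLOCK-to-MSG_DONTWAIT conversion can be observed from user space: recv() on an empty non-blocking socket fails with EAGAIN, exactly as a blocking socket does when MSG_DONTWAIT is passed explicitly. The sketch uses socketpair(AF_UNIX) for brevity, so only the generic __sys_recvfrom() step is shared with the TCP path traced here; the helper names are hypothetical.

```c
#include <assert.h>
#include <errno.h>
#include <fcntl.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <unistd.h>

/* Calls recv() on an empty socket and returns the resulting errno
 * (0 if recv() unexpectedly succeeded, -1 on setup failure). */
static int recv_errno_when_empty(int set_o_nonblock, int flags)
{
    /* Refuse combinations that would block forever. */
    assert(set_o_nonblock || (flags & MSG_DONTWAIT));

    int sv[2];
    char buf[16];
    if (socketpair(AF_UNIX, SOCK_STREAM, 0, sv) < 0)
        return -1;

    if (set_o_nonblock) {
        int fl = fcntl(sv[0], F_GETFL);
        if (fl < 0 || fcntl(sv[0], F_SETFL, fl | O_NONBLOCK) < 0) {
            close(sv[0]);
            close(sv[1]);
            return -1;
        }
    }

    ssize_t n = recv(sv[0], buf, sizeof buf, flags);  /* nothing sent yet */
    int err = (n < 0) ? errno : 0;                    /* save before close() */

    close(sv[0]);
    close(sv[1]);
    return err;
}

/* Sends two bytes and reads them back with a non-blocking recv(). */
static ssize_t recv_len_after_send(void)
{
    int sv[2];
    char buf[16];
    if (socketpair(AF_UNIX, SOCK_STREAM, 0, sv) < 0)
        return -1;
    ssize_t n = -1;
    if (send(sv[1], "hi", 2, 0) == 2)
        n = recv(sv[0], buf, sizeof buf, MSG_DONTWAIT);
    close(sv[0]);
    close(sv[1]);
    return n;
}
```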

3 sock_recvmsg(), source code:

//net/socket.c

int sock_recvmsg(struct socket *sock, struct msghdr *msg, int flags)

{

int err = security_socket_recvmsg(sock, msg, msg_data_left(msg), flags);

return err ?: sock_recvmsg_nosec(sock, msg, flags);

}

It runs the LSM security hook security_socket_recvmsg() and, if that succeeds, calls sock_recvmsg_nosec().

4 sock_recvmsg_nosec(), source code:

//net/socket.c

static inline int sock_recvmsg_nosec(struct socket *sock, struct msghdr *msg,

int flags)

{

return INDIRECT_CALL_INET(sock->ops->recvmsg, inet6_recvmsg,

inet_recvmsg, sock, msg, msg_data_left(msg),

flags);

}

This function calls sock->ops->recvmsg, which for an IPv4 socket is inet_recvmsg().

5 inet_recvmsg(), source code:

//net/ipv4/af_inet.c

int inet_recvmsg(struct socket *sock, struct msghdr *msg, size_t size,

int flags)

{

struct sock *sk = sock->sk;

int addr_len = 0;

int err;

if (likely(!(flags & MSG_ERRQUEUE)))

sock_rps_record_flow(sk);

err = INDIRECT_CALL_2(sk->sk_prot->recvmsg, tcp_recvmsg, udp_recvmsg,

sk, msg, size, flags & MSG_DONTWAIT,

flags & ~MSG_DONTWAIT, &addr_len);

if (err >= 0)

msg->msg_namelen = addr_len;

return err;

}

//include/linux/indirect_call_wrapper.h

#define INDIRECT_CALL_1(f, f1, ...) \

({ \

likely(f == f1) ? f1(__VA_ARGS__) : f(__VA_ARGS__); \

})

#define INDIRECT_CALL_2(f, f2, f1, ...) \

({ \

likely(f == f2) ? f2(__VA_ARGS__) : \

INDIRECT_CALL_1(f, f1, __VA_ARGS__); \

})

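inet_recvmsg() dispatches to the protocol's recvmsg handler, tcp_recvmsg() for TCP, which copies data from sk_receive_queue into the user buffer; on a blocking socket with no data it sleeps until woken by sk_data_ready(). The INDIRECT_CALL_2 macro shown above compares the function pointer with the likely targets so that the common case becomes a direct call, avoiding the cost of an indirect call under retpoline mitigations. This completes the trace of the application layer.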
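The effect of INDIRECT_CALL_2 can be reproduced in user space. The sketch below copies the shape of the two macros shown above, plus a likely() definition based on __builtin_expect; it relies on GNU statement expressions, so it needs GCC or Clang. The stub handlers are hypothetical stand-ins for tcp_recvmsg() and udp_recvmsg(). In the kernel the macros only take this form when CONFIG_RETPOLINE is enabled; otherwise they reduce to a plain indirect call.

```c
#include <assert.h>

#define likely(x) __builtin_expect(!!(x), 1)

/* Same shape as include/linux/indirect_call_wrapper.h (retpoline build). */
#define INDIRECT_CALL_1(f, f1, ...) \
    ({ likely(f == f1) ? f1(__VA_ARGS__) : f(__VA_ARGS__); })
#define INDIRECT_CALL_2(f, f2, f1, ...) \
    ({ likely(f == f2) ? f2(__VA_ARGS__) : INDIRECT_CALL_1(f, f1, __VA_ARGS__); })

/* Hypothetical stand-ins for tcp_recvmsg(), udp_recvmsg() and another protocol. */
static int tcp_recvmsg_stub(int len)   { return len; }
static int udp_recvmsg_stub(int len)   { return -len; }
static int other_recvmsg_stub(int len) { (void)len; return 7; }

/* Like inet_recvmsg(): dispatch through a function pointer, but let the
 * compiler emit direct calls for the two expected targets. */
static int recvmsg_dispatch(int (*recvmsg)(int), int len)
{
    return INDIRECT_CALL_2(recvmsg, tcp_recvmsg_stub, udp_recvmsg_stub, len);
}
```

Any target other than the two listed still works; it just falls through to the ordinary indirect call `f(__VA_ARGS__)`.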

Verification with gdb:

IV. Sequence diagrams