Anti-scraping countermeasures | proxy IP | rotating User-Agent | headers settings | deduplication with redis-server | image save path settings

    # useragent.py
    import logging
    import random

    from scrapy.downloadermiddlewares.useragent import UserAgentMiddleware

    # scrapy.log was removed from Scrapy; use the standard logging module
    logger = logging.getLogger(__name__)


    class UserAgent(UserAgentMiddleware):

        def __init__(self, user_agent=''):
            self.user_agent = user_agent

        def process_request(self, request, spider):
            ua = random.choice(self.user_agent_list)
            if ua:
                # Log the User-Agent used for this request
                logger.debug('Current UserAgent: %s', ua)
                request.headers.setdefault('User-Agent', ua)

        user_agent_list = [
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 "
            "(KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1",
            "Mozilla/5.0 (X11; CrOS i686 2268.111.0) AppleWebKit/536.11 "
            "(KHTML, like Gecko) Chrome/20.0.1132.57 Safari/536.11",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.6 "
            "(KHTML, like Gecko) Chrome/20.0.1092.0 Safari/536.6",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.6 "
            "(KHTML, like Gecko) Chrome/20.0.1090.0 Safari/536.6",
            "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.1 "
            "(KHTML, like Gecko) Chrome/19.77.34.5 Safari/537.1",
            "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 "
            "(KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",
            "Mozilla/5.0 (Windows NT 6.0) AppleWebKit/536.5 "
            "(KHTML, like Gecko) Chrome/19.0.1084.36 Safari/536.5",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
            "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_0) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 "
            "(KHTML, like Gecko) Chrome/19.0.1061.0 Safari/536.3",
            "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.24 "
            "(KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",
            "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/535.24 "
            "(KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",
        ]

Proxy:

    # proxymiddlewares.py
    import random


    class ProxyMiddleware(object):
        # Proxy IP list
        proxyList = [
            '114.231.158.79:8088',
            '123.233.153.151:8118',
        ]

        def process_request(self, request, spider):
            # Set the location of the proxy
            pro_adr = random.choice(self.proxyList)
            request.meta['proxy'] = "http://" + pro_adr

settings.py:

    ROBOTSTXT_OBEY = False

    ITEM_PIPELINES = {
        'ip_proxy_pool.pipelines.IpProxyPoolPipeline': 300,
    }

    # Delay between requests, in seconds
    DOWNLOAD_DELAY = 1

    # Disable cookies
    COOKIES_ENABLED = False

    # Override the default request headers
    DEFAULT_REQUEST_HEADERS = {
        'Accept': 'text/html, application/xhtml+xml, application/xml',
        'Accept-Language': 'zh-CN,zh;q=0.8',
        'Host': 'ip84.com',
        'Referer': 'http://ip84.com/',
        'X-XHR-Referer': 'http://ip84.com/',
    }

    # Enable the custom UserAgent and proxy IP middlewares
    # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
    DOWNLOADER_MIDDLEWARES = {
        'ip_proxy_pool.useragent.UserAgent': 1,
        'ip_proxy_pool.proxymiddlewares.ProxyMiddleware': 100,
        # Disable the built-in middleware (note the module name is "downloadermiddlewares")
        'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,
    }

1. Rotating User-Agent: pick a random User-Agent for every request

1.1 settings.py

    # Several User-Agent strings to choose from
    USER_AGENTS = [
        'Mozilla/5.0 (Linux; Android 4.1.1; Nexus 7 Build/JRO03D) AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.166 Safari/535.19',
        'Mozilla/5.0 (Linux; U; Android 4.0.4; en-gb; GT-I9300 Build/IMM76D) AppleWebKit/534.30 (KHTML, like Gecko) Version/4.0 Mobile Safari/534.30',
        'Mozilla/5.0 (Linux; U; Android 2.2; en-gb; GT-P1000 Build/FROYO) AppleWebKit/533.1 (KHTML, like Gecko) Version/4.0 Mobile Safari/533.1',
        'Mozilla/5.0 (Android; Mobile; rv:14.0) Gecko/14.0 Firefox/14.0',
        'Mozilla/5.0 (Android; Tablet; rv:14.0) Gecko/14.0 Firefox/14.0',
        'Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:21.0) Gecko/20130331 Firefox/21.0',
        'Mozilla/5.0 (Windows NT 6.2; WOW64; rv:21.0) Gecko/20100101 Firefox/21.0',
        'Mozilla/5.0 (Linux; Android 4.0.4; Galaxy Nexus Build/IMM76B) AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.133 Mobile Safari/535.19',
        'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.93 Safari/537.36',
        'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.94 Safari/537.36',
        'Mozilla/5.0 (compatible; WOW64; MSIE 10.0; Windows NT 6.2)',
        'Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; en) Presto/2.9.168 Version/11.52',
        'Mozilla/5.0 (Macintosh; U; Intel Mac OS X 10_6_6; en-US) AppleWebKit/533.20.25 (KHTML, like Gecko) Version/5.0.4 Safari/533.20.27',
    ]

1.2 settings.py, continued

    # Enable the middlewares; a lower number means process_request runs earlier
    DOWNLOADER_MIDDLEWARES = {
        # 'fm.middlewares.ProxyMiddleware': 553,  # proxy IP
        'fm.middlewares.UserAgentMiddleware': 333,  # User-Agent list
        # 'fm.middlewares.UserAgenDownLoadMiddlewares': 333,  # User-Agent list
    }
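To make the "lower number runs first" rule concrete, here is a small sketch of how the priority numbers translate into call order. It is a simplified model, not Scrapy's own code: the real framework also merges in its built-in `DOWNLOADER_MIDDLEWARES_BASE`, and it calls `process_response` in the reverse order. The helper name `request_order` is made up for this illustration.

```python
# Simplified stand-in for the DOWNLOADER_MIDDLEWARES setting.
DOWNLOADER_MIDDLEWARES = {
    'fm.middlewares.ProxyMiddleware': 553,
    'fm.middlewares.UserAgentMiddleware': 333,
    # A value of None switches a middleware off entirely.
    'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,
}


def request_order(middlewares):
    """Return the enabled middleware paths, lowest priority number first."""
    enabled = {path: prio for path, prio in middlewares.items() if prio is not None}
    return sorted(enabled, key=enabled.get)


order = request_order(DOWNLOADER_MIDDLEWARES)
for path in order:
    print(path)
```

With these values the User-Agent middleware (333) sees each request before the proxy middleware (553), and the disabled built-in middleware does not appear at all.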

1.3 Middleware

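    # middlewares.py
    # User-Agent middleware; reads USER_AGENTS from the project's settings module
    from random import choice

    from fm.settings import USER_AGENTS


    class UserAgentMiddleware(object):

        def process_request(self, request, spider):
            # setdefault only sets the header if the request does not already have one
            request.headers.setdefault(b'User-Agent', choice(USER_AGENTS))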

1.4 Example: using a random User-Agent

    import random


    class UserAgentMiddleware(object):
        USER_AGENT = [
            'Mozilla/5.0 (Android; Mobile; rv:14.0) Gecko/14.0 Firefox/14.0',
            'Mozilla/5.0 (Android; Tablet; rv:14.0) Gecko/14.0 Firefox/14.0',
            'Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:21.0) Gecko/20130331 Firefox/21.0',
            'Mozilla/5.0 (Windows NT 6.2; WOW64; rv:21.0) Gecko/20100101 Firefox/21.0',
            'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.94 Safari/537.36',
            'Mozilla/5.0 (compatible; WOW64; MSIE 10.0; Windows NT 6.2)',
            'Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; en) Presto/2.9.168 Version/11.52',
            "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
            "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",
            "Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
            "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",
            "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",
            "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",
            "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",
            "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",
            "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
            "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",
            "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",
            "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",
            "Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",
            "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",
            "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",
        ]

        def process_request(self, request, spider):
            print('-----choosing a user-agent')
            user_agent = random.choice(self.USER_AGENT)
            # Direct assignment overwrites any User-Agent already on the request
            request.headers['User-Agent'] = user_agent
            return None
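Sections 1.3 and 1.4 set the header in two different ways: `headers.setdefault(...)` and `headers[...] = ...`. The sketch below shows the difference. It assumes a plain `dict` as a stand-in for `request.headers`, and the function names and the `my-fixed-agent` value are made up for the illustration.

```python
import random

# Two entries copied from the lists above; any non-empty list works.
USER_AGENTS = [
    'Mozilla/5.0 (Android; Mobile; rv:14.0) Gecko/14.0 Firefox/14.0',
    'Mozilla/5.0 (compatible; WOW64; MSIE 10.0; Windows NT 6.2)',
]


def apply_with_setdefault(headers):
    """Set a random User-Agent only when none is present yet."""
    headers.setdefault('User-Agent', random.choice(USER_AGENTS))
    return headers


def apply_with_assignment(headers):
    """Always replace the User-Agent with a random one."""
    headers['User-Agent'] = random.choice(USER_AGENTS)
    return headers


preset = {'User-Agent': 'my-fixed-agent'}        # hypothetical pre-set header
kept = apply_with_setdefault(dict(preset))       # pre-set value survives
replaced = apply_with_assignment(dict(preset))   # pre-set value is overwritten
fresh = apply_with_setdefault({})                # nothing set, so one is chosen
print(kept['User-Agent'], '|', replaced['User-Agent'], '|', fresh['User-Agent'])
```

If a spider sets its own User-Agent on some requests and that value should win, `setdefault` is the safer choice; if every request must be rotated regardless, use assignment.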

2. Proxy IP settings: switch to a random IP for every request

2.1 settings.py

    # Enable the middlewares; a lower number means process_request runs earlier
    DOWNLOADER_MIDDLEWARES = {
        'fm.proxymiddlewares.CheckProxyMiddleware': 547,
        'fm.proxymiddlewares.ProxyMiddleware': 453,  # proxy IP
        'fm.middlewares.UserAgentMiddleware': 333,  # User-Agent list
        # 'fm.middlewares.UserAgenDownLoadMiddlewares': 333,  # User-Agent list
    }

    # Rotating proxy IP list
    PROXIES = [
        "http://181.34.53.19:3308",
    ]

2.2 Code: create the file proxymiddlewares.py

    '''
    1. Proxy IP settings
    2. Proxy that requires login
    3. Proxy that does not require login
    '''
    import random


    class ProxyMiddleware(object):

        def process_request(self, request, spider):
            # Proxy that requires login:
            # request.meta['proxy'] = 'http://username:password@IP address:port'
            # Proxy that needs no login, used directly:
            # request.meta['proxy'] = 'http://IP address:port'
            ip = random.choice(spider.settings.get('PROXIES'))
            print('Testing IP:', ip)
            request.meta['proxy'] = ip


    class CheckProxyMiddleware(object):

        def process_response(self, request, response, spider):
            # .get avoids a KeyError when no proxy was set on this request
            print('Proxy IP:', request.meta.get('proxy'))
            return response
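The commented-out lines above show the two proxy URL shapes, with and without credentials. As a sketch, a small helper can build either form and percent-encode the username and password so that characters such as `@` or `:` do not break the URL. The function name `build_proxy_url` and the sample credentials are hypothetical; check that your proxy setup decodes percent-encoded credentials before relying on this.

```python
from urllib.parse import quote


def build_proxy_url(host, port, username=None, password=None, scheme='http'):
    """Build a proxy URL, with percent-encoded credentials when a username is given."""
    if username is None:
        return f'{scheme}://{host}:{port}'
    user = quote(username, safe='')
    pwd = quote(password or '', safe='')
    return f'{scheme}://{user}:{pwd}@{host}:{port}'


# No login needed
plain = build_proxy_url('181.34.53.19', 3308)
# Login needed; the password contains '@' and ':' on purpose
with_auth = build_proxy_url('181.34.53.19', 3308, 'user', 'p@ss:word')
print(plain)
print(with_auth)
```

Either result can be assigned to `request.meta['proxy']` in the same way as the entries of `PROXIES`.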

Example: using a random proxy IP

    import random


    class ProxyMiddleware(object):
        PROXY_LIST = [
            'http://106.14.255.124:80',
            'http://221.181.238.59:9091',
            'http://124.204.33.162:8000',
        ]

        def process_request(self, request, spider):
            print('-----choosing a proxy ip')
            # Pick a proxy at random
            request.meta['proxy'] = random.choice(self.PROXY_LIST)
            return None

        def process_response(self, request, response, spider):
            print('-------> check', response.status)
            if response.status != 200:
                # Bypass the duplicate filter so the request can be scheduled again
                request.dont_filter = True
                print('-----proxy ip not usable------', request.meta['proxy'])
                # Returning the request re-queues it; process_request then picks a new proxy
                return request
            return response
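One thing to watch in the example above: it re-queues every non-200 response, so a page that legitimately answers 404 would be retried without end. A minimal sketch of one way to bound this is to count retries in the request's `meta` and avoid the proxy that just failed. The `proxy_retries` key, `MAX_PROXY_RETRIES` and `plan_retry` are assumptions made for this illustration, and a plain `dict` stands in for `request.meta`.

```python
import random

PROXY_LIST = [
    'http://106.14.255.124:80',
    'http://221.181.238.59:9091',
    'http://124.204.33.162:8000',
]
MAX_PROXY_RETRIES = 3  # hypothetical cap


def plan_retry(meta, status):
    """Return the meta for a retry, or None if the response should be passed on."""
    if status == 200:
        return None
    retries = meta.get('proxy_retries', 0)
    if retries >= MAX_PROXY_RETRIES:
        return None  # give up and let the spider see the response
    # Prefer a proxy other than the one that just failed
    candidates = [p for p in PROXY_LIST if p != meta.get('proxy')] or PROXY_LIST
    new_meta = dict(meta)
    new_meta['proxy'] = random.choice(candidates)
    new_meta['proxy_retries'] = retries + 1
    return new_meta


ok = plan_retry({'proxy': PROXY_LIST[0]}, 200)
retry = plan_retry({'proxy': PROXY_LIST[0]}, 503)
exhausted = plan_retry({'proxy': PROXY_LIST[0], 'proxy_retries': 3}, 503)
print(ok, retry, exhausted)
```

In a real middleware the same decision would sit inside `process_response`: return the request again when `plan_retry` yields new meta, and return the response otherwise.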

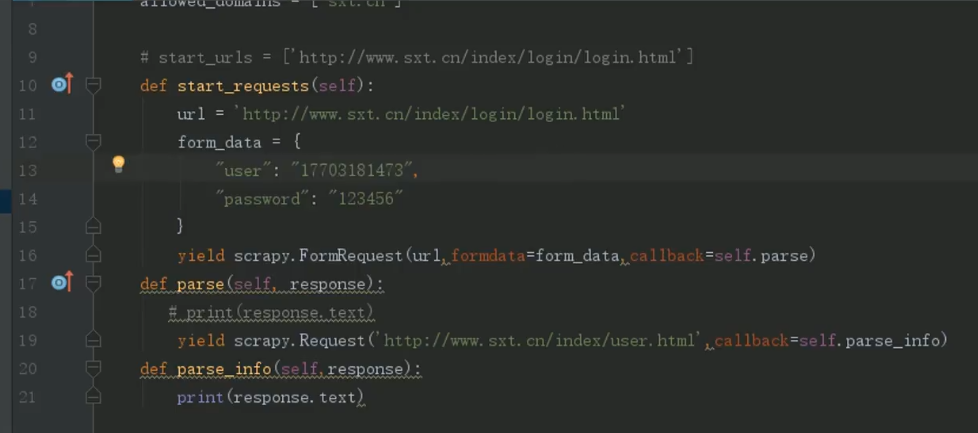

3. Cookie settings for login

4. Using Redis: examples

4.1 Deduplicating saved data: pipeline code

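    import hashlib
    import json

    # Third-party Redis client
    from redis import StrictRedis
    from scrapy.exceptions import DropItem


    # Redis-backed duplicate check
    class JianCha:

        def open_spider(self, spider):
            # 1. Create the client once; it is used to check whether Redis
            #    already holds an item that has been processed
            self.redis_client = StrictRedis(host='localhost', port=6379, db=0)

        # An item pipeline must implement process_item (not get_media_requests)
        def process_item(self, item, spider):
            # 2. Serialize the item to a string; sort_keys keeps the hash stable
            item_str = json.dumps(dict(item), sort_keys=True)
            # 3. Hash it to get a unique value; md5.update needs bytes
            md5 = hashlib.md5()
            md5.update(item_str.encode('utf-8'))
            hash_data = md5.hexdigest()
            # Check whether Redis already has this value
            if self.redis_client.get(hash_data):
                # Already present: drop the item
                raise DropItem('Item already exists in redis')
            # Not present: record it in Redis and pass the item on
            self.redis_client.set(hash_data, item_str)
            return item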
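The deduplication idea in this pipeline is: serialize the item, hash it, and use the hash as a lookup key. The sketch below isolates that logic so it can be tried without a Redis server. `SeenStore` is a made-up in-memory stand-in that mimics only the `get` and `set` calls used above; `item_fingerprint` and `is_duplicate` are likewise names invented for this example.

```python
import hashlib
import json


def item_fingerprint(item):
    """Hash an item's content; sort_keys makes the result independent of key order."""
    item_str = json.dumps(dict(item), sort_keys=True, ensure_ascii=False)
    return hashlib.md5(item_str.encode('utf-8')).hexdigest()


class SeenStore:
    """In-memory stand-in for the Redis client's get/set (illustration only)."""

    def __init__(self):
        self._data = {}

    def get(self, key):
        return self._data.get(key)

    def set(self, key, value):
        self._data[key] = value


def is_duplicate(store, item):
    """Return True if the item was seen before; otherwise record it."""
    key = item_fingerprint(item)
    if store.get(key):
        return True
    store.set(key, '1')
    return False


store = SeenStore()
first = is_duplicate(store, {'title': 'a', 'url': 'http://example.com/1'})
# Same content, different key order: still recognized as a duplicate
second = is_duplicate(store, {'url': 'http://example.com/1', 'title': 'a'})
print(first, second)
```

Without `sort_keys=True`, two items with the same fields in a different order would hash differently and slip past the check.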

4.2 settings.py: enable the pipeline above

    # Enable the pipeline
    ITEM_PIPELINES = {
        'fm.pipelines.JianCha': 300,
    }

4.3 Deduplication while crawling

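    # Fragment from inside a spider callback, after `item` has been built.
    # Needs: import hashlib, json and from redis import StrictRedis
    find_exist = False

    # 1. Create the client; it is used to check whether Redis already holds
    #    an item that has been processed
    redis_client = StrictRedis(host='localhost', port=6379, db=0)
    # 2. Serialize the item to a string
    item_str = json.dumps(dict(item))
    # 3. Hash it to get a unique value
    md5 = hashlib.md5()
    md5.update(item_str.encode())
    hash_data = md5.hexdigest()

    if redis_client.get(hash_data):
        # The item is already stored
        find_exist = True

    yield item

    if not find_exist:
        # Code that handles the next page goes here
        pass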

5. Saving images: pipeline

5.1 Defining the file path and file name

    import scrapy
    from scrapy.pipelines.images import ImagesPipeline


    class ImagePipeline(ImagesPipeline):

        # Override: issue one download request per image URL
        def get_media_requests(self, item, info):
            for image_url in item['image_urls']:
                # File name: the last segment of the URL
                name = image_url.split('/')[-1]
                yield scrapy.Request(
                    image_url,
                    meta={'name': name, 'image_path': item['image_path']},
                )

        # Override: decide where each image is stored, relative to IMAGES_STORE
        def file_path(self, request, response=None, info=None, *, item=None):
            image_path = request.meta['image_path']
            name = request.meta['name']
            # Return the path plus the file name
            return image_path + '/' + name
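The file name above comes from `image_url.split('/')[-1]`, which keeps any query string (for example `a.jpg?size=large`). A possible refinement, sketched here with only the standard library, is to parse the URL first and take the base name of its path. The helper name `image_file_path` and the sample URL are assumptions for the illustration.

```python
import posixpath
from urllib.parse import urlparse


def image_file_path(image_url, image_path):
    """Join the target folder with the URL's file name, ignoring any query string."""
    name = posixpath.basename(urlparse(image_url).path)
    return posixpath.join(image_path, name)


url = 'http://example.com/img/a.jpg?size=large'  # hypothetical image URL
naive_name = url.split('/')[-1]
clean_path = image_file_path(url, 'covers')
print(naive_name)
print(clean_path)
```

Note that a URL ending in `/` yields an empty file name with either approach, so such URLs need a fallback name.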

浙公网安备 33010602011771号
