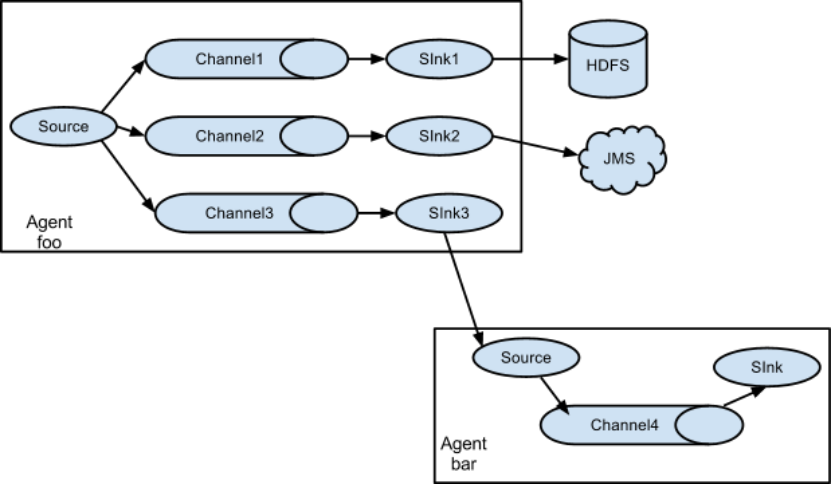

Case 5: Fan-out (passing data between Flume agents): a single Flume agent with multiple channels and sinks

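To guard against data loss, events can be buffered on disk rather than in memory (for example, with a file channel instead of a memory channel).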
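As a sketch of the disk-backed option, the snippet below writes a configuration fragment that uses Flume's `file` channel type in place of `memory` for the two channels used later in this case. The fragment file name and the checkpoint and data directories are assumptions chosen for illustration; each file channel in the same agent needs its own directories.

```shell
# Hypothetical output file; merge the fragment into flume-fanout-1.conf by hand.
FRAGMENT="${FRAGMENT:-./file-channel-fragment.conf}"

cat > "$FRAGMENT" <<'EOF'
# Disk-backed channels: buffered events survive an agent restart.
# Directory paths are assumptions; give each channel its own directories.
a1.channels.c1.type = file
a1.channels.c1.checkpointDir = /opt/flume-data/c1/checkpoint
a1.channels.c1.dataDirs = /opt/flume-data/c1/data
a1.channels.c2.type = file
a1.channels.c2.checkpointDir = /opt/flume-data/c2/checkpoint
a1.channels.c2.dataDirs = /opt/flume-data/c2/data
EOF

echo "wrote $FRAGMENT"
```

A file channel is generally slower than a memory channel, so this is a trade of throughput for durability.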

A single source can send events to multiple channels.

A sink can be connected to only one channel.

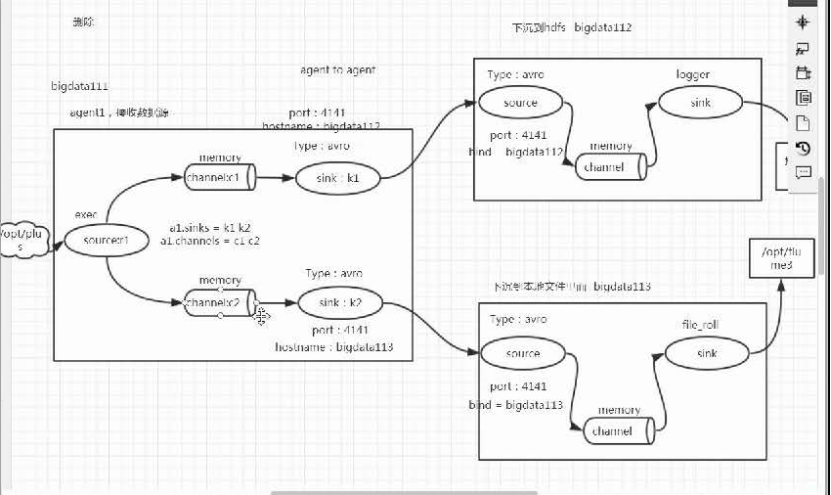

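Goal: flume1 monitors a file for changes and forwards the new content to flume-2, which is responsible for storing it in HDFS. At the same time, flume1 forwards the same content to flume-3, which writes it to the local file system. Note that the flume-fanout-2.conf shown in this walkthrough uses a logger sink, so flume-2 prints the events to its console rather than writing them to HDFS.

Step-by-step implementation: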

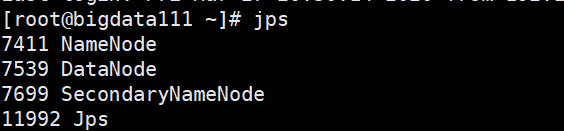

Before deploying, start HDFS first (a non-HA setup).

On bigdata111, run: start-dfs.sh

The processes started on each machine are as follows:
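One way to check the processes yourself is to run `jps` on each machine and look for the HDFS daemons. The helper below is a minimal sketch; the name `has_daemons` is hypothetical, and which daemon runs on which host depends on your cluster layout.

```shell
# has_daemons OUTPUT NAME...
# Succeeds only if every NAME appears as a whole word in OUTPUT (e.g. jps output).
has_daemons() {
  hd_output="$1"
  shift
  for hd_name in "$@"; do
    if ! printf '%s\n' "$hd_output" | grep -qw "$hd_name"; then
      echo "missing: $hd_name"
      return 1
    fi
  done
  echo "all present: $*"
}

# Example, assuming the NameNode runs on the current host:
#   has_daemons "$(jps)" NameNode
```

Whole-word matching keeps `SecondaryNameNode` from being mistaken for `NameNode`.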

1. Create flume-fanout-1.conf

This agent monitors a file for changes and sets up two channels and two sinks, which feed flume-2 and flume-3 respectively:

[root@bigdata111 myconf]# vi flume-fanout-1.conf

    # 1. agent
    a1.sources = r1
    a1.sinks = k1 k2
    a1.channels = c1 c2
    # Replicate the data flow to every channel
    a1.sources.r1.selector.type = replicating

    # 2. source
    a1.sources.r1.type = exec
    a1.sources.r1.command = tail -F /opt/plus
    a1.sources.r1.shell = /bin/bash -c

    # 3. sink 1
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = bigdata112
    a1.sinks.k1.port = 4141

    # sink 2
    a1.sinks.k2.type = avro
    a1.sinks.k2.hostname = bigdata113
    a1.sinks.k2.port = 4141

    # 4. channel 1
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # channel 2
    a1.channels.c2.type = memory
    a1.channels.c2.capacity = 1000
    a1.channels.c2.transactionCapacity = 100

    # 5. Bind the source and sinks to the channels
    a1.sources.r1.channels = c1 c2
    a1.sinks.k1.channel = c1
    a1.sinks.k2.channel = c2

2. Create flume-fanout-2.conf

This agent receives events from flume1 and sets up one channel and one sink, which writes the data to the logger:

[root@bigdata112 myconf]# vi flume-fanout-2.conf

    # 1. agent: define the source, channel, and sink
    a2.sources = r1
    a2.sinks = k1
    a2.channels = c1

    # 2. source (one)
    a2.sources.r1.type = avro
    a2.sources.r1.bind = bigdata112
    a2.sources.r1.port = 4141

    # 3. sink (one)
    a2.sinks.k1.type = logger

    # 4. channel (one)
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100

    # 5. Bind the source and sink to the channel
    a2.sources.r1.channels = c1
    a2.sinks.k1.channel = c1
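The configuration above prints events with a `logger` sink, while the stated goal has flume-2 writing to HDFS. The snippet below is a minimal sketch of an HDFS sink for `a2`, written out as a fragment that would replace the `a2.sinks.k1.type = logger` line. The NameNode address `hdfs://bigdata111:9000`, the target path, and the fragment file name are all assumptions, and the Flume host needs the Hadoop client libraries on its classpath.

```shell
# Hypothetical output file; merge the fragment into flume-fanout-2.conf by hand.
HDFS_FRAGMENT="${HDFS_FRAGMENT:-./hdfs-sink-fragment.conf}"

cat > "$HDFS_FRAGMENT" <<'EOF'
a2.sinks.k1.type = hdfs
# Assumed NameNode URI and directory; %Y%m%d is expanded from the event timestamp
a2.sinks.k1.hdfs.path = hdfs://bigdata111:9000/flume/fanout/%Y%m%d
# Use the agent's local time, since these events carry no timestamp header
a2.sinks.k1.hdfs.useLocalTimeStamp = true
# Write plain text instead of the default SequenceFile format
a2.sinks.k1.hdfs.fileType = DataStream
EOF

echo "wrote $HDFS_FRAGMENT"
```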

3. Create flume-fanout-3.conf

This agent receives events from flume1 and sets up one channel and one sink, which writes the data to a local directory:

[root@bigdata113 myconf]# vi flume-fanout-3.conf

    # 1. agent: define the source, channel, and sink
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c1

    # 2. source (one)
    a3.sources.r1.type = avro
    a3.sources.r1.bind = bigdata113
    a3.sources.r1.port = 4141

    # 3. sink (one)
    a3.sinks.k1.type = file_roll
    # Note: this directory must be created beforehand
    a3.sinks.k1.sink.directory = /opt/flume3

    # 4. channel (one)
    a3.channels.c1.type = memory
    a3.channels.c1.capacity = 1000
    a3.channels.c1.transactionCapacity = 100

    # 5. Bind the source and sink to the channel
    a3.sources.r1.channels = c1
    a3.sinks.k1.channel = c1

Important: the local output directory must already exist. If it does not, Flume will not create it.

Create the directory /opt/flume3 on bigdata113:

[root@bigdata113 opt]# mkdir flume3

[root@bigdata113 opt]# ls

ACA ACA2 ACA.zip flume3 module software

4. Run the test

Start the corresponding Flume jobs (flume1, flume-2, and flume-3, in that order), then modify the monitored file and observe the results:

    [root@bigdata111 myconf]# flume-ng agent -c ../conf/ -n a1 -f flume-fanout-1.conf -Dflume.root.logger=INFO,console
    [root@bigdata112 myconf]# flume-ng agent -c ../conf/ -n a2 -f flume-fanout-2.conf -Dflume.root.logger=INFO,console
    [root@bigdata113 myconf]# flume-ng agent -c ../conf/ -n a3 -f flume-fanout-3.conf -Dflume.root.logger=INFO,console

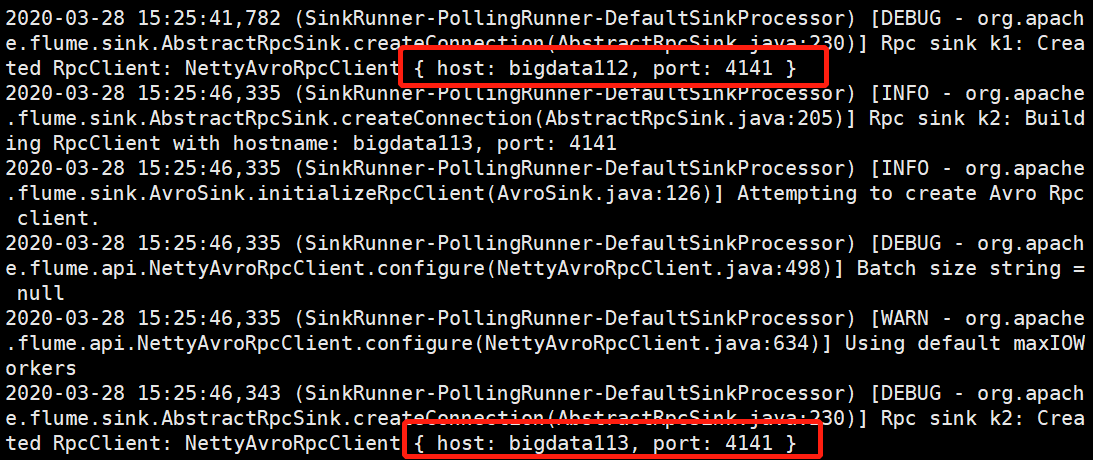

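After startup, the state of each machine is as follows:

bigdata111:

If a connection is refused, check that the ports used between the machines match and that the hostnames are correct: the hostname and port configured on the upstream sink must match the address the downstream source is bound to. Connection errors can also appear temporarily on flume1 until the downstream Avro sources on flume-2 and flume-3 are up.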
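When chasing a refused connection, it can help to test the Avro ports directly from bigdata111 before looking at the Flume logs. The helper below is a sketch under assumptions: the name `port_open` is hypothetical, and it relies on bash's `/dev/tcp` pseudo-device and the coreutils `timeout` command being available.

```shell
# port_open HOST PORT
# Succeeds if a TCP connection to HOST:PORT can be opened within 3 seconds.
port_open() {
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# Example, run on bigdata111 against the two downstream Avro sources:
#   for host in bigdata112 bigdata113; do
#     if port_open "$host" 4141; then
#       echo "$host:4141 is accepting connections"
#     else
#       echo "$host:4141 is refused or unreachable"
#     fi
#   done
```

A failure here points at the network, hostname resolution, or a downstream agent that is not running, rather than at the flume1 configuration.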

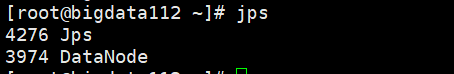

bigdata112:


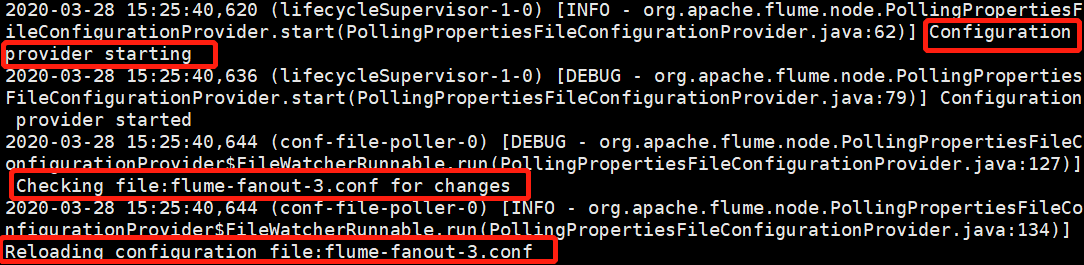

bigdata113:

5. Results

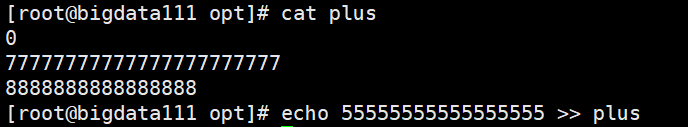

(1) On bigdata111, view the current content of the monitored file /opt/plus, then append to it:
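To generate recognizable test traffic, a few numbered lines can be appended to the monitored file. The function name `emit_test_lines` and the line format are assumptions made for this sketch.

```shell
# emit_test_lines FILE COUNT
# Appends COUNT numbered lines, each tagged with the current epoch time, to FILE.
emit_test_lines() {
  etl_i=1
  while [ "$etl_i" -le "$2" ]; do
    echo "fanout-test $etl_i $(date +%s)" >> "$1"
    etl_i=$((etl_i + 1))
  done
}

# Example, run on bigdata111:
#   emit_test_lines /opt/plus 5
```

Numbered lines make it easy to confirm that both downstream agents received every event.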

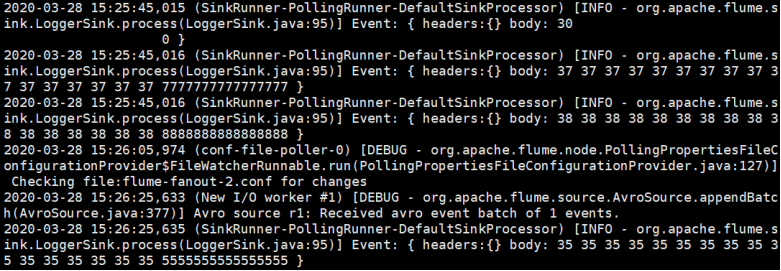

(2) On bigdata112, view the logged output:

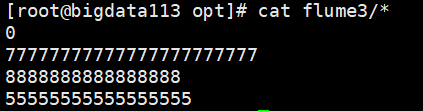

(3) On bigdata113, view the contents of all files under /opt/flume3, the directory that stores the monitored content:
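The `file_roll` sink starts a new file every 30 seconds by default (`sink.rollInterval`), so /opt/flume3 typically holds many files, some of them possibly empty. A small helper can total the delivered lines so they can be compared with what was appended on bigdata111. The name `count_rolled_events` is hypothetical, and the count may include lines that `tail -F` emitted from the end of the file when flume1 started.

```shell
# count_rolled_events DIR
# Prints the total number of lines across all regular files in DIR.
count_rolled_events() {
  find "$1" -type f -exec cat {} + | wc -l | tr -d ' '
}

# Example, run on bigdata113:
#   count_rolled_events /opt/flume3
```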

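This completes the setup: changes to the file /opt/plus on bigdata111 are monitored and delivered both to the log output on bigdata112 and to the /opt/flume3 directory on bigdata113.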

