Docker(2)

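Detailed walkthrough of complex installations

MySQL master-slave replication

Starting and configuring the master container

Start a new master container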

docker run -p 3307:3306 --name mysql-master -v /usr/local/docker/myMysql/mysql-master/log:/var/log/mysql -v /usr/local/docker/myMysql/mysql-master/data:/var/lib/mysql -v /usr/local/docker/myMysql/mysql-master/conf:/etc/mysql -e MYSQL_ROOT_PASSWORD=root -d mysql:5.7.38

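Edit the configuration file in the mounted conf directory (/usr/local/docker/myMysql/mysql-master/conf on the host)

[mysqld]
## Set server_id; it must be unique among all servers in the replication topology
server_id=101
## Database that should not be replicated
binlog-ignore-db=mysql
## Enable the binary log
log-bin=mall-mysql-bin
## Size of the per-transaction binary log cache
binlog_cache_size=1M
## Binary log format (mixed, statement, row)
binlog_format=mixed
## Number of days after which binary logs are purged; the default 0 means no automatic purging
expire_logs_days=7
## Skip all errors, or the listed error types, encountered during replication so that the slave does not stop replicating.
## For example, error 1062 is a duplicate primary key and error 1032 means the master and slave data are inconsistent.
slave_skip_errors=1062

Restart the container after changing the configuration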

docker restart mysql-master

[root@VM-0-12-centos conf]# docker restart mysql-master

mysql-master

Enter the container and create the replication user

[root@VM-0-12-centos mysql-master]# docker exec -it a675179083b3 bash

root@a675179083b3:/# mysql -uroot -proot

mysql> CREATE USER 'slave'@'%' IDENTIFIED BY '123456';

Query OK, 0 rows affected (0.01 sec)

mysql> GRANT REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'slave'@'%';

Query OK, 0 rows affected (0.01 sec)

Starting and configuring the slave container

Start a new slave container instance on port 3308

docker run -p 3308:3306 --name mysql-slave -v /usr/local/docker/myMysql/mysql-slave/log:/var/log/mysql -v /usr/local/docker/myMysql/mysql-slave/data:/var/lib/mysql -v /usr/local/docker/myMysql/mysql-slave/conf:/etc/mysql -e MYSQL_ROOT_PASSWORD=root -d mysql:5.7.38

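Edit the configuration file in the mounted conf directory (/usr/local/docker/myMysql/mysql-slave/conf on the host)

[mysqld]
## Set server_id; it must be unique among all servers in the replication topology
server_id=102
## Database that should not be replicated
binlog-ignore-db=mysql
## Enable the binary log, in case this slave later acts as master for another instance
log-bin=mall-mysql-slave1-bin
## Size of the per-transaction binary log cache
binlog_cache_size=1M
## Binary log format (mixed, statement, row)
binlog_format=mixed
## Number of days after which binary logs are purged; the default 0 means no automatic purging
expire_logs_days=7
## Skip all errors, or the listed error types, encountered during replication so that the slave does not stop replicating.
## For example, error 1062 is a duplicate primary key and error 1032 means the master and slave data are inconsistent.
slave_skip_errors=1062
## relay_log sets the relay log name
relay_log=mall-mysql-relay-bin
## log_slave_updates makes the slave write replicated events to its own binary log
log_slave_updates=1
## Make the slave read-only (except for users with the SUPER privilege)
read_only=1

As with the master, restart the slave container after changing the configuration so that it takes effect:

docker restart mysql-slave

Check the binary log status on the master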

mysql> show master status;

+-----------------------+----------+--------------+------------------+-------------------+

| File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set |

+-----------------------+----------+--------------+------------------+-------------------+

| mall-mysql-bin.000001 | 617 | | mysql | |

+-----------------------+----------+--------------+------------------+-------------------+

1 row in set (0.00 sec)

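Configure master-slave replication

master_log_file and master_log_pos below come from the File and Position columns of the show master status output on the master (shown above). master_host is the IP of the host machine and master_port is the host port mapped to the master container.

change master to master_host='<host IP>', master_user='slave', master_password='123456', master_port=3307, master_log_file='mall-mysql-bin.000001', master_log_pos=617, master_connect_retry=30;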
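The file name and position must match what the master reports when replication is set up, so it can help to assemble the statement from the status row instead of copying the values by hand. The following is a minimal sketch under stated assumptions: build_change_master is a hypothetical helper name, it expects on stdin the tab-separated row printed by mysql -N -B -e "show master status", and the user, password and port are the values used in this walkthrough.

```shell
#!/usr/bin/env bash
# Sketch: build the CHANGE MASTER statement from one row of
# "show master status" (tab-separated: File, Position, ...).
# build_change_master is an illustrative helper, not a MySQL tool.

build_change_master() {
  local host="$1" file pos _rest
  # Read the first two columns: binary log file name and position.
  IFS=$'\t' read -r file pos _rest
  printf "change master to master_host='%s', master_user='slave', master_password='123456', master_port=3307, master_log_file='%s', master_log_pos=%s, master_connect_retry=30;\n" \
    "$host" "$file" "$pos"
}

# Example using the File and Position values reported by the master:
printf 'mall-mysql-bin.000001\t617\t\tmysql\t\n' | build_change_master 172.17.0.12
```

In a live setup the row could come from docker exec mysql-master mysql -uroot -proot -N -B -e "show master status", piped into the function.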

Enter MySQL in the slave container and run the statement.

[root@VM-0-12-centos conf]# docker exec -it mysql-slave bash

root@5422c3fba9c3:/# mysql -uroot -proot

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 2

Server version: 5.7.38-log MySQL Community Server (GPL)

Copyright (c) 2000, 2022, Oracle and/or its affiliates.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> change master to master_host='<host IP>', master_user='slave', master_password='123456', master_port=3307, master_log_file='mall-mysql-bin.000001', master_log_pos=617, master_connect_retry=30;

Query OK, 0 rows affected, 2 warnings (0.04 sec)

Check the replication status on the slave

mysql> show slave status\G;

*************************** 1. row ***************************

Slave_IO_State:

Master_Host: 172.17.0.12

Master_User: slave

Master_Port: 3307

Connect_Retry: 30

Master_Log_File: mall-mysql-bin.000001

Read_Master_Log_Pos: 617

Relay_Log_File: mall-mysql-relay-bin.000001

Relay_Log_Pos: 4

Relay_Master_Log_File: mall-mysql-bin.000001

Slave_IO_Running: No

Slave_SQL_Running: No

Replicate_Do_DB:

Replicate_Ignore_DB:

Replicate_Do_Table:

Replicate_Ignore_Table:

Replicate_Wild_Do_Table:

Replicate_Wild_Ignore_Table:

Last_Errno: 0

Last_Error:

Skip_Counter: 0

Exec_Master_Log_Pos: 617

Relay_Log_Space: 154

Until_Condition: None

Until_Log_File:

Until_Log_Pos: 0

Master_SSL_Allowed: No

Master_SSL_CA_File:

Master_SSL_CA_Path:

Master_SSL_Cert:

Master_SSL_Cipher:

Master_SSL_Key:

Seconds_Behind_Master: NULL

Master_SSL_Verify_Server_Cert: No

Last_IO_Errno: 0

Last_IO_Error:

Last_SQL_Errno: 0

Last_SQL_Error:

Replicate_Ignore_Server_Ids:

Master_Server_Id: 0

Master_UUID:

Master_Info_File: /var/lib/mysql/master.info

SQL_Delay: 0

SQL_Remaining_Delay: NULL

Slave_SQL_Running_State:

Master_Retry_Count: 86400

Master_Bind:

Last_IO_Error_Timestamp:

Last_SQL_Error_Timestamp:

Master_SSL_Crl:

Master_SSL_Crlpath:

Retrieved_Gtid_Set:

Executed_Gtid_Set:

Auto_Position: 0

Replicate_Rewrite_DB:

Channel_Name:

Master_TLS_Version:

1 row in set (0.00 sec)

ERROR:

No query specified

Both thread states are still No:

Slave_IO_Running: No

Slave_SQL_Running: No

Start replication on the slave

mysql> start slave;

Query OK, 0 rows affected (0.00 sec)

Check the replication status again

mysql> show slave status\G;

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 172.17.0.12

Master_User: slave

Master_Port: 3307

Connect_Retry: 30

Master_Log_File: mall-mysql-bin.000001

Read_Master_Log_Pos: 617

Relay_Log_File: mall-mysql-relay-bin.000002

Relay_Log_Pos: 325

Relay_Master_Log_File: mall-mysql-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Once both values are Yes, replication has started successfully.
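The same check can be scripted, for example in a health check. This is a minimal sketch: replication_ok is a hypothetical helper name, and it assumes the text of show slave status\G is supplied on stdin.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if both replication threads report "Yes".
# Reads the text of "show slave status\G" from stdin.
# replication_ok is an illustrative helper name.

replication_ok() {
  local status
  status="$(cat)"
  # Match the exact field names so Slave_SQL_Running_State is not confused
  # with Slave_SQL_Running.
  echo "$status" | grep -Eq '^[[:space:]]*Slave_IO_Running:[[:space:]]*Yes[[:space:]]*$' &&
  echo "$status" | grep -Eq '^[[:space:]]*Slave_SQL_Running:[[:space:]]*Yes[[:space:]]*$'
}

# Example with the two status lines of a healthy slave:
if printf 'Slave_IO_Running: Yes\nSlave_SQL_Running: Yes\n' | replication_ok; then
  echo "replication is running"
else
  echo "replication is NOT running"
fi
```

Against the running container, the input could be produced with docker exec mysql-slave mysql -uroot -proot -e 'show slave status\G'.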

Test

Create a database on the master

mysql> create database db01;

Query OK, 1 row affected (0.01 sec)

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| db01 |

| mysql |

| performance_schema |

| sys |

+--------------------+

5 rows in set (0.00 sec)

The slave picks it up automatically

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| db01 |

| mysql |

| performance_schema |

| sys |

+--------------------+

5 rows in set (0.00 sec)

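Redis cluster mode

Configuring a Redis cluster with three masters and three slaves

Start six Redis instances as Docker containers

--net host: the container uses the host's IP and ports directly (this is not Docker's default; the default network mode is bridge)

--cluster-enabled yes: enable Redis cluster mode

--appendonly yes: enable AOF persistence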

docker run -d --name redis-node-1 --net host --privileged=true -v /usr/local/docker/redis/share/redis-node-1:/data redis:6.0.8 --cluster-enabled yes --appendonly yes --port 6381

docker run -d --name redis-node-2 --net host --privileged=true -v /usr/local/docker/redis/share/redis-node-2:/data redis:6.0.8 --cluster-enabled yes --appendonly yes --port 6382

docker run -d --name redis-node-3 --net host --privileged=true -v /usr/local/docker/redis/share/redis-node-3:/data redis:6.0.8 --cluster-enabled yes --appendonly yes --port 6383

docker run -d --name redis-node-4 --net host --privileged=true -v /usr/local/docker/redis/share/redis-node-4:/data redis:6.0.8 --cluster-enabled yes --appendonly yes --port 6384

docker run -d --name redis-node-5 --net host --privileged=true -v /usr/local/docker/redis/share/redis-node-5:/data redis:6.0.8 --cluster-enabled yes --appendonly yes --port 6385

docker run -d --name redis-node-6 --net host --privileged=true -v /usr/local/docker/redis/share/redis-node-6:/data redis:6.0.8 --cluster-enabled yes --appendonly yes --port 6386
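The six commands differ only in the node number and the port, so they can also be generated in a loop. The following is a minimal sketch rather than part of the original setup: redis_node_cmd, start_redis_nodes and the DRY_RUN switch are names introduced here for illustration. By default the script only prints the commands so they can be reviewed first.

```shell
#!/usr/bin/env bash
# Sketch: generate the six "docker run" commands in a loop.
# redis_node_cmd, start_redis_nodes and DRY_RUN are illustrative names,
# not Docker features.

# Print the docker run command for node number $1 (port 6380 + $1).
redis_node_cmd() {
  local n="$1"
  echo "docker run -d --name redis-node-${n} --net host --privileged=true" \
       "-v /usr/local/docker/redis/share/redis-node-${n}:/data redis:6.0.8" \
       "--cluster-enabled yes --appendonly yes --port $((6380 + n))"
}

# Print (DRY_RUN=1, the default) or execute (DRY_RUN=0) all six commands.
start_redis_nodes() {
  local n cmd
  for n in 1 2 3 4 5 6; do
    cmd="$(redis_node_cmd "$n")"
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "$cmd"
    else
      eval "$cmd"
    fi
  done
}
```

Calling start_redis_nodes prints the six commands; DRY_RUN=0 start_redis_nodes runs them.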

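Enter container redis-node-1 and build the cluster from the six instances

Enter the container

docker exec -it redis-node-1 /bin/bash

Build the master-slave relationships

--cluster-replicas 1 creates one slave (replica) node for each master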

redis-cli --cluster create 172.17.0.12:6381 172.17.0.12:6382 172.17.0.12:6383 172.17.0.12:6384 172.17.0.12:6385 172.17.0.12:6386 --cluster-replicas 1

>>> Performing hash slots allocation on 6 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

Adding replica 172.17.0.12:6385 to 172.17.0.12:6381

Adding replica 172.17.0.12:6386 to 172.17.0.12:6382

Adding replica 172.17.0.12:6384 to 172.17.0.12:6383

>>> Trying to optimize slaves allocation for anti-affinity

[WARNING] Some slaves are in the same host as their master

M: 361302e8e7e36c0b3f1a309350d5afeec0daa8f9 172.17.0.12:6381

slots:[0-5460] (5461 slots) master

M: 99243ec2d72f887298e045ec1615a0a2aac26d14 172.17.0.12:6382

slots:[5461-10922] (5462 slots) master

M: 6962b90f44f73ab4ce0a6a0d594f631f9aef0267 172.17.0.12:6383

slots:[10923-16383] (5461 slots) master

S: f71862404831a793f70fb01b7e7d63b51de5fe63 172.17.0.12:6384

replicates 6962b90f44f73ab4ce0a6a0d594f631f9aef0267

S: dd83f60bacaf1f794bffca2aa6e5acc899abb15f 172.17.0.12:6385

replicates 361302e8e7e36c0b3f1a309350d5afeec0daa8f9

S: 9e5aefde3dac77480e655ca14ab7967309d63607 172.17.0.12:6386

replicates 99243ec2d72f887298e045ec1615a0a2aac26d14

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

....

>>> Performing Cluster Check (using node 172.17.0.12:6381)

M: 361302e8e7e36c0b3f1a309350d5afeec0daa8f9 172.17.0.12:6381

slots:[0-5460] (5461 slots) master

1 additional replica(s)

M: 99243ec2d72f887298e045ec1615a0a2aac26d14 172.17.0.12:6382

slots:[5461-10922] (5462 slots) master

1 additional replica(s)

M: 6962b90f44f73ab4ce0a6a0d594f631f9aef0267 172.17.0.12:6383

slots:[10923-16383] (5461 slots) master

1 additional replica(s)

S: dd83f60bacaf1f794bffca2aa6e5acc899abb15f 172.17.0.12:6385

slots: (0 slots) slave

replicates 361302e8e7e36c0b3f1a309350d5afeec0daa8f9

S: 9e5aefde3dac77480e655ca14ab7967309d63607 172.17.0.12:6386

slots: (0 slots) slave

replicates 99243ec2d72f887298e045ec1615a0a2aac26d14

S: f71862404831a793f70fb01b7e7d63b51de5fe63 172.17.0.12:6384

slots: (0 slots) slave

replicates 6962b90f44f73ab4ce0a6a0d594f631f9aef0267

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.

Connect to node 6381 (for example with redis-cli -p 6381) and check the cluster state

127.0.0.1:6381> cluster info

cluster_state:ok

cluster_slots_assigned:16384

cluster_slots_ok:16384

cluster_slots_pfail:0

cluster_slots_fail:0

cluster_known_nodes:6

cluster_size:3

cluster_current_epoch:6

cluster_my_epoch:1

cluster_stats_messages_ping_sent:241

cluster_stats_messages_pong_sent:251

cluster_stats_messages_sent:492

cluster_stats_messages_ping_received:246

cluster_stats_messages_pong_received:241

cluster_stats_messages_meet_received:5

cluster_stats_messages_received:492

127.0.0.1:6381> cluster nodes

99243ec2d72f887298e045ec1615a0a2aac26d14 172.17.0.12:6382@16382 master - 0 1656403925000 2 connected 5461-10922

6962b90f44f73ab4ce0a6a0d594f631f9aef0267 172.17.0.12:6383@16383 master - 0 1656403924000 3 connected 10923-16383

361302e8e7e36c0b3f1a309350d5afeec0daa8f9 172.17.0.12:6381@16381 myself,master - 0 1656403922000 1 connected 0-5460

dd83f60bacaf1f794bffca2aa6e5acc899abb15f 172.17.0.12:6385@16385 slave 361302e8e7e36c0b3f1a309350d5afeec0daa8f9 0 1656403923000 1 connected

9e5aefde3dac77480e655ca14ab7967309d63607 172.17.0.12:6386@16386 slave 99243ec2d72f887298e045ec1615a0a2aac26d14 0 1656403924000 2 connected

f71862404831a793f70fb01b7e7d63b51de5fe63 172.17.0.12:6384@16384 slave 6962b90f44f73ab4ce0a6a0d594f631f9aef0267 0 1656403925596 3 connected
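The cluster nodes output can be summarised with a few lines of shell to confirm the three-master, three-slave layout. This is a minimal sketch: count_roles is a hypothetical helper name, and it assumes the output is supplied on stdin with the role flags in the third field, as in the listing above.

```shell
#!/usr/bin/env bash
# Sketch: summarise "cluster nodes" output read from stdin.
# Field 3 of each line holds the flags (e.g. "master", "myself,master", "slave").
# count_roles is an illustrative helper name.

count_roles() {
  awk '
    $3 ~ /master/ { masters++ }
    $3 ~ /slave/  { slaves++ }
    END { printf "masters=%d slaves=%d\n", masters, slaves }
  '
}

# Example with two of the lines from the listing above:
count_roles <<'EOF'
361302e8e7e36c0b3f1a309350d5afeec0daa8f9 172.17.0.12:6381@16381 myself,master - 0 1656403922000 1 connected 0-5460
dd83f60bacaf1f794bffca2aa6e5acc899abb15f 172.17.0.12:6385@16385 slave 361302e8e7e36c0b3f1a309350d5afeec0daa8f9 0 1656403923000 1 connected
EOF
```

On the host, redis-cli -p 6381 cluster nodes | count_roles would feed it the live output.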

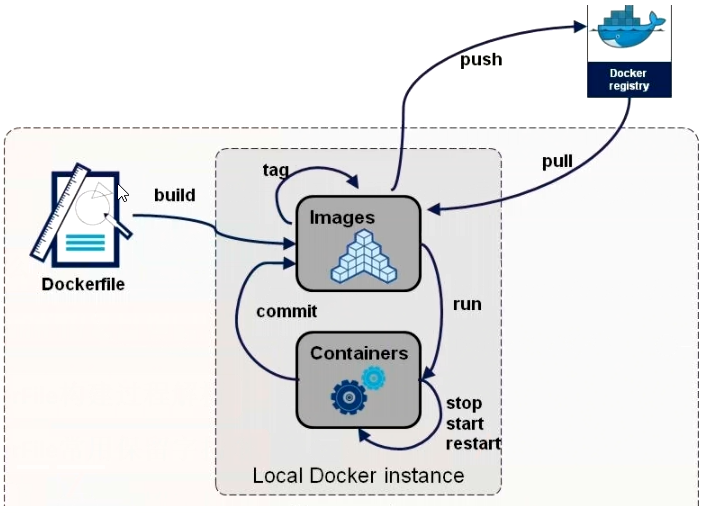

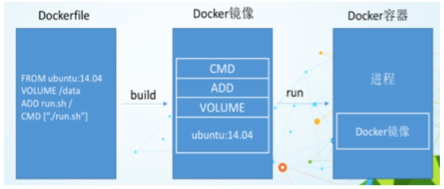

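Dockerfile

What it is

A Dockerfile is a text file used to build a Docker image: a script made up of the instructions and arguments needed to build the image. It contains all the commands a user could call on the command line to assemble an image. With docker build, users can create an automated build that executes those instructions in succession.

Official reference: https://docs.docker.com/engine/reference/builder/

Three steps to build

Write the Dockerfile

Build the image with docker build

Run a container instance from the image with docker run

How a Dockerfile build works

Dockerfile basics

- Instruction keywords are written in uppercase by convention (Docker itself does not require it), and each must be followed by at least one argument

- Instructions are executed in order, from top to bottom

- # starts a comment

- Each instruction creates a new image layer and commits it to the image

The rough flow when Docker executes a Dockerfile

- Docker runs a container from the base image

- It executes one instruction and modifies the container

- It performs an operation similar to docker commit to commit a new image layer

- Docker then runs a new container based on the image just committed

- It executes the next instruction in the Dockerfile, until all instructions have been executed

Summary

From the point of view of application software, the Dockerfile, the Docker image and the Docker container represent three different stages of the software:

- The Dockerfile is the raw material of the software;

- The Docker image is the deliverable;

- The Docker container is the image in its running state, that is, a container instance run from the image.

The Dockerfile faces development, the Docker image becomes the delivery standard, and the Docker container concerns deployment and operations. None of the three can be omitted; together they form the foundation of the Docker ecosystem.

- Dockerfile: you define a Dockerfile, which specifies everything the process needs: the code or files to execute, environment variables, dependency packages, the runtime environment, dynamic link libraries, the operating system distribution, service processes, kernel processes, and so on.

- Docker image: once the Dockerfile is defined, docker build produces a Docker image; the service actually starts when the image is run.

- Docker container: the container is what directly provides the service.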

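Dockerfile instruction keywords

For reference, see the Tomcat 8 Dockerfile: https://github.com/docker-library/tomcat

FROM

The base image: the existing image that the new image is built on, used as a template. It must be the first instruction (only ARG may come before it)

MAINTAINER

Name and e-mail address of the image maintainer (deprecated in current Docker versions in favour of LABEL)

RUN

A command to run while the image is being built

RUN executes at docker build time

Two forms

shell form: RUN yum -y install vim

exec form: RUN ["yum", "-y", "install", "vim"]

EXPOSE

The port the container exposes. EXPOSE only documents the port; publishing it on the host still requires -p or -P on docker run

WORKDIR

The working directory in which a terminal lands by default after the container is created

USER

The user the image runs as; if none is specified, the default is root

ENV

Sets environment variables during the image build

ENV MY_PATH /usr/mytest: this variable can be used in any later RUN instruction, as if the command were prefixed with the variable assignment; it can also be used directly in other instructions, for example WORKDIR $MY_PATH

ADD

Copies files from the host's build context into the image; it can also fetch URLs and automatically unpacks local tar archives

COPY

Like ADD, copies files and directories into the image: the file or directory at <source path> in the build context is copied to <destination path> in a new layer of the image

COPY ["src", "dest"]

VOLUME

Container data volume, used to store and persist data

CMD

Specifies what the container does when it starts.

Note

A Dockerfile may contain several CMD instructions, but only the last one takes effect; CMD is replaced by any arguments given after the image name in docker run

How it differs from RUN

CMD runs at docker run time.

RUN runs at docker build time.

ENTRYPOINT

Also specifies a command to run when the container starts

Similar to CMD, but ENTRYPOINT is not overridden by the arguments after docker run (only the --entrypoint option replaces it); those arguments are instead passed as parameters to the program named by ENTRYPOINT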
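The interaction between ENTRYPOINT, CMD and the arguments of docker run can be modelled in a few lines of shell. This is only a sketch of the rule for the exec form, not Docker's own code: effective_command is a hypothetical helper, and the nginx values are illustrative.

```shell
#!/usr/bin/env bash
# Sketch: model how Docker combines ENTRYPOINT, CMD and "docker run" arguments
# (exec form only). effective_command is an illustrative helper, not a Docker command.
#
# Usage: effective_command "<entrypoint>" "<cmd>" [docker run arguments...]
# Pass "" for an unset ENTRYPOINT or CMD.

effective_command() {
  local entrypoint="$1" cmd="$2"
  shift 2
  local tail="$cmd"
  # Arguments given after the image name replace CMD ...
  if [ "$#" -gt 0 ]; then
    tail="$*"
  fi
  # ... and whatever remains is appended to ENTRYPOINT.
  if [ -n "$entrypoint" ] && [ -n "$tail" ]; then
    echo "$entrypoint $tail"
  else
    echo "${entrypoint}${tail}"
  fi
}

effective_command "" "catalina.sh run"                # prints: catalina.sh run
effective_command "" "catalina.sh run" /bin/bash      # prints: /bin/bash
effective_command "nginx -c" "/etc/nginx/nginx.conf"  # prints: nginx -c /etc/nginx/nginx.conf
effective_command "nginx -c" "/etc/nginx/nginx.conf" /etc/nginx/new.conf  # prints: nginx -c /etc/nginx/new.conf
```

The second call shows why docker run -it tomcat /bin/bash does not start Tomcat: the argument replaces CMD. The last call shows ENTRYPOINT keeping its program while the argument replaces only the default parameter.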

Writing a custom Dockerfile

A CentOS 7 image with vim, ifconfig and JDK 8

Write the Dockerfile

FROM centos:centos7
MAINTAINER metype<metype@aliyun.com>
ENV MYPATH /usr/local
WORKDIR $MYPATH
# Install the vim editor
RUN yum -y install vim
# Install net-tools, which provides ifconfig for checking the network IP
RUN yum -y install net-tools
# Install Java 8 and the libraries it needs
RUN yum -y install glibc.i686
RUN mkdir /usr/local/java
# ADD takes a path relative to the build context: the JDK archive must sit in the same directory as the Dockerfile
ADD jdk-8u201-linux-x64.tar.gz /usr/local/java/
# Configure the Java environment variables
ENV JAVA_HOME /usr/local/java/jdk1.8.0_201
ENV JRE_HOME $JAVA_HOME/jre
ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar:$JRE_HOME/lib:$CLASSPATH
ENV PATH $JAVA_HOME/bin:$PATH
EXPOSE 80
# Only the last CMD takes effect
CMD echo $MYPATH
CMD echo "success-----------------------ok"
CMD /bin/bash

Error log

Step 5/15 : RUN yum -y install glibc.i686

---> Running in 92eba1ce9c2c

CentOS Linux 8 - AppStream 96 B/s | 38 B 00:00

Error: Failed to download metadata for repo 'appstream': Cannot prepare internal mirrorlist: No URLs in mirrorlist

The command '/bin/sh -c yum -y install glibc.i686' returned a non-zero code: 1

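This error is usually a CentOS version problem. The log shows "CentOS Linux 8": when FROM gives no tag, Docker uses the latest tag, taking the local image if one exists and pulling it otherwise. CentOS 8 has reached end of life and its default mirror list no longer returns any URLs, so yum fails.

Pinning the version in FROM, for example centos:centos7, fixes it.

Build the image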

docker build -t centosjava8:1.0 .

After a successful build, the image appears in the image list.

[root@VM-0-12-centos myfile]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

centosjava8 1.0 eda5ce3a0a33 6 seconds ago 1.21GB

Run the image

[root@VM-0-12-centos myfile]# docker run -it centosjava8:1.0 /bin/bash

[root@4a952f66d016 local]# pwd

/usr/local

[root@4a952f66d016 local]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.1.1 netmask 255.255.0.0 broadcast 192.168.255.255

RX packets 8 bytes 656 (656.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@3fa9b7674be0 local]# java -version

java version "1.8.0_201"

Java(TM) SE Runtime Environment (build 1.8.0_201-b09)

Java HotSpot(TM) 64-Bit Server VM (build 25.201-b09, mixed mode)

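Dangling images

A dangling image is one whose repository and tag are both <none>, typically left behind when a new build or pull takes over the tag it used to have.

List dangling images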

[root@VM-0-12-centos myfile]# docker image ls -f dangling=true

REPOSITORY TAG IMAGE ID CREATED SIZE

Remove dangling images

docker image prune

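Microservice in practice

Write a test project

import org.springframework.beans.factory.annotation.Value;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class TestController {

    // Port the application listens on, injected from server.port
    @Value("${server.port}")
    private String port;

    // Returns the port so the responding instance can be identified
    @GetMapping("/getPort")
    public String getPort() {
        // The message means "The port of this service is:"
        return "服务对应的端口是:" + port;
    }
}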

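Write the Dockerfile

# Use java as the base image
FROM java:8
# Author
MAINTAINER metype
# VOLUME declares /tmp as a volume: Docker creates a directory under /var/lib/docker on the host and mounts it at /tmp in the container
VOLUME /tmp
# Add the jar to the image and rename it to metype-docker.jar (the file name must match the jar in the build directory)
ADD docker-boot-1.0-SNAPSHOT.jar metype-docker.jar
# Touch the jar to update its modification time
RUN bash -c 'touch /metype-docker.jar'
# Run the jar when the container starts
ENTRYPOINT ["java","-jar","/metype-docker.jar"]
# Expose port 8080
EXPOSE 8080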

Build the image

[root@VM-0-12-centos springfile]# docker build -t springfile:1.0 .

Sending build context to Docker daemon 17.85MB

Step 1/7 : FROM java:8

8: Pulling from library/java

5040bd298390: Pull complete

fce5728aad85: Pull complete

76610ec20bf5: Pull complete

60170fec2151: Pull complete

e98f73de8f0d: Pull complete

11f7af24ed9c: Pull complete

49e2d6393f32: Pull complete

bb9cdec9c7f3: Pull complete

Digest: sha256:c1ff613e8ba25833d2e1940da0940c3824f03f802c449f3d1815a66b7f8c0e9d

Status: Downloaded newer image for java:8

---> d23bdf5b1b1b

Step 2/7 : MAINTAINER metype

---> Running in 9b3390e340db

Removing intermediate container 9b3390e340db

---> 1a145f30d992

Step 3/7 : VOLUME /tmp

---> Running in 0357696b4426

Removing intermediate container 0357696b4426

---> 035fe5536124

Step 4/7 : ADD docker-boot-1.0-SNAPSHOT.jar metype-docker.jar

---> 055b37060802

Step 5/7 : RUN bash -c 'touch /metype-docker.jar'

---> Running in 9f5db939f3f9

Removing intermediate container 9f5db939f3f9

---> 983740de7055

Step 6/7 : ENTRYPOINT ["java","-jar","/metype-docker.jar"]

---> Running in f4fab6647669

Removing intermediate container f4fab6647669

---> 702475b93c6a

Step 7/7 : EXPOSE 8080

---> Running in 2e78dcd8a49f

Removing intermediate container 2e78dcd8a49f

---> 5edf8581113d

Successfully built 5edf8581113d

Successfully tagged springfile:1.0

Check the built image

[root@VM-0-12-centos springfile]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

springfile 1.0 5edf8581113d 57 seconds ago 679MB

Run the image

[root@VM-0-12-centos springfile]# docker run -p 8080:8080 -d springfile:1.0

29e45b597964023f5150e0a34d92c67e37280682bfb0c41f9bab3e2edf9fa8fd

Test by requesting /getPort on port 8080 of the host

服务对应的端口是:8080 (that is, "The port of this service is: 8080")

Docker networking

What it is

Network interfaces on the host after Docker starts:

[root@VM-0-12-centos ~]# ifconfig

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet netmask 255.255.0.0 broadcast 172.18.255.255

inet6 fe80::42:4dff:fe14:40b7 prefixlen 64 scopeid 0x20<link>

ether 02:42:4d:14:40:b7 txqueuelen 0 (Ethernet)

RX packets 69701 bytes 37799629 (36.0 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 77094 bytes 253620305 (241.8 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet netmask 255.255.240.0 broadcast

inet6 fe80::5054:ff:fef9:55ab prefixlen 64 scopeid 0x20<link>

ether 52:54:00:f9:55:ab txqueuelen 1000 (Ethernet)

RX packets 47838389 bytes 10670522116 (9.9 GiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 45340795 bytes 6765443388 (6.3 GiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 3220109 bytes 2279345444 (2.1 GiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 3220109 bytes 2279345444 (2.1 GiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

A virtual bridge named docker0 is created

Common basic commands

All commands

[root@VM-0-12-centos ~]# docker network --help

Usage: docker network COMMAND

Manage networks

Commands:

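connect     Connect a container to a network
create      Create a network
disconnect  Disconnect a container from a network
inspect     Display detailed information on one or more networks
ls          List networks
prune       Remove all unused networks
rm          Remove one or more networks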

Create a network

[root@VM-0-12-centos ~]# docker network create aa_network

1478a96c18d29ce3484226b3725b34d71715cc089388c005918aa573e3a7700d

List all networks

[root@VM-0-12-centos ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

1478a96c18d2 aa_network bridge local

4a092463baec bridge bridge local

7ae20de2806e host host local

9d820dd40aa0 none null local

Remove a network

[root@VM-0-12-centos ~]# docker network rm 1478a96c18d2

1478a96c18d2

[root@VM-0-12-centos ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

4a092463baec bridge bridge local

7ae20de2806e host host local

9d820dd40aa0 none null local

Show the details of a network

[root@VM-0-12-centos ~]# docker network inspect 4a092463baec

[

{

"Name": "bridge",

"Id": "4a092463baecdb84e011b9fcab9610721d25b1f8ce34ea4916ae6aea5554baaf",

"Created": "2022-06-29T21:37:57.695736669+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "175.18.0.0/16",

"Gateway": "175.18.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {

"af8a51cde1a781b482146832ffe3996dfe958ac9f504a24b63d4a9e33b8369ef": {

"Name": "mysql",

"EndpointID": "723bb926ba019c7c65da0d9b2f1a2e71d316d2e571e35f288dfd8df7039a418c",

"MacAddress": "02:42:ac:12:00:02",

"IPv4Address": "175.18.0.2/16",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.default_bridge": "true",

"com.docker.network.bridge.enable_icc": "true",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",

"com.docker.network.bridge.name": "docker0",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

]

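What it is for

Interconnection and communication between containers, plus port mapping

When a container's IP changes, containers on a user-defined network can still reach each other by service (container) name and are not affected by the change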
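Name-based communication works on user-defined networks; the default bridge network does not resolve container names automatically. The following sketch shows the idea under stated assumptions: demo_net, web1 and web2 are illustrative names, the tomcat image is assumed to ship getent, and DRY_RUN is a switch of this script (default 1, which only prints the commands).

```shell
#!/usr/bin/env bash
# Sketch: two containers on a user-defined network reach each other by name.
# demo_net, web1 and web2 are illustrative names; DRY_RUN is a switch of this
# script (default 1 = only print the commands), not a Docker feature.

run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

name_resolution_demo() {
  run docker network create demo_net
  run docker run -d --network demo_net --name web1 tomcat
  run docker run -d --network demo_net --name web2 tomcat
  # Inside web1, the name "web2" resolves to web2's current IP,
  # so callers do not need to know or track that IP.
  run docker exec web1 getent hosts web2
}

name_resolution_demo
```

With DRY_RUN=0 the last command should print the IP currently assigned to web2 followed by its name.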

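Network modes

| Network mode | Description |
|---|---|
| bridge | Each container is assigned and configured with its own IP and connected to the docker0 virtual bridge. This is the default mode; specify it with --network bridge (docker0 is used by default) |
| host | The container does not create a virtual network interface or configure an IP of its own; it uses the host's IP and ports. Specify it with --network host |
| none | The container has its own Network namespace but no network configuration at all: no veth pair, no bridge connection, no IP. Specify it with --network none |
| container | The new container does not create its own network interface or configure its own IP; it shares the IP and port range of a specified container. Specify it with --network container:NAME (or the container ID) |

Default IP assignment inside containers: the IP inside a Docker container can change

Example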

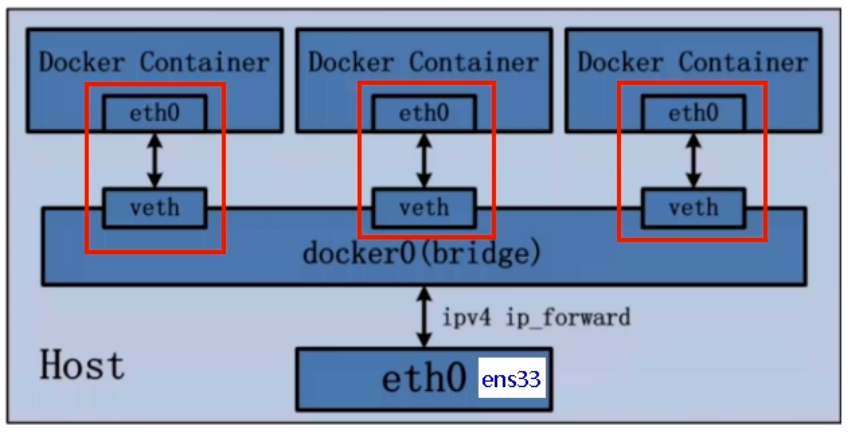

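bridge

What it is

The Docker service creates a docker0 bridge by default (with an internal docker0 interface on it). The bridge network is named docker0, and at the kernel level it connects the other physical or virtual network interfaces, which puts all containers and the local host on the same physical network. Docker assigns the docker0 interface an IP address and subnet mask by default, so the host and the containers can communicate with each other through the bridge.

# Show the details of the bridge network and use grep to pick out the name entry

[root@VM-0-12-centos springfile]# docker network inspect bridge | grep name

"com.docker.network.bridge.name": "docker0",

# ifconfig

[root@VM-0-12-centos springfile]# ifconfig | grep docker

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

Notes

- Docker uses a Linux bridge: it creates a virtual bridge for containers (docker0) on the host. When Docker starts a container, it assigns the container an IP address from the bridge's subnet, called the Container-IP, and the bridge is every container's default gateway. Because all containers on the same host are attached to the same bridge, they can communicate directly using their Container-IPs.

- When docker run does not specify a network, the default mode is bridge, which uses docker0. Running ifconfig on the host shows docker0 and any networks you created yourself (covered later). eth0, eth1, eth2... are network interface 1, 2, 3...; lo is 127.0.0.1, that is, localhost; inet addr shows an interface's IP address.

- For each container, the docker0 bridge gets a pair of peer virtual interfaces: one end is called veth and the other eth0, and they always come as a matched pair.

- The bridge for the whole host is docker0. It works like a switch with many ports, each of which is a veth: one virtual interface is created on the host and one inside the container, and the two are connected to each other (such a pair is called a veth pair);

- Every container instance also has its own network interface, called eth0;

- Each veth on docker0 matches the eth0 inside one container instance, paired one to one.

In this way all containers on the host are connected to this internal network. Two containers on the same network each get an IP assigned from the gateway's subnet, so the two containers can reach each other.

Example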

[root@VM-0-12-centos ~]# docker run -d -p 8081:8080 --name tomcat81 tomcat

b71ea094c6b9d42b9a44629a4e017869cd5beb52ae1cfca43868e4bc3b87e72f

[root@VM-0-12-centos ~]# docker run -d -p 8082:8080 --name tomcat82 tomcat

c60dcb132dd4b96571b5d2eb230955b8c8b91c5f03b1ac9a231ffb2cb00c7d4c

[root@VM-0-12-centos ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

c60dcb132dd4 tomcat "catalina.sh run" 3 seconds ago Up 2 seconds 0.0.0.0:8082->8080/tcp, :::8082->8080/tcp tomcat82

b71ea094c6b9 tomcat "catalina.sh run" 16 seconds ago Up 15 seconds 0.0.0.0:8081->8080/tcp, :::8081->8080/tcp tomcat81

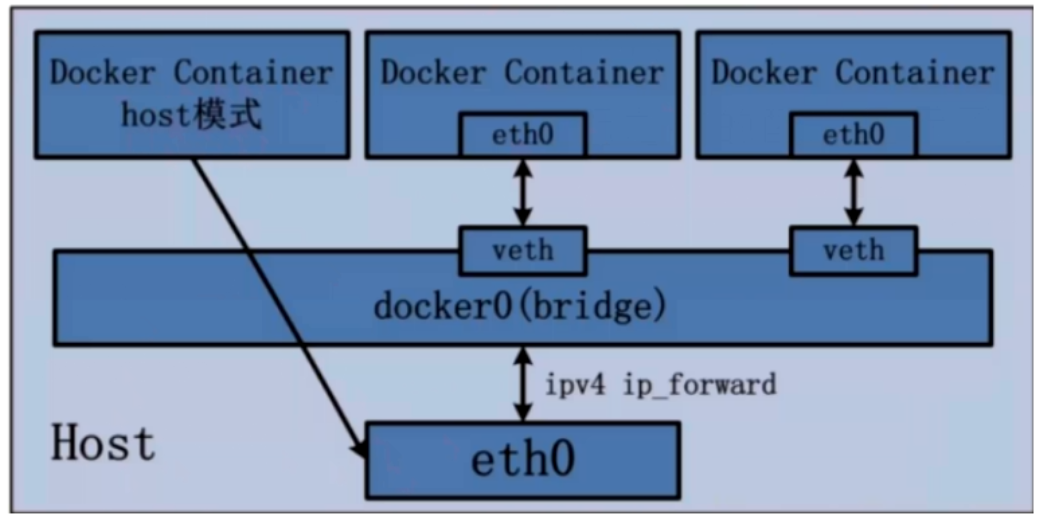

host

Notes

The container does not get its own Network Namespace; it shares the host's. It does not create a virtual network interface of its own but uses the host's IP and ports directly.

Example

[root@VM-0-12-centos ~]# docker run -d -p 8083:8080 --network host --name tomcat83 tomcat

WARNING: Published ports are discarded when using host network mode

2dc6db4387560d55d2f1a03067179b550531389757fa8f9f0c6bdb5c73373204

Check with docker ps

[root@VM-0-12-centos ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2dc6db438756 tomcat "catalina.sh run" 4 minutes ago Up 4 minutes tomcat83

c60dcb132dd4 tomcat "catalina.sh run" 52 minutes ago Up 51 minutes 0.0.0.0:8082->8080/tcp, :::8082->8080/tcp tomcat82

b71ea094c6b9 tomcat "catalina.sh run" 52 minutes ago Up 52 minutes 0.0.0.0:8081->8080/tcp, :::8081->8080/tcp tomcat81

The PORTS column of the tomcat83 container is empty: there is no port mapping.

So the correct way to start it is without the -p option.

[root@VM-0-12-centos ~]# docker run -d --network host tomcat

b25bdbecc071c48cea35e35b218d1a07ce323b7ffec0f0a2fbedabfa981c00fb

No warning is printed.

Accessing the service is the same as accessing a port on the host: request port 8080 on the host directly.

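Container orchestration

What it is

Compose is a tool from Docker, Inc. for managing an application made up of several Docker containers. You define a YAML configuration file, docker-compose.yml, that describes the containers and how they depend on each other; a single command then starts or stops all of them together. It handles quick orchestration of a group of Docker containers.

What it is for

Docker recommends running only one service per container. Because containers use very few resources, it is best to split each service out on its own, but that raises a problem:

If many services have to be deployed at once, writing a Dockerfile for each one and then building every image and creating every container by hand is exhausting. Docker therefore provides docker-compose, a tool for multi-service deployment.

For example, a web microservice project usually needs, besides the web service container itself, a MySQL database container, a Redis server, a Eureka registry, and perhaps a load-balancer container as well.

Compose lets users define a set of related application containers as one project through a single docker-compose.yml template file (YAML format).

An application with many containers can be defined in one configuration file, and one command then installs all of its dependencies and completes the build. Docker Compose solves the problem of managing and orchestrating containers together.

Download

Official documentation: https://docs.docker.com/compose/compose-file/compose-file-v3/

Installation instructions: https://docs.docker.com/compose/install/compose-plugin/#installing-compose-on-linux-systems

Steps

Download to the local machine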

[root@VM-0-12-centos ~]# curl -SL https://github.com/docker/compose/releases/download/v2.6.1/docker-compose-linux-x86_64 -o /usr/local/bin/docker-compose

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0

39 24.5M 39 9922k 0 0 39083 0 0:10:58 0:04:19 0:06:39 39710^

Add execute permission

[root@VM-0-12-centos bin]# chmod +x docker-compose

Verify

[root@VM-0-12-centos bin]# docker-compose --version

Docker Compose version v2.6.1

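Core concepts

One file

docker-compose.yml

Two elements

Project = several services

Service

An individual application container instance, for example an order microservice, an inventory microservice, a MySQL container, an nginx container or a Redis container

Project

A complete business unit made up of a group of related application containers, defined in the docker-compose.yml file.

How to use it

- Write a Dockerfile for each microservice and build the corresponding image

- Use docker-compose.yml to define a complete business unit and arrange the container services of the whole application.

- Finally, run docker-compose up to start and run the whole application, deploying it in one step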

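Common commands

Common Compose commands

docker-compose -h                        # show help
docker-compose up                        # start all docker-compose services
docker-compose up -d                     # start all docker-compose services in the background
docker-compose down                      # stop and remove the containers and networks (volumes only with -v, images only with --rmi)
docker-compose exec <service> /bin/bash  # open a shell inside a service's container; <service> is the service name in docker-compose.yml
docker-compose ps                        # list the running containers orchestrated by docker-compose
docker-compose top                       # show the processes of the containers orchestrated by docker-compose
docker-compose logs <service>            # show a service's log output
docker-compose config                    # check the configuration
docker-compose config -q                 # check the configuration; prints only if there is a problem
docker-compose restart                   # restart services
docker-compose start                     # start services
docker-compose stop                      # stop services

Write docker-compose.yml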

version: "3"

services:
  microService:
    image: docker-boot:1.6
    container_name: ms01
    ports:
      - "8080:6001"
    volumes:
      - /usr/local/docker/springfile:/data
    networks:
      - atguigu_net
    depends_on:
      - redis
      - mysql

  redis:
    image: redis:6.0.8
    ports:
      - "6379:6379"
    volumes:
      - /usr/local/docker/redis/redis/redis.conf:/etc/redis/redis.conf
      - /usr/local/docker/redis/redis/data:/data
    networks:
      - atguigu_net
    command: redis-server /etc/redis/redis.conf

  mysql:
    image: mysql:5.7.38
    environment:
      MYSQL_ROOT_PASSWORD: 'root'
      MYSQL_ALLOW_EMPTY_PASSWORD: 'no'
      MYSQL_DATABASE: 'db2022'
      MYSQL_USER: 'zzyy'
      MYSQL_PASSWORD: 'zzyy123'
    ports:
      - "33016:3306"
    volumes:
      - /usr/local/docker/mysql/db:/var/lib/mysql
      - /usr/local/docker/mysql/conf/my.cnf:/etc/my.cnf
      - /usr/local/docker/mysql/init:/docker-entrypoint-initdb.d
    networks:
      - atguigu_net
    command: --default-authentication-plugin=mysql_native_password # fixes external clients being unable to connect

networks:
  atguigu_net:

mysql

mysql:
  image: mysql:5.7.38
  environment:
    MYSQL_ROOT_PASSWORD: 'root'
    MYSQL_ALLOW_EMPTY_PASSWORD: 'no'
    MYSQL_DATABASE: 'db2022'
    MYSQL_USER: 'zzyy'
    MYSQL_PASSWORD: 'zzyy123'
  ports:
    - "33016:3306"
  volumes:
    - /usr/local/docker/mysql/db:/var/lib/mysql
    - /usr/local/docker/mysql/conf/my.cnf:/etc/my.cnf
    - /usr/local/docker/mysql/init:/docker-entrypoint-initdb.d
  networks:
    - atguigu_net
  command: --default-authentication-plugin=mysql_native_password # fixes external clients being unable to connect

mysql:
  image: "mysql:5.7.35"
  container_name: "mysql"
  ports:
    - "3306:3306"
  environment:
    MYSQL_ROOT_PASSWORD: "aea_root"
    TZ: "Asia/Shanghai"
  volumes:
    - /opt/web/aea/mysql/conf/:/etc/mysql/conf.d
    - /opt/web/aea/mysql/data:/var/lib/mysql
  restart: "always"
  logging:
    driver: "json-file"
    options:
      max-size: "1g"
  networks:
    - aea-net

redis

redis:
  image: "redis:6.0.8"
  container_name: "redis"
  ports:
    - "6379:6379"
  environment:
    TZ: "Asia/Shanghai"
  volumes:
    - /usr/local/docker/redis/redis.conf:/etc/redis/redis.conf
    - /usr/local/docker/redis/data:/data
  restart: "always"
  command: [ "redis-server", "/etc/redis/redis.conf" ]
  logging:
    driver: "json-file"
    options:
      max-size: "1g"
  networks:
    - gulimall-net

redis.conf

bind 127.0.0.1

protected-mode yes

port 6379

tcp-backlog 511

timeout 0

tcp-keepalive 300

daemonize no

supervised no

pidfile /var/run/redis_6379.pid

loglevel notice

logfile ""

databases 16

always-show-logo yes

save 900 1

save 300 10

save 60 10000

stop-writes-on-bgsave-error yes

rdbcompression yes

rdbchecksum yes

dbfilename dump.rdb

rdb-del-sync-files no

dir ./

replica-serve-stale-data yes

replica-read-only yes

repl-diskless-sync no

repl-diskless-sync-delay 5

repl-diskless-load disabled

repl-disable-tcp-nodelay no

replica-priority 100

acllog-max-len 128

lazyfree-lazy-eviction no

lazyfree-lazy-expire no

lazyfree-lazy-server-del no

replica-lazy-flush no

lazyfree-lazy-user-del no

oom-score-adj no

oom-score-adj-values 0 200 800

appendonly no

appendfilename "appendonly.aof"

appendfsync everysec

no-appendfsync-on-rewrite no

auto-aof-rewrite-percentage 100

auto-aof-rewrite-min-size 64mb

aof-load-truncated yes

aof-use-rdb-preamble yes

lua-time-limit 5000

slowlog-log-slower-than 10000

slowlog-max-len 128

latency-monitor-threshold 0

notify-keyspace-events ""

hash-max-ziplist-entries 512

hash-max-ziplist-value 64

list-max-ziplist-size -2

list-compress-depth 0

set-max-intset-entries 512

zset-max-ziplist-entries 128

zset-max-ziplist-value 64

hll-sparse-max-bytes 3000

stream-node-max-bytes 4096

stream-node-max-entries 100

activerehashing yes

client-output-buffer-limit normal 0 0 0

client-output-buffer-limit replica 256mb 64mb 60

client-output-buffer-limit pubsub 32mb 8mb 60

hz 10

dynamic-hz yes

aof-rewrite-incremental-fsync yes

rdb-save-incremental-fsync yes

jemalloc-bg-thread yes

nginx

nginx:
  container_name: nginx
  image: nginx:1.20.2
  ports:
    - "80:80"
    - "443:443"
  restart: always
  volumes:
    - /usr/local/docker/nginx/conf/nginx.conf:/etc/nginx/nginx.conf:ro
    - /usr/local/docker/nginx/html/:/usr/local/web/html/
    - /usr/local/docker/nginx/attachment/:/usr/local/web/attachment/
  environment:
    - TZ=Asia/Shanghai
  networks:
    - gulimall-net

xxl-job

xxl-job:
  container_name: xxl-job-admin
  image: xxl-job-admin:2.3.0
  build: /opt/docker/images/xxl-job-image-build
  ports:
    - "5360:8080"
  environment:
    PARAMS: --spring.datasource.url=jdbc:mysql://mysql:3306/xxl_job?useUnicode=true&characterEncoding=UTF-8&autoReconnect=true&serverTimezone=Asia/Shanghai --spring.datasource.username=root --spring.datasource.password=aea_root --xxl.job.accessToken=8888
    JAVA_OPTS: -Xms1g -Xmx1g
  restart: always
  volumes:
    - /opt/web/aea/xxl-job:/data/applogs
  networks:
    - aea-net

rabbitmq

rabbitmq:
  container_name: "rabbitmq"
  image: "rabbitmq:3.8.22-management"
  ports:
    - "3521:5672"
    - "13567:15672"
  environment:
    TZ: "Asia/Shanghai"
  volumes:
    - /opt/web/aea/rabbitmq/etc:/etc/rabbitmq
    - /opt/web/aea/rabbitmq/data:/var/lib/rabbitmq
    - /opt/web/aea/rabbitmq/plugins:/plugins
  restart: "always"
  logging:
    driver: "json-file"
    options:
      max-size: "1g"
  networks:
    - aea-net

elasticsearch

elasticsearch:
  container_name: "elasticsearch"
  image: "elasticsearch:7.4.2"
  ports:
    - "9200:9200"
    - "9300:9300"
  environment:
    - discovery.type=single-node
    - "ES_JAVA_OPTS=-Xms64m -Xmx512m"
  volumes:
    - /usr/local/docker/elasticsearch/config/elasticsearch.yml:/usr/share/elasticsearch/config/elasticsearch.yml
    - /usr/local/docker/elasticsearch/data:/usr/share/elasticsearch/data
    - /usr/local/docker/elasticsearch/plugins:/usr/share/elasticsearch/plugins
  restart: "always"
  networks:
    - gulimall-net

elasticsearch.yml

http.host: 0.0.0.0

kibana

kibana:
  container_name: "kibana"
  image: "kibana:7.4.2"
  ports:
    - "5601:5601"
  environment:
    - ELASTICSEARCH_HOSTS=http://elasticsearch:9200

nacos

nacos:
  container_name: "nacos"
  image: "nacos/nacos-server:1.1.4"
  ports:
    - "8848:8848"
  environment:
    - JVM_XMS=256m
    - JVM_XMX=256m
    - NACOS_AUTH_ENABLE=true
    - MODE=standalone
  networks:
    - gulimall-net

Verify

curl -X POST "http://127.0.0.1:8848/nacos/v1/cs/configs?dataId=nacos.cfg.dataId&group=test&content=helloWorld"

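Portainer, a lightweight visual management tool

What it is

Portainer is a lightweight application with a graphical interface for conveniently managing Docker environments, both single-host and cluster.

Installation

Install with a docker command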

docker run -d -p 8000:8000 -p 9000:9000 --name portainer --restart=always -v /var/run/docker.sock:/var/run/docker.sock -v /usr/local/docker/portainer/portainer_data:/data portainer/portainer

浙公网安备 33010602011771号
