1. How does logistic regression prevent overfitting? Why can regularization prevent overfitting? (Explain in your own words.)

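Logistic regression guards against overfitting through regularization. Regularization cuts away the part of the hypothesis space that is most prone to overfitting, which lowers the risk. An overfitted model tends to have very large coefficients; regularization adds a penalty on the norm of the parameters (for example the L1 or L2 norm) to the loss, which keeps them from growing too large and so reduces overfitting to some extent.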
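The link between regularization strength and coefficient size can be checked directly. The following is a minimal sketch, not part of the original exercise: it assumes scikit-learn's `LogisticRegression` with its default L2 penalty, where `C` is the inverse of the regularization strength (smaller `C` means a stronger penalty). The helper `coef_norm`, the chosen `C` values, the `StandardScaler` step, and `max_iter=5000` are all illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)


def coef_norm(C):
    """Fit an L2-regularized logistic regression and return the L2 norm of its weights.

    Hypothetical helper for illustration; C is the inverse regularization strength.
    """
    pipe = make_pipeline(
        StandardScaler(),                        # put all features on the same scale
        LogisticRegression(C=C, max_iter=5000),  # max_iter raised as an assumption
    )
    pipe.fit(X, y)
    return float(np.linalg.norm(pipe[-1].coef_))


# Strong, default, and weak regularization
norms = {C: coef_norm(C) for C in (0.01, 1.0, 100.0)}
for C, norm in norms.items():
    print(f"C={C:<6} ||w||_2 = {norm:.3f}")
```

The weight norm should grow as `C` increases, that is, as the penalty weakens, which is the behavior the answer above describes.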

2. Practice with logistic regression on a dataset of your choice.

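from sklearn.datasets import load_breast_cancer

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import classification_report

from sklearn.model_selection import train_test_split

cancer = load_breast_cancer()  # binary classification dataset bundled with scikit-learn

x = cancer.data  # feature matrix

y = cancer.target  # class labels (0 or 1)

x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.3, random_state=6)  # hold out 30% for testing

model = LogisticRegression(max_iter=10000)  # raised from the default of 100 so the solver can converge on unscaled features

model.fit(x_train, y_train)

y_pre = model.predict(x_test)  # predicted labels for the test set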

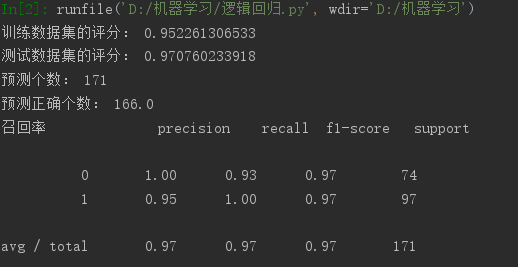

print('Training set accuracy:', model.score(x_train, y_train))

print('Test set accuracy:', model.score(x_test, y_test))

print('Number of predictions:', x_test.shape[0])

print('Number of correct predictions:', int((y_pre == y_test).sum()))

print("Classification report (precision, recall, F1):\n" + classification_report(y_test, y_pre))
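The script above gets recall as part of the full classification report. If only a single recall value is wanted, a sketch along the following lines may help. It repeats the same data split so that it runs on its own; the names `clf`, `y_pred`, and `recall`, and the `max_iter=10000` setting, are assumptions for illustration rather than part of the original code.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix, recall_score
from sklearn.model_selection import train_test_split

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=6
)

# max_iter is an assumed setting so the solver converges on unscaled features
clf = LogisticRegression(max_iter=10000)
clf.fit(X_train, y_train)
y_pred = clf.predict(X_test)

# For binary labels, ravel() yields the counts in the order tn, fp, fn, tp
tn, fp, fn, tp = confusion_matrix(y_test, y_pred).ravel()

# recall_score treats label 1 as the positive class by default
# (in this dataset, 1 is "benign" and 0 is "malignant")
recall = recall_score(y_test, y_pred)

print("confusion matrix (tn, fp, fn, tp):", tn, fp, fn, tp)
print("recall for class 1:", recall)
```

Recall here equals `tp / (tp + fn)`. Passing `pos_label=0` to `recall_score` would instead report recall for the malignant class, which is often the more relevant number for this dataset.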

