elasticsearch之ik分词器的基本操作

elasticsearch之ik分词器的基本操作

前言

- 首先将

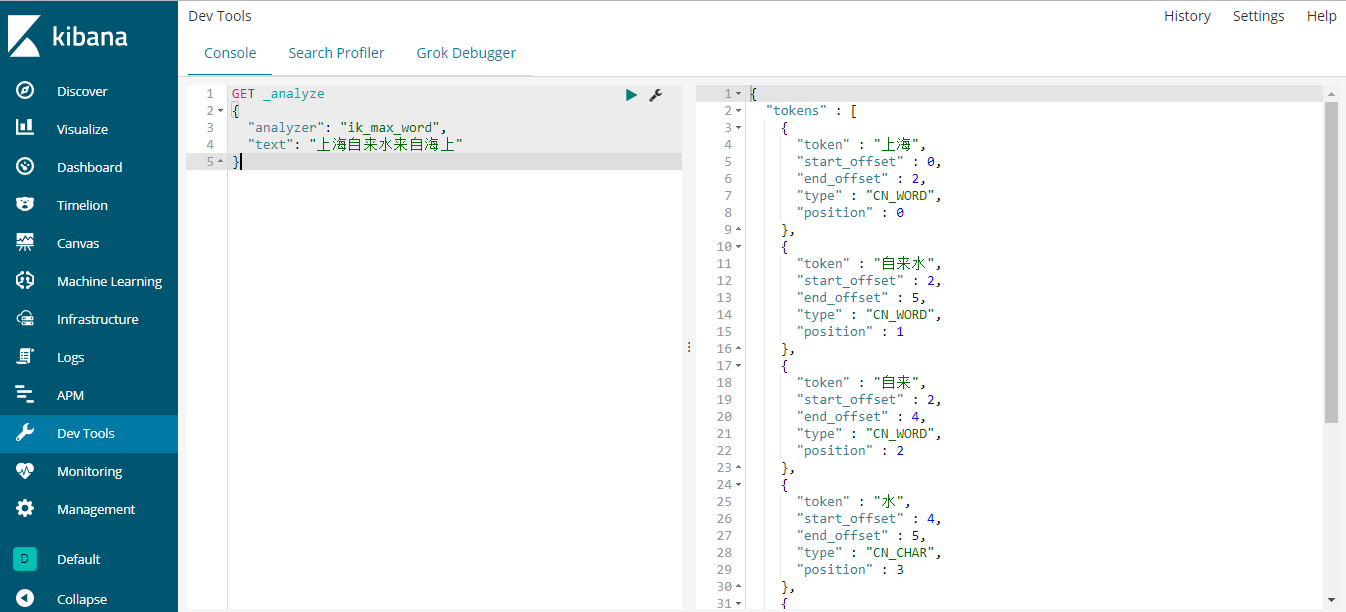

elascticsearch和kibana服务重启,让插件生效。 - 然后地址栏输入

http://localhost:5601,在Dev Tools中的Console界面的左侧输入命令,再点击绿色的执行按钮执行。

第一个ik示例

来个简单的示例。

GET _analyze

{

"analyzer": "ik_max_word",

"text": "上海自来水来自海上"

}右侧就显示出结果了如下所示:

{

"tokens" : [

{

"token" : "上海",

"start_offset" : 0,

"end_offset" : 2,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "自来水",

"start_offset" : 2,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 1

},

{

"token" : "自来",

"start_offset" : 2,

"end_offset" : 4,

"type" : "CN_WORD",

"position" : 2

},

{

"token" : "水",

"start_offset" : 4,

"end_offset" : 5,

"type" : "CN_CHAR",

"position" : 3

},

{

"token" : "来自",

"start_offset" : 5,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 4

},

{

"token" : "海上",

"start_offset" : 7,

"end_offset" : 9,

"type" : "CN_WORD",

"position" : 5

}

]

}

那么你可能对开始的analyzer:ik_max_word有一丝的疑惑,这个家伙是干嘛的呀?我们就来看看这个家伙到底是什么鬼!

ik_max_word

现在有这样的一个索引:

PUT ik1

{

"mappings": {

"doc": {

"dynamic": false,

"properties": {

"content": {

"type": "text",

"analyzer": "ik_max_word"

}

}

}

}

}上例中,ik_max_word参数会将文档做最细粒度的拆分,以穷尽尽可能的组合。

接下来为该索引添加几条数据:

PUT ik1/doc/1

{

"content":"今天是个好日子"

}

PUT ik1/doc/2

{

"content":"心想的事儿都能成"

}

PUT ik1/doc/3

{

"content":"我今天不活了"

}现在让我们开始查询,随便查!

GET ik1/_search

{

"query": {

"match": {

"content": "心想"

}

}

}查询结果如下:

{

"took" : 1,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : 1,

"max_score" : 0.2876821,

"hits" : [

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "2",

"_score" : 0.2876821,

"_source" : {

"content" : "心想的事儿都能成"

}

}

]

}

}成功的返回了一条数据。我们再来以今天为条件来查询。

GET ik1/_search

{

"query": {

"match": {

"content": "今天"

}

}

}结果如下:

{

"took" : 2,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : 2,

"max_score" : 0.2876821,

"hits" : [

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "1",

"_score" : 0.2876821,

"_source" : {

"content" : "今天是个好日子"

}

},

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "3",

"_score" : 0.2876821,

"_source" : {

"content" : "我今天不活了"

}

}

]

}

}上例的返回中,成功的查询到了两条结果。

与ik_max_word对应还有另一个参数。让我们一起来看下。

ik_smart

与ik_max_word对应的是ik_smart参数,该参数将文档作最粗粒度的拆分。

GET _analyze

{

"analyzer": "ik_smart",

"text": "今天是个好日子"

}上例中,我们以最粗粒度的拆分文档。

结果如下:

{

"tokens" : [

{

"token" : "今天是",

"start_offset" : 0,

"end_offset" : 3,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "个",

"start_offset" : 3,

"end_offset" : 4,

"type" : "CN_CHAR",

"position" : 1

},

{

"token" : "好日子",

"start_offset" : 4,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 2

}

]

}再来看看以最细粒度的拆分文档。

GET _analyze

{

"analyzer": "ik_max_word",

"text": "今天是个好日子"

}结果如下:

{

"tokens" : [

{

"token" : "今天是",

"start_offset" : 0,

"end_offset" : 3,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "今天",

"start_offset" : 0,

"end_offset" : 2,

"type" : "CN_WORD",

"position" : 1

},

{

"token" : "是",

"start_offset" : 2,

"end_offset" : 3,

"type" : "CN_CHAR",

"position" : 2

},

{

"token" : "个",

"start_offset" : 3,

"end_offset" : 4,

"type" : "CN_CHAR",

"position" : 3

},

{

"token" : "好日子",

"start_offset" : 4,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 4

},

{

"token" : "日子",

"start_offset" : 5,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 5

}

]

}由上面的对比可以发现,两个参数的不同,所以查询结果也肯定不一样,视情况而定用什么粒度。

在基本操作方面,除了粗细粒度,别的按照之前的操作即可,就像下面两个短语查询和短语前缀查询一样。

ik之短语查询

ik中的短语查询参照之前的短语查询即可。

GET ik1/_search

{

"query": {

"match_phrase": {

"content": "今天"

}

}

}结果如下:

{

"took" : 1,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : 2,

"max_score" : 0.2876821,

"hits" : [

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "1",

"_score" : 0.2876821,

"_source" : {

"content" : "今天是个好日子"

}

},

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "3",

"_score" : 0.2876821,

"_source" : {

"content" : "我今天不活了"

}

}

]

}

}ik之短语前缀查询

同样的,我们第2部分的快速上手部分的操作在ik中同样适用。

GET ik1/_search

{

"query": {

"match_phrase_prefix": {

"content": {

"query": "今天好日子",

"slop": 2

}

}

}

}结果如下:

{

"took" : 1,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : 2,

"max_score" : 0.2876821,

"hits" : [

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "1",

"_score" : 0.2876821,

"_source" : {

"content" : "今天是个好日子"

}

},

{

"_index" : "ik1",

"_type" : "doc",

"_id" : "3",

"_score" : 0.2876821,

"_source" : {

"content" : "我今天不活了"

}

}

]

}

}欢迎斧正,that's all

浙公网安备 33010602011771号

浙公网安备 33010602011771号