Deep Learning 1-2

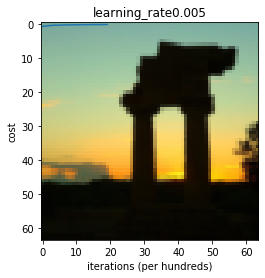

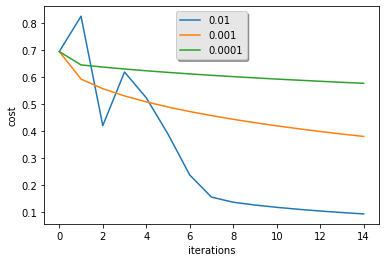

    # -*- coding: utf-8 -*-
    """
    Created on Thu Aug  1 16:30:55 2019

    @author: Administrator
    """
    import numpy as np
    import matplotlib.pyplot as plt
    import h5py
    from PIL import Image

    # from lr_utils import load_dataset
    # train_set_x_orig, train_set_y, test_set_x_orig, test_set_y, classes = load_dataset()  # load the data

    # Load the data directly from the HDF5 files (opened read-only)
    train_dataset = h5py.File("D:/deeplearning/dataset/train_catvnoncat.h5", "r")
    test_dataset = h5py.File("D:/deeplearning/dataset/test_catvnoncat.h5", "r")

    train_set_x_orig = np.array(train_dataset["train_set_x"][:])  # training images, shape (209, 64, 64, 3)
    train_set_y_orig = np.array(train_dataset["train_set_y"][:])
    test_set_x_orig = np.array(test_dataset["test_set_x"][:])
    test_set_y_orig = np.array(test_dataset["test_set_y"][:])
    classes = np.array(test_dataset["list_classes"][:])  # the list of classes

    # Turn the label vectors into row vectors of shape (1, m)
    train_set_y = train_set_y_orig.reshape(1, train_set_y_orig.shape[0])
    test_set_y = test_set_y_orig.reshape(1, test_set_y_orig.shape[0])

    # Example of a picture
    index = 40  # index of the training image to display
    plt.imshow(train_set_x_orig[index])
    print("y = " + str(train_set_y[:, index]) + ", it's a '"
          + classes[np.squeeze(train_set_y[:, index])].decode("utf-8") + "' picture.")

    ### START CODE HERE ### (≈ 3 lines of code)
    m_train = train_set_x_orig.shape[0]
    m_test = test_set_x_orig.shape[0]
    num_px = train_set_x_orig.shape[1]
    ### END CODE HERE ###

    print("Number of training examples: m_train = " + str(m_train))
    print("Number of testing examples: m_test = " + str(m_test))
    print("Height/Width of each image: num_px = " + str(num_px))
    print("Each image is of size: (" + str(num_px) + ", " + str(num_px) + ", 3)")
    print("train_set_x shape: " + str(train_set_x_orig.shape))
    print("train_set_y shape: " + str(train_set_y.shape))
    print("test_set_x shape: " + str(test_set_x_orig.shape))
    print("test_set_y shape: " + str(test_set_y.shape))

    # Reshape the training and test examples: one flattened image per column
    ### START CODE HERE ### (≈ 2 lines of code)
    train_set_x_flatten = train_set_x_orig.reshape(m_train, -1).T
    test_set_x_flatten = test_set_x_orig.reshape(m_test, -1).T
    ### END CODE HERE ###

    print("train_set_x_flatten shape: " + str(train_set_x_flatten.shape))
    print("train_set_y shape: " + str(train_set_y.shape))
    print("test_set_x_flatten shape: " + str(test_set_x_flatten.shape))
    print("test_set_y shape: " + str(test_set_y.shape))
    print("sanity check after reshaping: " + str(train_set_x_flatten[0:5, 0]))

    # Scale pixel values from [0, 255] to [0, 1]
    train_set_x = train_set_x_flatten / 255.
    test_set_x = test_set_x_flatten / 255.


    # The logistic (sigmoid) function
    def sigmoid(x):
        s = 1.0 / (1 + np.exp(-x))
        return s


    # Sigmoid gradient
    def sigmoid_derivative(x):
        s = sigmoid(x)
        ds = s * (1 - s)
        return ds


    # Flatten an image of shape (height, width, depth) into a column vector
    def image2vector(image):
        x = image.reshape(image.shape[0] * image.shape[1] * image.shape[2], 1)
        return x


    # Divide each row by its L2 norm
    def normalizeRows(x):
        x_norm = np.linalg.norm(x, axis=1, keepdims=True)  # row norms
        s = x / x_norm
        return s


    # L1 loss
    def L1(yhat, y):
        loss = np.sum(np.abs(y - yhat))
        return loss


    # L2 loss
    def L2(yhat, y):
        loss = np.sum(np.power((yhat - y), 2))
        return loss


    def initialize_with_zeros(dim):
        w = np.zeros((dim, 1))
        b = 0
        assert(w.shape == (dim, 1))
        assert(isinstance(b, float) or isinstance(b, int))
        return w, b


    # Forward and backward propagation: returns the gradients and the cost
    def propagate(w, b, X, Y):
        m = X.shape[1]
        A = sigmoid(np.dot(w.T, X) + b)
        cost = -(1 / m) * np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A))
        dw = 1 / m * np.dot(X, (A - Y).T)
        db = 1 / m * np.sum(A - Y)
        assert(dw.shape == w.shape)
        assert(db.dtype == float)
        cost = np.squeeze(cost)  # was "cost == np.squeeze(cost)", a comparison with no effect
        assert(cost.shape == ())
        grads = {"dw": dw,
                 "db": db}
        return grads, cost


    # Gradient descent: update w and b for num_iterations steps
    def optimize(w, b, X, Y, num_iterations, learning_rate):
        costs = []
        for i in range(num_iterations):
            grads, cost = propagate(w, b, X, Y)
            dw = grads["dw"]
            db = grads["db"]
            w = w - learning_rate * dw
            b = b - learning_rate * db
            # Record and print the cost every 100 iterations
            if i % 100 == 0:
                costs.append(cost)
                print("Cost after iteration %i: %f" % (i, cost))
        params = {"w": w,
                  "b": b}
        grads = {"dw": dw,
                 "db": db}
        return params, grads, costs


    # Predict 1 when the activation is above 0.5, otherwise 0
    def predict(w, b, X):
        m = X.shape[1]
        Y_prediction = np.zeros((1, m))
        w = w.reshape(X.shape[0], 1)
        A = sigmoid(np.dot(w.T, X) + b)
        for i in range(A.shape[1]):
            if A[0, i] > 0.5:
                Y_prediction[0, i] = 1
            else:
                Y_prediction[0, i] = 0
        assert(Y_prediction.shape == (1, m))
        return Y_prediction


    def model(X_train, Y_train, X_test, Y_test, num_iterations=2000, learning_rate=0.5):
        w, b = initialize_with_zeros(X_train.shape[0])
        parameters, grads, costs = optimize(w, b, X_train, Y_train, num_iterations, learning_rate)
        w = parameters["w"]
        b = parameters["b"]
        Y_prediction_test = predict(w, b, X_test)
        Y_prediction_train = predict(w, b, X_train)
        print("train accuracy: {} %".format(100 - np.mean(np.abs(Y_prediction_train - Y_train)) * 100))
        print("test accuracy: {} %".format(100 - np.mean(np.abs(Y_prediction_test - Y_test)) * 100))
        d = {"costs": costs,
             "Y_prediction_test": Y_prediction_test,
             "Y_prediction_train": Y_prediction_train,
             "w": w,
             "b": b,
             "learning_rate": learning_rate,
             "num_iterations": num_iterations}
        return d


    d = model(train_set_x, train_set_y, test_set_x, test_set_y, num_iterations=2000, learning_rate=0.005)

    # Plot the learning curve
    costs = np.squeeze(d["costs"])
    plt.plot(costs)
    plt.ylabel('cost')
    plt.xlabel('iterations (per hundreds)')
    plt.title("learning_rate" + str(d["learning_rate"]))
    plt.show()

    # Compare several learning rates
    learning_rates = [0.01, 0.001, 0.0001]
    models = {}
    for i in learning_rates:
        print("learning rate is: " + str(i))
        models[str(i)] = model(train_set_x, train_set_y, test_set_x, test_set_y, num_iterations=1500, learning_rate=i)
        print('\n' + "-------------------------------------------------------" + '\n')

    for i in learning_rates:
        plt.plot(np.squeeze(models[str(i)]["costs"]), label=str(models[str(i)]["learning_rate"]))

    plt.ylabel('cost')
    plt.xlabel('iterations')
    legend = plt.legend(loc='upper center', shadow=True)
    frame = legend.get_frame()
    frame.set_facecolor('0.90')
    plt.show()

    ## START CODE HERE ## (PUT YOUR IMAGE NAME)
    my_image = "Sample1.jpg"   # change this to the name of your image file
    ## END CODE HERE ##

    # We preprocess the image to fit your algorithm.
    # scipy.ndimage.imread and scipy.misc.imresize are no longer available in
    # recent SciPy releases, so PIL is used here instead.
    fname = my_image
    image = np.array(Image.open(fname).convert("RGB"))
    my_image = np.array(Image.fromarray(image).resize((num_px, num_px)))
    # Flatten to a (num_px*num_px*3, 1) column and scale to [0, 1] like the
    # training data (the original script skipped this scaling)
    my_image = my_image.reshape((1, num_px * num_px * 3)).T / 255.
    my_predicted_image = predict(d["w"], d["b"], my_image)

    plt.imshow(image)
    print("y = " + str(np.squeeze(my_predicted_image)) + ", your algorithm predicts a \""
          + classes[int(np.squeeze(my_predicted_image))].decode("utf-8") + "\" picture.")
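One way to gain confidence in the `dw` and `db` formulas used in `propagate` is to compare them with finite-difference estimates of the cost. The block below is only a minimal sketch under stated assumptions: it re-implements the sigmoid and cost on a small random dataset (not the cat images), and the helper name `numerical_gradients` is made up for this illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def propagate(w, b, X, Y):
    """Return analytic gradients (dw, db) and the cross-entropy cost."""
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)
    cost = -np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A)) / m
    dw = np.dot(X, (A - Y).T) / m
    db = np.sum(A - Y) / m
    return dw, db, float(cost)


def numerical_gradients(w, b, X, Y, eps=1e-6):
    """Hypothetical helper: central-difference estimate of dw and db."""
    dw_num = np.zeros_like(w)
    for j in range(w.shape[0]):
        step = np.zeros_like(w)
        step[j, 0] = eps
        cost_plus = propagate(w + step, b, X, Y)[2]
        cost_minus = propagate(w - step, b, X, Y)[2]
        dw_num[j, 0] = (cost_plus - cost_minus) / (2 * eps)
    cost_plus = propagate(w, b + eps, X, Y)[2]
    cost_minus = propagate(w, b - eps, X, Y)[2]
    db_num = (cost_plus - cost_minus) / (2 * eps)
    return dw_num, db_num


# Small synthetic problem: 3 features, 5 examples (an assumption for the demo)
rng = np.random.default_rng(0)
X = rng.normal(size=(3, 5))
Y = (rng.random((1, 5)) > 0.5).astype(float)
w = rng.normal(size=(3, 1)) * 0.1
b = 0.2

dw, db, _ = propagate(w, b, X, Y)
dw_num, db_num = numerical_gradients(w, b, X, Y)
max_diff = max(np.max(np.abs(dw - dw_num)), abs(db - db_num))
print("largest gradient mismatch:", max_diff)
```

If the analytic gradients are right, the mismatch should be tiny compared with the gradients themselves; a large value would usually point to a sign or transpose error.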

Output:

y = [0], it's a 'non-cat' picture.

Number of training examples: m_train = 209

Number of testing examples: m_test = 50

Height/Width of each image: num_px = 64

Each image is of size: (64, 64, 3)

train_set_x shape: (209, 64, 64, 3)

train_set_y shape: (1, 209)

test_set_x shape: (50, 64, 64, 3)

test_set_y shape: (1, 50)

train_set_x_flatten shape: (12288, 209)

train_set_y shape: (1, 209)

test_set_x_flatten shape: (12288, 50)

test_set_y shape: (1, 50)

sanity check after reshaping: [17 31 56 22 33]

Cost after iteration 0: 0.693147

Cost after iteration 100: 0.584508

Cost after iteration 200: 0.466949

Cost after iteration 300: 0.376007

Cost after iteration 400: 0.331463

Cost after iteration 500: 0.303273

Cost after iteration 600: 0.279880

Cost after iteration 700: 0.260042

Cost after iteration 800: 0.242941

Cost after iteration 900: 0.228004

Cost after iteration 1000: 0.214820

Cost after iteration 1100: 0.203078

Cost after iteration 1200: 0.192544

Cost after iteration 1300: 0.183033

Cost after iteration 1400: 0.174399

Cost after iteration 1500: 0.166521

Cost after iteration 1600: 0.159305

Cost after iteration 1700: 0.152667

Cost after iteration 1800: 0.146542

Cost after iteration 1900: 0.140872

train accuracy: 99.04306220095694 %

test accuracy: 70.0 %
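The accuracy figures come from the expression `100 - np.mean(np.abs(Y_prediction - Y)) * 100` in `model`, so each percentage corresponds to a whole number of misclassified images. As a rough illustration (the label arrays below are placeholders, not the real predictions), two errors out of 209 training images give the train accuracy printed above, and 15 errors out of 50 give the 70 % test accuracy:

```python
import numpy as np


def accuracy_percent(y_pred, y_true):
    """Same formula as in model(): 100 minus the mean absolute error in percent."""
    return 100 - np.mean(np.abs(y_pred - y_true)) * 100


# Placeholder labels: all zeros, with a chosen number of flipped predictions
y_train = np.zeros((1, 209))
pred_train = y_train.copy()
pred_train[0, :2] = 1          # 2 of 209 training examples wrong

y_test = np.zeros((1, 50))
pred_test = y_test.copy()
pred_test[0, :15] = 1          # 15 of 50 test examples wrong

train_acc = accuracy_percent(pred_train, y_train)
test_acc = accuracy_percent(pred_test, y_test)
print("train accuracy: {} %".format(train_acc))
print("test accuracy: {} %".format(test_acc))
```

The gap between roughly 99 % on the training set and 70 % on the test set suggests that the model is overfitting the 209 training images.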

learning rate is: 0.01

Cost after iteration 0: 0.693147

Cost after iteration 100: 0.823921

Cost after iteration 200: 0.418944

Cost after iteration 300: 0.617350

Cost after iteration 400: 0.522116

Cost after iteration 500: 0.387709

Cost after iteration 600: 0.236254

Cost after iteration 700: 0.154222

Cost after iteration 800: 0.135328

Cost after iteration 900: 0.124971

Cost after iteration 1000: 0.116478

Cost after iteration 1100: 0.109193

Cost after iteration 1200: 0.102804

Cost after iteration 1300: 0.097130

Cost after iteration 1400: 0.092043

train accuracy: 99.52153110047847 %

test accuracy: 68.0 %

-------------------------------------------------------

learning rate is: 0.001

Cost after iteration 0: 0.693147

Cost after iteration 100: 0.591289

Cost after iteration 200: 0.555796

Cost after iteration 300: 0.528977

Cost after iteration 400: 0.506881

Cost after iteration 500: 0.487880

Cost after iteration 600: 0.471108

Cost after iteration 700: 0.456046

Cost after iteration 800: 0.442350

Cost after iteration 900: 0.429782

Cost after iteration 1000: 0.418164

Cost after iteration 1100: 0.407362

Cost after iteration 1200: 0.397269

Cost after iteration 1300: 0.387802

Cost after iteration 1400: 0.378888

train accuracy: 88.99521531100478 %

test accuracy: 64.0 %

-------------------------------------------------------

learning rate is: 0.0001

Cost after iteration 0: 0.693147

Cost after iteration 100: 0.643677

Cost after iteration 200: 0.635737

Cost after iteration 300: 0.628572

Cost after iteration 400: 0.622040

Cost after iteration 500: 0.616029

Cost after iteration 600: 0.610455

Cost after iteration 700: 0.605248

Cost after iteration 800: 0.600354

Cost after iteration 900: 0.595729

Cost after iteration 1000: 0.591339

Cost after iteration 1100: 0.587153

Cost after iteration 1200: 0.583149

Cost after iteration 1300: 0.579307

Cost after iteration 1400: 0.575611

train accuracy: 68.42105263157895 %

test accuracy: 36.0 %

-------------------------------------------------------
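In the runs above, the largest learning rate (0.01) makes the cost bounce early on (0.693 → 0.824 → 0.419 → 0.617) before it settles, while the smallest (0.0001) barely lowers the cost in 1500 iterations. The same trade-off can be seen on a toy problem. The sketch below is only an illustration on the one-dimensional function f(w) = w², not on the cat dataset, and the function name `descend` and the chosen rates are assumptions for the demo.

```python
def descend(learning_rate, steps=20, w0=1.0):
    """Run gradient descent on f(w) = w**2 and return every visited value of w."""
    w = w0
    path = [w]
    for _ in range(steps):
        grad = 2 * w                    # derivative of w**2
        w = w - learning_rate * grad    # same update rule as in optimize()
        path.append(w)
    return path


for lr in (0.9, 0.1, 0.001):
    path = descend(lr)
    print(f"lr={lr}: final w = {path[-1]:.4f}, final cost = {path[-1] ** 2:.6f}")
```

With the large rate, each step overshoots the minimum and `w` flips sign every iteration; with the tiny rate, `w` has hardly moved after 20 steps. The middle rate approaches the minimum smoothly.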
