Hadoop综合大作业+补爬虫大作业

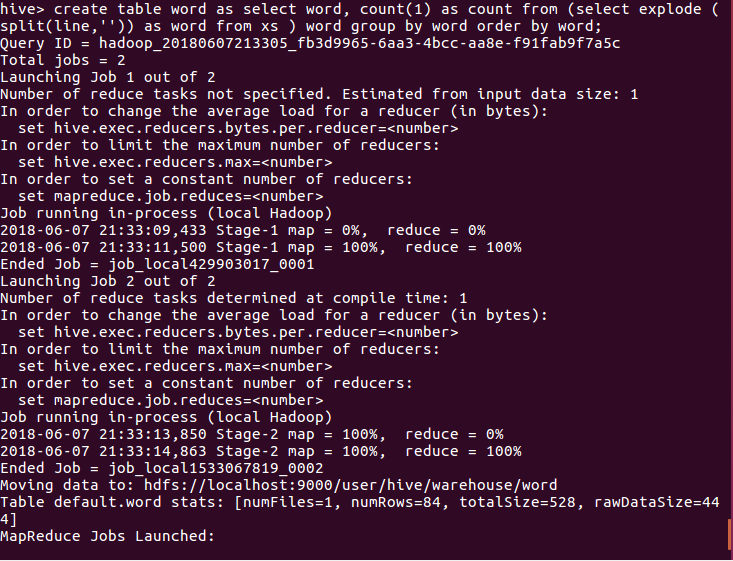

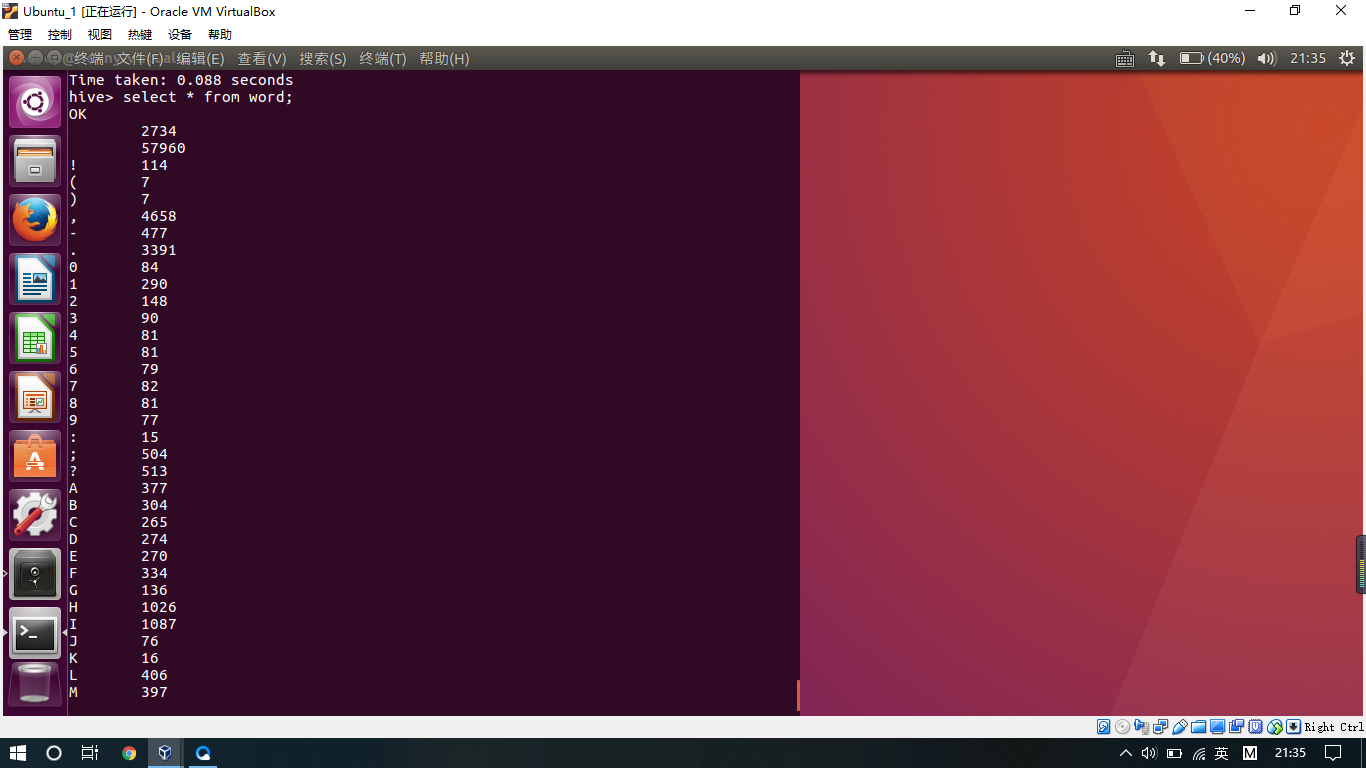

1.用Hive对爬虫大作业产生的文本文件(或者英文词频统计下载的英文长篇小说)词频统计。

在网络上下载了一本英文小说

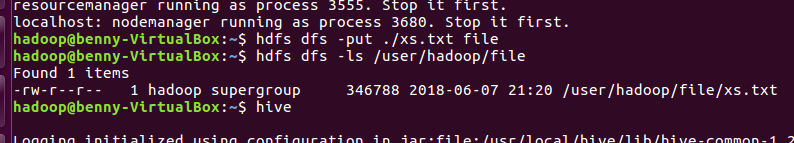

现在将xs。txt放入HDFS中并用hive查询统计,截图如下:

2.补《爬虫大作业》

import requests, re, jieba from bs4 import BeautifulSoup from datetime import datetime def getNewsDetail(newsUrl): resd = requests.get(newsUrl) resd.encoding = 'gb2312' soupd = BeautifulSoup(resd.text, 'html.parser') content = soupd.select('#Cnt-Main-Article-QQ')[0].text info = soupd.select('.a_Info')[0].text date = re.search('(\d{4}.\d{2}.\d{2}\s\d{2}.\d{2})', info).group(1) dateTime = datetime.strptime(date, '%Y-%m-%d %H:%M') sources = soupd.select('.a_source')[0].text # if soupd.select('.a_author')!=null: # author = soupd.select('.a_author')[0].text writeNews(content) keyWords = getKeyWords(content) print('发布时间:{0}\n来源:{1}'.format(dateTime, sources)) print('关键词:{}、{}、{}'.format(keyWords[0], keyWords[1], keyWords[2])) print(content) # 将新闻内容写入到文件 def writeNews(content): f = open('news.txt', 'a', encoding='utf-8') f.write(content) f.close() def getKeyWords(content): content = ''.join(re.findall('[\u4e00-\u9fa5]', content)) wordSet = set(jieba._lcut(content)) wordDict = {} for i in wordSet: wordDict[i] = content.count(i) deleteList, keyWords = [], [] for i in wordDict.keys(): if len(i) < 2: deleteList.append(i) for i in deleteList: del wordDict[i] dictList = list(wordDict.items()) dictList.sort(key=lambda item: item[1], reverse=True) for i in range(3): keyWords.append(dictList[i][0]) return keyWords def getListPage(listUrl): res = requests.get(listUrl) res.encoding = 'gbk' soup = BeautifulSoup(res.text, 'html.parser') for new in soup.select('.Q-tpList'): newsUrl = new.select('a')[0]['href'] # title = new.select('a')[0].text # print('标题:{0}\n链接:{1}'.format(title, newsUrl)) print(newsUrl) getNewsDetail(newsUrl) # break listUrl = 'http://tech.qq.com/ydhl.htm' getListPage(listUrl) for i in range(2, 20): listUrl = '/http://tech.qq.com/a/20170628/it_2016_%02d/' % i getListPage(listUrl)

2.用Hive对爬虫大作业产生的csv文件进行数据分析,写一篇博客描述你的分析过程和分析结果。