Docker container network configuration

Creating namespaces with the Linux kernel

The ip netns command

[root@node0 ~]# ip netns help

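Usage: ip netns list # list existing namespaces

ip netns add NAME # add a namespace

ip netns attach NAME PID # bind NAME to the network namespace of process PID

ip netns set NAME NETNSID # assign a netns ID

ip [-all] netns delete [NAME] # delete a namespace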

ip netns identify [PID]

ip netns pids NAME

ip [-all] netns exec [NAME] cmd ...

ip netns monitor

ip netns list-id

NETNSID := auto | POSITIVE-INT

By default a Linux system has no named network namespaces, so ip netns list returns nothing.

Creating a network namespace

// syntax

ip netns add NAME

[root@node0 ~]# ip netns add ns1

// list the namespaces

[root@node0 ~]# ip netns list

ns1

[root@node0 ~]# ll /var/run/netns/

total 0

-r--r--r--. 1 root root 0 Mar 2 09:31 ns1

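A namespace cannot be created under a name that is already in use; the command fails with an error saying the file already exists.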
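Because adding an existing name fails, a script may want to check for the name first. The following is a minimal sketch, not part of the original walkthrough: `netns_in_list` is a hypothetical helper that reads `ip netns list` output on standard input and compares only the first field, since entries may carry an `(id: N)` suffix.

```shell
# Hypothetical helper: succeed if NAME appears in `ip netns list` output
# supplied on stdin. Only the first field is compared, because entries may
# look like "ns1 (id: 0)".
netns_in_list() {
    awk -v name="$1" '$1 == name { found = 1 } END { exit !found }'
}

# Create the namespace only when it is missing (needs root to really run):
#   ip netns list | netns_in_list ns1 || ip netns add ns1

# Demo on captured output, no root required.
if printf 'ns2\nns1\n' | netns_in_list ns1; then
    echo "ns1 already exists, skipping ip netns add"
fi
```

Reading from stdin keeps the helper testable without root privileges; the commented line shows how it would be wired to the real command.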

Operating on a network namespace

ip netns exec NAME COMMAND

// show the interfaces in ns1

[root@node0 ~]# ip netns exec ns1 ip a

1: lo: <LOOPBACK> mtu 65536 qdisc noop state DOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

// bring up the lo interface from outside the namespace

[root@node0 ~]# ip netns exec ns1 ip link set lo up

[root@node0 ~]# ip netns exec ns1 ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

// ping from inside the namespace

[root@node0 ~]# ip netns exec ns1 ping 127.0.0.1

PING 127.0.0.1 (127.0.0.1) 56(84) bytes of data.

64 bytes from 127.0.0.1: icmp_seq=1 ttl=64 time=0.030 ms

64 bytes from 127.0.0.1: icmp_seq=2 ttl=64 time=0.058 ms

^C

--- 127.0.0.1 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 77ms

rtt min/avg/max/mdev = 0.030/0.044/0.058/0.014 ms

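Moving devices

Devices (such as veth) can be moved between network namespaces. Once a device has been moved out, it no longer exists in the namespace it came from.

- veth devices can be moved

- lo, vxlan, ppp, bridge and similar devices cannot be moved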

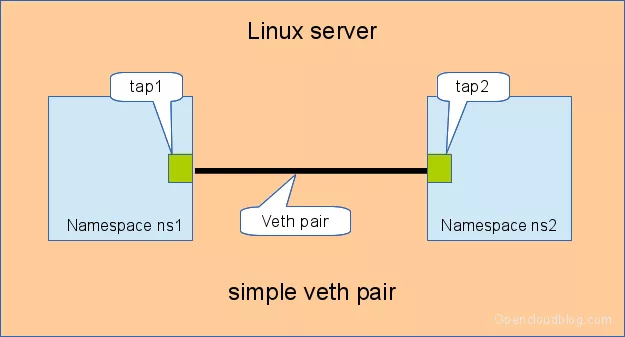
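One way to check whether a particular device can be moved is the `netns-local` feature flag reported by `ethtool -k`: a device showing `netns-local: on` is tied to its namespace. The sketch below assumes `ethtool` is installed; `is_netns_local` is a hypothetical helper that reads the feature list on standard input.

```shell
# Hypothetical helper: read `ethtool -k DEV` output on stdin and succeed if
# the device is pinned to its namespace (netns-local: on).
is_netns_local() {
    grep -q '^[[:space:]]*netns-local:[[:space:]]*on'
}

# Real usage (ethtool must be installed):
#   ethtool -k lo | is_netns_local && echo "lo cannot be moved"

# Demo on sample lines in the format ethtool prints.
if printf 'tx-checksumming: on\nnetns-local: on [fixed]\n' | is_netns_local; then
    echo "device is namespace-local and cannot be moved"
fi
```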

veth pair

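Full name: Virtual Ethernet Pair

Characteristic: a pair of connected ports; a packet that enters one end comes out of the other

Purpose: communication between different network namespaces, acting as the link that connects them

Creating a veth pair

// create a veth-type interface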

[root@node0 ~]# ip link add type veth

// veth0 and veth1 appear as a pair

[root@node0 ~]# ip a|grep veth

6: veth0@veth1: <BROADCAST,MULTICAST,M-DOWN> mtu 1500 qdisc noop state DOWN group default qlen 1000

7: veth1@veth0: <BROADCAST,MULTICAST,M-DOWN> mtu 1500 qdisc noop state DOWN group default qlen 1000

Communication between network namespaces by moving veth devices

- Add a second netns, "ns2"

[root@node0 ~]# ip netns add ns2

[root@node0 ~]# ip netns list

ns2

ns1

- Assign one veth interface each to ns1 and ns2

[root@node0 ~]# ip link set veth0 netns ns1

[root@node0 ~]# ip link set veth1 netns ns2

- Check the host's interfaces

// both veth interfaces have been moved away

[root@node0 ~]# ip a|grep veth

[root@node0 ~]#

- Check the interfaces in ns1 and ns2

[root@node0 ~]# ip netns exec ns1 ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

6: veth0@if7: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 3a:b7:aa:0c:f3:8a brd ff:ff:ff:ff:ff:ff link-netns ns2

[root@node0 ~]# ip netns exec ns2 ip a

1: lo: <LOOPBACK> mtu 65536 qdisc noop state DOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

7: veth1@if6: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether ba:78:24:1d:53:2f brd ff:ff:ff:ff:ff:ff link-netns ns1

- Bring the interfaces up

[root@node0 ~]# ip netns exec ns1 ip link set veth0 up

[root@node0 ~]# ip netns exec ns2 ip link set veth1 up

- Configure IP addresses on the veth interfaces

[root@node0 ~]# ip netns exec ns1 ip addr add 12.1.1.1/24 dev veth0

[root@node0 ~]# ip netns exec ns2 ip addr add 12.1.1.2/24 dev veth1

- Test connectivity with ping from inside a namespace

// check the addresses

[root@node0 ~]# ip netns exec ns1 ip a|grep veth

6: veth0@if7: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

inet 12.1.1.1/24 scope global veth0

[root@node0 ~]# ip netns exec ns2 ip a|grep veth

7: veth1@if6: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

inet 12.1.1.2/24 scope global veth1

// ping succeeds

[root@node0 ~]# ip netns exec ns1 ping 12.1.1.2

PING 12.1.1.2 (12.1.1.2) 56(84) bytes of data.

64 bytes from 12.1.1.2: icmp_seq=1 ttl=64 time=0.048 ms

64 bytes from 12.1.1.2: icmp_seq=2 ttl=64 time=0.113 ms

64 bytes from 12.1.1.2: icmp_seq=3 ttl=64 time=0.123 ms

^C

--- 12.1.1.2 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 69ms

rtt min/avg/max/mdev = 0.048/0.094/0.123/0.035 ms

- Rename an interface inside a namespace

// bring the interface down

[root@node0 ~]# ip netns exec ns1 ip link set down veth0

// rename it to e0

[root@node0 ~]# ip netns exec ns1 ip link set dev veth0 name e0

// bring it back up and check its state

[root@node0 ~]# ip netns exec ns1 ip link set up e0

[root@node0 ~]# ip netns exec ns1 ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

6: e0@if7: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 3a:b7:aa:0c:f3:8a brd ff:ff:ff:ff:ff:ff link-netns ns2

inet 12.1.1.1/24 scope global e0

valid_lft forever preferred_lft forever

inet6 fe80::38b7:aaff:fe0c:f38a/64 scope link

valid_lft forever preferred_lft forever
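The veth steps above can be collected into one script. This is a sketch under stated assumptions rather than the author's own procedure: the `run` helper and the `DRY_RUN` switch are additions for illustration (by default the script only prints each command), the pair is created with explicit names through `peer name`, and `ping -c 3` replaces the interactive ping so the script terminates.

```shell
#!/bin/sh
# Sketch of the veth walkthrough. DRY_RUN=1 (the default here) only prints
# each command; run as root with DRY_RUN=0 to actually execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_veth_demo() {
    run ip netns add ns1
    run ip netns add ns2
    # Name both ends explicitly instead of relying on the veth0/veth1 defaults.
    run ip link add veth0 type veth peer name veth1
    run ip link set veth0 netns ns1
    run ip link set veth1 netns ns2
    run ip netns exec ns1 ip link set veth0 up
    run ip netns exec ns2 ip link set veth1 up
    run ip netns exec ns1 ip addr add 12.1.1.1/24 dev veth0
    run ip netns exec ns2 ip addr add 12.1.1.2/24 dev veth1
    # -c 3 stops ping after three packets so the script does not hang.
    run ip netns exec ns1 ping -c 3 12.1.1.2
}

cleanup_veth_demo() {
    # Deleting a namespace also destroys the veth end inside it and its peer.
    run ip netns delete ns1
    run ip netns delete ns2
}

setup_veth_demo
cleanup_veth_demo
```

With `DRY_RUN=0` the script expects ns1 and ns2 not to exist yet, since adding an existing namespace fails.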

Configuring the four network modes

bridge mode

// bridge is the default mode; the result is the same with or without --network bridge

[root@node0 ~]# docker run -it --name test --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

10: eth0@if11: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

// the same, with --network bridge given explicitly

[root@node0 ~]# docker run -it --name test --network bridge --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

12: eth0@if13: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

host mode

[root@node0 ~]# docker run -it --name test1 --network host --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq qlen 1000

link/ether 00:0c:29:bb:6f:ff brd ff:ff:ff:ff:ff:ff

inet 192.168.94.142/24 brd 192.168.94.255 scope global dynamic ens160

valid_lft 1060sec preferred_lft 1060sec

inet6 fe80::c566:2591:64e:9b7c/64 scope link

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue

link/ether 02:42:5d:a2:df:a3 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:5dff:fea2:dfa3/64 scope link

valid_lft forever preferred_lft forever

13: vethbed54cf@if12: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue master docker0

link/ether fa:dc:52:c1:c2:3a brd ff:ff:ff:ff:ff:ff

inet6 fe80::f8dc:52ff:fec1:c23a/64 scope link

valid_lft forever preferred_lft forever

// compare with the host

[root@node0 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:bb:6f:ff brd ff:ff:ff:ff:ff:ff

inet 192.168.94.142/24 brd 192.168.94.255 scope global dynamic noprefixroute ens160

valid_lft 1037sec preferred_lft 1037sec

inet6 fe80::c566:2591:64e:9b7c/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:5d:a2:df:a3 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:5dff:fea2:dfa3/64 scope link

valid_lft forever preferred_lft forever

13: vethbed54cf@if12: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master docker0 state UP group default

link/ether fa:dc:52:c1:c2:3a brd ff:ff:ff:ff:ff:ff link-netnsid 2

inet6 fe80::f8dc:52ff:fec1:c23a/64 scope link

valid_lft forever preferred_lft forever

container mode

// start a container

[root@node0 ~]# docker run -it --name test --rm busybox:latest

// look up the container ID

[root@node0 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5273b69ab45a busybox:latest "sh" 5 minutes ago Up 5 minutes test

// use the test container's ID to create test1 in container mode

[root@node0 ~]# docker run -it --name test1 --network container:5273b69ab45a --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

12: eth0@if13: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

// ip a in the test container shows the same address

[root@node0 ~]# docker run -it --name test --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

10: eth0@if11: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

- Verify filesystem isolation

// create directory a in test1

[root@node0 ~]# docker run -it --name test1 --network container:5273b69ab45a --rm busybox:latest

/ # mkdir a

/ # ls

a bin dev etc home proc root sys tmp usr var

// check from test

[root@node0 ~]# docker run -it --name test --network bridge --rm busybox:latest

/ # ls

bin dev etc home proc root sys tmp usr var

// in test, deploy a web page and start the httpd service

/ # mkdir web

/ # echo 'hello world' > /web/index.html

/ # httpd -h web/

/ # netstat -antl

Active Internet connections (servers and established)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 :::80 :::* LISTEN

// in test1, create an index.html the same way but with different content, then fetch the page

/ # mkdir web

/ # echo 'bad website' > /web/index.html

/ # wget -O - -q 172.17.0.2:80

hello world

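The local web file in test1 did not affect the file served by test, which shows that only the network stack (and therefore the IP address) is shared, not the filesystem.

none mode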

[root@node0 ~]# docker run -it --name test1 --network none --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever
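To compare the modes side by side without opening an interactive shell each time, `ip a` can be passed as the container command. The sketch below is an illustration only: the `run` helper, the `DRY_RUN` switch, and the `show_mode` function are hypothetical additions, and `container:test` assumes a container named test is already running.

```shell
#!/bin/sh
# Sketch: print (or run) one non-interactive `ip a` per network mode.
# DRY_RUN=1 only echoes the commands; DRY_RUN=0 needs a running Docker daemon.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

show_mode() {
    # $1 is the value passed to --network: bridge, host, none,
    # or container:<name-or-id> of an already running container.
    run docker run --rm --network "$1" busybox:latest ip a
}

for mode in bridge host none; do
    show_mode "$mode"
done

# Container mode needs a running target; "test" is the name used earlier.
show_mode container:test
```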

Common container operations

Container hostname configuration

[root@node0 ~]# docker run -it --name test --network bridge --rm busybox:latest

/ # hostname

5273b69ab45a # when no hostname is given, the container ID is used

- Set the hostname from outside

[root@node0 ~]# docker run -it --name test --hostname fxx --rm busybox:latest

/ # hostname

fxx

// the hostname mapping is injected into /etc/hosts automatically

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 fxx

// DNS is configured automatically from the host's settings

/ # cat /etc/resolv.conf

# Generated by NetworkManager

search localdomain

nameserver 192.168.94.2

// ping an external address

/ # ping baidu.com

PING baidu.com (220.181.38.148): 56 data bytes

64 bytes from 220.181.38.148: seq=0 ttl=127 time=40.780 ms

^C

--- baidu.com ping statistics ---

2 packets transmitted, 1 packets received, 50% packet loss

round-trip min/avg/max = 40.780/40.780/40.780 ms

- Add a host-to-IP mapping manually

// add a host mapping for test.com

[root@node0 ~]# docker run -it --name test --hostname fxx --add-host test.com:1.1.1.1 --rm busybox:latest

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

1.1.1.1 test.com

172.17.0.2 fxx
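To see which address a name from `--hostname` or `--add-host` maps to, the hosts file can be scanned directly. This is a minimal sketch: `hosts_lookup` is a hypothetical helper, and it only mimics the basic first-match behaviour of a hosts-file lookup.

```shell
# Hypothetical helper: print the first address mapped to NAME in hosts-file
# text supplied on stdin.
hosts_lookup() {
    awk -v name="$1" '
        /^[[:space:]]*#/ { next }    # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
    '
}

# Inside the container: hosts_lookup test.com < /etc/hosts
# Demo on the entries shown above.
printf '127.0.0.1 localhost\n1.1.1.1 test.com\n172.17.0.2 fxx\n' | hosts_lookup test.com
```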

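Exposing container ports

docker run -p [Port]

-p maps a container port to the host, so that external machines can reach the application in the container through the host.

Formats accepted by the -p option:

- -p containerPort: maps the container port to a dynamic port on all host addresses

- -p hostPort:containerPort: maps the container port to the specified host port

- -p ip::containerPort: maps the container port to a dynamic port on the specified host IP

- -p ip:hostPort:containerPort: maps the container port to the specified port on the specified host IP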

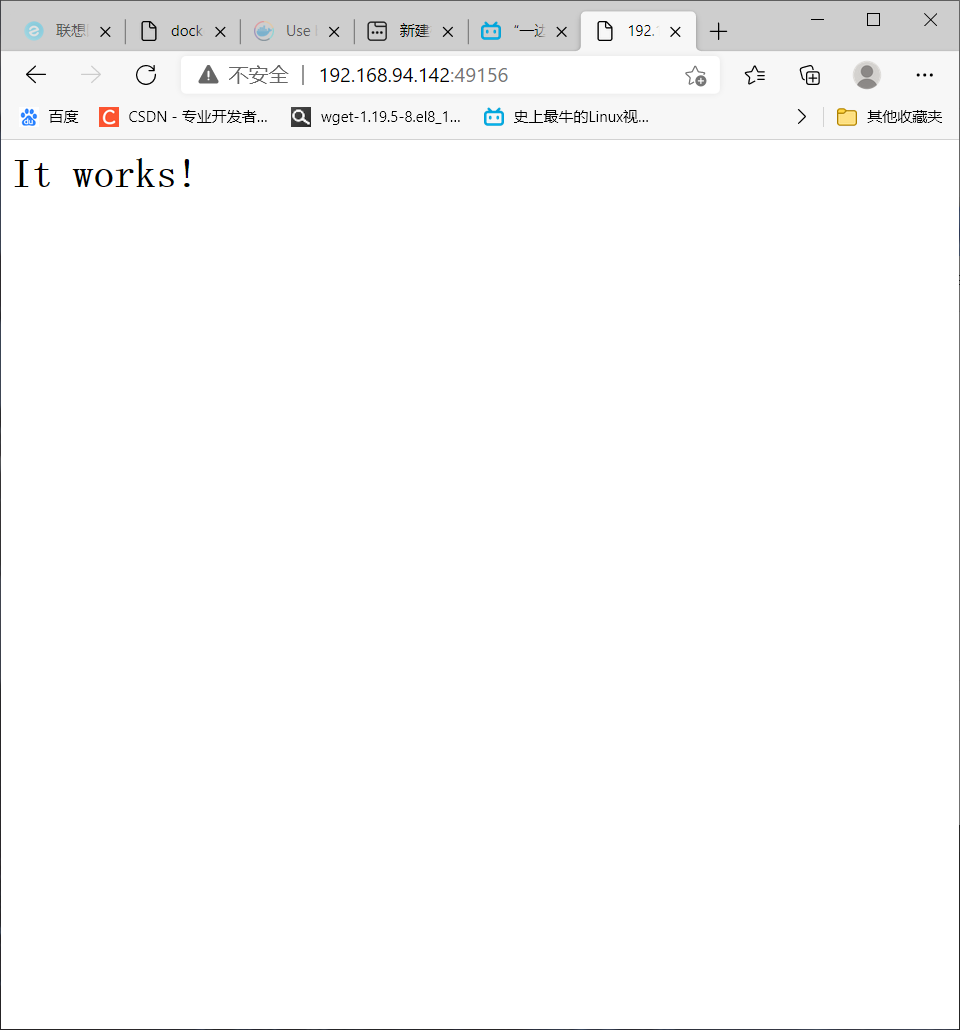
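The four forms differ only in which colon-separated fields are present. The sketch below splits a `-p` argument into its parts to make that explicit; `parse_publish` is a hypothetical helper, it covers only the IPv4 forms listed above (no IPv6 addresses, no `/tcp` or `/udp` suffix), and it reports "all host addresses" as 0.0.0.0.

```shell
# Hypothetical helper: split a -p argument into ip, host port, container port.
parse_publish() {
    spec="$1"
    case "$spec" in
        *:*:*)  ip="${spec%%:*}"; rest="${spec#*:}"
                host="${rest%%:*}"; cont="${rest#*:}" ;;
        *:*)    ip="0.0.0.0"; host="${spec%%:*}"; cont="${spec#*:}" ;;
        *)      ip="0.0.0.0"; host=""; cont="$spec" ;;
    esac
    # An empty host port means Docker picks a dynamic one.
    echo "ip=$ip host=${host:-dynamic} container=$cont"
}

for spec in 80 80:80 192.168.94.142::80 192.168.94.142:50:80; do
    parse_publish "$spec"
done
```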

Mapping to a dynamic (random) port

[root@node0 ~]# docker run --rm -p 80 httpd

// in another terminal

[root@node0 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

7f8b82ed83d4 httpd "httpd-foreground" 3 minutes ago Up 3 minutes 0.0.0.0:49156->80/tcp festive_leavitt

// the container port is mapped to port 49156 on the host

[root@node0 ~]# docker port 7f8b82ed83d4

80/tcp -> 0.0.0.0:49156

Access succeeds

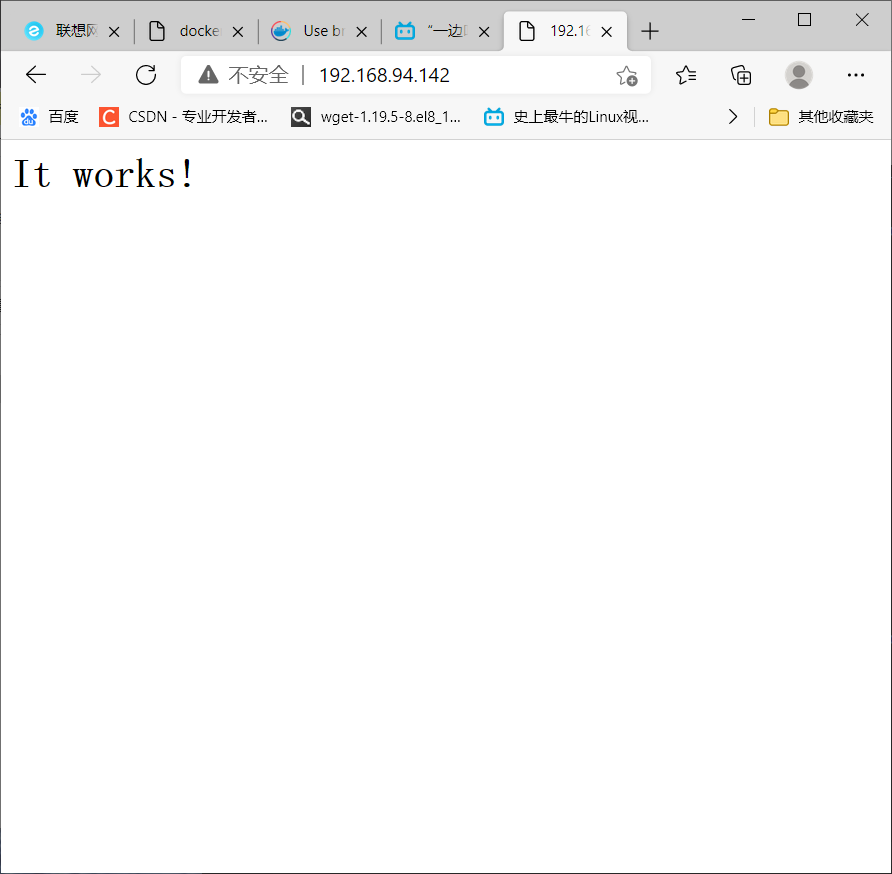

Mapping to a specified port

[root@node0 ~]# docker run --rm -p 80:80 httpd

[root@node0 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

bb752c9d68cd httpd "httpd-foreground" About a minute ago Up About a minute 0.0.0.0:80->80/tcp wonderful_euler

[root@node0 ~]# docker port bb752c9d68cd

80/tcp -> 0.0.0.0:80

Access succeeds (default port 80)

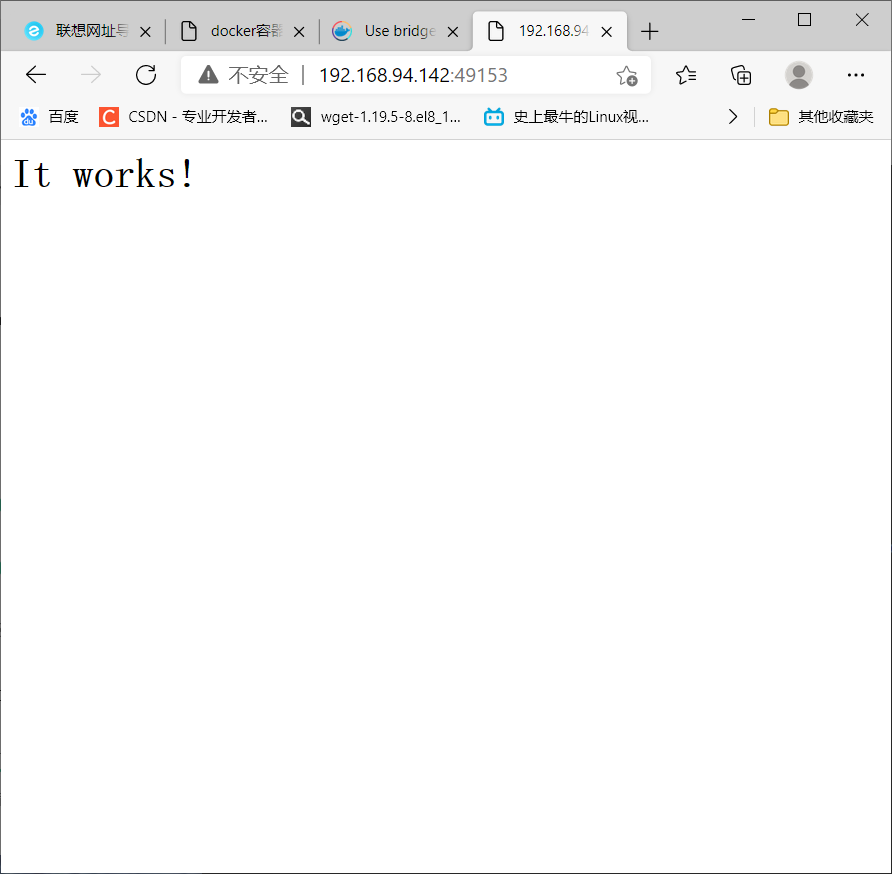

Mapping to a random port on a specified IP

[root@node0 ~]# docker run --rm -p 192.168.94.142::80 httpd

[root@node0 ~]# docker port 00ae6fe51564

80/tcp -> 192.168.94.142:49153

Access succeeds

Mapping to a specified port on a specified IP

[root@node0 ~]# docker run --rm -p 192.168.94.142:50:80 httpd

[root@node0 ~]# docker port c176943e1a5a

80/tcp -> 192.168.94.142:50

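Access succeeds

The iptables firewall rules are generated automatically when a container is created and removed automatically when the container is deleted.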
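When the host port is dynamic, a script has to read it back from `docker port`. A minimal sketch, assuming output lines in the `80/tcp -> ADDRESS:PORT` form shown in the examples above; `published_port` is a hypothetical helper that reads that output on standard input.

```shell
# Hypothetical helper: read `docker port CONTAINER` output on stdin and print
# the host port published for the given container port (for example 80/tcp).
published_port() {
    while read -r cport arrow addr; do
        if [ "$cport" = "$1" ]; then
            # Keep only what follows the last colon: the host port.
            echo "${addr##*:}"
            return 0
        fi
    done
    return 1
}

# Real usage: docker port <container> | published_port 80/tcp
# Demo on a line taken from the output above.
echo '80/tcp -> 192.168.94.142:49153' | published_port 80/tcp
```

Taking the text after the last colon, rather than the first, keeps the helper usable if the address itself contains colons.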

Customizing the network properties of the docker0 bridge

The defaults of the docker0 bridge can be changed by editing the configuration file

[root@node0 ~]# vim /etc/docker/daemon.json

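Taking the IP address as an example: bip stands for bridge IP, and setting it changes the address of docker0. JSON does not allow comments, so this note stays outside the file. Restart the Docker daemon after editing so that the change takes effect.

[root@node0 ~]# vim /etc/docker/daemon.json

{

"bip": "172.180.94.1/24",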

"registry-mirrors": ["https://q4mxx5nr.mirror.aliyuncs.com"]

}

Custom bridge (separate from docker0)

[root@node0 ~]# docker network create -d bridge --subnet "172.168.2.0/24" --gateway "172.168.2.1" br0

[root@node0 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

b4d054c05cf8 br0 bridge local

7e3e08673191 bridge bridge local

6c47a3759e21 host host local

ccfa7497bd38 none null local

[root@node0 ~]# ip a|grep br-

3: br-b4d054c05cf8: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

inet 172.168.2.1/24 brd 172.168.2.255 scope global br-b4d054c05cf8

- Create a container on the new bridge

[root@node0 ~]# docker run -it --name t0 --rm --network br0 busybox

Communication between containers on different bridges

// create container t0 on the br0 bridge

[root@node0 ~]# docker run -it --name t0 --rm --network br0 busybox

// in a new terminal, create container t1 on the bridge (docker0) network

[root@node0 ~]# docker run -it --name t1 --rm --network bridge busybox

// in another terminal, connect each container to the other's network

[root@node0 ~]# docker network connect br0 t1

[root@node0 ~]# docker network connect bridge t0

// ping each other from the interactive sessions

// t0 side

[root@node0 ~]# docker run -it --name t0 --rm --network br0 busybox

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

11: eth0@if12: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:a8:02:02 brd ff:ff:ff:ff:ff:ff

inet 172.168.2.2/24 brd 172.168.2.255 scope global eth0

valid_lft forever preferred_lft forever

15: eth1@if16: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:03 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.3/16 brd 172.17.255.255 scope global eth1

valid_lft forever preferred_lft forever

/ # ping 172.17.0.2

PING 172.17.0.2 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.206 ms

64 bytes from 172.17.0.2: seq=1 ttl=64 time=0.246 ms

^C

--- 172.17.0.2 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.206/0.226/0.246 ms

// t1 side

[root@node0 ~]# docker run -it --name t1 --rm --network bridge busybox

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

9: eth0@if10: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

13: eth1@if14: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:a8:02:03 brd ff:ff:ff:ff:ff:ff

inet 172.168.2.3/24 brd 172.168.2.255 scope global eth1

valid_lft forever preferred_lft forever

/ # ping 172.168.2.2

PING 172.168.2.2 (172.168.2.2): 56 data bytes

64 bytes from 172.168.2.2: seq=0 ttl=64 time=0.197 ms

64 bytes from 172.168.2.2: seq=1 ttl=64 time=0.249 ms

^C

--- 172.168.2.2 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.197/0.223/0.249 ms
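The cross-bridge setup above can also be scripted without several terminals. The following is a sketch under stated assumptions, not the author's procedure: the containers are started detached and kept alive with `sleep 3600` (a hypothetical stand-in for the interactive shells), and the `run` helper with its `DRY_RUN` switch is an addition that only prints the commands by default.

```shell
#!/bin/sh
# Sketch of the cross-bridge setup. DRY_RUN=1 (the default here) only prints
# each command; DRY_RUN=0 needs a running Docker daemon.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

connect_across_bridges() {
    run docker network create -d bridge --subnet 172.168.2.0/24 --gateway 172.168.2.1 br0
    # Detached containers kept alive with sleep, so no extra terminals are needed.
    run docker run -d --name t0 --network br0 busybox sleep 3600
    run docker run -d --name t1 --network bridge busybox sleep 3600
    # Give each container a second interface on the other network.
    run docker network connect br0 t1
    run docker network connect bridge t0
    # Show the networks (and addresses) each container is now attached to.
    run docker inspect -f '{{json .NetworkSettings.Networks}}' t0
    run docker inspect -f '{{json .NetworkSettings.Networks}}' t1
}

connect_across_bridges
```

After a real run, the addresses printed by `docker inspect` are the ones to ping from the other container, for example with `docker exec t0 ping -c 2` followed by the address of t1.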
