(Original) Head pose estimation: Fine-Grained Head Pose Estimation Without Keypoints

Please credit the source when reposting:

https://www.cnblogs.com/darkknightzh/p/12150096.html

Paper:

Fine-Grained Head Pose Estimation Without Keypoints

Paper URL:

https://arxiv.org/abs/1710.00925v5

Official PyTorch implementation:

https://github.com/natanielruiz/deep-head-pose

Note: this code was written for an early version of PyTorch.

Reference:

https://www.cnblogs.com/aoru45/p/11186629.html

1 Introduction

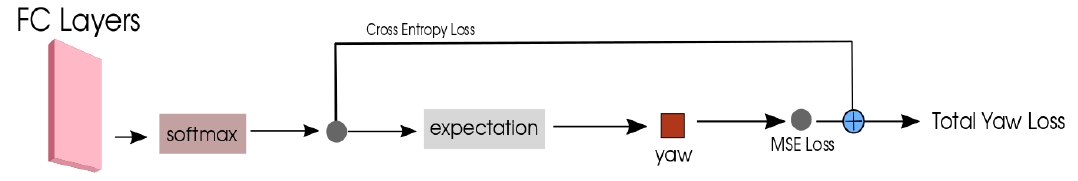

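The paper trains a multi-loss CNN to predict three face angles: pitch, yaw, and roll. Each angle has two losses, a classification loss and a regression loss. Passing an image through the network yields three sets of features, one each for pitch, yaw, and roll.

Training: the classification loss applies softmax to the features and classifies them directly. The regression loss computes the expected value of the softmax-normalized features and takes the MSE loss between that expectation and the ground-truth angle.

Testing: the predicted pitch, yaw, and roll are the expected values of the softmax-normalized features.

The reference above points out that the share of the classification loss affects the gradient direction and so acts as a guide, steering the regression toward a suitable direction.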

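2 Network structure

The network structure is shown in the figure below:

The input image passes through a ResNet50 backbone to produce features, which then go through three fc layers whose outputs serve as the classification inputs for pitch, yaw, and roll. In the code, CrossEntropyLoss already includes softmax, so the implementation matches the figure above. The right-hand side of that figure is perhaps easier to understand when drawn as below (only yaw is shown; the other two are analogous).

For classification, the bin edges start at -99 degrees (inclusive) and end at 102 degrees (exclusive) with a step of 3, giving 67 values and 66 intervals between them. These intervals are the discrete classes, and CrossEntropyLoss is computed over them. In addition, the softmax-normalized features are treated as probabilities; each is multiplied by its class index and the products are summed to give an expectation. After conversion to degrees, this expectation and the ground-truth angle are used to compute MSELoss.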
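The binning and the expected-value decoding described above can be sketched in plain Python. This is only an illustration, not the repository's code: the names `softmax`, `expected_angle`, `BIN_WIDTH`, `ANGLE_MIN`, and `NUM_BINS` are made up here, and the standard library is used in place of PyTorch so the snippet runs on its own.

```python
import math

BIN_WIDTH = 3    # degrees per bin, as stated in the text
ANGLE_MIN = -99  # left edge of the first bin
NUM_BINS = 66    # number of classes (intervals between the 67 edges)

# 67 bin edges: -99, -96, ..., 99 (102 is excluded)
bin_edges = list(range(ANGLE_MIN, 102, BIN_WIDTH))


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    largest = max(logits)
    exps = [math.exp(v - largest) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_angle(logits):
    """Decode one angle: expectation over bin indices, mapped to degrees."""
    probs = softmax(logits)
    expected_index = sum(i * p for i, p in enumerate(probs))
    return expected_index * BIN_WIDTH + ANGLE_MIN


# A distribution sharply peaked on bin 33 decodes to about 0 degrees.
peaked = [0.0] * NUM_BINS
peaked[33] = 50.0
angle = expected_angle(peaked)
```

Note that index `i` is mapped to `-99 + 3 * i`, which is the left edge of bin `i`; this mirrors the `* 3 - 99` conversion used in the training and test code.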

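The loss function is:

$L=H(y,\hat{y})+\alpha \cdot MSE(y,\hat{y})$

where $y$ is the predicted value and $\hat{y}$ is the ground-truth value. $H$ denotes the cross-entropy loss, and $\alpha$ is the regression loss coefficient.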
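As a rough single-sample illustration of this loss for one angle, the sketch below combines the cross-entropy over 66 bins with the squared error of the decoded angle. The function names are hypothetical, the default `alpha=0.001` is taken from the `--alpha` default in the training script, and for a single sample the MSE reduces to one squared difference.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    largest = max(logits)
    exps = [math.exp(v - largest) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def single_angle_loss(logits, target_bin, target_angle, alpha=0.001):
    """Cross-entropy on the bin label plus alpha times the squared angle error."""
    probs = softmax(logits)
    # Classification term: negative log-probability of the true bin.
    cross_entropy = -math.log(probs[target_bin])
    # Regression term: expected bin index converted to degrees.
    predicted_angle = 3 * sum(i * p for i, p in enumerate(probs)) - 99
    squared_error = (predicted_angle - target_angle) ** 2
    return cross_entropy + alpha * squared_error


uniform_logits = [0.0] * 66
# Uniform logits decode to -1.5 degrees, so only the cross-entropy remains here.
loss_exact = single_angle_loss(uniform_logits, target_bin=32, target_angle=-1.5)
# A 10-degree error adds alpha * 100 = 0.1 to the loss.
loss_off = single_angle_loss(uniform_logits, target_bin=32, target_angle=8.5)
```

With a small `alpha`, the cross-entropy term dominates, which is consistent with the remark that the classification loss guides the regression.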

3 Code

3.1 Network structure

The hopenet network code is as follows:

    import math

    import torch.nn as nn


    class Hopenet(nn.Module):
        # Hopenet with 3 output layers for yaw, pitch and roll
        # Predicts Euler angles by binning and regression with the expected value
        def __init__(self, block, layers, num_bins):
            self.inplanes = 64
            super(Hopenet, self).__init__()
            self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            self.layer1 = self._make_layer(block, 64, layers[0])
            self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
            self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
            self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
            self.avgpool = nn.AvgPool2d(7)  # End of the ResNet structure; produces the features
            # Three fc layers mapping the features to the classification outputs
            self.fc_yaw = nn.Linear(512 * block.expansion, num_bins)
            self.fc_pitch = nn.Linear(512 * block.expansion, num_bins)
            self.fc_roll = nn.Linear(512 * block.expansion, num_bins)

            # Vestigial layer from previous experiments (unused)
            self.fc_finetune = nn.Linear(512 * block.expansion + 3, 3)

            for m in self.modules():  # Initialize the model parameters
                if isinstance(m, nn.Conv2d):
                    n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                    m.weight.data.normal_(0, math.sqrt(2. / n))
                elif isinstance(m, nn.BatchNorm2d):
                    m.weight.data.fill_(1)
                    m.bias.data.zero_()

        def _make_layer(self, block, planes, blocks, stride=1):
            downsample = None
            if stride != 1 or self.inplanes != planes * block.expansion:
                downsample = nn.Sequential(
                    nn.Conv2d(self.inplanes, planes * block.expansion, kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(planes * block.expansion),
                )

            layers = []
            layers.append(block(self.inplanes, planes, stride, downsample))
            self.inplanes = planes * block.expansion
            for i in range(1, blocks):
                layers.append(block(self.inplanes, planes))

            return nn.Sequential(*layers)

        def forward(self, x):
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)

            x = self.avgpool(x)
            x = x.view(x.size(0), -1)
            pre_yaw = self.fc_yaw(x)
            pre_pitch = self.fc_pitch(x)
            pre_roll = self.fc_roll(x)

            return pre_yaw, pre_pitch, pre_roll

Note: self.fc_finetune is not used.

3.2 Training code

    import argparse
    import os
    import sys

    import torch
    import torch.nn as nn
    import torch.backends.cudnn as cudnn
    import torch.utils.model_zoo as model_zoo
    import torchvision
    from torch.autograd import Variable
    from torchvision import transforms

    import datasets, hopenet  # modules from the deep-head-pose repository


    def parse_args():
        """Parse input arguments."""
        parser = argparse.ArgumentParser(description='Head pose estimation using the Hopenet network.')
        parser.add_argument('--gpu', dest='gpu_id', help='GPU device id to use [0]', default=0, type=int)
        parser.add_argument('--num_epochs', dest='num_epochs', help='Maximum number of training epochs.', default=5, type=int)
        parser.add_argument('--batch_size', dest='batch_size', help='Batch size.', default=16, type=int)
        parser.add_argument('--lr', dest='lr', help='Base learning rate.', default=0.001, type=float)
        parser.add_argument('--dataset', dest='dataset', help='Dataset type.', default='Pose_300W_LP', type=str)
        parser.add_argument('--data_dir', dest='data_dir', help='Directory path for data.', default='', type=str)
        parser.add_argument('--filename_list', dest='filename_list', help='Path to text file containing relative paths for every example.', default='', type=str)
        parser.add_argument('--output_string', dest='output_string', help='String appended to output snapshots.', default = '', type=str)
        parser.add_argument('--alpha', dest='alpha', help='Regression loss coefficient.', default=0.001, type=float)
        parser.add_argument('--snapshot', dest='snapshot', help='Path of model snapshot.', default='', type=str)

        args = parser.parse_args()
        return args

    def get_ignored_params(model):  # Generator function that yields ignored params.
        b = [model.conv1, model.bn1, model.fc_finetune]
        for i in range(len(b)):
            for module_name, module in b[i].named_modules():
                if 'bn' in module_name:
                    module.eval()
                for name, param in module.named_parameters():
                    yield param

    def get_non_ignored_params(model):  # Generator function that yields params that will be optimized.
        b = [model.layer1, model.layer2, model.layer3, model.layer4]
        for i in range(len(b)):
            for module_name, module in b[i].named_modules():
                if 'bn' in module_name:
                    module.eval()
                for name, param in module.named_parameters():
                    yield param

    def get_fc_params(model):  # Generator function that yields fc layer params.
        b = [model.fc_yaw, model.fc_pitch, model.fc_roll]
        for i in range(len(b)):
            for module_name, module in b[i].named_modules():
                for name, param in module.named_parameters():
                    yield param

    def load_filtered_state_dict(model, snapshot):
        # By user apaszke from discuss.pytorch.org
        model_dict = model.state_dict()
        snapshot = {k: v for k, v in snapshot.items() if k in model_dict}
        model_dict.update(snapshot)
        model.load_state_dict(model_dict)

    if __name__ == '__main__':
        args = parse_args()

        cudnn.enabled = True
        num_epochs = args.num_epochs
        batch_size = args.batch_size
        gpu = args.gpu_id

        if not os.path.exists('output/snapshots'):
            os.makedirs('output/snapshots')

        # ResNet50 structure
        # -99 to 99 with a step of 3 gives 67 bin edges and 66 intervals between them,
        # so the last argument is 66.
        model = hopenet.Hopenet(torchvision.models.resnet.Bottleneck, [3, 4, 6, 3], 66)

        if args.snapshot == '':
            load_filtered_state_dict(model, model_zoo.load_url('https://download.pytorch.org/models/resnet50-19c8e357.pth'))
        else:
            saved_state_dict = torch.load(args.snapshot)
            model.load_state_dict(saved_state_dict)

        print('Loading data.')

        # transforms.Scale is the early name of transforms.Resize
        transformations = transforms.Compose([transforms.Scale(240),
            transforms.RandomCrop(224), transforms.ToTensor(),
            transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])])

        if args.dataset == 'Pose_300W_LP':  # Select the dataset to load
            pose_dataset = datasets.Pose_300W_LP(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'Pose_300W_LP_random_ds':
            pose_dataset = datasets.Pose_300W_LP_random_ds(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'Synhead':
            pose_dataset = datasets.Synhead(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'AFLW2000':
            pose_dataset = datasets.AFLW2000(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'BIWI':
            pose_dataset = datasets.BIWI(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'AFLW':
            pose_dataset = datasets.AFLW(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'AFLW_aug':
            pose_dataset = datasets.AFLW_aug(args.data_dir, args.filename_list, transformations)
        elif args.dataset == 'AFW':
            pose_dataset = datasets.AFW(args.data_dir, args.filename_list, transformations)
        else:
            print('Error: not a valid dataset name')
            sys.exit()

        train_loader = torch.utils.data.DataLoader(dataset=pose_dataset, batch_size=batch_size, shuffle=True, num_workers=2)

        model.cuda(gpu)
        criterion = nn.CrossEntropyLoss().cuda(gpu)  # Includes softmax
        reg_criterion = nn.MSELoss().cuda(gpu)
        alpha = args.alpha  # Regression loss coefficient
        softmax = nn.Softmax().cuda(gpu)
        idx_tensor = [idx for idx in range(66)]
        idx_tensor = Variable(torch.FloatTensor(idx_tensor)).cuda(gpu)

        # Different layers get different learning rates
        optimizer = torch.optim.Adam([{'params': get_ignored_params(model), 'lr': 0},
                                      {'params': get_non_ignored_params(model), 'lr': args.lr},
                                      {'params': get_fc_params(model), 'lr': args.lr * 5}],
                                      lr = args.lr)

        print('Ready to train network.')
        for epoch in range(num_epochs):
            # labels are the discretized bins, cont_labels are the actual angles
            for i, (images, labels, cont_labels, name) in enumerate(train_loader):
                images = Variable(images).cuda(gpu)

                label_yaw = Variable(labels[:,0]).cuda(gpu)  # Binned labels
                label_pitch = Variable(labels[:,1]).cuda(gpu)
                label_roll = Variable(labels[:,2]).cuda(gpu)

                label_yaw_cont = Variable(cont_labels[:,0]).cuda(gpu)  # Continuous labels
                label_pitch_cont = Variable(cont_labels[:,1]).cuda(gpu)
                label_roll_cont = Variable(cont_labels[:,2]).cuda(gpu)

                yaw, pitch, roll = model(images)  # Forward pass

                # Cross entropy loss: the classification loss over classes 0-65.
                # label_yaw etc. are bin indices (only the true bin is 1, all others 0);
                # yaw, pitch and roll are the features.
                loss_yaw = criterion(yaw, label_yaw)
                loss_pitch = criterion(pitch, label_pitch)
                loss_roll = criterion(roll, label_roll)

                # MSE loss: the regression loss.
                # After softmax the features are normalized, i.e. probabilities.
                yaw_predicted = softmax(yaw)
                pitch_predicted = softmax(pitch)
                roll_predicted = softmax(roll)

                # The expectation of a discrete random variable is the sum over all possible
                # values (idx_tensor) times their probabilities (yaw_predicted).
                # Each probability is multiplied by its class index and summed,
                # giving one expected angle per sample (batch_size values).
                yaw_predicted = torch.sum(yaw_predicted * idx_tensor, 1) * 3 - 99
                pitch_predicted = torch.sum(pitch_predicted * idx_tensor, 1) * 3 - 99
                roll_predicted = torch.sum(roll_predicted * idx_tensor, 1) * 3 - 99

                # Regression loss between the expected prediction and the ground truth
                loss_reg_yaw = reg_criterion(yaw_predicted, label_yaw_cont)
                loss_reg_pitch = reg_criterion(pitch_predicted, label_pitch_cont)
                loss_reg_roll = reg_criterion(roll_predicted, label_roll_cont)

                loss_yaw += alpha * loss_reg_yaw  # Total loss
                loss_pitch += alpha * loss_reg_pitch
                loss_roll += alpha * loss_reg_roll

                loss_seq = [loss_yaw, loss_pitch, loss_roll]
                grad_seq = [torch.ones(1).cuda(gpu) for _ in range(len(loss_seq))]
                optimizer.zero_grad()
                # Can be viewed as a weighted sum of the losses (all weights equal to 1)
                torch.autograd.backward(loss_seq, grad_seq)
                optimizer.step()

                if (i+1) % 100 == 0:
                    # loss.data[0] is the early-PyTorch way to read a scalar loss
                    print ('Epoch [%d/%d], Iter [%d/%d] Losses: Yaw %.4f, Pitch %.4f, Roll %.4f'
                           %(epoch+1, num_epochs, i+1, len(pose_dataset)//batch_size, loss_yaw.data[0], loss_pitch.data[0], loss_roll.data[0]))

            # Save models at numbered epochs.
            if epoch % 1 == 0 and epoch < num_epochs:
                print('Taking snapshot...')
                torch.save(model.state_dict(),
                'output/snapshots/' + args.output_string + '_epoch_'+ str(epoch+1) + '.pkl')
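The call `torch.autograd.backward(loss_seq, grad_seq)` with all-ones seed gradients can be read as back-propagating the unweighted sum of the three per-angle losses. As a worked equation, writing $\theta$ for the network parameters and $k$ for the angle (both symbols are introduced here only for illustration):

$$
\nabla_\theta L_{total}
= 1 \cdot \nabla_\theta L_{yaw} + 1 \cdot \nabla_\theta L_{pitch} + 1 \cdot \nabla_\theta L_{roll},
\qquad
L_k = H(y_k,\hat{y}_k) + \alpha \cdot MSE(y_k,\hat{y}_k), \quad k \in \{yaw, pitch, roll\}
$$

Parameters shared by all three heads (the backbone) accumulate all three gradient terms, while each fc head only receives the gradient of its own $L_k$.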

3.3 Dataset code

    import os

    import numpy as np
    import torch
    from PIL import Image, ImageFilter
    from torch.utils.data.dataset import Dataset

    import utils  # module from the deep-head-pose repository


    # get_list_from_filenames is a helper defined in the same module of the repository.
    class Pose_300W_LP(Dataset):
        # Head pose from 300W-LP dataset
        def __init__(self, data_dir, filename_path, transform, img_ext='.jpg', annot_ext='.mat', image_mode='RGB'):
            self.data_dir = data_dir
            self.transform = transform
            self.img_ext = img_ext
            self.annot_ext = annot_ext

            filename_list = get_list_from_filenames(filename_path)

            self.X_train = filename_list
            self.y_train = filename_list
            self.image_mode = image_mode
            self.length = len(filename_list)

        def __getitem__(self, index):
            img = Image.open(os.path.join(self.data_dir, self.X_train[index] + self.img_ext))
            img = img.convert(self.image_mode)
            mat_path = os.path.join(self.data_dir, self.y_train[index] + self.annot_ext)

            # Crop the face loosely
            pt2d = utils.get_pt2d_from_mat(mat_path)  # 2D landmarks
            x_min = min(pt2d[0,:])
            y_min = min(pt2d[1,:])
            x_max = max(pt2d[0,:])
            y_max = max(pt2d[1,:])

            k = np.random.random_sample() * 0.2 + 0.2  # k = 0.2 to 0.40
            x_min -= 0.6 * k * abs(x_max - x_min)
            y_min -= 2 * k * abs(y_max - y_min)  # why 2???
            x_max += 0.6 * k * abs(x_max - x_min)
            y_max += 0.6 * k * abs(y_max - y_min)
            img = img.crop((int(x_min), int(y_min), int(x_max), int(y_max)))  # Random crop

            pose = utils.get_ypr_from_mat(mat_path)  # We get the pose in radians
            pitch = pose[0] * 180 / np.pi  # Radians to degrees
            yaw = pose[1] * 180 / np.pi
            roll = pose[2] * 180 / np.pi

            rnd = np.random.random_sample()  # Random horizontal flip
            if rnd < 0.5:
                yaw = -yaw    # Yaw changes sign under a horizontal flip
                roll = -roll  # Roll changes sign under a horizontal flip
                img = img.transpose(Image.FLIP_LEFT_RIGHT)

            rnd = np.random.random_sample()  # Blur
            if rnd < 0.05:
                img = img.filter(ImageFilter.BLUR)

            # Bin values
            # -99 to 99 with a step of 3: 67 bin edges, 66 intervals between them.
            bins = np.array(range(-99, 102, 3))
            # np.digitize returns the position of each input within bins; an input < bins[0]
            # gives 0. The inputs here are never < bins[0], so subtracting 1 yields 0-65.
            binned_pose = np.digitize([yaw, pitch, roll], bins) - 1

            # Get target tensors
            labels = binned_pose
            cont_labels = torch.FloatTensor([yaw, pitch, roll])  # Actual (continuous) labels

            if self.transform is not None:
                img = self.transform(img)

            return img, labels, cont_labels, self.X_train[index]

        def __len__(self):
            # 122,450
            return self.length
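To make the label construction concrete, here is a small standard-library sketch of the binning step and of the sign change applied on a horizontal flip. It is not the repository's code: `angle_to_bin` and `flip_pose` are hypothetical names, and `bisect_right` stands in for `np.digitize`, which for increasing bins and the default `right=False` returns the same index for a single value.

```python
from bisect import bisect_right

# 67 bin edges: -99, -96, ..., 99
BIN_EDGES = list(range(-99, 102, 3))


def angle_to_bin(angle_deg):
    """Return the 0-based bin index of an angle in [-99, 99) degrees."""
    # bisect_right counts the edges <= angle_deg; subtracting 1 gives the bin index.
    return bisect_right(BIN_EDGES, angle_deg) - 1


def flip_pose(yaw, pitch, roll):
    """Mirror a pose horizontally: yaw and roll change sign, pitch is unchanged."""
    return -yaw, pitch, -roll


labels = [angle_to_bin(a) for a in (-99, -0.1, 0, 1.5, 98.9)]
flipped = flip_pose(10.0, 5.0, -3.0)
```

An angle exactly on an edge falls into the bin that starts at that edge, so 0 degrees maps to bin 33 while -0.1 degrees maps to bin 32.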

3.4 Test code

Note: the test code has been modified to read a single image and take the face bounding box as input.

    import argparse
    import os

    import cv2
    import torch
    import torch.backends.cudnn as cudnn
    import torch.nn.functional as F
    import torchvision
    from PIL import Image
    from torchvision import transforms

    import hopenet, utils  # modules from the deep-head-pose repository


    def parse_args():
        """Parse input arguments."""
        parser = argparse.ArgumentParser(description='Head pose estimation using the Hopenet network.')
        parser.add_argument('--gpu', dest='gpu_id', help='GPU device id to use [0]', default=-1, type=int)
        parser.add_argument('--snapshot', dest='snapshot', help='Path of model snapshot.', default='hopenet_robust_alpha1.pkl', type=str)
        args = parser.parse_args()
        return args

    if __name__ == '__main__':
        args = parse_args()

        gpu = args.gpu_id

        if gpu >= 0:
            cudnn.enabled = True

        # ResNet50 structure
        model = hopenet.Hopenet(torchvision.models.resnet.Bottleneck, [3, 4, 6, 3], 66)  # Build the model

        print('Loading snapshot.')
        if gpu >= 0:
            saved_state_dict = torch.load(args.snapshot)
        else:
            # Without a GPU, load the model parameters onto the CPU
            saved_state_dict = torch.load(args.snapshot, map_location=torch.device('cpu'))
        model.load_state_dict(saved_state_dict)

        transformations = transforms.Compose([transforms.Scale(224),
        transforms.CenterCrop(224), transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])])

        if gpu >= 0:
            model.cuda(gpu)

        model.eval()  # Change model to 'eval' mode (BN uses moving mean/var).
        total = 0

        idx_tensor = [idx for idx in range(66)]
        idx_tensor = torch.FloatTensor(idx_tensor)
        if gpu >= 0:
            idx_tensor = idx_tensor.cuda(gpu)

        img_path = '1.jpg'
        frame = cv2.imread(img_path, 1)
        cv2_frame = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

        x_min, y_min, x_max, y_max = 439, 375, 642, 596  # Face box position

        bbox_width = abs(x_max - x_min)    # Face box width
        bbox_height = abs(y_max - y_min)   # Face box height
        # x_min -= 3 * bbox_width / 4
        # x_max += 3 * bbox_width / 4
        # y_min -= 3 * bbox_height / 4
        # y_max += bbox_height / 4
        x_min -= 50  # Enlarge the face box
        x_max += 50
        y_min -= 50
        y_max += 30
        x_min = max(x_min, 0)
        y_min = max(y_min, 0)
        x_max = min(frame.shape[1], x_max)
        y_max = min(frame.shape[0], y_max)

        img = cv2_frame[y_min:y_max, x_min:x_max]  # Crop face loosely
        img = Image.fromarray(img)

        img = transformations(img)  # Transform
        img_shape = img.size()
        img = img.view(1, img_shape[0], img_shape[1], img_shape[2])  # NCHW
        img = torch.tensor(img)
        if gpu >= 0:
            img = img.cuda(gpu)

        yaw, pitch, roll = model(img)  # Get the features

        yaw_predicted = F.softmax(yaw, dim=1)  # Normalize the features
        pitch_predicted = F.softmax(pitch, dim=1)
        roll_predicted = F.softmax(roll, dim=1)

        # Take the expectation and convert it to a continuous value in degrees
        yaw_predicted = torch.sum(yaw_predicted.data[0] * idx_tensor) * 3 - 99
        pitch_predicted = torch.sum(pitch_predicted.data[0] * idx_tensor) * 3 - 99
        roll_predicted = torch.sum(roll_predicted.data[0] * idx_tensor) * 3 - 99

        frame = utils.draw_axis(frame, yaw_predicted, pitch_predicted, roll_predicted, tdx = (x_min + x_max) / 2, tdy= (y_min + y_max) / 2, size = bbox_height/2)
        print(yaw_predicted.item(), pitch_predicted.item(), roll_predicted.item())
        cv2.imwrite('{}.png'.format(os.path.splitext(img_path)[0]), frame)
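The face-box enlargement and clamping in the test code can be isolated into a small helper, sketched below. The function name `expand_and_clamp_box`, its `pad` parameter, and the 1280x720 image size are assumptions made for illustration; the padding values (50, 50, 50, 30) and the example box are the ones used in the listing.

```python
def expand_and_clamp_box(box, image_width, image_height, pad=(50, 50, 50, 30)):
    """Grow a face box by fixed pixel margins and clip it to the image.

    box is (x_min, y_min, x_max, y_max); pad is (left, top, right, bottom).
    """
    x_min, y_min, x_max, y_max = box
    pad_left, pad_top, pad_right, pad_bottom = pad
    x_min = max(x_min - pad_left, 0)
    y_min = max(y_min - pad_top, 0)
    x_max = min(x_max + pad_right, image_width)
    y_max = min(y_max + pad_bottom, image_height)
    return x_min, y_min, x_max, y_max


# The box from the listing, on a hypothetical 1280x720 image.
loose_box = expand_and_clamp_box((439, 375, 642, 596), 1280, 720)
# A box near the top-left corner gets clipped at 0.
corner_box = expand_and_clamp_box((10, 20, 100, 120), 1000, 1000)
```

Clamping matters because NumPy slicing with a negative start index would wrap around instead of cropping from the image border.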

draw_axis is defined as follows:

    from math import cos, sin

    import cv2
    import numpy as np


    def draw_axis(img, yaw, pitch, roll, tdx=None, tdy=None, size = 100):
        pitch = pitch * np.pi / 180  # Convert to radians
        yaw = -(yaw * np.pi / 180)
        roll = roll * np.pi / 180

        # Default to the image center when no origin is given
        if tdx is None or tdy is None:
            height, width = img.shape[:2]
            tdx = width / 2
            tdy = height / 2

        # X-Axis pointing to right. drawn in red
        x1 = size * (cos(yaw) * cos(roll)) + tdx
        y1 = size * (cos(pitch) * sin(roll) + cos(roll) * sin(pitch) * sin(yaw)) + tdy

        # Y-Axis | drawn in green (pointing down)
        #        v
        x2 = size * (-cos(yaw) * sin(roll)) + tdx
        y2 = size * (cos(pitch) * cos(roll) - sin(pitch) * sin(yaw) * sin(roll)) + tdy

        # Z-Axis (out of the screen) drawn in blue
        x3 = size * (sin(yaw)) + tdx
        y3 = size * (-cos(yaw) * sin(pitch)) + tdy

        # Colors are in OpenCV's BGR order
        cv2.line(img, (int(tdx), int(tdy)), (int(x1),int(y1)),(0,0,255),3)
        cv2.line(img, (int(tdx), int(tdy)), (int(x2),int(y2)),(0,255,0),3)
        cv2.line(img, (int(tdx), int(tdy)), (int(x3),int(y3)),(255,0,0),2)

        return img
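To check the projection formulas without OpenCV, the endpoint computation can be pulled into a pure function, as in this sketch. `axis_endpoints` is a hypothetical name; the formulas are copied from `draw_axis`, with angles given in degrees and the origin at `(tdx, tdy)`.

```python
from math import cos, sin, radians


def axis_endpoints(yaw_deg, pitch_deg, roll_deg, tdx, tdy, size=100):
    """Return the 2D endpoints of the X, Y and Z pose axes drawn from (tdx, tdy)."""
    pitch = radians(pitch_deg)
    yaw = -radians(yaw_deg)  # draw_axis negates yaw before projecting
    roll = radians(roll_deg)

    x_axis = (size * (cos(yaw) * cos(roll)) + tdx,
              size * (cos(pitch) * sin(roll) + cos(roll) * sin(pitch) * sin(yaw)) + tdy)
    y_axis = (size * (-cos(yaw) * sin(roll)) + tdx,
              size * (cos(pitch) * cos(roll) - sin(pitch) * sin(yaw) * sin(roll)) + tdy)
    z_axis = (size * sin(yaw) + tdx,
              size * (-cos(yaw) * sin(pitch)) + tdy)
    return x_axis, y_axis, z_axis


# Frontal face: X points right, Y points down, Z projects onto the origin.
frontal = axis_endpoints(0, 0, 0, tdx=200, tdy=150)
# Yaw of 90 degrees: the Z axis swings to the left of the origin.
turned = axis_endpoints(90, 0, 0, tdx=200, tdy=150)
```

For a frontal pose the blue Z axis has zero projected length, which is why it only becomes visible once yaw or pitch is non-zero.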

posted on 2020-01-04 19:21 darkknightzh
