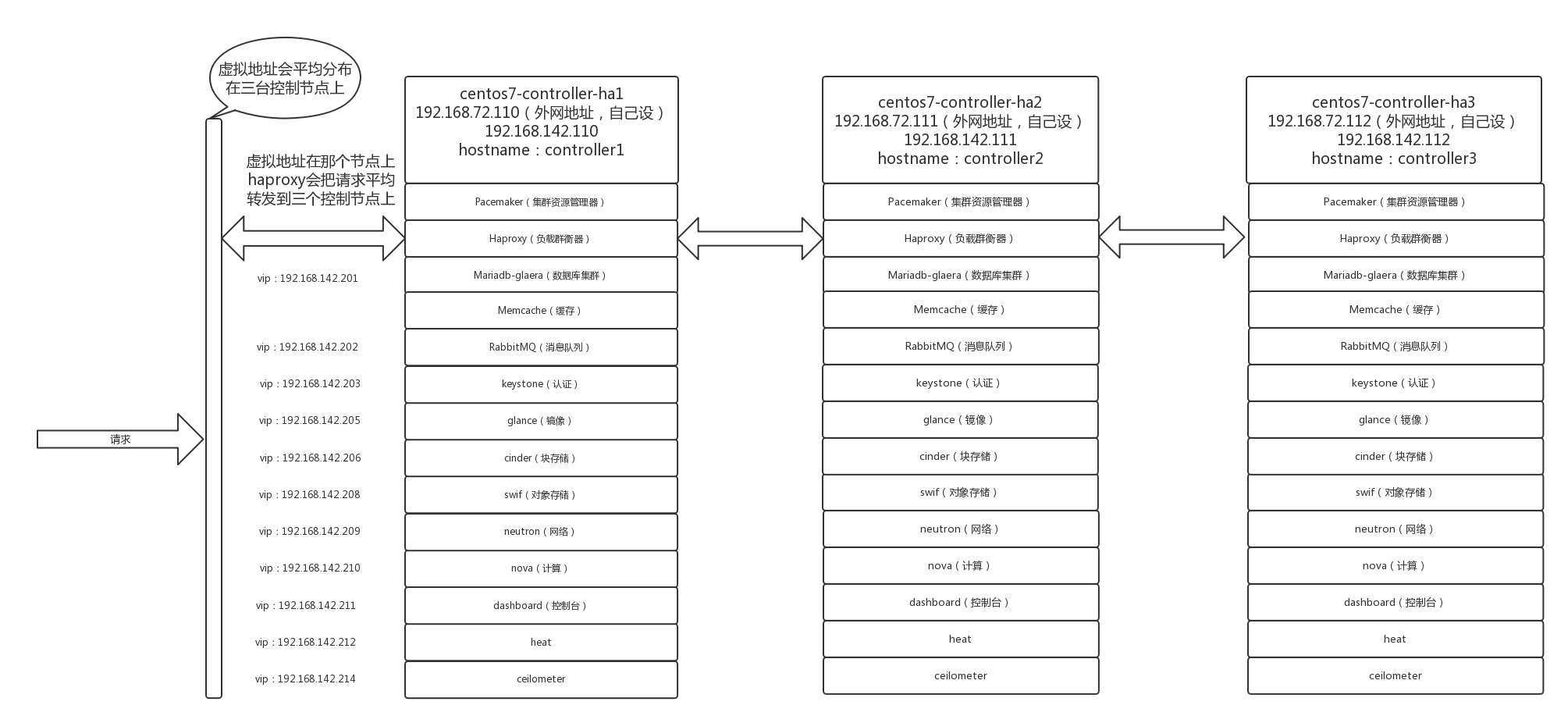

OpenStack HA deployment

I. Controller node architecture (see the figure):

II. Initialize the environment:

1. Configure the IP addresses:

1. Node 1:

ip addr add dev eth0 192.168.142.110/24
echo 'ip addr add dev eth0 192.168.142.110/24' >> /etc/rc.local
chmod +x /etc/rc.d/rc.local

2. Node 2:

ip addr add dev eth0 192.168.142.111/24
echo 'ip addr add dev eth0 192.168.142.111/24' >> /etc/rc.local
chmod +x /etc/rc.d/rc.local

3. Node 3:

ip addr add dev eth0 192.168.142.112/24
echo 'ip addr add dev eth0 192.168.142.112/24' >> /etc/rc.local
chmod +x /etc/rc.d/rc.local

2. Change the hostnames:

Set each node's hostname, then add the same entries to /etc/hosts on all three nodes.

1. Node 1:

hostnamectl --static --transient set-hostname controller1

2. Node 2:

hostnamectl --static --transient set-hostname controller2

3. Node 3:

hostnamectl --static --transient set-hostname controller3

/etc/hosts (identical on every node):

192.168.142.110 controller1
192.168.142.111 controller2
192.168.142.112 controller3
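Because every node needs the same three /etc/hosts lines, generating them from one mapping keeps the nodes consistent. A minimal sketch, assuming the addresses above; `NODES`, `render_hosts` and `missing_entries` are hypothetical helpers, not part of any tool:

```python
# Hypothetical helper: the addresses restate the plan above.
NODES = {
    "controller1": "192.168.142.110",
    "controller2": "192.168.142.111",
    "controller3": "192.168.142.112",
}


def render_hosts(nodes):
    """Return one 'IP hostname' line per node, ordered by hostname."""
    return "".join(f"{ip} {name}\n" for name, ip in sorted(nodes.items()))


def missing_entries(hosts_text, nodes):
    """List the hostnames whose 'IP hostname' pair is absent from hosts_text."""
    present = set()
    for line in hosts_text.splitlines():
        fields = line.split("#", 1)[0].split()  # drop comments, then split
        for name in fields[1:]:
            present.add((fields[0], name))
    return [name for name, ip in sorted(nodes.items()) if (ip, name) not in present]


if __name__ == "__main__":
    print(render_hosts(NODES), end="")
```

For example, `python3 hosts_entries.py >> /etc/hosts` on each node (the file name is hypothetical); `missing_entries(open("/etc/hosts").read(), NODES)` then returns an empty list.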

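3. Disable the firewall and SELinux:

systemctl disable firewalld
systemctl stop firewalld
sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

The sed change takes effect at the next reboot; setenforce 0 switches the running system to permissive mode immediately.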

4. Set up time synchronization:

yum install ntp -y

ntpdate cn.pool.ntp.org

5. Install the base packages:

yum install -y centos-release-openstack-ocata
yum upgrade -y
yum install -y python-openstackclient

III. Install the base services:

1. Install Pacemaker

(Run steps 1 to 4 on all three nodes.)

1. Configure passwordless SSH login:

Node 1:

ssh-keygen -t rsa
ssh-copy-id root@controller2
ssh-copy-id root@controller3

Node 2:

ssh-keygen -t rsa
ssh-copy-id root@controller1
ssh-copy-id root@controller3

Node 3:

ssh-keygen -t rsa
ssh-copy-id root@controller1
ssh-copy-id root@controller2

2. Install Pacemaker:

yum install -y pcs pacemaker corosync fence-agents-all resource-agents

3. Start the pcsd service and enable it at boot:

systemctl start pcsd.service
systemctl enable pcsd.service

4. Set the password of the cluster user (pcs creates the hacluster user automatically when it is installed):

echo 'password' | passwd --stdin hacluster

5. Authenticate the cluster nodes to one another:

pcs cluster auth controller1 controller2 controller3 -u hacluster -p password

The user name must be hacluster, the account that pcs creates automatically; authentication fails with any other user.

6. Create and start a cluster named openstack-ha:

pcs cluster setup --start --name openstack-ha controller1 controller2 controller3

7. Enable the cluster to start at boot:

pcs cluster enable --all

8. Check and set the cluster properties:

Check the current cluster status:

pcs cluster status

Verify the Corosync installation and the current Corosync status:

corosync-cfgtool -s
corosync-cmapctl | grep members
pcs status corosync

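Check that the configuration is valid (no output means it is valid; while STONITH is still enabled and no fencing devices are defined, it reports STONITH errors, which go away once STONITH is disabled below):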

crm_verify -L -V

Disable STONITH:

pcs property set stonith-enabled=false

Ignore the loss of quorum:

pcs property set no-quorum-policy=ignore
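With three nodes, quorum is a strict majority (3 // 2 + 1 = 2), so the cluster tolerates one node failure. no-quorum-policy=ignore makes Pacemaker keep managing resources even after quorum is lost, which, with STONITH also disabled, raises the risk of split brain. The sketch below counts joined members in `corosync-cmapctl | grep members` output and compares them with that majority. It assumes the corosync 2.x key format shown in the comments and one vote per node; the helper names are illustrative.

```python
import re

# Assumed corosync 2.x key format, e.g.
#   runtime.totem.pg.mrp.srp.members.1.ip (str) = r(0) ip(192.168.142.110)
#   runtime.totem.pg.mrp.srp.members.1.status (str) = joined
MEMBER_IP = re.compile(r"members\.(\d+)\.ip \(str\) = r\(0\) ip\(([\d.]+)\)")
MEMBER_STATUS = re.compile(r"members\.(\d+)\.status \(str\) = (\w+)")


def joined_members(cmapctl_output):
    """Map node id -> ring0 IP for every member whose status is 'joined'."""
    ips = {int(node): ip for node, ip in MEMBER_IP.findall(cmapctl_output)}
    status = {int(node): st for node, st in MEMBER_STATUS.findall(cmapctl_output)}
    return {node: ip for node, ip in ips.items() if status.get(node) == "joined"}


def quorum(total_votes):
    """Votes needed for quorum with one vote per node: a strict majority."""
    return total_votes // 2 + 1


def has_quorum(cmapctl_output, expected_nodes=3):
    """True if enough members have joined to form a majority."""
    return len(joined_members(cmapctl_output)) >= quorum(expected_nodes)
```

For instance, `has_quorum(sys.stdin.read())` when the cmapctl output is piped into a script built around these helpers.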

2. Install and configure HAProxy:

(Run steps 1 to 3 on all three nodes.)

1. Install HAProxy:

yum install -y haproxy lrzsz

2. Prepare the environment. net.ipv4.ip_nonlocal_bind=1 lets HAProxy bind to the VIPs even on nodes that do not currently hold them:

echo "net.ipv4.ip_nonlocal_bind=1" > /etc/sysctl.d/haproxy.conf
sysctl -p /etc/sysctl.d/haproxy.conf
echo 1 > /proc/sys/net/ipv4/ip_nonlocal_bind
cat > /etc/sysctl.d/tcp_keepalive.conf << EOF
net.ipv4.tcp_keepalive_intvl = 1
net.ipv4.tcp_keepalive_probes = 5
net.ipv4.tcp_keepalive_time = 5
EOF
sysctl net.ipv4.tcp_keepalive_intvl=1
sysctl net.ipv4.tcp_keepalive_probes=5
sysctl net.ipv4.tcp_keepalive_time=5
mv /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.bak && cd /etc/haproxy/

Then upload the haproxy.cfg file shown below (lrzsz provides the rz command for this).
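These keepalive settings only affect sockets that enable SO_KEEPALIVE, which is what option clitcpka, option srvtcpka and option tcpka in the HAProxy configuration request. As a rough estimate, a silent peer is declared dead after tcp_keepalive_time + tcp_keepalive_probes * tcp_keepalive_intvl seconds. A small sketch of that arithmetic (the function name is illustrative):

```python
def keepalive_detection_seconds(idle_s, interval_s, probes):
    """Approximate time before the kernel declares a silent TCP peer dead:
    idle_s of inactivity, then `probes` unanswered probes interval_s apart."""
    return idle_s + probes * interval_s


# Values written to /etc/sysctl.d/tcp_keepalive.conf above
print(keepalive_detection_seconds(5, 1, 5))      # 10
# Linux defaults: tcp_keepalive_time=7200, intvl=75, probes=9
print(keepalive_detection_seconds(7200, 75, 9))  # 7875
```

So the tuned values cut detection of a dead RabbitMQ or Galera connection from over two hours to about ten seconds.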

HAProxy configuration file:

global
    daemon
    group haproxy
    maxconn 4000
    pidfile /var/run/haproxy.pid
    user haproxy
    stats socket /var/lib/haproxy/stats
    log 192.168.142.110 local0

defaults
    mode tcp
    maxconn 10000
    timeout connect 10s
    timeout client 1m
    timeout server 1m
    timeout check 10s

listen stats
    mode http
    bind 192.168.142.110:8080
    stats enable
    stats hide-version
    stats uri /haproxy?openstack
    stats realm Haproxy\ Statistics
    stats admin if TRUE
    stats auth admin:admin
    stats refresh 10s

frontend vip-db
    bind 192.168.142.201:3306
    timeout client 90m
    default_backend db-vms-galera

frontend vip-qpid
    bind 192.168.142.215:5672
    timeout client 120s
    default_backend qpid-vms

frontend vip-horizon
    bind 192.168.142.211:80
    timeout client 180s
    cookie SERVERID insert indirect nocache
    default_backend horizon-vms

frontend vip-ceilometer
    bind 192.168.142.214:8777
    timeout client 90s
    default_backend ceilometer-vms

frontend vip-rabbitmq
    option clitcpka
    bind 192.168.142.202:5672
    timeout client 900m
    default_backend rabbitmq-vms

frontend vip-keystone-admin
    bind 192.168.142.203:35357
    default_backend keystone-admin-vms

backend keystone-admin-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:35357 check inter 1s
    server controller2-vm 192.168.142.111:35357 check inter 1s
    server controller3-vm 192.168.142.112:35357 check inter 1s

frontend vip-keystone-public
    bind 192.168.142.203:5000
    default_backend keystone-public-vms

backend keystone-public-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:5000 check inter 1s
    server controller2-vm 192.168.142.111:5000 check inter 1s
    server controller3-vm 192.168.142.112:5000 check inter 1s

# glance-api listens on 9292, glance-registry on 9191
frontend vip-glance-api
    bind 192.168.142.205:9292
    default_backend glance-api-vms

backend glance-api-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:9292 check inter 1s
    server controller2-vm 192.168.142.111:9292 check inter 1s
    server controller3-vm 192.168.142.112:9292 check inter 1s

frontend vip-glance-registry
    bind 192.168.142.205:9191
    default_backend glance-registry-vms

backend glance-registry-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:9191 check inter 1s
    server controller2-vm 192.168.142.111:9191 check inter 1s
    server controller3-vm 192.168.142.112:9191 check inter 1s

frontend vip-cinder
    bind 192.168.142.206:8776
    default_backend cinder-vms

backend cinder-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8776 check inter 1s
    server controller2-vm 192.168.142.111:8776 check inter 1s
    server controller3-vm 192.168.142.112:8776 check inter 1s

frontend vip-swift
    bind 192.168.142.208:8080
    default_backend swift-vms

backend swift-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8080 check inter 1s
    server controller2-vm 192.168.142.111:8080 check inter 1s
    server controller3-vm 192.168.142.112:8080 check inter 1s

frontend vip-neutron
    bind 192.168.142.209:9696
    default_backend neutron-vms

backend neutron-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:9696 check inter 1s
    server controller2-vm 192.168.142.111:9696 check inter 1s
    server controller3-vm 192.168.142.112:9696 check inter 1s

frontend vip-nova-vnc-novncproxy
    bind 192.168.142.210:6080
    default_backend nova-vnc-novncproxy-vms

backend nova-vnc-novncproxy-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:6080 check inter 1s
    server controller2-vm 192.168.142.111:6080 check inter 1s
    server controller3-vm 192.168.142.112:6080 check inter 1s

frontend vip-nova-vnc-xvpvncproxy
    bind 192.168.142.210:6081
    default_backend nova-vnc-xvpvncproxy-vms

backend nova-vnc-xvpvncproxy-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:6081 check inter 1s
    server controller2-vm 192.168.142.111:6081 check inter 1s
    server controller3-vm 192.168.142.112:6081 check inter 1s

frontend vip-nova-metadata
    bind 192.168.142.210:8775
    default_backend nova-metadata-vms

backend nova-metadata-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8775 check inter 1s
    server controller2-vm 192.168.142.111:8775 check inter 1s
    server controller3-vm 192.168.142.112:8775 check inter 1s

frontend vip-nova-api
    bind 192.168.142.210:8774
    default_backend nova-api-vms

backend nova-api-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8774 check inter 1s
    server controller2-vm 192.168.142.111:8774 check inter 1s
    server controller3-vm 192.168.142.112:8774 check inter 1s

backend horizon-vms
    balance roundrobin
    timeout server 108s
    server controller1-vm 192.168.142.110:80 check inter 1s
    server controller2-vm 192.168.142.111:80 check inter 1s
    server controller3-vm 192.168.142.112:80 check inter 1s

frontend vip-heat-cfn
    bind 192.168.142.212:8000
    default_backend heat-cfn-vms

backend heat-cfn-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8000 check inter 1s
    server controller2-vm 192.168.142.111:8000 check inter 1s
    server controller3-vm 192.168.142.112:8000 check inter 1s

# heat-api-cloudwatch listens on 8003; 8004 is heat-api (vip-heat-srv)
frontend vip-heat-cloudw
    bind 192.168.142.212:8003
    default_backend heat-cloudw-vms

backend heat-cloudw-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8003 check inter 1s
    server controller2-vm 192.168.142.111:8003 check inter 1s
    server controller3-vm 192.168.142.112:8003 check inter 1s

frontend vip-heat-srv
    bind 192.168.142.212:8004
    default_backend heat-srv-vms

backend heat-srv-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8004 check inter 1s
    server controller2-vm 192.168.142.111:8004 check inter 1s
    server controller3-vm 192.168.142.112:8004 check inter 1s

backend ceilometer-vms
    balance roundrobin
    server controller1-vm 192.168.142.110:8777 check inter 1s
    server controller2-vm 192.168.142.111:8777 check inter 1s
    server controller3-vm 192.168.142.112:8777 check inter 1s

backend qpid-vms
    stick-table type ip size 2
    stick on dst
    timeout server 120s
    server controller1-vm 192.168.142.110:5672 check inter 1s
    server controller2-vm 192.168.142.111:5672 check inter 1s
    server controller3-vm 192.168.142.112:5672 check inter 1s

backend db-vms-galera
    option httpchk
    option tcpka
    stick-table type ip size 1000
    stick on dst
    timeout server 90m
    server controller1-vm 192.168.142.110:3306 check inter 1s port 9200 backup on-marked-down shutdown-sessions
    server controller2-vm 192.168.142.111:3306 check inter 1s port 9200 backup on-marked-down shutdown-sessions
    server controller3-vm 192.168.142.112:3306 check inter 1s port 9200 backup on-marked-down shutdown-sessions

backend rabbitmq-vms
    option srvtcpka
    balance roundrobin
    timeout server 900m
    server controller1-vm 192.168.142.110:5672 check inter 1s
    server controller2-vm 192.168.142.111:5672 check inter 1s
    server controller3-vm 192.168.142.112:5672 check inter 1s
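Most of this file is the same frontend/backend pair repeated with a different VIP and port, so generating the pairs avoids copy-and-paste slips, such as two frontends bound to the same address and port. A minimal sketch, assuming the server names and addresses above; `render_service` and `duplicate_binds` are hypothetical helpers. After editing the real file, `haproxy -c -f /etc/haproxy/haproxy.cfg` checks its syntax.

```python
# Hypothetical generator for the repetitive frontend/backend pairs in haproxy.cfg.
CONTROLLERS = [
    ("controller1-vm", "192.168.142.110"),
    ("controller2-vm", "192.168.142.111"),
    ("controller3-vm", "192.168.142.112"),
]


def render_service(name, vip, port, servers=CONTROLLERS):
    """Render a frontend bound to vip:port and a round-robin backend."""
    lines = [
        f"frontend vip-{name}",
        f"    bind {vip}:{port}",
        f"    default_backend {name}-vms",
        "",
        f"backend {name}-vms",
        "    balance roundrobin",
    ]
    lines += [f"    server {srv} {ip}:{port} check inter 1s" for srv, ip in servers]
    return "\n".join(lines) + "\n\n"


def duplicate_binds(cfg_text):
    """Return addresses that appear on more than one 'bind' line."""
    seen, dups = set(), set()
    for line in cfg_text.splitlines():
        parts = line.split()
        if len(parts) >= 2 and parts[0] == "bind":
            if parts[1] in seen:
                dups.add(parts[1])
            seen.add(parts[1])
    return sorted(dups)


if __name__ == "__main__":
    print(render_service("neutron", "192.168.142.209", 9696), end="")
```

Running `duplicate_binds(open("/etc/haproxy/haproxy.cfg").read())` should return an empty list for a configuration where every frontend has its own address and port.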

3. Create the Pacemaker resource for HAProxy:

pcs resource create lb-haproxy systemd:haproxy --clone
pcs resource enable lb-haproxy

4. Create the VIPs with a Python script (run it on one node only; Pacemaker resources are cluster-wide):

import os

components = ['db', 'rabbitmq', 'keystone', 'memcache', 'glance', 'cinder',
              'swift-brick', 'swift', 'neutron', 'nova', 'horizon', 'heat',
              'mongodb', 'ceilometer', 'qpid']
offset = 201
internal_network = '192.168.142'

for section in components:
    if section in ['memcache', 'swift-brick', 'mongodb']:
        # these components reserve an address offset but get no VIP
        pass
    else:
        print("%s:%s.%s" % (section, internal_network, offset))
        # os.system('pcs resource delete vip-%s ' % section)
        os.system('pcs resource create vip-%s IPaddr2 ip=%s.%s nic=eth0' % (section, internal_network, offset))
        # optional ordering: start the VIP before HAProxy
        os.system('pcs constraint order start vip-%s then lb-haproxy-clone kind=Optional' % section)
        # keep the VIP on a node where HAProxy is running
        os.system('pcs constraint colocation add vip-%s with lb-haproxy-clone' % section)
    offset += 1

# restart the whole cluster
os.system('pcs cluster stop --all')
os.system('pcs cluster start --all')
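Judging by the addresses used in haproxy.cfg, the offset advances for every entry, including the three that get no VIP, which is why .204, .207 and .213 are unused. A dry-run sketch that only computes the mapping, handy for checking it against the bind lines before touching the cluster (`vip_map` is an illustrative name):

```python
# Same list and skip set as the script above; this version only computes addresses.
COMPONENTS = ['db', 'rabbitmq', 'keystone', 'memcache', 'glance', 'cinder',
              'swift-brick', 'swift', 'neutron', 'nova', 'horizon', 'heat',
              'mongodb', 'ceilometer', 'qpid']
SKIP = {'memcache', 'swift-brick', 'mongodb'}


def vip_map(components, skip, network='192.168.142', first=201):
    """Every component consumes one offset; only non-skipped ones get a VIP."""
    return {
        section: '%s.%d' % (network, offset)
        for offset, section in enumerate(components, start=first)
        if section not in skip
    }


if __name__ == '__main__':
    for section, ip in vip_map(COMPONENTS, SKIP).items():
        print('%s:%s' % (section, ip))
```

The printed lines use the same `section:address` format as the real script, so the two outputs can be compared directly.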

3. Install MariaDB:

(Run steps 1 to 6 on all three nodes.)

1. Install mariadb-galera:

yum install mariadb-galera-server xinetd rsync -y

Disable HAProxy for now (it is re-enabled after the Galera resource is created in step 7):

pcs resource disable lb-haproxy

2. Configure the Galera cluster check:

cat > /etc/sysconfig/clustercheck << EOF
MYSQL_USERNAME="clustercheck"
MYSQL_PASSWORD="hagluster"
MYSQL_HOST="localhost"
MYSQL_PORT="3306"
EOF

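3. Create the clustercheck database user:

systemctl start mariadb.service
mysql_secure_installation
mysql -uroot -p -e "CREATE USER 'clustercheck'@'localhost' IDENTIFIED BY 'hagluster';"
systemctl stop mariadb.service

(-p prompts for the root password that mysql_secure_installation has just set.)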

4. Configure galera.cnf (the file differs per node):

Node 1:

cat > /etc/my.cnf.d/galera.cnf << EOF
[mysqld]
skip-name-resolve=1
binlog_format=ROW
default-storage-engine=innodb
innodb_autoinc_lock_mode=2
innodb_locks_unsafe_for_binlog=1
query_cache_size=0
query_cache_type=0
bind_address=192.168.142.110
wsrep_cluster_address = "gcomm://192.168.142.111,192.168.142.112"
wsrep_provider=/usr/lib64/galera/libgalera_smm.so
wsrep_cluster_name="galera_cluster"
wsrep_slave_threads=1
wsrep_certify_nonPK=1
wsrep_max_ws_rows=131072
wsrep_max_ws_size=1073741824
wsrep_debug=0
wsrep_convert_LOCK_to_trx=0
wsrep_retry_autocommit=1
wsrep_auto_increment_control=1
wsrep_drupal_282555_workaround=0
wsrep_causal_reads=0
wsrep_notify_cmd=
wsrep_sst_method=rsync
wsrep_on=ON
EOF

Node 2:

cat > /etc/my.cnf.d/galera.cnf << EOF
[mysqld]
skip-name-resolve=1
binlog_format=ROW
default-storage-engine=innodb
innodb_autoinc_lock_mode=2
innodb_locks_unsafe_for_binlog=1
query_cache_size=0
query_cache_type=0
bind_address=192.168.142.111
wsrep_cluster_address = "gcomm://192.168.142.110,192.168.142.112"
wsrep_provider=/usr/lib64/galera/libgalera_smm.so
wsrep_cluster_name="galera_cluster"
wsrep_slave_threads=1
wsrep_certify_nonPK=1
wsrep_max_ws_rows=131072
wsrep_max_ws_size=1073741824
wsrep_debug=0
wsrep_convert_LOCK_to_trx=0
wsrep_retry_autocommit=1
wsrep_auto_increment_control=1
wsrep_drupal_282555_workaround=0
wsrep_causal_reads=0
wsrep_notify_cmd=
wsrep_sst_method=rsync
wsrep_on=ON
EOF

Node 3:

cat > /etc/my.cnf.d/galera.cnf << EOF
[mysqld]
skip-name-resolve=1
binlog_format=ROW
default-storage-engine=innodb
innodb_autoinc_lock_mode=2
innodb_locks_unsafe_for_binlog=1
query_cache_size=0
query_cache_type=0
bind_address=192.168.142.112
wsrep_cluster_address = "gcomm://192.168.142.110,192.168.142.111"
wsrep_provider=/usr/lib64/galera/libgalera_smm.so
wsrep_cluster_name="galera_cluster"
wsrep_slave_threads=1
wsrep_certify_nonPK=1
wsrep_max_ws_rows=131072
wsrep_max_ws_size=1073741824
wsrep_debug=0
wsrep_convert_LOCK_to_trx=0
wsrep_retry_autocommit=1
wsrep_auto_increment_control=1
wsrep_drupal_282555_workaround=0
wsrep_causal_reads=0
wsrep_notify_cmd=
wsrep_sst_method=rsync
wsrep_on=ON
EOF
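The per-node part of galera.cnf is bind_address plus a wsrep_cluster_address that lists the other two nodes. A small sketch that derives those two lines for each node, assuming the three addresses above (`galera_node_lines` is an illustrative name); note that the Pacemaker galera resource created in step 7 is also given its own wsrep_cluster_address.

```python
# Addresses of the three Galera nodes, as used in galera.cnf above.
NODES = ["192.168.142.110", "192.168.142.111", "192.168.142.112"]


def galera_node_lines(own_ip, nodes=NODES):
    """Return the two per-node galera.cnf lines: own bind address and peer list."""
    peers = ",".join(ip for ip in nodes if ip != own_ip)
    return [
        f"bind_address={own_ip}",
        f'wsrep_cluster_address = "gcomm://{peers}"',
    ]


if __name__ == "__main__":
    for ip in NODES:
        print("\n".join(galera_node_lines(ip)), end="\n\n")
```

Everything else in the file is shared, so only these two lines need to change when a node is added or renumbered.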

5. Configure an HTTP-based database health check (port 9200, probed by HAProxy):

cat > /etc/xinetd.d/galera-monitor << EOF
service galera-monitor
{
    port = 9200
    disable = no
    socket_type = stream
    protocol = tcp
    wait = no
    user = root
    group = root
    groups = yes
    server = /usr/bin/clustercheck
    type = UNLISTED
    per_source = UNLIMITED
    log_on_success =
    log_on_failure = HOST
    flags = REUSE
}
EOF
systemctl enable xinetd
systemctl start xinetd
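xinetd runs /usr/bin/clustercheck for each connection on port 9200, and the db-vms-galera backend probes that port through option httpchk. Assuming the usual clustercheck behaviour (it reads wsrep_local_state with the credentials from /etc/sysconfig/clustercheck), its decision can be modelled as below; the function names are illustrative, and a manual check is `curl http://192.168.142.110:9200`.

```python
# wsrep_local_state values reported by Galera
JOINING, DONOR_DESYNCED, JOINED, SYNCED = 1, 2, 3, 4


def clustercheck_status(wsrep_local_state, available_when_donor=False):
    """HTTP status a clustercheck-style probe returns for one node:
    200 when the node is Synced (or a Donor, if allowed), otherwise 503."""
    if wsrep_local_state == SYNCED:
        return 200
    if wsrep_local_state == DONOR_DESYNCED and available_when_donor:
        return 200
    return 503


def haproxy_marks_up(http_status):
    """With 'option httpchk', 2xx and 3xx responses count as a passing check."""
    return 200 <= http_status < 400
```

This is why a node that is still receiving a state transfer drops out of the database backend until it reports Synced.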

6. Set the ownership of the MariaDB directories:

chown mysql:mysql -R /var/log/mariadb
chown mysql:mysql -R /var/lib/mysql
chown mysql:mysql -R /var/run/mariadb/

7. Create the Galera cluster resource (on one node):

pcs resource create galera galera enable_creation=true wsrep_cluster_address="gcomm://controller1,controller2,controller3" additional_parameters='--open-files-limit=16384' meta master-max=3 ordered=true op promote timeout=300s on-fail=block --master

pcs resource enable lb-haproxy

pcs constraint order start lb-haproxy-clone then start galera-master

8. Check the services:

clustercheck
pcs resource show

Create the databases and grant privileges (once, on any node; Galera replicates the changes):

CREATE DATABASE keystone;
GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'keystone';
GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'keystone';
CREATE DATABASE glance;
GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY 'glance';
GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'glance';
CREATE DATABASE nova_api;
CREATE DATABASE nova;
CREATE DATABASE nova_cell0;
GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'nova';
GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'nova';
GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'nova';
GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'nova';
GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY 'nova';
GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'nova';
CREATE DATABASE neutron;
GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'neutron';
GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'neutron';
CREATE DATABASE cinder;
GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'localhost' IDENTIFIED BY 'cinder';
GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'%' IDENTIFIED BY 'cinder';
CREATE DATABASE heat;
GRANT ALL PRIVILEGES ON heat.* TO 'heat'@'localhost' IDENTIFIED BY 'heat';
GRANT ALL PRIVILEGES ON heat.* TO 'heat'@'%' IDENTIFIED BY 'heat';
flush privileges;
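The SQL follows one pattern per database: create it, then grant the owning service user access from localhost and from any host, with the password equal to the user name as in the statements above. A sketch that generates the same statements from a table (`SERVICES` and `grants_sql` are illustrative names):

```python
# Service user -> databases it owns, as in the SQL above.
# The password equals the user name, mirroring the statements above.
SERVICES = {
    "keystone": ["keystone"],
    "glance": ["glance"],
    "nova": ["nova_api", "nova", "nova_cell0"],
    "neutron": ["neutron"],
    "cinder": ["cinder"],
    "heat": ["heat"],
}


def grants_sql(services):
    """Emit CREATE DATABASE plus local and remote GRANTs for every database."""
    stmts = []
    for user, databases in services.items():
        for db in databases:
            stmts.append(f"CREATE DATABASE {db};")
            for host in ("localhost", "%"):
                stmts.append(
                    f"GRANT ALL PRIVILEGES ON {db}.* TO '{user}'@'{host}' "
                    f"IDENTIFIED BY '{user}';"
                )
    stmts.append("FLUSH PRIVILEGES;")
    return "\n".join(stmts)


if __name__ == "__main__":
    print(grants_sql(SERVICES))
```

For example, `python3 gen_grants.py | mysql -uroot -p` on one node (the file name is hypothetical).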

4. Install memcached:

(Run step 1 on all three controller nodes.)

1. Install memcached:

yum install -y memcached

2. Create the Pacemaker resource:

pcs resource create memcached systemd:memcached --clone interleave=true
pcs status

5. Install RabbitMQ:

(Run steps 1 to 3 on all three nodes.)

1. Install RabbitMQ:

yum install -y rabbitmq-server

2. Create the RabbitMQ environment file:

Node 1:

cat > /etc/rabbitmq/rabbitmq-env.conf << EOF
NODE_IP_ADDRESS=192.168.142.110
EOF

Node 2:

cat > /etc/rabbitmq/rabbitmq-env.conf << EOF
NODE_IP_ADDRESS=192.168.142.111
EOF

Node 3:

cat > /etc/rabbitmq/rabbitmq-env.conf << EOF
NODE_IP_ADDRESS=192.168.142.112
EOF

3. Create the data directory and set its ownership:

mkdir -p /var/lib/rabbitmq
chown -R rabbitmq:rabbitmq /var/lib/rabbitmq

4. Create the Pacemaker resource:

pcs resource create rabbitmq-cluster ocf:rabbitmq:rabbitmq-server-ha --master \
    erlang_cookie=DPMDALGUKEOMPTHWPYKC node_port=5672 \
    op monitor interval=30 timeout=120 \
    op monitor interval=27 role=Master timeout=120 \
    op monitor interval=103 role=Slave timeout=120 OCF_CHECK_LEVEL=30 \
    op start interval=0 timeout=120 \
    op stop interval=0 timeout=120 \
    op promote interval=0 timeout=60 \
    op demote interval=0 timeout=60 \
    op notify interval=0 timeout=60 \
    meta notify=true ordered=false interleave=false master-max=1 master-node-max=1

5. Configure queue mirroring, the user and its permissions (on the master node):

rabbitmqctl set_policy ha-all "." '{"ha-mode":"all", "ha-sync-mode":"automatic"}' --apply-to all --priority 0
rabbitmqctl add_user openstack openstack
rabbitmqctl set_permissions openstack ".*" ".*" ".*"

6. Verify the configuration:

rabbitmqctl list_policies
rabbitmqctl list_users
rabbitmqctl list_permissions

6. Install MongoDB:

(Run steps 1 to 3 on all three nodes.)

1. Install the packages:

yum -y install mongodb mongodb-server

2. Edit the configuration file:

sed -i "s/bind_ip = 127.0.0.1/bind_ip = 0.0.0.0/g" /etc/mongod.conf
sed -i "s/#replSet = arg/replSet = ceilometer/g" /etc/mongod.conf
sed -i "s/#smallfiles = true/smallfiles = true/g" /etc/mongod.conf

3. Check that mongod can start:

systemctl start mongod
systemctl status mongod
systemctl stop mongod

4. Create the Pacemaker resource:

pcs resource create mongodb systemd:mongod op start timeout=300s --clone

5. Write the replica-set setup script (on one node only):

cat > /root/mongo_replica_setup.js << EOF
rs.initiate()
sleep(10000)
rs.add("controller1");
rs.add("controller2");
rs.add("controller3");
EOF

6. Run the script:

mongo /root/mongo_replica_setup.js

7. Check whether the replica set was created successfully:

mongo  # then, in the interactive shell, run:

rs.status()

ceilometer:PRIMARY> rs.status()
{
    "set" : "ceilometer",
    "date" : ISODate("2017-12-07T06:41:00Z"),
    "myState" : 1,
    "members" : [
        {
            "_id" : 0,
            "name" : "controller1:27017",
            "health" : 1,
            "state" : 1,
            "stateStr" : "PRIMARY",
            "uptime" : 1163,
            "optime" : Timestamp(1512628074, 2),
            "optimeDate" : ISODate("2017-12-07T06:27:54Z"),
            "electionTime" : Timestamp(1512628064, 2),
            "electionDate" : ISODate("2017-12-07T06:27:44Z"),
            "self" : true
        },
        {
            "_id" : 1,
            "name" : "controller2:27017",
            "health" : 1,
            "state" : 2,
            "stateStr" : "SECONDARY",
            "uptime" : 786,
            "optime" : Timestamp(1512628074, 2),
            "optimeDate" : ISODate("2017-12-07T06:27:54Z"),
            "lastHeartbeat" : ISODate("2017-12-07T06:40:59Z"),
            "lastHeartbeatRecv" : ISODate("2017-12-07T06:40:59Z"),
            "pingMs" : 1,
            "syncingTo" : "controller1:27017"
        },
        {
            "_id" : 2,
            "name" : "controller3:27017",
            "health" : 1,
            "state" : 2,
            "stateStr" : "SECONDARY",
            "uptime" : 786,
            "optime" : Timestamp(1512628074, 2),
            "optimeDate" : ISODate("2017-12-07T06:27:54Z"),
            "lastHeartbeat" : ISODate("2017-12-07T06:40:59Z"),
            "lastHeartbeatRecv" : ISODate("2017-12-07T06:40:59Z"),
            "pingMs" : 1,
            "syncingTo" : "controller1:27017"
        }
    ],
    "ok" : 1
}
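A healthy result has ok equal to 1, exactly one PRIMARY, every other member SECONDARY, and health 1 everywhere. The sketch below turns that into a pass/fail check on a plain dict with the same fields. With pymongo, the same document should be available from `MongoClient(...).admin.command("replSetGetStatus")`, but that call is left out so the example runs without a database; `replica_set_healthy` is an illustrative name.

```python
def replica_set_healthy(status):
    """Check an rs.status()-style document: ok == 1, exactly one PRIMARY,
    every other member SECONDARY, and every member reporting health 1."""
    if status.get("ok") != 1:
        return False
    members = status.get("members", [])
    states = [m.get("stateStr") for m in members]
    return (
        states.count("PRIMARY") == 1
        and all(s in ("PRIMARY", "SECONDARY") for s in states)
        and all(m.get("health") == 1 for m in members)
    )


# Trimmed copy of the output above (only the fields the check reads)
SAMPLE = {
    "set": "ceilometer",
    "members": [
        {"name": "controller1:27017", "health": 1, "stateStr": "PRIMARY"},
        {"name": "controller2:27017", "health": 1, "stateStr": "SECONDARY"},
        {"name": "controller3:27017", "health": 1, "stateStr": "SECONDARY"},
    ],
    "ok": 1,
}

print(replica_set_healthy(SAMPLE))  # True
```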

IV. Install the OpenStack services:

1. Install and configure Keystone:

(Run steps 1 to 3 on all three controller nodes.)

1. Install the packages:

yum install -y openstack-keystone httpd mod_wsgi openstack-utils python-keystoneclient

2. Edit the configuration file:

openstack-config --set /etc/keystone/keystone.conf database connection mysql+pymysql://keystone:keystone@192.168.142.201/keystone
openstack-config --set /etc/keystone/keystone.conf token provider fernet
openstack-config --set /etc/keystone/keystone.conf memcache servers controller1:11211,controller2:11211,controller3:11211
openstack-config --set /etc/keystone/keystone.conf oslo_messaging_rabbit rabbit_hosts controller1,controller2,controller3
openstack-config --set /etc/keystone/keystone.conf oslo_messaging_rabbit rabbit_ha_queues true
openstack-config --set /etc/keystone/keystone.conf oslo_messaging_rabbit heartbeat_timeout_threshold 60
openstack-config --set /etc/keystone/keystone.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/keystone/keystone.conf oslo_messaging_rabbit rabbit_password openstack

Review the resulting configuration:

cat /etc/keystone/keystone.conf | grep -v "^#" | grep -v "^$"

3. Edit the httpd configuration:

ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/
sed -i "s/Listen 80/Listen 81/g" /etc/httpd/conf/httpd.conf

Node 1:

sed -i "s/#ServerName www.example.com:80/ServerName controller1:80/g" /etc/httpd/conf/httpd.conf
sed -i "s/Listen 5000/Listen 192.168.142.110:5000/g" /etc/httpd/conf.d/wsgi-keystone.conf
sed -i "s/Listen 35357/Listen 192.168.142.110:35357/g" /etc/httpd/conf.d/wsgi-keystone.conf

Node 2:

sed -i "s/#ServerName www.example.com:80/ServerName controller2:80/g" /etc/httpd/conf/httpd.conf
sed -i "s/Listen 5000/Listen 192.168.142.111:5000/g" /etc/httpd/conf.d/wsgi-keystone.conf
sed -i "s/Listen 35357/Listen 192.168.142.111:35357/g" /etc/httpd/conf.d/wsgi-keystone.conf

Node 3:

sed -i "s/#ServerName www.example.com:80/ServerName controller3:80/g" /etc/httpd/conf/httpd.conf
sed -i "s/Listen 5000/Listen 192.168.142.112:5000/g" /etc/httpd/conf.d/wsgi-keystone.conf
sed -i "s/Listen 35357/Listen 192.168.142.112:35357/g" /etc/httpd/conf.d/wsgi-keystone.conf

4. Create the Pacemaker resource (on one node):

pcs resource create keystone-http systemd:httpd --clone interleave=true

5. Synchronize the database (on one node):

su -s /bin/sh -c "keystone-manage db_sync" keystone

6. Create the admin account (on node 1):

keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone
keystone-manage credential_setup --keystone-user keystone --keystone-group keystone
keystone-manage bootstrap --bootstrap-password keystone --bootstrap-admin-url http://192.168.142.203:35357/v3/ --bootstrap-internal-url http://192.168.142.203:5000/v3/ --bootstrap-public-url http://192.168.142.203:5000/v3/ --bootstrap-region-id RegionOne

Then set the admin environment variables:

export OS_USERNAME=admin
export OS_PASSWORD=keystone
export OS_PROJECT_NAME=admin
export OS_USER_DOMAIN_NAME=Default
export OS_PROJECT_DOMAIN_NAME=Default
export OS_AUTH_URL=http://192.168.142.203:35357/v3
export OS_IDENTITY_API_VERSION=3

7. Copy the key files to the other two nodes:

On nodes 2 and 3:

mkdir /etc/keystone/credential-keys/
mkdir /etc/keystone/fernet-keys/

On node 1:

scp /etc/keystone/credential-keys/* root@controller2:/etc/keystone/credential-keys/
scp /etc/keystone/credential-keys/* root@controller3:/etc/keystone/credential-keys/
scp /etc/keystone/fernet-keys/* root@controller2:/etc/keystone/fernet-keys/
scp /etc/keystone/fernet-keys/* root@controller3:/etc/keystone/fernet-keys/

On nodes 2 and 3:

chown -R keystone:keystone /etc/keystone/credential-keys/
chown -R keystone:keystone /etc/keystone/fernet-keys/
chmod 700 /etc/keystone/credential-keys/
chmod 700 /etc/keystone/fernet-keys/

8. Create the projects, user and role, then request tokens:

openstack project create --domain default --description "Service Project" service
openstack project create --domain default --description "Demo Project" demo
openstack user create --domain default --password demo demo
openstack role create user
openstack role add --project demo --user demo user
openstack --os-auth-url http://192.168.142.203:35357/v3 --os-project-domain-name default --os-user-domain-name default --os-project-name admin --os-username admin token issue
openstack --os-auth-url http://192.168.142.203:5000/v3 --os-project-domain-name default --os-user-domain-name default --os-project-name demo --os-username demo token issue --os-password demo

9. Create the user, service and endpoints for Glance:

openstack user create --domain default --password glance glance
openstack role add --project service --user glance admin
openstack service create --name glance --description "OpenStack Image" image
openstack endpoint create --region RegionOne image public http://192.168.142.205:9292
openstack endpoint create --region RegionOne image internal http://192.168.142.205:9292
openstack endpoint create --region RegionOne image admin http://192.168.142.205:9292

10. Create the user, services and endpoints for Cinder:

openstack user create --domain default --password cinder cinder
openstack role add --project service --user cinder admin
openstack service create --name cinderv2 --description "OpenStack Block Storage" volumev2
openstack service create --name cinderv3 --description "OpenStack Block Storage" volumev3
openstack endpoint create --region RegionOne volumev2 public http://192.168.142.206:8776/v2/%\(project_id\)s
openstack endpoint create --region RegionOne volumev2 internal http://192.168.142.206:8776/v2/%\(project_id\)s
openstack endpoint create --region RegionOne volumev2 admin http://192.168.142.206:8776/v2/%\(project_id\)s
openstack endpoint create --region RegionOne volumev3 public http://192.168.142.206:8776/v3/%\(project_id\)s
openstack endpoint create --region RegionOne volumev3 internal http://192.168.142.206:8776/v3/%\(project_id\)s
openstack endpoint create --region RegionOne volumev3 admin http://192.168.142.206:8776/v3/%\(project_id\)s

11. Create the user, service and endpoints for Neutron:

openstack user create --domain default --password neutron neutron
openstack role add --project service --user neutron admin
openstack service create --name neutron --description "OpenStack Networking" network
openstack endpoint create --region RegionOne network public http://192.168.142.209:9696
openstack endpoint create --region RegionOne network internal http://192.168.142.209:9696
openstack endpoint create --region RegionOne network admin http://192.168.142.209:9696

12. Create the users, services and endpoints for Nova and Placement:

openstack user create --domain default --password nova nova
openstack role add --project service --user nova admin
openstack service create --name nova --description "OpenStack Compute" compute
openstack endpoint create --region RegionOne compute public http://192.168.142.210:8774/v2.1
openstack endpoint create --region RegionOne compute internal http://192.168.142.210:8774/v2.1
openstack endpoint create --region RegionOne compute admin http://192.168.142.210:8774/v2.1

openstack user create --domain default --password placement placement

openstack role add --project service --user placement admin

openstack service create --name placement --description "Placement API" placement

openstack endpoint create --region RegionOne placement public http://192.168.142.210:8778

openstack endpoint create --region RegionOne placement internal http://192.168.142.210:8778

openstack endpoint create --region RegionOne placement admin http://192.168.142.210:8778

13. Create the user, services and endpoints for Heat:

openstack user create --domain default --password heat heat
openstack role add --project service --user heat admin
openstack service create --name heat --description "Orchestration" orchestration
openstack service create --name heat-cfn --description "Orchestration" cloudformation
openstack endpoint create --region RegionOne orchestration public http://192.168.142.212:8004/v1/%\(tenant_id\)s
openstack endpoint create --region RegionOne orchestration internal http://192.168.142.212:8004/v1/%\(tenant_id\)s
openstack endpoint create --region RegionOne orchestration admin http://192.168.142.212:8004/v1/%\(tenant_id\)s
openstack endpoint create --region RegionOne cloudformation public http://192.168.142.212:8000/v1
openstack endpoint create --region RegionOne cloudformation internal http://192.168.142.212:8000/v1
openstack endpoint create --region RegionOne cloudformation admin http://192.168.142.212:8000/v1

14. Create the user, service and endpoints for Ceilometer:

openstack user create --domain default --password ceilometer ceilometer

Verify the configuration:

1. Check that Keystone works:

unset OS_AUTH_URL OS_PASSWORD
openstack --os-auth-url http://192.168.142.203:35357/v3 --os-project-domain-name default --os-user-domain-name default --os-project-name admin --os-username admin token issue
openstack --os-auth-url http://192.168.142.203:5000/v3 --os-project-domain-name default --os-user-domain-name default --os-project-name demo --os-username demo token issue

Steps 2 to 4 need the admin credentials again, so re-export OS_AUTH_URL and OS_PASSWORD first.

2. List the users:

openstack user list

3. List the services:

openstack service list

4. List the service catalog endpoints:

openstack endpoint list
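Every service in this section is configured with `openstack-config --set FILE SECTION KEY VALUE`, which edits one INI option in place (openstack-config wraps crudini, which keeps comments). For scripting outside that tool, Python's configparser offers a rough equivalent. A sketch with illustrative helper names; unlike openstack-config, configparser drops comments when it rewrites the file, so try it on a copy first.

```python
import configparser
import os
import tempfile


def set_option(path, section, key, value):
    """Rough analogue of `openstack-config --set PATH SECTION KEY VALUE`.
    Unlike openstack-config, configparser drops comments when it rewrites."""
    cp = configparser.ConfigParser(interpolation=None)  # keep '%' literal
    cp.read(path)  # a missing file is treated as empty
    if section != cp.default_section and not cp.has_section(section):
        cp.add_section(section)
    cp.set(section, key, value)
    with open(path, "w") as f:
        cp.write(f)


def get_option(path, section, key):
    """Read back a single option."""
    cp = configparser.ConfigParser(interpolation=None)
    cp.read(path)
    return cp.get(section, key)


if __name__ == "__main__":
    conf = os.path.join(tempfile.mkdtemp(), "keystone.conf")
    set_option(conf, "token", "provider", "fernet")
    set_option(conf, "DEFAULT", "bind_host", "192.168.142.110")
    print(get_option(conf, "token", "provider"))
```

Interpolation is disabled so values containing `%` are stored verbatim; option names are lowercased by configparser, which matches the lowercase keys used throughout this guide.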

2. Install Glance:

(Run steps 1 to 3 on all three controller nodes.)

1. Install the packages:

yum install -y openstack-glance
cp /etc/glance/glance-api.conf /etc/glance/glance-api.conf.bak
cp /etc/glance/glance-registry.conf /etc/glance/glance-registry.conf.bak

2. Edit glance-api.conf:

openstack-config --set /etc/glance/glance-api.conf database connection mysql+pymysql://glance:glance@192.168.142.201/glance
openstack-config --set /etc/glance/glance-api.conf paste_deploy flavor keystone
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_uri http://192.168.142.203:5000
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_url http://192.168.142.203:35357
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken memcached_servers controller1:11211,controller2:11211,controller3:11211
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_type password
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken project_name service
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken username glance
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken password glance
openstack-config --set /etc/glance/glance-api.conf glance_store stores file,http
openstack-config --set /etc/glance/glance-api.conf glance_store default_store file
openstack-config --set /etc/glance/glance-api.conf glance_store filesystem_store_datadir /var/lib/glance/images/
openstack-config --set /etc/glance/glance-api.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/glance/glance-api.conf oslo_messaging_rabbit rabbit_password openstack
openstack-config --set /etc/glance/glance-api.conf oslo_messaging_rabbit rabbit_hosts controller1,controller2,controller3
openstack-config --set /etc/glance/glance-api.conf oslo_messaging_rabbit rabbit_ha_queues true
openstack-config --set /etc/glance/glance-api.conf oslo_messaging_rabbit heartbeat_timeout_threshold 60
openstack-config --set /etc/glance/glance-api.conf DEFAULT registry_host 192.168.142.205

Node 1:

openstack-config --set /etc/glance/glance-api.conf DEFAULT bind_host 192.168.142.110

Node 2:

openstack-config --set /etc/glance/glance-api.conf DEFAULT bind_host 192.168.142.111

Node 3:

openstack-config --set /etc/glance/glance-api.conf DEFAULT bind_host 192.168.142.112

3. Edit glance-registry.conf:

openstack-config --set /etc/glance/glance-registry.conf database connection mysql+pymysql://glance:glance@192.168.142.201/glance
openstack-config --set /etc/glance/glance-registry.conf paste_deploy flavor keystone
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_uri http://192.168.142.203:5000
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_url http://192.168.142.203:35357
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken memcached_servers controller1:11211,controller2:11211,controller3:11211
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_type password
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken project_name service
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken username glance
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken password glance
openstack-config --set /etc/glance/glance-registry.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/glance/glance-registry.conf oslo_messaging_rabbit rabbit_password openstack
openstack-config --set /etc/glance/glance-registry.conf oslo_messaging_rabbit rabbit_hosts controller1,controller2,controller3
openstack-config --set /etc/glance/glance-registry.conf oslo_messaging_rabbit rabbit_ha_queues true
openstack-config --set /etc/glance/glance-registry.conf oslo_messaging_rabbit heartbeat_timeout_threshold 60
openstack-config --set /etc/glance/glance-registry.conf DEFAULT registry_host 192.168.142.205

Node 1:

openstack-config --set /etc/glance/glance-registry.conf DEFAULT bind_host 192.168.142.110

Node 2:

openstack-config --set /etc/glance/glance-registry.conf DEFAULT bind_host 192.168.142.111

Node 3:

openstack-config --set /etc/glance/glance-registry.conf DEFAULT bind_host 192.168.142.112

4. Create the NFS filesystem resource:

pcs resource create glance-fs Filesystem device="192.168.72.100:/home/glance" directory="/var/lib/glance" fstype="nfs" options="v3" --clone
chown glance:nobody /var/lib/glance

5. Synchronize the database (the database is shared, so this only needs to run on one node): su -s /bin/sh -c "glance-manage db_sync" glance

6. Create the Pacemaker resources:

pcs resource create glance-registry systemd:openstack-glance-registry --clone interleave=true

pcs resource create glance-api systemd:openstack-glance-api --clone interleave=true

pcs constraint order start glance-fs-clone then glance-registry-clone

pcs constraint colocation add glance-registry-clone with glance-fs-clone

pcs constraint order start glance-registry-clone then glance-api-clone

pcs constraint colocation add glance-api-clone with glance-registry-clone

pcs constraint order start keystone-http-clone then glance-registry-clone
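The order and colocation constraints for Glance follow one pattern: each clone starts after the previous one and runs on the same node. Below is a sketch of a hypothetical helper, `chain_constraints`, that prints that pair of pcs commands for every adjacent pair in a list. It only echoes the commands, so nothing changes in the cluster until the output is piped to sh.

```shell
# Hypothetical helper: given clone names in start order, print an
# "order start A then B" and a "colocation add B with A" command for
# each adjacent pair (A, B).
chain_constraints() {
    local prev="" cur
    for cur in "$@"; do
        if [ -n "$prev" ]; then
            echo "pcs constraint order start ${prev} then ${cur}"
            echo "pcs constraint colocation add ${cur} with ${prev}"
        fi
        prev="$cur"
    done
}
```

For example, `chain_constraints glance-fs-clone glance-registry-clone glance-api-clone` prints the four glance-fs/glance-registry/glance-api constraints from this step. The keystone ordering constraint is not part of the chain and is still added separately.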

3. Install and configure Cinder:

4. Install and configure Neutron:

1. Install the packages:

yum install -y openstack-neutron openstack-neutron-openvswitch openstack-neutron-ml2 python-neutronclient which

2. Modify the neutron.conf file:

cp /etc/neutron/neutron.conf /etc/neutron/neutron.conf.bak

Node 1:

openstack-config --set /etc/neutron/neutron.conf DEFAULT bind_host 192.168.142.110

Node 2:

openstack-config --set /etc/neutron/neutron.conf DEFAULT bind_host 192.168.142.111

Node 3:

openstack-config --set /etc/neutron/neutron.conf DEFAULT bind_host 192.168.142.112

######default######

openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit

openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone

openstack-config --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2

openstack-config --set /etc/neutron/neutron.conf DEFAULT service_plugins router

openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_status_changes True

openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_data_changes True

#######keystone######

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_url http://192.168.142.203:35357

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://192.168.142.203:5000

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken memcached_servers controller1:11211,controller2:11211,controller3:11211

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_type password

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken project_domain_name default

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken user_domain_name default

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken project_name service

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken username neutron

openstack-config --set /etc/neutron/neutron.conf keystone_authtoken password neutron

######database#######

openstack-config --set /etc/neutron/neutron.conf database connection mysql+pymysql://neutron:neutron@192.168.142.201:3306/neutron

######rabbit######

openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_hosts controller1,controller2,controller3

openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_ha_queues true

openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit heartbeat_timeout_threshold 60

openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_userid openstack

openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_password openstack

######nova######

openstack-config --set /etc/neutron/neutron.conf nova auth_url http://192.168.142.203:35357/

openstack-config --set /etc/neutron/neutron.conf nova auth_type password

openstack-config --set /etc/neutron/neutron.conf nova project_domain_id default

openstack-config --set /etc/neutron/neutron.conf nova user_domain_id default

openstack-config --set /etc/neutron/neutron.conf nova region_name RegionOne

openstack-config --set /etc/neutron/neutron.conf nova project_name service

openstack-config --set /etc/neutron/neutron.conf nova username nova

openstack-config --set /etc/neutron/neutron.conf nova password nova
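After this many openstack-config calls it helps to read the values back and confirm what was written. Below is a minimal awk-based reader. It assumes simple `key = value` lines with no multi-line values, and `ini_get` is a hypothetical helper name, not part of openstack-utils.

```shell
# Hypothetical helper: print the value of KEY in [SECTION] of FILE.
# Returns non-zero if the key is not found in that section.
ini_get() {
    local file="$1" section="$2" key="$3"
    awk -v s="[$section]" -v k="$key" '
        # Section header: strip blanks and check whether it is the one we want.
        /^[ \t]*\[/ { gsub(/[ \t]/, ""); in_sec = ($0 == s); next }
        in_sec {
            eq = index($0, "=")
            if (eq == 0) next
            name = substr($0, 1, eq - 1)
            val = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", val)
            if (name == k) { print val; found = 1; exit }
        }
        END { if (found) exit 0; else exit 1 }
    ' "$file"
}
```

For example, `ini_get /etc/neutron/neutron.conf DEFAULT bind_host` should print this node's own IP.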

3. Modify the ml2_conf.ini file:

cp /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugins/ml2/ml2_conf.ini.bak

Node 1:

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip 192.168.142.110

Node 2:

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip 192.168.142.111

Node 3:

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip 192.168.142.112

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers flat,vlan,gre,vxlan

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types gre

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers openvswitch

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_gre tunnel_id_ranges 1:1000

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_security_group True

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_ipset True

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_flat flat_networks external

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs bridge_mappings external:br-ex

openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini agent tunnel_types gre

rm -rf /etc/neutron/plugin.ini && ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron
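Instead of hard-coding local_ip on each node, the address can be read from the interface. This is a sketch that assumes the tunnel address is the primary IPv4 address on eth0, as configured in the IP step. Pacemaker VIPs in the same subnet are added as secondary addresses and are listed after it, so taking the first `inet` entry should return the node's own address, but verify this on your nodes. `first_ipv4` is a hypothetical helper name.

```shell
# Hypothetical helper: read `ip -o -4 addr show` output on stdin and print
# the first IPv4 address without its prefix length.
first_ipv4() {
    # Field 3 is "inet", field 4 is "ADDRESS/PREFIX" in ip's one-line format.
    awk '$3 == "inet" { split($4, a, "/"); print a[1]; exit }'
}
```

Usage: `openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ovs local_ip "$(ip -o -4 addr show dev eth0 | first_ipv4)"`.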

4. L3 and DHCP high-availability settings:

openstack-config --set /etc/neutron/neutron.conf DEFAULT l3_ha True

openstack-config --set /etc/neutron/neutron.conf DEFAULT allow_automatic_l3agent_failover True

openstack-config --set /etc/neutron/neutron.conf DEFAULT max_l3_agents_per_router 3

openstack-config --set /etc/neutron/neutron.conf DEFAULT min_l3_agents_per_router 2

openstack-config --set /etc/neutron/neutron.conf DEFAULT dhcp_agents_per_network 3
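The two L3 numbers interact with the number of controllers that run an L3 agent (three here). This is a sketch under stated assumptions. It assumes min_l3_agents_per_router is enforced as the minimum number of live L3 agents needed to create an HA router, and that values below 2 are rejected. Check the option's documentation for your Neutron release, because its handling has changed between releases. `l3_ha_headroom` is a hypothetical helper name.

```shell
# Hypothetical check: AGENTS available, MAX and MIN L3 agents per router.
# Prints how many L3 agents can fail before new HA routers can no longer
# be created; returns non-zero for inconsistent settings.
l3_ha_headroom() {
    local agents="$1" max_l3="$2" min_l3="$3"
    if [ "$min_l3" -lt 2 ]; then
        echo "min_l3_agents_per_router must be at least 2 for HA" >&2
        return 1
    fi
    if [ "$min_l3" -gt "$max_l3" ]; then
        echo "min_l3_agents_per_router exceeds max_l3_agents_per_router" >&2
        return 1
    fi
    if [ "$agents" -lt "$min_l3" ]; then
        echo "only $agents L3 agents available, need $min_l3" >&2
        return 1
    fi
    echo $((agents - min_l3))
}
```

With the values above (3 agents, max 3, min 2), `l3_ha_headroom 3 3 2` prints 1: one controller can be lost and HA routers can still be created.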

5. Start Open vSwitch:

systemctl enable openvswitch

systemctl start openvswitch

ovs-vsctl del-br br-int

ovs-vsctl del-br br-ex

ovs-vsctl del-br br-tun

ovs-vsctl add-br br-int

ovs-vsctl add-br br-ex

ovs-vsctl add-port br-ex ens8

ethtool -K ens8 gro off

openstack-config --set /etc/neutron/plugins/openvswitch/ovs_neutron_plugin.ini agent l2_population False
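On a fresh node, the `ovs-vsctl del-br` commands above fail for bridges that do not exist yet. ovs-vsctl's `--if-exists` option makes `del-br` a no-op in that case, so the same sequence can be re-run safely. This is a sketch: `setup_ovs_bridges` is a hypothetical name, and the OVS_VSCTL/ETHTOOL overrides are there only to allow a dry run with `echo`.

```shell
# Hypothetical helper: recreate br-int/br-ex from a clean state and attach
# the external NIC (default ens8, as in the step above) to br-ex.
setup_ovs_bridges() {
    local ext_if="${1:-ens8}"
    local vsctl="${OVS_VSCTL:-ovs-vsctl}" ethtool="${ETHTOOL:-ethtool}"
    local br
    # --if-exists: deleting a missing bridge is not an error.
    for br in br-int br-ex br-tun; do
        "$vsctl" --if-exists del-br "$br" || return 1
    done
    "$vsctl" add-br br-int || return 1
    "$vsctl" add-br br-ex || return 1
    "$vsctl" add-port br-ex "$ext_if" || return 1
    # Disable generic receive offload on the external NIC.
    "$ethtool" -K "$ext_if" gro off
}
```

`OVS_VSCTL=echo ETHTOOL=echo setup_ovs_bridges ens8` prints the commands without running them. Call it with no overrides to apply them.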

6. Modify the metadata_agent.ini file:

cp -a /etc/neutron/metadata_agent.ini /etc/neutron/metadata_agent.ini_bak

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_strategy keystone

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_uri http://192.168.142.203:5000

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_url http://192.168.142.203:35357

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_host 192.168.142.203

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT auth_region RegionOne

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_tenant_name service

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_user neutron

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT admin_password neutron

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT project_domain_id default

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT user_domain_id default

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_ip 192.168.142.210

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_port 8775

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret neutron_shared_secret

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_workers 4

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_backlog 2048

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT verbose True

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT debug True
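metadata_proxy_shared_secret must have the same value on every node running the metadata agent. It must also match `metadata_proxy_shared_secret` in the `[neutron]` section of nova.conf on the Nova API nodes. This is a sketch for generating a random value instead of the fixed `neutron_shared_secret`. `gen_shared_secret` is a hypothetical name; generate the value once and reuse it everywhere.

```shell
# Hypothetical helper: 16 random bytes rendered as 32 lowercase hex characters.
gen_shared_secret() {
    od -An -tx1 -N16 /dev/urandom | tr -d ' \n'
}
```

For example, run `SECRET=$(gen_shared_secret)` once, then pass `"$SECRET"` to the metadata_proxy_shared_secret command on all three controllers and to the matching nova.conf setting.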

7. Modify the dhcp_agent.ini file:

cp -a /etc/neutron/dhcp_agent.ini /etc/neutron/dhcp_agent.ini_bak

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.OVSInterfaceDriver

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_delete_namespaces False

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_driver neutron.agent.linux.dhcp.Dnsmasq

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dnsmasq_config_file /etc/neutron/dnsmasq-neutron.conf

echo "dhcp-option-force=26,1454" >/etc/neutron/dnsmasq-neutron.conf

pkill dnsmasq

8. Modify the l3_agent.ini file:

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.OVSInterfaceDriver

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT handle_internal_only_routers True

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT send_arp_for_ha 3

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT router_delete_namespaces False

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT external_network_bridge br-ex

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT verbose True

openstack-config --set /etc/neutron/l3_agent.ini DEFAULT debug True

rm -rf /usr/lib/systemd/system/neutron-openvswitch-agent.service.orig && cp /usr/lib/systemd/system/neutron-openvswitch-agent.service /usr/lib/systemd/system/neutron-openvswitch-agent.service.orig

sed -i 's,plugins/openvswitch/ovs_neutron_plugin.ini,plugin.ini,g' /usr/lib/systemd/system/neutron-openvswitch-agent.service

systemctl daemon-reload
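The sed only changes the unit if it actually contains the path `plugins/openvswitch/ovs_neutron_plugin.ini`. If the packaged unit references a different file, the sed is a silent no-op. This is a sketch for checking the result explicitly. `unit_uses_plugin_ini` is a hypothetical name, and it assumes the agent's config files are passed as `--config-file` arguments on the ExecStart line.

```shell
# Hypothetical check: succeed only if an ExecStart line in the unit file
# passes /etc/neutron/plugin.ini as a --config-file argument.
unit_uses_plugin_ini() {
    grep -E '^ExecStart=' "$1" | grep -qF -- '--config-file /etc/neutron/plugin.ini'
}
```

Usage: `unit_uses_plugin_ini /usr/lib/systemd/system/neutron-openvswitch-agent.service || echo "unit still points at the old plugin file"`.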

9. Modify /etc/sysctl.conf:

sed -e '/^net.bridge/d' -e '/^net.ipv4.conf/d' -i /etc/sysctl.conf

echo "net.bridge.bridge-nf-call-ip6tables=1" >>/etc/sysctl.conf

echo "net.bridge.bridge-nf-call-iptables=1" >>/etc/sysctl.conf

echo "net.ipv4.conf.all.rp_filter=0" >>/etc/sysctl.conf

echo "net.ipv4.conf.default.rp_filter=0" >>/etc/sysctl.conf

sysctl -p >>/dev/null
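The sed + echo sequence above deletes old entries and appends new ones so that sysctl.conf has no duplicate keys. Below is a sketch of the same idea for a single key, usable for any sysctl-style file. `set_sysctl_key` is a hypothetical name, and it assumes plain `key=value` lines.

```shell
# Hypothetical helper: set KEY=VALUE in FILE, dropping any earlier line for
# the same key (spaces around "=" are ignored when matching).
set_sysctl_key() {
    local file="$1" key="$2" value="$3" tmp
    tmp=$(mktemp) || return 1
    # Keep every line whose key (text before "=", blanks removed) differs.
    awk -F= -v k="$key" '{ name = $1; gsub(/[ \t]/, "", name); if (name != k) print }' "$file" > "$tmp" || { rm -f "$tmp"; return 1; }
    echo "${key}=${value}" >> "$tmp"
    # Rewrite in place so the file keeps its owner and permissions.
    cat "$tmp" > "$file"
    rm -f "$tmp"
}
```

For example, `set_sysctl_key /etc/sysctl.conf net.ipv4.conf.all.rp_filter 0` followed by `sysctl -p` can be run any number of times without duplicating the entry.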

10. Create the Pacemaker resources and the initial networks:

# source adminrc

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

systemctl start neutron-server

systemctl stop neutron-server

pcs resource delete neutron-server-api --force

pcs resource delete neutron-scale --force

pcs resource delete neutron-ovs-cleanup --force

pcs resource delete neutron-netns-cleanup --force

pcs resource delete neutron-openvswitch-agent --force

pcs resource delete neutron-dhcp-agent --force

pcs resource delete neutron-l3-agent --force

pcs resource delete neutron-metadata-agent --force

pcs resource create neutron-server-api systemd:neutron-server op start timeout=180 --clone interleave=true

pcs resource create neutron-scale ocf:neutron:NeutronScale --clone globally-unique=true clone-max=3 interleave=true

pcs resource create neutron-ovs-cleanup ocf:neutron:OVSCleanup --clone interleave=true

pcs resource create neutron-netns-cleanup ocf:neutron:NetnsCleanup --clone interleave=true

pcs resource create neutron-openvswitch-agent systemd:neutron-openvswitch-agent --clone interleave=true

pcs resource create neutron-dhcp-agent systemd:neutron-dhcp-agent --clone interleave=true

pcs resource create neutron-l3-agent systemd:neutron-l3-agent --clone interleave=true

pcs resource create neutron-metadata-agent systemd:neutron-metadata-agent --clone interleave=true

pcs constraint order start keystone-clone then neutron-server-api-clone

pcs constraint order start neutron-scale-clone then neutron-openvswitch-agent-clone

pcs constraint colocation add neutron-openvswitch-agent-clone with neutron-scale-clone

pcs constraint order start neutron-scale-clone then neutron-ovs-cleanup-clone

pcs constraint colocation add neutron-ovs-cleanup-clone with neutron-scale-clone

pcs constraint order start neutron-ovs-cleanup-clone then neutron-netns-cleanup-clone

pcs constraint colocation add neutron-netns-cleanup-clone with neutron-ovs-cleanup-clone

pcs constraint order start neutron-netns-cleanup-clone then neutron-openvswitch-agent-clone

pcs constraint colocation add neutron-openvswitch-agent-clone with neutron-netns-cleanup-clone

pcs constraint order start neutron-openvswitch-agent-clone then neutron-dhcp-agent-clone

pcs constraint colocation add neutron-dhcp-agent-clone with neutron-openvswitch-agent-clone

pcs constraint order start neutron-dhcp-agent-clone then neutron-l3-agent-clone

pcs constraint colocation add neutron-l3-agent-clone with neutron-dhcp-agent-clone

pcs constraint order start neutron-l3-agent-clone then neutron-metadata-agent-clone

pcs constraint colocation add neutron-metadata-agent-clone with neutron-l3-agent-clone

pcs constraint order start neutron-server-api-clone then neutron-scale-clone

pcs constraint order start keystone-clone then neutron-scale-clone

source /root/adminrc

loop=0; while ! neutron net-list > /dev/null 2>&1 && [ "$loop" -lt 60 ]; do
    echo "waiting for neutron to become stable"
    loop=$((loop + 1))
    sleep 5
done

if neutron router-show admin-router > /dev/null 2>&1
then
    neutron router-gateway-clear admin-router ext-net
    neutron router-interface-delete admin-router admin-subnet
    neutron router-delete admin-router
    neutron subnet-delete admin-subnet
    neutron subnet-delete ext-subnet
    neutron net-delete admin-net
    neutron net-delete ext-net
fi

neutron net-create ext-net --router:external --provider:physical_network external --provider:network_type flat

neutron subnet-create ext-net 192.168.115.0/24 --name ext-subnet --allocation-pool start=192.168.115.200,end=192.168.115.250 --disable-dhcp --gateway 192.168.115.254

neutron net-create admin-net

neutron subnet-create admin-net 192.128.1.0/24 --name admin-subnet --gateway 192.128.1.1

neutron router-create admin-router

neutron router-interface-add admin-router admin-subnet

neutron router-gateway-set admin-router ext-net
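The "wait for neutron" loop retries a command a fixed number of times with a pause between attempts. This is a sketch of the same pattern as a reusable function. `wait_for` is a hypothetical name, and it is written in POSIX sh style so it does not depend on bash-only syntax.

```shell
# Hypothetical helper: run COMMAND up to ATTEMPTS times, sleeping INTERVAL
# seconds between tries. Returns 0 on the first success, 1 if all tries fail.
wait_for() {
    local attempts="$1" interval="$2" i=1
    shift 2
    while [ "$i" -le "$attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        echo "waiting for: $* (attempt $i/$attempts)" >&2
        # No pause after the final failed attempt.
        if [ "$i" -lt "$attempts" ]; then
            sleep "$interval"
        fi
        i=$((i + 1))
    done
    return 1
}
```

`wait_for 60 5 neutron net-list` reproduces the loop above: at most 60 attempts, 5 seconds apart.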

浙公网安备 33010602011771号
