HMM XSS检测

HMM XSS检测

转自:http://www.freebuf.com/articles/web/133909.html前言

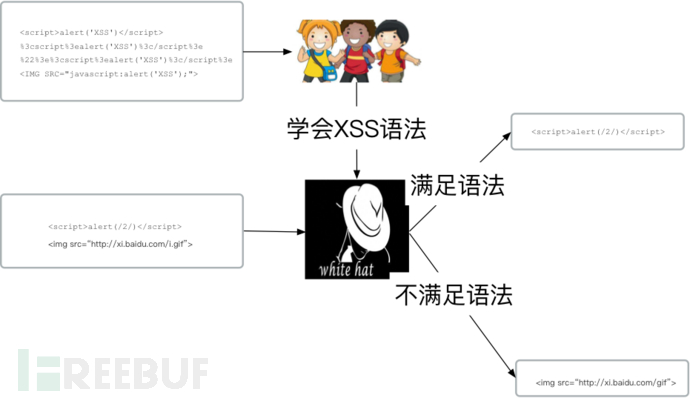

上篇我们介绍了HMM的基本原理以及常见的基于参数的异常检测实现,这次我们换个思路,把机器当一个刚入行的白帽子,我们训练他学会XSS的攻击语法,然后再让机器从访问日志中寻找符合攻击语法的疑似攻击日志。

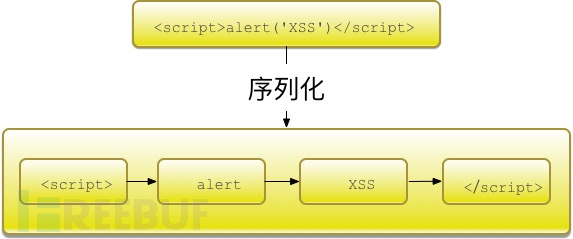

通过词法分割,可以把攻击载荷序列化成观察序列,举例如下:

词集/词袋模型

词集和词袋模型是机器学习中非常常用的一个数据处理模型,它们用于特征化字符串型数据。一般思路是将样本分词后,统计每个词的频率,即词频,根据需要选择全部或者部分词作为哈希表键值,并依次对该哈希表编号,这样就可以使用该哈希表对字符串进行编码。

- 词集模型:单词构成的集合,集合自然每个元素都只有一个,也即词集中的每个单词都只有一个

- 词袋模型:如果一个单词在文档中出现不止一次,并统计其出现的次数

本章使用词集模型即可。

假设存在如下数据集合:

dataset = [['my', 'dog', 'has', 'flea', 'problems', 'help', 'please'], ['maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid'], ['my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him'], ['stop', 'posting', 'stupid', 'worthless', 'garbage'], ['mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him'], ['quit', 'buying', 'worthless', 'dog', 'food', 'stupid']]首先生成词汇表:

vocabSet = set()

for doc in dataset:

vocabSet |= set(doc)

vocabList = list(vocabSet)根据词汇表生成词集:

# 词集模型

SOW = []

for doc in dataset:

vec = [0]*len(vocabList)

for i, word in enumerate(vocabList):

if word in doc:

vec[i] = 1

SOW.append(doc)

简化后的词集模型的核心代码如下:

fredist = nltk.FreqDist(tokens_list) # 单文件词频

keys=fredist.keys()

keys=keys[:max] #只提取前N个频发使用的单词 其余泛化成0

for localkey in keys: # 获取统计后的不重复词集

if localkey in wordbag.keys(): # 判断该词是否已在词集中

continue

else:

wordbag[localkey] = index_wordbag

index_wordbag += 1

数据处理与特征提取

常见的XSS攻击载荷列举如下:

<script>alert('XSS')</script>

%3cscript%3ealert('XSS')%3c/script%3e

%22%3e%3cscript%3ealert('XSS')%3c/script%3e

<IMG SRC="javascript:alert('XSS');">

<IMG SRC=javascript:alert("XSS")>

<IMG SRC=javascript:alert('XSS')>

<img src=xss onerror=alert(1)>

<IMG """><SCRIPT>alert("XSS")</SCRIPT>">

<IMG SRC=javascript:alert(String.fromCharCode(88,83,83))>

<IMG SRC="jav ascript:alert('XSS');">

<IMG SRC="jav ascript:alert('XSS');">

<BODY BACKGROUND="javascript:alert('XSS')">

<BODY ONLOAD=alert('XSS')>

需要支持的词法切分原则为:

单双引号包含的内容 ‘XSS’

http/https链接 http://xi.baidu.com/xss.js

<>标签 <script>

<>标签开头 <BODY

属性标签 ONLOAD=

<>标签结尾 >

函数体 “javascript:alert(‘XSS’);”

字符数字标量 代码实现举例如下:

tokens_pattern = r'''(?x)

"[^"]+"

|http://\S+

|</\w+>

|<\w+>

|<\w+

|\w+=

|>

|\w+\([^<]+\) #函数 比如alert(String.fromCharCode(88,83,83))

|\w+

'''

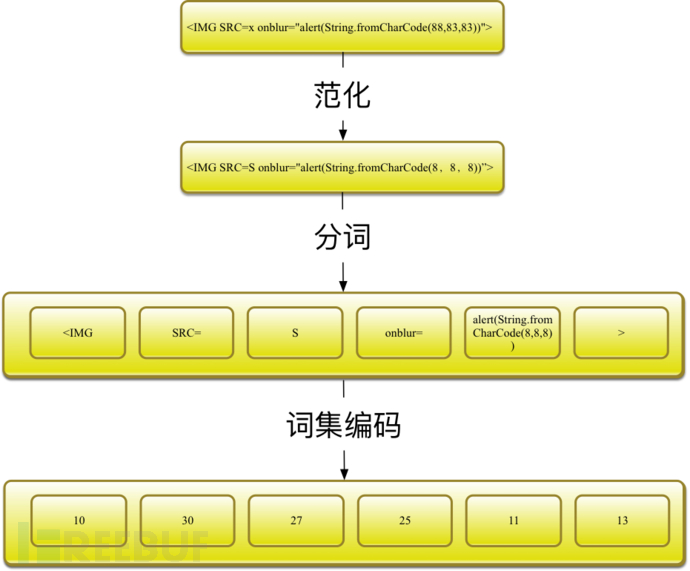

words=nltk.regexp_tokenize(line, tokens_pattern)另外,为了减少向量空间,需要把数字和字符以及超链接范化,具体原则为:

#数字常量替换成8

line, number = re.subn(r'\d+', "8", line)

#ulr日换成http://u

line, number = re.subn(r'(http|https)://[a-zA-Z0-9\.@&/#!#\?]+', "http://u", line)

#干掉注释

line, number = re.subn(r'\/\*.?\*\/', "", line)

范化后分词效果示例为:

#原始参数值:"><img src=x onerror=prompt(0)>)

#分词后:

['>', '<img', 'src=', 'x', 'onerror=', 'prompt(8)', '>']

#原始参数值:<iframe src="x-javascript:alert(document.domain);"></iframe>)

#分词后:

['<iframe', 'src=', '"x-javascript:alert(document.domain);"', '>', '</iframe>']

#原始参数值:<marquee><h1>XSS by xss</h1></marquee> )

#分词后:

['<marquee>', '<h8>', 'XSS', 'by', 'xss', '</h8>', '</marquee>']

#原始参数值:<script>-=alert;-(1)</script> "onmouseover="confirm(document.domain);"" </script>)

#分词后:

['<script>', 'alert', '8', '</script>', '"onmouseover="', 'confirm(document.domain)', '</script>']

#原始参数值:<script>alert(2)</script> "><img src=x onerror=prompt(document.domain)>)

#分词后:

['<script>', 'alert(8)', '</script>', '>', '<img', 'src=', 'x', 'onerror=', 'prompt(document.domain)', '>']结合词集模型,完整的流程举例如下:

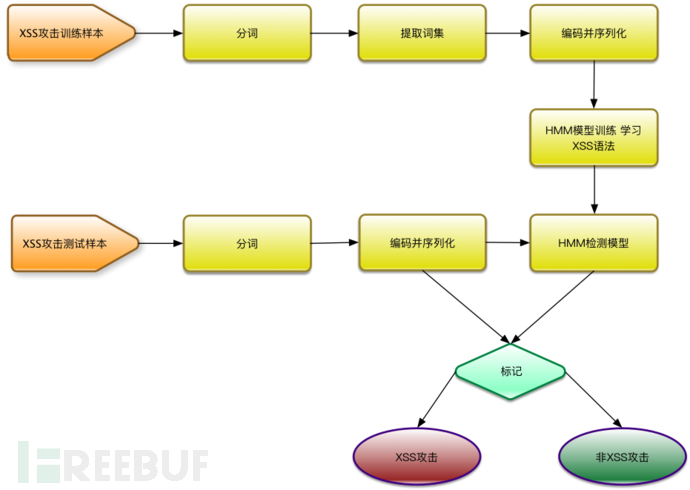

训练模型

将范化后的向量X以及对应的长度矩阵X_lens输入即可,需要X_lens的原因是参数样本的长度可能不一致,所以需要单独输入。

remodel = hmm.GaussianHMM(n_components=3, covariance_type="full", n_iter=100)

remodel.fit(X,X_lens)验证模型

整个系统运行过程如下:

验证阶段利用训练出来的HMM模型,输入观察序列获取概率,从而判断观察序列的合法性,训练样本是1000条典型的XSS攻击日志,通过分词、计算词集,提炼出200个特征,全部样本就用这200个特征进行编码并序列化,使用20000条正常日志和20000条XSS攻击识别(类似JSFUCK这类编码的暂时不支持),准确率达到90%以上,其中验证环节的核心代码如下:

with open(filename) as f:

for line in f:

line = line.strip('\n')

line = urllib.unquote(line)

h = HTMLParser.HTMLParser()

line = h.unescape(line)

if len(line) >= MIN_LEN:

line, number = re.subn(r'\d+', "8", line)

line, number = re.subn(r'(http|https)://[a-zA-Z0-9\.@&/#!#\?:]+', "http://u", line)

line, number = re.subn(r'\/\*.?\*\/', "", line)

words = do_str(line)

vers = []

for word in words:

if word in wordbag.keys():

vers.append([wordbag[word]])

else:

vers.append([-1])

np_vers = np.array(vers)

pro = remodel.score(np_vers)

if pro >= T:

print "SCORE:(%d) XSS_URL:(%s) " % (pro,line)较完整的代码如下:

# -*- coding:utf-8 -*- import sys import urllib import urlparse import re from hmmlearn import hmm import numpy as np from sklearn.externals import joblib import HTMLParser import nltk #处理参数值的最小长度 MIN_LEN=10 #状态个数 N=5 #最大似然概率阈值 T=-200 #字母 #数字 1 #<>,:"' #其他字符2 SEN=['<','>',',',':','\'','/',';','"','{','}','(',')'] index_wordbag=1 #词袋索引 wordbag={} #词袋 #</script><script>alert(String.fromCharCode(88,83,83))</script> #<IMG SRC=x onchange="alert(String.fromCharCode(88,83,83))"> #<;IFRAME SRC=http://ha.ckers.org/scriptlet.html <; #';alert(String.fromCharCode(88,83,83))//\';alert(String.fromCharCode(88,83,83))//";alert(String.fromCharCode(88,83,83)) # //\";alert(String.fromCharCode(88,83,83))//--></SCRIPT>">'><SCRIPT>alert(String.fromCharCode(88,83,83))</SCRIPT> tokens_pattern = r'''(?x) "[^"]+" |http://\S+ |</\w+> |<\w+> |<\w+ |\w+= |> |\w+\([^<]+\) #函数 比如alert(String.fromCharCode(88,83,83)) |\w+ ''' def ischeck(str): if re.match(r'^(http)',str): return False for i, c in enumerate(str): if ord(c) > 127 or ord(c) < 31: return False if c in SEN: return True #排除中文干扰 只处理127以内的字符 return False def do_str(line): words=nltk.regexp_tokenize(line, tokens_pattern) #print words return words def load_wordbag(filename,max=100): X = [[0]] X_lens = [1] tokens_list=[] global wordbag global index_wordbag with open(filename) as f: for line in f: line=line.strip('\n') #url解码 line=urllib.unquote(line) #处理html转义字符 h = HTMLParser.HTMLParser() line=h.unescape(line) if len(line) >= MIN_LEN: #print "Learning xss query param:(%s)" % line #数字常量替换成8 line, number = re.subn(r'\d+', "8", line) #ulr日换成http://u line, number = re.subn(r'(http|https)://[a-zA-Z0-9\.@&/#!#\?:=]+', "http://u", line) #干掉注释 line, number = re.subn(r'\/\*.?\*\/', "", line) #print "Learning xss query etl param:(%s) " % line tokens_list+=do_str(line) #X=np.concatenate( [X,vers]) #X_lens.append(len(vers)) fredist = nltk.FreqDist(tokens_list) # 单文件词频 keys=fredist.keys() keys=keys[:max] for localkey in keys: # 获取统计后的不重复词集 if localkey in wordbag.keys(): # 判断该词是否已在词袋中 continue else: wordbag[localkey] = index_wordbag index_wordbag += 1 print "GET wordbag size(%d)" % index_wordbag def main(filename): X = [[-1]] X_lens = [1] X = [] X_lens = [] global wordbag global index_wordbag with open(filename) as f: for line in f: line=line.strip('\n') #url解码 line=urllib.unquote(line) #处理html转义字符 h = HTMLParser.HTMLParser() line=h.unescape(line) vers=[] if len(line) >= MIN_LEN: #print "Learning xss query param:(%s)" % line #数字常量替换成8 line, number = re.subn(r'\d+', "8", line) #ulr日换成http://u line, number = re.subn(r'(http|https)://[a-zA-Z0-9\.@&/#!#\?:]+', "http://u", line) #干掉注释 line, number = re.subn(r'\/\*.?\*\/', "", line) #print "Learning xss query etl param:(%s) " % line words=do_str(line) for word in words: if word in wordbag.keys(): vers.append([wordbag[word]]) else: vers.append([-1]) print word, vers np_vers = np.array(vers) print "np_vers:", np_vers, "X:", X #print np_vers X=np.concatenate([X,np_vers]) X_lens.append(len(np_vers)) #print X_lens remodel = hmm.GaussianHMM(n_components=N, covariance_type="full", n_iter=100) print X remodel.fit(X,X_lens) joblib.dump(remodel, "xss-train.pkl") return remodel def test(remodel,filename): with open(filename) as f: for line in f: line = line.strip('\n') # url解码 line = urllib.unquote(line) # 处理html转义字符 h = HTMLParser.HTMLParser() line = h.unescape(line) if len(line) >= MIN_LEN: #print "CHK XSS_URL:(%s) " % (line) # 数字常量替换成8 line, number = re.subn(r'\d+', "8", line) # ulr日换成http://u line, number = re.subn(r'(http|https)://[a-zA-Z0-9\.@&/#!#\?:]+', "http://u", line) # 干掉注释 line, number = re.subn(r'\/\*.?\*\/', "", line) # print "Learning xss query etl param:(%s) " % line words = do_str(line) #print "GET Tokens (%s)" % words vers = [] for word in words: # print "ADD %s" % word if word in wordbag.keys(): vers.append([wordbag[word]]) else: vers.append([-1]) np_vers = np.array(vers) #print np_vers #print "CHK SCORE:(%d) QUREY_PARAM:(%s) XSS_URL:(%s) " % (pro, v, line) pro = remodel.score(np_vers) if pro >= T: print "SCORE:(%d) XSS_URL:(%s) " % (pro,line) #print line def test_normal(remodel,filename): with open(filename) as f: for line in f: # 切割参数 result = urlparse.urlparse(line) # url解码 query = urllib.unquote(result.query) params = urlparse.parse_qsl(query, True) for k, v in params: v=v.strip('\n') #print "CHECK v:%s LINE:%s " % (v, line) if len(v) >= MIN_LEN: # print "CHK XSS_URL:(%s) " % (line) # 数字常量替换成8 v, number = re.subn(r'\d+', "8", v) # ulr日换成http://u v, number = re.subn(r'(http|https)://[a-zA-Z0-9\.@&/#!#\?:]+', "http://u", v) # 干掉注释 v, number = re.subn(r'\/\*.?\*\/', "", v) # print "Learning xss query etl param:(%s) " % line words = do_str(v) # print "GET Tokens (%s)" % words vers = [] for word in words: # print "ADD %s" % word if word in wordbag.keys(): vers.append([wordbag[word]]) else: vers.append([-1]) np_vers = np.array(vers) # print np_vers # print "CHK SCORE:(%d) QUREY_PARAM:(%s) XSS_URL:(%s) " % (pro, v, line) pro = remodel.score(np_vers) print "CHK SCORE:(%d) QUREY_PARAM:(%s)" % (pro, v) #if pro >= T: #print "SCORE:(%d) XSS_URL:(%s) " % (pro, v) #print line if __name__ == '__main__': #test(remodel,sys.argv[2]) load_wordbag(sys.argv[1],2000) #print wordbag.keys() remodel = main(sys.argv[1]) #test_normal(remodel, sys.argv[2]) test(remodel, sys.argv[2])

浙公网安备 33010602011771号

浙公网安备 33010602011771号