Linear Regression Algorithm

1.

Definition of regression:

Difference between regression and classification:

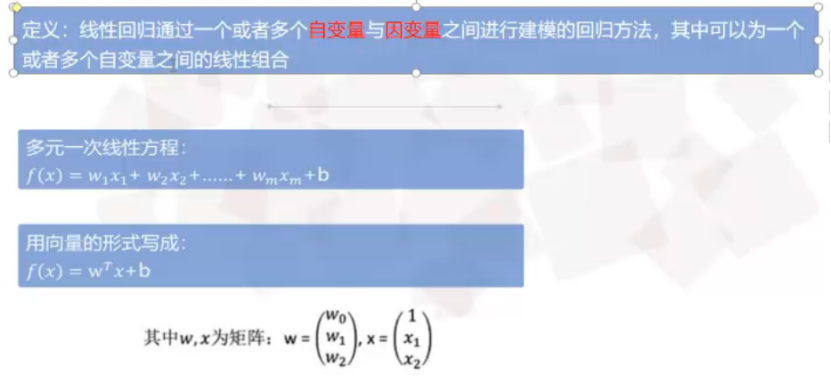

Linear regression:

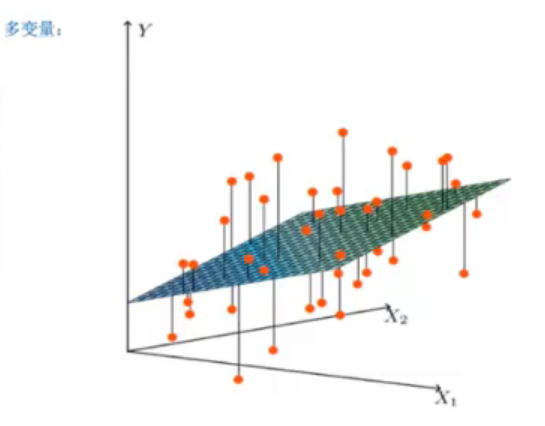
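As a minimal sketch of what fitting a single-variable linear model looks like in practice, the snippet below assumes the model has the form y = w·x + b and fits it with `numpy.polyfit`. The toy data and the names `xs`, `ys`, `w`, `b` are illustrative assumptions, not taken from the figure above.

```python
import numpy as np

# Hypothetical toy data lying exactly on the line y = 12x + 4
xs = np.array([0.1 * i for i in range(10)])
ys = 12 * xs + 4

# Fit a degree-1 polynomial; polyfit returns coefficients from the
# highest power down, so the result is [slope, intercept]
w, b = np.polyfit(xs, ys, 1)

# Use the fitted line to predict a new point
x_new = 1.5
y_new = w * x_new + b
print(f"w={w:.4f}, b={b:.4f}, prediction at x={x_new}: {y_new:.4f}")
```

Because the data here is noise-free, the fitted slope and intercept should match the generating line up to floating-point error; with real data they would only approximate it.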

Multivariate linear regression:

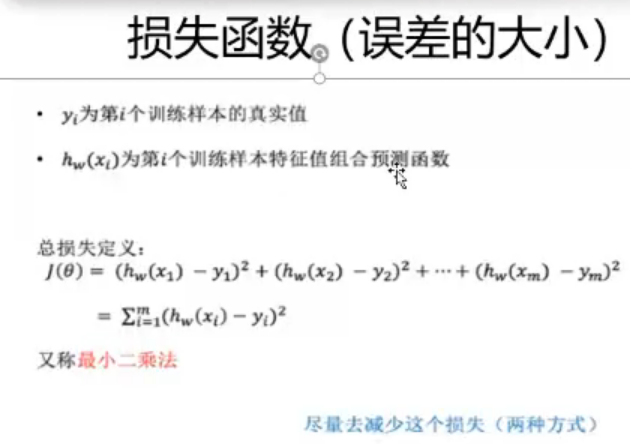
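With several input variables the same idea can be written in matrix form. The following is a sketch under stated assumptions: the model is taken to be y = X·w + b, the data is synthetic, and the names `true_w`, `true_b`, and `X_aug` are hypothetical. It solves the least-squares problem with `numpy.linalg.lstsq`.

```python
import numpy as np

# Synthetic data (an assumption for illustration): 50 samples, 3 features
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
true_w = np.array([2.0, -1.0, 0.5])
true_b = 3.0
y = X @ true_w + true_b

# Append a column of ones so the intercept is learned as an extra weight
X_aug = np.hstack([X, np.ones((X.shape[0], 1))])

# Solve min ||X_aug @ theta - y||^2 in the least-squares sense
theta, residuals, rank, singular_values = np.linalg.lstsq(X_aug, y, rcond=None)
w_hat, b_hat = theta[:-1], theta[-1]

print("estimated weights:", w_hat)
print("estimated intercept:", b_hat)
```

Folding the intercept into the weight vector through a column of ones is a common convenience; it lets one solver call return both the weights and the bias.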

Loss function and least squares

Linear regression models are often fitted using the least-squares approximation.

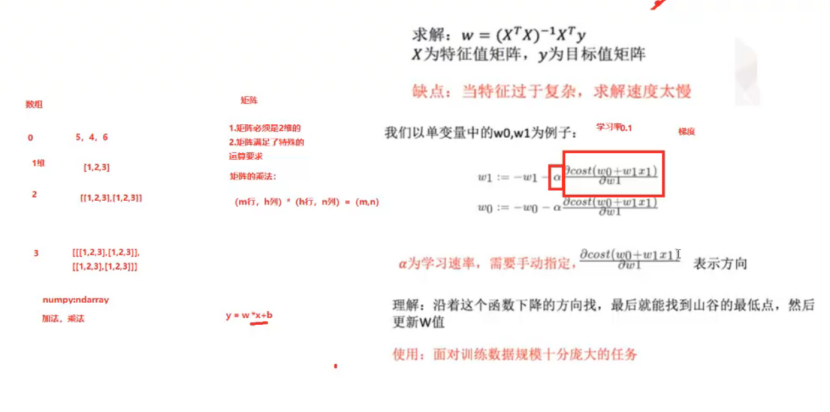
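For the single-variable case, the least-squares fit can be worked out explicitly. The derivation below is a sketch that assumes the model y = w x + b and n training pairs (x_i, y_i); the symbols J, \bar{x} and \bar{y} (the sample means) are notation introduced here and may differ from the figure above.

```latex
\begin{aligned}
J(w, b) &= \sum_{i=1}^{n} \bigl(w x_i + b - y_i\bigr)^2 \\
\frac{\partial J}{\partial w} &= 2 \sum_{i=1}^{n} \bigl(w x_i + b - y_i\bigr)\, x_i = 0 \\
\frac{\partial J}{\partial b} &= 2 \sum_{i=1}^{n} \bigl(w x_i + b - y_i\bigr) = 0 \\
w &= \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad b = \bar{y} - w\,\bar{x}
\end{aligned}
```

Setting both partial derivatives to zero gives two linear equations in w and b; solving them yields the closed-form estimates in the last line, which are defined whenever the x_i are not all equal.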

Gradient descent:

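```python
import random
import matplotlib.pyplot as plt

# Training data sampled from the line y = 12x + 4
xs = [0.1 * x for x in range(0, 10)]
ys = [12 * i + 4 for i in xs]
print(xs)
print(ys)

# Random initial weight and bias
w = random.random()
b = random.random()

a1 = []  # epoch indices
b1 = []  # loss recorded at the end of each epoch

for i in range(100):
    for x, y in zip(xs, ys):  # iterate over the samples
        o = w * x + b         # model output
        e = o - y             # error
        loss = e ** 2         # squared-error loss
        dw = 2 * e * x        # derivative of the loss with respect to w
        db = 2 * e * 1        # derivative of the loss with respect to b
        w = w - 0.1 * dw      # update step with learning rate 0.1
        b = b - 0.1 * db
        print('loss={0}, w={1}, b={2}'.format(loss, w, b))
    a1.append(i)
    b1.append(loss)
    plt.plot(a1, b1)
    plt.pause(0.1)
plt.show()
```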
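The listing above updates `w` and `b` after every single sample. For comparison, here is a sketch of a batch variant that averages the gradient over all samples before each update. The fixed seed, the name `lr`, and the epoch count of 2000 are assumptions chosen for illustration, not part of the original code.

```python
import random

random.seed(0)  # assumption: fixed seed so repeated runs give the same result

# Same data as above: points on the line y = 12x + 4
xs = [0.1 * x for x in range(10)]
ys = [12 * x + 4 for x in xs]

w = random.random()
b = random.random()
lr = 0.1          # learning rate (hypothetical name)
n = len(xs)

for epoch in range(2000):
    # Errors for every sample under the current parameters
    errors = [w * x + b - y for x, y in zip(xs, ys)]
    # Gradient of the mean squared error, averaged over the batch
    dw = 2 * sum(e * x for e, x in zip(errors, xs)) / n
    db = 2 * sum(errors) / n
    w -= lr * dw
    b -= lr * db

mse = sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n
print('mse={0}, w={1}, b={2}'.format(mse, w, b))
```

Averaging over the batch gives a smoother loss curve than per-sample updates, at the cost of touching every sample before each step.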

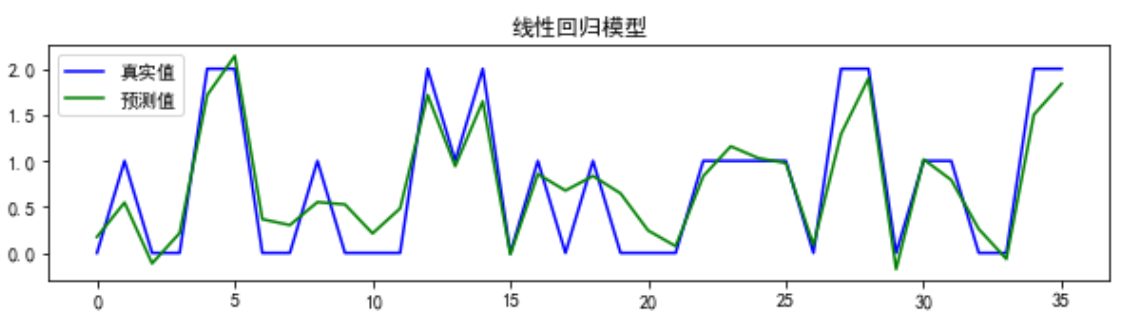

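Result plot:

2. Applications of linear regression:

The effect of smoking on mortality;

Oil price trends;

Stock price trends;

Household electricity consumption forecasting, and so on.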

3.

```python
from sklearn.datasets import load_wine
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LinearRegression
import matplotlib.pyplot as plt

data = load_wine()  # load the wine dataset
x = data['data']
y = data['target']

# Hold out 20% of the samples for testing
x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.2, random_state=5)

LR_model = LinearRegression().fit(x_train, y_train)  # build the linear regression model
pre1 = LR_model.predict(x_test)

# Plot the true and predicted values for the test set
p = plt.figure(figsize=(10, 5))
a = p.add_subplot(2, 1, 1)
plt.plot(range(y_test.size), y_test, color="blue")
plt.plot(range(y_test.size), pre1, color="green")
plt.legend(["True values", "Predicted values"])
plt.title("Linear regression model")
plt.show()
```
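The plot gives a visual comparison only. As a hedged sketch of how the same fit could be scored numerically, the snippet below repeats the split and the model and then computes the mean squared error and R² with `sklearn.metrics`. The variable names `model`, `pred`, `mse`, and `r2` are illustrative. Note that the `load_wine` target is a class label (0, 1, or 2), so the regression output here is a continuous approximation of a discrete label.

```python
from sklearn.datasets import load_wine
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

data = load_wine()
x, y = data['data'], data['target']

# Same split settings as the listing above
x_train, x_test, y_train, y_test = train_test_split(
    x, y, test_size=0.2, random_state=5
)

model = LinearRegression().fit(x_train, y_train)
pred = model.predict(x_test)

# Mean squared error: average squared gap between prediction and truth
mse = mean_squared_error(y_test, pred)
# R^2: share of the target variance explained by the model (at most 1)
r2 = r2_score(y_test, pred)

print("test MSE:", mse)
print("test R^2:", r2)
```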
