Web Crawler Assignment 4

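Assignment ①:

(1) DangdangMySQL experiment

- Requirements: become proficient in serializing and exporting data with Item and Pipeline in Scrapy; crawl book data from the Dangdang website using the Scrapy + XPath + MySQL storage approach.

- Keyword: chosen freely by the student.

- Output: the MySQL output is as follows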

items.py

import scrapy


class DangdangItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    title = scrapy.Field()
    author = scrapy.Field()
    date = scrapy.Field()
    publisher = scrapy.Field()
    detail = scrapy.Field()
    price = scrapy.Field()

pipelines.py

import pymysql


class DangdangPipeline:
    # Connect to the database
    def open_spider(self, spider):
        print("opened")
        # Initialize the counter first so close_spider can report it even if connecting fails
        self.count = 0
        try:
            self.con = pymysql.connect(host="127.0.0.1", port=3306, user="root", passwd="123456", db="mydb", charset="utf8")
            self.cursor = self.con.cursor(pymysql.cursors.DictCursor)
            # Clear rows left over from a previous run
            self.cursor.execute("delete from books")
            self.opened = True
        except Exception as err:
            print(err)
            self.opened = False

    # Close the database
    def close_spider(self, spider):
        if self.opened:
            self.con.commit()
            self.con.close()
            self.opened = False
        print("closed")
        print("Total books crawled:", self.count)

    def process_item(self, item, spider):
        try:
            print(item["title"])
            print(item["author"])
            print(item["publisher"])
            print(item["date"])
            print(item["price"])
            print(item["detail"])
            print()
            # Insert the record into the database table
            if self.opened:
                self.cursor.execute(
                    "insert into books (bTitle,bAuthor,bPublisher,bDate,bPrice,bDetail) values (%s,%s,%s,%s,%s,%s)",
                    (item["title"], item["author"], item["publisher"], item["date"], item["price"], item["detail"]))
                self.count += 1
        except Exception as err:
            print(err)
        return item
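The pipeline expects a `books` table to exist in `mydb` already, but the post does not show its definition. The following is a minimal sketch of what such a table and the parameterized insert might look like. It uses Python's built-in `sqlite3` with an in-memory database as a stand-in for MySQL so that it runs without a server. The column names come from the INSERT statement in the pipeline; the column types, the helper names `create_books_table` and `insert_book`, and the sample row are assumptions. Note that `sqlite3` uses `?` placeholders where pymysql uses `%s`.

```python
import sqlite3

# Column names are taken from the pipeline's INSERT statement; the types are assumed.
CREATE_BOOKS = """
create table if not exists books (
    bTitle     varchar(512),
    bAuthor    varchar(256),
    bPublisher varchar(256),
    bDate      varchar(32),
    bPrice     varchar(16),
    bDetail    text
)
"""


def create_books_table(con):
    """Create the (assumed) books table if it does not exist yet."""
    con.execute(CREATE_BOOKS)


def insert_book(con, item):
    """Parameterized insert: values are passed separately from the SQL text."""
    con.execute(
        "insert into books (bTitle,bAuthor,bPublisher,bDate,bPrice,bDetail) values (?,?,?,?,?,?)",
        (item["title"], item["author"], item["publisher"],
         item["date"], item["price"], item["detail"]),
    )


# Hypothetical sample row, shaped like the items the spider yields.
sample = {
    "title": "Example Book",
    "author": "Example Author",
    "publisher": "Example Press",
    "date": "2016-01-01",
    "price": "¥50.00",
    "detail": "A placeholder description.",
}

con = sqlite3.connect(":memory:")
create_books_table(con)
insert_book(con, sample)
con.commit()
row_count = con.execute("select count(*) from books").fetchone()[0]
first_title = con.execute("select bTitle from books").fetchone()[0]
```

Passing the values as a separate tuple, as the pipeline also does, lets the driver handle quoting, so titles containing quotes or other special characters do not break the statement.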

settings.py

BOT_NAME = 'dangdang'

SPIDER_MODULES = ['dangdang.spiders']
NEWSPIDER_MODULE = 'dangdang.spiders'

ROBOTSTXT_OBEY = False

ITEM_PIPELINES = {
    'dangdang.pipelines.DangdangPipeline': 300,
}

mySpider.py

import scrapy
from ..items import DangdangItem
from bs4 import UnicodeDammit


class MySpider(scrapy.Spider):
    name = "mySpider"
    key = '大数据'  # search keyword ("big data")
    source_url = "http://search.dangdang.com"

    def start_requests(self):
        url = MySpider.source_url+"/?key="+MySpider.key
        yield scrapy.Request(url=url, callback=self.parse)

    def parse(self, response):
        try:
            # Detect the page encoding (UTF-8 or GBK) and decode to text
            dammit = UnicodeDammit(response.body, ["utf-8", "gbk"])
            data = dammit.unicode_markup
            selector = scrapy.Selector(text=data)
            # Select the books returned for the search keyword
            lis = selector.xpath("//li[@ddt-pit][starts-with(@class,'line')]")
            for li in lis:
                title = li.xpath("./a[position()=1]/@title").extract_first()
                price = li.xpath("./p[@class='price']/span[@class='search_now_price']/text()").extract_first()
                author = li.xpath("./p[@class='search_book_author']/span[position()=1]/a/@title").extract_first()
                date = li.xpath("./p[@class='search_book_author']/span[position()= last()-1]/text()").extract_first()
                publisher = li.xpath("./p[@class='search_book_author']/span[position()=last()]/a/@title").extract_first()
                detail = li.xpath("./p[@class='detail']/text()").extract_first()
                # Clean up the fields and hand the item to the pipeline
                item = DangdangItem()
                item["title"] = title.strip() if title else ""
                item["author"] = author.strip() if author else ""
                item["date"] = date.strip(' /') if date else ""
                item["publisher"] = publisher.strip() if publisher else ""
                item["price"] = price.strip() if price else ""
                item["detail"] = detail.strip() if detail else ""
                yield item
            link = selector.xpath("//div[@class='paging']/ul[@name='Fy']/li[@class='next']/a/@href").extract_first()
            # Follow the next page if there is one
            if link:
                url = response.urljoin(link)
                yield scrapy.Request(url=url, callback=self.parse)
        except Exception as err:
            print(err)
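Two small steps in the spider can be tried in isolation: the `x.strip(...) if x else ""` cleanup applied to every field, and resolving the next-page `href` into an absolute URL. The sketch below uses only the standard library. Scrapy documents `response.urljoin` as a wrapper over `urllib.parse.urljoin`, so `urljoin` serves as a rough stand-in here. The helper names `clean` and `next_page_url`, the sample date text, and the sample `href` are all hypothetical.

```python
from urllib.parse import urljoin


def clean(value, chars=None):
    """Mirror the spider's `x.strip(...) if x else ""` pattern."""
    return value.strip(chars) if value else ""


def next_page_url(current_url, href):
    """Resolve a possibly relative href against the current page URL."""
    return urljoin(current_url, href) if href else None


# Hypothetical raw values, shaped like what extract_first() might return.
date_text = clean(" /2016-01-01", " /")   # strips spaces and slashes at both ends
missing = clean(None)                      # extract_first() returns None when nothing matches

next_url = next_page_url(
    "http://search.dangdang.com/?key=python",
    "/?key=python&page_index=2",
)
last_page = next_page_url("http://search.dangdang.com/?key=python&page_index=2", None)
```

When no "next" link is found the helper returns `None`, which corresponds to the spider simply not yielding another request.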

(2) Reflections

Overall this was not very hard to implement, since the textbook includes a worked example.

作业②:

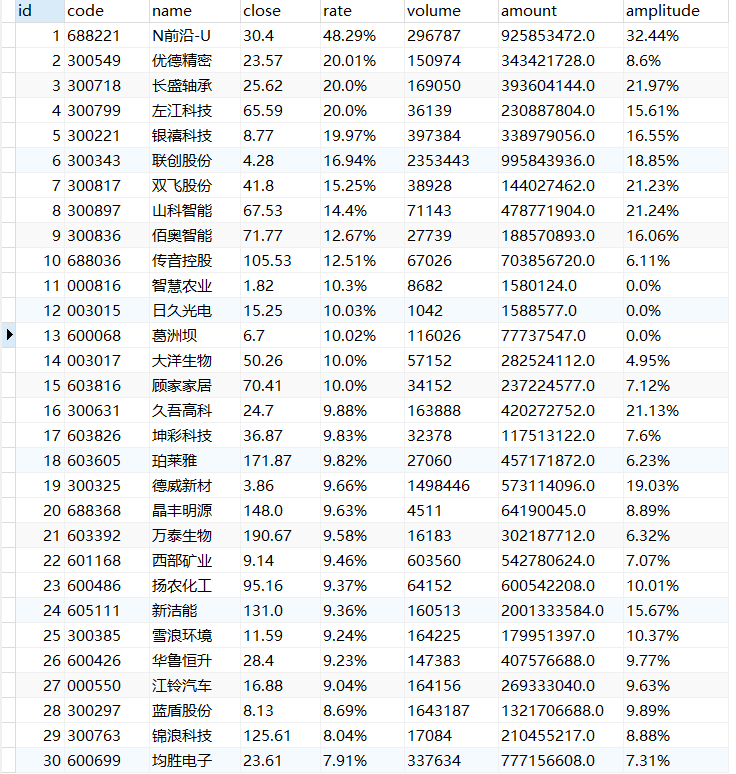

(1)StockMySQL实验

-

要求:熟练掌握 scrapy 中 Item、Pipeline 数据的序列化输出方法;Scrapy+Xpath+MySQL数据库存储技术路线爬取股票相关信息

-

候选网站:东方财富网:https://www.eastmoney.com/

-

输出信息:MYSQL数据库存储和输出格式如下,表头应是英文命名例如:序号id,股票代码:bStockNo……,由同学们自行定义设计表头:

序号 股票代码 股票名称 最新报价 涨跌幅 涨跌额 成交量 成交额 振幅 最高 最低 今开 昨收 1 688093 N世华 28.47 62.22% 10.92 26.13万 7.6亿 22.34 32.0 28.08 30.2 17.55 2......

items.py

import scrapy


class StockItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    code = scrapy.Field()
    name = scrapy.Field()
    close = scrapy.Field()
    rate = scrapy.Field()
    volume = scrapy.Field()
    amount = scrapy.Field()
    amplitude = scrapy.Field()

pipelines.py

import pymysql


class StockPipeline:
    # Connect to the database
    def open_spider(self, spider):
        print("opened")
        # Initialize the counter first so close_spider can report it even if connecting fails
        self.count = 0
        try:
            self.con = pymysql.connect(host="127.0.0.1", port=3306, user="root", passwd="123456", db="mydb",
                                       charset="utf8")
            self.cursor = self.con.cursor(pymysql.cursors.DictCursor)
            # Clear rows left over from a previous run
            self.cursor.execute("delete from stocks")
            self.opened = True
        except Exception as err:
            print(err)
            self.opened = False

    # Close the database
    def close_spider(self, spider):
        if self.opened:
            self.con.commit()
            self.con.close()
            self.opened = False
        print("closed")
        print("Total stocks crawled:", self.count)

    def process_item(self, item, spider):
        try:
            print(item["code"])
            print(item["name"])
            print(item["close"])
            print(item["rate"])
            print(item["volume"])
            print(item["amount"])
            print(item["amplitude"])
            print()
            # Insert the record into the database table
            if self.opened:
                self.cursor.execute(
                    "insert into stocks (code,name,close,rate,volume,amount,amplitude) values (%s,%s,%s,%s,%s,%s,%s)",
                    (item["code"], item["name"], item["close"], item["rate"], item["volume"], item["amount"], item["amplitude"]))
                self.count += 1
        except Exception as err:
            print(err)
        return item

settings.py

BOT_NAME = 'stock'

SPIDER_MODULES = ['stock.spiders']
NEWSPIDER_MODULE = 'stock.spiders'

ROBOTSTXT_OBEY = False

ITEM_PIPELINES = {
    'stock.pipelines.StockPipeline': 300,
}

mySpider.py

import scrapy
from ..items import StockItem
import re
import json


class MySpider(scrapy.Spider):
    name = "mySpider"
    start_urls = ["http://54.push2.eastmoney.com/api/qt/clist/get?cb=jQuery1124015380571520090935_1602750256400&pn=1&pz=20&po=1&np=1&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&fid=f3&fs=m:0+t:6,m:0+t:13,m:0+t:80,m:1+t:2,m:1+t:23&fields=f1,f2,f3,f4,f5,f6,f7,f8,f9,f10,f12,f13,f14,f15,f16,f17,f18,f20,f21,f23,f24,f25,f22,f11,f62,f128,f136,f115,f152&_=1602750256401"]

    def parse(self, response):
        try:
            data = response.text
            # Strip the JSONP wrapper (callback name and trailing ");") and parse the JSON
            start = data.index('{')
            data = json.loads(data[start:len(data) - 2])
            if data['data']:
                # Select the fields and format them; "-" marks a missing value
                for stock in data['data']['diff']:
                    item = StockItem()
                    item["code"] = str(stock['f12'])
                    item["name"] = stock['f14']
                    item["close"] = None if stock['f2'] == "-" else str(stock['f2'])
                    item["rate"] = None if stock['f3'] == "-" else str(stock['f3'])+'%'
                    item["volume"] = None if stock['f5'] == "-" else str(stock['f5'])
                    item["amount"] = None if stock['f6'] == "-" else str(stock['f6'])
                    item["amplitude"] = None if stock['f7'] == "-" else str(stock['f7'])+'%'
                    yield item
                # Read the current page number from the URL and request the next page
                pn = re.compile("pn=[0-9]*").findall(response.url)[0]
                page = int(pn[3:])
                url = response.url.replace("pn="+str(page), "pn="+str(page+1))
                yield scrapy.Request(url=url, callback=self.parse)
        except Exception as err:
            print(err)
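The two non-obvious steps in this spider are unwrapping the JSONP response and bumping the `pn` page parameter. The sketch below isolates both with the standard library so they can be checked without network access. The helper names `unwrap_jsonp` and `next_page`, the shortened sample payload, and the `example.com` URL are hypothetical. Unlike the spider, which slices off the last two characters, this variant looks for the last `}` so it does not depend on the response ending in exactly `);`.

```python
import json
import re


def unwrap_jsonp(text):
    """Return the JSON object inside a `callback({...});` style response."""
    start = text.index("{")
    end = text.rindex("}") + 1
    return json.loads(text[start:end])


def next_page(url):
    """Increment the `pn` query parameter by one."""
    return re.sub(r"(?<=[?&]pn=)\d+", lambda m: str(int(m.group()) + 1), url)


# Hypothetical, heavily shortened payload in the same shape the spider reads.
sample = 'jQuery123({"data": {"diff": [{"f12": "688093", "f14": "N世华", "f2": 28.47, "f3": "-"}]}});'

payload = unwrap_jsonp(sample)
stock = payload["data"]["diff"][0]

# Same "-" handling as the spider: a dash means the value is missing.
close = None if stock["f2"] == "-" else str(stock["f2"])
rate = None if stock["f3"] == "-" else str(stock["f3"]) + "%"

following = next_page("http://example.com/api?cb=jQuery123&pn=9&pz=20")
```

Anchoring the regular expression on `?pn=` or `&pn=` keeps the substitution from touching any other parameter whose name happens to end in `pn`.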

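(2) Reflections

For some reason I could not get the change amount to come out no matter what I tried, and in the end I gave up; I will try again when I have time. The rest was much the same as earlier assignments, with the one added step of importing the data into the database, and that went fine.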
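One possible fallback for the missing change amount is to derive it instead of scraping it. In the sample row of the assignment table, the latest price minus the previous close (28.47 - 17.55) equals the listed change amount (10.92), and dividing that by the previous close gives the listed 62.22%. The sketch below assumes both prices are available. The function names `change_amount` and `change_percent` are hypothetical, and this post does not establish which API field carries the previous close. `Decimal` is used to avoid floating-point noise in the subtraction.

```python
from decimal import Decimal


def change_amount(latest, prev_close):
    """Latest price minus previous close, or None if either value is missing."""
    if latest in ("-", None) or prev_close in ("-", None):
        return None
    return Decimal(str(latest)) - Decimal(str(prev_close))


def change_percent(latest, prev_close):
    """Change amount as a percentage of the previous close, to two decimals."""
    diff = change_amount(latest, prev_close)
    if diff is None:
        return None
    return (diff / Decimal(str(prev_close)) * 100).quantize(Decimal("0.01"))


# Values from the sample row in the assignment table.
amount = change_amount("28.47", "17.55")
percent = change_percent("28.47", "17.55")
unavailable = change_amount("-", "17.55")
```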

作业③:

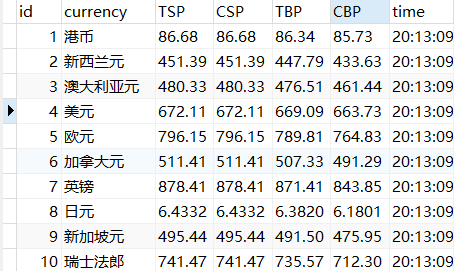

(1)CurrencyMySQL实验

-

要求:熟练掌握 scrapy 中 Item、Pipeline 数据的序列化输出方法;使用scrapy框架+Xpath+MySQL数据库存储技术路线爬取外汇网站数据。

-

候选网站:招商银行网:http://fx.cmbchina.com/hq/

-

输出信息:MYSQL数据库存储和输出格式

Id Currency TSP CSP TBP CBP Time 1 港币 86.60 86.60 86.26 85.65 15:36:30 2......

items.py

import scrapy


class CurrencyItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    currency = scrapy.Field()
    TSP = scrapy.Field()
    CSP = scrapy.Field()
    TBP = scrapy.Field()
    CBP = scrapy.Field()
    time = scrapy.Field()

pipelines.py

import pymysql


class CurrencyPipeline:
    # Connect to the database
    def open_spider(self, spider):
        print("opened")
        try:
            self.con = pymysql.connect(host="127.0.0.1", port=3306, user="root", passwd="123456", db="mydb",charset="utf8")
            self.cursor = self.con.cursor(pymysql.cursors.DictCursor)
            # Clear rows left over from a previous run
            self.cursor.execute("delete from currency")
            self.opened = True
        except Exception as err:
            print(err)
            self.opened = False

    # Close the database
    def close_spider(self, spider):
        if self.opened:
            self.con.commit()
            self.con.close()
            self.opened = False
        print("closed")

    def process_item(self, item, spider):
        try:
            print(item["currency"])
            print(item["TSP"])
            print(item["CSP"])
            print(item["TBP"])
            print(item["CBP"])
            print(item["time"])
            print()
            # Insert the record into the database table
            if self.opened:
                self.cursor.execute(
                    "insert into currency (currency,TSP,CSP,TBP,CBP,time) values (%s,%s,%s,%s,%s,%s)",
                    (item["currency"], item["TSP"], item["CSP"], item["TBP"], item["CBP"], item["time"]))
        except Exception as err:
            print(err)
        return item

settings.py

BOT_NAME = 'currency'

SPIDER_MODULES = ['currency.spiders']
NEWSPIDER_MODULE = 'currency.spiders'

ROBOTSTXT_OBEY = False

ITEM_PIPELINES = {
    'currency.pipelines.CurrencyPipeline': 300,
}

mySpider.py

import scrapy
from ..items import CurrencyItem
from bs4 import UnicodeDammit


class MySpider(scrapy.Spider):
    name = "mySpider"
    start_urls = ["http://fx.cmbchina.com/Hq/"]

    def parse(self, response):
        try:
            # Detect the page encoding (UTF-8 or GBK) and decode to text
            dammit = UnicodeDammit(response.body, ["utf-8", "gbk"])
            data = dammit.unicode_markup
            selector = scrapy.Selector(text=data)
            # Select the exchange-rate rows; the first row is the table header
            trs = selector.xpath("//div[@id='realRateInfo']/table/tr")
            for tr in trs[1:]:
                currency = tr.xpath("./td[@class='fontbold'][position()=1]/text()").extract_first()
                tsp = tr.xpath("./td[@class='numberright'][position()=1]/text()").extract_first()
                csp = tr.xpath("./td[@class='numberright'][position()=2]/text()").extract_first()
                tbp = tr.xpath("./td[@class='numberright'][position()=3]/text()").extract_first()
                cbp = tr.xpath("./td[@class='numberright'][position()=4]/text()").extract_first()
                time = tr.xpath("./td[@align='center'][position()=3]/text()").extract_first()
                # Clean up the fields and hand the item to the pipeline
                item = CurrencyItem()
                item["currency"] = currency.strip() if currency else ""
                item["TSP"] = tsp.strip() if tsp else ""
                item["CSP"] = csp.strip() if csp else ""
                item["TBP"] = tbp.strip() if tbp else ""
                item["CBP"] = cbp.strip() if cbp else ""
                item["time"] = time.strip() if time else ""
                yield item
        except Exception as err:
            print(err)

(2) Reflections

At first I wrote xpath("tag[condition1 and condition2]"), which did not work for reasons I did not understand; it only worked after I changed it to xpath("tag[condition1][condition2]").
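A likely explanation for the difference: in XPath 1.0, chained predicates are applied one after another, so `position()` in the second predicate counts only the nodes that passed the first. Inside a single predicate joined with `and`, `position()` counts among all `tag` children. The two forms therefore select different nodes whenever one of the conditions is positional, as in the spider's `td[@class='numberright'][position()=1]`. The sketch below emulates both semantics in plain Python rather than running a real XPath engine. The row layout and the function names `chained` and `combined` are hypothetical, loosely modelled on the selectors used in the spider.

```python
# One table row modelled as (class, text) pairs for its <td> cells; the layout is hypothetical.
row = [
    ("fontbold", "港币"),
    ("", "100"),
    ("", "人民币"),
    ("numberright", "86.60"),
    ("numberright", "86.60"),
    ("numberright", "86.26"),
    ("numberright", "85.65"),
    ("", "15:36:30"),
]


def chained(cells, cls, pos):
    """Emulate td[@class=cls][position()=pos]: filter by class, then index the survivors."""
    matching = [text for c, text in cells if c == cls]
    return matching[pos - 1] if 0 < pos <= len(matching) else None


def combined(cells, cls, pos):
    """Emulate td[@class=cls and position()=pos]: the pos-th td overall must also have the class."""
    if not 0 < pos <= len(cells):
        return None
    c, text = cells[pos - 1]
    return text if c == cls else None


first_rate_chained = chained(row, "numberright", 1)    # first cell among the numberright cells
first_rate_combined = combined(row, "numberright", 1)  # first cell overall is fontbold, so no match
fourth_cell_combined = combined(row, "numberright", 4)
```

With this layout the `and` form only matches when the absolute cell index is used (4 for the first rate), whereas the chained form counts from 1 within the `numberright` cells.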

