Apache Kafka Replication Design – High level

参考,https://cwiki.apache.org/confluence/display/KAFKA/Kafka+Replication

Kafka Replication High-level Design

Replication是0.8里面加入的新功能,保障当broker crash后数据不会丢失

设计目标,

提供可配置,需要保障stronger durability可以enable这个功能,如果想要更高的效率而不太在乎数据丢失的话,可以disable这个功能

自动replica管理,当cluster发生变化时,即broker server增加或减少时,可以自动的管理和调整replicas

问题,

1. 如何将partition的replicas均匀的分配到各个broker servers上面?

2. 如何进行replicas同步?

The purpose of adding replication in Kafka is for stronger durability and higher availability. We want to guarantee that any successfully published message will not be lost and can be consumed, even when there are server failures. Such failures can be caused by machine error, program error, or more commonly, software upgrades. We have the following high-level goals:

- Configurable durability guarantees: For example, an application with critical data can choose stronger durability, with increased write latency, and another application generating a large volume of soft-state data can choose weaker durability but better write response time.

- Automated replica management: We want to simplify the assignment of replicas to broker servers and be able to grow the cluster incrementally.

There are mainly two problems that we need to solve here:

- How to assign replicas of a partition to broker servers evenly?

- For a given partition, how to propagate every message to all replicas?

1. 如何均匀的分配partition的replicas?

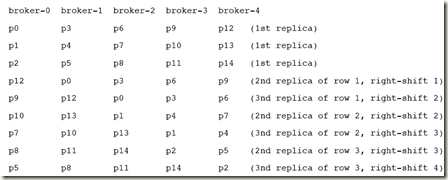

来个例子,15个partitions,5个brokers,做3-replicas

第一个replica怎么放,很简单,15/5,每个broker上依次放3个,如下图,012,345。。。。。。

然后再放其他replica的时候,思路,

a. 当一个broker down的时候,尽量可以把它的load分散到其他所有的broker上,从而避免造成单个broker的负担过重

所以要考虑k,broker-0上的3个partition,012的第二个replica没有都放到broker-1,而是分别放到broker-123上

b. 当然一个partition的多个replica也不能放到同一个broker,那样就没有意义了

考虑j,p0的3个replica分别放在broker-012上

注意这个分配的过程只会在初始化的时候做一次,并且一旦分配好后,会把结果存在zookeeper上,当cluster发生变化时不会重新分配,这样避免当增减broker时做大规模的数据迁移

当增减broker时,只会以最小的数据迁移来move部分的replicas(randomly select m/n partitions to move to b)

这个方法的问题是, 没有考虑到partition和broker server的差异性,简单可用

Suppose there are m partitions assigned to a broker i. The jth replica of partition k will be assigned to broker (i + j + k) mod n.

The following figure illustrates the replica assignments for partitions p0 to p14 on brokers broker-0 to broker-4. In this example, if broker-0 goes down, partitions p0, p1, and p2 can be served from all remaining 4 brokers. We store the information about the replica assignment for ach partition in Zookeeper.

2. replica同步问题

支持同步和异步的方式

异步比较简单,leader存成功,就告诉client存成功,优势是latency,缺点是容易丢数据

同步即需要多个replica都存成功才告诉client存成功,缺点就是latency比较长

在同步中,又需要考虑是否采用quorum-based的设计,或是采用all的设计(primary-backup)

quorum-based的设计,活性比较强,latency小些,问题是,至少要3-replics,并且要保证半数以上的replics是live的

primary-backup的设计需要写所有的replicas,当然问题就是latency比较长,而且一个慢节点会拖慢整个操作,好处就是比较简单,2-replicas也可以,只需要有一个replica是live就ok

Kafka最终选择的是primary-backup方案,比较务实,作为balance

通过各种timeout来部分解决慢节点的问题

并且follower中message写到内存后就向leader发commit,而不等真正写到disk,来优化latency的问题

Synchronous replication

同步方案可以容忍n-1 replica的失败,一个replica被选为leader,而其余的replicas作为followers

leader会维护in-sync replicas (ISR),follower replicas的列表,并且对于每个partition,leader和ISR信息都会存在zookeeper中

有些重要的offset需要解释一下,

log end offset (LEO),表示log中最后的message

high watermark (HW),表示已经被commited的message,HW以下的数据都是各个replicas间同步的,一致的。而以上的数据可能是脏数据,部分replica写成功,但最终失败了

flushed offset,前面说了为了效率message不是立刻被flush到disk的,而是periodically的flush到disk,所以这个offset表示哪些message是在disk上persisted的

这里需要注意的是,flushed offset有可能在HW的前面或后面,这个不一定

Our synchronous replication follows the typical primary-backup approach. Each partition has n replicas and can tolerate n-1 replica failures.

One of the replicas is elected as the leader and the rest of the replicas are followers.

The leader maintains a set of in-sync replicas (ISR): the set of replicas that have fully caught up with the leader. For each partition, we store in Zookeeper the current leader and the current ISR.

Each replica stores messages in a local log and maintains a few important offset positions in the log (depicted in Figure 1). The log end offset (LEO) represents the tail of the log. The high watermark (HW) is the offset of the last committed message. Each log is periodically synced to disks. Data before the flushed offset is guaranteed to be persisted on disks. As we will see, the flush offset can be before or after HW.

Writes

client找到leader,写请求

leader写入local log,然后每个followers通过socket channel获取更新,写入local log,然后发送acknowledgment到leader

leader发现已经收到所有follower发送的acknowledgment,表示message已经被committed,通知client,写成功

leader递增HW,并且定期广播HW到所有的followers,follower会定期去checkpoint HW数据,因为这个很重要,follower必须通过HW来判断那些数据是有效的(committed)

To publish a message to a partition, the client first finds the leader of the partition from Zookeeper and sends the message to the leader. The leader writes the message to its local log. Each follower constantly pulls new messages from the leader using a single socket channel. That way, the follower receives all messages in the same order as written in the leader. The follower writes each received message to its own log and sends an acknowledgment back to the leader. Once the leader receives the acknowledgment from all replicas in ISR, the message is committed. The leader advances the HW and sends an acknowledgment to the client. For better performance, each follower sends an acknowledgment after the message is written to memory. So, for each committed message, we guarantee that the message is stored in multiple replicas in memory. However, there is no guarantee that any replica has persisted the commit message to disks though. Given that correlated failures are relatively rare, this approach gives us a good balance between response time and durability. In the future, we may consider adding options that provide even stronger guarantees. The leader also periodically broadcasts the HW to all followers. The broadcasting can be piggybacked on the return value of the fetch requests from the followers. From time to time, each replica checkpoints its HW to its disk.

Reads

从leader读,注意只有HW下的数据会被读到,即只有committed过的数据会被读到

For simplicity, reads are always served from the leader. Only messages up to the HW are exposed to the reader

Failure scenarios

毫无疑问,这里需要考虑容错的问题

follower失败,很简单,leader可以直接把这个follower drop掉

当follower comeback的时候,需要truncate掉HW以上的数据,然后和leader同步,完成后,leader会把这个follower加会ISR

After a configured timeout period, the leader will drop the failed follower from its ISR and writes will continue on the remaining replicas in ISR. If the failed follower comes back, it first truncates its log to the last checkpointed HW. It then starts to catch up all messages after its HW from the leader. When the follower fully catches up, the leader will add it back to the current ISR.

leader失败比较复杂一些,在写请求不同的阶段分为3种cases,

真正写数据前,简单,client重发

数据写完后,简单,直接选个新leader,继续

数据写入一半,这个有点麻烦,client会超时重发,如果保证在某些replica上,相同message不被写两次

当leader失败的时候,需要重新选一个leader,ISR里面所有followers都可以申请成为leader

依赖zookeeper的分布式锁,谁先register上,谁就是leader

新的leader会将它的LEO作为新的HW,其他的follower自然需要truncate,catchup

There are 3 cases of leader failure which should be considered -

- The leader crashes before writing the messages to its local log. In this case, the client will timeout and resend the message to the new leader.

- The leader crashes after writing the messages to its local log, but before sending the response back to the client

- Atomicity has to be guaranteed: Either all the replicas wrote the messages or none of them

- The client will retry sending the message. In this scenario, the system should ideally ensure that the messages are not written twice. Maybe, one of the replicas had written the message to its local log, committed it, and it gets elected as the new leader.

- The leader crashes after sending the response. In this case, a new leader will be elected and start receiving requests.

When this happens, we need to perform the following steps to elect a new leader.

- Each surviving replica in ISR registers itself in Zookeeper.

- The replica that registers first becomes the new leader. The new leader chooses its LEO as the new HW.

- Each replica registers a listener in Zookeeper so that it will be informed of any leader change. Everytime a replica is notified about a new leader:

- If the replica is not the new leader (it must be a follower), it truncates its log to its HW and then starts to catch up from the new leader.

- The leader waits until all surviving replicas in ISR have caught up or a configured time has passed. The leader writes the current ISR to Zookeeper and opens itself up for both reads and writes.

(Note, during the initial startup when ISR is empty, any replica can become the leader.)

浙公网安备 33010602011771号

浙公网安备 33010602011771号