004. Basic Usage of Ceph Block Devices

1 Preparation

- Deploy a basic cluster by following the document "002. Ceph Installation and Deployment" (《002.Ceph安装部署》).

- On the deploy node, add a hosts entry for the new node's hostname and IP:

      [root@deploy ~]# echo "172.24.8.75 cephclient" >>/etc/hosts

- Configure a yum mirror located in China:

      [root@cephclient ~]# yum -y update
      [root@cephclient ~]# rm /etc/yum.repos.d/* -rf
      [root@cephclient ~]# wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo
      [root@cephclient ~]# yum -y install epel-release
      [root@cephclient ~]# mv /etc/yum.repos.d/epel.repo /etc/yum.repos.d/epel.repo.backup
      [root@cephclient ~]# mv /etc/yum.repos.d/epel-testing.repo /etc/yum.repos.d/epel-testing.repo.backup
      [root@cephclient ~]# wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo
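
Re-running the `echo ... >>/etc/hosts` step on the deploy node appends a duplicate line every time. A minimal sketch of an idempotent variant is shown here; the function name `add_host_entry` and its optional file argument are illustrative assumptions, not part of the original procedure.

```shell
# add_host_entry IP NAME [FILE]
# Append "IP NAME" to FILE (default: /etc/hosts) unless NAME already
# appears as a hostname on a non-comment line.
# Hypothetical helper for illustration only.
add_host_entry() (
    ip=$1
    name=$2
    file=${3:-/etc/hosts}
    if ! awk -v n="$name" '
        /^[[:space:]]*#/ { next }                       # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }                             # 0 when NAME exists
    ' "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
)

# Example (as root on the deploy node):
#   add_host_entry 172.24.8.75 cephclient
```

Calling the function twice with the same arguments leaves a single entry in the file.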

2 Block Devices

2.1 Add a regular user

    [root@cephclient ~]# useradd -d /home/cephuser -m cephuser
    [root@cephclient ~]# echo "cephuser" | passwd --stdin cephuser    # create the cephuser user on the cephclient node
    [root@cephclient ~]# echo "cephuser ALL = (root) NOPASSWD:ALL" > /etc/sudoers.d/cephuser
    [root@cephclient ~]# chmod 0440 /etc/sudoers.d/cephuser
    [root@deploy ~]# su - manager
    [manager@deploy ~]$ ssh-copy-id -i ~/.ssh/id_rsa.pub cephuser@172.24.8.75

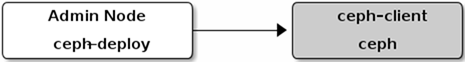

2.2 Install ceph-client

    [root@deploy ~]# su - manager
    [manager@deploy ~]$ cd my-cluster/
    [manager@deploy my-cluster]$ vi ~/.ssh/config
    Host node1
       Hostname node1
       User cephuser
    Host node2
       Hostname node2
       User cephuser
    Host node3
       Hostname node3
       User cephuser
    # newly added entry for the cephclient node
    Host cephclient
       Hostname cephclient
       User cephuser
    [manager@deploy my-cluster]$ ceph-deploy install cephclient    # install Ceph
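
The `~/.ssh/config` edit above can also be scripted so that adding a node is repeatable. The following is only a sketch: `add_ssh_host` is a hypothetical helper name, and it assumes each stanza starts with an unindented `Host <name>` line, as in the listing above.

```shell
# add_ssh_host NAME USER [FILE]
# Append a Host stanza for NAME to FILE (default: ~/.ssh/config) unless a
# "Host NAME" line is already present. Hypothetical helper for illustration.
add_ssh_host() (
    host=$1
    user=$2
    file=${3:-$HOME/.ssh/config}
    # -x matches the whole line, -F treats the pattern as a fixed string.
    if ! grep -qxF "Host $host" "$file" 2>/dev/null; then
        printf 'Host %s\n   Hostname %s\n   User %s\n' \
            "$host" "$host" "$user" >> "$file"
    fi
)

# Example (as the manager user on the deploy node):
#   add_ssh_host cephclient cephuser
```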

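Note: if packages fail to download while deploying with ceph-deploy, you can point ceph.repo at a mirror in China at deployment time:

    ceph-deploy install cephclient --repo-url=https://mirrors.aliyun.com/ceph/rpm-mimic/el7/ --gpg-url=https://mirrors.aliyun.com/ceph/keys/release.asc

    [manager@deploy my-cluster]$ ceph-deploy admin cephclient

Tip: to make it easier to manage cephclient from the deploy node later, and to avoid specifying the key on every CLI command, copy the key to the node. The ceph-deploy tool copies the keyring into the /etc/ceph directory; make sure the keyring file is readable (for example, sudo chmod +r /etc/ceph/ceph.client.admin.keyring).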

2.3 Create a pool

    [manager@deploy my-cluster]$ ssh node1 sudo ceph osd pool create mytestpool 64

2.4 Initialize the pool

    [root@cephclient ~]# ceph osd lspools
    [root@cephclient ~]# rbd pool init mytestpool

2.5 Create a block device

    [root@cephclient ~]# rbd create mytestpool/mytestimages --size 4096 --image-feature layering

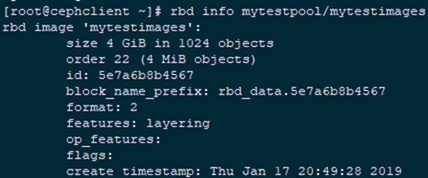

2.6 Verify

    [root@cephclient ~]# rbd ls mytestpool
    mytestimages
    [root@cephclient ~]# rbd showmapped
    id pool       image        snap device
    0  mytestpool mytestimages -    /dev/rbd0
    [root@cephclient ~]# rbd info mytestpool/mytestimages

Note: rbd showmapped lists the image only once it has been mapped (see 2.7).
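
When a script needs the device path rather than a human-readable table, the `rbd showmapped` output can be filtered. The sketch below assumes the whitespace-separated layout shown above, with no empty columns; `mapped_device` is a hypothetical helper name, and it reads the table from standard input so it does not depend on a particular rbd version's options.

```shell
# mapped_device POOL IMAGE
# Read `rbd showmapped` output on stdin and print the device for POOL/IMAGE.
# Returns non-zero when the image is not listed. Hypothetical helper.
mapped_device() {
    awk -v pool="$1" -v image="$2" '
        NR == 1 {
            # Locate columns by header name instead of fixed position.
            for (i = 1; i <= NF; i++) col[$i] = i
            next
        }
        $(col["pool"]) == pool && $(col["image"]) == image {
            print $(col["device"])
            found = 1
        }
        END { exit !found }
    '
}

# Example:
#   rbd showmapped | mapped_device mytestpool mytestimages
```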

2.7 Map the image as a block device

    [root@cephclient ~]# rbd map mytestpool/mytestimages --name client.admin
    /dev/rbd0

2.8 Format the device

    [root@cephclient ~]# mkfs.ext4 /dev/rbd/mytestpool/mytestimages
    [root@cephclient ~]# lsblk

2.9 Mount and test

    [root@cephclient ~]# sudo mkdir /mnt/ceph-block-device
    [root@cephclient ~]# sudo mount /dev/rbd/mytestpool/mytestimages /mnt/ceph-block-device/
    [root@cephclient ~]# cd /mnt/ceph-block-device/
    [root@cephclient ceph-block-device]# echo 'This is my test file!' >> test.txt
    [root@cephclient ceph-block-device]# ls
    lost+found  test.txt
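
The manual write test in 2.9 can be turned into a small repeatable check. This is a sketch only: `smoke_test_dir` is a hypothetical name, and the function merely appends the same test line to `test.txt` and reads it back; it does not verify that the directory is actually backed by the RBD device.

```shell
# smoke_test_dir DIR
# Append a test line to DIR/test.txt and confirm it can be read back.
# Hypothetical helper for illustration only.
smoke_test_dir() (
    dir=$1
    file="$dir/test.txt"
    msg='This is my test file!'
    printf '%s\n' "$msg" >> "$file" || exit 1
    # The last line of the file must be exactly what was just appended.
    [ "$(tail -n 1 "$file")" = "$msg" ]
)

# Example:
#   smoke_test_dir /mnt/ceph-block-device && echo "write/read OK"
```

To also confirm that the directory is a mount point, the check could be combined with `mountpoint -q /mnt/ceph-block-device` from util-linux.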

2.10 Automatic mapping

    [root@cephclient ~]# vim /etc/ceph/rbdmap
    # RbdDevice Parameters
    #poolname/imagename id=client,keyring=/etc/ceph/ceph.client.keyring
    mytestpool/mytestimages id=admin,keyring=/etc/ceph/ceph.client.admin.keyring
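
Each line in /etc/ceph/rbdmap follows the `poolname/imagename id=...,keyring=...` pattern given in the file's own comment. As a rough sketch, the entry could be generated and appended from a script instead of an editor; `rbdmap_entry` and `add_rbdmap_entry` are hypothetical helper names introduced here for illustration.

```shell
# rbdmap_entry POOL IMAGE ID KEYRING
# Print one rbdmap line in the "pool/image id=...,keyring=..." format.
rbdmap_entry() {
    printf '%s/%s id=%s,keyring=%s\n' "$1" "$2" "$3" "$4"
}

# add_rbdmap_entry POOL IMAGE ID KEYRING [FILE]
# Append the line to FILE (default: /etc/ceph/rbdmap) if it is not there yet.
add_rbdmap_entry() (
    file=${5:-/etc/ceph/rbdmap}
    line=$(rbdmap_entry "$1" "$2" "$3" "$4")
    if ! grep -qxF "$line" "$file" 2>/dev/null; then
        printf '%s\n' "$line" >> "$file"
    fi
)

# Example (as root on cephclient):
#   add_rbdmap_entry mytestpool mytestimages admin /etc/ceph/ceph.client.admin.keyring
```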

2.11 Mount at boot

    [root@cephclient ~]# vi /etc/fstab
    #……
    /dev/rbd/mytestpool/mytestimages /mnt/ceph-block-device ext4 defaults,noatime,_netdev 0 0
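
The `_netdev` option in the fstab line marks the filesystem as one that needs network access, which matters for an RBD-backed mount at boot. A minimal sketch of a check that a given mount point carries this option is shown here; `has_netdev` is a hypothetical helper name and the optional second argument exists only so the check can be run against a copy of the file.

```shell
# has_netdev MOUNTPOINT [FILE]
# Succeed if the fstab entry for MOUNTPOINT in FILE (default: /etc/fstab)
# lists _netdev among its mount options. Hypothetical helper.
has_netdev() {
    awk -v mp="$1" '
        /^[[:space:]]*#/ { next }            # skip comment lines
        $2 == mp {
            n = split($4, opts, ",")         # fourth field holds the options
            for (i = 1; i <= n; i++) if (opts[i] == "_netdev") ok = 1
        }
        END { exit !ok }
    ' "${2:-/etc/fstab}"
}

# Example:
#   has_netdev /mnt/ceph-block-device || echo "missing _netdev" >&2
```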

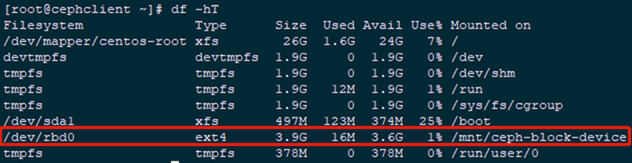

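2.12 Enable rbdmap at boot

    [root@cephclient ~]# systemctl enable rbdmap.service
    [root@cephclient ~]# df -hT    # verify

Tip: if the device still fails to mount automatically after a reboot and rbdmap also misbehaves, try the following:

    [root@cephclient ~]# vi /usr/lib/systemd/system/rbdmap.service
    [Unit]
    Description=Map RBD devices
    # add the following line
    WantedBy=multi-user.target
    #……

Note that systemd documents WantedBy= as an [Install] section option. If the line has no effect under [Unit], place it in the unit file's [Install] section and run systemctl enable rbdmap.service again.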

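Author: 木二

Source: http://www.cnblogs.com/itzgr/

About the author: cloud computing, virtualization, Linux; always happy to exchange ideas!

Copyright of this article belongs to the author. Reposting is welcome, but unless the author agrees otherwise, this notice must be retained and a link to the original article must be placed prominently on the page. For other questions, contact the author by email (xhy@itzgr.com).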