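[Python Data Analysis] Working with Excel in Python 3 (Part 2): Fixing and Optimizing Some Problems

In the previous post, [Python Data Analysis] Working with Excel in Python 3: Douban Books Top 250 as an Example, I scraped the Douban Books Top 250. A few problems were left unsolved, so I discussed them further with others, and along the way I got help and inspiration from 一只尼玛. Many thanks!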

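The problems left over from last time were:

1. Writing stopped partway through and could not continue.

2. Output that was clearly correct in Python IDLE turned into garbled text once written to Excel.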

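These two problems pushed me to switch to a different Excel module, because xlwt reportedly only supports formats up to Excel 2003 and could well be the cause.

一只尼玛 did give me a validate function, but it only strips characters that are illegal in Windows file names. It has nothing to do with garbled text in Excel, so I still decided to change modules.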
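For reference, a file-name sanitizer of the kind described above might look like the minimal sketch below. It follows the same idea as the validate function in the final code of this post; the sample title is made up for illustration. It cleans file names only and does nothing to cell contents.

```python
import re

# Characters that Windows does not allow in file names: / \ : * ? " < > |
ILLEGAL_FILENAME_CHARS = re.compile(r'[/\\:*?"<>|]')


def validate(title):
    """Return title with characters that are illegal in Windows file names removed."""
    return ILLEGAL_FILENAME_CHARS.sub("", title)


# Hypothetical title, used only to show the effect.
print(validate('C++ Primer: 3/e?'))  # C++ Primer 3e
```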

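Switching to the xlsxwriter module

This time I switched to the xlsxwriter module, https://pypi.python.org/pypi/XlsxWriter. It can likewise be installed with pip3 install xlsxwriter, which downloads and installs it automatically. Some usage examples: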

import xlsxwriter

# Create a new Excel file and add a worksheet.
workbook = xlsxwriter.Workbook('demo.xlsx')
worksheet = workbook.add_worksheet()

# Widen the first column to make the text clearer.
worksheet.set_column('A:A', 20)

# Add a bold format to use to highlight cells.
bold = workbook.add_format({'bold': True})

# Write some simple text.
worksheet.write('A1', 'Hello')

# Text with formatting.
worksheet.write('A2', 'World', bold)

# Write some numbers, with row/column notation.
worksheet.write(2, 0, 123)
worksheet.write(3, 0, 123.456)

# Insert an image.
worksheet.insert_image('B5', 'logo.png')

workbook.close()

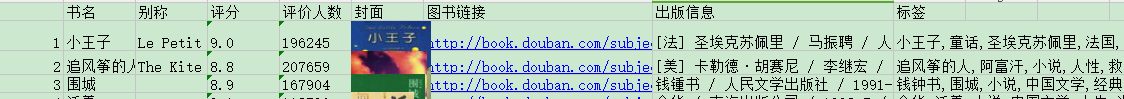
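The sample above addresses cells in two ways: A1-style strings such as 'A2', and zero-based (row, column) pairs such as (2, 0). The sketch below is a small stand-alone illustration of how the two relate; the helper names are my own. xlsxwriter itself ships a comparable helper, xl_rowcol_to_cell, in xlsxwriter.utility.

```python
def col_to_letters(col):
    """Convert a zero-based column index to Excel letters (0 -> 'A', 26 -> 'AA')."""
    letters = ""
    col += 1  # switch to one-based for the base-26 conversion
    while col > 0:
        col, remainder = divmod(col - 1, 26)
        letters = chr(ord("A") + remainder) + letters
    return letters


def rowcol_to_a1(row, col):
    """Convert a zero-based (row, col) pair to an A1-style cell reference."""
    return col_to_letters(col) + str(row + 1)


print(rowcol_to_a1(2, 0))  # A3
print(rowcol_to_a1(3, 0))  # A4
```

So worksheet.write(2, 0, 123) in the sample lands in cell A3, directly below 'World' in A2.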

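I promptly replaced the code that writes to Excel. Sure enough, everything came out as it should: links are written as links, and any character can be written, since it is all Unicode.

So choosing the right module matters! Choosing the right module matters! Choosing the right module matters! (Important things get said three times.)

If the content to scrape is not made up of clean, regular strings or numbers, I would not use xlwt.

Here is a comparison of four Python modules for writing Excel: http://ju.outofmemory.cn/entry/56671

I took a screenshot of the comparison from it. See the article above for the details, which are very thorough.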

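Following the thread

Since this module works so smoothly and can also insert images, why not give that a try?

Goal: replace the contents of the image-link column with the actual images!

It is quite simple: we already have the path where each image is stored, so we only need to insert the image from that path.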

the_img = "I:\\douban\\image\\" + bookName + ".jpg"
writelist = [i+j, bookName, nickname, rating, nums, the_img, bookurl, notion, tag]
for k in range(0, 9):
    if k == 5:
        # Column 5 used to hold the image path; insert the picture itself instead.
        worksheet.insert_image(i+j, k, the_img)
    else:
        worksheet.write(i+j, k, writelist[k])
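A slightly more defensive variant of this loop is sketched below. It is not the code used in this post. The helper names image_path and write_row are hypothetical, and it assumes the download step saved each cover under the same sanitized name. If the image file is missing, it writes the path as text instead of inserting a picture.

```python
import os
import re


def image_path(image_dir, book_name):
    """Build the path of a saved cover, dropping characters Windows forbids in file names."""
    safe_name = re.sub(r'[/\\:*?"<>|]', "", book_name)
    return os.path.join(image_dir, safe_name + ".jpg")


def write_row(worksheet, row, values, image_col, image_dir, book_name):
    """Write one record; put the cover in image_col, or its path if the file is missing."""
    path = image_path(image_dir, book_name)
    for col, value in enumerate(values):
        if col == image_col and os.path.exists(path):
            worksheet.insert_image(row, col, path)
        elif col == image_col:
            worksheet.write(row, col, path)
        else:
            worksheet.write(row, col, value)
```

Here worksheet is expected to be an xlsxwriter worksheet, and write_row(worksheet, i+j, writelist, 5, image_dir, bookName) would take the place of the inner loop.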

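The result of this first attempt is clearly not attractive, so we should adjust the height of each row and try centering the cell contents.

According to the xlsxwriter documentation, row heights, column widths and centering can be set as follows. (Of course, all of this can be done directly in Excel, probably faster than writing code, but I wanted to try out more of this module.)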

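format = workbookx.add_format()
# set_align() keeps one horizontal and one vertical setting, so the later
# call on each axis wins: the effective alignment is 'center' / 'vcenter'.
format.set_align('justify')
format.set_align('center')
format.set_align('vjustify')
format.set_align('vcenter')
format.set_text_wrap()

# Header row: 12 points high, with the format applied.
worksheet.set_row(0, 12, format)
# Data rows 1..250: 70 points high to leave room for the cover images.
for i in range(1, 251):
    worksheet.set_row(i, 70)

# Column widths, with the same format applied to each column.
worksheet.set_column('A:A', 3, format)
worksheet.set_column('B:C', 17, format)
worksheet.set_column('D:D', 4, format)
worksheet.set_column('E:E', 7, format)
worksheet.set_column('F:F', 10, format)
worksheet.set_column('G:G', 19, format)
worksheet.set_column('H:I', 40, format)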

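That completes writing to Excel. The formatting part is tedious, though: spacing and sizes need repeated trial and adjustment, so doing it in Excel itself would be simpler.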
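Part of that trial and error can be replaced by arithmetic. The sketch below computes a single scale factor that fits an image inside a cell. It is only an illustration under stated assumptions: the helper names are my own, the image and column sizes are invented, and the points-to-pixels conversion assumes 96 DPI.

```python
def points_to_pixels(points, dpi=96):
    """Convert a length in points (1/72 inch) to pixels at the given DPI."""
    return points * dpi / 72.0


def fit_scale(img_w, img_h, cell_w, cell_h):
    """Return one scale factor that fits an img_w x img_h image in a cell_w x cell_h box.

    The factor is capped at 1.0 so that small images are never enlarged.
    """
    return min(cell_w / img_w, cell_h / img_h, 1.0)


# Hypothetical numbers: a 200 x 300 px cover, a row 70 points high,
# and a column assumed to be about 75 px wide.
row_px = points_to_pixels(70)
scale = fit_scale(200, 300, 75, row_px)
print(round(scale, 3))  # 0.311
```

The factor can then be passed to insert_image through its options argument, for example worksheet.insert_image(row, col, path, {'x_scale': scale, 'y_scale': scale}). Reading the real pixel size of each image would need an extra step that is not shown here.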

Final code:

# -*- coding:utf-8 -*-
import requests
import re
import xlsxwriter
from bs4 import BeautifulSoup
from datetime import datetime
import codecs

now = datetime.now()                    # start timing
print(now)

def validate(title):                    # from nima
    rstr = r"[\/\\\:\*\?\"\<\>\|]"      # '/\:*?"<>|-'
    new_title = re.sub(rstr, "", title)
    return new_title

txtfile = codecs.open("top2501.txt", 'w', 'utf-8')
url = "http://book.douban.com/top250?"

header = {
    "User-Agent": "Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/49.0.2623.13 Safari/537.36",
    "Referer": "http://book.douban.com/"
}

image_dir = "I:\\douban\\image\\"

# Download an image
def download_img(imageurl, imageName="xxx.jpg"):
    rsp = requests.get(imageurl, stream=True)
    image = rsp.content
    path = image_dir + imageName + '.jpg'
    #print(path)
    with open(path, 'wb') as file:
        file.write(image)

# Create the Excel workbook
workbookx = xlsxwriter.Workbook('I:\\douban\\btop250.xlsx')
worksheet = workbookx.add_worksheet()
format = workbookx.add_format()
format.set_align('justify')
format.set_align('center')
format.set_align('vjustify')
format.set_align('vcenter')
format.set_text_wrap()

worksheet.set_row(0, 12, format)
for i in range(1, 251):
    worksheet.set_row(i, 70)
worksheet.set_column('A:A', 3, format)
worksheet.set_column('B:C', 17, format)
worksheet.set_column('D:D', 4, format)
worksheet.set_column('E:E', 7, format)
worksheet.set_column('F:F', 10, format)
worksheet.set_column('G:G', 19, format)
worksheet.set_column('H:I', 40, format)

# Header row: title, alternate title, rating, number of ratings,
# cover, book link, publication info, tags
item = ['书名','别称','评分','评价人数','封面','图书链接','出版信息','标签']
for i in range(1, 9):
    worksheet.write(0, i, item[i-1])

s = requests.Session()                  # create a session
s.get(url, headers=header)

for i in range(0, 250, 25):
    geturl = url + "/start=" + str(i)                           # address of the page to fetch
    print("Now to get " + geturl)
    postData = {"start": i}                                     # POST data
    res = s.post(url, data=postData, headers=header)            # POST
    soup = BeautifulSoup(res.content.decode(), "html.parser")   # parse with BeautifulSoup
    table = soup.findAll('table', {"width": "100%"})            # find every table holding book info
    sz = len(table)                                             # sz = 25: each page lists 25 books
    for j in range(1, sz+1):                                    # j = 1~25
        sp = BeautifulSoup(str(table[j-1]), "html.parser")      # parse the info for one book
        imageurl = sp.img['src']                                # image link
        bookurl = sp.a['href']                                  # book link
        bookName = sp.div.a['title']
        nickname = sp.div.span                                  # alternate title
        if(nickname):                                           # keep it if present, otherwise store an empty string
            nickname = nickname.string.strip()
        else:
            nickname = ""

        notion = str(sp.find('p', {"class": "pl"}).string)              # publication info; note that .string is not a real str yet
        rating = str(sp.find('span', {"class": "rating_nums"}).string)  # rating
        nums = sp.find('span', {"class": "pl"}).string                  # number of ratings
        nums = nums.replace('(', '').replace(')', '').replace('\n', '').strip()
        nums = re.findall(r'(\d+)人评价', nums)[0]
        download_img(imageurl, bookName)                        # download the cover image
        book = requests.get(bookurl)                            # open the page for this book
        sp3 = BeautifulSoup(book.content, "html.parser")        # parse it
        taglist = sp3.find_all('a', {"class": " tag"})          # find the tag links
        tag = ""
        lis = []
        for tagurl in taglist:
            sp4 = BeautifulSoup(str(tagurl), "html.parser")     # parse each tag
            lis.append(str(sp4.a.string))

        tag = ','.join(lis)                                     # join with commas
        the_img = "I:\\douban\\image\\" + bookName + ".jpg"
        writelist = [i+j, bookName, nickname, rating, nums, the_img, bookurl, notion, tag]
        for k in range(0, 9):
            if k == 5:
                worksheet.insert_image(i+j, k, the_img)
            else:
                worksheet.write(i+j, k, writelist[k])
            txtfile.write(str(writelist[k]))
            txtfile.write('\t')
        txtfile.write(u'\r\n')

end = datetime.now()                    # stop timing
print(end)
print("程序耗时: " + str(end-now))      # elapsed time
txtfile.close()
workbookx.close()

The output of the run:

2016-03-28 11:40:50.525635
Now to get http://book.douban.com/top250?/start=0
Now to get http://book.douban.com/top250?/start=25
Now to get http://book.douban.com/top250?/start=50
Now to get http://book.douban.com/top250?/start=75
Now to get http://book.douban.com/top250?/start=100
Now to get http://book.douban.com/top250?/start=125
Now to get http://book.douban.com/top250?/start=150
Now to get http://book.douban.com/top250?/start=175
Now to get http://book.douban.com/top250?/start=200
Now to get http://book.douban.com/top250?/start=225
2016-03-28 11:48:14.946184
程序耗时: 0:07:24.420549

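All 250 books were scraped successfully. As far as correctness goes, this scraping exercise is complete!

This run took 7 minutes 24 seconds, which is still too slow. The next step is to work on improving efficiency.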
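One possible direction, which was not tried or measured in this post, is to issue the per-book requests concurrently. This assumes that most of the time goes to waiting on the network. The sketch below shows the pattern with the standard library thread pool. fetch_all and fake_fetch are hypothetical names, and fake_fetch stands in for a real network call.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_all(fetch, urls, max_workers=8):
    """Call fetch(url) for every URL on a thread pool and return results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, urls))


def fake_fetch(url):
    """Stand-in for a real network call such as requests.get(url).content."""
    return "page for " + url


pages = fetch_all(fake_fetch, ["u1", "u2", "u3"])
print(pages)  # ['page for u1', 'page for u2', 'page for u3']
```

In such a design the worksheet writes would stay in the main thread after the results come back, and the number of workers would be kept small out of courtesy to the site.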

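Author: whatbeg

Source 1: http://whatbeg.com/

Source 2: http://www.cnblogs.com/whatbeg/

Copyright of this article is shared by the author and 博客园 (cnblogs). Reposting is welcome, but unless the author agrees otherwise, this statement must be kept and a link to the original article must be placed prominently on the page. Otherwise the author reserves the right to pursue legal liability.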

Want to see more articles first? Visit my independent blog: whatbeg.com
