Finding a suitable logging Handler in Python: implementing a custom Handler

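I have recently been building the server side of an app with Python and Tornado. A production system needs logging, so Python's built-in logging library was the natural first choice.

In logging, all output is delegated to Handlers, but none of the built-in Handlers met our needs.

We want logs split by time period. With FileHandler everything goes into a single file, which eventually grows so large that reading and writing it become a problem. TimedRotatingFileHandler can split logs, but with multiple processes the log files may overwrite one another and entries get lost.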
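For reference, here is a minimal sketch of the built-in time-based rotation used from a single process. The logger name `rotation_demo`, the temporary directory and the hourly schedule are assumptions for illustration, not the configuration used in this project. Each handler instance decides on its own when to roll over, so several processes sharing one file each try to rotate it, which is where the overwriting comes from.

```python
import logging
import os
import tempfile
from logging.handlers import TimedRotatingFileHandler

# Assumption: a throwaway directory stands in for the real log directory.
log_dir = tempfile.mkdtemp()
log_path = os.path.join(log_dir, "app.log")

# Roll over every hour and keep at most 24 rotated files.
handler = TimedRotatingFileHandler(log_path, when="H", interval=1, backupCount=24)
handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))

logger = logging.getLogger("rotation_demo")  # hypothetical logger name
logger.setLevel(logging.INFO)
logger.propagate = False
logger.addHandler(handler)

logger.info("service started")

# The handler flushes after every record, so the line is already on disk.
with open(log_path) as f:
    first_line = f.readline()
```

This works well as long as exactly one process owns `app.log`.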

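So I set out to find a suitable Handler.

First I tried FileHandler combined with a script (run, for example, under supervisord) that splits the log on a schedule. The problem is that the running FileHandler keeps the log file open: it cannot be renamed, and logs keep going to the same file. That attempt failed.

Next I tried subclassing TimedRotatingFileHandler, the rotating handler that ships with logging, and overriding the method that performs the rollover.

Here I used a class written by someone else online: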

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-
    #
    # Copyright 2012 Ethan Zhang<http://github.com/Ethan-Zhang>
    #
    # Licensed under the Apache License, Version 2.0 (the "License"); you may
    # not use this file except in compliance with the License. You may obtain
    # a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS, WITHOUT
    # WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the
    # License for the specific language governing permissions and limitations
    # under the License.

    import time
    import os
    from logging.handlers import TimedRotatingFileHandler


    class MultiProcessTimedRotatingFileHandler(TimedRotatingFileHandler):

        def doRollover(self):
            """
            do a rollover; in this case, a date/time stamp is appended to the
            filename when the rollover happens. However, you want the file to
            be named for the start of the interval, not the current time.
            If there is a backup count, then we have to get a list of matching
            filenames, sort them and remove the one with the oldest suffix.
            """
            if self.stream:
                self.stream.close()
            # get the time that this sequence started at and make it a TimeTuple
            t = self.rolloverAt - self.interval
            if self.utc:
                timeTuple = time.gmtime(t)
            else:
                timeTuple = time.localtime(t)
            dfn = self.baseFilename + "." + time.strftime(self.suffix, timeTuple)
            #if os.path.exists(dfn):
            #    os.remove(dfn)
            if not os.path.exists(dfn):
                os.rename(self.baseFilename, dfn)
            if self.backupCount > 0:
                # find the oldest log file and delete it
                #s = glob.glob(self.baseFilename + ".20*")
                #if len(s) > self.backupCount:
                #    s.sort()
                #    os.remove(s[0])
                for s in self.getFilesToDelete():
                    os.remove(s)
            #print "%s -> %s" % (self.baseFilename, dfn)
            self.mode = 'a'
            self.stream = self._open()
            currentTime = int(time.time())
            newRolloverAt = self.computeRollover(currentTime)
            while newRolloverAt <= currentTime:
                newRolloverAt = newRolloverAt + self.interval
            #If DST changes and midnight or weekly rollover, adjust for this.
            if (self.when == 'MIDNIGHT' or self.when.startswith('W')) and not self.utc:
                dstNow = time.localtime(currentTime)[-1]
                dstAtRollover = time.localtime(newRolloverAt)[-1]
                if dstNow != dstAtRollover:
                    if not dstNow:
                        # DST kicks in before next rollover, so we need to deduct an hour
                        newRolloverAt = newRolloverAt - 3600
                    else:
                        # DST bows out before next rollover, so we need to add an hour
                        newRolloverAt = newRolloverAt + 3600
            self.rolloverAt = newRolloverAt

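Testing showed that log files were still being overwritten, so I rejected this approach as well.

"If file-based Handlers cannot do the job, why not store the logs in a database?" That thought surfaced after the failures above.

This takes two steps: first, write a custom Handler; second, choose a database to hold the logs.

How do you write a custom Handler? The answer can be found by following the trail through the source.

First, look at the handlers that ship with Python's logging library and compare them to find the minimum a custom Handler has to provide; in other words, find the simplest Handler implementation among them.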

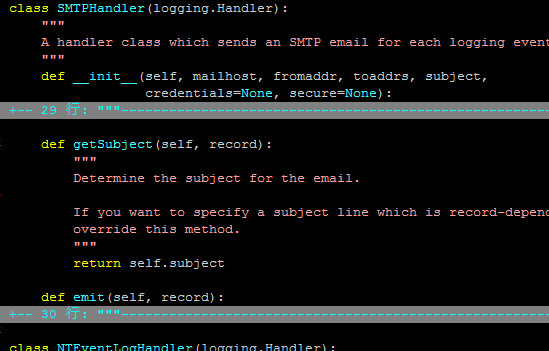

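In handlers.py, the simplest one I found was SMTPHandler.

Its getSubject method is only called internally by emit, so the recipe for a custom Handler is easy to see: subclass logging.Handler and implement the emit method (which is called whenever a log record is written).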
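That recipe can be sketched in a few lines. `ListHandler` and the logger name `custom_handler_demo` are hypothetical names chosen for this illustration; the handler simply keeps the formatted records in memory so the effect of `emit` is easy to observe.

```python
import logging


class ListHandler(logging.Handler):
    """Hypothetical handler that keeps formatted records in a list."""

    def __init__(self, level=logging.NOTSET):
        logging.Handler.__init__(self, level)
        self.lines = []

    def emit(self, record):
        # emit() is called once for every record that passes the level
        # and filter checks; format() applies the attached Formatter.
        try:
            self.lines.append(self.format(record))
        except Exception:
            self.handleError(record)


handler = ListHandler()
handler.setFormatter(logging.Formatter("%(levelname)s:%(message)s"))

logger = logging.getLogger("custom_handler_demo")  # hypothetical name
logger.setLevel(logging.DEBUG)
logger.propagate = False
logger.addHandler(handler)

logger.warning("disk usage at %d%%", 91)
```

Everything else (level checks, filters, locking, formatting) is inherited from `logging.Handler`, so `emit` only has to decide where the formatted text goes.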

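With the first step done, the second step is choosing a database.

My first thought was sqlite, but from what I read its performance is not great, so I tried mongodb instead.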

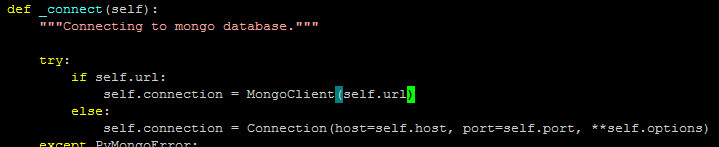

Before writing one myself, I could not resist searching online, and sure enough someone had already written a mongodb handler. Sigh...

    import logging

    from bson.timestamp import Timestamp
    from pymongo import Connection
    from pymongo.collection import Collection
    from pymongo.errors import OperationFailure, PyMongoError

    """
    Example format of generated bson document:
    {
        'thread': -1216977216,
        'threadName': 'MainThread',
        'level': 'ERROR',
        'timestamp': Timestamp(1290895671, 63),
        'message': 'test message',
        'module': 'test_module',
        'fileName': '/var/projects/python/log4mongo-python/tests/test_handlers.py',
        'lineNumber': 38,
        'method': 'test_emit_exception',
        'loggerName': 'testLogger',
        'exception': {
            'stackTrace': 'Traceback (most recent call last):
                           File "/var/projects/python/log4mongo-python/tests/test_handlers.py", line 36, in test_emit_exception
                           raise Exception(\'exc1\')
                           Exception: exc1',
            'message': 'exc1',
            'code': 0
        }
    }
    """


    class MongoFormatter(logging.Formatter):

        DEFAULT_PROPERTIES = logging.LogRecord('', '', '', '', '', '', '', '').__dict__.keys()

        def format(self, record):
            """Formats LogRecord into python dictionary."""
            # Standard document
            document = {
                'timestamp': Timestamp(int(record.created), int(record.msecs)),
                'level': record.levelname,
                'thread': record.thread,
                'threadName': record.threadName,
                'message': record.getMessage(),
                'loggerName': record.name,
                'fileName': record.pathname,
                'module': record.module,
                'method': record.funcName,
                'lineNumber': record.lineno
            }
            # Standard document decorated with exception info
            if record.exc_info is not None:
                document.update({
                    'exception': {
                        'message': str(record.exc_info[1]),
                        'code': 0,
                        'stackTrace': self.formatException(record.exc_info)
                    }
                })
            # Standard document decorated with extra contextual information
            if len(self.DEFAULT_PROPERTIES) != len(record.__dict__):
                contextual_extra = set(record.__dict__).difference(set(self.DEFAULT_PROPERTIES))
                if contextual_extra:
                    for key in contextual_extra:
                        document[key] = record.__dict__[key]
            return document


    class MongoHandler(logging.Handler):

        def __init__(self, level=logging.NOTSET, host='localhost', port=27017,
                     database_name='logs', collection='logs',
                     username=None, password=None, fail_silently=False,
                     formatter=None, capped=False,
                     capped_max=1000, capped_size=1000000, **options):
            """Setting up mongo handler, initializing mongo database connection via pymongo."""
            logging.Handler.__init__(self, level)
            self.host = host
            self.port = port
            self.database_name = database_name
            self.collection_name = collection
            self.username = username
            self.password = password
            self.fail_silently = fail_silently
            self.connection = None
            self.db = None
            self.collection = None
            self.authenticated = False
            self.formatter = formatter or MongoFormatter()
            self.capped = capped
            self.capped_max = capped_max
            self.capped_size = capped_size
            self.options = options
            self._connect()

        def _connect(self):
            """Connecting to mongo database."""
            try:
                self.connection = Connection(host=self.host, port=self.port, **self.options)
            except PyMongoError:
                if self.fail_silently:
                    return
                else:
                    raise

            self.db = self.connection[self.database_name]
            if self.username is not None and self.password is not None:
                self.authenticated = self.db.authenticate(self.username, self.password)

            if self.capped:
                try:
                    # We don't want to override the capped collection (and it throws an error anyway)
                    self.collection = Collection(self.db, self.collection_name, capped=True,
                                                 max=self.capped_max, size=self.capped_size)
                except OperationFailure:
                    # Capped collection exists, so get it.
                    self.collection = self.db[self.collection_name]
            else:
                self.collection = self.db[self.collection_name]

        def close(self):
            """If authenticated, logging out and closing mongo database connection."""
            if self.authenticated:
                self.db.logout()
            if self.connection is not None:
                self.connection.disconnect()

        def emit(self, record):
            """Inserting new logging record to mongo database."""
            if self.collection is not None:
                try:
                    self.collection.save(self.format(record))
                except Exception:
                    if not self.fail_silently:
                        self.handleError(record)

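Fortunately, I found that its connection code does not support mongodb's master-slave mode or replica sets. Ha, finally an outlet for my urge to write code (even if it was only two or three lines).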

    mongodb_url = 'mongodb://192.168.10.200:10001,192.168.10.201:10001'
    handler = MongoHandler(url=mongodb_url)
    logger.addHandler(handler)

With these few lines added, it is ready to use.
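The two or three changed lines themselves are not shown in this post. As a rough sketch of how a URL-based handler might look with a current pymongo, where `MongoClient` accepts a `mongodb://` URI listing several hosts: `UrlMongoHandler`, `from_url`, `FakeCollection` and the document fields below are hypothetical and are not part of the handler quoted above. The collection is passed in so the handler can be exercised without a running database.

```python
import logging


class UrlMongoHandler(logging.Handler):
    """Hypothetical handler that writes one document per log record."""

    def __init__(self, collection, level=logging.NOTSET):
        logging.Handler.__init__(self, level)
        # Any object with an insert_one(document) method works here,
        # for example a pymongo Collection.
        self.collection = collection

    @classmethod
    def from_url(cls, url, database_name="logs", collection_name="logs"):
        # Imported lazily so the class can be used without pymongo installed.
        from pymongo import MongoClient
        client = MongoClient(url)  # the URI may list several hosts
        return cls(client[database_name][collection_name])

    def emit(self, record):
        document = {
            "level": record.levelname,
            "loggerName": record.name,
            "message": record.getMessage(),
            "created": record.created,
        }
        try:
            self.collection.insert_one(document)
        except Exception:
            self.handleError(record)


class FakeCollection(object):
    """Stand-in for a real collection, used only for this demonstration."""

    def __init__(self):
        self.documents = []

    def insert_one(self, document):
        self.documents.append(document)


fake = FakeCollection()
logger = logging.getLogger("mongo_demo")  # hypothetical name
logger.setLevel(logging.INFO)
logger.propagate = False
logger.addHandler(UrlMongoHandler(fake))
logger.error("payment failed for order %s", "A-17")
```

Against a real deployment, the handler would be built with `UrlMongoHandler.from_url(mongodb_url)` instead of the fake collection.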

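That should have been the end of it, but then I saw the flaw in this approach: the logging system would depend on a database, yet logging is a foundational module of our whole system. So this approach was not a good fit either.

So back to the original dream: write logs straight to files, and since the handlers that ship with logging cannot split files reliably, write one ourselves.

The result is this Handler, written entirely from scratch, that splits logs by time: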

    #!/usr/bin/env python
    # -*- coding:utf-8 -*-
    import datetime
    import logging
    import os
    import os.path

    # Default layout: one directory per day, one file per hour.
    _filefmt = os.path.join("logs", "%Y-%m-%d", "%H.log")


    class MyLoggerHandler(logging.Handler):

        def __init__(self, filefmt=None):
            self.filefmt = filefmt
            if filefmt is None:
                self.filefmt = _filefmt
            logging.Handler.__init__(self)

        def emit(self, record):
            msg = self.format(record)
            # The target path is derived from the current time on every call.
            _filePath = datetime.datetime.now().strftime(self.filefmt)
            _dir = os.path.dirname(_filePath)
            try:
                if os.path.exists(_dir) is False:
                    os.makedirs(_dir)
            except Exception:
                print("can not make dirs")
                print("filepath is " + _filePath)

            try:
                # Open, append and close for every record so that no file
                # handle is held between writes.
                _fobj = open(_filePath, 'a')
                _fobj.write(msg)
                _fobj.write("\n")
                _fobj.flush()
                _fobj.close()
            except Exception:
                print("can not write to file")
                print("filepath is " + _filePath)

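The idea is to release the file handle immediately after every write, so that multiple processes do not conflict. One possible optimization is to buffer some content, or buffer for a period of time, and then write it all at once (I will do that later if performance turns out to be a problem).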
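The buffering optimization is not implemented in this post. One way it might be sketched is on top of the standard library's `logging.handlers.BufferingHandler`, which collects records and calls `flush()` once a capacity is reached. `BatchedFileHandler`, the capacity of 3 and the temporary directory are assumptions made for this illustration only.

```python
import datetime
import glob
import logging
import os
import tempfile
from logging.handlers import BufferingHandler


class BatchedFileHandler(BufferingHandler):
    """Hypothetical handler: buffer records, then append them in one go."""

    def __init__(self, filefmt, capacity=100):
        BufferingHandler.__init__(self, capacity)
        self.filefmt = filefmt

    def flush(self):
        # Called by BufferingHandler.emit() once `capacity` records are
        # buffered, and again by close().
        self.acquire()
        try:
            if not self.buffer:
                return
            path = datetime.datetime.now().strftime(self.filefmt)
            directory = os.path.dirname(path)
            if directory and not os.path.isdir(directory):
                os.makedirs(directory)
            # One open/append/close cycle for the whole batch.
            with open(path, "a") as fobj:
                for record in self.buffer:
                    fobj.write(self.format(record) + "\n")
            self.buffer = []
        finally:
            self.release()


log_root = tempfile.mkdtemp()  # assumption: throwaway directory for the demo
handler = BatchedFileHandler(os.path.join(log_root, "%Y-%m-%d", "%H.log"), capacity=3)

logger = logging.getLogger("batch_demo")  # hypothetical name
logger.setLevel(logging.INFO)
logger.propagate = False
logger.addHandler(handler)

logger.info("first")
logger.info("second")
files_before_flush = glob.glob(os.path.join(log_root, "*", "*.log"))

logger.info("third")  # buffer reaches capacity, so the batch is written
files_after_flush = glob.glob(os.path.join(log_root, "*", "*.log"))
```

The trade-off is that records still sitting in the buffer are lost if the process dies before the next flush, and the sketch leaves out error handling for failed writes or for two processes creating the same directory at the same moment.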

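Looking back, the search for a suitable Handler came down to three steps: first, confirming that the handlers that ship with Python are not suitable; second, working out how to implement a custom handler; third, choosing where to store the logs (here the thinking seems to have wandered rather far from the original idea). The good news is that the search can finally come to a close.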

