RNN Tutorial, Part 1 – Introduction to RNNs

Reposted from: Recurrent Neural Networks Tutorial, Part 1 – Introduction to RNNs

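Recurrent Neural Networks (RNNs) are popular models that have important applications in natural language processing. However, few blog posts explain the detailed structure of RNNs or how to implement them, so this post translates an English tutorial to help readers understand them. The English article goes into considerable depth and this translation may contain errors, so interested readers may want to consult the English original. The tutorial consists of four parts, of which this is the first.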

As part of the tutorial we will implement a recurrent neural network based language model. Language models have two main applications. First, they allow us to score arbitrary sentences based on how likely they are to occur in the real world. This gives us a measure of grammatical and semantic correctness. Such models are typically used as part of Machine Translation systems. Secondly, a language model allows us to generate new text (I think that’s the much cooler application). Training a language model on Shakespeare allows us to generate Shakespeare-like text. This fun post by Andrej Karpathy demonstrates what character-level language models based on RNNs are capable of.

I’m assuming that you are somewhat familiar with basic Neural Networks. If you’re not, you may want to head over to Implementing A Neural Network From Scratch, which guides you through the ideas and implementation behind non-recurrent networks.

What are RNNs?

The idea behind RNNs is to make use of sequential information. In a traditional neural network we assume that all inputs (and outputs) are independent of each other. But for many tasks that’s a very bad idea. If you want to predict the next word in a sentence, you had better know which words came before it. RNNs are called recurrent because they perform the same task for every element of a sequence, with the output depending on the previous computations. Another way to think about RNNs is that they have a “memory” which captures information about what has been calculated so far. In theory RNNs can make use of information in arbitrarily long sequences, but in practice they are limited to looking back only a few steps (more on this later). Here is what a typical RNN looks like:

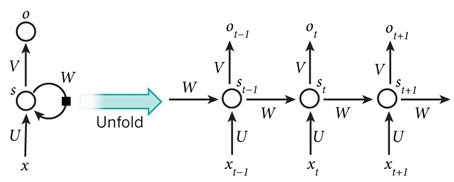

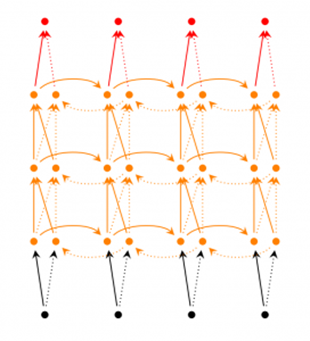

Figure 1. A recurrent neural network (RNN) unrolled over time.

The above diagram shows an RNN being unrolled (or unfolded) into a full network. By unrolling we simply mean that we write out the network for the complete sequence. For example, if the sequence we care about is a sentence of 5 words, the network would be unrolled into a 5-layer neural network, one layer for each word. The formulas that govern the computation happening in an RNN are as follows:

- $x_t$ is the input at time step $t$. For example, $x_1$ could be a one-hot vector corresponding to the second word of a sentence.

- $s_t$ is the hidden state at time step $t$. It’s the “memory” of the network. $s_t$ is calculated based on the previous hidden state and the input at the current step: \[s_t=f(Ux_t+Ws_{t-1})\] The function $f$ is usually a nonlinearity such as tanh or ReLU. $s_{-1}$, which is required to calculate the first hidden state, is typically initialized to all zeroes.

- $o_t$ is the output at time step $t$. For example, if we wanted to predict the next word in a sentence, it would be a vector of probabilities across our vocabulary: \[o_t=\mathrm{softmax}(Vs_t)\]

There are a few things to note here:

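- You can think of the hidden state $s_t$ as the memory of the network. In practice, however, it cannot retain information from many time steps back.

- Unlike a traditional deep neural network, which uses different parameters at each layer, an RNN shares the same parameters ($U, V, W$) across all steps. This means we perform the same computation at each step, just with a different input $x_t$, which greatly reduces the number of parameters we need to learn.

- Every step produces an output, but we do not have to use all of them. For example, when predicting the sentiment of a sentence we may use only the final output of the RNN rather than the output after each word (although that is possible too). The main feature of this model is its hidden state, which carries the information about the sequence seen so far.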
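The formulas and notes above can be sketched as a forward pass in NumPy. This is a minimal illustration rather than the original author's implementation: it assumes $f$ is tanh, that inputs are given as word indices standing in for one-hot vectors, and the sizes `vocab_size` and `hidden_dim` and the random initialization are hypothetical values chosen only for the example.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D vector."""
    e = np.exp(z - np.max(z))
    return e / e.sum()

def rnn_forward(x_indices, U, V, W):
    """Run a vanilla RNN over a sequence of word indices.

    x_indices: list of ints, each the index of a one-hot input x_t.
    U: (hidden_dim, vocab_size), W: (hidden_dim, hidden_dim),
    V: (vocab_size, hidden_dim).
    Returns the outputs o (T, vocab_size) and hidden states s (T, hidden_dim).
    """
    T = len(x_indices)
    hidden_dim = W.shape[0]
    s = np.zeros((T, hidden_dim))
    o = np.zeros((T, V.shape[0]))
    s_prev = np.zeros(hidden_dim)  # s_{-1}, initialized to all zeros
    for t, idx in enumerate(x_indices):
        # For a one-hot x_t, U @ x_t is simply column idx of U.
        s[t] = np.tanh(U[:, idx] + W @ s_prev)
        o[t] = softmax(V @ s[t])
        s_prev = s[t]
    return o, s

# Hypothetical sizes and random weights, chosen only for illustration.
vocab_size, hidden_dim = 8, 4
rng = np.random.default_rng(0)
U = rng.uniform(-0.5, 0.5, (hidden_dim, vocab_size))
W = rng.uniform(-0.5, 0.5, (hidden_dim, hidden_dim))
V = rng.uniform(-0.5, 0.5, (vocab_size, hidden_dim))

o, s = rnn_forward([0, 3, 5, 2, 7], U, V, W)
print(o.shape)        # (5, 8): one probability vector per time step
print(o.sum(axis=1))  # each row sums to 1 (up to rounding)
```

Note that the same `U`, `V`, and `W` are reused at every step of the loop, which is the parameter sharing described above. For a task such as sentiment prediction, one would read only the last row `o[-1]` and ignore the earlier outputs.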

What can RNNs do?

RNNs have shown great success in many NLP tasks. At this point I should mention that the most commonly used type of RNNs are LSTMs, which are much better at capturing long-term dependencies than vanilla RNNs are. But don’t worry, LSTMs are essentially the same thing as the RNN we will develop in this tutorial, they just have a different way of computing the hidden state. We’ll cover LSTMs in more detail in a later post. Here are some example applications of RNNs in NLP (by no means an exhaustive list).

Language Modeling and Generating Text

Given a sequence of words we want to predict the probability of each word given the previous words. Language Models allow us to measure how likely a sentence is, which is an important input for Machine Translation (since high-probability sentences are typically correct). A side-effect of being able to predict the next word is that we get a generative model, which allows us to generate new text by sampling from the output probabilities. And depending on what our training data is we can generate all kinds of stuff. In Language Modeling our input is typically a sequence of words (encoded as one-hot vectors for example), and our output is the sequence of predicted words. When training the network we set $o_t=x_{t+1}$ since we want the output at step $t$ to be the actual next word.
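To make the training setup $o_t=x_{t+1}$ concrete, here is a small sketch of how inputs and targets could be paired, how a sentence could be scored, and how a next word could be sampled. The toy vocabulary, the `SENTENCE_START` and `SENTENCE_END` tokens, and all function names are assumptions introduced for illustration, and a uniform distribution stands in for the output of a trained model.

```python
import numpy as np

# Hypothetical toy vocabulary; a real model would build it from a corpus.
word_to_index = {"SENTENCE_START": 0, "the": 1, "cat": 2, "sat": 3, "SENTENCE_END": 4}

def make_training_pair(words):
    """Return (x, y) index lists where y is x shifted left by one step."""
    idx = [word_to_index[w] for w in words]
    return idx[:-1], idx[1:]

def sentence_log_prob(o, y):
    """Sum of log-probabilities the model assigns to the true next words.

    o: (T, vocab_size) array, row t is the predicted distribution at step t.
    y: list of T target indices.
    """
    return float(np.sum(np.log(o[np.arange(len(y)), y])))

def sample_next_word(o_t, rng):
    """Draw one word index from the predicted distribution o_t."""
    return int(rng.choice(len(o_t), p=o_t))

x, y = make_training_pair(["SENTENCE_START", "the", "cat", "sat", "SENTENCE_END"])
print(x)  # [0, 1, 2, 3]
print(y)  # [1, 2, 3, 4]

# Stand-in for model output: a uniform distribution at every step.
o_uniform = np.full((len(y), len(word_to_index)), 1.0 / len(word_to_index))
print(sentence_log_prob(o_uniform, y))  # equals 4 * log(1/5)
print(sample_next_word(o_uniform[0], np.random.default_rng(0)))
```

Working with log-probabilities rather than multiplying raw probabilities is a common choice because the product of many small numbers quickly underflows.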

Research papers about language modeling and generating text:

- Recurrent neural network based language model

- Extensions of Recurrent neural network based language model

- Generating Text with Recurrent Neural Networks

Machine Translation

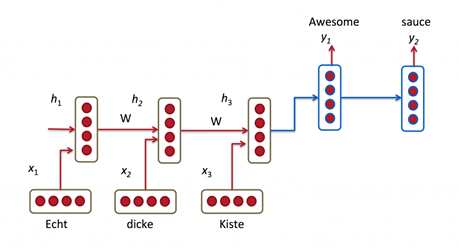

Machine Translation is similar to language modeling in that our input is a sequence of words in our source language (e.g. German). We want to output a sequence of words in our target language (e.g. English). A key difference is that our output only starts after we have seen the complete input, because the first word of our translated sentences may require information captured from the complete input sequence.

Figure 2. RNN for machine translation.

Research papers about machine translation:

- A Recursive Recurrent Neural Network for Statistical Machine Translation

- Sequence to Sequence Learning with Neural Networks

- Joint Language and Translation Modeling with Recurrent Neural Networks

Speech Recognition

Given an input sequence of acoustic signals from a sound wave, we can predict a sequence of phonetic segments together with their probabilities.

Research papers about speech recognition:

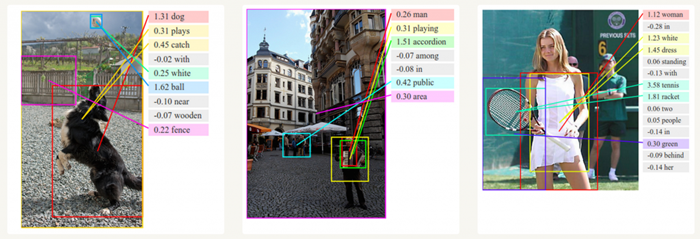

Generating Image Descriptions

Together with Convolutional Neural Networks, RNNs have been used as part of a model to generate descriptions for unlabeled images. It’s quite amazing how well this seems to work. The combined model even aligns the generated words with features found in the images.

Figure 3. Deep Visual-Semantic Alignments for Generating Image Descriptions.

Training RNNs

Training an RNN is very similar to training a traditional neural network. Both use the backpropagation algorithm, but with a small twist. Because the parameters are shared by all time steps in the network, the gradient at each output depends not only on the calculations of the current time step, but also on all the previous time steps. For example, in order to calculate the gradient at $t=4$ we would need to backpropagate 3 steps and sum up the gradients. This is called Backpropagation Through Time (BPTT). If this doesn’t make a whole lot of sense yet, don’t worry, we’ll have a whole post on the gory details. For now, just be aware of the fact that vanilla RNNs trained with BPTT have difficulties learning long-term dependencies (i.e. dependencies between steps that are far apart) due to what is called the vanishing/exploding gradient problem. There exists some machinery to deal with these problems, and certain types of RNNs (like LSTMs) were specifically designed to get around them.
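The vanishing gradient effect can be observed in a few lines of NumPy. The sketch below is not a full BPTT implementation: it only follows a gradient as it flows backward through the hidden states of a tanh RNN, using $\partial s_t / \partial s_{t-1} = \mathrm{diag}(1 - s_t^2)\,W$. The sizes, the random inputs, and the rescaling of $W$ to a largest singular value of 0.9 are assumptions made so that the shrinking is easy to see.

```python
import numpy as np

rng = np.random.default_rng(0)
hidden_dim, T = 10, 30  # hypothetical sizes for illustration

W = rng.normal(0.0, 1.0, (hidden_dim, hidden_dim))
# Assumption: rescale W so that its largest singular value is 0.9.
W *= 0.9 / np.linalg.norm(W, 2)
Ux = rng.normal(0.0, 1.0, (T, hidden_dim))  # stand-in for U @ x_t at each step

# Forward pass: s_t = tanh(U x_t + W s_{t-1}), with s_{-1} = 0.
s = np.zeros((T, hidden_dim))
s_prev = np.zeros(hidden_dim)
for t in range(T):
    s[t] = np.tanh(Ux[t] + W @ s_prev)
    s_prev = s[t]

# Backward pass: push a gradient arriving at the last hidden state back in time.
# Since ds_t/ds_{t-1} = diag(1 - s_t^2) W, the gradient with respect to
# s_{t-1} is W^T (grad * (1 - s_t^2)).
grad = np.ones(hidden_dim)
norms = []
for t in range(T - 1, 0, -1):
    grad = W.T @ (grad * (1.0 - s[t] ** 2))
    norms.append(np.linalg.norm(grad))

# Gradient norm after 1 step back versus after T-1 steps back.
print(norms[0], norms[-1])
```

With this setup the norm can only shrink at each step, so information from early time steps contributes almost nothing to the update. With larger recurrent weights the same product of Jacobians can grow instead, which is the exploding side of the problem.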

RNN Extensions

Over the years researchers have developed more sophisticated types of RNNs to deal with some of the shortcomings of the vanilla RNN model. We will cover them in more detail in a later post, but I want this section to serve as a brief overview so that you are familiar with the taxonomy of models.

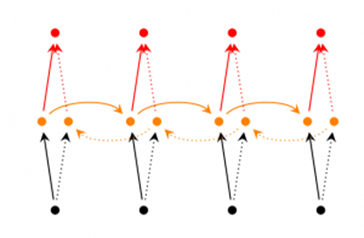

- Bidirectional RNNs are based on the idea that the output at time $t$ may depend not only on the previous elements in the sequence, but also on future elements. For example, to predict a missing word in a sequence you want to look at both the left and the right context. Bidirectional RNNs are quite simple. They are just two RNNs stacked on top of each other. The output is then computed based on the hidden state of both RNNs.

- Deep (stacked) bidirectional RNNs are similar to Bidirectional RNNs, only that we now have multiple layers per time step. In practice this gives us a higher learning capacity (but we also need a lot of training data).

- LSTM networks are quite popular these days and we briefly talked about them above. LSTMs don’t have a fundamentally different architecture from RNNs, but they use a different function to compute the hidden state. The memory units in LSTMs are called cells and you can think of them as black boxes that take as input the previous state $h_{t-1}$ and the current input $x_t$. Internally these cells decide what to keep in (and what to erase from) memory. They then combine the previous state, the current memory, and the input. It turns out that these types of units are very efficient at capturing long-term dependencies. LSTMs can be quite confusing in the beginning but if you’re interested in learning more this post has an excellent explanation.
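As an illustration of the bidirectional idea from the list above, the following sketch runs one vanilla tanh RNN left to right and a second one right to left, then joins their hidden states at each time step. Concatenation is just one common way to combine the two states and is an assumption here, as are the function names, the sizes, and the random weights.

```python
import numpy as np

def run_rnn(xs, U, W):
    """Return the hidden states of a vanilla tanh RNN over inputs xs (T, input_dim)."""
    s_prev = np.zeros(W.shape[0])
    states = []
    for x_t in xs:
        s_prev = np.tanh(U @ x_t + W @ s_prev)
        states.append(s_prev)
    return np.array(states)

def bidirectional_states(xs, fwd_params, bwd_params):
    """Concatenate forward and backward hidden states at every time step."""
    s_fwd = run_rnn(xs, *fwd_params)              # reads x_0 ... x_{T-1}
    s_bwd = run_rnn(xs[::-1], *bwd_params)[::-1]  # reads x_{T-1} ... x_0, then re-aligned
    return np.concatenate([s_fwd, s_bwd], axis=1)

# Hypothetical sizes and random weights, chosen only for illustration.
rng = np.random.default_rng(0)
T, input_dim, hidden_dim = 6, 3, 4
xs = rng.normal(size=(T, input_dim))
fwd = (rng.normal(0, 0.3, (hidden_dim, input_dim)),
       rng.normal(0, 0.3, (hidden_dim, hidden_dim)))
bwd = (rng.normal(0, 0.3, (hidden_dim, input_dim)),
       rng.normal(0, 0.3, (hidden_dim, hidden_dim)))

h = bidirectional_states(xs, fwd, bwd)
print(h.shape)  # (6, 8)
```

Each row `h[t]` now summarizes both the left context (first `hidden_dim` entries) and the right context (last `hidden_dim` entries), so an output layer applied to `h[t]` can use future elements of the sequence as well as past ones.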

Conclusion

So far we have covered the basic RNN architecture and its application areas. In the next post we will show how to build an RNN model in Python, using NumPy and Theano respectively.

If you have any questions, please leave a comment.