102302122 许志安 Assignment 2

Second Assignment

Assignment ①:

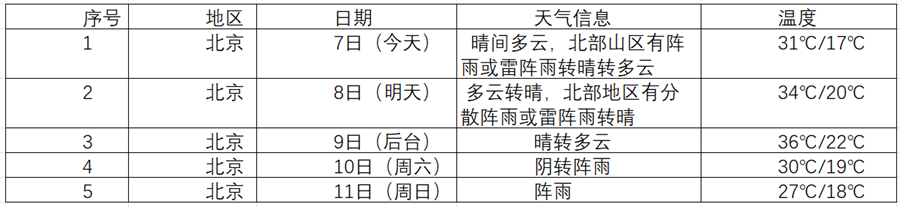

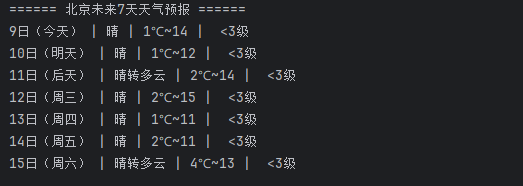

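1. City weather scraping experiment:

Requirement: scrape the 7-day weather forecast for a given set of cities from the China Weather site (http://www.weather.com.cn) and save it in a database.

– Output information:

Code: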

import sqlite3

import requests
from bs4 import BeautifulSoup

city, code = "北京", "101010100"
url = f"http://www.weather.com.cn/weather/{code}.shtml"
headers = {"User-Agent": "Mozilla/5.0"}

resp = requests.get(url, headers=headers, timeout=10)
resp.raise_for_status()
resp.encoding = "utf-8"
soup = BeautifulSoup(resp.text, "html.parser")
# Each <li> under <ul class="t clearfix"> holds one day's forecast.
lis = soup.select("ul.t.clearfix li")


def text_of(node, tag, cls=None):
    """Return the stripped text of the first matching child, or "" if absent."""
    found = node.find(tag, class_=cls) if node else None
    return found.get_text(strip=True) if found else ""


# The connection context manager commits on success and rolls back on error.
with sqlite3.connect("weather.db") as conn:
    cur = conn.cursor()
    cur.execute("""CREATE TABLE IF NOT EXISTS 天气预报 (
        城市 TEXT, 日期 TEXT, 天气 TEXT,
        最高温 TEXT, 最低温 TEXT, 风向 TEXT, 风力 TEXT
    )""")
    print(f"\n====== {city}未来7天天气预报 ======")
    for li in lis[:7]:
        date, wea = text_of(li, "h1"), text_of(li, "p", "wea")
        # Read temperatures only from <p class="tem"> so other tags are not picked up.
        tem = li.find("p", class_="tem")
        tem_high, tem_low = text_of(tem, "span"), text_of(tem, "i")
        win = li.find("p", class_="win")
        wind, power = text_of(win, "em"), text_of(win, "i")
        print(f"{date} | {wea} | {tem_low}~{tem_high} | {wind} {power}")
        cur.execute("INSERT INTO 天气预报 VALUES (?, ?, ?, ?, ?, ?, ?)",
                    (city, date, wea, tem_high, tem_low, wind, power))

print(f"\n✅ {city} 7日天气数据已保存至 weather.db。")

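Output:

2. Reflections:

I gained a better grasp of working with URLs, in particular how the city code is embedded in the address of the forecast page.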
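The assignment asks for a set of cities, while the script above handles a single one. The sketch below shows one way the same URL pattern could be applied to several cities. The `CITY_CODES` table and the helper `forecast_urls` are illustrative assumptions, and the city codes should be checked against the site before use.

```python
# Hypothetical city table; verify each code on weather.com.cn before relying on it.
CITY_CODES = {
    "北京": "101010100",
    "上海": "101020100",
    "广州": "101280101",
}

# Same URL pattern as the script above, with the city code as the only variable part.
URL_TEMPLATE = "http://www.weather.com.cn/weather/{code}.shtml"


def forecast_urls(city_codes):
    """Map each city name to the URL of its 7-day forecast page."""
    return {city: URL_TEMPLATE.format(code=code) for city, code in city_codes.items()}


for city, url in forecast_urls(CITY_CODES).items():
    print(city, url)
```

Each URL could then be passed to the same fetch-and-parse steps, with the city name stored in the 城市 column.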

Assignment ②:

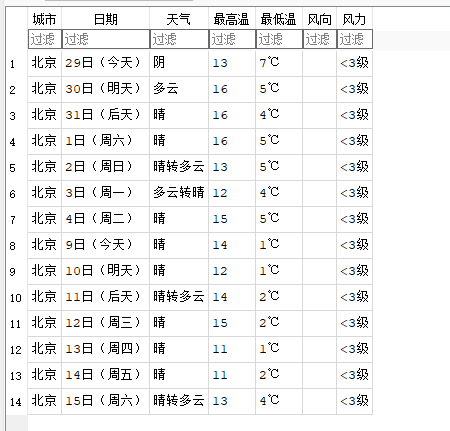

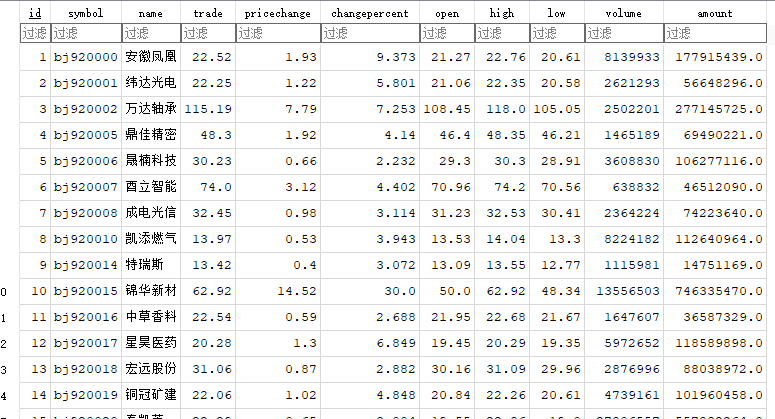

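1. Stock information scraping experiment

Requirement: use the requests and BeautifulSoup libraries to scrape targeted stock information and store it in a database.

Candidate sites: Eastmoney (东方财富网): https://www.eastmoney.com/

Sina Stock (新浪股票): http://finance.sina.com.cn/stock/

Tip: open the F12 developer tools in Google Chrome and capture the network traffic to find the URL that loads the stock list. Analyze the values returned by the API, and adjust the request parameters as needed. The URL shows that request parameters such as f1 and f2 select different values, so parameters that are not needed can be removed.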
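As a rough illustration of adjusting the parameters of a captured request, the sketch below edits the query string of a URL with the standard library. The URL, its host, and the parameter names `pn`, `pz`, and `fields` are placeholders chosen for the example, not the real endpoint, and `with_params` is a hypothetical helper.

```python
from urllib.parse import parse_qs, urlencode, urlsplit, urlunsplit

# Placeholder for a URL copied from the browser's Network panel (not a real API).
CAPTURED = "https://example.com/api/list?pn=1&pz=20&fields=f1,f2,f3,f12,f14"


def with_params(url, **changes):
    """Return url with the given query parameters replaced or added."""
    parts = urlsplit(url)
    # parse_qs returns lists of values; keep the first value of each parameter.
    query = {key: values[0] for key, values in parse_qs(parts.query).items()}
    query.update({key: str(value) for key, value in changes.items()})
    # safe="," keeps the comma-separated field list readable in the result.
    return urlunsplit(parts._replace(query=urlencode(query, safe=",")))


# Request page 2 and keep only three of the original fields.
page2 = with_params(CAPTURED, pn=2, fields="f2,f12,f14")
print(page2)
```

With requests, the same effect is usually achieved by passing a `params` dictionary, as the script in this section does.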

Reference link: https://zhuanlan.zhihu.com/p/50099084

Output information:

Code:

import json
import sqlite3
import time

import requests
from bs4 import BeautifulSoup

DB = "stocks.db"
URL = "https://vip.stock.finance.sina.com.cn/quotes_service/api/json_v2.php/Market_Center.getHQNodeData"
HEADERS = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"}
PARAMS = {"num": "40", "sort": "symbol", "asc": "1", "node": "hs_a", "_s_r_a": "page"}

with sqlite3.connect(DB) as conn:
    cur = conn.cursor()
    # Start from an empty table on every run.
    cur.execute("DROP TABLE IF EXISTS 股票信息")
    cur.execute("""CREATE TABLE 股票信息(
        序号 INTEGER PRIMARY KEY AUTOINCREMENT,
        代码 TEXT, 名称 TEXT, 最新价 REAL, 涨跌额 REAL, 涨跌幅 REAL,
        开盘价 REAL, 最高价 REAL, 最低价 REAL, 成交量 INTEGER, 成交额 REAL
    )""")

    # Fetch 10 pages of 40 stocks each.
    for p in range(1, 11):
        PARAMS["page"] = str(p)
        print(f" 第 {p} 页...")
        try:
            html = requests.get(URL, headers=HEADERS, params=PARAMS, timeout=10).text
            # The response is expected to be JSON text; get_text() drops any markup around it.
            data = json.loads(BeautifulSoup(html, "html.parser").get_text())
        except Exception as e:
            print(f" 第 {p} 页解析失败: {e}")
            continue

        for i in data:
            # Missing or empty numeric fields are stored as 0.
            cur.execute("""INSERT INTO 股票信息
                (代码, 名称, 最新价, 涨跌额, 涨跌幅, 开盘价, 最高价, 最低价, 成交量, 成交额)
                VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?, ?)""",
                (i.get("symbol"), i.get("name"),
                 float(i.get("trade") or 0), float(i.get("pricechange") or 0),
                 float(i.get("changepercent") or 0), float(i.get("open") or 0),
                 float(i.get("high") or 0), float(i.get("low") or 0),
                 int(i.get("volume") or 0), float(i.get("amount") or 0)))
        conn.commit()
        time.sleep(0.5)  # pause between pages to avoid hammering the server

    print("\n 数据已保存至 stocks.db(表:股票信息)\n前10条记录:")
    for row in cur.execute("SELECT 代码, 名称, 最新价, 涨跌幅 FROM 股票信息 LIMIT 10"):
        print(row)

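Output:

2. Reflections:

I learned how to call a web data interface (API), extract data with regular expressions, parse JSON data, and perform incremental updates on a database.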
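The script in this section drops and recreates its table on every run. If an incremental update is the goal, one possible approach is to key the table on the stock code and let SQLite overwrite existing rows. The sketch below assumes a simplified, hypothetical `quotes` table and made-up sample rows; it is not the schema used above.

```python
import sqlite3


def upsert_quotes(conn, rows):
    """Insert new stocks and overwrite rows whose code already exists."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS quotes (code TEXT PRIMARY KEY, name TEXT, price REAL)"
    )
    # INSERT OR REPLACE swaps out the row that has the same primary key.
    conn.executemany(
        "INSERT OR REPLACE INTO quotes (code, name, price) VALUES (?, ?, ?)", rows
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # in-memory database, for the demo only
upsert_quotes(conn, [("sh600000", "A", 10.0), ("sz000001", "B", 12.5)])
upsert_quotes(conn, [("sh600000", "A", 10.4)])  # second run updates, no duplicate row
rows = conn.execute("SELECT code, price FROM quotes ORDER BY code").fetchall()
print(rows)
```

Because the primary key is the stock code, running the scraper again refreshes prices without growing the table.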

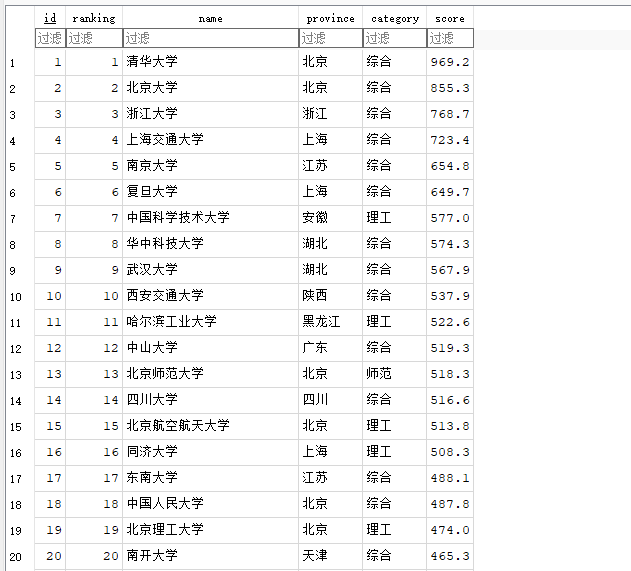

Assignment ③:

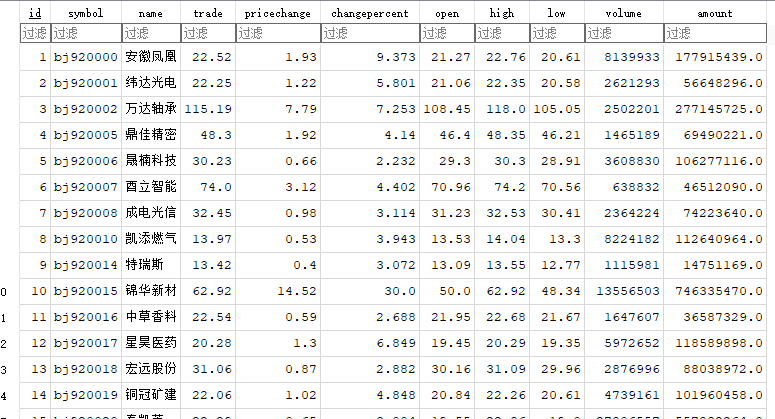

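1. University information scraping experiment

Requirement: scrape all university entries from the 2021 main ranking of Chinese universities (https://www.shanghairanking.cn/rankings/bcur/2021), store them in a database, and record the browser F12 debugging analysis as a GIF to include in the blog post.

Tip: analyze the site's network requests to find the API that returns the data.

Output information:

Gitee folder link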

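| Rank | School | Province/City | Type | Total score |
|------|--------|---------------|------|-------------|
| 1 | 清华大学 | 北京 | 综合 | 969.2 |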

Code:

import re
import sqlite3


def read_file(path):
    with open(path, encoding="utf-8") as f:
        return f.read().strip()


# params.txt holds the parameter names of the payload function,
# args.txt holds the arguments it is called with.
params_str = read_file("params.txt")
args_str = read_file("args.txt")

params = [p.strip() for p in params_str.split(",")]
# Split on commas that are outside double quotes, then drop the quotes.
args = [p.strip().strip('"') for p in re.split(r',\s*(?=(?:[^"]*"[^"]*")*[^"]*$)', args_str)]

# Convert JavaScript literals to Python values.
for i, arg in enumerate(args):
    if arg == 'true':
        args[i] = True
    elif arg == 'false':
        args[i] = False
    elif arg == 'null':
        args[i] = None
    elif re.fullmatch(r'-?\d+', arg):
        args[i] = int(arg)
    elif re.fullmatch(r'-?\d+\.\d+', arg):
        args[i] = float(arg)

mapping = dict(zip(params, args))


def replace(text, mapping):
    # Substitute every standalone parameter name with its argument value.
    for k, v in mapping.items():
        text = re.sub(rf'(?<!\w){re.escape(k)}(?!\w)', lambda _: str(v), text)
    return text


with open("payload.js", encoding="utf-8") as f:
    decoded = replace(f.read(), mapping)

# The university list sits between "univData:[" and "],indList:".
match = re.search(r'univData:\s*\[(.*?)\],\s*indList:', decoded, re.S)
if match is None:
    raise SystemExit("univData block not found in payload.js")
univ_text = match.group(1)

# One {...} object per university, allowing one level of nested braces.
items = re.findall(r'\{(?:[^{}]|(?:\{[^{}]*\}))*?\}', univ_text)

records = []
for i, it in enumerate(items, 1):
    name = re.search(r'univNameCn:"(.*?)"', it)
    province = re.search(r'province:([^,]+)', it)
    utype = re.search(r'univCategory:([^,]+)', it)
    score = re.search(r'score:([0-9.]+)', it)
    # The position in the list is used as the ranking.
    records.append((
        i,
        name.group(1) if name else "",
        province.group(1) if province else "",
        utype.group(1) if utype else "",
        float(score.group(1)) if score else None
    ))

with sqlite3.connect("univ.db") as conn:
    cur = conn.cursor()
    cur.execute("DROP TABLE IF EXISTS univ")
    cur.execute('''CREATE TABLE univ(
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        ranking INTEGER,
        name TEXT,
        province TEXT,
        category TEXT,
        score REAL
    )''')
    cur.executemany('INSERT INTO univ(ranking, name, province, category, score) VALUES (?,?,?,?,?)', records)
    conn.commit()

print(f"✅ 数据提取完成,共保存 {len(records)} 条到 univ.db!")

Output:

Here, params.txt contains: a,b,c,d,e,f,g,h,i,j,k,l,m,n,o,p,q,r,s,t,u,v,w,x,y,z,A,B,C,D,E,F,G,H,I,J,K,L,M,N,O,P,Q,R,S,T,U,V,W,X,Y,Z,_,$,aa,ab,ac,ad,ae,af,ag,ah,ai,aj,ak,al,am,an,ao,ap,aq,ar,as,at,au,av,aw,ax,ay,az,aA,aB,aC,aD,aE,aF,aG,aH,aI,aJ,aK,aL,aM,aN,aO,aP,aQ,aR,aS,aT,aU,aV,aW,aX,aY,aZ,a_,a$,ba,bb,bc,bd,be,bf,bg,bh,bi,bj,bk,bl,bm,bn,bo,bp,bq,br,bs,bt,bu,bv,bw,bx,by,bz,bA,bB,bC,bD,bE,bF,bG,bH,bI,bJ,bK,bL,bM,bN,bO,bP,bQ,bR,bS,bT,bU,bV,bW,bX,bY,bZ,b_,b$,ca,cb,cc,cd,ce,cf,cg,ch,ci,cj,ck,cl,cm,cn,co,cp,cq,cr,cs,ct,cu,cv,cw,cx,cy,cz,cA,cB,cC,cD,cE,cF,cG,cH,cI,cJ,cK,cL,cM,cN,cO,cP,cQ,cR,cS,cT,cU,cV,cW,cX,cY,cZ,c_,c$,da,db,dc,dd,de,df,dg,dh,di,dj,dk,dl,dm,dn,do0,dp,dq,dr,ds,dt,du,dv,dw,dx,dy,dz,dA,dB,dC,dD,dE,dF,dG,dH,dI,dJ,dK,dL,dM,dN,dO,dP,dQ,dR,dS,dT,dU,dV,dW,dX,dY,dZ,d_,d$,ea,eb,ec,ed,ee,ef,eg,eh,ei,ej,ek,el,em,en,eo,ep,eq,er,es,et,eu,ev,ew,ex,ey,ez,eA,eB,eC,eD,eE,eF,eG,eH,eI,eJ,eK,eL,eM,eN,eO,eP,eQ,eR,eS,eT,eU,eV,eW,eX,eY,eZ,e_,e$,fa,fb,fc,fd,fe,ff,fg,fh,fi,fj,fk,fl,fm,fn,fo,fp,fq,fr,fs,ft,fu,fv,fw,fx,fy,fz,fA,fB,fC,fD,fE,fF,fG,fH,fI,fJ,fK,fL,fM,fN,fO,fP,fQ,fR,fS,fT,fU,fV,fW,fX,fY,fZ,f_,f$,ga,gb,gc,gd,ge,gf,gg,gh,gi,gj,gk,gl,gm,gn,go,gp,gq,gr,gs,gt,gu,gv,gw,gx,gy,gz,gA,gB,gC,gD,gE,gF,gG,gH,gI,gJ,gK,gL,gM,gN,gO,gP,gQ,gR,gS,gT,gU,gV,gW,gX,gY,gZ,g_,g$,ha,hb,hc,hd,he,hf,hg,hh,hi,hj,hk,hl,hm,hn,ho,hp,hq,hr,hs,ht,hu,hv,hw,hx,hy,hz,hA,hB,hC,hD,hE,hF,hG,hH,hI,hJ,hK,hL,hM,hN,hO,hP,hQ,hR,hS,hT,hU,hV,hW,hX,hY,hZ,h_,h$,ia,ib,ic,id,ie,if0,ig,ih,ii,ij,ik,il,im,in0,io,ip,iq,ir,is,it,iu,iv,iw,ix,iy,iz,iA,iB,iC,iD,iE,iF,iG,iH,iI,iJ,iK,iL,iM,iN,iO,iP,iQ,iR,iS,iT,iU,iV,iW,iX,iY,iZ,i_,i$,ja,jb,jc,jd,je,jf,jg,jh,ji,jj,jk,jl,jm,jn,jo,jp,jq,jr,js,jt,ju,jv,jw,jx,jy,jz,jA,jB,jC,jD,jE,jF,jG,jH,jI,jJ,jK,jL,jM,jN,jO,jP,jQ,jR,jS,jT,jU,jV,jW,jX,jY,jZ,j_,j$,ka,kb,kc,kd,ke,kf,kg,kh,ki,kj,kk,kl,km,kn,ko,kp,kq,kr,ks,kt,ku,kv,kw,kx,ky,kz,kA,kB,kC,kD,kE,kF,kG,kH,kI,kJ,kK,kL,kM,kN,kO,kP,kQ,kR,kS,kT,kU,kV,kW,kX,kY,kZ,k_,k$,la,lb,lc,ld,le,lf,lg,lh,li,lj,lk,ll,lm,ln,lo,lp,lq,lr,ls,lt,lu,lv,lw,lx,ly,lz,lA,lB,lC,lD,lE,lF,lG,lH,lI,lJ,lK,lL,lM,lN,lO,lP,lQ,lR,lS,lT,lU,lV,lW,lX,lY,lZ,l_,l$,ma,mb,mc,md,me,mf,mg,mh,mi,mj,mk,ml,mm,mn,mo,mp,mq,mr,ms,mt,mu,mv,mw,mx,my,mz,mA,mB,mC,mD,mE,mF,mG,mH,mI,mJ,mK,mL,mM,mN,mO,mP,mQ,mR,mS,mT,mU,mV,mW,mX,mY,mZ,m_,m$,na,nb,nc,nd,ne,nf,ng,nh,ni,nj,nk,nl,nm,nn,no,np,nq,nr,ns,nt,nu,nv,nw,nx,ny,nz,nA,nB,nC,nD,nE,nF,nG,nH,nI,nJ,nK,nL,nM,nN,nO,nP,nQ,nR,nS,nT,nU,nV,nW,nX,nY,nZ,n_,n$,oa,ob,oc,od,oe,of,og,oh,oi,oj,ok,ol,om,on,oo,op,oq,or,os,ot,ou,ov,ow,ox,oy,oz,oA,oB,oC,oD,oE,oF,oG,oH,oI,oJ,oK,oL,oM,oN,oO,oP,oQ,oR,oS,oT,oU,oV,oW,oX,oY,oZ,o_,o$,pa,pb,pc,pd,pe,pf,pg,ph,pi,pj,pk,pl,pm,pn,po,pp,pq,pr,ps,pt,pu,pv,pw,px,py,pz,pA,pB,pC,pD,pE

args.txt为:"", false, null, 0, "理工", "综合", true, "师范", "双一流", "211", "江苏", "985", "农业", "山东", "河南", "河北", "北京", "辽宁", "陕西", "四川", "广东", "湖北", "湖南", "浙江", "安徽", "江西", 1, "黑龙江", "吉林", "上海", 2, "福建", "山西", "云南", "广西", "贵州", "甘肃", "内蒙古", "重庆", "天津", "新疆", "467", "496", "2025,2024,2023,2022,2021,2020", "林业", "5.8", "533", "2023-01-05T00:00:00+08:00", "23.1", "7.3", "海南", "37.9", "28.0", "4.3", "12.1", "16.8", "11.7", "3.7", "4.6", "297", "397", "21.8", "32.2", "16.6", "37.6", "24.6", "13.6", "13.9", "3.3", "5.2", "8.1", "3.9", "5.1", "5.6", "5.4", "2.6", "162", 93.5, 89.4, "宁夏", "青海", "西藏", 7, "11.3", "35.2", "9.5", "35.0", "32.7", "23.7", "33.2", "9.2", "30.6", "8.5", "22.7", "26.3", "8.0", "10.9", "26.0", "3.2", "6.8", "5.7", "13.8", "6.5", "5.5", "5.0", "13.2", "13.3", "15.6", "18.3", "3.0", "21.3", "12.0", "22.8", "3.6", "3.4", "3.5", "95", "109", "117", "129", "138", "147", "159", "185", "191", "193", "196", "213", "232", "237", "240", "267", "275", "301", "309", "314", "318", "332", "334", "339", "341", "354", "365", "371", "378", "384", "388", "403", "416", "418", "420", "423", "430", "438", "444", "449", "452", "457", "461", "465", "474", "477", "485", "487", "491", "501", "508", "513", "518", "522", "528", 83.4, "538", "555", 2021, 11, 14, 10, "12.8", "42.9", "18.8", "36.6", "4.8", "40.0", "37.7", "11.9", "45.2", "31.8", "10.4", "40.3", "11.2", "30.9", "37.8", "16.1", "19.7", "11.1", "23.8", "29.1", "0.2", "24.0", "27.3", "24.9", "39.5", "20.5", "23.4", "9.0", "4.1", "25.6", "12.9", "6.4", "18.0", "24.2", "7.4", "29.7", "26.5", "22.6", "29.9", "28.6", "10.1", "16.2", "19.4", "19.5", "18.6", "27.4", "17.1", "16.0", "27.6", "7.9", "28.7", "19.3", "29.5", "38.2", "8.9", "3.8", "15.7", "13.5", "1.7", "16.9", "33.4", "132.7", "15.2", "8.7", "20.3", "5.3", "0.3", "4.0", "17.4", "2.7", "160", "161", "164", "165", "166", "167", "168", 130.6, 105.5, 2025, "学生、家长、高校管理人员、高教研究人员等", "中国大学排名(主榜)", 25, 13, 12, "全部", "1", "88.0", 5, "2", "36.1", "25.9", "3", "34.3", "4", 
"35.5", "21.6", "39.2", "5", "10.8", "4.9", "30.4", "6", "46.2", "7", "0.8", "42.1", "8", "32.1", "22.9", "31.3", "9", "43.0", "25.7", "10", "34.5", "10.0", "26.2", "46.5", "11", "47.0", "33.5", "35.8", "25.8", "12", "46.7", "13.7", "31.4", "33.3", "13", "34.8", "42.3", "13.4", "29.4", "14", "30.7", "15", "42.6", "26.7", "16", "12.5", "17", "12.4", "44.5", "44.8", "18", "10.3", "15.8", "19", "32.3", "19.2", "20", "21", "28.8", "9.6", "22", "45.0", "23", "30.8", "16.7", "16.3", "24", "25", "32.4", "26", "9.4", "27", "33.7", "18.5", "21.9", "28", "30.2", "31.0", "16.4", "29", "34.4", "41.2", "2.9", "30", "38.4", "6.6", "31", "4.4", "17.0", "32", "26.4", "33", "6.1", "34", "38.8", "17.7", "35", "36", "38.1", "11.5", "14.9", "37", "14.3", "18.9", "38", "13.0", "39", "27.8", "33.8", "3.1", "40", "41", "28.9", "42", "28.5", "38.0", "34.0", "1.5", "43", "15.1", "44", "31.2", "120.0", "14.4", "45", "149.8", "7.5", "46", "47", "38.6", "48", "49", "25.2", "50", "19.8", "51", "5.9", "6.7", "52", "4.2", "53", "1.6", "54", "55", "20.0", "56", "39.8", "18.1", "57", "35.6", "58", "10.5", "14.1", "59", "8.2", "60", "140.8", "12.6", "61", "62", "17.6", "63", "64", "1.1", "65", "20.9", "66", "67", "68", "2.1", "69", "123.9", "27.1", "70", "25.5", "37.4", "71", "72", "73", "74", "75", "76", "27.9", "7.0", "77", "78", "79", "80", "81", "82", "83", "84", "1.4", "85", "86", "87", "88", "89", "90", "91", "92", "93", "109.0", "94", 235.7, "97", "98", "99", "100", "101", "102", "103", "104", "105", "106", "107", "108", 223.8, "111", "112", "113", "114", "115", "116", 215.5, "119", "120", "121", "122", "123", "124", "125", "126", "127", "128", 206.7, "131", "132", "133", "134", "135", "136", "137", 201, "140", "141", "142", "143", "144", "145", "146", 194.6, "149", "150", "151", "152", "153", "154", "155", "156", "157", "158", 183.3, "169", "170", "171", "172", "173", "174", "175", "176", "177", "178", "179", "180", "181", "182", "183", "184", 169.6, "187", "188", "189", "190", 168.1, 167, 
"195", 165.5, "198", "199", "200", "201", "202", "203", "204", "205", "206", "207", "208", "209", "210", "212", 160.5, "215", "216", "217", "218", "219", "220", "221", "222", "223", "224", "225", "226", "227", "228", "229", "230", "231", 153.3, "234", "235", "236", 150.8, "239", 149.9, "242", "243", "244", "245", "246", "247", "248", "249", "250", "251", "252", "253", "254", "255", "256", "257", "258", "259", "260", "261", "262", "263", "264", "265", "266", 139.7, "269", "270", "271", "272", "273", "274", 137, "277", "278", "279", "280", "281", "282", "283", "284", "285", "286", "287", "288", "289", "290", "291", "292", "293", "294", "295", "296", "300", 130.2, "303", "304", "305", "306", "307", "308", 128.4, "311", "312", "313", 125.9, "316", "317", 124.9, "320", "321", "Wuyi University", "322", "323", "324", "325", "326", "327", "328", "329", "330", "331", 120.9, 120.8, "Taizhou University", "336", "337", "338", 119.9, 119.7, "343", "344", "345", "346", "347", "348", "349", "350", "351", "352", "353", 115.4, "356", "357", "358", "359", "360", "361", "362", "363", "364", 112.6, "367", "368", "369", "370", 111, "373", "374", "375", "376", "377", 109.4, "380", "381", "382", "383", 107.6, "386", "387", 107.1, "390", "391", "392", "393", "394", "395", "396", "400", "401", "402", 104.7, "405", "406", "407", "408", "409", "410", "411", "412", "413", "414", "415", 101.2, 101.1, 100.9, "422", 100.3, "425", "426", "427", "428", "429", 99, "432", "433", "434", "435", "436", "437", 97.6, "440", "441", "442", "443", 96.5, "446", "447", "448", 95.8, "451", 95.2, "454", "455", "456", 94.8, "459", "460", 94.3, "463", "464", 93.6, "472", "473", 92.3, "476", 91.7, "479", "480", "481", "482", "483", "484", 90.7, 90.6, "489", "490", 90.2, "493", "494", "495", 89.3, "503", "504", "505", "506", "507", 87.4, "510", "511", "512", 86.8, "515", "516", "517", 86.2, "520", "521", 85.8, "524", "525", "526", "527", 84.6, "530", "531", "532", "537", 82.8, "540", "541", "542", "543", "544", 
"545", "546", "547", "548", "549", "550", "551", "552", "553", "554", 78.1, "557", "558", "559", "560", "561", "562", "563", "564", "565", "566", "567", "568", "569", "570", "571", "572", "573", "574", "575", "576", "577", "578", "579", "580", "581", "582", 4, "2025-04-15T00:00:00+08:00", "logo\u002Fannual\u002Fbcur\u002F2025.png", "软科中国大学排名于2015年首次发布,多年来以专业、客观、透明的优势赢得了高等教育领域内外的广泛关注和认可,已经成为具有重要社会影响力和权威参考价值的中国大学排名领先品牌。软科中国大学排名以服务中国高等教育发展和进步为导向,采用数百项指标变量对中国大学进行全方位、分类别、监测式评价,向学生、家长和全社会提供及时、可靠、丰富的中国高校可比信息。", 2024, 2023, 2022, 15, 2020, 2019, 2018, 2017, 2016, 2015

2. Reflections:

Through this assignment, I gained a deeper understanding of parsing non-standard data and applying regular expressions.
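To make the decoding idea concrete, here is a toy version of the pipeline on made-up data. It is a sketch with two deliberate differences from the script in this section: the arguments are parsed with `json.loads`, which assumes every argument is a valid JSON literal, and all names are substituted in one regex pass instead of one pass per name. `PARAMS_TEXT`, `ARGS_TEXT`, `PAYLOAD`, and both helpers are hypothetical stand-ins for params.txt, args.txt, and payload.js.

```python
import json
import re

# Toy stand-ins for params.txt, args.txt, and payload.js; all values are made up.
PARAMS_TEXT = "a,b,c,$"
ARGS_TEXT = '"北京", "综合", 969.2, null'
PAYLOAD = 'univData:[{univNameCn:"示例大学",province:a,univCategory:b,score:c,extra:$}],indList:'


def build_mapping(params_text, args_text):
    """Pair parameter names with values parsed as one JSON array."""
    names = [name.strip() for name in params_text.split(",")]
    values = json.loads(f"[{args_text}]")
    return dict(zip(names, values))


def substitute(text, mapping):
    """Replace every standalone parameter name in a single regex pass."""
    # Longest names first, so a short name never shadows a longer one.
    names = sorted(mapping, key=len, reverse=True)
    pattern = re.compile(r"(?<!\w)(?:%s)(?!\w)" % "|".join(map(re.escape, names)))
    return pattern.sub(lambda m: str(mapping[m.group(0)]), text)


mapping = build_mapping(PARAMS_TEXT, ARGS_TEXT)
decoded = substitute(PAYLOAD, mapping)
print(decoded)
```

A single pass also means a substituted value is never scanned again, so a value that happens to look like a parameter name cannot be replaced a second time.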

浙公网安备 33010602011771号
